
From YouTube: IETF99-SAAG-20170720-1330

Description

SAAG meeting session at IETF99

2017/07/20 1330

https://datatracker.ietf.org/meeting/99/proceedings/


D

So yes, we're starting with working group reports. This is the Note Well; I expect you all to be familiar with this. If you're not familiar with it, well, I guess you can read it now or read it later. So we'll start with the working groups. Barry, did you have a... yeah, okay, good enough. If you take them in order... I know they're already in order, as it turns out. ACE.

H

We met Monday morning, and we have lots of different proposals floating around on how to carry these different tokens around for authentication and authorization. So we had lots of researchers coming along and providing input. We unfortunately didn't make that much progress on the already chartered items, so we need to improve on that, but there are a lot of ideas.

C

I

So we met Thursday. We actually have two sessions: one is the pre-session and then there is the formal session. This time we had lots of contributions on the data models and information models, so this meeting was mainly to make sure they are aligned with each other, and we reached a really good result on that. Thank you. Thank you.

C

H

Yeah, actually, the final session is tomorrow. So in the first session we talked about wrapping up some existing working group documents, and we also had Mike Jones present a best current practice on JWT. Some researchers found attacks, so we documented those, and we did a hum, and the group wanted to work on that document. So that's something we will confirm on the mailing list. So if you use JWTs, or if you implement them, that may be a good document to look at.

E

PGP has had quite remarkable success in not getting anything done, and that has resisted all attempts from both chairs to talk to people offline and try to poke things. So we have asked Eric to close the working group whenever he is ready to do that, and told the working group that, in the hope that that might spur some things. It spurred a few words but no actual action. Eric, any comments? I think...

E

D

K

As the co-chair (this is dkg), I'm also bummed that we didn't manage to get that done. There is continuing work in that space, probably, and if I can manage to get people to document it, I'll try to get them to submit drafts. If they actually start submitting drafts that are actually functional, then maybe I will come back and ask for more, but we have not had any luck in getting it to move. We don't have enough implementers in the group right now, I think.


L

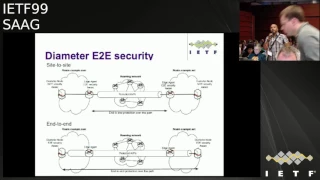

Okay, thank you. So in the DIME working group we have the base protocol, and initially, in the previous version of the protocol, we had an end-to-end security mechanism. When we published a new version of the protocol, this end-to-end security mechanism was deprecated. It was based on CMS, and nothing was done to replace it, so we are still looking for a solution for end-to-end security.

L

Basically, it could be between edge agents, between two different networks. You may go through what we call roaming networks, where you have a bunch of agents in the middle, or you may have real end-to-end communication between two peers, client and server. So the idea is to have a solution to be able to protect AVPs. Next slide. Yeah.

L

So the status is that the end-to-end security requirements document is now an RFC, so we have existing requirements coming from the mobile community, for roaming especially, but also from operators that would like to protect their Diameter signaling. What we have done is to reuse a previous draft called end-to-end security, but no work was done on this one. There is a lack of resources and also a lack of expertise, because we have the Diameter part, but we also have the security aspects. Next slide, please. So, in the existing draft,

L

we have a basic solution where it's possible to have integrity and confidentiality. What we're defining is a specific AVP with which you are able to sign, and another one where you are able to group all the sensitive AVPs and encrypt them, and to put this in what we call a grouped AVP. So it's about how to convey this information. The solution was based on JSON: using JSON Web Signature for the integrity protection and JSON Web Encryption for the confidentiality is what we were thinking about.
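As a rough illustration of the integrity half of this idea, the sketch below signs a group of AVPs as a JWS compact serialization with HMAC-SHA256 (the `HS256` algorithm from JOSE). The AVP names, the shared key, and the choice to serialize the AVPs as a JSON object are assumptions made for illustration; they are not taken from the DIME draft.

```python
import base64
import hashlib
import hmac
import json


def b64url(data: bytes) -> str:
    """Base64url-encode without padding, as the JWS compact form requires."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def b64url_decode(text: str) -> bytes:
    """Inverse of b64url: restore the stripped padding, then decode."""
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))


def sign_avps(avps: dict, key: bytes) -> str:
    """Return header.payload.signature protecting the given AVPs."""
    header = json.dumps({"alg": "HS256"}, separators=(",", ":")).encode()
    payload = json.dumps(avps, separators=(",", ":"), sort_keys=True).encode()
    signing_input = b64url(header) + "." + b64url(payload)
    tag = hmac.new(key, signing_input.encode("ascii"), hashlib.sha256).digest()
    return signing_input + "." + b64url(tag)


def verify_avps(token: str, key: bytes) -> dict:
    """Check the algorithm and the MAC, then return the protected AVPs."""
    header_b64, payload_b64, tag_b64 = token.split(".")
    header = json.loads(b64url_decode(header_b64))
    if header.get("alg") != "HS256":
        raise ValueError("unexpected algorithm")
    signing_input = (header_b64 + "." + payload_b64).encode("ascii")
    expected = hmac.new(key, signing_input, hashlib.sha256).digest()
    if not hmac.compare_digest(expected, b64url_decode(tag_b64)):
        raise ValueError("signature check failed")
    return json.loads(b64url_decode(payload_b64))


if __name__ == "__main__":
    shared_key = b"0" * 32  # hypothetical key shared by the two end points
    token = sign_avps({"User-Name": "alice", "Session-Id": "example;1"}, shared_key)
    print(verify_avps(token, shared_key))
```

Intermediate agents can still read the AVPs in this form; hiding them would need the encryption half (JSON Web Encryption), which is not sketched here.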

L

L

So I think that the proposal is quite simple, just to be able to have a solution. I know that people in the room are aware of this issue, so I'm looking for some people that could help on this work. We could create a kind of design team and so on, just to be able to get a solution done, so any volunteers would be welcome. You can contact Katrien, myself, and...

M

N

Phillip Hallam-Baker. I had to generate something very similar for my Mesh work. It's written up, but not separately from the other stuff, but I can pull it out. I did notice the problem with applying JOSE encryption, and rolled my own based on JOSE, but doing things in a more sane fashion for doing end-to-end encryption within a web service. So, so.

C

E

DMARC met this morning, and I guess the main thing that it's discussing right now is whether to put out the document as experimental first or go straight to standards track. We'd originally been figuring standards track, but Dave Crocker pointed out that we don't know the operational effects of what we're doing and could use an experiment with that. So the working group still hasn't decided that, and we'll figure it out. Otherwise, it's almost ready for working group last call. That's ARC.


S

PERC is getting around some of the issues it had with its double context encryption for RTP. That's getting to a point where it would benefit from some more review. Well, probably in a couple of weeks, once the new draft is out, it would benefit from some review from folks who are cryptographically minded and have some enthusiasm for real-time protocols. So, like I said, I expect there will be a new version of that double encryption draft in a few weeks; then I'll maybe ping the SAAG list for some more reviews.

S

C

G

G

Okay, I was talking to somebody else. So right now, we've got the core documents that we were chartered to do. They've been RFCs for a while. We're working on a few specs related to the best practice of using TLS in email, in various forms and shapes. But after that we can't really see any other work. So if the security community has stuff for UTA, you should bring it to our attention before Singapore, because after that, I think we're gonna be done. Thanks.


H

Yep. For TEEP, we had a tutorial on Sunday, which I think was well attended, describing how TrustZone works and the scope of the TEEP effort. On Wednesday we met to discuss the charter, and I posted it to the TEEP mailing list. We will also continue with the webinars, so there will be a webinar from the Intel guys about the trusted execution environments.

H

H

We actually met today in sort of a side meeting to talk to those who are developing operating systems for IoT devices, who are the potential customers or the potential guys who are impacted by this. There are two new documents out there, posted on the mailing list, describing what the standards effort could look like. So please join that list if you care about firmware updates and/or IoT devices. Thanks.

C

U

Sorry, I didn't realize I was on the agenda. This is Sam Weiler. W3C's Web Authentication working group is making interesting progress, bringing token-based, device-based authentication up into the browser, but I think the thing that will be of more interest to the room is that we're reaching out and trying to get more eyes doing security and privacy reviews on our specs.

C

C

V

So, 30 minutes on post-quantum cryptography, and I promise you there are no equations in any of my slides. So if you are thinking of making a run for it because of the possibility of equations, you can stay in your seats. I'm gonna start by looking at something that's manifestly not post-quantum: I'm going to start by talking a little bit about SHA-1 and the lifetime of SHA-1. So we go back to deepest, darkest history. In the 1990s, SHA-1 was published. It was actually a tweak of a design from 1993.

V

There were various attacks on SHA-0 in the 1990s, which validated that switch. Then SHA-2 was published by NIST in 2001. Nobody used it. We had the first collision attack for SHA-1 in 2005, except it was an attack that didn't provide any collision. It had an estimated complexity of 2 to the 63 hash operations.
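To put the quoted figure in context, here is a small back-of-the-envelope sketch comparing it with the generic birthday bound for a 160-bit hash (roughly 2^80 hash calls). The helper names are made up for this illustration; the 2^63 figure is the one quoted in the talk.

```python
SHA1_DIGEST_BITS = 160  # SHA-1 output length in bits


def birthday_bound_log2(digest_bits: int) -> float:
    """log2 of the generic collision cost: about 2^(n/2) hash calls."""
    return digest_bits / 2


def speedup_log2(generic_log2: float, attack_log2: float) -> float:
    """How many powers of two the attack saves over the generic search."""
    return generic_log2 - attack_log2


if __name__ == "__main__":
    generic = birthday_bound_log2(SHA1_DIGEST_BITS)
    attack_2005 = 63  # estimated complexity quoted in the talk
    saved = speedup_log2(generic, attack_2005)
    print(f"generic birthday search: 2^{generic:.0f} hash calls")
    print(f"2005 attack estimate:    2^{attack_2005} hash calls")
    print(f"improvement factor:      2^{saved:.0f} = {2 ** int(saved)}")
```

Even with a saving of that size, 2^63 hash calls was far from a weekend project in 2005, which fits the speaker's point that no actual collision was exhibited at the time.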

There's a famous breakthrough paper by Wang et al. It's maybe worth remembering that Wang was denied a visa to come and present her result in the United States at the time.

V

Some things don't change very much between then and now. There were lots of claims and counterclaims about improvements to this attack and, you know, re-evaluations of the complexity. In 2006, NIST took the step of deprecating SHA-1 from 2010 onwards for all federal agencies, for all new applications requiring collision resistance, or a limited set of applications and use cases. In 2013, Microsoft got around to doing something about SHA-1 deprecation, from 2016 onwards, for new code-signing certificates. 2014: still no collisions. This is now quite some time.

V

Nearly 10 years after Wang's paper (Wang et al.'s paper, I should say), the best estimate at this point in time is 2 to the 61 hash operations to compute a collision, due to Marc Stevens and his collaborators. In 2015, something really interesting happened. We saw something called freestart collisions, which are a precursor of full collisions against SHA-1: not quite there yet, requiring moderate computation, ten days on a GPU cluster with sixty-four GPUs. 2017: SHA-1 is still widely used. There's lots of activity in the CA/Browser Forum.

V

Maybe some of you have seen some of that lovely controversy happening over there: resisting the removal of SHA-1, trying to extend its lifetime, and so on. And then finally, we got the first collisions in SHA-1 in February this year, a joint research activity between the researchers at CWI, led by Marc Stevens, and Google. They finally actually exhibited SHA-1 collisions. Cue much press coverage, and then lots of researchers like me saying: well, you knew this was coming, this should not be a surprise.

V

V

Indeed, here's a related graph that shows the uptake of SHA-2 in browser-trusted certificates on the public Internet. You see SHA-1 certs, which is the red line, suddenly started decreasing in around early 2014, and then SHA-2 started to shoot up. And MD5 has effectively reached zero, which is a good thing. Anybody want to guess? Yeah, by all means shout out; let's make this as interactive as you like, except please don't throw things.

V

So anybody want to guess what happened in early 2014 to make this thing tick up? Heartbleed, the first vulnerability with a logo. Not the last, only the first. Okay, so I think what's important to say here is that it was not a cryptographic attack that led to the switchover from SHA-1 to SHA-2 in certificates. It was a computer security vulnerability in a very widely deployed piece of software, OpenSSL.

V

Okay, what about progress in quantum computing? We've seen something happening in the kind of classical world. I apologize for the very small font on this slide. I think the slides are online if you want to have a look at them and follow along. There were so many things to put in here, there's been so much progress, that I had to make the font very small. Interestingly, I got all of this from Wikipedia, because I am not an expert on quantum computing or quantum physics.

V

I actually missed that class in physics. So pre-1994, Wikipedia says that there were these isolated contributions by various great physicists, people like Stephen Wiesner, Holevo, Charlie Bennett, etc. Lots of interesting little nuggets, but no kind of connecting theory, no kind of concept of quantum computing as a thing. That really changed in '94 and then '96 because of Shor's algorithm and Grover's algorithm. So Shor's algorithm basically breaks discrete log and factoring problems with polynomially many gates and polynomial depth, polynomial in the size of the numbers that you're working with.

V

In other words, all public key cryptography deployed on the Internet today is broken if you could build a large-scale quantum computer that could implement Shor's algorithm. And Grover's algorithm, in 1996, basically gives you a square-root speed-up for search problems, and the effect of that is to halve the effective key size of your symmetric primitives.
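The halving follows directly from the square root: searching 2^k keys in about sqrt(2^k) = 2^(k/2) steps is what a classical brute force would cost against a k/2-bit key. A minimal sketch of that arithmetic (the function name is invented for this example, and constant factors and the cost of running the cipher on quantum hardware are ignored):

```python
import math


def grover_effective_bits(key_bits: int) -> int:
    """Effective key length under an idealized Grover search.

    Grover needs on the order of sqrt(2^k) = 2^(k/2) evaluations,
    so a k-bit key offers roughly k/2 bits of quantum security.
    """
    return key_bits // 2


if __name__ == "__main__":
    for key_bits in (128, 192, 256):
        steps = math.isqrt(2 ** key_bits)  # exact, since key_bits is even
        print(
            f"AES-{key_bits}: about 2^{steps.bit_length() - 1} Grover steps, "
            f"roughly {grover_effective_bits(key_bits)}-bit security"
        )
```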

So some would say that's why we have AES-256 in the first place, as a hedge against quantum attacks and Grover's algorithm. In 1998, a two-bit quantum computer was built, and three bits using NMR. In 2000,

V

we got to five bits and seven bits using NMR. In 2001, so this is great, the number 15 was factored. Oh, no, no, no, no, seriously, on a real quantum computer. I'm not making a joke here. This is significant progress, because it means you have to build a large enough computer and run it for long enough to find out that 15 is three times five.

V

The qubyte was announced in 2005. I have no idea what it means, but it's good publicity. In 2006, we got to 12 bits, 12 quantum bits; then 28 quantum bits; and now you start to see a runaway. 2008: 128 quantum bits. This stuff just got real. This got really worrying, except this is D-Wave. So there's some knowledgeable laughter from the front. For those of you further back: what D-Wave are building is a quantum annealing machine, so it implements some kind of annealing algorithm in a quantum way.

V

It's not doing general-purpose quantum computation. You couldn't use, as far as we know, one of these D-Wave computers to run Shor's algorithm, for example. We do know that Google bought a D-Wave machine. Anybody from Google here willing to confirm that, and then tell us what you're doing with it? I have been told that they dismantled it and rebuilt it in their own image, somehow, to do their own stuff.

V

So they have those kind of people. Okay, yeah, true. Okay, so back to the real world of actual general-purpose quantum computation: fourteen qubits in 2011, and then in 2012 the number 21 was factored as three times seven. Then, actually, between 2013 and 2017, there wasn't a huge amount of publicly announced progress in factoring, and I've been told by experts in the field that's because factoring is the wrong problem to focus on.

V

We should be looking at other things than factoring. I think it's because they find it hard to scale their quantum computers beyond the number of bits needed here. They're instead focusing on more fundamental technologies, trying to get them in place before attempting to factor larger numbers. From late 2016 onwards, the physicists did a pivot (I believe it's called a pivot in the tech world) towards focusing on something called quantum supremacy, which is actually a phrase I find personally objectionable. It has a lot of really ugly overtones,

V

the use of the word supremacy here. What this means is building a large enough quantum computer that you could solve a problem that no classical computer could solve in a reasonable amount of time with a reasonable amount of resources. Think of gluing all of Google's and Akamai's and Cloudflare's servers together, trying to solve some computational problem and not being able to do it, but being able to do it on a quantum computer: something like some kind of quantum chemistry simulation, for example. And so now there are rumors

V

that are circulating around in the research community that quantum supremacy will be announced at a conference in Berlin. Last month, Google announced that they would achieve quantum supremacy by the end of the year, but I think that's using one of these... well, who knows exactly what that means? Let's not speculate too much. In 2017, D-Wave launched the 2000Q, which has two thousand quantum bits in their simulated annealing world. That reminds me of the story of Hewlett-Packard, whose very first product was called the Hewlett-Packard 200.

V

Okay, because it sounded good to start with something that had a large number rather than a small number; it looked like you were more established. I guess D-Wave have been around for a while now. IBM unveiled a seventeen-bit quantum machine (sorry, a seventeen-qubit machine), and Google and Microsoft Research are all doing cool stuff in this area. So there's a lot of energy going in, a lot of money going into this. Progress: hard to judge, for me.

V

So what I'd say, to draw these two things together, is that the threat of large-scale quantum computing is kind of weakly analogous (and go with me on this, it's a pretty weak analogy) to the threat of a breakthrough in SHA-1 collision finding. The breakthrough might be imminent, but then again it might not, and we now do actually have SHA-1 collisions.

V

So this slide really should have been updated, but for a long, long time people were speculating: well, we can do SHA-1 collisions, but nobody's done it yet, because the reward is not high enough, or because it's actually harder than we think. We didn't really know whether it was going to be possible. We didn't really know whether those algorithms would scale or not to the size required. It's hard to quantify the risk that something will happen, and hard to put a timeframe on it happening, but meaningful results in this area

V

would have a substantial impact, and lots of smart people are working on it, and there's been a tremendous amount of research investment. On the other hand, maybe quantum computing is a bit like fusion research. If any of you tracked fusion research over the years, it's been very interesting: there's been tokamaks, and there's been all kinds of plasmas, and firing lasers at pellets, and none of it really works yet. Okay, so here's some conversations I've been party to with people in the quantum computing world.

V

"Large-scale quantum computing is only a decade away." But he's been saying that for 25 years. Okay: "In terms of fundamental physics we're pretty close to what we need. There's just tons of engineering work." This is a quote from Evan Jeffrey, who is now with Google; he was with UC Santa Barbara, and he and his team were acquired by Google. This was in a really great talk, a very cool-headed, sensible talk

V

that he gave about quantum computation at Real World Crypto in 2017, and you can find that on the Real World Crypto YouTube feed. He also estimated in that talk that to break a 1024-bit RSA modulus would require something like 250 million quantum bits to be held in a coherent state for long enough to do the computation. I'll just remind you: the current state of the art is 17. So there's a gap, but apparently it's only engineering work.
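One way to get a feel for the size of that gap is to ask how many times the qubit count would have to double to go from 17 to 250 million. The sketch below does only that arithmetic; steady doubling is a purely hypothetical yardstick, not a prediction made in the talk, and the function name is invented here.

```python
import math


def doublings_needed(current_qubits: int, required_qubits: int) -> float:
    """Number of times current_qubits must double to reach required_qubits."""
    return math.log2(required_qubits / current_qubits)


if __name__ == "__main__":
    current = 17            # state of the art quoted in the talk
    required = 250_000_000  # estimate quoted for a 1024-bit RSA modulus
    print(f"gap factor: about {required / current:,.0f}x")
    print(f"doublings needed: about {doublings_needed(current, required):.1f}")
```

The result is a little under 24 doublings, and it says nothing about how long each doubling might take or whether coherence times would keep up.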

V

Okay. We should get him to come and say that to the IETF, and see how you guys react to that. Yeah. Okay, so is there a coming cryptopocalypse? I should never put a word on a slide that I can't actually pronounce; I find "apocalypse" very hard to say. I've done it twice correctly now, and I think I'll quit there. We don't know whether there'll be a scaling breakthrough or not. If it comes, it would be pretty catastrophic for the Internet and for Internet security.

V

So, as I mentioned before, Shor's algorithm would mean that all currently deployed public key cryptography on the Internet today would be broken, without exception. Okay, you can break elliptic curve cryptography, all discrete-log-based cryptography based on finite fields, all RSA-based cryptography, and that's pretty much what we have in deployment today. Interestingly, because of the key sizes and the sort of scaling properties of quantum computation, elliptic curve cryptography would break before RSA.

V

So elliptic curve cryptography is weaker for the same key size, or for a smaller key size. And one of the things to think about here is that an attacker, a well-resourced attacker (think nation-state adversary, NSA) could capture interesting... okay, you're catching up? Good. Okay, a nation-state adversary could capture interesting-looking Diffie-Hellman exchanges now, okay, so from my email server to Eric's mail server, where we're discussing this talk and what I am going to say. I think there was a question? Yeah.

S

Just a quick question on the ECC point. Richard Barnes. Has this point actually gotten some analysis, besides the hand-waving "keys are longer, need more qubits"? Because there are time-space trade-offs that apply here, and so, even though you might be able to get by with fewer qubits, you might need a longer coherence time. Is there a detailed analysis of all of this?

V

W

Paul Hoffman here. There was just a paper, like two months ago, on this, which actually says that maybe ECC is going to be almost as hard for equivalent strengths. The last thing I heard was, you need many more registers, so you need a bigger computer. I believe the answer is: there's not agreement yet. There probably will be agreement on this within the next few years.

V

Thank you very much for that clarification, Paul. Okay, as I said, I'm not an expert. So if people here know more, please come and grab the mic and give the rest of the presentation; that would be really great. I could sit down. Okay, so would we really expect some warning of impending disaster? We would hopefully see it in research papers, published research, but of course you don't necessarily know what's going on inside the government research labs, and we know that replacing crypto at scale takes time.

V

Okay, it took a long time to get rid of MAC-then-encrypt in TLS; it's still hanging on there. I think we kind of killed RC4, but still, it takes time, and you'll still see it in, I don't know, corporate data centers, where they don't really keep that up to date with security. Oh sorry, I didn't say that, I didn't say that. Okay, replacing crypto at scale takes time and, of course, as I mentioned, traffic captured now could be broken later.

V

So if you've got data that needs to be kept secure for decades, and you think there's a risk of large-scale quantum computation, then you need to apply some kind of precautionary principle and think about migrating your crypto sooner rather than later.
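The precautionary argument can be written as a simple inequality, often attributed to Michele Mosca: if the time the data must stay secret plus the time needed to migrate exceeds the time until a large-scale quantum computer exists, captured traffic is already at risk. The sketch below encodes just that check; the function name and all the year figures are illustrative assumptions, not numbers from the talk.

```python
def needs_migration_now(
    secrecy_years: float,
    migration_years: float,
    years_to_quantum: float,
) -> bool:
    """True if data captured today could outlive today's public key crypto.

    secrecy_years:    how long the data must remain confidential
    migration_years:  how long it takes to deploy replacement crypto
    years_to_quantum: a guess at when a large quantum computer arrives
    """
    return secrecy_years + migration_years > years_to_quantum


if __name__ == "__main__":
    # Hypothetical scenarios: long-lived records versus short-lived sessions.
    print(needs_migration_now(secrecy_years=30, migration_years=5, years_to_quantum=20))
    print(needs_migration_now(secrecy_years=1, migration_years=2, years_to_quantum=15))
```

The hard part in practice is the third argument, which, as the talk stresses, nobody can estimate with confidence.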

Okay, there's even a conference for this. It's called Catacrypt. It's a pretty doom-laden conference, but, you know, you could go. Okay, so how should we move forward from here? Well, this is not the way to move forward. Let's think about post-quantum cryptography

V

instead. What is PQC? Post-quantum cryptography is cryptography based on conventional techniques, so conventional public key cryptosystems. We would compile these on your computer and run them on a classical computer in a reasonable amount of time, and we believe, to the best of our knowledge, that they resist the known quantum algorithms, so Shor's algorithm and so on. So this area is called PQC, and sometimes it's called quantum-safe cryptography. Sometimes it's called quantum-immune cryptography.

V

If you work in the area of quantum key distribution, and you're a physicist, and you want to embrace post-quantum cryptography, then you call it quantum-safe, okay, so you are bringing other things into your fold and extending your reach and so on. So there's a little bit of politics here. We're going to call it post-quantum cryptography. The main candidates for this (and this is where I would have had the equations, had I been braver)

V

are lattice-based cryptography, which has been studied for twenty years now, I think; and code-based cryptography, which has actually been studied for much longer. The McEliece code-based scheme uses Goppa codes. These are error-correcting codes, and it was already being proposed and explored in the late 1970s and early 1980s. There's newfangled stuff based on nonlinear systems of equations. They have a pretty patchy history; there's been a lot of schemes proposed and then broken. We're still finding out what makes these problems hard. And then the latest, greatest thing is elliptic curve

V

isogenies. If I was a tech investor, I would not invest in elliptic curve isogenies right now. They're extremely new, and we're still really figuring out what kind of security they offer. So there's four main approaches that the researchers are currently exploring, and these different approaches could be vulnerable to further advances in quantum algorithms. So it could be that tomorrow Peter Shor comes up with a new algorithm that solves hard lattice problems. We simply don't know.

V

What we do know is that smart people in the quantum algorithms community have been working for a long time to develop new quantum algorithms, and so far, with one exception, none have applied to lattice-based schemes or to these other schemes. The exception is this paper by Eldar and Shor that was published much earlier this year and then almost immediately withdrawn, because there was a bug in the paper.

V

It was a very unfortunate thing, because this was the first time that somebody of Peter Shor's stature had something interesting to say about lattice-based cryptography, and this was kind of a wake-up call for the classical cryptography community. This was a very interesting piece of news. It turned out that the parameters that he was able to attack using his quantum algorithm were not the parameters that we would use in cryptography, but still, it was maybe the first chink in the armor for lattice-based crypto.

V

So this was a significant thing, but as I say, it was withdrawn. Maybe he'll come back with a new idea, working with Eldar, in the future. We just don't know. There was also a paper published by GCHQ in the UK (now renamed as NCSC) called Soliloquy, and this was an explication of their failed attempt to build a lattice-based cryptosystem that was quantum-immune; they found a quantum attack against their particular scheme.

V

So there's another data point there for you, that maybe this stuff has some way to go before we really understand its security against quantum algorithms. Even the conventional security of these schemes is not well understood in all cases. We have a pretty good handle on the security of hash-based signature schemes, for example XMSS, which has been working its way through CFRG and is close to being an RFC.

V

Now,

there's

a

later

scheme

called

Sphinx,

which

is

developed

by

some

academic

researchers

a

couple

of

years

ago,

and

we

have

a

pretty

good

handle

on

how

to

choose

parameters

to

make

these

kinds

of

things

secure.

They

rely

on

pretty

standard

properties

of

hash

functions,

which

we

believe

are

not

really

susceptible

to

quantum

attacks.
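
To make the point about hash functions concrete, here is a minimal sketch of a Lamport one-time signature in Python. This is not XMSS or SPHINCS, which are far more elaborate; it is the simplest hash-based scheme, and it is offered only to illustrate that security rests on standard hash function properties. The function names are illustrative assumptions, not taken from any specification.

```python
import hashlib
import secrets


def H(data: bytes) -> bytes:
    """SHA-256, used both to hash messages and to build the public key."""
    return hashlib.sha256(data).digest()


def lamport_keygen():
    # 256 pairs of random secrets; the public key is their hashes.
    sk = [(secrets.token_bytes(32), secrets.token_bytes(32)) for _ in range(256)]
    pk = [(H(a), H(b)) for a, b in sk]
    return sk, pk


def _message_bits(msg: bytes):
    # The 256 bits of the message digest, most significant bit first.
    digest = H(msg)
    return [(digest[i // 8] >> (7 - i % 8)) & 1 for i in range(256)]


def lamport_sign(sk, msg: bytes):
    # Reveal one secret per digest bit. A key pair must sign only ONE message.
    return [sk[i][bit] for i, bit in enumerate(_message_bits(msg))]


def lamport_verify(pk, msg: bytes, sig) -> bool:
    bits = _message_bits(msg)
    return len(sig) == 256 and all(
        H(s) == pk[i][bit] for (i, bit), s in zip(enumerate(bits), sig)
    )
```

The signature here is 256 values of 32 bytes each, so 8192 bytes for a single message, and the key pair cannot safely be reused. That is the kind of size and usage trade-off that schemes building on this idea have to manage.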

We

even

have

some

lower

bounds

on

the

strength

of

quantum

attacks

against

these

things,

but

for

things

like

lattice

based

crypto

and

code

based

crypto

and

nonlinear

systems

of

equations,

the

academic

research

community

is

really

still

digging.

V

X

V

A

question

of

parameter

selection

and

yes

and

no

I

mean

there's

trade-offs

between

the

number

of

signatures

you

can

sign

and

the

size

of

signatures

and

the

cost

of

signing

and

so

on.

I

think

on

the

next

slide.

I'll

come

to

this

point,

talk

about

some

of

the

characteristics

of

these

schemes

and

maybe

I'll

try

and

address

it

there.

Okay,

yeah!

Here

we

go.

So

it's

true,

and

this

is

really

what

Steven

is

hinting

at,

that

the

current

post

quantum

schemes

that

we

have

are

not

as

performant

as

the

pre

quantum

schemes.

V

We've

all

got

very

used

to

elliptic

curve

cryptography

not

really

consuming

that

much

power

on

our

servers

or

you

know

not

that

much

bandwidth

on

our

networks.

So

typically,

what

you're

going

to

find

in

the

future

is

larger

public

keys

and

larger

key

exchange

messages,

larger

cipher

texts,

etc.

I

don't

have

hard

numbers

for

you

here.

We

can

discuss

that

later.

V

If

you

want.

This

field

is

still

very

much

evolving

and

on

the

flip

side,

you

actually

often

get

much

faster

cryptographic

operations.

To

actually

do

an

encryption

with

some

of

these

lattice

based

schemes

is

just

a

matrix

multiplication

and

adding

some

noise

vector.

So

encryption

is

much

easier

than

some

kind

of

finite

field

exponentiation,

for

example.

This

is

good

news.
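
As a rough illustration of "a matrix multiplication and adding some noise vector", here is a toy Regev-style learning-with-errors (LWE) encryption of a single bit in Python. The parameters (n=16, m=64, q=3329) and the function names are assumptions chosen so the example always decrypts correctly; they are far too small to be secure, and Python's `random` module is not a cryptographic generator.

```python
import random


def keygen(n=16, m=64, q=3329, rng=None):
    """Public key (A, b = A*s + e mod q, q); secret key s. Toy sizes only."""
    rng = rng or random.Random()
    s = [rng.randrange(q) for _ in range(n)]
    A = [[rng.randrange(q) for _ in range(n)] for _ in range(m)]
    e = [rng.choice([-1, 0, 1]) for _ in range(m)]  # small noise vector
    b = [(sum(a * si for a, si in zip(row, s)) + ei) % q
         for row, ei in zip(A, e)]
    return (A, b, q), s


def encrypt(pk, bit, rng=None):
    """Sum a random subset of the rows of (A, b); hide the bit in the b part."""
    A, b, q = pk
    rng = rng or random.Random()
    r = [rng.randrange(2) for _ in range(len(A))]
    u = [sum(r[i] * A[i][j] for i in range(len(A))) % q
         for j in range(len(A[0]))]
    v = (sum(ri * bi for ri, bi in zip(r, b)) + bit * (q // 2)) % q
    return u, v


def decrypt(s, ciphertext, q):
    """v - <u, s> equals noise + bit*(q//2); round to the nearer of 0 and q/2."""
    u, v = ciphertext
    d = (v - sum(ui * si for ui, si in zip(u, s))) % q
    return 1 if q // 4 < d < 3 * q // 4 else 0
```

Encryption is only additions and multiplications modulo a small number, with no exponentiation, which is why it can be fast. The cost shows up elsewhere: even at these toy sizes the public key holds 64 x 16 + 64 numbers, and a ciphertext carries 17 numbers to protect one bit.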

V

The

performance

may

suffer

as

we

refine

our

understanding

of

how

hard

the

hard

problems

are

in

this

area,

as

we

refine

our

understanding

of

how

to

choose

parameters

for

security,

so

better

attacks

which

will

emerge

over

time

as

people

tweak

their

code

and

come

up

with

new

ideas,

imply

that

larger

parameters

are

needed

for

the

schemes

and

that

can

also

lead

to

particular

approaches

just

being

completely

abandoned.

So

we've

seen

that

a

lot

with

some

of

the

systems

of

equations

approaches.

V

People

had

ideas,

they

tweaked

them,

retweaked

them,

reinterpreted

them,

and

eventually

they

said:

okay,

we

give

up.

We

can't

make

this

secure

using

this

current

set

of

ideas.

And

parameter

selection

in

this

area

is

a

much

more

complicated

question

than

it

is

for

RSA

and

ECC.

We've

got

used

to

magic

numbers

like

2048

bits

for

RSA,

or

3072

bits

if

we

were

crazy

about

security,

or

256

bits

for

ECC.

V

There

are

more

parameters

to

play

with

in

the

post

quantum

schemes

and

it's

harder

at

the

moment

to

figure

out

exactly

how

to

set

them.

Put

another

way,

we're

roughly

where

we

were

for

RSA

in

about

1982,

okay,

I

would

say.

I

wasn't

around;

I

mean,

I

was

born

then,

but

I

wasn't

doing

cryptography

then.

I

was

just

out

of

short

trousers,

but

looking

back

in

the

literature,

there

was

a

lot

of

debate

about

exactly

how

big

RSA

numbers

should

be.

V

V

Okay,

I'm

gonna

speed

up

a

little

bit.

So

where

are

we

right

now

with

post

quantum

cryptography?

We

are

still

doing

research,

lots

and

lots

of

research

and

we're

progressing

from

research

toward

standardization

and

deployment

at

a

fair

rate

of

knots.

There's

a

couple

of

examples

here:

there's

something

called

the

Internet

Defense

Prize,

which

is

awarded

at

USENIX

Security

each

year

and

it

was

given

to

a

lattice

based

key

exchange.

V

V

It

was

a

limited

time

experiment

to

understand

the

impact

on

bandwidth

and

processing

time

and

there's

a

huge

amount,

really

an

increasing

amount

of

mainstream

crypto

research

going

on

at

the

moment

in

this

area,

but

we're

not

there

yet

and

on

the

standardization

side,

NIST

last

year

announced

a

process

for

standardizing

post

quantum

public

key

algorithms,

announced

in

2016.

The

closing

date

for

your

submissions,

if

you're

planning

to

make

one,

is

November

the

30th,

2017

and

I

know

there

are

lots

of

academic

and

industrial

research

lab

teams

now

getting

their

proposals

together.

V

I

just

want

to

show

you

how

long

the

process

is

expected

to

run

for.

So

think

back

to

the

days

of

standardization

of

AES

and

add

an

extra

two

or

three

years

to

that,

and

then

at

the

end

of

that

we

may

have

a

portfolio

of

post

quantum

algorithms

that

we

can

use

or

NIST

have

reserved

the

right

to

make

a

decision

that

further

evaluation

is

needed.

Okay,

so

it's

going

to

take

some

time.

Hang

on,

you're

thinking,

I've

heard

about

this

thing

called

quantum

cryptography

and

quantum

key

distribution.

V

Why

are

we

not

using

that

instead

of

post

quantum

cryptography?

Well,

let

me

destroy

quantum

key

distribution.

Well,

"destroy"

is

too

strong

a

word.

Let

me

critique

quantum

key

distribution,

okay,

so

this

is

great,

right?

This

is

what

the

physicists

will

tell

you:

this

promises

security

based

only

on

the

correctness

of

the

laws

of

quantum

physics

and

the

correctness

of

quantum

physics

is

not

in

doubt.

It's

been

verified

to

ten

decimal

places.

V

Maybe

ten

decimal

places

is

not

very

large

when

you're

thinking

about

security

parameters

for

crypto,

okay,

we

consider

an

attack

at

a

level

like

two

to

the

minus

120,

and

then

we

scrap

your

block

cipher.

So

ten

decimal

places

doesn't

really

cut

it

anyway.
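
The comparison between "ten decimal places" and an attack level like two to the minus 120 can be checked with a line of arithmetic. The sketch below, with hypothetical helper names, converts between decimal digits of precision and bits.

```python
import math


def decimal_places_to_bits(places: int) -> float:
    """Number of bits carrying the same precision as `places` decimal digits."""
    return places * math.log2(10)


def bits_to_decimal_places(bits: int) -> float:
    """Number of decimal digits carrying the same precision as `bits` bits."""
    return bits * math.log10(2)


if __name__ == "__main__":
    print(f"10 decimal places is about {decimal_places_to_bits(10):.1f} bits")
    print(f"2^-120 is about {bits_to_decimal_places(120):.1f} decimal places")
```

Ten decimal places works out to a little over 33 bits, whereas a margin of 2^-120 corresponds to roughly 36 decimal places, so the experimental confirmation is a long way short of the margins cryptographers usually demand.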

Okay,

and

this

is

often

contrasted

to

the

security

offered

by

public

key

cryptography.

We

always

said

that

public

key

cryptography

is

vulnerable

to

quantum

computers

and

quantum

key

distribution

would

not

be

etc,

etc.

I

got

some

quotes

together

here.

This

is

from

Brian

Lowans,

Dr.

V

Brian

Lowans

from

QinetiQ:

quantum

cryptography

offers

the

only

protection

against

quantum

computing,

and

all

future

networks

will

undoubtedly

combine

both

through

the

air

and

fiber

optic

technologies.

Okay,

here's

another

example:

all

cryptographic

schemes

used

currently

on

the

internet

would

be

broken.

That's

not

actually

true.

This

was

said

by

Gilles

Brassard,

who

is

an

IACR

fellow

and

ex

editor-in-chief

of

the

Journal

of

Cryptology,

so

he's

a

pretty

solid

cryptographer,

and

he

said

that

at

a

launch

meeting

of

some

Institute.

This

is

an

early

example

of

alternative

facts.

V

Here's

MIT

Technology

Review

as

early

as

2003:

QKD

is

one

of

10

emerging

technologies

that

will

change

the

world.

Even

Burt

Kaliski,

who

many

of

us

know

and

highly

respect,

said

back

at

this

time:

if

there

are

things

that

you

want

to

keep

protected

for

another

10

to

30

years,

you

need

quantum

cryptography.

V

These

are

examples

that

I

gave

at

a

talk

10

years

ago

this

year

at

the

Fields

Institute

at

the

University

of

Toronto.

So

these

are

all

10

years

old.

So

what's

the

big

holdup?

Why

are

we

not

already

using

quantum

key

distribution?

Well,

I'll

give

you

four

reasons

and

I

have

backup

slides.

So

if

anybody

wants

to

debate

this

with

me,

let's

discuss

it

in

the

coffee

break

after.

QKD

doesn't

actually

do

what

it

says

on

the

tin.

V

It

doesn't

distribute

keys,

it

expands

existing

keys

and

if

you

already

have

a

key

in

place,

you

can

expand

it

in

all

kinds

of

ways

and

build

as

many

keys

as

you

need

to

using

things

like

key

derivation

functions.
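
As an illustration of expanding one existing key into as many keys as needed, here is a minimal sketch of HKDF (the extract-then-expand construction from RFC 5869) using only Python's standard `hmac` and `hashlib` modules. The salt, key, and label values in the usage note are made-up examples.

```python
import hashlib
import hmac

HASH_LEN = hashlib.sha256().digest_size  # 32 bytes for SHA-256


def hkdf_extract(salt: bytes, input_key: bytes) -> bytes:
    """Condense the existing shared key into a fixed-size pseudorandom key."""
    if not salt:
        salt = b"\x00" * HASH_LEN
    return hmac.new(salt, input_key, hashlib.sha256).digest()


def hkdf_expand(prk: bytes, info: bytes, length: int) -> bytes:
    """Stretch the pseudorandom key into `length` bytes bound to `info`."""
    if length > 255 * HASH_LEN:
        raise ValueError("requested output is too long for HKDF")
    output = b""
    block = b""
    counter = 1
    while len(output) < length:
        # Each block chains the previous block, the label, and a counter byte.
        block = hmac.new(prk, block + info + bytes([counter]),
                         hashlib.sha256).digest()
        output += block
        counter += 1
    return output[:length]
```

For example, with `prk = hkdf_extract(b"salt", b"pre-shared key")`, calling `hkdf_expand(prk, b"encryption", 32)` and `hkdf_expand(prk, b"authentication", 32)` yields two independent-looking 32-byte keys from the same starting key, using nothing more exotic than a hash function.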

OK,

QKD

has

significant

limits

on

both

range

and

rate.

Range

is

the

most

fundamental

one.

You

may

have

heard

about

the

Chinese

quantum

key

distribution

network

running

from

Beijing

to

Shanghai.

It

sounds

very,

very

impressive,

but

it

does

not

provide

end-to-end

security.

V

It's

a

sequence

of

links

joined

together,

and

at

each

intermediate

point

you

can

read

the

traffic

in

the

clear.

Ok,

if

you

want

end-to-end

security,

QKD

is

not

going

to

give

it

to

you

until

we

have

quantum

repeaters,

but

they

require

quantum

computers,

and

I

think

I've

told

you

about

quantum

computers

already.

V

Security

in

theory

does

not

imply

security

in

practice.

In

theory,

they're

the

same;

in

practice,

they're

not.

I

think

Yogi

Berra

said

that.

Here,

the

idea

is

that

we

have

to

build

devices.

We

have

idealized

models

of

the

physics,

but

the

devices

will

have

weaknesses.

They'll

have

side

channels,

you

can

shine

laser

beams

into

single

photon

detectors

and

cause

them

to

go

haywire,

etc,

etc,

etc.

V

Okay,

so

proving

secure

systems

is

different

from

designing

secure

systems

and,

finally,

quantum

key

distribution

does

not

offer

significant

practical

security

advantages

over

what

we

can

currently

do

at

low

cost

with

conventional

techniques,

by

which

I

mean

symmetric

key

cryptography.

OK,

catch

me

later

if

you

want

the

details

on

exactly

why

that's

true.

Ok,

it's

not

just

me;

this

is

my

government.

This

is

the

view

from

NCSC,

or

GCHQ

as

was,

on

quantum

key

distribution.

V

They

actually

took

the

unusual

step

of

publishing

a

white

paper

about

two

years

ago

outlining

their

view

and

here's

what

they

say.

The

summary

is

that

QKD

has

fundamental

practical

limitations,

does

not

address

large

parts

of

the

security

problem