
From YouTube: IETF99-HTTPBIS-20170719-1520

Description

HTTPBIS meeting session at IETF99

2017/07/19 1520

https://datatracker.ietf.org/meeting/99/proceedings/

A

B

B

B

Again, you may have seen this in the last session, but since it's a new session, we should do it. These are the IPR terms that we participate in the IETF under. This is the famous Note Well statement. If you're not familiar with it, you can find it on your favorite search engine by searching for "IETF Note Well". It's important, because IPR protections and antitrust protections are why we do standards, or a large part of it.

B

Likewise, we have other policies regarding harassment, which we take very seriously. We want this to be a professional environment, so if you feel that you're being harassed, or you see behavior that you're concerned about, we have an Ombuds team, which is very hard to pronounce, and these folks here are the people you can reach out to and talk to, either via that email address or in person.

B

B

D

B

You probably won't have too much to scribe right away, so feel free to get set up. We'll use the HTTPBIS Jabber room, just for those who are confused. Agenda bash: all we have is a discussion of the HTTP binding. We've got a presentation from Mike Bishop, the editor, or one of the editors, and then we'll probably discuss some of the issues that are related to HTTP, as I mentioned.

B

B

E

B

E

B

E

E

QUIC has a nice property in its handshake: you can get the version, the crypto, and the ALPN agreement all in between 0 and 2 RTTs, and hopefully, most of the time, that's 0. It has some nice retransmission properties, where we don't retransmit things from cancelled streams, which is not always true in TCP. Most of the frames handle things below where HTTP cares; mostly we care about the STREAM frames and how the data gets transferred. Next slide. This is also handy for a lot of browser and app implementers, because it's a UDP-based protocol: you can implement it at the app layer, or at the OS layer, or some combination of the two. So you can update it at your own cadence, or you can have something in the OS that gets updated in a broad way.

E

E

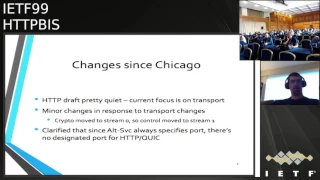

So, in terms of what's changed since we last met in Chicago: not a whole lot on the HTTP level. Most of the work has been on the transport layer, and the changes in the HTTP draft are primarily tracking what's going on in transport. QUIC moved its special stream from one to zero, so the control stream moved from three to one for the HTTP layer.

E

So, if you advance, there are a couple of key issues that I'd like to kind of frame a little bit, if you'll pardon a slight QUIC pun, and then I think most of this time will be for issue discussion. If we want to have some of this discussion as we step through them, I think that would be a good way to proceed through the issues.

E

E

It pushes the resource, you're fine. But in HTTP there's no client response on the push stream; there's nothing for the client to say. Because of the stream state machine as it currently stands, though, the client still has to send an empty STREAM frame closing the stream, saying, "Yeah, I really have nothing to say." Both sides already know that. There should be a better way around that, and there have been a couple of proposals.

E

E

So, next slide. There's been a whole host of discussion about exactly what these should look like. In the current state, we have bidirectional streams: you send in both directions, and the state machine looks a lot like the HTTP/2 one, where you go from idle, to open, to half-closed in one of two states, to fully closed. The discussion has been whether we make those a little bit more unidirectional.
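
The life cycle described here can be sketched as a small state machine. This is only an illustration of the idle, open, half-closed, closed progression as the speaker summarizes it; the state names follow HTTP/2 terminology, while the event names (`send`, `recv`, `send_fin`, `recv_fin`) and the `run` helper are made up for this sketch and do not come from any draft.

```python
from enum import Enum


class StreamState(Enum):
    IDLE = "idle"
    OPEN = "open"
    HALF_CLOSED_LOCAL = "half-closed (local)"
    HALF_CLOSED_REMOTE = "half-closed (remote)"
    CLOSED = "closed"


S = StreamState

# (current state, event) -> next state. Event names are hypothetical.
TRANSITIONS = {
    (S.IDLE, "send"): S.OPEN,
    (S.IDLE, "recv"): S.OPEN,
    (S.OPEN, "send"): S.OPEN,
    (S.OPEN, "recv"): S.OPEN,
    (S.OPEN, "send_fin"): S.HALF_CLOSED_LOCAL,
    (S.OPEN, "recv_fin"): S.HALF_CLOSED_REMOTE,
    (S.HALF_CLOSED_LOCAL, "recv"): S.HALF_CLOSED_LOCAL,
    (S.HALF_CLOSED_LOCAL, "recv_fin"): S.CLOSED,
    (S.HALF_CLOSED_REMOTE, "send"): S.HALF_CLOSED_REMOTE,
    (S.HALF_CLOSED_REMOTE, "send_fin"): S.CLOSED,
}


def run(events, state=S.IDLE):
    """Apply a sequence of events and return the resulting stream state."""
    for event in events:
        key = (state, event)
        if key not in TRANSITIONS:
            raise ValueError(f"{event!r} is not valid in state {state.value}")
        state = TRANSITIONS[key]
    return state


# A pushed response seen from the client: the server sends and finishes,
# but the stream is only half closed until the client also sends a FIN.
pushed = ["recv", "recv", "recv_fin"]
print(run(pushed).value)
print(run(pushed + ["send_fin"]).value)
```

The last two lines mirror the push case raised earlier: with a bidirectional state machine, the client has to send an otherwise empty closing frame just to move the stream from half-closed to closed.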

E

There is a pull request from Ian that proposes marking a stream, when it's opened, as being unidirectional, so that it effectively goes from idle to half-closed immediately. And there's a pull request from Martin that takes the streams to fully unidirectional, which says there's a separate stream ID space in each direction. Each stream has a very simple life cycle. But then, if you want bidirectional streams, somebody has to handle the correlation, either at the QUIC layer or in the application.

E

Architecturally, I kind of like the fully unidirectional approach. It does seem to make a lot of people, I will say, at least nervous, because it's not like TCP, but also because there are use cases for bidirectional streams as well, and I've heard the very reasonable concern that we don't want everyone to have to rebuild how to do a bidirectional stream.

E

A

Martin Thomson. I'm not sure that I'm really prepared to have this discussion here. As I understand it, there are definitely interactions with HTTP, depending on which one of these options we take, but at this point I think we should leave that discussion for the other session, where it's on the agenda. At least there it is. The question that I think is reasonable to ask at this point in time is: are there any requirements that we would have? I don't think that there are any new requirements, but I'd like to hear.

E

B

So I think one way we could look at this is focusing more on the, you know, single stream versus pairs of streams issue than on the unidirectional versus bidirectional one. But I'm curious at this point: who in the room considers themselves to be (I know we don't have membership) an active participant in the HTTP working group? Just raise your hand. Okay. Are any of you not participating in the QUIC group? Is there anyone here who's just in HTTP, but not QUIC?

F

G

Jana Iyengar. You just pre-empted my question: I was going to suggest that maybe we ask folks here who are in HTTP but not QUIC to say something, and obviously there aren't any. You confused me, though, with something that you just said. This isn't about the two streams versus one stream issue, right? It's...

B

E

So currently, and if everybody's been following you probably know this, the connection control stream in HTTP over QUIC is stream one; it used to be stream three. That's a response to crypto moving from one to zero. That carries session-wide information: SETTINGS and PRIORITY frames primarily, as well as potentially extensions in the future. And currently each request straddles two QUIC streams, where you have one stream that carries frames, which is primarily HEADERS and PUSH_PROMISE.

E

The other stream carries the unframed data, so that you don't have additional framing at the HTTP layer. If you go forward one slide, it kind of illustrates the rationale of why we went to two streams in the first place. There are two key reasons. One of them is HPACK: HPACK assumes that all data has been delivered previously, in order and reliably, because HTTP/2 is over TCP.

E

So if you have any HPACK frame where the packet containing that frame gets dropped and the stream gets reset, it's not retransmitted, and HPACK dies horribly. So one reason for having two streams is so that we can say of one stream, "thou shalt never reset," and the other stream hopefully carries the bulk of the data, so you can reset that freely if you want to.
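
To see why a lost header block is fatal for a stateful compressor, here is a deliberately simplified toy. It is not the HPACK wire format: the `insert` and `ref` operations and the `ToyDecoder` class are invented for this illustration, and only the idea of a shared, order-dependent dynamic table (newest entry at index 0) is borrowed from HPACK.

```python
class ToyDecoder:
    """Toy decoder with an order-dependent dynamic table (not real HPACK)."""

    def __init__(self):
        self.table = []  # newest entry first

    def decode(self, block):
        headers = []
        for op in block:
            if op[0] == "insert":
                # Literal header that is also added to the dynamic table.
                _, name, value = op
                self.table.insert(0, (name, value))
                headers.append((name, value))
            else:
                # ("ref", index): look the header up in the dynamic table.
                _, index = op
                headers.append(self.table[index])
        return headers


block1 = [("insert", "user-agent", "toy/1.0")]
block2 = [("insert", "cookie", "a=1")]
block3 = [("ref", 0), ("ref", 1)]  # encoder means: cookie, then user-agent

# Delivered in order and reliably: the references resolve as intended.
in_order = ToyDecoder()
for block in (block1, block2):
    in_order.decode(block)
print(in_order.decode(block3))

# block2 was on a stream that got reset and is never retransmitted.
lossy = ToyDecoder()
lossy.decode(block1)
try:
    print(lossy.decode(block3))
except IndexError:
    print("decoder state diverged from the encoder")
```

In the lossy run the first reference silently resolves to the wrong header before the second one fails outright, which is the kind of divergence the "thou shalt never reset" stream was meant to rule out.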

E

But if you go forward one slide, we've also run into reasons why we might want to go back to one stream, and the biggest one there is that QUIC gives no ordering guarantees between streams. HTTP/2 has a SHOULD that the PUSH_PROMISE frame should always come ahead of whatever on the main stream references the thing that you're pushing. That could be the HEADERS frame, because you've got a reference in a Link header, but it could also be a reference to something that is in the body of the resource, and there's currently no way to correctly order the PUSH_PROMISE frame on the header stream with something that wants to be next to it in the body.

E

So if we want to move back to one stream, we're going to have to fix the vulnerability to loss in header compression. I've got slides on that later, but there are ways to do that. As far as the overhead that we save by not having DATA frames, that's relatively small: you've got a four-byte header, and the size of the DATA frame can be up to 64K. At that point you have to start having multiple DATA frames, but even there we could change the max size of a frame if we want to. And some of the other proposals for how we could fix the ordering and have multiple streams actually still use the DATA frame on the headers stream, which says, "Now you need to go look at this other stream to find a chunk of the body, read there, and then come back here for the next thing," which effectively makes it one stream.
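
The overhead argument is simple arithmetic. The sketch below uses the two numbers quoted in the talk (a four-byte frame header and frames of up to 64K); treating "64K" as exactly 64 * 1024 bytes is an assumption made for the example, as is the helper name `framing_overhead`.

```python
import math

FRAME_HEADER_BYTES = 4          # "a four-byte header", as quoted in the talk
MAX_FRAME_PAYLOAD = 64 * 1024   # "up to 64K"; exact limit assumed here


def framing_overhead(body_bytes):
    """Bytes of DATA frame headers needed to carry a body of this size."""
    frames = math.ceil(body_bytes / MAX_FRAME_PAYLOAD)
    return frames * FRAME_HEADER_BYTES


for size in (1_000, 100_000, 1024 * 1024):
    overhead = framing_overhead(size)
    print(f"{size:>9} byte body: {overhead:>3} bytes of framing "
          f"({overhead / size:.4%})")
```

Under these assumptions a 1 MiB body needs 16 frames and 64 bytes of headers, well under a hundredth of a percent, which is the sense in which the saving from unframed data is "relatively small".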

E

Anyway, oh, and one thing I forgot to say: the other piece of the reason why we wanted two streams was so that we could separately flow-control headers and body. In HTTP/2 there is no flow control on headers. There's some disagreement about whether or not that was the right decision, but QUIC originally allowed you to exempt some streams from flow control, and that property has been removed from QUIC, so that's no longer something that splitting into two streams affords us. Now, at the interim, there seemed to be pretty strong consensus about moving toward one stream, so I'm planning to put up a pull request and probably merge that fairly soon. But I would like to hear comments here from the larger working group, if anyone thinks that's a horrendously bad idea.

A

Is this questionable anyway? The idea of having synchronization points between streams, and all that sort of business, is something that just adds more complexity, and I think it's just kind of unmanageable, ultimately. And we have a really simple solution.

A

E

G

Jana Iyengar. I generally agree with what Martin said. I don't think the mod 4 is that tricky to do; I mean, it's mod 4 instead of mod 2. But I think the debilitating problem for me with two streams instead of one, as we have it right now in the draft, is the one that's on this picture: the PUSH_PROMISE one. That seems like a pretty bad blow to that whole proposal, and if you have to do any synchronization at all across streams, you've lost the point of streams.

G

It's not the same abstraction anymore, so I completely agree. Early on, way early on, when Mike and I were speaking about this before the draft was even written, I think we were talking about DATA frames as being unnecessary, just because you could use streams; you didn't have to use the HTTP framing. But you're right in pointing out that, of course, that doesn't add that much overhead.

G

Ultimately, it's going to be required anyway if you need to insert a PUSH_PROMISE in the middle of a body, so I'm very much in favor of going towards one stream. It'll be wonderful to have that decision made so that we can do other things. Last point: yes, HPACK is going to be broken for just a little bit, but in the QUIC working group we are anyway trying to move towards either QPACK or QCRAM or one of those proposals. We know that.

H

Subodh Iyengar. I also wanted to echo my support for the one-stream proposal. I think it also removes the precedent of interrelationships between streams, so that when other application transports are designed on this, they take the example and say that you can just use the next stream, and you don't need to maintain complex relationships between streams. It might, in the future, simplify multiplexing application transports over one QUIC transport as well. We don't have to preserve certain...

B

I

E

E

So, on the note of header compression: we currently still use HPACK as-is in draft four. We have a sequence number on the HPACK frames, which requires you to decompress them in the order they were encoded. It's not any more head-of-line blocking than it used to be, but it's no better, and we'd ideally like it to be better.

E

E

E

QPACK has a new wire format that, if you look at it, will be very, very familiar to you if you have built HPACK before, but it is not HPACK's wire format. QCRAM is much closer to HPACK, and in fact the same compressor could output either one; it has some additions on the HEADERS frame to communicate additional information.

E

I think the way I would generalize it is that QCRAM requires a lot of things that a QPACK implementation might choose to do. So QPACK is more focused on the wire format and lets you manage your trade-off between "I want to guarantee that there will never be head-of-line blocking" and "I want the best efficiency possible and I'm willing to take some risk."

E

A

A

...to the extent that I think I would very much prefer QPACK. The primary one is that it requires knowledge of your packetization logic at the point that you encode headers, and that is hazardous in light of the fact that you might have to send that data in different packets at some point in the future, in case of loss, and there's no treatment of that at all in the draft, unfortunately. I think that's the single most brittle part of the whole thing.

A

And yes, while it doesn't strictly rely on that, I think it relies on that for performance reasons, and the recommendation to have that degree of awareness is problematic. The other thing that I noticed was that it has an eviction race problem, and the way that it resolves that problem is by failing. It's like "halt and catch fire" if this happens, and that to me is problematic for other reasons. We know in HPACK that eviction is pretty damn common; it happens all the time, particularly in long-lived connections. If you set this up for failure, then you're setting yourself up for failure by doing it that way.

B

J

Yeah, so I do want to respond. On the first point, I think maybe the draft could be clearer, in that the packetization is definitely kind of optional and badly in need of data. I'm definitely open to the idea of actually just removing that. In fact, we can get some clarification from Alan, but I think in his study it was not fully implemented.

J

But Alan can clarify. I'm not really convinced; I don't have data yet on how much it moves the needle. It could be that it doesn't move the needle much at all. And I guess I think back to what I heard about the original HPACK, where, you know, there were smarter proposals and, in the end, the performance difference wasn't enough and simplicity sort of ruled.

J

There are sort of two forms of transport coordination in QCRAM. The other one is just knowing when data has been ACKed. That one is kind of needed, but I would argue that that one is not so hairy as the one of knowing where packet boundaries are, so I would hope...

A

I think that's perfectly fine. That is a very reasonable requirement. We have a number of other places in the protocol where knowledge of acknowledgments has such a positive benefit on performance, or what have you, that I think the transport API for QUIC will necessarily have some sort of acknowledgment signals regarding stream data. I think that's a good property.

E

I

F

D

J

So that's another one where I think we really need data. I went with the "nuke the stream" option because I think it's going to be very rare. This isn't "any table eviction nukes a stream". This is a table eviction that is coincident with a packet drop that happens to affect, you know, the current header in flight, so I really think it's going to be quite a corner case. That said, I would also question the basic premise that table eviction is really common and that long-lived connections are very common.

J

J

You know, we have telemetry data on the number of streams over the lifetime of a connection, and there are a lot of relatively short-lived connections out there. In practice, my understanding was that until a year ago nobody increased the table beyond the default 4K. These days quite a few people raise it to 64K, but it's extremely rare to go beyond that. I don't agree that table size and table eviction are actually a first-order issue, and we need data.
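
How often eviction happens depends heavily on the table size, which a small model makes concrete. The sketch below follows the HPACK (RFC 7541) accounting rules, where an entry costs its name length plus value length plus 32 bytes and the oldest entries are evicted first; the workload (200 unique `x-request-id` values) and the `count_evictions` helper are made up for illustration and say nothing about real traffic.

```python
from collections import deque

ENTRY_OVERHEAD = 32  # per-entry overhead defined by RFC 7541


class DynamicTable:
    """Size-bounded header table with oldest-first eviction."""

    def __init__(self, max_size=4096):
        self.max_size = max_size
        self.entries = deque()  # newest on the left, oldest on the right
        self.size = 0
        self.evictions = 0

    def insert(self, name, value):
        entry_size = len(name) + len(value) + ENTRY_OVERHEAD
        # Evict from the oldest end until the new entry fits.
        while self.entries and self.size + entry_size > self.max_size:
            old_name, old_value = self.entries.pop()
            self.size -= len(old_name) + len(old_value) + ENTRY_OVERHEAD
            self.evictions += 1
        # An entry larger than the whole table just leaves the table empty.
        if entry_size <= self.max_size:
            self.entries.appendleft((name, value))
            self.size += entry_size


def count_evictions(max_size, requests=200):
    """Insert one unique 80-byte entry per request (hypothetical workload)."""
    table = DynamicTable(max_size)
    for i in range(requests):
        table.insert(b"x-request-id", f"{i:036d}".encode())
    return table.evictions


print("4K table: ", count_evictions(4096))
print("64K table:", count_evictions(64 * 1024))
```

With this synthetic workload the default 4K table starts evicting after 51 entries, while the 64K table never evicts in 200 requests. Whether real connections look more like the first case or the second is exactly the data the speakers are asking for.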

I

What I was actually going to comment is that I read the current QPACK draft very closely, and on a conceptual level I was reasonably happy with it, but I had a lot of detailed comments that probably need to be resolved in person. So, in my opinion, it's certainly far enough away from anything I would want to do that I would not say yes to it at this moment, and I would rather just stick with the static, you know, HPACK. And the QCRAM draft: I just haven't gotten to read the new version yet.

I

G

Jana Iyengar. I think we should in fact work on gathering more data as well. I think it'd be very useful to actually have more data, and this conversation is not just about design niceties; it's actually about performance. Ultimately, all of this is about performance. If you're able to actually show some numbers about what's common, what's not, and what actually gives us better performance, and if they give us equal performance, then we can talk about what other things are useful. But yeah, I'd like to wait to see some numbers.

B

K

E

E

So we don't allow any changes to the settings once you've sent them, so we remove a lot of the complexity and machinery of SETTINGS ACK. There's been a proposal that we could allow the application layer to stuff a blob into the QUIC transport settings in the handshake, which for us would probably just be something serialized that looks a lot like my SETTINGS frame, dropped in one of those values.

E

So then you would need to include settings for any application that you're offering as part of the handshake. Now, the biggest drawback to that that's been pointed out is that everything that's in the ClientHello, which is where the transport settings are, is sent in the clear, and therefore the entire world would be able to see your SETTINGS frame. Now, in the settings defined in the spec, there's nothing terribly sensitive in there.

E

I

L

M

H

M

I'm Martin [unclear]. That's probably my intuition as well. I mean, it seems like there are two potential advantages to this design. One is that it might be conceptually simpler, and the second is that, I guess, you get to save a round trip, because the client can send its settings in the first round trip. I don't think...

M

G

Jana Iyengar. I'm going to basically repeat what was just said. I agree that having the same settings for HTTP/2 and QUIC have very different privacy properties seems like a problem we'd want to avoid. So yeah, the potential drawback is pretty significant from where I see it.

E

H

E

Do we think that there is a reason that the transport would need to close a stream, and would we be okay with that? Or do we want to put as a requirement on QUIC that the transport should never close something out from underneath us? And I assume that we need the ability to close the connection with an error from the application layer, which implies that there needs to be some ability to carry an application error in the CONNECTION_CLOSE.

A

My preference would be to forbid the transport from closing specific streams. It doesn't know whether those streams contain state that the application depends on, and it doesn't know that killing them is safe, and it cannot know. That means that the error code space for RST_STREAM would be entirely under the control of the application that's being used.

A

A

J

G

On the second discussion point, whether an application can terminate a connection with an error: I'd have to think about it some more, but it seems odd for the application to be able to do that here in QUIC. In TCP, yes, it's done, but in QUIC, given that we expect applications to have a signaling stream, it seems reasonable to use that as a way to signal the other side that the application is shutting down and the connection is closed. For the transport part of this, QUIC just closes: the transport connection is closed as it normally would be.

E

A

Martin Thomson. I think the hazard there is that the connection continues during the time that that signaling is happening, whereas having a single, unified way to say "nah, this is bad, go away" and reusing all of that logic is an efficiency that we can take advantage of. I think it is mainly just an efficiency.

A

I mean, ultimately, the same messages are going to be exchanged, but having the transport aware means that now the transport is no longer doing things like retransmitting packets and providing all of the repair mechanics that it has, and, you know, reading from its send buffers and all of those sorts of other things. It's just going to be simply, "Right, we've initiated the shutdown now." I think it's just an efficiency gain, and that's probably one that's worth having.

G

Jana Iyengar. Actually, what you're pointing out is that it's maybe more than just an efficiency thing, and I think you're right. In this case there's no urgency at the transport, because the transport just sees that the application has shut down this particular stream and does nothing special: it schedules it with other writes, and it could actually be queued way behind other stream writes if things aren't done right. And on the read side it's the same thing: the application is still spending time processing data, while the application on the other side long ago said, "Please shut down everything." So yeah, maybe there is a good reason to do this. I was wondering about the conversation we are having here: it sounds like it should be something that we should either continue or have again in the QUIC working group. But yeah, that's fair enough.

E

So this is an interesting one. I stole a graphic out of a presentation from a while back about how Firefox does priorities, and a number of implementations do priorities by taking idle streams and using them as placeholders. QUIC has a bit of a preference for using streams in order, contiguously, which kind of makes this not work. That's less of an issue now that we have the max stream ID as opposed to max concurrent streams.

D

I mean, I was going to say this is, you know, sort of always a weird little overlap, but the function it provides, the grouping function, is really wonderful. So is it particularly hard to build sort of a fixed set of slots to do this grouping explicitly in our new protocol, to accomplish the same end, but rather than sort of abusing unused stream IDs, to have a table of grouping orders?

A

Martin Thomson. I don't know if Mike can hear us, and that's unfortunate, but one of the things that he said was slightly incorrect. The max stream ID does not allow us to do this, because if we were to allow the use of streams as placeholders, then we have to allow for streams not to be opened up in contiguous blocks. We have to allow them to remain idle, and then we have the possibility of denial of service.

A

Take the case where someone thinks that a stream is being used merely as a placeholder, but then requests start arriving on it. You've had a connection open for several hours, there have been several thousand of these things happening, your maximum number is set to something that is based on this assumption, and now you have a thousand extra streams arriving, and that's just not cool. So I think Patrick's request is perfectly reasonable, and we can realize it if we make a small tweak to the PRIORITY frame: put another flag in the PRIORITY frame header and say, well, you know, the root of this dependency, or the target of this dependency (we'd need two bits), is actually a placeholder, and the value in there comes from a different numbering space. There are other ways you can slice this particular thing, but I think that's reasonable.
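
One way to picture the "different numbering space" idea is a dependency tree whose nodes are tagged as either a stream or a placeholder. This is only a sketch of the concept as described in the discussion, not the PRIORITY frame layout of any draft: `NodeKind`, `NodeRef`, `PriorityTree`, and the fixed `num_placeholders` limit are all hypothetical names chosen for the example.

```python
from dataclasses import dataclass
from enum import Enum


class NodeKind(Enum):
    STREAM = "stream"
    PLACEHOLDER = "placeholder"


@dataclass(frozen=True)
class NodeRef:
    """A priority tree node: (kind, id). Stream 0 and placeholder 0 differ."""
    kind: NodeKind
    ident: int


class PriorityTree:
    def __init__(self, num_placeholders):
        # A fixed set of grouping slots, so placeholder state is bounded
        # and no stream IDs have to be left idle.
        self.num_placeholders = num_placeholders
        self.parent = {}
        self.weight = {}

    def _check(self, ref):
        if (ref.kind is NodeKind.PLACEHOLDER
                and not 0 <= ref.ident < self.num_placeholders):
            raise ValueError(f"no such placeholder: {ref.ident}")

    def prioritize(self, node, depends_on, weight=16):
        self._check(node)
        self._check(depends_on)
        self.parent[node] = depends_on
        self.weight[node] = weight

    def children(self, ref):
        return sorted((n for n, p in self.parent.items() if p == ref),
                      key=lambda n: n.ident)


tree = PriorityTree(num_placeholders=2)
group = NodeRef(NodeKind.PLACEHOLDER, 0)  # e.g. a "scripts and styles" group
for stream_id in (4, 8):
    tree.prioritize(NodeRef(NodeKind.STREAM, stream_id), group)
print([n.ident for n in tree.children(group)])
```

Because the placeholder count is fixed up front, a peer cannot later turn a placeholder into a request, which avoids the surprise burst of extra streams described above.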

D

A

J

Buck. I wanted to respond; I had a question in response. I've heard it articulated a few times that there's, you know, some unhappiness with the h2 priority scheme, and that maybe HTTP over QUIC might actually go for something different that, for example, doesn't have the unbounded state issues. Is the direction to probably stick to the h2 priority scheme, or is there openness to, you know, changing it?

B

So far, in the discussions we've had to date, the feeling that I've heard expressed most is that we want to keep as close to h2 as we can, to minimize introducing, you know, more than one change at a time. Now, I think we'll assess that as we go along, but that's the philosophy that we have in mind overall right now.

A

Right now it's, frankly, massively worrying, yeah, very worrying, and let's not open up too many cans of worms at the same time. This is just one of those ones where I think, well, yeah, it's maybe not ideal, and maybe we aren't happy about it, but if we're going to do it justice we'd have to spend a lot of time on it, and I think I'd rather spend that time on the more important issues, like getting this damn thing working.

B

And just for the record, I see nodding heads in the room. I'd add to that one additional slight thing, which is that I do sense a strong aversion to using this as an opportunity to try things opportunistically. I think we'd need solid data that we were actually making it a little better, rather than just having another go at it.

E

I think I would add to that that, since this is a QUIC working group document, even though a lot of us participate in both working groups, if we were to do something that doesn't directly relate to "we have to change this because of how it interacts with QUIC", I would want to see that as something that the HTTP working group did and sent to us. That's partly why I think a joint meeting like this is the perfect time to discuss whether we wanted to do that, and it sounds like the answer is currently no.

D

D

E

So,

on

the

note

of

how

we

relate

to

HTTP

2,

this

is

a

slide

that

I

had

almost

verbatim

in

Chicago

and

I.

Don't

think

we

quite

reached

a

resolution

there

that

we

have

tried

to

keep

this

as

close

in

spirit

and

similarity

as

weekend,

HTTP

2,

but

because

of

certain

traits

of

quick

and

how

the

integration

goes.

Almost

everything

is

dive,

arranged

a

little

bit

and

I

have

a

section

on

the

spec

that

describes

what

the

differences

are.

E

What

the

similarities

are

and

I'm

wondering

at

what

point

we

actually,

whether

we

want

to

go

into

this

back

and

say

we're

just

going

to

use

separate

IANA

registries

and

call

this

a

different

protocol,

as

opposed

to

being

something

that

is

kind

of

HTTP

and

kind

of

not.

I

was

talking

with

our

devs

as

we

reviewed

things

through

here,

they

said

more

likely,

there's

a

lot

of

places

E

They

could

reuse

code,

but

it

was

looking

less

and

less

likely

that

they

would

be

able

to

use

exactly

the

same

code

on

both

sides

and

likely

it

would

approach

as

a

copy

of

the

HTTP/2

code

and

run

that

over

and

start

adapting

that

to

QUIC,

so

I'm

wondering

do

we

want

to

reflect

that

in

the

document

and

to

what

degree.

B

So

Mike,

it's

Mark.

I

had

thought,

and

maybe

it's

just

my

horrific

memory

but

I

had

thought

that

we

either

agreed

to,

or

at

least

had

a

strong

sense

that

we

were

going

to

do

that.

Personally,

I

find

the

current

registry

documentation

very

confusing

and

I

have

a

strong

personal

preference

for

separating

them.

But

that's

just

me.

A

Yeah,

Martin

Thomson.

Others

may

disagree,

but

I

I

think

that

at

the

point

that

we

made

the

10

or

12

decisions

that

caused

us

to

be

ever

so

subtly

different

in

subtle

ways,

often

in

not-so-subtle

ways,

we

did

actually

make

a

new

protocol.

It's

unfortunate,

but

we

made

a

new

protocol.

There

are

some

things

where

extensions

to

h2

are

very

easy

to

port

across

into

QUIC

and,

for

example,

the

stuff

that

we

didn't

get

to

talk

about

earlier

on

secondary

certificates

A

I

think

will

work

perfectly

well

in

this

context,

but

I

think

we

need

to

have

at

least

some

sort

of

explicit

signal

that

someone

once

has

considered

the

use

of

extensions

or

what

have

you

before

we

just

let

them

work

in

both

protocols.

I

don't

think

having

something

working

both

protocols

is

a

feasible

plan.

B

A

The

question

of

registry

is

fine,

but

echoes.

Echoes

core

concern

is

that

we

don't

end

up

making

it

very

difficult

to

gain

these

properties,

because

obviously,

one

of

the

things

that

we're

trying

to

preserve

here

is

some

sort

of

seamless

reuse

of

the

semantics

that

were

available

to

us

in

other

versions

of

the

protocol.

If

we

create

too

much

of

a

gulf,

we

end

up

with

the

possibility

of

fracturing

between

the

two

of

them,

and

that

would

be

a

very

bad

place.

A

I

would

like

to

see

what

Mike

has

suggested

here,

which

is

a

transitioning

from

HTTP/2

section

that

actually

states

the

principles

under

which

the

design

was

made.

Simply

because

that's

a

commitment

from

us

that

we

will.

We

intend

to

have

this

happen

and

also

to

have

that,

as

a

sort

of

strong

guidance

to

those

people

building

things

on

one

of

these

protocols

that

they

consider

the

other

one

A

when

doing

so,

and

so

we

don't

end

up

in

a

situation

where

someone

unknowingly

builds

a

product,

a

protocol

extension

for

one

or

other

of

the

protocols

that

simply

doesn't

work

in

the

other

one,

and

we

end

up

fracturing

in

various

ways,

because

I

think

that

would

be

very

unhealthy

for

the

protocol.

I

think

we

need

to

move

in

one

direction

as

much

as

possible.

Right.

B

So

I'm

bugs

waiting

but

I

just

want

to

insert

myself

to

answer

that

real

quick.

I

agree

with

that

very

much

so,

but

you

know

maybe

this

is

back

to

BCP

56bis,

most

people

when

they

extend

HTTP

are

going

to

be

adding

headers

or

methods

or

truly

generic

artifacts.

When

it's,

you

know,

version

specific

stuff

like

h2

and

HQ.

B

J

Just

gonna

give

an

example

for

that

guidance

section:

you

know

for

the

foreseeable

future,

any

large-scale

deployment

is

probably

going

to

want

to

have

fallback

and

so

for

the

very

yin

n.

So

that

means

that

some

higher

level

you

know

applications

will

go

through

some

interface.

That

goes

either

direction

like

either

to

h2

or

HTTP

over

QUIC

and

keeping

that

in

mind

in

terms

of

compatibility

makes

a

lot

of

sense.

M

Yeah

I

mean

I,

think

I

mean

like

the

way

Martin

framed

it

so

I

guess

I,

guess

I

want

to

give

a

sample.

Actually

I

mean

I.

Guess

with

this

unfortunate

situation.

We

have

these

things

that

were

designed

assuming

h2

semantics

and

now

they're

hard

to

port

to

QUIC,

but

going

forward

with

opportunity,

pull

things

that

potentially

you

know

or

you

can

take

both

into

account,

and

so

we

can

be

smarter

right

on

and

I

mean

I

was

thinking

earlier.

M

The

origin

frame

is

an

example

of

something

where

you

might

or

might

not

be

smarter,

but

it'd

be

sad

to

have

two

origin

frame

documents,

right?

That'd

be,

like,

silly.

So

I

guess,

do

you

think

it'd

be

possible

to

perhaps

see

a

PR

that

did

both

these

things

and

then

we

could

examine

it?

Sure.

M

E

I'll

be

happy

to

put

together

a

PR

or

actually

there's

an

existing

one

sitting

out

there.

I

would

be

happy

to

update

it

with

more

guidance

and,

in

particular,

I

think

we

might

even

want

to

have

the

IANA,

even

if

it's

a

separate

registry

have

the

guidance

be

that

you

should

not

allocate

the

same

code

point

in

both

registries

to

vastly

different

things.

B

B

B

Just

to

make

sure

we're

on

the

same

page,

you

know

what

I

think

about

that.

You

know

the

origin

frame

good

example.

That

document

should

be

one

doc.

You

know

if

existed,

that

would

be

one

document

and

the

IANA

considerations

section

would

have

two

registrations

and

it's

done.

A

more

complex,

You

know

document

that

had

some

protocol

implications.

Hopefully

you'd

have

one

big

section.

That

was

the

semantics

in

the

core.

You

know

artifice,

syntax

and

then

you'd

have

a

section,

that's

mapping

the

stage

to

which

is

hopefully

very

small.

E

E

E

The

only

path

to

reach

HTTP

over

QUIC

right

now

is

the

alt

service

right,

which

means

you

have

to

have

TCP

embedded

in

there

somehow,

and

we

might

eventually

have

a

world

whether

it's

IOT

or

the

far

distant

future.

Where

not

every

client

wants

to

have

TCP,

we

might

go

the

approach

of

well.

Those

clients

should

just

know

that

the

endpoint

that

they

want

is

QUIC-capable.

If

we

can

think

good

for

that,

we

might

do

something

like

SRV

records

or

alt

service

over

DNS,

but

I

feel

like

before.

E

A

So

I

actually

kind

of

like

the

observation

here

that

we

don't

actually

have

a

URI

that

identifies

something

a

resource

that

exists,

that

is

reachable

only

by

QUIC

or

a

resource

that

sits

on

something

that's

QUIC.

We

have

alternative

services

and

I'm

actually

kind

of

comfortable

with

that

for

the

moment.

That

may

change

with

time.

A

It

doesn't

specifically

say

that

it's

UDP

port

number,

it's

a

number

and

how

the

protocol

interpret

number,

how

you

are

to

interpret

that

number,

given

that

it

is

actually

a

locator

is

something

that

we

do

have

a

little

bit

of

leeway

in

defining,

but

we'd

be

sort

of

pushing

on

revising

or

updating

7230

if

we

started

down

that

path,

but

I

think

we

can

avoid

that.

B

G

Yeah,

Jana

Iyengar.

Yeah.

I

agree.

I

don't

think

that

we

need

to

go

updating

HTTPS

yet.

It

seems

like

it's

certainly

an

interesting

use

case

where

you

know

that

the

other

endpoint

talks

QUIC,

but

not

TCP,

but

you

don't

know

where

to

talk

to

them.

We

certainly

aren't

in

a

place

where

we

need

to

do

this

yet.

We're

pretty

far

away

from

that

world

right

now.

M

M

It

obviously

has

to

be

the

case

that

the

scheme

is

HTTPS

or

we're

like

completely

screwed,

so

I

mean

it

may

be

the

case

that

we

need

to

find

somewhere

in

the

URL

to

say

actually

I

mean

UDP.

I

was

suggesting

to

Lars

before

that

we'd,

you

know,

make

it

a

number

which

was

congruent

to

443

mod

2

to

the

16,

but

that

indicates

UDP,

but

in

any

case

I

think

probably

we

should

punt

this

until

we

have

a

but

I

mean.

Maybe

we

can

queue

up

some

sort

of?

L

L

B

M

L

Ted

Hardie.

it

is

in

fact

not

clear

that

the

IESG

has

that

power.

I

had

a

conversation

with

the

folks

at

IANA,

and

it

would

be

very

valuable

if

we

could

actually

run

the

process

that

IANA

currently

understands

to

be

that

it

is

a

release

and

catch

system

where

the

current

registrant

releases

it

before

it

is

caught.

Now,