From YouTube: IETF99-TSVAREA-20170717-1740

Description

TSVAREA meeting session at IETF99

2017/07/17 1740

https://datatracker.ietf.org/meeting/99/proceedings/

A

Okay, so we have the blue sheets going around. Welcome to TSV area. We have the blue sheets going around, and David Black is our scribe [unclear]. Do we have a Jabber scribe, or do we need one? Maybe, maybe not. I will join the Meetecho and watch the Jabber from up here.

A

A

A

The Note Well: you guys will see that many times this week, but this is another one. We're in Monday afternoon, session 3, and we want to do a little bit of TSV overview and status, and we want to talk about a proposed research group that people in the TSV area will probably be interested in, at least some. Then we're going to have Michio Honda talk about network stacks integrating with NVMM abstractions, and then we're going to have some open mic time.

A

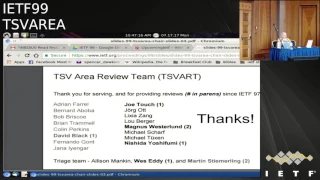

We always like to put up the slide for who's on the TSV area review team and how many reviews they've done. This has actually been a fairly light IETF cycle for reviews, and what's coming to the IESG has been kind of light also, but, like I say, we appreciate every one of them. I would have to say also that the reviews we've been getting in the last couple of IETF cycles have had a lot of influence on how Mirja and I fill out ballots. So we really appreciate that. Yeah.

A

IPPM is doing recharter discussions that would cover the adoption of in-situ OAM. NFSv4 is doing recharter discussions after a really productive couple of IETF cycles. Thank you. Thank you, guys. QUIC has a first implementation draft and was at the hackathon yesterday [unclear] as well.

A

TAPS has its last two working group drafts past working group last call, so it is moving deep into implementation and API space. I have to say, TAPS was the first working group I chartered, and I have never been as happy about TAPS as I am now. Thank you all. TRAM is finishing up its final deliverables. And the TSV working group: you've got 14 active working group drafts plus 17 active related drafts, some of which have been put out for adoption calls as well.

B

So I will only mention the highlights. One highlight is that AQM is basically closed. It is still listed here because I didn't push the button, but all documents are done, and I'm just waiting for one single person to update the last document. Then TCPINC has just submitted their main documents for publication, so they are kind of done. They have some side documents they want to work on. Then also RMCAT is making really good progress.

B

B

A

Thank you. Our documents published since IETF 98 have been, like I say, a little bit light, but that's not unique to transport. It's just kind of a light IETF cycle, since I think we just had a light IETF meeting and that kind of stuff. But we have gotten stuff through, and yeah.

A

Anyway, again, like I say, thank you all for the work you do. We did have one request for feedback from people, and I said I would throw up the slide. These are the details on it, but there's a thread already started on the TSV area list. If you have something to add, please follow up there. It looks like the people have already received several helpful comments, so thank you for that. Anything else that we need to...

B

A

B

Just telling you: before this meeting there was the BANANA BoF. So we're not reporting on that, and we can't announce it because it's already gone, but it has a relation to you in transport. So maybe you should look at it if you're not aware of it. And then the other thing that might be interesting for transport is that there's a proposed research group called Path Aware Networking. Brian is chairing that and has, yeah, a pitch.

C

D

C

C

The thing that led us to put this together as a research group proposal was that we've seen a whole lot of work recently, in BoFs as well as working groups, both in the internet and transport areas, where there's an element of path awareness: where the endpoints of a communication are actually participating more in path selection or path control, or getting more information about the path. So an obvious one of these is Multipath TCP.

C

There's other work in the internet area. There's SPRING, if we're doing source routing with IPv6 segment routing. There's a giant long list of these. There's a lot of work in SDN, which gives you enabling technologies for these things. So we're going to have an initial proposed [unclear] work.

C

If we have the scope of this right, this is something that we're looking to start off as a way to coordinate research on path awareness at these two layers and to bring that into the IETF. We've taken very much a transport focus on this, because I'm a transport person, and an internet-layer focus on this, and we're looking to see if there are comments on how that should be scoped. So please come by on Wednesday afternoon. Thank you very much.

A

B

E

E

E

F

E

E

J

All right, so good afternoon, everybody. This is a little bit off topic from the transport layer, because this is not particularly about some protocol. But given that these days I found that the networking API for the transport layer is quite a hot topic in the transport area, I decided to make this presentation.

K

L

J

J

So basically, main memory becomes persistent. A lot of work has been done; for example, Intel is announcing 3D XPoint, which is like memory, but data stays there all the time, even after reboots. And there are a lot of such non-volatile memory technologies. But the common characteristic is that they are all byte addressable. You know that disks are not byte addressable, so you have to access physical disks at block granularity, but persistent memory is byte addressable. They also have very low latency compared to disks.

J

So the access latency for disks is basically milliseconds, or hundreds of microseconds even for SSDs, but with non-volatile main memory, access latency becomes tens to thousands of nanoseconds. That is very, very low. So in the storage community, a lot of work is going on; in addition to the research papers introduced here, there is a lot of work from industry.

J

J

I mean, on top of the hardware you have the operating system stack, like memory management and the network stack, including TCP/IP. On top of that you have the socket API, which resembles file APIs for networking, and you also have file systems, and applications running on top of it all. When we plug non-volatile main memory into the system, the system currently looks like the following. This is how Linux and Windows work today.

J

So once you plug a non-volatile memory device into main memory, you can create a file on top of it, because you can create a file system on top of it. But once this file is mmap()ed into a user-space application, user space directly accesses the data with load and store instructions. If the data is on disk, you have to copy the data into the buffer cache in memory, and only then can you access it. That is the difference, and...
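
Editor's sketch of the mmap()-style access described above, assuming an ordinary temporary file stands in for a file on non-volatile main memory. The helper names (`store_via_mapping`, `demo_mmap_store`) and the file name are hypothetical and are not from the talk; Python's `mmap` module is used only to illustrate the load/store access pattern.

```python
import mmap
import os
import tempfile


def store_via_mapping(path: str, offset: int, payload: bytes, size: int = 4096) -> None:
    """Write payload into a file through a memory mapping (no write() call)."""
    with open(path, "w+b") as f:
        f.truncate(size)  # give the file a fixed size so it can be mapped
        with mmap.mmap(f.fileno(), size) as region:
            # Slice assignment is the Python analogue of store instructions.
            region[offset:offset + len(payload)] = payload
            # flush() asks the OS to write the mapped pages back (msync-like).
            region.flush()


def demo_mmap_store() -> bytes:
    """Store 5 bytes via the mapping, then read them back with plain file I/O."""
    with tempfile.TemporaryDirectory() as d:
        path = os.path.join(d, "region.img")  # hypothetical stand-in for an NVMM file
        store_via_mapping(path, 128, b"hello")
        with open(path, "rb") as f:
            f.seek(128)
            return f.read(5)
```

On a regular disk-backed file the operating system still moves pages through the page cache; the point of the sketch is only that the application touches the data by address rather than through read() and write().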

J

J

So when you move data from the NIC to the final destination, some file, what happens today is that data comes to the NIC and goes up through the network stack; the application reads that data, and the application memory-copies that data from DRAM to non-volatile main memory. So this is what application data reception and data storing look like today. But this is a problem, and I am going to show how this is problematic.

J

So let's think about a simple transaction between a client and a server. This guy, the client, sends some data: "Hey, I want you to keep this data; even after you crash, I want you to recover the data." So in this case, say the client wants to save 1 kilobyte of data. What this looks like is: the client sends some data, and this data arrives at the NIC. Then the data is DMAed.

K

J

It goes to DRAM and is processed by the TCP/IP stack; then the application reads this data and writes it to some file. After that, and this is the important step, it has to issue fsync, or, if the file is already mmap()ed, it has to issue msync, to make sure the data is actually on the physical device: disk, SSD, or whatever. Yep.
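
Editor's sketch of the conventional receive-then-persist path described above, assuming a local socket pair stands in for the client and server and a temporary file stands in for the storage device. The function and file names are hypothetical; `os.fsync` is the standard-library call corresponding to the fsync step.

```python
import os
import socket
import tempfile


def receive_and_persist(conn: socket.socket, path: str, nbytes: int) -> int:
    """Read nbytes from the socket into a DRAM buffer, write it to a file, fsync."""
    received = bytearray()
    while len(received) < nbytes:
        chunk = conn.recv(nbytes - len(received))  # copy: kernel buffer -> app buffer
        if not chunk:
            break
        received += chunk
    with open(path, "wb") as f:
        f.write(received)      # copy: app buffer -> file (page cache)
        f.flush()              # drain Python's userspace buffer
        os.fsync(f.fileno())   # the important step: force data to the device
    return len(received)


def demo_receive_and_persist() -> bytes:
    """Send 1 KB over a socket pair, persist it, and read the file back."""
    client, server = socket.socketpair()
    payload = b"x" * 1024
    with client, server, tempfile.TemporaryDirectory() as d:
        client.sendall(payload)
        path = os.path.join(d, "store.dat")
        receive_and_persist(server, path, len(payload))
        with open(path, "rb") as f:
            return f.read()
```

The two copies marked in the comments are the ones the talk goes on to argue become expensive once the storage device itself is fast.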

J

J

So when we have an SSD or disk, this process took milliseconds. Out of that, the networking part, in this picture up to step number four, took only 40 microseconds, so the cost had nothing to do with networking. But once we replace the disk with non-volatile memory, this process takes only 42 microseconds in our measurement. So now storing data is very cheap, and networking is the bottleneck, so we have to address it.

J

So this is a somewhat more synthetic measurement. We did some tests with one client and one server: the client continually sent HTTP POSTs to save one kilobyte of data, the server took the steps to store the data persistently, and we changed the number of TCP connections. So, compared to [unclear],

J

look only at the white bars and the dark grey bars. The graph on the left shows throughput and the one on the right shows latency, average latency. Clearly, compared to the networking-only case, storing data persistently causes a lot of problems: it reduces throughput a lot and increases latency a lot.

J

So, about copying data: some people say copy is expensive, and some people say copy is not expensive. It depends on the situation. For example, as in this picture, when reading from the network buffer, some kernel buffer, to the application buffer over and over and over, if the application buffer stays at the same memory address, the same location, it's actually pretty cheap, because the application buffer stays in the cache. But...

J

J

Imagine that the destination file is already mmap()ed into the application address space. Here is the result showing where the cache misses happen in the system. When we don't persist the data, that is, when we don't perform the data copy from the application buffer to the log file, the cache miss ratio is only 0.02%, and most of the cache misses happen in the network stack, but only a few. But once we start persisting data, a lot of cache misses happen.

J

So our integration of the network stack and the persistent memory abstraction is basically pretty simple. The one important thing is that we have to allocate the packet buffers, where the NIC DMAs data, in the non-volatile memory region. It is just a file that is created. After that, we have to employ a zero-copy API, because we have seen that copy is expensive. So the overall steps are: data arrives at the NIC, and the application reads that data after TCP/IP processing with zero copy. Then the application...

J

So then, since the memory is already persistent, the application just writes metadata about the particular buffer, for example packet buffer number one, length, and offset. I'm going to show you in more detail next. This is what our system looks like. Basically, we have the NIC ring; the NIC ring is just tied to the NIC hardware. We have a file containing packet buffers, and we have a TCP/IP implementation. So think about the NIC receiving some requests to be stored.

J

In that case, the first packet... sorry, the NIC has just received four packets. Each of them includes data or a client request. Then the first packet is processed by TCP/IP input, and after that the application reads this data. The application finds, "Oh, this data is important," or, "We have to store this data." Then the application opens another file to store metadata, some descriptor for that packet, and says, "I want to save packet buffer zero, offset 105, and length 1000-some bytes." Okay. But after that, there is one more step.

J

So you know that today's NICs, and this is a good thing, when today's NIC DMAs a packet into memory, it only logically DMAs into main memory, because the data actually stays in the last-level cache. So when the NIC receives a packet, the data is not actually in memory yet; it is in the CPU cache. So the application must explicitly flush the data and this metadata to actually store the data persistently. Yes. The next packet is also considered data to be persisted. So...
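
Editor's sketch of the descriptor-plus-flush idea described above, under loud assumptions: two ordinary memory-mapped files stand in for the NVMM packet-buffer region and the metadata file, a slice assignment stands in for the NIC's DMA, and `mmap.flush()` stands in for the explicit cache flush. The descriptor layout (buffer index, offset, length), the sizes, and all names are hypothetical and are not the speaker's actual format or API.

```python
import mmap
import os
import struct
import tempfile

BUF_SIZE = 2048                   # hypothetical size of one packet buffer
NUM_BUFS = 4                      # hypothetical number of packet buffers
DESC = struct.Struct("<IHH")      # hypothetical descriptor: buffer index, offset, length


def open_region(path: str, size: int):
    """Open (or create) a fixed-size file and map it into memory."""
    f = open(path, "r+b" if os.path.exists(path) else "w+b")
    f.truncate(size)
    return f, mmap.mmap(f.fileno(), size)


def persist_descriptor(meta, slot: int, buf_idx: int, offset: int, length: int) -> None:
    """Record where the payload lives instead of copying the payload itself."""
    DESC.pack_into(meta, slot * DESC.size, buf_idx, offset, length)
    meta.flush()


def read_payload(bufs, meta, slot: int) -> bytes:
    """Follow a descriptor back to the payload inside the packet-buffer region."""
    buf_idx, offset, length = DESC.unpack_from(meta, slot * DESC.size)
    start = buf_idx * BUF_SIZE + offset
    return bytes(bufs[start:start + length])


def demo_zero_copy_persist() -> bytes:
    header, payload = b"H" * 105, b"P" * 1000   # offset 105 as in the talk's example
    with tempfile.TemporaryDirectory() as d:
        buf_path = os.path.join(d, "pktbufs")
        meta_path = os.path.join(d, "meta")
        f1, bufs = open_region(buf_path, BUF_SIZE * NUM_BUFS)
        f2, meta = open_region(meta_path, 4096)
        bufs[0:len(header) + len(payload)] = header + payload  # stand-in for NIC DMA
        bufs.flush()                                           # flush the packet data
        persist_descriptor(meta, 0, 0, len(header), len(payload))
        for obj in (bufs, meta, f1, f2):
            obj.close()
        # Simulated restart: map both files again and recover the payload.
        f1, bufs = open_region(buf_path, BUF_SIZE * NUM_BUFS)
        f2, meta = open_region(meta_path, 4096)
        recovered = read_payload(bufs, meta, 0)
        for obj in (bufs, meta, f1, f2):
            obj.close()
        return recovered
```

The payload is written once, into the packet buffer, and only the small descriptor is written separately, which is the property the talk relies on to avoid the application-level copy.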

J

So after that, suppose the third packet was some data which is not to be stored. In that case, when the application finds, "Oh, this data is not important," for example a key-value store GET, it simply skips writing this data back to memory. The final packet was also considered data to be persisted, so it simply flushes the data and metadata.

J

J

So here are some results. We compared this using an extended netmap API, [unclear], extended with a TCP/IP API, and using the data persisting method I explained before. So by avoiding the memory copy we can... Sorry, the white bar is not storing data at all, so that is the theoretical limit of performance. The next bars are storing data by copying data, and the grey ones are our system.

A

J

So now networking is the bottleneck, so we must redesign the networking API. Actually, you know, the socket API today resembles the disk API: we can read and write, and we can sendfile, but there is no efficient mmap for the network stack. So we need more memory-friendly APIs for TCP/IP as well, because it becomes a bottleneck. And we have a lot of ongoing work.

J

So we are brushing up the API for better integration with netmap. Also, currently the packet buffers must be in a single file, but we found that is actually not very convenient because, for example, a database application has multiple files to keep [unclear] or chunk files. So we have to support packet buffers from a file system. We are also building real use-case applications like key-value stores, and some software switches as well. So, again, we are proposing to integrate the network stack and persistent memory.

J

We are building an architecture like this picture: applications such as databases use an API like netmap, because it is a networking version of mmap to a file, but underneath we have both the network stack and the file system, and we have non-volatile main memory and NICs. I know that a transport working group is basically designing a new transport API to better utilize rich transport protocol features. But persistent memory is actually coming not only to data center servers, but also to mobile devices.

J

N

J

J

I found that the TAPS working group is defining how to better utilize transport features. But I think that if we think about an API change, it is quite a big thing. So if we redesign the API, I think we have to take into account not only transport protocol features, but also upcoming devices. Yes.

N

N

O

Tommy Pauly, Apple. So yeah, thank you for showing this; it is really cool. Coming from working on some of the stuff in TAPS and at Apple, you know, we're doing a lot of work on trying to define the API layers. Just recently, we deployed on our mobile devices the fact that, underneath the higher-level APIs, we are using kind of the shared memory model here with the user-space stack. Mm-hmm. So thank you very much for this.

O

O

J

O

O

B

Mirja Kühlewind. So, we had a side discussion which was related to the fact that we're thinking about using a message-based API in TAPS instead of a streaming API. If I get it right, I think that would actually be favorable for your approach, right? Or do you think there's any big difference? Yeah.

J

So actually, the major drawback of using the netmap API, or this kind of memory-based API for networking, is that there is no easy support for streaming. Streaming would be difficult because the application has to chunk the data; the application has to segment the data by itself. But I think the community, or the world, is moving toward accepting this kind of thing. For example, Linux has KCM today, the Kernel Connection Multiplexor, which enables message-based applications to send traffic over TCP.
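
Editor's sketch of what "the application has to segment the data by itself" can look like: a length-prefixed message layer on top of a byte-stream socket. This is not KCM and not the netmap API; it is a generic framing technique, and the 4-byte length header and the function names are assumptions chosen for illustration.

```python
import socket
import struct

HEADER = struct.Struct("!I")  # assumed framing: 4-byte big-endian message length


def send_message(sock: socket.socket, payload: bytes) -> None:
    """Send one message as a length header followed by the payload."""
    sock.sendall(HEADER.pack(len(payload)) + payload)


def recv_exact(sock: socket.socket, n: int) -> bytes:
    """Read exactly n bytes from a stream that may return short reads."""
    data = bytearray()
    while len(data) < n:
        chunk = sock.recv(n - len(data))
        if not chunk:
            raise ConnectionError("peer closed in the middle of a message")
        data += chunk
    return bytes(data)


def recv_message(sock: socket.socket) -> bytes:
    """Recover one message boundary from the byte stream."""
    (length,) = HEADER.unpack(recv_exact(sock, HEADER.size))
    return recv_exact(sock, length)


def demo_framing() -> list:
    """Send two messages back to back and receive them as separate messages."""
    a, b = socket.socketpair()
    with a, b:
        send_message(a, b"first")
        send_message(a, b"second message")
        return [recv_message(b), recv_message(b)]
```

A message-based transport API, as discussed in the question, would move this boundary handling out of each application.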

K

J

M

J

I haven't actually considered using DPDK, because DPDK runs completely in user space, even the device driver, you know. I actually don't like the complete bypass approach; I want to take the good parts of the kernel. Linux, for example, has a good memory management system and very good protection, and...

J

M

J

J

N

P

I have a little evil question. So, as we think that message-based APIs seem to be much better for most kinds of networking traffic, and you say a memory-based API is also much better, do you see there is still room for some kind of stream-based API in the future, or is it just going to go away sometime?

J

K

J

So, you know, with streaming, say on today's TCP, we of course stream data: we can write, say, 10 megabytes of data, and it chunks the data internally. I think we can also write 10 megabytes of data into a UDP socket. I think in that case the UDP layer segments this data into, you know, IP fragments. So anyway, at some point we have to segment the data, I think.

J

B

B

A

It was, you know... That was one of the big considerations for me being willing to serve a third term as your area director. The first blow-up that I had after joining the IESG was the MPLS over UDP IETF last call. Some of you may remember that. And we've moved from that kind of relationship with the rest of the IETF to where we are now getting requests for early transport review from a working group that's considering adopting a draft. You know, it's like...

A

B

A

Q

A

It's also worth me just mentioning that my co-area director's position will be up for NomCom review this year. Please be considering your feedback, because NomCom really appreciates feedback about the incumbents and about the needs of the area. Don't forget that part. They're supposed to be balancing, not just asking, "Do people think this person is doing a good job?"

A

A

And if you don't put your name in this year, I will be up for review the following year. The only feedback that I got from NomCom, when they met with the willing nominees and said, "This is the kind of feedback we got about you," was that serving a third term was a really big hurdle for them to get over. That said, there will not be a fourth. If it takes you...