►

Description

Day 2 of the IAB's Measuring Network Quality for End-Users Workshop, 2021-09-15.

Day 1: https://youtu.be/pFZEa3NN39A

Day 3: https://youtu.be/6q4-G9pnhfY

Workshop page: https://www.iab.org/activities/workshops/network-quality/

B

B

B

D

Okay,

hi

thanks

chris

next

slide,

please

so

today,

I'll

be

sharing

with

you,

some

metrics

that

were

developed

over

the

course

of

multiple

years

of

deploying

a

solution

that

promises

to

improve

users,

internet

quality

with

a

router

that

automatically

adapts

to

their

line.

So

some

of

these

metrics

were

necessary

for

the

automation

functions.

Many

were

necessary

for

triaging

issues

and

to

inform

and

alert

users

about

anomalies

with

their

service.

D

First

of

these

metrics

is

an

accurate

determination

of

line

capacity

which

actually

is

harder

than

held

down

than

one

would

think.

It

takes

multiple

samples,

at

varying

times

across

multiple

days,

to

really

profile

a

line,

since

those

are

not

always

consistent

from

our

data

set.

We

find

that

about

36

percent

of

lines

vary

by

more

than

10

percent

over

our

measurement

periods.

D

D

So,

during

these

capacity

tests,

the

capture

of

latency

under

working

loads

is

performed

to

determine

buffer

bloat

levels

with

the

traffic

manager,

both

disabled

and

later,

with

the

traffic

manager

enabled

to

confirm

whether

settings

are

appropriate

and

just

like

capacity.

Latency

metrics

from

the

router

truly

accurately

profile

current

internet

link

performance

and

allow

for

accurate

reporting

performing

these

latency

metrics

on

an

ongoing

basis,

allows

determining

whether

current

qs

settings

are

sufficient

to

mitigate

buffer

bloat

and

are

used

to

refine

these

settings

dynamically.

D

D

The

depicted

graph

is

a

good

illustration

of

a

cable

line

with

some

significant

sag

in

the

evenings,

which

is

usually

due

to

backhaul

or

loop

congestion,

requiring

the

traffic

manager

to

be

adjusted

down

until

latencies

are

acceptable.

The

system

will

also

dynamically

adjust

upwards

when

latencies

seem

to

have

recovered.

D

This

preserves

a

high

quality

of

user

experience

and,

in

this

case,

there's

still

enough

capacity

for

most

online

activities.

This

user

is

perfectly

happy

with

their

internet

service.

This

reinforces

points

made

in

other

papers

that

qoe

at

the

end

user

level

does

not

necessarily

correlate

with

line

capacity

alone.

A

stable,

low,

latency

line

wins

most

every

time

next

slide.

Please

one

thing

rarely

discussed

are

link

stability,

metrics,

which

are

vital

to

good

qe

link.

D

Loss

is

an

obvious

one,

so

logging

and

reporting

this

helps

users

and

their

isps

have

facts

to

inform

them

about

when

these

events

occur.

This

also

helps

people

understand

when

loss

of

connectivity

is

due

to

local

events

like

poor,

wi-fi

connectivity,

but

the

metric

I'd

like

to

share

a

bit

more

about

is

discussed

further

in

our

document,

and

this

is

the

unstable

line

which

we

define

as

an

increase

in

latencies,

with

a

little

to

no

load.

D

Often

this

is

a

transient

phenomenon

usually

caused

by

failing

modems

or

backhaul

congestion,

or,

as

we

frequently

use

it,

to

detect

a

cascaded,

router

scenario

where

traffic

is

bypassing

our

traffic

manager

in

the

iq

router,

and

this

is

usually

due

to

somehow

the

isp's

all-in-one

wi-fi

being

remotely

enabled

in

our

data

set.

We

see

that

more

than

a

quarter

of

deployments

see

this

at

some

point,

and

you

know

quite

a

few

deployments

have

just

a

tremendous

amount

of

these.

D

D

D

D

C

E

E

E

E

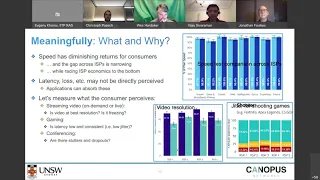

You

know,

obviously,

in

australia,

the

regulator,

measures,

things

like

speed

and

and

I've

just

put

a

sample

graph

of

what

consumers

or

users

get

to

see

in

the

latest

report

that

came

out

a

couple

of

weeks

back

and

and

frankly,

as

a

user.

I'm

not

getting

a

lot

of

information

out

of

this

in

the

sense

that

the

speeds

are

all

like

fairly

close

to

each

other.

The

gap

is

very

narrow.

E

In

fact,

the

top

three

are

within

one

percent

of

each

other,

and

if

anything

you

know

this

race

for

speed

is,

is

causing

the

economics

for

sp's

to

really

raise

to

the

bottom.

Of

course,

one

could

augment

speed

with

things

like

latency

and

loss.

But

again

these

are

things

the

user

does

not

really

perceive

or

understand

what

it

actually

means,

because

sometimes

loss

and

latency

can

be

absorbed

by

the

application.

E

So

we

definitely

take

the

view

that

it's

quite

useful

to

measure

experience

from

the

perspective

of

the

consumer,

exactly

how

they

think

of

experience,

and

by

that

we

mean,

for

example,

streaming

video

and

if

you're,

watching,

netflix

or

disney,

plus

or

prime,

or

what

have

you

you're

very

concerned

as

a

consumer

about

well,

I've

just

bought

a

4k

tv.

Am

I

really

getting

the

video

at

the

best

possible

resolution,

given

the

investment

I've

made

into

a

device

such

as

a

tv,

and

is

my

video

freezing?

E

Am

I

getting

that

buffering

or

that

spinning

wheel?

So

those

would

be

the

most

pertinent

things

to

from

a

user's

perspective

and

in

contrast

to

showing

the

speed,

as

in

the

plot

above,

we

could

show

them

with

the

different

options

they

have

for

isps

in

australia.

We

call

them

rsps

because

they're

retail,

because

the

access

network

is

actually

nationalized

by

the

government.

E

So

how

will

the

rsps

or

isps

compare

in

terms

of

their

capability

to

serve

video

at

the

highest

available

resolution,

and

you

can

go

down

further

and

break

it

up

by

the

provider

of

video?

Obviously,

youtube

is

different

to

netflix.

It's

different

to

disney,

plus

and

so

on

and

so

forth.

You

could

do

the

same

for

gaming.

Users

who

are

gamers

would

very

much

like

to

know

if

they

are

playing

fortnite

or

counter-strike

or

apex

legends.

E

You

know

bursts

of

speed

tests

or

via

latency

probes,

so

that

was

the

first

point

trying

to

really

show

these

metrics

that

are

very

easy

for

the

user

to

understand

and

appreciate

and

relate

to

if

you

could

move

on

to

the

second

slide.

That

will

be

the

second

point

and

final

point

we

want

to

make

today,

which

is

and

sorry

you

can

click

on

to

show

the

animations

as

well

and

yeah.

Thank

you.

E

E

The

answer

is

yes,

or

at

least

we

believe

that,

and

in

fact

we

have

staked

that

on

a

company

that

we

have

started

first

and

foremost

in

terms

of

the

scale.

You

know,

I'm

sure

the

community

is

aware

of

what's

been

happening

in

the

world

of

programmable

networks

and

the

fact

that

you

can

actually

get

a

multi-terabit

switch,

which

is

able

to

export

telemetry

at

really

fine

time

scale.

E

So

it

can

process

hundreds

of

thousands

of

subscribers

worth

of

traffic

and

we

can

isolate

every

single

flow

going

on

date,

streaming,

video

gaming

conferencing

and

so

on,

and

we

can

actually

extract

very

fine

grain

telemetry

down

at

the

sub

second

level,

often

in

the

tens

of

milliseconds

level.

That

is

able

to

reveal

the

exact

behavior

of

the

application

and

by

being

able

to

see

the

behavior,

as

shown

on

those

two

plots

as

examples,

I've

showed

the

the

pulse.

E

E

You

can

actually

make

some

inferences

about

not

just

the

fact

that

this

happens

to

be

streaming,

video

or

gaming,

and

this

is

netflix

and

that's

counter-strike,

but

also

the

whether

or

not

this

netflix

stream

is

working

at

the

highest

available

resolution.

Not

whether

or

not

the

buffer,

the

playback

buffer

on

this

netflix

stream

is

healthy,

or

it's

actually

depleting.

E

So

these

inferences

can

be

made.

There

are

many

academic

papers

that

have

been

published

on

this

in

recent

years.

We

have

published

a

few,

and

these

are

generally

trained

on

data,

because,

obviously

you

know

youtube's

algorithm

for

serving

videos

different

to

netflix's

is

different

to

listening,

plus

and

so

on,

and

likewise

the

games

are

all

different.

So

we

have,

you

know,

developed

ai

models

that

are

able

to

analyze

this

data

and

estimate

experience

quite

accurately,

and

we

have

actually

built

this

platform

and

it

is.

E

It

is

commercially

deployed

in

a

in

a

few

of

the

large

operators

in

australia

and

it's

able

to

show

experience

on

an

application

by

application

basis

across

hundreds

of

thousands

of

subscribers

per

instance

that

we're

putting

in

and-

and

this

is

helping

both.

Of

course,

the

operators

understand

various

you

know,

tuning

of

their

network,

what

impact

it's

having

on

application

experience

and

I'll

just

give

one

or

two

examples

and

stop

one

of

them

is

we

have

an

operator

who's

tuning.

E

The

buffers

in

their

network

is

because

there's

a

shaping

going

on

and

and

the

impact

of

the

buffers

on

different

applications

is

different.

Obviously,

a

larger

buffer

helps

downloads,

whereas

a

shorter

buffer

helps

gaming.

Our

applications

to

reduce

data

so

helping

them

tune

that,

to

balance

the

needs

of

the

various

applications

is

one

and

another

one

we

are

working

with

so.

C

E

E

C

F

Hear

me:

okay,

yes,

okay,

good

morning,

everyone

at

least

from

the

mountain

time

zone

here.

So

I

I'm

gonna

talk

about

for

those

of

you

who

recognize

the

broadband

america.

That's

the

fcc

measuring

broadband

america

program,

that's

published

about

10

reports

now,

since

2011

and

with

dr

levi

prego

who's.

Also

with

me

on

the

faculty

of

cs

and

at

cu

boulder,

we

took

a

look

at

the

latency

under

load

data.

F

That's

included

in

the

fcc

measurements

they

actually

in

the

reports

to

the

best

of

our

knowledge,

haven't

reported

it

in

the

reports

themselves.

But

if

you

go

to

the

raw

data,

they

do

have

a

latency

under

load

test

that

they

run

that

essentially

operates

when

they're

doing

their

10

second

downstream

and

upstream

speed

tests

and-

and

they

send

some

udp

packets

when

that's

occurring

and-

and

so

we

want

to

this

paper-

takes

a

look

at

what

the

data

looks

like

and

we're

just

going

quickly

through

the

results

here.

F

F

We

selected

centurylink

for

their

as

a

ds

representative

of

dsl

comcast

for

cable

and

verizon

for

fiber

to

the

home,

and

so

you

can

see

this

is

what

the

latency

under

load

data

look

like

for

the

last

year

that

they

reported

that

big

chunk

in

september

october.

The

fcc's

withheld

that

data

until

they

published

their

report,

which

hasn't

been

published

yet

on

their

web

page.

And

what

we

can

see

here

based

upon

these

results,

is

that

there's

a

substantial

difference

in

the

magnitude

of

the

downstream

between

these

different

technologies.

F

So

that

may

be

a

a

reason

for

that.

We

don't

know

we're

just

looking

at

the

data

here

and

can't

do

too

much

sleuthing

on

it,

but

we

see

there's

substantial

variation

by

the

technology

there's

also

not

shown

here,

but

in

our

paper

substantial

difference

in

the

downstream

and

upstream

latency

under

load.

F

F

For

the

different

technology

types

you

see

here,

they're

a

little

bit

more

bunched

up,

but

still

with

dsl

having

a

higher

amount

than

the

others

and

again

the

the

higher

magnitude

here

can

be

explained

in

part

by

the

upstream

or

by

just

the

the

speeds

associated

with

these

different.

You

know

broadband

service

speeds

that

are

offered

by

these

different

technologies.

If

we

can

go

to

next

slide.

F

So

this

is

the

historical

or

longitudinal

analysis

of

the

data

and

we

just

focused

on

one

isb

here.

This

is

a

comcast

and

you

can

see

this

this

plot

of

their

average

round-trip

time

and

it

shouldn't

say

2020.

So

it's

a

typo

in

in

the

title

there.

But

you

can

see

over

time

here

that

that

there's

been

an

improvement.

F

There's

a

long

trend

line

here

of

improvement

in

in

the

latency

about

10

every

year

kind

of

averaged

out,

and

you

know

recognizing

that

this

isn't

something

that

you

can

necessarily

predict

about

how

the

internet

performs

based

upon

different

the

changes

in

the

dynamics

associated

with

applications

and

like

but

you're.

Seeing

a

improvement

here

and

the

reasons

for

improvement

are

likely

due

to

the

increasing

service

speeds

that

have

been

occurring

during

this

time,

along

with

improvements

in

the

ip

protocol.

F

F

F

F

F

G

Christoph

I

had

a

super

quick

clarifying

question:

if

that's

okay,

thanks

very

much

david,

this

is

sam

from

sam-

knows

here,

actually

you're,

absolutely

right.

What

you

mentioned

at

the

beginning,

that

the

the

fcc

haven't

published

the

any

of

the

latency

on

the

load

measurements

in

the

nba

reports,

yet

so

first

I've

confirmed

that.

Secondly,

on

the

you

mentioned

something

about

verizon

one

gigabit

there

in

the

raw

measurements

you're

looking

at

the

raw

files

published

on

the

fcc's

website,

nothing

should

have

been

excluded

from

there.

If

there's

something

you're

missing.

F

That's

good

again,

we

didn't

do

it

kind

of

beyond

the

scope

of

this

and

a

quick

turnaround.

I'm

looking

at

the

data

to

try

to

go

the

next

level

to

explain

why

the

fiber,

the

home,

would

be

higher

than

say,

cable,

given

expectations

there,

and

some

of

the

earlier

idle

latencies

that

have

been

reported

by

the

fcc

did

have

lower

latency

idle

latencies

for

the

fiber,

the

home

as

compared

to

the

cable.

F

C

H

D

I

I

You

mentioned

a

number

of

times

that

latency

should

be

better

as

speed

increases

or

you

would

expect

it

to

get

better

and

you

know

just

have

been

getting

better

if

it's

a

latency

under

load

test

that

shouldn't

make

any

difference,

because

the

the

the

flow

should

should

have

be

given

time

to

reach

the

point

where

it's

filling

the

buffer.

The

same

so

it's

I.

I

F

We

have

a

scatter

plot,

that

of

the

data

that

I

didn't

show

here,

that

kind

of

shows

overall,

a

clear.

You

know,

based

on

the

data

that

as

speeds

increase,

the

latency

under

load

goes

down,

and

I

don't

know

if

it's

like

the

transmission

delay,

piece

or

or

for

other

reasons.

We

didn't

have

time

to

kind

of

sleuth

and

figure

that

out,

but

there's

a

clear

association

with

that.

F

A

C

J

J

You

know

and

finding

ways

of

detecting

pulses

of

that

are

really

key

to

a

better

user's

quality

of

experience.

If

you're

interrupted

every

two

seconds

from

whatever

you're

doing

it

can

be

a

quite

annoying

internet.

The

going

back

to

the

previous

set

of

comments

as

we

get

more

and

more

bandwidth.

The

tests

themselves

are

not

running

long

enough

to

actually

detect

what

happens

for

a

long-running

flow.

So

you

know

20

seconds

proved

not

to

be

enough

even

10

years

ago.

K

D

So

those

metrics

are

what

we

believe

are

important

for

end

users

to

be

able

to

understand

what

their

actual

line

quality

is.

So,

if

I'm

going

to

say,

my

isp

is

not

giving

me

good

service

because

my

line

sags

every

afternoon,

you

know

down

to

50

percent,

then

I

need

you

know

the

only

way

I

can

really

know

that

is

not

from

an

application.

D

K

Was

regarding

changes

in

capacity,

you

specifically

thought

we

were

talking

about

changes

in

capacity,

and

my

question

was:

were

you

able

to

observe

changes

in

capacity

that

are

happening

whenever

an

sp

is

changing

their

route

from

a

peering

agreement

to

a

transit

which

would

necessitate

completely

different

usage

pattern

of

the

backbone?

Thank

you.

D

Okay,

yeah,

the

our

observation

and

all

of

our

data

has

pretty

much

shown

us

that

when

we

see

this,

this

phenomenon

described

and

reflected

in

that

graph

of

the

sag

that

that

is

pretty

much

98

caused

by

local

issues.

Bad.

You

know

local

loop

contention

on

cable

systems

on

copper

lines.

It's

gonna

be

the

backhaul

being

insufficient

for

the

load

thanks.

Okay,.

L

The

the

other

thing

is

that

the

buffering

in

these

devices

has

been

scaling

with

each

generation,

because

at

least

when

there's

no

aqm

in

order

to

do

the

single

stream

test

to

maximize

that

bandwidth

value

you

keep,

tcp

has

demanded

that

much

more

buffering

for

each

generation

as

a

minimum

and

memory

has

gotten

very

cheap.

So,

while

I

can

believe

in

your

data

set

that

the

that

the

latencies

have

been

dropping

with

time,

that

doesn't

actually

mean

that

the

worst

case,

which

is

what

really

bedevils

you

has,

has

necessarily

changed.

G

Yeah,

just

more

of

a

clarification

than

a

question

for

the

discussion

between

dave

and

bob

earlier

on.

I

think

it's

perfectly

reasonable

when

you're

looking

at

a

macro

level,

as

dave's

presentation

did,

you'd

expect

the

idle

latency

to

fall

as

you

hit

as

you

move

towards

higher

access

speeds,

simply

because

they're

using

newer

access

technologies

so

pick

on,

like

centurylink,

for

example,

they're

a

blend

of

extremely

slow,

dsl

and

also

extremely

fast

vibe

to

the

home

and

a

whole

bunch

of

ranges

in

between

like

a

vdsl

and

fttc

as

well.

C

M

M

Keep

it

high

enough

that

it

can

maximize

some

sort

of

duty

cycle

there,

but

the

duty

cycle

tends

to

be

60

or

so

that's

what,

in

my

experience,

I've

seen

and

this

the

the

frequency

of

the

square

wave

can

change

depending

on

the

kind

of

streaming

you're

seeing.

This

could

be

other

live

video

streaming

where

this

is

much

smaller,

so

you

can

have

like

one

to

two

second

square

waves

or

you

can.

M

D

Okay,

just

another

comment

around

the

metrics

that

jim

and

dave

mentioned,

which

is

not

only

reinforced

the

need

to

run

these

tests

longer,

but

also

more

concurrent,

streams

are

required.

As

you

go

up

the

the

capacity

tiers.

It's

it's

pretty

easy

to

run,

let's

say

12

streams

on

a

gigabit

download

and

and

pretty

much

on

docsis.

N

Yeah

two

one

question

for

jonathan:

there

was

a

conclusion

that

you

made

that

it's

due

to

last

mile

conditions

I

suggested

in

the

docsis

network,

I'm

curious

if

the

testing

methodology

gives

you

more

information

that

can

support

this.

I

mean

I've

looked

at

the

paper

you're

showing

180

megabits

per

second

mine

for

a

lot

of

the

day,

you're,

not

achieving

that.

N

I

was

on

a

call

a

few

days

ago

with

a

cable

provider

and

they

claim

that

contention

in

the

docsis

network

is

very

rare

or

sorry

in

the

cmts

network.

So

it

is

interesting.

The

other

piece

here

is

this

latency

under

road

test.

Again,

I'm

wondering

if

this

is

realistic

or

representative

what

users

are

going

to

see

if

you're

trying

to

fill

the

buffer

at

the

bottleneck

link.

You

know

how

often

is

the

workflow

that

users

have

actually

going

to

cause

that

to

occur.

N

D

Okay

and

yeah,

we

we

do

see

and

again

our

adjustments

in

the

dynamic

range

are

done

when

the

the

line

is

loaded.

So

we

we

will

not

make

dynamic

changes

just

because

latency

metric

happened

to

come

back

bad.

We

only

do

it

under

known

load

conditions,

in

which

case

we

know

that

you

know

the

increase

in

latency

is

being

triggered

by

the

load.

Now

it

could

it

be

because

of

a

poor

peering

agreement,

or

you

know,

a

really

bad

quality

peering

link.

H

So

this

is

a

way

of

adjusting

themselves

and

the

reason

why

they

have

to

do

this

by

you

know

sending

unlimited

speed

bursts

is

because

you

know

from

the

network.

We

don't

give

them

another

way

to

figure

out

what

actually

the

available

downlink

downspeed

bandwidth

is.

So

there

is,

you

know

some

some

some

argument

to

be

made

that

if

we

had

a

better

network

service

that

would

give

some

idea

about

the

free

head

room.

K

They

just

absorb

the

location

put

their

own

equipment,

which

is

completely

different

from

the

isp

equipment

and

use

that,

I

wonder,

have

you

seen

a

strong

correlation

between

behavior

of

netflix,

facebook,

google,

amazon

and

other

services,

despite

that

prior

of

having

different

technology,

I'm

quite

curious.

How

that

has

been

seen

from

the

outside.

E

So

what

we

measure-

and

maybe

it's

slightly

misleading

when

I

said

we

measure

the

isp.

What

we

measure

is

the

end-to-end

performance

of

netflix.

It

could

equally

be

a

bad

wi-fi

in

your

house.

We

don't

know

right.

What

we

are

measuring

is

end-to-end.

What

is

netflix

doing

and

that

pulse

carries

information

on

whether

or

not

netflix

is

working

at

the

highest

available

resolution

and

we

detect

when

the

resolution

drops

and

we

detect

when

netflix

panics

when

the

playback

buffer

goes

down

and

it

opens

more

connections

and

tries

to

fetch

chunks

in

parallel.

E

So

we

have

built

models

around

the

behavior,

and

that

is

letting

us

make

these

inferences

so

you're

right

as

in

it's

not

only

the

isp

who's

at

fault

it

could,

they

may

or

may

not

have

a

cache.

We

don't

know,

but

we

just

measure

the

end-to-end

performance

of

every

stream

of

netflix.

I

don't

know

if

that

helped.

O

F

David

yeah,

I

just

I

sent

out

on

on

the

slack

line,

just

some

of

the

numbers

of

that

the

fcc

reported

earlier

in

their

data

last

year

on

the

different

isp

type

technology

type.

But

I

think

this

this

question

of

of

what's

the

long-term

trend

here

on

latency

and

the

performance

is

important

for

kind

of

understanding

and

putting

the

right

context

for

different

improvements,

and

if

we

don't

have

an

agreement

on

the

metric

in

particular,

that's

very

important.

F

If

you

know

the

fcc

has

a

10

second

test

and

it

should

be

20

or

30

seconds.

You

know

that's

an

important

thing

to

communicate

out

so

that

we

can

get

because

we

know

speeds

are

increasing

we're

at

one

gig.

We've

got

x-pawn

going

to

10

gig.

So

do

we

anticipate

improvements

or

not

the

you

know,

I

didn't

mention

it,

but

the

aqm

that

comcast

implemented

is

basically

a

50

improvement

year

on

year

for

the

month

of

november.

F

Now

that

you

know

again,

I

understand

that

you

can't

just

take

that

to

the

bank

and

it's

you

know

that

you

can

guarantee

that

type

of

improvement.

But

you

know

if,

as

these

protocols

improve,

there

could

be

some

expected

improvement

in

performance

over

time

as

the

service

evolves,

and

I

think

that's

important

piece

of

calculus

to

understand

how

this

problem

is.

You

know

the

consumer

experience

problem

is

going

to

evolve

over

time.

L

L

If

you

screw

up

the

round

trip

times

in

the

upstream

direction,

it

makes

it

real

hard

for

the

downstream

to

figure

out

how

to

buffer

your

video

right

and

our

applications

are

changing.

The

resolution

of

our

video

streams

is

going

up

the

amount

of

of

of

image

and

video

content

that

people

upload

or

want

a

backup

keeps

going

up.

L

M

Christoph

I

actually

want

to

go

what

what

jim

said.

I

don't

think

we

should

be

over

pivoting

on

netflix,

I

mean

there's

a

there's.

A

lot

of

applications

that

have

many

different

behaviors

and

netflix's

behavior

is

not

irrational

right.

I

mean

they're

serving

that

doing

something

that

is

solving

a

particular

problem.

It's

not

specific

to

netflix

either.

Let's

be

clear

about

that.

This

is

abr

video

in

general.

You

might

see

some

pronounced

effects

with

netflix,

but

it's

going

to

be

there

as

long

as

you

have

abr

video

doing

this

capacity.

M

Probing

thing

bandwidth,

probing

thing

that

it

keeps

doing

and

to

told

us

your

your

point

of

this

is

not

it.

These

are

basically

continuous

testing,

that's,

hence

the

adaptive

in

abr,

it's

not

just

done

at

the

beginning

of

a

stream.

You

can't

just

ask

the

network

hey

what

is

your

bandwidth

and

then

start

streaming?

At

that

rate,

it

is

a

continuous

measurement,

that's

happening.

We

basically

have

to

do

online

measurements.

We

don't

have

a

way

of

simply

querying

and

finding

out

what

the

bandwidth

of

the

end-to-end

network

is.

M

So

there

is

a

real

problem

here.

Element,

this

practical

engineering

problem

here

that

they're

trying

to

solve

it's

not

out

of

nothing,

and

I

think

jeff

made

a

point

earlier

that

maybe

we

should

engineer

the

network

around

this.

To

that

point,

I'll

come

back

to

the

the

the

maybe

something

that

jim

was

noting,

but

I'll

note

it

anyways

that

having

some

amount

of

isolation

across

the

applications

having

some

ways.

M

E

Thanks

yeah,

I

think

good

discussions

and

I'm

also

looking

at

some

of

the

comments

on

slack.

I

think

the

key

point

is

and

that

I'd

like

to

reiterate,

is

by

looking

at

the

behavior

of

any

application

and

netflix

was

just

an

exemplar,

and

you

know

you

have

youtube,

you

have

prime,

and

we

we

can

build

a

data

driven

model

of

how

it

behaves

and

that

can

be

used

to

estimate

experience.

P

I'm

mute

I'd

like

to

make

the

case

that

actually

we

should

pivot

and

we

should

think

long

and

hard

about

video

streamers.

I

lived

through

an

age

where

we

spent

a

huge

amount

of

time

engineering

for

voip

and

it

was

marginal

both

in

terms

of

traffic

volume

and,

most

importantly,

totally

marginal

in

terms

of

isp

revenue.

We

were

spending

a

huge

amount

of

time

for

basically

minute

marginal

pieces

of

traffic.

P

H

You

know,

bandwidth,

probing

of

of

new

flows,

and

so

there

there

are

a

bunch

of

other

things

that

go

into

the

codex

like

you

know,

increasing

decreasing

fec.

But

ultimately,

when

you

give

up

bandwidth,

you

have

to

get

it

back

afterwards

and

I

think

that's

the

the

the

diffic

most

difficult

thing

that

I

think

we

can't

improve

on.

Unless

we

improve

the

network

service

itself.

E

Absolutely

I'll

give

you

a

very

short

answer

and

the

longer

answer

sharath

can

give

maybe

offline,

which

is

that,

luckily

providers,

the

major

providers

like

netflix

have

nerd

stats

and

if

you

know

how

to

enable

nerd

stats

on

your

video,

you

can

train

a

machine

to

automatically

harvest

the

ground

truth

in

addition

to

the

what

it's

seeing

on

the

network

and

the

training

becomes

fully

automated.

But

not

all

providers

give

you

nerd

stats,

so

it

can

be

more

laborious

and

manual

for

the

other

providers.

C

N

I

think

it's,

I

think

it's

a

good

point

that

we

shouldn't

constrain

what

we

consider

in

terms

of

measuring

qoe

too

much

to

any

specific

set

of

applications.

At

the

same

time,

as

other

folks

have

mentioned,

the

vast

majority

of

internet

traffic

is

now

video

traffic.

That's

typically

going

to

be

served

by

cdns

that

are

local

to

the

user,

which

means

the

transit

networks

are

not

going

to

be

involved.

N

Sometimes

those

cdns

even

have

nodes

co-located

inside

the

end

user

isps

network

and

then

that

video

traffic

is

going

to

be

a

continuous

cycle

of

the

player

requests

a

chunk.

The

sender

dumps

it

into

the

network

as

fast

as

possible,

based

on

its

condition,

control

algorithm

and

then

the

receiver

requests

another

chunk

and

the

process

just

continues

to

repeat

based

on

the

receiver's

buffer.

The

other

things

you're

going

to

have

is

image

traffic.

N

That's

going

to

be

similar,

I'm

voting

a

web

page,

I'm

going

to

quickly

request

as

many

images

as

possible

get

them

served

to

me.

So

I

can

display

that

page

and

then

you

have

constant

bitrate

traffic,

that's

kind

of

like

what

we're

on

for

this

call.

There's

my

voice

right

now

has

to

be

carried

to

you.

There's

some

constant

bit

rate

encoding

of

it.

The

video

can

change,

but

in

general

we

need

this

traffic

to

be

constantly

delivered,

but

it

is

all.

N

None

of

these

types

of

traffic

are

representative

of

me

downloading,

a

large

file

and

that's

what

we

typically

see

when

people

are

developing

congestion

control,

algorithms.

Those

are

the

types

of

experiments

they

look

at.

If

I

have

two

senders

or

receivers,

they

are

both

sending

this

large

file.

How

do

they

share

bandwidth,

but

that's

not

as

representative

of

the

actual

traffic.

That's

on

today's

internet

thanks.

L

C

Q

Q

Q

Q

L

C

U

T

In

our

discussions

we

use

characteristic

as

availability,

pathway,

ability,

serviceability

and

got

to

wonder

is

what

do

we

mean

and

how

can

we

measure?

How

can

we

express

this

if

we

want

to

be

to

use

it

as

a

matrix

next

slide,

please

so

error

performance,

oem

tool

sets

include

methods

that

help

us

to

detect

network

defects

and

measure

performance.

T

T

So

we

believe

that

performance

management,

error,

performance

measurement

is

active

oem

in

terms

of

it

can

be

in

packet

switch

networks.

Specifically,

it

can

be

a

measured

using

antibiotic

measurement

methods

according

to

classification,

rfc

7799,

and

it's

not

a

new.

It's

well

known

from

a

concentrate.

T

T

T

T

T

V

Thank

you

very

much

christoph

so

before

I

start

digging

into

the

the

slides

I

want

to

set

a

bit

of

context,

I'm

a

little

bit

of

an

outlier

in

this

workshop.

In

fact,

some

of

you

guys

may

even

think

of

me

as

the

bad

guy

and

as

honored

and

excited

as

I

am

it's

a

little

bit

difficult,

not

to

feel

a

bit

of

the

imposter

syndrome,

but

I'm

hoping

I

can

bring

you

guys

a

bit

of

a

unique

perspective.

V

V

So,

for

years

now,

I've

literally

spent

my

days

working

with

the

whole

ecosystem,

the

sock,

vendors,

the

cp

vendors,

the

platform,

the

web

scale,

the

csps,

the

cdn

vendors

gaming

studios,

you

name

it

all

around

improving

the

quality

of

experience

of

the

end

user.

So

I

bring

a

different

view.

You

guys

are

the

metric

experts

and

on

all

my

friends

at

apple

google

bell

labs,

but

my

job

is

surely

to

understand

how

to

make

this

relevant

to

end

user.

V

So

when

you

guys

come

to

me

with,

I

got

this

new

queuing

algorithm,

I'm

like

great

that's

going

to

help

that

my

kill

debt

ratio

and

if

you

look

at

me

with

blinking

eyes-

and

you

know

what

I'm

talking

about-

then

nobody's

going

to

buy

this

stuff-

so

don't

get

me

wrong.

I

work

extensively

on

queueing

traffic

optimization,

but

my

focus

is

really

to

understand

how

to

translate

this

into

something.

The

consumer

understands

wants

something

that

csp

can

sell.

The

cp

vendor

can

integrate

and

a

stock

vendor

is

willing

to

do.

V

We

heard

some

amazing

comments

from

stuart

j.

You

know

gina,

vijay,

jim

and

so

many

others

on

the

real

world

benefits,

and

I

love

that

so

residential

internet

used

to

be

a

best

effort,

kovit

changed

that

I

think

jimmy

you're

mentioning

that

earlier.

I

totally

agree

people

don't

just

need

it.

It's

a

basic

human

right

in

in

a

sense

you

rely

on

it,

for

critical

services

worked

education.

V

So

what

I'm

going

to

share

with

you

guys

here

is

understanding

how

we

can

solve

that

potato

in

the

microwave

quote

that

came

out

a

couple

of

times.

I

love

that

there

are

ways

to

detect

when

there's

a

period

of

microwave.

Now

we've

opened

up

the

the

cp

environment

yeah,

you

know

containerization

and

I'm

working

with

a

whole

bunch

of

you.

V

Actually,

some

of

you

that

are

here

on

deploying

agents

in

the

cpes

that

will

give

you

that

low

level

access

to

queuing

wi-fi

wan

ran

whatever

you

want,

so

no

longer

you're

gonna

have

to

infer

measurements,

you'll

be

able

to

get

access

to

it.

So

with

that

said

I'll

go

quickly

to

the

slides,

I

want

to

share

some

real

world

results.

I

like

using

the

cloud

gaming

because

it's

a

great

example

of

high

bit

rate

video,

constant

video

with

with

low

latency

and

the

reality

is.

V

As

it's

been

said,

you

know,

90

of

your

issues

are

going

to

be

in

the

home

or

in

the

last

mile,

there's

a

lot

that

you

can

do

to

focus

on

metrics

and

improvement,

but

you're

hitting

a

point

of

diminishing

return.

If

you

don't

focus

on

the

in-home

in

the

last

mile,

that's

really

where

things

matter

next

slide.

V

So

what

we've

done

is

we've

actually

gone

ahead

and

done

some

real

world

measurements

when

I

say

real

world

we're

talking

to

residential

homes

with

real

gamers

pro

gamers

amateur

gamers-

and

I

remember

a

tier

one

operator

saying

you

know

what

we

have

a

fiber

network.

Yet

people

complain

about

our

gaming

and

so

they're,

like

our

ping.

Tests

are

great

and

yeah.

You

know

if

you

look

at

the

lower

left

graph,

their

ping

times

for

that

cloud.

V

Gaming

service

with

nvidia

was

great

62

milliseconds

on

average,

but

you

can

also

see

the

inconsistency

because

of

the

in-home

network

and

wi-fi

and

everything

else,

and

every

time

you

see

those

little

spikes

that

gaming

session,

that

video

conference

was

impacted

when

we

applied

things

like

pi

square

aqm

and

l4s

again

same

households,

same

real

world

users

and

everything

else,

you

saw

a

dramatic

improvement

in

the

quality

of

the

experience,

the

end

user.

I

don't

really

care

that

the

ping

time

improved

from

60

to

42

milliseconds,

that's

irrelevant.

V

It's

a

consistency

that

deviation

from

the

mean

that

really

matters,

and

for

me

personally,

I

had

to

to

be

able

to

understand

that

I

had

to

get

involved.

I

play

games,

I'm

a

twitch

streamer

on

friday

and

saturday

nights,

and

I

saw

my

kill

that

ratio

increased

by

0.5

in

four

weeks.

This

is

what

is

going

to

drive

the

consumers

to

force

the

service

providers,

to

pay

attention

and

to

buy

into

this

stuff

next

slide.

V

We

also

did

the

same

thing

for

video

conferencing

so

with

a

partner

of

ours

domos,

and

I

thank

them

for

being

able

to

share

the

stats.

We

did

a

video

conferencing

using

the

same

technology

using

real

world

enterprise

customers,

a

worldwide

global,

consulting

firm.

If

you

will,

we

took

some

measurements

now

on

video

conferencing

over

time

and

being

able

to

understand

how,

by

focusing

in

the

indian

home

in

the

access

in

this

case

it

was

a

5g

ran.

Could

we

improve

the

video

conference

and

we

did?

V

We

provided

some

really

amazing

results

where

at

the

90

and

99

percentile?

Where

is

really

what

you

want

to

focus?

If

I

have

one

video

blip

in

a

half

hour,

that's

enough

to

enrage

me

if

I

have

eight

hours

a

meeting

and

that

happens

every

hour,

so

it

for

us,

it's

not

the

50th,

it's

not

the

median.

It's

that

90

at

night

and

99

percent

out

that

we

really

want

to

focus

on

and

be

able

to

show

that

you

focus

in

that

last

smile

and

the

in-home.

V

You

can

have

significant

improvement

here,

and

you

know

what

I

I

don't

have

a

bet

in

the

source

if

it's

pi

square

versus

another

algorithm.

What

I

care

about

is:

will

this

translate

into

something

the

end

user

can

feel

and

can

want

and

is

willing

to

pay

for

it

next

slide,

and

in

this

case,

if

you

can

go

through

yeah

exactly

so,

we

want

to

focus

on

tangible,

real

world

benefits

from

the

consumer

point

of

view,

consumers

don't

care

about

cdf

careers,

they

care

about

kds,

they

carry

fps,

they

care

about.

V

All

of

these

things,

and

everybody

said

it

already.

All

my

thunder

has

been

stolen

for

the

last

day

and

a

half,

but

that's

great.

Now

speed

tests

are

reached

at

its

end

game.

We

can

already

provide

300

times

more

peak

capacity

than

the

average

sustain

usage

from

a

broadband

sub.

So

if

you

have

a

500

megabit

connection-

and

you

have

gaming

issues,

you

have

video

conferencing

issues

going

to

one

gig

or

1.5.

Gig

is

not

going

to

solve

any

of

that.

V

You

know

the

consistency.

Issues

also

aren't

from

the

general

internet,

they're

largely

confined

to

that

last

mile

in

home.

There's

a

reason.

70

percent

of

all

the

support

calls

that

come

to

a

service

provider

are

wi-fi

related,

that's

the

reason

they

don't

care.

They

don't

call

to

complain

about

netflix.

They

don't

care

to

complain

about

this.

They

care

to

say

my

wife

is

bad,

and

you

know

platform

and

web

skill

providers.

Apple,

google,

netflix

a

whole

bunch.

You

know

they've

been

you

know,

I

give

them.

V

V

I'm

just

gonna,

I'm

just

gonna

finish

up

with

the

with

this

one

really

here

is.

I

did

because

consumer

surveys,

the

consumers,

are

willing

to

pay

for

better

latency

for

their

own

control.

Latency

70

of

the

population

is

willing

to

pay

from

5

to

20

a

month

and

over

65

of

them

want

the

ability

to

tell

the

latency

agent.

If

you

will,

what

is

important

is

it

gaming?

Is

it

streaming?

Is

it

in

mornings

in

the

afternoon,

even

the

service

providers,

the

nps

score

increase

when

they

give

that

ability

so

last

slide.

V

W

Hi

junior,

I

think,

a

very

good

talk,

and

these

are

some

of

the

problems

we've

seen

operating

at

us

canopus

networks

in

isps

as

well.

What

would

you

think

is

the

point

at

which

we

need

to

intervene

to

help

alleviate

some

of

these

issues.

Was

it

the

home

router

right

within

the

household

premises,

or

was

it

the

cpe

where

typically

isps

enforce?

You

know

the

bandwidth

plans?

V

The

cpe

yeah

definitely

the

cpu

from

historical

perspective.

They

it

was

always

a

cost

at

the

bottom.

Cps

was

give

me

the

cheapest

thing.

I

can

put

the

home

that

gives

wi-fi

and

that

will

you

know

that

I

can

manage

that's

changing

the

cps

are

not

a

cost

anymore,

their

way

to

differentiate

their

way,

to

create

value

and

to

monetize.

So

that's

changing.

You

know

you

can't

go

and

replace

everything,

that's

in

the

field,

but

there's

a

different

mindset

that

the

service

browsers

are

bringing

to

the

table.

Now.

W

V

V

X

So,

for

example,

you

know

rtt,

which

is

latency

loss

information

from

the

network,

reordering

bandwidth

estimates,

so

the

transport

layer

has

really

rich

information

with

the

encryption

at

the

transport

layer

now,

particularly

with

quick.

It

is

also

the

right

layer

to

expose

this

information

up

to

the

applications,

because

the

visibility

in

the

network

is

is

reducing

compared

to

tcp

and

assuming

ideal

apps

in

terms

of

you

know

not

having

bottlenecks

in

putting

enough

data

out

on

the

network.

The

transport

layer

basically

determines.

U

X

U

X

A

very

rich

idea,

or

very

rich

picture

of

why

there

is

increase

in

tail

latency

or

why

certain

requests

are

taking

longer.

So

in

terms

of

what

is

done

being

done

today

in

platforms,

we

have

apis