
From YouTube: IAB workshop on Environmental Impact of Internet Applications and Systems 3: Improvements

Description

IAB workshop on Environmental Impact of Internet Applications and Systems 3: Improvements

Workshop webpage: https://datatracker.ietf.org/group/eimpactws/about/

Papers in GitHub: https://github.com/intarchboard/e-impact-workshop-public

Session 1 (The Big Picture): https://youtu.be/90GxlL34rQ4

Session 2 (What Do We Know?): https://youtu.be/EaNgREHLXRg

Session 3 (Improvements): https://youtu.be/jjEZwuuChZc

Session 4 (Next Steps): https://youtu.be/Pc_XY5sDR58

A

Your position papers are public already. And of course, this is a professional meeting: we expect professional behavior, and no kind of harassment is accepted. A reminder again that there are lots of people here with very different backgrounds, so do explain clearly what you mean, be polite, and learn from the others' viewpoints. I think this matters today in particular, because we will be talking about some of the technical things, so do keep that in mind. With that, I think I will just hand it over to you, Eve.

B

Okay, let me advance to the next slide. One thing that was quite helpful yesterday was to reiterate what some of the goals were, so we've done that again today, and we look forward to you helping us refine our objectives and, hopefully, meet them. We are clearly wandering into territory where we are discussing potential solutions today, along with some of the feasibility behind them and their benefits. Can we quantify those things, so that we can arrive at answers to the questions: are the benefits significant?

B

How do they make an impact, and how much of an impact do they make? For example, some of the points that Vesna made on Monday were quite helpful to consider. We spoke a lot yesterday about how energy usage has remained rather stable or static, but the directive coming from the UN IPCC and elsewhere is the urgency to reduce our usage of resources and electricity and, ultimately, our carbon footprint and emissions.

B

So one of the ways to consider improvements is to ask by how much: is it 10 percent less per year, or some other goal that we're trying to meet? Certainly there have been places like the WRI, the World Resources Institute, that have tried to quantify how much faster we need to accelerate our efforts to meet these goals, in terms of, say, the introduction of renewables, the move off fossil fuels, and how quickly we need to move to the electrification of transportation. So it would be good to have an ambition for our venues, our areas of impact.

B

Another area that was suggested was to consider disaster scenarios, emergency situations, and extreme climate as baseline requirements. For example, in the United States, NOAA, the National Oceanic and Atmospheric Administration, our trusted weather and climate organization, has said that in the last decade, certainly in the United States, the number of billion-dollar emergencies has doubled. Another caveat when talking about improvements is to beware of, or avoid, techno-optimism: the idea that everything is going to work out and technology is our savior.

B

B

What kinds of incentives are there? That is certainly quite important, as is the question of who we are trying to incent, as well as security issues that sometimes, or more than sometimes, are at odds with our goals for efficiency. As for the scope today: there's no need to focus only on the IETF, even though we're sponsoring this workshop. So it's not only the things that the IETF can do, but what we can do collectively.

B

Alexander Clemm will be speaking to that. We're going to have two talks on general thoughts on solutions and trade-offs, from Carlos Pignataro and Suresh Krishnan. Solutions and trade-offs also include routing, for example, and Alvaro Retana and Russ White will speak to that. Then, importantly, there are the data formats that underpin much of this, with Brendan Moran and Carsten Bormann, and a return to our beloved topic, multicast.

B

We have budgeted about 70 minutes for discussion, and if it's anything like the last few days, which have been fantastic, I am really looking forward to that. Please continue to drop your comments and questions into the chat window, and we will try to service some of those as well. And please continue to either jump in or raise your hand to ask questions. With that, I think we are over to you, Alex.

D

C

Good morning, or good afternoon, everyone. The first presentation here concerns metrics. The submission was based on the draft that you see referenced here, along with a number of co-authors whose names you also see there. So let me jump in. On context, we don't need to talk much about that.

C

I think this is clearly what the workshop is all about: how we, the IETF, the networking community, can contribute towards addressing one of mankind's grand challenges, which is reducing carbon footprint. As we're all aware, networks are both an enabler for solutions and a contributor to the problem itself. There are, of course, many contributors to network energy efficiency today, many of which perhaps go beyond where the IETF can contribute directly.

C

Of course networking can contribute. This is the subject of the further discussion that we will have here, but there are obviously quite a few potential areas to look at. One area certainly concerns how you manage networks, how you deploy networks, and how you optimize networks, and networking standards do play a role in enabling those. This covers a number of things, starting with the fact that you need to provision networks, and therefore you need to dimension them.

C

C

Ultimately, management-type functions and controllers have for a long time been about optimizing various parameters. In the past those parameters were things such as utilization, cost, service level objectives, and so forth. At the end of the day, energy usage is just another parameter that can be optimized that way. There are many other questions as well, such as: where do you place virtual networking functions? How do you plan routes, segments, paths, and so forth?

C

And, of course, for all of this: how do you also moderate the trade-offs? While we want to reduce the carbon intensity, we of course still need to keep in mind that there are service levels that need to be delivered, utilization that needs to be maintained to make things economical, and so forth.

C

Beyond management, there are other aspects, such as control. For instance, would it make an impact if you could select from greener path alternatives? Then there are network architecture issues. Some of this also came through in earlier talks: where would we cache, from a carbon standpoint? Where does it make the most sense? Here, obviously, there are again trade-offs involved.

C

How much do we spend in transmitting data versus storing it elsewhere? Potentially even protocol design itself could play a part: for instance, would it be helpful if we play with smoothing versus bursting of traffic, and so forth? But regardless of which measures are selected in the end, it all starts with visibility, and there's this famous saying by Peter Drucker.

C

If you can't measure it, you can't manage it. One might add that you also could not assess how effective your solutions are, nor could you devise solutions that rely on control loops. So, accordingly, you do need visibility, and visibility starts with the right metrics. This is really the foundation for everything else, and it also happens to be an area that is very actionable and where the IETF may be able to make an impact.

C

So, concerning metrics, the question then is: what metrics do we need to define? What metrics are needed? This is, of course, always very much driven by the types of questions that we want to answer. How do we assess the effectiveness of a solution? How do we compare design alternatives? How can we optimize the network deployment? How do we know if one is better than the other? And so on. And then there is also the question of what the metrics should cover.

C

We have, of course, the energy usage efficiency, where the scope is the network itself. But then there is the question beyond usage efficiency: what about the energy sources? Are they sustainable? This goes beyond the network in a narrow sense, if you will, and addresses the entire deployment. And you can go further still, for instance by taking into account the manufacturing life cycle, the need for cooling, and so forth.

C

With the metrics, we want to provide a holistic picture that can account for the whole at the end of the day, not just one part. That can help us address the questions that we want to answer, and it can also help us enable control loops for the types of controllers depicted here. We want to enable them, again based on metrics. So, getting to the metrics.

C

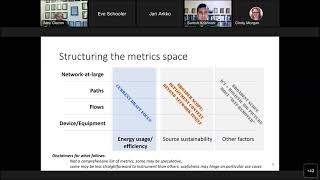

When we look at the metrics, one question is how we can structure the metric space. The obvious way is to start with the device and the equipment, but that in itself is probably not enough. We also want to know about the flows, service instances, and so forth.

C

We also want to assess the carbon intensity of paths, and to talk about the network at large. And we want to address all of these along all of the three verticals, if you will. The energy usage efficiency is where the current focus of the draft is, but we don't want to forget about the other factors either. So with this, let me turn to some of the metrics that we can identify. Just a disclaimer: it's not a comprehensive list.

C

At the device and equipment level, and sorry, this slide is a little bit busy, there are a number of things. It starts with the things you would expect on data sheets, so these are just the device ratings, if you will: what is the power consumption when idle, at various loads, in various configurations, and so forth. Then there is the current aspect of what is actually being used.

C

We want to know this for different time intervals: since system start, for the past minute, and so forth. In addition to these absolute measures, we also want to normalize or derive metrics so that we can assess the actual efficiency. We want to relate them: for instance, this is how much power we consume, but how does it relate to the amount of traffic that we are actually passing?
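The normalization described here, relating power drawn to traffic carried, can be illustrated with a minimal sketch. The function name and the readings are made up for illustration and are not taken from the draft; the only idea shown is dividing average power by throughput to get energy per bit.

```python
def energy_per_bit(avg_power_watts: float, throughput_bps: float) -> float:
    """Joules per bit: average power (W = J/s) divided by traffic rate (bit/s)."""
    if throughput_bps <= 0:
        raise ValueError("throughput must be positive")
    return avg_power_watts / throughput_bps


# Hypothetical readings for two devices over the same measurement interval.
router_a = energy_per_bit(avg_power_watts=400.0, throughput_bps=80e9)
router_b = energy_per_bit(avg_power_watts=250.0, throughput_bps=20e9)

# Router A draws more power in absolute terms but is more efficient per bit.
print(f"A: {router_a:.2e} J/bit, B: {router_b:.2e} J/bit")
```

The absolute reading alone would rank router B as the better device; the normalized metric reverses that ranking, which is the reason for deriving it.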

C

C

We want to manage the overall ICT environment, which might include the power sources and so forth. Among the things that can certainly be done is to maintain something like power source sustainability ratings, which are either obtained from an energy provider or reflect the operator's mix of energy sources. Likewise, as we extend this, beyond power source sustainability ratings there might also be device sustainability ratings that rate the device as a whole.

C

How eco-friendly is it? There are replacement and life cycle considerations, with metrics where you could, for instance, indicate how the energy debt incurred by the manufacturing of the device gets amortized over the equipment lifetime. There is more, of course, but in the interest of time let me just move on, because, as mentioned, it's also important that we move beyond the equipment itself. That is where a lot of the networking functions and operational things are to be found.
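The idea of amortizing the manufacturing energy debt over the equipment lifetime can be written down as a one-line calculation. This is a minimal sketch under stated assumptions: the embodied energy figure is invented, and a simple linear spread over the lifetime is assumed rather than anything defined in the draft.

```python
HOURS_PER_YEAR = 365 * 24


def amortized_embodied_watts(embodied_energy_kwh: float, lifetime_years: float) -> float:
    """Spread the manufacturing energy evenly over the service life.

    Returns the equivalent constant power in watts that the manufacturing
    debt adds on top of the operational power draw.
    """
    if lifetime_years <= 0:
        raise ValueError("lifetime must be positive")
    return embodied_energy_kwh * 1000.0 / (lifetime_years * HOURS_PER_YEAR)


# Hypothetical device: 8760 kWh of embodied energy.
five_years = amortized_embodied_watts(8760.0, 5)    # 200 W equivalent
ten_years = amortized_embodied_watts(8760.0, 10)    # 100 W equivalent
print(five_years, ten_years)
```

Doubling the service life halves the amortized share, which is one way a lifecycle metric can make the benefit of keeping equipment in service longer visible.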

C

One important aspect concerns flows. How can we relate carbon intensity to flows, or to instances of services? Metrics of interest here are, for instance, the amortized energy that is consumed over the duration of a flow, so basically the power budget, if you will, that could be assigned to or associated with a given flow. Also, and this might be important for optimization, there is the incremental energy: what is consumed that would not have been consumed otherwise?
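The difference between the amortized and the incremental energy of a flow can be made concrete with a toy device model. The sketch below assumes a device with a fixed idle draw plus a linear per-bit cost, and shares total energy in proportion to traffic volume; both are illustrative assumptions, and the names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Device:
    idle_watts: float      # drawn even when no traffic is forwarded
    joules_per_bit: float  # extra energy per forwarded bit (assumed linear)


def amortized_energy(dev: Device, flow_bits: float, total_bits: float,
                     duration_s: float) -> float:
    """Flow's proportional share of everything the device consumed."""
    total_joules = dev.idle_watts * duration_s + dev.joules_per_bit * total_bits
    return total_joules * flow_bits / total_bits


def incremental_energy(dev: Device, flow_bits: float) -> float:
    """Energy that would not have been consumed without the flow."""
    return dev.joules_per_bit * flow_bits


dev = Device(idle_watts=100.0, joules_per_bit=1e-9)
share = amortized_energy(dev, flow_bits=1e9, total_bits=1e10, duration_s=10.0)
extra = incremental_energy(dev, flow_bits=1e9)
print(f"amortized: {share:.1f} J, incremental: {extra:.1f} J")
```

With a large idle draw the two numbers differ by orders of magnitude, so which one is reported depends on whether the goal is attribution or optimization.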

C

Then, beyond flows, there are paths. As we want to optimize paths, and perhaps optimize path selection and so forth, it may be interesting to have things such as path sustainability ratings. These might be functions of the ratings of the different hops that are being traversed: does the path include dirty devices, if you will, or is it composed of clean devices? And the function could be anything. It could be an average, it could be the sum, it could be the maximum.
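To show why the choice of function matters, here is a minimal sketch that aggregates per-hop ratings into a path rating in the three ways mentioned. The rating scale (higher means dirtier) and the example values are assumptions made for illustration only.

```python
from statistics import mean

# Candidate aggregation functions named in the talk.
AGGREGATORS = {"average": mean, "sum": sum, "maximum": max}


def path_rating(hop_ratings, how="average"):
    """Combine per-hop ratings into one path rating (higher = dirtier, by assumption)."""
    if not hop_ratings:
        raise ValueError("a path needs at least one hop")
    return AGGREGATORS[how](hop_ratings)


path_a = [1, 1, 5]  # short path, mostly clean, with one dirty hop
path_b = [2, 2, 2]  # uniformly moderate hops

for how in AGGREGATORS:
    print(how, path_rating(path_a, how), path_rating(path_b, how))
```

In this toy case path B is preferred under all three functions, but the margin ranges from small (sum) to large (maximum), so the aggregation choice determines how strongly a single dirty hop is penalized.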

C

We may also want to know what the normalized power consumption across a path is, to make paths more comparable. And then, finally, and this is of course the purpose of it all, we want to reduce the total carbon footprint of the network as a whole, so we will also need to aggregate a lot of these metrics for the entire deployment. Before concluding, there are just a few other considerations I want to mention.

C

C

There are a couple of thoughts there, discussion items really. Energy consumption may be easier to measure than actual carbon emissions, so that is certainly one thread of discussion here. Likewise, if you want to obtain metrics across paths, for flows, and so forth, this will take more than just instrumenting the device agent, if you will; it may involve other mechanisms. Another aspect concerns certification and compliance.

C

If we do use these metrics to optimize carbon intensity, it is of course important to have instrumentation that is accurate; otherwise it may be counterproductive. It may also be particularly important when regulation and monetary incentives get involved. If you claim, "I offer a greener service," and maybe charge something for it, just as an example, how would you actually know that this is true?

C

A third consideration is how we can treat this not just as a problem for the operator, but ultimately also attribute the energy usage to users and confront users with the consequences of their choices and actions for the carbon footprint. This concludes what I wanted to say. I want to mention again that I believe a lot of these metrics, and what is related to them, are quite actionable in the IETF.

C

This is, I believe, where we can make an impact. Once metrics are defined, there are several areas to look at next. This includes things such as YANG models, potential protocol extensions to support energy parameters, and of course solutions and use cases to drive them. Each of those needs to be defined. And finally, as I mentioned, this is based on a draft.

B

B

B

D

D

So the question was really this. You are presenting a lot of different options here, or possibilities rather. And of course there are already other standards, both when it comes to energy performance, especially in relation to networks, and in relation to GHG emissions and so on, from bodies like ETSI, the European standardization body, and the ITU. So I was curious whether you have done a gap analysis or something similar in relation to your work, or whether this is a later step.

C

Well, I think a gap analysis absolutely needs to be done. It is basically seen as a later step. Defining the metrics, coming up with a comprehensive set of metrics, is, I think, the first step. From that, the next question will be: what exactly are the gaps, which of the metrics matter, and how should they be prioritized? Because there are a lot of possibilities for metrics.

C

Some of them may be more useful than others, or there may be certain use cases that we want to prioritize that require some of the metrics but not others. But I think that would be the outcome of further discussions and the next step.

D

B

E

I just want to say that Alex, Carlos, and I agree on a lot of things. I looked at the papers as well, and we have very similar thoughts on a lot of things. So I want to emphasize the things where we didn't really have the same scope in the papers.

E

Scope 3 is much larger than even scope 1 and scope 2 put together. So we need to focus a little bit on scope 3 emissions, because our organizations themselves are focusing on scope 1 and scope 2, since those are what they have to report for regulatory and legal reasons. One thing I wanted to call out is that scope 3 for somebody is scope 2 and scope 1 for somebody else.

E

I think this is in the same direction as what Alex was talking about. When people are operating gear made by some network vendor, they are in the reporting chain for that, and they need to report those things. But as the networking industry ourselves, we need to help.

E

The complete problem is really relevant here. What we're trying to do is make smart choices with energy efficiency in mind. We're trying to bound the usage of the other dimensions while finding an energy-efficient, I would say, frontier, for lack of a better term. This also includes circular economy aspects: where does this come from?

E

Alex talked about metrics, but things happen well before that. Is there something we can do with modularity? Is there something we can do with packaging? What kind of power supplies are sitting there? So a lot of these things are even pre-metric.

E

These are more static things that we can measure through the supply chain, but they are also something we have to consider in the overall life cycle. Similarly, at least at Cisco, we have some programs for recycling the hardware, so all those things need to be considered when we do the sustainability measurements. We see three phases of getting through this, and we start off with visibility.

E

I think most of us, probably everybody, would agree that we need to get visibility into the system. Then we move on to how we get insights from it, and how we actually recommend things to improve the system to people who are not doing this as their day job. And, finally, how do we get the systems to improve themselves?

E

E

We also need to start doing this for environmental impact, and of course it's difficult to do. But the problem is that the longer we delay doing this, the more we leave ourselves open to a lot of things happening outside the IETF. That leaves you with work that is potentially redundant, because different vendors are going to do different things.

E

It's going to be proprietary to them, and sometimes people are going to define contradictory metrics as well, so you really don't have something that is well reconciled. This is a problem in itself, but especially for somebody who is using these things. If you have very different things from different vendors and you have a multi-vendor network, it becomes very difficult to have an overall view of the system.

E

So we really need to act quickly to do something that we can agree on, and set industry-level standards on what is going to get measured and how. Terminology is really part of it, because people have different ways of measuring things, so we have to be precise about what things are. The next step is to have something for the industry to use.

E

This is just a straw man proposal: that we have some kind of open source implementation, so that people can actually take these standardized metrics that we've built and collected, and put them together in a way that lets them see their environmental impact. Whether it's energy efficiency, or somehow translating this into carbon emissions, or whatever angle you want to see, the point is to visualize it.

E

Without that, we probably don't even have the same language to speak across domains. Of course it's a very difficult problem, but at least we would have a start in that direction. The next step would be to provide some kind of advice. Are there any operational changes you can make? Can you turn off specific routers at specific times?

E

Are there some transceivers that can be turned off? Is there some equipment that can be replaced when it's getting close to its design lifetime? Those kinds of things can all be recommendations coming from such software to the people, so that they can actually plan for this and make productive changes. As for the last step, so far we are doing this at a longer time scale.

E

If it's going to be months or years, a human looking at it and providing solutions, or looking at solutions and ordering equipment, is going to work. But at some point a human doing this is not going to cut it. If you want to do something on a smaller time scale, we have to start building some amount of self-awareness into the network.

E

I know the IETF has some work ongoing, but it's not really in this space. I think we can actually start looking at whether there is something we can do: make small changes, have a feedback loop to see how the changes affect the system, and keep repeating this in small increments. One key thing we see is that this needs to be done in a declarative fashion.
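The combination of a declared goal, small changes, and a feedback loop can be sketched in a few lines. This is a toy illustration, not anything proposed in the talk: the link rates, the utilization model, and the 70 percent intent are all assumptions, and it presumes the highest level already satisfies the intent.

```python
from typing import Callable, Sequence


def step_down(levels: Sequence[float],
              measure: Callable[[float], float],
              intent_ok: Callable[[float], bool]) -> float:
    """Walk from the highest level toward the lowest, one small step at a time.

    After each step the system is measured; the step is kept only if the
    declared intent still holds, otherwise the previous level is retained.
    """
    ordered = sorted(levels, reverse=True)
    current = ordered[0]
    for candidate in ordered[1:]:
        if not intent_ok(measure(candidate)):
            break  # roll back: keep the last level that met the intent
        current = candidate
    return current


demand_gbps = 30.0
chosen = step_down(
    levels=[25.0, 50.0, 100.0],                # hypothetical link rates in Gbps
    measure=lambda rate: demand_gbps / rate,   # toy model: resulting utilization
    intent_ok=lambda util: util <= 0.7,        # declared intent, not a device setting
)
print(chosen)
```

The operator states only the outcome that must hold (utilization at or below 0.7); the loop decides which setting achieves it, which is the sense of "declarative" used above.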

E

I don't think people are going to start doing this by saying, "Make my energy efficiency 93," or whatever. That's not really the goal for anybody. People are going to have to specify something at a higher level. Then, having seen all the collected metrics, we can have some kind of machine learning algorithm that can go and look for opportunities to improve these things. There is also something called scope 4, which is really avoided emissions.

E

I think it was talked about yesterday: us meeting here online saves a lot of travel, and that's scope 4. So I think having some kind of automated system is going to help us look for opportunities in this space, to see what kinds of emissions can be avoided in the future. I'm just going to the closing slide.

E

Looking at a vendor-agnostic standard is very important, because we see a lot of things coming out in the market that are really greenwashing: coming up with things to say, "Oh, we are doing amazing work," that are not really substantive. So we need to do something that's vendor agnostic and that does something substantial to reduce the environmental impact.

E

I think we need to avoid things like that, build robustness and recoverability into the protocols, and also look at how we meet SLAs in a more energy-efficient way. We don't really need to beat the SLAs all the time; we can also look at what is, I would say, the least we can do to meet the SLAs. And finally, avoid micro-optimizations and consider the product life cycle.

E

One of the things we realize is that, let's say, a core router of today could become an edge router of tomorrow, if it's possible for it to have some of the functionality that's required for an edge router. That's not always there. What we tend to do, and we are engineers, or at least most of us are, is optimize everything very, very tightly to the use case, and we are proud of it.

E

But I think at some point this doesn't really work. There are cases where it doesn't apply: for example, Carsten is doing a lot of work in IoT, and for something that lasts a year on a double-A battery, those kinds of things don't work. But we should at least think about it when we design protocols. That's pretty much it from me. Thank you.

B

B

G

G

That would be helpful. The first would be to reduce links. For instance, I have two links right here; perhaps I could get rid of one of those two. I have two parallel paths here; perhaps I could get rid of one of those two. The second is removing redundant equipment. For instance, if I could get rid of these two routers, or power them down for some period of time, or something like that, that would also reduce cost or reduce energy usage.

G

Another, which has been talked about in the chat, is that you can actually reduce the speed of these links. If this is 100 gig, then you can potentially drop it. If you're using QAM, and you're using, say, four channels of QAM over an optical link or a wireless link, you might be able to reduce to 25 gig or something like that and reduce the power usage. All of these can be done in a time-variant way, and I think that's an important point.

G

But

now,

let's

look

at

some

of

the

strictly

routing

protocol

things.

Now

I'm

not

going

to

talk

again

a

lot

about

equipment,

because

equipment

is

problematic

in

that

the

more

you

bring

something

down

and

pull

it

back

up

the

more

often

it's

going

to

fail,

and

so

you

just

have

to

think

about

the

trade-offs

in

the

equipment

failure

versus

how

often

you're

bringing

it

up

and

down

just

like

a

light

bulb

in

reality.

The

more

often

you

turn

it

on

and

off

the

quicker.

G

I

would

argue

that

we

don't

know

the

answers

to

these

questions

right

now,

because

we

haven't

done

a

lot

of

measurement

in

this

area

to

understand

like

if

I

shut

a

router

off

15

times

in

a

day

versus

never

what

is

the

uptime

going

to

be,

but

thinking

about

strictly

from

a

control

plane

perspective,

just

thinking

about

some

of

the

impacts

that

we

have.

For

instance,

let's

say

if

this

left

is

my

bandwidth,

and

this

right

is

my

energy

usage,

then

I

can

say

I'm,

sorry,

yeah

I

can

say:

okay.

G

Well,

you

know

what

I

think

I

did

this

one

backwards

by

the

way.

I

think

this

is

one

four,

but

anyway

I

could

say

you

know

what

I

could

save

a

lot

of

energy

by

cutting

this

link

out,

but

when

I

cut

that

link

out

I'm

driving

traffic

up

through

this

upper

path,

this

increases

what

we

call

stretch,

which

is

simply

the

number

of

hops

in

the

in

the

network

itself,

and

the

thing

is:

is

that

every

hop

that

you

clock

off

Optics

and

into

electronics

and

off

Electronics

onto

Optics?

G

When

you

do

these

serialize/deserialize

steps,

you

are

adding

delay

to

the

network

and

you're

potentially

adding

Jitter

to

the

network,

because

here,

first

of

all,

you

have

just

the

simple

I'm

switching

from

this

path

to

this

path,

which

is

going

to

cause

routing

protocol

convergence,

which

causes

Jitter,

what

the

application

sees

as

Jitter

as

the

traffic

shifts

from

One

path

to

the

other

path.

The

second

thing

is:

As

I

push

more

traffic

onto

this

path.

G

These

queues,

if

I,

have

very

carefully

tuned

my

quality

of

service

and

I'm,

using

traffic

engineering

or

traffic,

steering

to

make

sure

that

the

network

is

optimal.

So,

for

instance,

let's

say

that

I

push

all

my

video

traffic

this

way

and

all

my

voice

traffic

this

way

and

then

I

kill

this

path

for

Energy

savings.

I'm

now

mixing

video

and

voice

in

the

same

queue

structure,

which

is

much

more

difficult

to

do,

and

not

have

lots

of

things

like

that.

G

So

you

decrease

the

aggregate

bandwidth,

you're

increasing

stretch,

you're

potentially

increasing

jitter,

you

can

be

increasing

delay,

and

so

these

can

all

have

a

negative

impact

on

applications.

So

the

whole

point

here

is

not

that

we

shouldn't

do

any

of

this

stuff.

The

point

is,

we

need

to

think

about

it

and

figure

out

how

to

do

this

stuff

rationally

and

where

it

makes

sense

and

where

it

might

not

make

sense.

It

may

be

that

in

some

cases,

shutting

down

a

particular

set

of

links

might

save

us

energy.
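
The stretch effect described in this part of the talk can be illustrated with a minimal sketch. It assumes a made-up four-node topology (nodes A to D, not taken from the slides), treats stretch as the hop count between two nodes as the speaker does, and compares that hop count before and after one link is removed for energy savings. The function name `hop_count` and the topology are hypothetical.

```python
from collections import deque

def hop_count(links, src, dst):
    """Return the fewest hops from src to dst over undirected links, or None."""
    adj = {}
    for a, b in links:
        adj.setdefault(a, set()).add(b)
        adj.setdefault(b, set()).add(a)
    seen = {src}
    queue = deque([(src, 0)])
    while queue:
        node, hops = queue.popleft()
        if node == dst:
            return hops
        for nxt in adj.get(node, ()):
            if nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, hops + 1))
    return None

# Hypothetical topology: a direct lower link A-D and an upper path A-B-C-D.
links = [("A", "D"), ("A", "B"), ("B", "C"), ("C", "D")]

before = hop_count(links, "A", "D")
# Take the direct link out of service, as if powering it down to save energy.
after = hop_count([l for l in links if l != ("A", "D")], "A", "D")
added_hops = after - before
```

Each added hop is another optical-to-electrical conversion on the path, which is where the extra delay and potential jitter mentioned in the talk come from. The sketch only counts hops and does not model queueing or convergence.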

G

So

I

need

to

decide

when

I

should

take

a

link

or

piece

of

equipment

out

of

commission

for

Energy

savings.

I

need

to

know

what

that

looks

like

when

I

bring

it

back

up.

I

need

to

know

how

I'm

going

to

determine

to

bring

it

back

up,

and

this

is

true

also

for

even

things

with

short-term

sleeps,

like

micro-sleeps

and

stuff

like

that

which

we've

talked

about

in

the

past

to

solve.

G

So

we

need

to

think

about

from

a

routing

perspective,

control

plane

perspective.

How

do

I

remember

that

link

is

there?

How

do

I

remember

what's

reachable

via

that

link?

How

do

I

handle

things

that

change

in

reachability,

while

the

link

is

asleep

or

down

or

the

device

is

down

or

asleep,

and

what

do

I

do

with

those

things

now,

I'll

say

that

this

part

of

things

will

probably

be

covered

in

the

TVR

working

group.

G

That's

coming

up

right

now,

in

fact,

I

have

a

meeting

this

afternoon

to

talk

about

next

steps

in

the

TVR

BoF.

So

that

is

something

that

we

need

to

think

about.

Another

thing

is

that

there's

work

kind

of

going

on

in

that

area.

The

next

is

Convergence

impact,

so

when

I

converge

BGP

first

of

all,

since

BGP

is

the

big

kid

on

the

Block

nowadays,

first

of

all,

I

have

the

potential

of

all

sorts

of

just

not

converging

whether

or

not

people

believe

this,

the

internet

core,

never

converges,

never

ever

converges.

G

So

you

know

a

lot

of

parallel

links

and

redundancy

is

just

there

to

converge

more

quickly

in

the

case

of

failure,

and

so

how

do

we,

if

we

put

something

to

sleep

or

we

take

it

offline

to

save

energy?

How

do

we

anticipate

failure

or

how

do

we

deal

with

it?

And

what

do

we

do

about

the

fast

convergence

situations?

G

So

hopefully

that

is

useful

from

the

perspective

of

stuff,

so

I

see

Carla

said

in

data

centers,

you

always

have

an

out-of-band

Network.

Well,

that's

true

in

some

data

centers

and

it's

not

true

in

other

data

centers,

some

data

centers

have

gone

just

for

Port

count

problems

to

an

in-band

Network.

So

it's

not

consistent.

Let's

see

someone

else

said

yes,

exactly

constraint-based

optimization

and

as

I

said

earlier

in

the

chat.

One

thing

to

remember

is

that

optimizing

for

two

metrics,

like

bandwidth

and

energy

usage,

is

technically

NP

complete.

G

You

can

do

it,

but

you've

got

to

merge

the

metrics.

Somehow

you

can't

actually

run

shortest

path

first

or

even

BGP.

Part

of

the

reason

BGP

is

bistable

is

because

we

try

to

optimize

on

multiple

metrics

and

when

you

do

that,

you

end

up

in

a

non-atomic

state

where

order

of

operation

makes

a

difference,

and

things

are

hard.
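
The remark about merging metrics can be sketched as follows. This is a minimal illustration, not a method described by the speaker: it assumes the two metrics are folded into one scalar link cost by a weighted sum, so that an ordinary shortest-path computation can run on it. The weights `w_bw` and `w_energy`, the reference bandwidth `ref_bw`, and the link values are all hypothetical.

```python
import heapq

def shortest_path(edges, src, dst, w_bw, w_energy, ref_bw=100.0):
    """Dijkstra over one merged cost: w_bw * (ref_bw / bandwidth) + w_energy * energy."""
    adj = {}
    for a, b, bw, energy in edges:
        cost = w_bw * (ref_bw / bw) + w_energy * energy
        adj.setdefault(a, []).append((b, cost))
        adj.setdefault(b, []).append((a, cost))
    best = {src: 0.0}
    heap = [(0.0, src, [src])]
    while heap:
        dist, node, path = heapq.heappop(heap)
        if node == dst:
            return path, dist
        if dist > best.get(node, float("inf")):
            continue  # stale heap entry
        for nxt, cost in adj.get(node, ()):
            nd = dist + cost
            if nd < best.get(nxt, float("inf")):
                best[nxt] = nd
                heapq.heappush(heap, (nd, nxt, path + [nxt]))
    return None, float("inf")

# Hypothetical links: (end, end, bandwidth in Gbit/s, energy in arbitrary units).
edges = [
    ("A", "B", 100.0, 10.0),
    ("B", "D", 100.0, 10.0),
    ("A", "C", 25.0, 2.0),
    ("C", "D", 25.0, 2.0),
]

# Weighting only bandwidth picks the fast path; weighting only energy picks the frugal one.
fast_path, _ = shortest_path(edges, "A", "D", w_bw=1.0, w_energy=0.0)
green_path, _ = shortest_path(edges, "A", "D", w_bw=0.0, w_energy=1.0)
```

The choice of weights decides the outcome, which is the practical cost of merging: the computation stays a simple shortest-path run, but the trade-off between the two metrics is fixed in advance rather than optimized jointly.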

B

H

I

J

Okay,

thank

you

so

just

first

so

I'm

a

second

year

PhD

student

in

Belgium

at

UCLouvain.

So

maybe

my

presentation

will

be

like

a

bit

too

much

research-oriented,

but

I

hope

that

we

will

have

some

interesting

conversation

afterwards.

So

in

the

position

paper,

we

just

wanted

to

reconsider

multicast

because

we

had

problems,

we

moved

against

and

now

we

think

maybe

thanks

to

the

energy

impact

we

have

with

multicast,

it

may

be

interesting

to

reconsider,

at

least

for

some

applications

in

some

Networks.

J

So

just

I'm

pretty

sure

you

almost

know

what

is

multicast

but

to

be

sure

that

we

are,

on

the

same

page,

a

quick

reminder

with

multicast.

You

avoid

sending

multiple

times

the

same

data

in

the

network.

So

sorry,

okay,

so,

for

example,

here

imagine

that

this

router

wants

to

send

the

same

data

to

routers

one

two

and

four

with

unicast.

J

So

there

are

currently

some

applications

that

use

multicast,

for

example,

still

IPTV

and

Stock

Exchange,

but

we

think

it

may

be

interesting

to

reconsider

multicast

for

other

applications,

for

example

here

the

the

the

conference

we

we

just

have

now.

It

may

be

interesting

to

reconsider

multicast

if

we

could

deploy

it

in

the

wide

area

network,

for

example.

J

So

the

first

thing

we,

of

course

we

we

know

it-

is

that

multicast

reduces

the

byte

footprint

because,

as

we

avoid

sending

multiple

times

the

same

packets

in

the

network,

when

you

start

increasing,

really

much

the

number

of

receivers.

Sorry

for

noise,

we

avoided

multiple

times

in

the

same

packets

on

some

links.

So

here

we

see

that

when

we

increase

the

number

of

receivers

above

seven,

for

example,

we

start

having.

J

So

we

are

just

I'm

sorry,

we

will

just

send

fewer

bytes

on

the

network

compared

to

unicast,

which

is

more

linearly,

because

when

you

increase,

when

you

add

the

new

receiver,

you

will

send

individually

this

packet

to

the

new

receiver,

as

we

saw

before

now.

The

second

thing

we

analyzed

was

the

number

of

CPU

Cycles

on

the

source,

because

with

multicast

you

only

send

the

packet

once

compared

to

sorry.

J

With

multicast

you

send

the

packet

only

once

compared

to

unicast

where

you

must

send

an

additional

packet

every

time

you

have

a

new

receiver,

so

here

with

multicast,

we

have

no

increase

when

we

change

the

number

of

receivers.

Of

course-

and

this

is,

this

will

be

more

important

when

you

consider,

for

example,

protected

payloads,

because

with

unicast

you

must

encrypt

separately

the

payload

each

time

you

want

to

send

to

a

new

receiver,

but

with

multicast

you

could

only

encrypt

it

once

and

then

send

it

to

the

network.
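
The byte-footprint comparison between unicast and multicast can be sketched as follows. This is a minimal illustration under stated assumptions: the distribution tree (source S, an intermediate router R3, receivers R1, R2 and R4) is made up and is not the topology from the slides, and the sketch only counts how many times one packet crosses a link. With unicast every receiver gets its own copy along its whole path; with multicast each link on the tree carries the packet once.

```python
def path_links(parent, node):
    """Return the links (child, parent) from node up to the tree root."""
    links = []
    while parent[node] is not None:
        links.append((node, parent[node]))
        node = parent[node]
    return links

def link_transmissions(parent, receivers):
    """Count per-link copies of one packet for unicast and for multicast."""
    per_receiver = [path_links(parent, r) for r in receivers]
    # Unicast: one copy per receiver on every link of that receiver's path.
    unicast = sum(len(p) for p in per_receiver)
    # Multicast: one copy on each distinct link of the distribution tree.
    multicast = len({link for p in per_receiver for link in p})
    return unicast, multicast

# Hypothetical distribution tree rooted at the source S.
parent = {"S": None, "R3": "S", "R1": "R3", "R2": "R3", "R4": "R3"}

unicast, multicast = link_transmissions(parent, ["R1", "R2", "R4"])
```

In this toy tree the saving comes entirely from the shared link between S and R3. The same counting applies to per-packet work at the source: unicast sends (and, for protected payloads, encrypts) once per receiver, while multicast does so once.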

J

And

basically,

when

I

was

doing

my

research,

the

the

question

was

the

because

of

this

paper,

because

what

it

showed

basically,

is

that

with

multicast

we

have

issues

everywhere

and

it's

really

difficult

to

deploy

it.

So,

in

the

position

paper

we

reviewed

three

of

these

issues

and

try

to

find

some

possible

solutions.

Of

course,

this

is

an

open

discussion,

so

you

might

not

agree

and

I

would

be

happy

to

discuss

it

with

you.

J

So,

for

example,

the

first

issue

was

that

IP

multicast,

which

was