
From YouTube: IETF114 SPRING 20220727 1400


A

A

B

A

C

A

I'll try to be short. This is a hybrid meeting. So, if you are in person, you need to sign in using Meetecho, and you also need to use Meetecho to join the mic queue. Very importantly, you need to wear a mask at all times. If you're remote, nothing has changed: please make sure your audio and video are switched off to avoid noise. And the minutes are collaborative.

A

A

D

E

E

So, thanks. The motivation for circuit-style segment routing policies is to allow operators to run a single network that carries not only what we call connectionless services, which are classical IP VPN or Layer 2 VPN services, but also more transport-oriented or connection-oriented point-to-point services, often called private lines or, in essence, wires, if you will. Now, what circuit-style segment routing policies add is, from an underlay transport perspective,

E

We are addressing additional requirements. First of all, to have traffic-engineered paths which are persistent, meaning they do not change because of traffic load or topology changes. They shall only change when the operator requests the path to change. We're looking at strict bandwidth commitments and end-to-end path protection to achieve, you know, very quick failure recovery and the famous sub-50-millisecond target, as well as restoration to handle multiple failures and, along with that, of course, path

E

So how do we address those requirements, and what are the characteristics of circuit-style segment routing policies? First of all, we have a centralized path computation element in the picture that is responsible for receiving the path requests. Part of the path request is, of course, the requested bandwidth, and the path computation element will make sure that we have bandwidth management for all the links. This is important because in a segment routing network you don't have RSVP, which normally would have done that job.

E

E

We are using multiple candidate paths. We're going to program two of them to install, you know, a protection scheme, and there can be a third one if restoration is required as well. STAMP is used to do loopback liveness detection, or observation if you will, and performance measurement. Next slide.

E

How are we creating circuit-style segment routing policies? Before we can create them, a few words on the topology. Every link has to be configured with unprotected adjacency SIDs, and they should be persistent across router reloads, which generally is the case if you configure them manually and explicitly. The topology will be known by the PCE via IGP or BGP-LS extensions.

E

E

The draft outlines a bunch of, you know, attributes or information that will be included in the candidate path request. Important, for example, is the C bit in the bidirectional association object to enforce co-routing. We also have some bits in the LSPA object to enforce no local protection, but there is a dedicated draft that will cover that, and it is being discussed in the PCE working group. And we are using multiple candidate paths for protection.

E

E

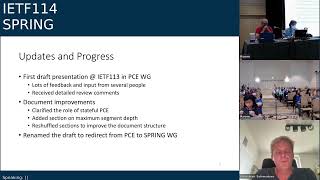

I'm going quite quickly because I want to share the updates and the progress we made. We gave our first presentation at IETF 113 in the PCE working group. We got quite a lot of input in the meeting as well as afterwards, and we received, you know, a lot of review comments, which have already been incorporated.

E

We added a section about how to deal with the maximum segment depth limitations that may arise, and overall we cleaned up and reshuffled the sections to get a better structure and readability of the draft. Speaking of the draft, one piece of feedback was that it is probably better suited to the SPRING working group. That's why we renamed it for the SPRING working group and why we're presenting it here. Final slide.

E

We would like to get more comments and feedback; this is always welcome. We think that we already got the document into a pretty good state, and that's why we would suggest that the working group do an adoption call, so that this draft may be adopted by this working group. Thank you very much.

D

E

So, maybe to cover the co-routed, bidirectional part: we're going to use the bidirectional association to signal from both endpoints to the PCE that those two SR policies belong together. Then it's the job of the PCE to basically perform the path computation so that both SR policies that are indicated to belong together are routed in a bidirectional and co-routed manner, which I think is something that is already quite well defined with PCEP extensions.

E

The protection: maybe I was going a little quick, right. So we have two candidate paths, the working and the protect. They will each be signaled as a request, each having a bandwidth requested as well. So when the PCE is doing the computation and manages the allocations of all the path computation requests in the topology, it will allocate both the working and the protect bandwidth.

I

E

Yeah, I think this is a fair comment. Our draft, I think, is mostly focusing on the overall solution: how the co-routed and bidirectional computation is done and how the paths are getting established. Establishing an end-to-end path segment as part of that, I think, fits in the picture, so we can definitely talk about how those two work items are aligning.

I

D

L

Hi, my name is Gyan Mishra, I'm with Verizon, and I will be presenting SR-PMTU for SR policy on behalf of the co-authors. Thank you. Next slide. So, some history as to how this draft came about and came into the SPRING working group. During the adoption of the PCE draft on PCEP path MTU, a need for a SPRING document was requested and confirmed by both the PCE and SPRING working group chairs.

L

So with that, this topic had come up as well during the adoption call of an IDR document for SR policy path MTU. In April of 2020, a discussion was brought up by Ketan Talaulikar that the concept of path MTU for SR policy and its applicability should first be defined in the SPRING working group before we introduce the signaling aspect into BGP.

L

So, as a result of the adoption call, the PCEP path MTU extension was to maintain basically only protocol extensions. The same was requested for the IDR draft: to maintain just the protocol extension details, while the SR policy path MTU definition and framework was to be developed in the SPRING working group as a standards track document, to ensure inter-vendor interoperability related to the SR path MTU concept and computation details.

L

This topic came up as a critical issue to be addressed during the IDR adoption, as I mentioned, in 2020, and then a second time during the adoption of the PCEP extension for path MTU, due to the criticality of solving this topic and related to the handling of fragmentation issues that could happen with an SR policy.

L

L

So basically a valid candidate path is selected as the active path once it is determined to be the best path for the SR policy. It could be built from a dynamic, explicit, or composite set of segment lists, or a composite candidate path container grouping, of the SR policy. So, with that definition, the SR-PMTU for a segment list is defined as the minimum link MTU of all the links in the path from the source to the destination.
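The definition just given can be sketched in a few lines of Python. This is only an illustration of "minimum link MTU along the path", not code from the draft; the function name `segment_list_pmtu` and the sample MTU values are assumptions made for the example.

```python
from typing import Sequence


def segment_list_pmtu(link_mtus: Sequence[int]) -> int:
    """Return the SR-PMTU of one segment list.

    Following the definition in the talk, this is the smallest link MTU
    among all links on the path from source to destination.
    """
    if not link_mtus:
        raise ValueError("a path needs at least one link")
    return min(link_mtus)


# Hypothetical link MTUs (in bytes), listed from source to destination.
path = [9000, 2000, 1500, 9000]
print(segment_list_pmtu(path))  # 1500
```

The smallest link dominates: one 1500-byte link anywhere on the path caps the whole segment list at 1500.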

L

Next slide. So here we're just depicting the SR policy PMTU framework. In this example we're showing an SDN controller. We have a PCE, so with the SR PCE the centralized controller would basically push down a constraint via PCEP, from the PCE to the PCC at the SR source node. It would be an SR-PMTU constraint that we're pushing down from the controller to the head-end source node, and the same goes for BGP SR policy.

L

L

So, the framework of SR-PMTU for SR policy. With that framework, this is the SR-PMTU path computation and how it would work for the variety of different paths. For a loose TE path, it would be the minimum SR-PMTU of all ECMPs between two adjacent node SIDs, between the ingress source node and the egress destination node, along an SR-TE path. So that's basically the node SID ECMPs. The next one is a strict path.

L

So here it's the minimum link MTU of all links along the strict SR-TE path. And then mixed: the minimum of the SR-PMTU of all ECMPs between two node SIDs and the link MTU of all links along the path indicated by the adjacency SIDs. And then the binding SID path: the SR-PMTU of the binding path is the same as for the SR policy, except that it includes the associated encapsulation overhead: for SRv6, the outer IPv6 header and SRH, and for SR-MPLS, the SID list

L

that's pushed onto the stack. For TI-LFA, the SR-PMTU of the repair path from the PLR node to the merge point is computed by the controller, which updates the head end with the new SR-PMTU. So if the bypass loop has a different MTU than the primary path, that path computation for the bypass loop is sent back to the head-end node. Next slide.

L

So this shows an example of how this would work. This is an example of an SR policy for a loose path with the node SID. You have the node SID on the ingress and egress PE, and here we have an ECMP. Keep in mind that this could be an n-way ECMP; you can have many ECMP paths there with the prefix SID or node SID. So now,

L

Here it's the lowest PMTU of all the paths of all the ECMPs. If you look at the path from left to right, it's got an MTU of 2000, but then you see the bypass loop has an MTU of 1500. So with the computation, it would be the lowest, and here the lowest would be that bypass loop, 1500. So now the controller would basically push down the constraint of 1500 to the SR head-end node for the SR policy. Next slide.

L

L

You see an MTU of 1500. So in this example, normally the path MTU would take, as mentioned on the previous slide, the lowest path MTU. In this case the lowest path MTU would be the bypass path, the triangle path along the bottom, at 1500. That would be the normal standard computation when the path MTU is computed. Now what we're doing here is we want to take the higher MTU.

L

L

So this example is like the loose one. It's a similar topology: left to right we've got 2000, and then we have the triangle path with 1500 along the other path. This here is an example of a mix. You take the prefix SID in the segment list; you have the prefix SID.

L

So that's your first SID, the active SID in the SID list, and then you have an adjacency SID that you take along the path to get to the egress destination. So in this case, what would happen? You have the two-way ECMP here: left to right you've got 2000, and then on the bottom triangle path you've got 1500. So it's the lowest of the path MTUs along all the paths, and in this case it's 1500.
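The loose and mixed cases walked through above can be sketched as follows. This is a minimal illustration under assumptions: the function names, the way ECMP paths are represented (a list of link-MTU lists per node SID segment), and the topology values are all hypothetical; only the 2000 and 1500 figures come from the slides as described.

```python
from typing import Sequence


def path_mtu(link_mtus: Sequence[int]) -> int:
    """Strict case: minimum link MTU along one explicit path."""
    return min(link_mtus)


def node_sid_pmtu(ecmp_paths: Sequence[Sequence[int]]) -> int:
    """Loose case: traffic toward a node SID may hash onto any ECMP path,
    so take the minimum over every path in the ECMP set."""
    return min(path_mtu(p) for p in ecmp_paths)


def mixed_pmtu(node_segments: Sequence[Sequence[Sequence[int]]],
               adj_link_mtus: Sequence[int]) -> int:
    """Mixed case: minimum over all node SID segments (each an ECMP set)
    and all links named by adjacency SIDs."""
    candidates = [node_sid_pmtu(seg) for seg in node_segments]
    candidates.extend(adj_link_mtus)
    return min(candidates)


# Two-way ECMP as in the example: the left-to-right path at 2000
# and the bottom "triangle" path at 1500.
upper = [2000, 2000]
lower = [1500, 1500]

print(node_sid_pmtu([upper, lower]))         # 1500
print(mixed_pmtu([[upper, lower]], [2000]))  # 1500
```

In both calls the 1500-byte triangle path decides the result, which matches the value the controller is described as pushing down to the head end.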

L

Okay, let's go down to the last one. Do you have time for just one more? Go up one slide. One more slide. Okay, thank you. So what I would like to ask the working group is if folks could review the draft. We just want to get feedback. There are some critical components that we would like to get feedback on from the SPRING working group experts.

L

There are three topics that we bulleted, as we had discussed with the co-authors. The first one that we want to get feedback on from the SPRING working group experts is the SR-PMTU TI-LFA computation and fragmentation caveats, just to make sure we didn't miss anything. We want to make sure that we're really on target, and so we really want to get feedback from the experts.

C

C

M

N

And this is about the motivation. We think that the exact name should be the connection-oriented hop-by-hop switching path in SRv6. Hop-by-hop switching is just the way that traditional MPLS works on each node, in which the label is locally allocated and swapped on each node. As we know, an SRv6 packet can contain all or part of the nodes to do strict or loose source routing. Thus less state on each node would be needed.

N

N

And for the connection-oriented strict path in SRv6, we have several options. The first is that we still put every node's SID into the packet header, but if the path is long, the packet header will be large. Optionally, we can also compress the SID list. The second option is that we can support both SRv6 and MPLS, but it is complicated. This document mainly talks about the third option: we want to try to support hop-by-hop switching in the SRv6 network.

N

N

N

It should be supported on each node and contains an argument similar to a label. In this graph we can see that on each node the destination in the packet header is changed, similar to a label swap, and we need these nodes to support the label mapping in a SID format.

N

On the data plane, it is simple to introduce new SIDs to replace the label in MPLS, but on the control plane we also have two options. For the first option, perhaps we can use a PCE server to connect to each node and communicate the labels for the path. In the document we also introduced another option, which is to simulate the procedure of RSVP-TE MPLS by using new control-plane SIDs in SRv6.

N

We call it an independent control SID function, which also needs to be supported on each node, and the control SID contains an argument similar to a label. We think that label zero is special: it is used to confirm the path. In the graph, node one is the head end and node five is the endpoint.

N

N

And on this page we introduce the steps to establish the path. The first step is that the head end sends a packet from node one to node five, and it contains all the nodes' SIDs. Each downstream node will respond with a label in the packet returned to node one, and they can establish this mapping table, but it is in the SID format.

N

D

J

D

O

M

O

To introduce the background: as we know, network slicing partitions a physical network into multiple isolated logical networks. A network slice is associated with specific network resources called the NRP. An NRP ID is used to identify the NRP during packet forwarding, and it can be carried in the packet, like in the picture.

O

O

O

The segments of the intermediate endpoints along the SRv6 path are usually End or End.X type segments, and the argument field of these segment types is not defined, so we can use this field to carry the NRP ID. So, in the SID list in the SRH, every segment can carry the same or different NRP IDs, which can be set by the controller or the CLI. Next, please.

O

Along the SRv6 path, the SRv6 endpoint can look up the local SID table and extract the argument field to get the NRP ID. But other nodes, like non-SRv6 nodes that cannot support SRv6 and only support IPv6, need a table to get the NRP ID from the destination address. So we should create and look up a slice prefix table here, with a static configuration mode or a dynamic advertising mode.

O

That means from the controller or the CLI to the router, or from BGP-LS or the IGP to other nodes or the controller. The protocol extensions will be provided in future versions or separate drafts. So this is the basic mechanism: a slice prefix includes the prefix that should be matched and, as the second part, the encoding position in the segment.
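One way to picture the slice prefix table is the sketch below. It is an illustration only: the entry layout (prefix, bit offset, bit width), the longest-prefix-match rule, the function name `lookup_nrp_id`, and the sample prefix are all assumptions for the example and are not taken from the draft.

```python
import ipaddress
from typing import List, Optional, Tuple

# Hypothetical table entry: (prefix to match,
#                            bit offset of the NRP ID inside the 128-bit address,
#                            width of the NRP ID in bits)
SlicePrefixEntry = Tuple[ipaddress.IPv6Network, int, int]


def lookup_nrp_id(dst: str, table: List[SlicePrefixEntry]) -> Optional[int]:
    """Return the NRP ID encoded in an IPv6 destination address, or None.

    The most specific matching prefix wins (an assumption of this sketch);
    its offset and width say where the NRP ID sits in the address.
    """
    addr = ipaddress.IPv6Address(dst)
    best: Optional[SlicePrefixEntry] = None
    for entry in table:
        net = entry[0]
        if addr in net and (best is None or net.prefixlen > best[0].prefixlen):
            best = entry
    if best is None:
        return None
    _, offset, width = best
    shift = 128 - offset - width
    return (int(addr) >> shift) & ((1 << width) - 1)


# Example: NRP ID assumed to occupy bits 64..79 of SIDs under 2001:db8:1::/48.
table = [(ipaddress.IPv6Network("2001:db8:1::/48"), 64, 16)]
print(lookup_nrp_id("2001:db8:1:0:2::", table))  # 2
print(lookup_nrp_id("2001:db8:2::1", table))     # None
```

A plain IPv6 node using such a table never parses the SID structure; it only matches the destination address against a prefix and reads a fixed bit range.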

O

O

This is an example of this mechanism. We assume that we have two NRPs: NRP 1 guarantees 100 megabits of bandwidth and NRP 2 guarantees 200 megabits of bandwidth. We dedicate queues on every router to guarantee the bandwidth. We have an SRv6 policy on P1, including the segments for the P1 End SID and the P3 End SID, with P1 as the head end.

G

O

C

I'll ask one question. This is Joel Halpern, as a participant. In looking at this material, I think I understand the general driver and actually like it, but the material needs to be much clearer about whether you are requiring strict paths, expecting strict paths, or expecting intermediate nodes which are not addressed to extract these ARG bits from the current SID and handle them, which would be an unusual requirement. So we need to be very clear. I'm not telling you what the right answer is, but we need to be very clear about what we're doing.

C

M

O

P

J

Q

Q

Q

That means the IPv6 hop-by-hop options header, the destination options header, or the SRH TLV may be used to carry the instructions or commands. However, the usage of the options or TLVs will cause two issues. First, it will make the packet header longer, which will reduce transmission efficiency. Second, it will make the forwarding processing complex, which will affect forwarding performance. Besides, in the solution of SRv6 C-SID compression with the NEXT flavor,

Q

Q

So, in order to address these challenges, we propose in this draft to use the arguments of the SRv6 SID to carry those instructions. Using the arguments, we gain this benefit: first, we can reduce the transmission overhead, because it will reduce the space needed for the IPv6 extension header or SRH TLV.

Q

Q

Q

We configure a template on network devices; the devices then read and process the content of the arguments according to the template. For example, if the argument has a given total length in bits, we can define that bit 0 to bit x stores the instructions of feature A, bit x to bit y stores those of feature B, and bit y to bit z stores those of feature C. Next slide, please. The second method is called bitmap.
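The template method described above amounts to slicing fixed bit ranges out of the SID argument. The sketch below illustrates that idea under assumptions: the template layout, the feature names, the 16-bit argument length, and the choice to count bit 0 as the most significant bit with half-open ranges are all hypothetical, since the talk does not fix them.

```python
from typing import Dict, Tuple

# Hypothetical template: feature name -> (first bit, number of bits),
# where bit 0 is the most significant bit of the argument.
Template = Dict[str, Tuple[int, int]]


def decode_arguments(arg: int, arg_len: int, template: Template) -> Dict[str, int]:
    """Split the argument value into per-feature fields using the template."""
    fields: Dict[str, int] = {}
    for name, (start, width) in template.items():
        if start + width > arg_len:
            raise ValueError(f"field {name!r} does not fit in {arg_len} bits")
        shift = arg_len - start - width
        fields[name] = (arg >> shift) & ((1 << width) - 1)
    return fields


# A 16-bit argument split as: feature A = bits 0-3, B = bits 4-11, C = bits 12-15.
template = {"feature_a": (0, 4), "feature_b": (4, 8), "feature_c": (12, 4)}
print(decode_arguments(0xA5C3, 16, template))
# {'feature_a': 10, 'feature_b': 92, 'feature_c': 3}
```

Because the layout lives in the template rather than in the packet, every device that processes the argument has to be configured with the same template.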

Q

Q

Moreover, this draft also considers how to interact with the SRv6 C-SID compression solution. For the NEXT flavor or the NEXT-and-REPLACE flavor, it is required to shift the C-SID in the SRv6 SID. So the C-SIDs can be placed in the generalized arguments, and they need to be placed from the most significant bit. While shifting the multiple C-SIDs, the remaining parts of the generalized arguments should not be shifted. Next slide, please.

Q

So finally, we believe there is something to be discussed and clearly defined in the next step: for example, which bit in the bitmap corresponds to which feature, what instructions or fields of the specific feature need to be carried in the generalized arguments, and how much argument space we need to allocate for a specific feature. Finally, you are welcome to give us some advice online, on the mailing list, or to join us and make some contributions. Many thanks, that's all. Thank you.

D

Thanks. Generally, I have a question myself, as an individual contributor, before I go to the queue. It's not obvious to me, anyway, what these features are that you talk about. You're kind of indicating that it's a feature, but presumably there's going to be more data? So, for example, you talk about network slicing.

D

Why do you need to indicate that feature when you're going to need more information than that to actually be able to do anything? You don't need to answer that now, but it's something to consider. The other thing to consider is potentially giving some example of how the compression would actually work with this.

D

Q

Thanks for your comment. In the draft, we have defined a new flavor to indicate that the argument part needs to be processed as a generalized argument. It's named the structural argument flavor for now. This point is not shown in the slides for time reasons; thanks for mentioning it. And how to interact with SRv6 compression is shown on the page above.

R

R

Q

R

Q

R

Sure. So you need to define, I mean, behavior, flavor, I don't want to go into it. You would need to define a new code point, let me say, for the SID behavior/flavor that is going to use generalized arguments. They do not apply to existing ones that are defined as of today. We can discuss further on the list, perhaps, if you have any queries.

D

S

S

Q

I

Okay, this is a quick reply to these questions. In fact, the arguments should be processed in the local node, but because the instructions are to be processed by the specified segment, there can be multiple arguments for different features. So we need a template, or we need structured arguments to be defined for this purpose. But as for these comments, we should refine this draft to explain the use case more. Okay.

T

Next, please. Yeah, here's some background on redundancy protection. It is a generalized protection mechanism used in the context of the segment routing paradigm. As shown in the figure, it replicates the service packet on the redundancy node, transmits the copies of the service packet over different disjoint paths, and, at the merging node, transmits the first copy and eliminates the redundant packets. And to clarify:

T

The first draft, which was adopted by SPRING last year, introduced the redundancy SID and merging SID in the data plane and specified the endpoint behaviors. This draft introduces the redundancy policy in the control plane to instruct multiple redundancy forwarding paths from the controller to the redundancy node. Next.

T

So what is the redundancy policy? It is defined as a variant of the SR policy with minimum changes. So it inherits the main structure and most attributes of the SR policy, and its function is to instruct the replication of the service packet and assign the multiple redundancy forwarding paths. It actually works in two scenarios, which are shown in the two figures below. The main difference between the two scenarios

T

is whether the head-end node of the SR domain is the redundancy node or not. If the head-end node is the redundancy node, it means that it is not necessary to use the redundancy SID at the head end. So it determines whether we use the redundancy SID or not. Next.

T

On this page we use an example to explain the structure and the behavior of the redundancy node. In the figure on the right, there are three forwarding paths between the R node and the M node. We use the first two forwarding paths, in blue, as the redundancy paths.

T

T

So, coming back to the example here: when the first candidate path, CP1, is selected as the best valid candidate path for this redundancy policy, all the SID lists in this candidate path are used for redundancy forwarding and not for the load-balancing function. So, at the same time, the weight of the SID list is not applicable.

T

T

T

Yeah, as next steps we mainly want to have discussion on the mailing list or in the meeting to define the solution and keep it in line with the SR policy, if necessary, and possibly further discussion on alternative solutions. People may want to discuss why not use multiple candidate paths for the redundancy forwarding paths. We're happy to receive any comments and suggestions. Thank you.

F

F

T

B

Yeah, Israel, AT&T. In the material you mentioned a binding SID. You also mentioned something referred to as a redundancy binding SID. However, what wasn't clear is whether the redundancy SID takes all of the semantics of a standard binding SID, or whether the standard binding SID can also function as a redundancy SID. Can you clarify that?

T

Yeah, the redundancy SID was defined in the first draft, and we defined it as a variant of the binding SID. I think it takes all the semantics from the binding SID but adds more functions, like the replication of the packet, to this new binding SID. Yeah. But if you have any...

K

T

D

T

S

T

U

U

U

U

U

Actually, as we all know, SR-MPLS and SRv6 are able to provide explicit routing, which is also requested by DetNet. For the convenience of presentation I just take SRv6 as an example; SR-MPLS is very similar. With source routing, it can steer DetNet flows through the network according to an explicit route by the segment list in the SRH. But explicit routing alone is not enough to guarantee bounded latency. So, what's more... next slide, please.

U

What we are proposing is that SRv6 extensions should be defined to provide explicit routing and bounded latency. The basic idea is very simple: if we indicate an explicit route along the path, and if we can guarantee the bounded latency for each hop along the path, the end-to-end latency can be guaranteed.

U

U

Here I just refer to another document. We have another document, called bounded latency information, which is introduced for the bounded latency function. That document will be presented in the DetNet working group. The basic idea is very simple: we want to avoid going into the details of different hardware implementations for guaranteeing bounded latency. We just define a general concept of bounded latency information. In the existing version we have defined eight bounded latency information types, and they cover most of the cases.

U

We have the mechanisms to guarantee bounded latency, and also the bounded latency information value, which is the specified value of BLI that provides guidance for packet processing with the meaning of a particular BLI type: as I have mentioned, one of the eight types in the table on the right-hand side. So the information pair, BLI type and BLI value, should be indicated by the SRv6 data plane to provide bounded latency. Next slide, please. I notice Greg is in the queue.

S

S

S

C

I believe the detailed definitions of these belong to DetNet. This clearly will not advance until the DetNet work it depends on is adopted, and so we'll leave it to DetNet to debate the exact way to describe these various properties, because it's their space. The only question for SPRING would be how to indicate DetNet properties in a SPRING segment list.

U

U

U

The main difference between these two SIDs is whether to carry the BLI value explicitly in the encapsulation. The End.X BLI SID has two meanings: one is to identify an interface or link, just like the normal adjacency SID. The second meaning is to identify the information pair of BLI type and BLI value on the interface or link, to guarantee bounded latency.

U

So there will be multiple End.X BLI SIDs for one adjacency, in order to differentiate the different BLI types and BLI values that guide packet forwarding. Another variation of the BLI SID also has two meanings: the first is to identify the interface or link, like the normal adjacency SID, and the second is to identify the BLI type to guarantee bounded latency. In this case, the BLI value will be carried in the encapsulation of the SRv6 packet.
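
The two SID variations described above can be contrasted with a small editorial sketch. It assumes a node keeps a local table keyed by SID; the table names, SID values (taken from the IPv6 documentation prefix), and the BLI type and value numbers are all hypothetical and are not defined by the draft.

```python
from typing import Dict, Tuple

# Variation 1 (assumed layout): each SID identifies an adjacency together with
# a (BLI type, BLI value) pair, so one adjacency needs several SIDs.
SID_TO_ADJ_TYPE_VALUE: Dict[str, Tuple[str, int, int]] = {
    "2001:db8:0:1::a1": ("adj-1", 1, 10),
    "2001:db8:0:1::a2": ("adj-1", 1, 20),
    "2001:db8:0:1::a3": ("adj-1", 2, 10),
}

# Variation 2 (assumed layout): each SID identifies an adjacency and a BLI type
# only; the BLI value travels in the packet encapsulation.
SID_TO_ADJ_TYPE: Dict[str, Tuple[str, int]] = {
    "2001:db8:0:1::b1": ("adj-1", 1),
    "2001:db8:0:1::b2": ("adj-1", 2),
}


def resolve_implicit(sid: str) -> Tuple[str, int, int]:
    """Variation 1: everything is derived from the SID itself."""
    return SID_TO_ADJ_TYPE_VALUE[sid]


def resolve_explicit(sid: str, bli_value_in_packet: int) -> Tuple[str, int, int]:
    """Variation 2: the SID gives adjacency and type, the packet gives the value."""
    adjacency, bli_type = SID_TO_ADJ_TYPE[sid]
    return (adjacency, bli_type, bli_value_in_packet)


print(resolve_implicit("2001:db8:0:1::a2"))
print(resolve_explicit("2001:db8:0:1::b1", 20))
```

Under these assumptions the trade-off is visible in the table sizes: the first variation grows with the number of type and value combinations per adjacency, while the second grows only with the number of types.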

U

U

Okay. Okay, thank you, I'm just finishing. There are three possible options for carrying the BLI value. As for what is next, we would like to collect comments from SPRING and DetNet. As Joel has already mentioned, maybe the definition of the mechanisms should be left to DetNet, leaving the question of how to make SRv6 and SR-MPLS support them.

U

D

P

P

This page is the motivation for the segment list identifier. We know the segment routing TE policy draft defines BGP SR Policy to distribute an SR policy to the headend node. There are a policy name and a candidate path name to identify the policy and the candidate path respectively. For the segment list, though,

P

There is no ID or name in the SR Policy. So in some scenarios it is inconvenient to do processing on a segment list. One case is reporting statistics from the headend node to the controller. Normally the headend can collect forwarding statistics per segment list. When reporting to the controller, the headend has to report the statistics with the whole segment list, and the controller has to compare the SIDs one by one to identify which segment list is being reported. Another case is distributing configuration.

P

It is from the controller to the headend node. In addition to distributing the policy through BGP SR Policy, the controller also distributes configuration through NETCONF, and the procedure is similar: the whole segment list has to be carried. In these cases, an identifier for the segment list may be helpful. Next slide, please.
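
The reporting case described above can be illustrated with a short editorial sketch. It assumes the controller stores each segment list it has distributed under a numeric identifier; the function names, identifiers, and SID values are hypothetical and only contrast the two lookups.

```python
from typing import Dict, List, Optional

# Controller-side view (assumed): segment lists keyed by a numeric identifier.
KNOWN_LISTS: Dict[int, List[str]] = {
    101: ["2001:db8::1", "2001:db8::2", "2001:db8::3"],
    102: ["2001:db8::1", "2001:db8::4", "2001:db8::3"],
}


def match_by_sids(reported_sids: List[str]) -> Optional[int]:
    """Without an identifier: compare the reported SIDs against every known list."""
    for list_id, sids in KNOWN_LISTS.items():
        if sids == reported_sids:  # element-by-element comparison of the SIDs
            return list_id
    return None


def match_by_id(reported_id: int) -> Optional[int]:
    """With an identifier: a single dictionary lookup is enough."""
    return reported_id if reported_id in KNOWN_LISTS else None


print(match_by_sids(["2001:db8::1", "2001:db8::4", "2001:db8::3"]))
print(match_by_id(102))
```

Both calls identify the same segment list, but the first requires the headend to send the whole list and the controller to scan its state, which is the inconvenience the presenter is describing.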

P

P

So on this slide, two sub-TLVs are defined. The left sub-TLV specifies the identifier of a segment list by a four-octet number. The right sub-TLV specifies the identifier of a segment list by a symbolic name. These two sub-TLVs are optional, and each must not appear more than once inside the same segment list sub-TLV. Maybe we just need to keep one of them. We can update the draft according to feedback from the working group. Next slide. And this is another draft, about the headend behavior.

P

We know there are multiple ways to steer a packet flow into an SRv6 policy. For binding SID steering, multiple behaviors have been defined. Multiple SRv6 binding SIDs with different behaviors could be encoded in the same SRv6 policy, and the headend can perform the corresponding behavior with the SRv6 binding SID. But for other steering methods, the headend behavior is not specified by BGP SR Policy. Next page.

D

K

Yeah, thank you. Jim Money from Huawei. So firstly, I'm really happy to see people also thinking in a similar way. Actually, we already have some drafts that propose something like a segment list ID or identifier, or a path segment, so we can discuss further to see how to cooperate and avoid multiple redundant drafts in the IETF. Thank you. Okay, thank you.

D

P

P

R

Okay, so a couple of clarifications and one question. The clarification is that the SR Policy YANG model does have a name for a segment list, and I think that should normally be sufficient. So I would suggest that, you know, that's something to be looked at. The second point is that there was feedback here on the BGP SR Policy, and I would much rather prefer, and I'm speaking as a co-author there,

R

I would much rather prefer that such feedback is given on the IDR list, so that, you know, we still have a chance to make any changes or address any comments, rather than bringing new documents. I believe that we have the necessary things there to address this and, you know, the basic behaviors, but if you think that is not the case, please comment on the IDR list. Thank you.

V

V

V

W

Sure, sure. So yeah, the problem statement is, you know, focused on defining requirements and, of course, use cases for building intent-aware paths across multiple domains, and again focused on a distributed routing solution, primarily on BGP, given BGP is the, you know, inter-domain protocol in many of these networks that are being targeted.

W

W

Next slide. Yeah, thanks. So in general, why, you know, focus on BGP? Clearly, today, BGP-LU is used in many seamless MPLS as well as, you know, some multi-year deployments. So because operators have operational familiarity, some of them expect, you know, an incremental solution that they can deploy on top of BGP-LU.

W

W

Go across different BGP domains, mostly in terms of peering policies. Next slide, please. So, as Shraddha said, this is a, you know, merged document, so what we've managed to do is put together some sections, you know, with the content that we would like to see. These sections are broadly divided into two, you know, areas. One is to look at some of the typical deployment scenarios.

W

W

W

There is a section that defines an intent-aware routing framework, introducing some of the, you know, base constructs, the concepts, and how existing solutions work. So this is meant to be used as a reference for some of the, you know, detailed requirements in the subsequent sections. Next slide, please.

W

Then we go into some details, you know, in terms of the technical requirements. Again, it's broken up into different categories. There's a section on the intent requirements; currently, what we have are the transport network requirements specified. In general, the sections that you see underlined are ones that are placeholders, you know, specifically the VPN layer intent requirements, the OAM requirements, as well as the multicast intent requirements. The co-authors and editors, you know, have not yet managed to, you know, discuss and review the content in

W

You know, those sections, but we will address them in subsequent versions. In terms of the other, you know, requirements, we have the steering requirements. We have deployment requirements that go into, you know, different topologies and different transport types that need to be supported. A variety.

W

J

W

W

W

W

W

We, you know, provided some data on the target scale, and then some analysis on what that entails for existing, you know, designs: what are some of the constraints that need to be taken into account. And then, in terms of requirements, we focus on two broad requirements. One is, you know, to scale the MPLS data plane, you know, the need for hierarchy, and then also to reduce the control plane.

W

W

W

D

Thanks, DJ. You mentioned working group adoption. So if the document gets adopted, then the chairs obviously want to see that front page cleaned up, preferably with editors, and everybody else moved into contributors. I'm sure you're aware of that, but the sooner that's done, obviously, the better. So if you could take that to your co-authors, that would be good. Thank you, from the chairs.

C

C

Okay, we are pressed for time. This is a brief presentation on one of the proposals for encoding changes to the MPLS header. This is an active topic being discussed in the MPLS working group. There are multiple proposals. What we have here is one of them, to give you an idea of what's going on in this space.

X

Hi

everyone,

my

name,