From YouTube: IETF114 TLS 20220725 1900

All right, well, we'll get started with some of the formalities here. Thank you for coming once again. Please check in with the on-site tool, and wear a mask unless you're presenting at the front of the room. You are all probably familiar with the Note Well that governs the various policies here at the IETF.

Somebody, Jim Reed, asked me about two and a half years ago when DTLS was going to be done. My crystal ball was really wrong. By the way, we have two other drafts: the remaining PSK-related drafts are AUTH48 done, which basically means they should be published any time now. We've got delegated credentials, aka subcerts, which I believe is on Paul's plate.

I think we're going to go ahead and revisit that. Right now we have the RC for TLS 1.2 and 1.3 out for working group last call; that actually ends August 5th. Then there's everything else that we have in progress, which we'll talk about today, and I think that's it. We're going to go to the presentations, and then we have a slide at the end. So these are some stale or expired drafts.

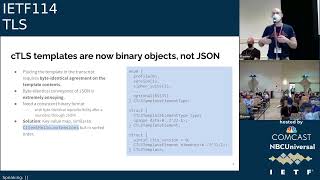

Another really important change is that cTLS is no longer specified as a compression layer. Instead, this draft specifies cTLS as a protocol generator: you define a profile, and each profile defines a unique TLS-like protocol. It is not a compression system; it is a new compact TLS protocol. Related to that, there are now binary objects describing the templates, and finally, there's a new system of handshake framing.

So that means these can only be used in cases where the server knows that the client is sufficiently up to date that it has gotten this entry from the IANA registry. Profile IDs longer than four bytes are essentially private: they're not registered, they're free for anybody to use, and they only have significance within a specific deployment. Then, after the profile ID, the profile proceeds to lay out all the information that's required to understand what that profile ID means. Next slide.
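A minimal sketch of the registration split just described, assuming, as the speaker says, that profile IDs of up to four bytes are IANA-registered while longer IDs are unregistered and only meaningful within one deployment. The helper `classify_profile_id` and its labels are hypothetical and do not come from the cTLS draft.

```python
def classify_profile_id(profile_id: bytes) -> str:
    """Classify a cTLS profile ID by its length (hypothetical helper).

    Assumption taken from the talk: IDs of at most four bytes come from
    the IANA registry, so a peer can only rely on them if it is up to
    date with that registry; longer IDs are unregistered and private to
    a specific deployment.
    """
    if not profile_id:
        # This sketch simply rejects empty IDs; the draft may differ.
        raise ValueError("profile ID must not be empty")
    if len(profile_id) <= 4:
        return "registered"
    return "private"


print(classify_profile_id(b"\x00\x01"))          # registered
print(classify_profile_id(b"example-corp-iot"))  # private
```

A receiver seeing a `private` ID would then look the profile up in its own deployment-specific configuration rather than in the registry.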

Okay, cTLS is no longer a compression layer. Specifically, the previous drafts of cTLS structured cTLS as a layer that sat basically between TLS and its transport. So in principle, you could take a totally standard TLS 1.3 stream, maybe even literally in a middlebox or in some sort of middleware, take that encrypted stream, convert it into a cTLS stream, and convert it back on the other side. It was a transformation that didn't require access to any of the secrets associated with the connection. That has positives and negatives, and we can talk about it, but it seems like the net consensus after the last discussion was that we would rather have cTLS authenticate its own transcript instead of reconstructing a TLS transcript and authenticating that. So the new draft does this: it authenticates its own transcript.

Reconstruction is therefore no longer an implementation requirement. You can implement cTLS without having to reconstruct the corresponding standard TLS handshake, but this has its own problem. cTLS transcripts are very condensed because they omit a bunch of information, on the assumption that both sides already know it. So now, at least in some use cases, we want to make sure that both sides actually agree on the information that wasn't exchanged because it was already configured ahead of time, and that it matches.

We've adopted this solution in this draft: we take the shared information, the pre-shared information, which we call the template, and we prepend it to the transcript. So it's present in the transcript on both sides, and if there's any disagreement about it, the handshake will fail.
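To make the effect of prepending the template concrete, here is a simplified sketch, assuming SHA-256 and a single HMAC as a stand-in for the real cTLS/TLS 1.3 key schedule and Finished computation. The function names, the 4-byte length prefix on the template, and the placeholder key are assumptions of this illustration, not the draft's wire format.

```python
import hashlib
import hmac


def transcript_hash(template: bytes, messages: list[bytes]) -> bytes:
    """Hash the pre-shared template, then every exchanged handshake message."""
    h = hashlib.sha256()
    # The template is never sent; each side feeds in its own copy.
    # A length prefix keeps the template/message boundary unambiguous.
    h.update(len(template).to_bytes(4, "big") + template)
    for msg in messages:
        h.update(msg)
    return h.digest()


def finished_mac(finished_key: bytes, template: bytes, messages: list[bytes]) -> bytes:
    """Simplified Finished value: an HMAC over the transcript hash."""
    return hmac.new(finished_key, transcript_hash(template, messages), hashlib.sha256).digest()


key = bytes(32)  # placeholder; real keys come from the key schedule
wire = [b"ClientHello...", b"ServerHello..."]

client = finished_mac(key, b"template-v1", wire)
server_same = finished_mac(key, b"template-v1", wire)
server_diff = finished_mac(key, b"template-v2", wire)

print(hmac.compare_digest(client, server_same))  # True
print(hmac.compare_digest(client, server_diff))  # False: handshake fails
```

Because both sides mix their own copy of the template into the hash, a configuration mismatch surfaces as a Finished verification failure rather than as silent disagreement.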

Next slide. Of course, putting the template in the transcript and then hashing it into the Finished message means that both sides have to agree on it exactly, and in draft -05 and prior, the template was described as a JSON object. I can imagine that nobody here would be very excited about trying to figure out how to get byte-exact hashes of JSON objects that are being passed around. So in this draft there's still a JSON format defined, but there is also a consistent, reproducible binary format defined for the templates that allows us to consistently hash it into the transcript.
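The JSON problem can be shown directly: two serializations that parse to the same object hash differently, while a canonical binary encoding hashes identically. The `encode_canonical` format below (sorted keys, type tags, length prefixes) is a hypothetical stand-in chosen for illustration; it is not the binary template format defined in the cTLS draft, and the template fields are made up.

```python
import hashlib
import json


def encode_canonical(template: dict) -> bytes:
    """Deterministic encoding: keys sorted, every field length-prefixed."""
    out = bytearray()
    for key in sorted(template):
        value = template[key]
        k = key.encode("utf-8")
        if isinstance(value, int):
            tag, v = 1, value.to_bytes(8, "big", signed=True)
        elif isinstance(value, str):
            tag, v = 2, value.encode("utf-8")
        else:
            raise TypeError(f"unsupported value type: {type(value).__name__}")
        out += bytes([len(k)]) + k + bytes([tag]) + len(v).to_bytes(2, "big") + v
    return bytes(out)


a = '{"version": 1, "cipher_suite": "TLS_AES_128_CCM_8_SHA256"}'
b = '{"cipher_suite":"TLS_AES_128_CCM_8_SHA256","version":1}'

same_object = json.loads(a) == json.loads(b)
json_hashes_match = hashlib.sha256(a.encode()).digest() == hashlib.sha256(b.encode()).digest()
binary_hashes_match = (
    hashlib.sha256(encode_canonical(json.loads(a))).digest()
    == hashlib.sha256(encode_canonical(json.loads(b))).digest()
)
print(same_object, json_hashes_match, binary_hashes_match)  # True False True
```

Any deterministic encoding works for this purpose; what matters is that both peers derive exactly the same bytes from the same template.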

And finally, there's a new system for framing the handshake. Previous drafts were, I think, a little bit ambiguous about how the handshake was framed. We've decided to cover all our bases, basically by supporting, among other things, a full-size handshake option, which allows you to send giant handshake messages, fragment them in DTLS, reorder them, and have them be reassembled in the right order.
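The reassembly behaviour described above can be sketched with DTLS-style fragment fields (message sequence number, fragment offset, total message length). The classes are hypothetical; real DTLS 1.3 and the cTLS framing carry more state (epochs, retransmission, record boundaries) than this toy handles.

```python
from dataclasses import dataclass


@dataclass
class Fragment:
    message_seq: int   # which handshake message this piece belongs to
    total_length: int  # length of the complete message
    offset: int        # where this piece starts within the message
    data: bytes


class Reassembler:
    """Buffers out-of-order fragments and releases whole messages in order."""

    def __init__(self) -> None:
        self._buffers: dict[int, bytearray] = {}
        self._filled: dict[int, set[int]] = {}
        self._next_seq = 0

    def add(self, frag: Fragment) -> None:
        if frag.message_seq < self._next_seq:
            return  # already delivered; ignore the duplicate
        end = frag.offset + len(frag.data)
        if frag.offset < 0 or end > frag.total_length:
            raise ValueError("fragment outside message bounds")
        buf = self._buffers.setdefault(frag.message_seq, bytearray(frag.total_length))
        buf[frag.offset:end] = frag.data
        self._filled.setdefault(frag.message_seq, set()).update(range(frag.offset, end))

    def pop_ready(self) -> list[bytes]:
        """Return every complete message whose predecessors are complete too."""
        ready = []
        while (
            self._next_seq in self._buffers
            and len(self._filled[self._next_seq]) == len(self._buffers[self._next_seq])
        ):
            ready.append(bytes(self._buffers.pop(self._next_seq)))
            del self._filled[self._next_seq]
            self._next_seq += 1
        return ready


r = Reassembler()
r.add(Fragment(1, 6, 0, b"second"))      # message 1 arrives first
r.add(Fragment(0, 11, 6, b"world"))      # second half of message 0
print(r.pop_ready())                     # []
r.add(Fragment(0, 11, 0, b"hello "))     # first half of message 0
print(r.pop_ready())                     # [b'hello world', b'second']
```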

So this draft is definitely still a work in progress. There are a lot of open, interesting questions. I've attempted to highlight some of them here, but there are a lot more details open in the draft, with a lot of highlighted open-issue or open-question tags. So I would encourage anybody who's interested in this to read the draft, think for yourself, and maybe help the editors think through some of these questions.

Sorry, so I think, on this first point, yeah: the answer is almost certainly what you have listed here, which is that if we want compressed elliptic curves, we just define new code points. After all, we already have compressed elliptic curves for X25519 and X448; they just come that way. So I think for P-256, if anyone still cares, defining a new code point is the right answer, and of course, you know, TLS 1.3 doesn't have very many curves left anyway.
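The size argument can be checked with standard SEC 1 point encodings: an uncompressed P-256 point is 65 bytes (0x04, x, y), while the compressed form is 33 bytes (0x02 or 0x03 for the parity of y, then x), close to the 32 bytes X25519 gives for free. The sketch uses the published P-256 domain parameters and the standard square-root trick for primes p ≡ 3 (mod 4); it is illustrative code, not a vetted implementation, and it does not imply any particular TLS code point.

```python
# NIST P-256 (secp256r1) domain parameters.
P = 2**256 - 2**224 + 2**192 + 2**96 - 1
A = P - 3
B = 0x5AC635D8AA3A93E7B3EBBD55769886BC651D06B0CC53B0F63BCE3C3E27D2604B
GX = 0x6B17D1F2E12C4247F8BCE6E563A440F277037D812DEB33A0F4A13945D898C296
GY = 0x4FE342E2FE1A7F9B8EE7EB4A7C0F9E162BCE33576B315ECECBB6406837BF51F5


def encode_uncompressed(x: int, y: int) -> bytes:
    return b"\x04" + x.to_bytes(32, "big") + y.to_bytes(32, "big")


def encode_compressed(x: int, y: int) -> bytes:
    # 0x02 if y is even, 0x03 if y is odd, followed by x.
    return bytes([0x02 | (y & 1)]) + x.to_bytes(32, "big")


def decode_compressed(data: bytes) -> tuple[int, int]:
    if len(data) != 33 or data[0] not in (0x02, 0x03):
        raise ValueError("not a compressed P-256 point")
    x = int.from_bytes(data[1:], "big")
    if x >= P:
        raise ValueError("x out of range")
    rhs = (pow(x, 3, P) + A * x + B) % P
    y = pow(rhs, (P + 1) // 4, P)  # square root, valid because P % 4 == 3
    if (y * y) % P != rhs:
        raise ValueError("x is not on the curve")
    if (y & 1) != (data[0] & 1):
        y = P - y  # pick the root with the advertised parity
    return x, y


print(len(encode_uncompressed(GX, GY)), len(encode_compressed(GX, GY)))  # 65 33
print(decode_compressed(encode_compressed(GX, GY)) == (GX, GY))  # True
```

The price of the smaller encoding is a modular square root on the receiving side, which is part of why a separate code point (rather than silent compression) is the cleaner way to signal it.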

I forgot to check in, oops. One of the things that Ilari also raised was the relationship to DTLS 1.3. Initially we wanted it defined in a way that the compression works for both DTLS and TLS, for obvious reasons: the protocols are very, very similar at the handshake layer.

So I'm not sure I understand. I'll note this is a conversation between the editors, so maybe it can happen offline, but I do think that the current draft is essentially no longer a compression layer. It's its own protocol, and it is essentially a system for generating both streaming security protocols like TLS and datagram-oriented security protocols like DTLS. I do believe they're both fully covered now.

Right, and that was the intention. Then Ilari raised that question in his email: he doesn't see the need for this, whereas I actually see the need. I'm not entirely sure whether we are fully there in specifying the functionality for both DTLS and TLS, but yeah, I think prototyping will help show whether we're really there yet.

Martin Thompson. So, the empty messages one bothers me a little bit. I think EndOfEarlyData is probably the one that bothers me the most here. We need that one, and we need to know that it's there, because it's the signal we use to determine the transition point. It's not necessary in the datagram versions, but it is necessary in the stream versions; otherwise we wouldn't have added it. So I think we can't omit them, and it's probably better not to worry about that sort of thing.
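Why the stream case needs EndOfEarlyData can be shown with a toy model: TLS records on a stream carry no epoch, so the server learns that the client has switched from early-traffic keys to handshake-traffic keys only when that message arrives. (DTLS 1.3 records carry epoch bits in the header, which is why the datagram version omits the message.) The class and state names below are hypothetical, and the model ignores decryption entirely.

```python
from enum import Enum


class Epoch(Enum):
    EARLY = "client_early_traffic_secret"
    HANDSHAKE = "client_handshake_traffic_secret"


class StreamServer:
    """Toy model: which client key protects each record on a TLS stream."""

    def __init__(self) -> None:
        self.epoch = Epoch.EARLY
        self.early_data: list[bytes] = []

    def receive(self, msg_type: str, body: bytes = b"") -> Epoch:
        """Handle one already-decrypted message; return the epoch it arrived in."""
        arrived_in = self.epoch
        if self.epoch is Epoch.EARLY:
            if msg_type == "application_data":
                self.early_data.append(body)
            elif msg_type == "end_of_early_data":
                # Nothing in a stream record says "new keys from here on",
                # so this explicit message marks the transition point.
                self.epoch = Epoch.HANDSHAKE
            else:
                raise ValueError(f"{msg_type} not expected under early keys")
        return arrived_in


server = StreamServer()
server.receive("application_data", b"GET / HTTP/1.1")
server.receive("end_of_early_data")
print(server.epoch, server.receive("finished"))  # Epoch.HANDSHAKE Epoch.HANDSHAKE
```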

And the other one that I got up to speak about was the versioning. As long as you have some sort of context string that goes into the transcript, you can change it later. You don't have to worry about putting a version number in anywhere or anything like that; just change the context string, and I think that'll be fine.

Yeah, I mean, whichever way you do it, the problem that you need to solve, and I'm not sure you've worked through all of it, is that if you have a version number, you have to have expectations about how people handle a version number they don't understand, which I imagine at this point is: don't use the thing at all. Yeah, yeah.

The compression doesn't affect that one way or the other. All the analyses that I know of were done on a symbolic level, ignoring how things were written on the wire. So it's plausible. Let me be clear: as far as I know, it might be possible to produce a profile that was horribly broken, where you set, like, all the MACs to zero or something, but I do not believe compression affects that.

The question is... let me just try to narrow that very slightly. There were a number of proposals, and I think, roughly, he's doing some work here. So, as a concrete example: suppose that you make the transformation that some people try to make, which is you remove the Finished MACs and rely entirely on the AEAD. Then you lose the separation between the key exchange, the handshake, and the encryption layer that we got nearly right, and so under those circumstances, for instance, it would not be safe to replace the cipher suite with one that had a very short MAC as well, whereas it would be quasi-safe with TLS 1.3, as long as you know what you're doing. Quasi, by which I mean, of course, you have a short MAC, so you get what you get.
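The "you get what you get" point about short MACs is easy to quantify: if record integrity rests only on a tag truncated to t bits, a single blind forgery attempt succeeds with probability 2^-t. The sketch uses HMAC-SHA256 truncation purely as an example of a short tag; the helper names are hypothetical and not part of any draft.

```python
import hashlib
import hmac
import secrets


def truncated_tag(key: bytes, message: bytes, tag_bytes: int) -> bytes:
    """HMAC-SHA256 truncated to its first `tag_bytes` bytes."""
    return hmac.new(key, message, hashlib.sha256).digest()[:tag_bytes]


def blind_forgery_probability(tag_bytes: int) -> float:
    """Chance that one random guess matches a tag of this length."""
    return 2.0 ** (-8 * tag_bytes)


def count_lucky_guesses(tag_bytes: int, attempts: int) -> int:
    """Empirically count random tags that happen to verify."""
    key = secrets.token_bytes(32)
    target = truncated_tag(key, b"forged record", tag_bytes)
    return sum(
        hmac.compare_digest(secrets.token_bytes(tag_bytes), target)
        for _ in range(attempts)
    )


for n in (1, 4, 16):
    print(n, blind_forgery_probability(n))
# A 1-byte tag should verify roughly once per 256 random guesses.
print(count_lucky_guesses(1, 25_600))
```

With the Finished MACs still in place, a short record tag only weakens record protection; without them, the same short tag is also all that stands between an attacker and a tampered handshake.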

So, two things. One: I actually spoke with punch out. I don't really care if we omit empty messages per se, just so long as there's only one valid way of doing it: either everyone must omit or no one may ever omit. Omitting is fine, but even if you say both must be accepted, people won't implement that. And with respect to formal analysis, I think the only analysis that I'm aware of that actually models...

There were two other things that affect the formal analysis, and maybe we should just do it the other way around: we describe it and then have the community, like Karthik and Jonathan, do a formal analysis. One of them is the use of the random numbers, initially in ClientHello and ServerHello.

There's a picture on the next slide. Stop me if you want me to go faster or slower. It kind of works; it's a work in progress, and it'll probably change a little bit. Next slide. There is a description of what's in the response, which is relatively obvious: I think it has the ECHConfigList, a TTL you'd like, and which ports on the web server are using that. Next slide.
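A minimal sketch of consuming such a response, assuming a JSON body with three members carrying the base64-encoded ECHConfigList, the desired TTL, and the list of ports. The member names `echconfiglist`, `ttl`, and `ports` are placeholders chosen for this example; the draft should be consulted for the actual field names and encoding.

```python
import base64
import json
from dataclasses import dataclass


@dataclass
class EchPublication:
    ech_config_list: bytes  # raw ECHConfigList bytes to publish in DNS
    ttl: int                # TTL the web server would like, in seconds
    ports: list[int]        # web server ports that use this configuration


def parse_publication(body: str) -> EchPublication:
    obj = json.loads(body)
    config = base64.b64decode(obj["echconfiglist"], validate=True)
    ttl = int(obj["ttl"])
    ports = [int(p) for p in obj["ports"]]
    if ttl <= 0:
        raise ValueError("TTL must be positive")
    if not ports or not all(0 < p < 65536 for p in ports):
        raise ValueError("ports must be a non-empty list of valid port numbers")
    return EchPublication(config, ttl, ports)


# Placeholder bytes only; this is not a usable ECHConfigList.
example = '{"echconfiglist": "AEX+DQBB", "ttl": 3600, "ports": [443, 8443]}'
pub = parse_publication(example)
print(pub.ttl, pub.ports, len(pub.ech_config_list))  # 3600 [443, 8443] 6
```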

So you'd have to say something more about what to put in retry configs, which we don't currently. You could change the ECH draft and do it, but I don't think that's really satisfactory, so I think that doesn't particularly work well. We might change our minds, but that's what I think. Next one, unless somebody... if you have any comments or want to disagree, just jump to the mic, please. Another comment was that you could create another resource record somewhere that basically says "here's what I'd like in an SVCB or HTTPS RR". Again, that could work. The difference is you'd lose the server authentication that you get with the well-known URL. Exactly what the properties of having that server authentication are is something to think about, but you'd lose it. And again, at least for my setup, it wouldn't really solve the problem, because I still need some way to get the public key for ECH out of the web server and into the DNS infrastructure.

So it looks like this. In theory, you could be much more generic about this and say we'd like to provide a mechanism for a TLS server or a web server to publish everything it wants in an SVCB or HTTPS RR, and that could get very complicated; you could aim for more generic. It seems to me, at least for now, that it's the ECH keys that are changing regularly, and that's what is motivating this.

You can ignore the slide for now, unless we get into a discussion about it. Next: there could be other content that you'd like to see in the HTTPS RR that ends up essentially reflected in the inner ClientHello in ECH. I had a look through and didn't see anything obvious, but if there is, we should think about that and probably add it to this mechanism, if we go ahead with it. And next: SVCB is kind of a bit of a mystery to me, to be honest.

Yep. Other points that were raised in the discussion: Rob made a point about not mentioning the shared-mode and split-mode topologies. mnot said it might need a well-known URL; that's fair enough. Ben said the path was wrong; I didn't understand why, but never mind. And lastly, looking through it recently, I think either you have a port-prefixed QNAME in your HTTPS RR, or you can have a port in the HTTPS RR, which I didn't understand.
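For reference, the two options correspond to different SVCB mechanisms: the port can be encoded in the query name via port-prefix naming, or carried inside the record as the `port` SvcParam. A small sketch of the query-name side, assuming the HTTPS RR rule that the default port 443 uses the bare hostname and any other port uses `_<port>._https.<host>`; the function name is hypothetical.

```python
def https_rr_qname(host: str, port: int = 443) -> str:
    """Name to query for an HTTPS RR, using port-prefix naming."""
    host = host.rstrip(".")
    if not 0 < port < 65536:
        raise ValueError("invalid port")
    if port == 443:
        return host  # default port: no prefix labels
    return f"_{port}._https.{host}"


print(https_rr_qname("example.com"))        # example.com
print(https_rr_qname("example.com", 8443))  # _8443._https.example.com
```

The `port` SvcParam answers a different question: once the record is found, it tells the client which port to connect to, which may differ from the port used to form the query name.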

And then the one sort of lacuna is, you know, retry mode with the public name, but that happens like seconds afterwards. If you change your topology in that case, sorry, bad day. But because you have a lot of TTL here, that's much longer than that. It's not seconds, it's hundreds of seconds, and that can easily produce the situation we're talking about here, and so...

I mean, I'm provisionally prepared to believe that it's fine in the case you just laid out, but then I think there needs to be a very clear warning that this is not a replacement for the HTTPS RR for generic clients, because we don't want people getting into that mode.

Sure. That's definitely not the intent for this, yeah. I agree. Okay, just before you go back: the next steps. I had a meeting with Ben and Rich; they're going to join as co-authors, they said, unless they hate me after this. We'll probably ask the chairs whether we should create a git repo for this immediately or whatever, and I think ultimately the aim would be, whenever you hit publication request for ECH, maybe to look for a working group last call around about then, but not before.

One of my points was actually, I think, covered to some extent by Ekr. I'm a bit concerned here that the caching infrastructure that's there for HTTP is not really being considered by this draft. Your answer, that this is intended for a very limited use case of an HTTP server talking to a specific DNS infrastructure, somewhat removes the possibility that there's a random set of transparent caches in the middle. But especially if you think there might be a different use case in the future, you really do need to think through what the caching architecture looks like here: the interaction with the time to live that might be present in the HTTP caches, which are not always obedient to the instructions, and how you would deal with that, for example whether you'd use ETags or something like that. But the other bit of this is that it really doesn't sound fully baked yet.
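One way to reason about the TTL interaction raised here is to bound the TTL published into DNS by how much longer the fetched HTTP copy is still fresh (its `max-age` minus its `Age`). This is a hypothetical policy sketch, not something the draft specifies, and it deliberately ignores heuristic freshness, `no-cache`, and validators such as ETags.

```python
import re
from typing import Optional


def http_freshness_left(cache_control: str, age_seconds: int) -> Optional[int]:
    """Seconds of explicit freshness left: max-age minus the Age header."""
    match = re.search(r"(?:^|,)\s*max-age\s*=\s*(\d+)", cache_control, re.IGNORECASE)
    if match is None:
        return None  # no explicit lifetime; heuristics are out of scope here
    return max(0, int(match.group(1)) - age_seconds)


def dns_ttl_to_publish(desired_ttl: int, cache_control: str, age_seconds: int) -> int:
    """Never advertise a DNS TTL longer than the HTTP copy stays fresh."""
    left = http_freshness_left(cache_control, age_seconds)
    return desired_ttl if left is None else min(desired_ttl, left)


print(dns_ttl_to_publish(3600, "public, max-age=600", 100))  # 500
print(dns_ttl_to_publish(300, "max-age=600", 0))             # 300
```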

All right, exciting times: registries, yeah. I know, exactly. Next slide, please. Just a quick refresher: we had an individual draft that we took to SAAG to try to figure out what we wanted to do to change the Recommended column, because that's really all this update is about. The consensus was to add a D, which basically means discouraged or weak, and this -01 version of the working group draft is an attempt to do that.

So there might be some controversial selections here; Joe and I basically just threw them down to see what was going to happen. Also note: there are some other changes in this version that try to make it a little bit easier on IANA, like "we changed these ones, we didn't change these other ones", to make it a little clearer. This change has been a bit of a pain in the ass because there were new registrations.

So we did some minor updates to the references too. First, the ExtensionType Values registry. We went through each TLS registry and asked what we were going to change, and the two that we came up with were truncated_hmac and connection_id. So we marked those as D. I'm just going to roll through these, and people can jump up and scream and yell. The cipher suite registries: I'm hoping that most of these will be handled by the deprecate-obsolete-kex draft.

I think we should rename it to _RESERVED and then make it N or D. I don't care. Okay, I guess my position is that all the reserved ones may need another category, like, this is not even a valid code point anymore. Well...

When we did this draft, there were some orphaned registries that were TLS 1.2-specific, and we were lazy and didn't address them. But TLS 1.2 is going to be around for a while, and now that we've added this D, we figured we had to go through these. So here's another list of registries that are orphaned that we need to address. Next. The first one is hash algorithms; I highlighted the ones in D just to show that they were different.

Martin Thompson. I think the standard that we need to be applying here is that if there isn't an RFC that we can all get behind that says this is bad, then it shouldn't say D either. That's really the standard I would prefer us to apply: when it says D, it means the IETF thinks this is bad.
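The standard Martin proposes can be expressed as a simple consistency check over registry entries: a `D` should only appear when some RFC actually says the code point is bad. The `Recommended` values follow the registry's Y/N column plus the new D; the `Entry` type, the `discouraged_by` field, and the sample rows are hypothetical and only illustrate the rule.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Recommended(Enum):
    Y = "Y"  # the IETF has consensus to recommend this item
    N = "N"  # no IETF recommendation; not necessarily flawed
    D = "D"  # discouraged: the value this draft adds


@dataclass
class Entry:
    name: str
    recommended: Recommended
    discouraged_by: Optional[str] = None  # document that says "this is bad"


def unsupported_d_marks(entries: list[Entry]) -> list[str]:
    """Names of entries marked D without a document saying they are bad."""
    return [
        e.name
        for e in entries
        if e.recommended is Recommended.D and not e.discouraged_by
    ]


rows = [
    Entry("example_a", Recommended.D, discouraged_by="hypothetical-rfc"),
    Entry("example_b", Recommended.D),
    Entry("example_c", Recommended.N),
]
print(unsupported_d_marks(rows))  # ['example_b']
```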

All right, so let's go past this one. Then we have some open issues with elliptic-curve-related registries. One of the things we thought was, hey, maybe we could put this in the deprecate-obsolete-kex draft, but these are the six registered values. Maybe we just stick it in our draft and figure out what we want to do with it. I didn't really know what to do with these.

So the reason that you don't want the other ones, and you want them to be D, is that if you put them on the wire, things will explode, right? It's not that they're broken, and it's not that they're fundamentally insecure. It's just that if you put them on the wire, things will break, and so we can actively discourage people from doing that. So let's do it.

Yeah, yeah, I agree, explicit curves are bad news. It was the compressed ones I was asking about, not the explicit curves. We should definitely forbid explicit curves, and the compressed ones are kind of like, just nobody uses them, and Martin says they're going to make things blow up. So I think we're in agreement. All right.

During the last working group meeting at IETF 113, we were asked to verify that this document should fall under this working group, the TLS working group, and the chairs kindly checked with the Security Area Director, Paul Wouters, and he confirmed that the document indeed belongs in this group. So thanks for raising this issue.

The other part of this issue is what happens when the client encounters a so-called bad group. If the group is of an appropriate size, it is safe, security-wise, for the client to verify the group structure and proceed with the connection. If the group is safe, we could add language to the document allowing that; however, performing this verification is computationally expensive.

So if the client is unwilling to invest in performing this verification, or if we choose to disallow non-listed groups altogether, there is the question of what behavior the document should specify: whether the client must abort the connection in such circumstances, or merely should abort the connection.
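To see why verification is considered expensive, here is a sketch of what a client would have to check for an unlisted group: that p is a safe prime (p = 2q + 1 with q prime), which means two primality tests on numbers of 2048 bits or more, and that the generator and the peer's public value lie in the order-q subgroup. Note that q can only be derived from p this way when p is a safe prime; for other primes the TLS 1.2 key exchange does not carry enough information, as pointed out in the discussion that follows. The code uses a textbook Miller-Rabin test and tiny demonstration numbers; it is not a hardened implementation.

```python
import random


def is_probable_prime(n: int, rounds: int = 40) -> bool:
    """Miller-Rabin probabilistic primality test."""
    if n < 2:
        return False
    for p in (2, 3, 5, 7, 11, 13, 17, 19, 23, 29, 31, 37):
        if n % p == 0:
            return n == p
    d, s = n - 1, 0
    while d % 2 == 0:
        d //= 2
        s += 1
    for _ in range(rounds):
        x = pow(random.randrange(2, n - 1), d, n)
        if x in (1, n - 1):
            continue
        for _ in range(s - 1):
            x = pow(x, 2, n)
            if x == n - 1:
                break
        else:
            return False  # a witness proves n composite
    return True


def is_safe_prime_group(p: int, g: int) -> bool:
    """p = 2q + 1 with p, q prime, and g generating the order-q subgroup."""
    q = (p - 1) // 2
    if not (is_probable_prime(p) and is_probable_prime(q)):
        return False
    return 1 < g < p - 1 and pow(g, q, p) == 1


def is_valid_peer_value(p: int, y: int) -> bool:
    """Peer's public value must be in range and in the order-q subgroup."""
    q = (p - 1) // 2
    return 1 < y < p - 1 and pow(y, q, p) == 1


print(is_safe_prime_group(23, 2))   # True: 23 = 2*11 + 1, and 2 has order 11
print(is_safe_prime_group(29, 2))   # False: (29 - 1) / 2 = 14 is not prime
print(is_valid_peer_value(23, 22))  # False: 22 = p - 1 is excluded
```

For a 2048-bit or larger p, the two primality tests dominate the cost, which is the per-connection expense the presenters are weighing against simply aborting.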

Okay, Scott Fluhrer, Cisco Systems. As for checking the group structure: unless it's a safe prime, we do not pass enough information to check the group structure, and even with a safe prime, it's not cheap. I would recommend just forbidding anything other than a named group.

Yeah, Ben Kaduk. A nice segue from Viktor's comment, and this is just me sort of spitballing, brainstorming on the fly here: would we get enough if we made it easy to register new named groups for these things that are already widely deployed, such as what Postfix has, or anything else like that? Would that get us enough properties that we would be able to leverage it for the purposes of this document?

So maybe what the specification needs to do is say "unless there was a known pre-agreement with the peer that you're talking with". I don't believe anybody here actually thinks that it's wrong for a private organization to use their own groups that they've done their own verification on. I'm not recommending people do that, but for the pushback that you're going to get from these pen-testing tools, we need to be able to say: this pushback is wrong, and here's why, and here's a statement that understands why the pen-testing tools thought they had the right idea. We need to acknowledge that concern so that we can actually get them to stop making these requests. I'm not sure exactly the right way to do that in this wording. I like Ben's suggestion of having a registry that people can put widely distributed groups in that have actually been checked, but I'm very reluctant to...

Go ahead, Martin. Yeah, Martin Thompson. The discussion in chat here is kind of interesting. I think several of us realized that in TLS 1.2 and earlier, using the FFDHE groups only specifies essentially the one value, which doesn't let you do the validation Scott points out. We couldn't turn RFC 7919 on for TLS 1.2 because it's just impractical, and then with people going off and generating their own groups, you've got no way of knowing that they're okay a priori. So this is exactly the point that dkg made: if you've got your own private agreement, that's great, but you can also have your own private protocol at that point. So we're not adding a lot of value here.

So in global aviation, we are defining a trust framework to be able to use PKI, and to harmonize and map commercial aviation identities and access requirements to a common set of operating rules. That may seem very common within the Internet, and even in examples like the U.S. Federal Bridge, but it has never been done so far in aviation. We have been using something that has not been used very much: the Server-Based Certificate Validation Protocol (SCVP), to validate trust and identity.

One of the challenges in aviation is that you have a lot of different organizations that are not centralized. You have ICAO, which operates on a principle of state sovereignty, and the software is quite often custom, developed, owned, operated, and managed independently. So there's no easy way to say, "I can send out trust lists with my software updates to the different entities", and then, like with SSL on the web, you can trust who I am when you connect to me.

So you can see it becomes a big maintenance problem for the aircraft itself. In that case, even if you use short-lived certificates, doing certificate validation on the aircraft using SCVP would only require a very small number of trust anchors, one or a few, that don't change. We are proposing a new SCVP validation extension to remove the burden of the SCVP request from the aircraft client and have the ground server make it instead.

All right, is that better? Sorry, I don't speak loudly to start with. Okay, so this shows a diagram of how it could be used in aviation. In our case, the aircraft is the DTLS client. When it sends down the ClientHello message to the ground system, it would include this new DTLS extension, the SCVP validation request, with a structure that optionally includes a list of the SCVP responders that it trusts.

If it does not include any, then it implies that the ground system already knows which SCVP responders the aircraft trusts. It can also optionally send down a list of trust anchors; if it includes the trust anchors, the certificate path has to terminate at one of those trust anchors.
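The optional fields just described suggest roughly the following shape for the ClientHello extension and the two rules attached to it. This is a hypothetical model for illustration: the class, field, and function names are not taken from the proposal, and responders and trust anchors are represented as opaque strings rather than their real encodings.

```python
from dataclasses import dataclass, field


@dataclass
class ValidationRequest:
    """Hypothetical contents of the proposed ClientHello extension."""
    validation_type: str = "scvp"  # defined for SCVP, extensible to others
    trusted_responders: list[str] = field(default_factory=list)  # optional
    trust_anchors: list[str] = field(default_factory=list)       # optional


def responders_to_use(req: ValidationRequest, preconfigured: list[str]) -> list[str]:
    """An empty list means the ground system already knows whom the client trusts."""
    return req.trusted_responders or preconfigured


def path_ends_at_allowed_anchor(req: ValidationRequest, path_root: str) -> bool:
    """If anchors were sent, the certificate path must terminate at one of them."""
    return not req.trust_anchors or path_root in req.trust_anchors


bare = ValidationRequest()
scoped = ValidationRequest(trusted_responders=["responder-1"], trust_anchors=["anchor-A"])
print(responders_to_use(bare, ["ground-default"]))      # ['ground-default']
print(path_ends_at_allowed_anchor(scoped, "anchor-B"))  # False
```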

The ground server can optionally have a cache (it's suggested to have one). If there is a matching value in the cache, it returns that up; if not, it translates the validation request into an SCVP CVRequest object, sends it to the SCVP server, and receives back the response from that server. The response is signed by the trusted SCVP server, so it can be trusted by the aircraft, and it is sent back up to the aircraft with the certificate of the server.
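The ground-server flow (answer from the cache if possible, otherwise build a CVRequest, query the SCVP server, and relay its signed response) might look like this. It is a hypothetical sketch: `query_responder` stands in for whatever SCVP client the server uses, the lifetime it returns is an assumed input, and signature checking is left to the aircraft, which trusts the SCVP server's signature rather than the ground server.

```python
import time
from typing import Callable, Dict, Tuple

# (certificate chain, trust anchors) -> (signed CVResponse, lifetime in seconds)
Responder = Callable[[bytes, Tuple[bytes, ...]], Tuple[bytes, float]]


class ValidationCache:
    """Ground-server cache of signed SCVP responses (hypothetical)."""

    def __init__(self, query_responder: Responder,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._query = query_responder
        self._clock = clock
        self._entries: Dict[Tuple[bytes, Tuple[bytes, ...]], Tuple[bytes, float]] = {}

    def get(self, cert_chain: bytes, trust_anchors: Tuple[bytes, ...]) -> bytes:
        key = (cert_chain, tuple(sorted(trust_anchors)))
        now = self._clock()
        cached = self._entries.get(key)
        if cached is not None and cached[1] > now:
            return cached[0]  # still-valid signed response, no SCVP round trip
        response, lifetime = self._query(cert_chain, key[1])
        self._entries[key] = (response, now + lifetime)
        return response


calls = []


def fake_responder(chain: bytes, anchors: Tuple[bytes, ...]) -> Tuple[bytes, float]:
    calls.append(chain)
    return b"signed:" + chain, 60.0


fake_now = [0.0]
cache = ValidationCache(fake_responder, clock=lambda: fake_now[0])
cache.get(b"chain-1", (b"anchor",))
cache.get(b"chain-1", (b"anchor",))
print(len(calls))  # 1: the second lookup is served from the cache
```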

Next slide, please. All right, so this shows it in a simplified handshake diagram. You can see there are very few changes to the actual handshake. There's an extension on the ClientHello message, a validation request. We have defined it for type SCVP, but have allowed it to be extended to other validation protocols for future use. The SCVP CVRequest and CVResponse already exist.

That's not new; what's new is incorporating it into the TLS server. Then there's a new extension to the Certificate message carrying the path validation information. Again, it's defined for type SCVP and would include that CVResponse back to the aircraft, or in this case, back to the TLS client.

So there is a mapping from the request message coming from the client to the request going to the SCVP server; that's defined in the proposal. Then there are certain values, only known to the server, that need to be checked in the response coming back, to verify that the response is valid before passing it up to the aircraft.

Hi, this is Hannes. This is more in response to Uri, because this topic comes up regularly. We've gone through this numerous times on the question of whether DTLS is something that you can use on IoT devices, and the answer is: look at the papers, look at the documents. We've done the profile; it's deployed in millions of devices. Even TLS is deployed in millions of IoT devices.

H

H

F

T

F

I believe, honestly, talking about all these, he does work on IoT devices. So, Eric Rescorla. I just want to see if I can sharpen Ben's point a little bit to make sure I understand. As I understand it, this is an entirely stock SCVP server on the right, correct? Good, okay. So this seems quite reasonable. We talked on email a little bit, I think. I guess my question is: what do you want? Do you want this adopted by the working group?

S

F

For working group adoption, yes, please.

I guess so. I'm sort of provisionally in favor of that. My one question would be this: we often have situations where an external body wants us to do something and where we have a very thin relationship with them, with only a couple of people. Are you the people we're talking to? Are there other people who will show up and help us out, because...

F

X

Bob Moskowitz. Rob Segers has pulled me into this fun and games, so I can answer that. Yes, the people at the table are the airframe manufacturers, the other national CAAs, and the airline industry. A number of the players are at the table working out how they're going to do this, because they really have a serious issue they need to address. I feel that the players are there. They are dedicated to getting this done.

X

X

But the number of those servers around the world is a manageable number, and again, the parties that own these things are at the national airports and so forth. We have enough of them committed, I think, that the rest will then follow along: the big players are committed and the rest will participate. So I think you have the community of interest for this, and it's worth the working group putting its knowledge behind it to make sure this is done right, because this has international consequences.

C

C

H

R

H

I'm not so much asking for adoption as figuring out whether any of you are interested in this type of activity. As you know, I work for Arm, as was previously mentioned. On my side of the industry there's a lot of excitement about defining new hardware extensions and coming up with new forms of isolation, which then obviously bubble up into operating systems, and also about demonstrating to other parties that you have these hardware capabilities and isolation capabilities. That happens in the form of attestation.

H

H

It's called the Arm Platform Security Architecture (PSA) Initial Attestation Token, some fancy name our marketing people came up with, and it captures what the hardware is and also the initial state at boot time: what the software actually comprises and what the different components are. That's quite useful information, obviously, but for the DTLS exchange you need more than just that token, which you could describe as a bare token. You need...

G

H

What I talk about is also, obviously, described in a document. So here's an instantiation of how this looks in a typical scenario. In this case the device is split into two parts: the part where Linux runs, one compartment, and another one, here called the secure world, which runs something like OP-TEE as its operating system.

H

So they have different operating systems running at the same time in different software isolation containers. The DTLS stack is running in Linux as an application and communicates with the secure-world side, where an attestation service runs, and that talks to a specific security engine, in this case something like a TPM or something else, to get the attestation information. And then it bubbles up.

H

But, as I said, that token by itself won't do the job. So another layer is needed, where the device at some point in time generates a public/private key pair and produces a key attestation token. Conceptually, you could think of it as a CBOR Web Token (CWT) with a proof-of-possession key, which is then used in the DTLS handshake to actually demonstrate possession of the private key. That key is generated inside the secure world.
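To make the key attestation token idea concrete, here is a toy sketch of how a relying party might check that a token vouches for the key used in the (D)TLS handshake. Assumptions: JSON stands in for CBOR, an HMAC stands in for the platform's asymmetric attestation signature, and the `cnf` field borrows the name of the proof-of-possession confirmation claim (RFC 8747 defines it for CWTs). The handshake signature that actually proves possession of the private key is not modelled.

```python
import hashlib
import hmac
import json

# Stand-in for the hardware-protected attestation key. Real platforms sign the
# token with an asymmetric key; HMAC only keeps this sketch stdlib-only.
ATTESTATION_SECRET = b"demo-attestation-key"


def thumbprint(public_key: bytes) -> str:
    return hashlib.sha256(public_key).hexdigest()


def _mac(body: dict) -> str:
    payload = json.dumps(body, sort_keys=True).encode()
    return hmac.new(ATTESTATION_SECRET, payload, hashlib.sha256).hexdigest()


def issue_key_attestation(tls_public_key: bytes, platform_claims: dict) -> dict:
    """Secure-world side: bind the freshly generated TLS key to the platform claims."""
    body = {"claims": platform_claims, "cnf": thumbprint(tls_public_key)}
    return {"body": body, "sig": _mac(body)}


def verify_key_attestation(token: dict, handshake_public_key: bytes) -> bool:
    """Relying-party side: token is authentic and names the key used in the handshake."""
    if not hmac.compare_digest(_mac(token["body"]), token["sig"]):
        return False
    return token["body"]["cnf"] == thumbprint(handshake_public_key)
```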

H

F

Questions? Yeah, so you're probably going to tell me there's some internal reason why this won't work, which I'm willing to accept, but is there one key or two keys? Does this look like TLS client auth, or does it look like client auth with a funny certificate attached to it, or something else?

H

H

Yes, it comes from the verifier, through the... So that's why there are these two types of models in RATS. In all cases the nonce comes from the verifier, but in the background-check model it then gets channeled over the DTLS exchange, the DTLS handshake. Right, I'm just...
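The nonce path in the background-check model can be sketched like this: the verifier issues the nonce, the TLS server (the relying party) relays it to the attester inside the handshake, and it relays the resulting evidence back to the verifier for appraisal. Class names and the `secure_boot` claim are hypothetical, and a real verifier would also check the evidence signature.

```python
import secrets


class Verifier:
    def __init__(self):
        self._outstanding = set()  # nonces issued but not yet consumed

    def new_nonce(self) -> bytes:
        nonce = secrets.token_bytes(16)
        self._outstanding.add(nonce)
        return nonce

    def appraise(self, evidence: dict) -> bool:
        nonce = evidence.get("nonce")
        if nonce not in self._outstanding:
            return False  # unknown or replayed nonce: evidence is not fresh
        self._outstanding.discard(nonce)
        return evidence.get("claims", {}).get("secure_boot") is True


class Attester:
    def evidence(self, nonce: bytes) -> dict:
        # Hypothetical claim set; the nonce is echoed to prove freshness.
        return {"nonce": nonce, "claims": {"secure_boot": True}}


def background_check_handshake(verifier: Verifier, attester: Attester) -> bool:
    """The relying party (TLS server) only relays; it never invents the nonce."""
    nonce = verifier.new_nonce()          # 1. verifier creates the nonce
    evidence = attester.evidence(nonce)   # 2. carried to the client in the handshake
    return verifier.appraise(evidence)    # 3. server forwards evidence for appraisal
```

Making each nonce single-use is what lets the verifier reject evidence replayed from an earlier handshake.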

F

H

Y

Thank you. Thank you. Hi, hi. I have a question. First of all, I think this draft is very useful when we use it with a trusted execution environment on the client. But my question is: does this protocol only support TEE and TPM attestation, or does it support other attestation? For example, if there is a measurement result or just a hash value, will this protocol support that?

H

Yeah, so we wanted to support different attestation formats. To begin with, we wanted to focus on the TPM- and TEE-based approaches, and I don't see a reason why others couldn't be included as well. The part that goes into the DTLS exchange doesn't actually care much about what it is, but for the overall functionality it obviously matters how you stick the different pieces together.

V

V

H

Actually, it's both. In this simplified example I just focused on the client, and the code initially focused on the client, but it turns out that you also want the server attesting to the client. For example, if you have some confidential workloads in a cloud-based service, you also want to know that what you're actually uploading, your code or whatever, goes to the hardware you're expecting it to be.

V

Yeah, so thank you. That's useful to understand. My second question is about the persistence of the platform state and how it can be used. What are the ways this could be anonymized so that, for example, two separate processes that run on the same machine don't end up identifying themselves as co-tenants, or, if a user wants to clear their state and come up with a new identity, the platform state doesn't itself leak a linkable identifier to the server over time?

V

H

A very good question, and I think this is on the to-do list, obviously, as it is for you, Monty, for TPM and for some of the other attestation technology. So there are two pieces to your answer. One is: how does the attestation technology avoid providing identifiable information across different interactions? The other one is that there's some additional information in this...

Z

Yeah, so I think you've... A lot of these are to-do things which, you know, I'm going to be involved in. Monty Wiseman, by the way, Beyond Identity. There are two reasons for the attestation key not being the thing that signs. One is the nonce, but the other is that, in order to be of any value, the attestation key has to have the property of only signing things that are inside the TPM.

Z

Otherwise you can hand it a blob and say "here, sign this", and it'll become a signing tool, right? So it cannot sign external data. It can only sign stuff that's inside the TPM, and that's another important reason why you can't use the attestation key.
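A toy model of the restricted attestation key described above. It follows the description given in the discussion: the key refuses to sign caller-supplied blobs and only signs a structure it assembles itself, with the nonce embedded inside that structure. The real TPM 2.0 rules for restricted signing keys are more nuanced and are not reproduced here. `TPM_GENERATED_VALUE` is the magic value TPM 2.0 places at the start of its attestation structures; everything else, including the HMAC standing in for the key's signature and the byte layout, is an assumption of the sketch.

```python
import hashlib
import hmac
import os

# Magic at the start of TPM 2.0 attestation structures: 0xFF 'T' 'C' 'G'.
TPM_GENERATED_VALUE = (0xFF544347).to_bytes(4, "big")


class ToyRestrictedAK:
    """Toy attestation key that only signs data the 'TPM' assembled itself."""

    def __init__(self, pcr_values: dict):
        self._secret = os.urandom(32)  # stand-in for a private key that never leaves the TPM
        self._pcrs = dict(pcr_values)  # internal state the key is allowed to report on

    def quote(self, nonce: bytes) -> tuple:
        # The nonce is the only caller-supplied input, and it is embedded in an
        # internally built structure rather than signed on its own.
        pcr_digest = hashlib.sha256(b"".join(self._pcrs[i] for i in sorted(self._pcrs))).digest()
        body = TPM_GENERATED_VALUE + len(nonce).to_bytes(2, "big") + nonce + pcr_digest
        return body, hmac.new(self._secret, body, hashlib.sha256).digest()

    def sign(self, external_blob: bytes) -> bytes:
        # "Here, sign this" is refused; otherwise the key becomes a general signing tool.
        raise PermissionError("restricted attestation key cannot sign external data")

    def verify(self, body: bytes, sig: bytes, nonce: bytes) -> bool:
        # Stand-in for checking the signature with the key's public half.
        expected = hmac.new(self._secret, body, hashlib.sha256).digest()
        n = len(nonce)
        return (hmac.compare_digest(expected, sig)
                and body[:4] == TPM_GENERATED_VALUE
                and body[4:6] == n.to_bytes(2, "big")
                and body[6:6 + n] == nonce)
```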

The other thing that I think we need to start thinking about is that there are lots of aspects of the platform. Do you only care about the BIOS? The firmware? Do you only care about the OS? Do you care about IMA?

Z

We have to be able to hand it a bunch of stuff, saying "here's the stuff I care about", and then get this back, and I think this is going to be a more complicated thing than we... I think it's a valuable thing to do, don't get me wrong, but I think this is going to be a lot of work, and I'll be happy to be involved.
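One way to picture "here's the stuff I care about" is as a selection of platform measurements to be quoted. The sketch below maps the aspects Monty lists onto TPM PCR indices. The mapping follows common TCG PC Client and Linux conventions (for example, PCR 10 as the default IMA register, and PCRs 8 and 9 as used by GRUB), but it is an assumption, and real deployments must check what their firmware and boot chain actually measure.

```python
# Hypothetical aspect-to-PCR mapping based on common conventions; verify per platform.
ASPECT_TO_PCRS = {
    "firmware": {0, 1, 2, 3, 7},  # BIOS/UEFI code and config, option ROMs, Secure Boot policy
    "bootloader": {4, 5},         # boot manager code and configuration
    "os": {8, 9},                 # e.g. GRUB measurements of kernel and command line
    "ima": {10},                  # Linux IMA default PCR
}


def pcr_selection(aspects) -> list:
    """Translate the relying party's interests into a sorted PCR list."""
    unknown = set(aspects) - set(ASPECT_TO_PCRS)
    if unknown:
        raise ValueError(f"unknown platform aspects: {sorted(unknown)}")
    selected = set()
    for aspect in aspects:
        selected |= ASPECT_TO_PCRS[aspect]
    return sorted(selected)


def filter_quote(pcr_values: dict, aspects) -> dict:
    """Return only the PCR values the relying party asked about."""
    return {i: pcr_values[i] for i in pcr_selection(aspects) if i in pcr_values}
```

Selecting fewer registers also reduces how much platform detail is disclosed, which ties back to the linkability concern raised earlier.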

O

Hi