From YouTube: IETF114-OPENPGP-20220729-1400

Description

OPENPGP meeting session at IETF114

2022/07/29 1400

https://datatracker.ietf.org/meeting/114/proceedings/

B

D

B

B

So this is our agenda. We did a working group last call; it probably won't be the last one. We have a bunch of issues to talk about that we'd like to get through, and if we can reach some kind of resolution in the room, whether here or virtually, for those issues, that'll be great, and then we'll confirm them on the list later.

B

C

B

C

B

A

B

C

So, the reminders here. This one is about whether the padding packet should have all-zero content, random content, or some other scheme. A question about what we tell implementations to do if they receive AEAD packets, try to decrypt them, and get a failure. An issue about whether we include GCM in this draft or not. A question about whether we use HKDF to bind keys to the modes in AEAD.

C

I'm going to look at where the certificate-wide parameters, like algorithm preferences and things, live in an OpenPGP certificate: are they in the direct key signature, as the draft currently says? Then there's whether we continue to disallow the revocation key subpacket for v5 keys, or whether we want to roll that back.

C

C

Do we believe that the text on Argon2 is clear? Do we have sufficient guidance there? Is there anything that can be cleaned up? And how should we handle problematic keys that we've been seeing in the wild? There have been some reports about some fairly popular keys that don't conform to any of the specifications, including RFC 4880. Do we need to update the text to handle that better?

C

Do we need to modify the spec for v5 keys so that we don't have to worry about this kind of failure in the future? And then the last question, I think, is about how, if we can get through these and get a new draft out, we want to scope the process going forward. Do we want to say, okay, when the new draft is out, if it handles all these things the way the working group agrees?

C

C

So that's the queue of issues that we're looking at. I'm going to pop back to the first one. We have some time to discuss each one, but hopefully that gives you a flavor of the types of stuff that we're asking for. If you pull the slides from the datatracker, from the meeting agenda, you get links there to each of the issues, and we'll pull up the issues here as well during discussion.

C

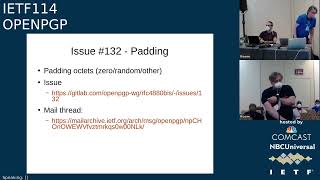

So, heading back to issue 132, about padding. We have this padding packet, and the question for the group is: what should the content of the padding packet be? We've had different people with different perspectives on this on the list, and we've had a couple of different variants as this evolved through git.

C

The status quo is that the padding packet should be full of random octets, and there's a little bit of guidance about where to get the random octets. But there's been discussion on the list about whether we should revert this, and we have a couple of different merge requests that actually offer different ways to handle it.
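As a minimal sketch of the two simplest options under discussion (random octets versus all-zero octets), the following builds a padding packet with a new-format header. The packet type ID of 21 is an assumption here, not something stated in the discussion, and the function names are hypothetical; the length encoding follows the new-format packet length rules from RFC 4880.

```python
import os

# Assumption: type ID 21 for the padding packet; check the draft before relying on it.
PADDING_PACKET_TYPE = 21


def encode_new_format_length(n: int) -> bytes:
    """Encode a body length using OpenPGP new-format length octets."""
    if n < 0 or n >= 2**32:
        raise ValueError("length out of range")
    if n < 192:
        return bytes([n])                      # one-octet length
    if n < 8384:
        n -= 192
        return bytes([(n >> 8) + 192, n & 0xFF])  # two-octet length
    return b"\xff" + n.to_bytes(4, "big")      # five-octet length


def make_padding_packet(size: int, random_fill: bool = True) -> bytes:
    """Build a padding packet whose body is random octets or all zeros."""
    body = os.urandom(size) if random_fill else bytes(size)
    header = bytes([0xC0 | PADDING_PACKET_TYPE])  # new-format packet header octet
    return header + encode_new_format_length(size) + body
```

A receiver that simply skips the body treats both fillings identically, which is why the question of whether to reject "malformed" padding matters more than the filling itself.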

C

D

D

So, a rigid construction that is to do with something generated from the key, but through a different KDF or whatever, seems to be the best solution, because then you don't give chosen plaintext or known plaintext, and you don't give a covert channel.

F

B

C

C

D

F

B

C

Right, so the receiving implementation needs to know what to do, and I guess we have this question of what a receiving implementation would do when receiving one of these things that's supposed to be deterministic but isn't. Should it reject the packet stream? What kind of brittleness are we willing to incorporate in order to defend against this particular covert channel?

B

C

G

Yeah, I also think that there are many, many ways in OpenPGP to put in some side channel, to put in some covert data: just adding a packet that is of an unknown version, or an experimental one. And therefore adding random data, I don't think, is adding that much to the covert channel in OpenPGP.

C

So it seems like one question is: do we want receiving implementations to reject padding packets that are considered to be malformed? Because that actually would govern whether we are making a requirement about what goes in it or not. We can make recommendations about what to put in it, but there is this underlying question: if we make a recommendation and it's not followed, are we going to expect the receiving side to reject it?

B

B

C

Random is currently recommended, and there's a separate discussion: this kind of randomness is probably the least important randomness of all of the kinds of random. There's a section about how you generate randomness in OpenPGP, and it calls this out as a distinct flavor from, like, key generation randomness. Okay.

B

H

Yeah, so I don't actually have an issue with random data in the padding packet. The only issue I have with the current text is that it suggests that the random data protects against higher-level compression disguising, or sorry, negating the padding. In my opinion it doesn't, because the compression will compress the non-random padding anyway and will reveal information about the length it has. So I'm fine with leaving it random, but just removing that text.

F

B

B

B

C

A

F

B

I think I actually understand this one; that's helpful. So this one here: when we're using AEAD algorithms and you decrypt, the issue is how to handle failures. We have some chunking, so these decryption failures don't necessarily apply to an overall message. Currently, I think, the text in a few places says that if you get an AEAD decryption error there's a "SHOULD fail" kind of statement, and I think the suggestion for this issue was to change those to "MUST fail" instead of "SHOULD".
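To illustrate what a "MUST fail" rule means for chunked data, here is a small model of the behaviour, not real AEAD: each chunk carries an HMAC-SHA256 tag over its index and contents as a stand-in for an AEAD authentication tag, and nothing is encrypted. All names are hypothetical. The point is only the control flow: any chunk that fails authentication aborts the whole operation and no plaintext is returned.

```python
import hashlib
import hmac


class DecryptionError(Exception):
    """Raised when any chunk fails authentication."""


def _tag(key: bytes, index: int, chunk: bytes) -> bytes:
    # Stand-in for an AEAD tag: HMAC over the chunk index and the chunk data.
    return hmac.new(key, index.to_bytes(8, "big") + chunk, hashlib.sha256).digest()


def seal_chunks(key: bytes, chunks: list) -> list:
    """Attach a per-chunk tag bound to the chunk's position."""
    return [(c, _tag(key, i, c)) for i, c in enumerate(chunks)]


def open_chunks(key: bytes, sealed: list) -> bytes:
    """Return the joined data only if every chunk authenticates (MUST fail otherwise)."""
    out = []
    for i, (chunk, tag) in enumerate(sealed):
        if not hmac.compare_digest(tag, _tag(key, i, chunk)):
            # Hard failure: discard everything collected so far.
            raise DecryptionError(f"chunk {i} failed authentication")
        out.append(chunk)
    return b"".join(out)
```

A streaming implementation would have to decide what to do about chunks it has already released before hitting a bad one, which this buffered sketch sidesteps.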

B

C

C

F

E

C

B

F

C

B

B

B

B

So, again, there are arguments for and against inclusion of GCM, and I think another salient point is that currently, with the Specification Required IANA rules, if we do not include GCM, somebody else can come along and add it next week, as soon as we're done. It seems like the opinions are kind of all over the place, but let's give people a chance to speak to it: should we include GCM in this specification now or not?

C

D

D

But if I'm going to implement PGP, I'm going to implement the algorithms in the draft, and I'm not going to expect to be able to send mail in a scheme that is not in the draft. So not putting it... sorry, if GCM is not in the RFC, it will have a material impact on adoption, and it will encourage people to use OCB, which is a stronger construct, which we all should be using.

C

So, thanks for the reminder. For clarification: OCB in the draft is flagged as must-implement, EAX is not flagged as must-implement, and GCM is also not mandatory to implement. I hear what you're saying about the presence in the draft, but there is a notable distinction between the modes, and there is an advertising mechanism where you can indicate what AEAD algorithms you support with what other ciphers.

D

I

C

K

K

K

C

B

B

E

Paul, speaking as an individual. I don't think it matters very much where GCM is defined, whether in this RFC or another one. The people that are going to use it are most likely the people that need to be FIPS compliant, and they have no other choice at this point. So those will need to implement these things anyway, whether they're in a separate document or in this RFC. So I don't think it matters where it is, but as the people who want FIPS have no other choice at the moment, they will.

J

Hi, Jonathan Hamill, Canadian Centre for Cyber Security. I'm in favor of including GCM. While we don't have requirements for using FIPS validation, it is our guidance, because it does provide implementation validation. It's not just a policy; it provides a security benefit in that the implementations can actually be tested by an independent lab, and that's why I would like to see it included.

L

Orie Steele from Transmute. I agree with everything he just said. I probably would get support for it if they were to implement it anyway, but I think, for the reason stated regarding FIPS, and the expectation that I think users will have that there will be at least one sort of FIPS algorithm available, it should be included.

M

Ben Kaduk. I feel pretty similarly to Paul, in that this is going to happen, and having the working group do it sort of lets us retain a little bit of control. I don't have a particularly strong preference at all, but I have a slight preference that the working group would specify GCM but in a separate document. If we keep it in this document, I can certainly live with that.

B

B

C

If someone is tampering with the construction that you have, there's some concern that key reuse across different modes could lead to some kinds of problems. I believe there are maybe other arguments for why to use HKDF as well, but the issue raised on the list was that HKDF is an unnecessary additional construction to include here, and maybe it would be simpler if we were to remove it.
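The idea of binding a key to a mode can be sketched with HKDF (RFC 5869) built from the standard library. The `info` layout below, the label string, and the function names are illustrative assumptions, not the draft's actual construction; the algorithm IDs in the usage note are example values. What the sketch shows is that the same session key yields unrelated message keys for different AEAD modes.

```python
import hashlib
import hmac


def hkdf_sha256(ikm: bytes, salt: bytes, info: bytes, length: int) -> bytes:
    """HKDF with SHA-256: extract a PRK from ikm, then expand it to `length` bytes."""
    if length > 255 * 32:
        raise ValueError("requested output too long for HKDF-SHA256")
    # Extract step; an empty salt is replaced by HashLen zero octets.
    prk = hmac.new(salt or bytes(32), ikm, hashlib.sha256).digest()
    okm, block, counter = b"", b"", 1
    while len(okm) < length:
        # Expand step: T(n) = HMAC(PRK, T(n-1) || info || n)
        block = hmac.new(prk, block + info + bytes([counter]), hashlib.sha256).digest()
        okm += block
        counter += 1
    return okm[:length]


def message_key(session_key: bytes, salt: bytes, cipher_id: int, aead_mode_id: int) -> bytes:
    """Derive a message key bound to the cipher and AEAD mode (hypothetical info layout)."""
    info = b"example-openpgp-aead" + bytes([cipher_id, aead_mode_id])
    return hkdf_sha256(session_key, salt, info, len(session_key))
```

For example, `message_key(sk, salt, 7, 2)` and `message_key(sk, salt, 7, 3)` differ, so ciphertext produced under one mode cannot be fed to a decryptor for another mode with the same effective key.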

C

This is, again, issue 136. I'm wondering whether folks would be up for speaking to this again. I know that we have had discussion on the list about it, and I know we've had discussion in the issue tracker about it, but we would like to have that discussion here in the room if we can. So now's a good time to state your opinions and preferences here.

H

And then, if you have a decryption oracle for CFB, which has happened in the world, then you also have a decryption oracle for GCM, which basically means that the security of the GCM mode then gets reduced to the security of CFB, which is bad. So, in general, I think not being able to convert keys and ciphertext between different modes is good. That was the most specific issue that I know of. So if we're keeping GCM, then I think we should definitely keep this as well.

I

C

C

B

A

C

I

C

C

J

B

C

All right, and I'm putting that in the issue tracker as well. Thanks, folks. Issue 137: questions about where certificate-wide parameters live. Certificate-wide parameters meaning things like your algorithm preferences, or things like the expiration lifetime of your primary key, which is by default the expiration of your entire certificate.

C

C

So the current draft says, for v5 keys: don't look for those things in the User ID self-sigs, just look in the direct key signature. This makes it a little bit more predictable, I think, was the rationale coming from the design team, and a little bit simpler to consider what's happening. But it is a change from v4, and so it makes v5 certificates look slightly different. The draft does not actually change v4 certificates.

C

C

C

M

M

You know, a multi-UID key, but they have different implementations on their mail-reading setups for the mailboxes that receive those different UIDs, and somehow they wanted to express divergent preferences based on the different implementations that could process things specific to that UID. As opposed to, you know, I have this key, it's in multiple locations, or maybe it's only in one location, and I can just handle what the key has. Do we think people use that flexibility in that way?

C

I mean, it is explicitly contemplated in RFC 4880. It says that if you look this key up by User ID Alice, then use the preferences that are bound to User ID Alice, but if you look it up by Bob, then use the preferences found on Bob's self-sig. I have not heard anyone say that they are using it like that themselves.
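The two lookup rules being compared can be sketched with a toy data model. The `Certificate` class, its fields, and the fallback for v4 when no User ID matches are hypothetical simplifications for illustration; real certificates carry these preferences in signature subpackets.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Certificate:
    version: int
    # Preferences carried on the direct key signature, if any.
    direct_key_prefs: Optional[List[str]] = None
    # Preferences carried on each User ID self-signature, keyed by User ID.
    user_id_prefs: Dict[str, List[str]] = field(default_factory=dict)


def effective_preferences(cert: Certificate,
                          looked_up_by: Optional[str] = None) -> Optional[List[str]]:
    """Pick the preferences a sender would use for this certificate."""
    if cert.version >= 5:
        # Draft rule for v5: only the direct key signature counts.
        return cert.direct_key_prefs
    # v4 behaviour as described for RFC 4880: prefer the self-sig of the
    # User ID the certificate was looked up by (simplified fallback otherwise).
    if looked_up_by is not None and looked_up_by in cert.user_id_prefs:
        return cert.user_id_prefs[looked_up_by]
    return cert.direct_key_prefs
```

Note that the v4 branch only works if the caller actually knows which User ID it looked the certificate up by, which is the practical gap raised in the discussion.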

C

I don't know that anybody's done a survey of keys to see how many of them have different preferences per User ID, and I also don't know whether anyone's done any testing to see whether implementations that are sending actually respect that. Because how do you know whether you're accessing the key via Alice or Bob? Maybe you've done some binding that says, when I send to Alice, use this fingerprint, or something like that, and then, from the OpenPGP implementation...

C

...it just sees you passing the fingerprint and doesn't know that you're looking for it in terms of Alice. So I don't know that we have that much data about whether it's been used. There certainly has been nobody stepping up to say: if you rip this out, I'm going to be sad, because I have two User IDs that I expect to have divergent parameters, and I want to see those things present. So I don't think we have much data, but we definitely don't.

C

B

C

L

So, I mean, I think the question about this is: what are you thinking about for, you know, v7 or v8? Because it sort of signals a direction you're planning on taking. Like you mentioned, if you have to support v4 and v5, you're going to have to account for this anyway, but in future states where you're supporting v8 and v7, after years of this change, this could be a lot simpler. And I am also in favor of not requiring User IDs. So that's my thoughts.

A

H

H

B

B

B

It's a more complicated question; being on the fence is probably more reasonable on this one. So what we have so far seems to have stabilized at eight hands raised and zero hands not raised, which is an indication, but I guess, again, we'll confirm on the list, and if somebody does turn up with some use case, as Ben kind of indicated, then we might have to revisit. But for now we have an opinion. That's good.

C

Okay, noting that in the issue tracker as well. Okay, so issue 138. In RFC 4880 we have a subpacket that indicates that the key holder is willing to accept revocations from a third party. This is the revocation key subpacket. It contains a fingerprint of the authorized revoker. In the draft...

C

...we say that this subpacket is invalid for a v5 certificate, and we describe an alternate mechanism for doing a sort of delegated revocation, which is just to make a revocation signature and send it encrypted to the person that you're willing to have be able to revoke your key. That mechanism is available, obviously, to anyone with v4 keys as well. It's not a novel mechanism, but it's the first time that it's been described explicitly in the draft.

C

C

...key subpacket for v5 keys. Some of the reasons arguing for the removal are that, given that it's just a fingerprint, you might not even have a copy of the key, and you might see a revocation and not know whether it belongs or not. So there's a logistical issue. And, secondly, the implementation support for the revocation key subpacket may not be as universal and robust, so relying on it seems a little bit dangerous compared to relying on an actual revocation signature.

C

So I think those are the reasons. But the concern here, of course, is that it's still valid for v4, so your implementation that does v4 and v5 will have to do both. And some people say that this is actually a concretely useful thing to have, and they want to have it available for v5, and they're not comfortable with the escrowed keys. So hopefully people can ask clarifying questions if you've got them, or speak in favor of the removal for v5, or speak in opposition to it. This is a good chance.

C

M

So you could get into a scenario where the mere fact that this revocation signature exists causes it to get uploaded prematurely, when it wasn't intended to be. It was just, like, "I have some data, it's associated to this key, let me publish that" type of thing. This seems pretty unlikely to me, and I'm not super concerned about it, but it is an additional risk of this proposed mechanism that, you know, I don't know if we've talked about before. I don't remember.

C

Thanks, I think that's a valid point. The original removal here was accompanied by a proposal for a replacement for the revocation key subpacket that actually included the key material of the delegated revoker, and that was seen as either too complicated, or out of charter, or too distracting, which is why we're in this removal phase. That's why this is it.

C

C

I

H

We can argue again about whether it's in charter, but I think, if we're removing it here, which I think is reasonable, then I also think that adding a replacement is reasonable. But I'm also fine with just removing it without a replacement other than having a revocation certificate, which is already a mechanism that exists and is used, and which we already supported before. So I think that's reasonable also.

M

Ben Kaduk again. Thanks, everybody, for the extra discussion. I think that has sort of solidified my thinking in favor of removing the revocation key subpacket for v5 keys. Just acknowledging the deployed reality that you can't rely on it to work seems like something that's really appropriate for us to do in the bis document, and so I would like to see it that way.

M

I'm okay doing that even if we don't have a replacement mechanism. Specifically, I would also be okay, as Daniel is, with having this mechanism for, like, the actual full revocation key in the key, because the size issue is only an issue if you actually use it, and so, if you don't use it, you're not affected. But I'm also okay just removing this and not providing a replacement right away.

B

B

B

So this is IANA. In 4880 there's a whole bunch of IANA considerations and registries that require IETF consensus for change, and in most cases, not absolutely everywhere, but in most cases, draft 06 basically changes those to Specification Required. For those of you who are not IANA nerds, the impact there is we're moving from a situation where you have to get a document through the IETF process.

B

B

So I think it requires IETF consensus, sorry, because an independent stream RFC doesn't have that. So yeah, there's a couple of exceptions, but the basic movement is towards allowing Specification Required, which is kind of loosening things up. A lot of other groups have done this over the years, but it's worth checking, because, for example, it means that a vanity cipher code point could end up there, and national algorithms can end up there, which may be good or bad.

B

B

N

Yeah, Tero Kivinen. So yes, actually a designated expert is needed, and I think it would be very useful to keep the instructions for the designated expert: what kind of specifications are acceptable. We probably want to have some kind of stable reference, published by, you know, some other standards organization, not just on some webpage somewhere. Usually designated experts are quite free to interpret the instructions they are given, so we don't have to write very specific instructions.

N

B

N

Okay, I think for most of the RFCs where I am, for example, the expert, they actually just say "expert review"; there's actually no Specification Required. But I have always, as an expert, required a specification before I actually go forward. A 3GPP specification, for example, is okay. For some webpage, I will say: okay, I don't know if I would want to allocate numbers based on that.

M

M

An example of a case that I think does this pretty well: I'm actually the sort of ghost editor for the COSE drafts that are in AUTH48 at the moment, because Jim is no longer here to do the AUTH48 himself. And so we have some text in there about how the expert is designated an expert for a reason, because we should trust their expertise and give them leeway, but also guidance that the proposed mechanisms or algorithms need to actually be appropriate for the requirements of the registry that we're trying to use. Like...

M

...if it's supposed to be a signature algorithm, it has to actually provide the signature functionality. And I think there's also some guidance there that it needs to meet the community requirements for security. So, like, if somebody wanted to approve, say, a null-security, null signature algorithm, essentially it doesn't meet the requirements of the community.

M

There's also some pointers in there, like that CFRG is a good resource. And in addition to sort of answering the question that you asked of Tero, I wanted to also say that in general I do support this. I think in other areas, like TLS, and I think IPsec is actually in the process of doing this as well, opening up the registries to make it a lower burden is good for the ecosystem.

M

I guess I also wanted to mention a counterexample, in the sense that the expert is not given very much leeway: I believe that for the QUIC registries the policy is basically a shall-issue policy, and the expert is there to apply some back pressure if somebody is asking for a lot of code points or something like that, or if they're asking for a specific code point that might be problematic. But the guidance to the expert is basically: you've got to approve this.

C

J

L

So I'm an editor and maintainer of a registry that was recently opened up in this way, and I think it is a positive thing that's happening. Like you mentioned, it's sort of a trend in the space. But the guidance to those maintainers is critical, and if you don't give good guidance to them, you open huge political cans of worms for them, and they're likely to resign.

C

C

B

B

C

Yeah, and one of the ways that we can determine quantitatively if it is clear is whether we have functioning interop as well. I mean, Justus, sorry to keep poking at you as the maintainer of the interop test suite, but can you report back on whether Argon2 has been tested? This is something that might be in the lineup.

I

F

B

Okay, so I think the situation is that we seem to have... I think Miebe did say that it's supported, and then Justus is saying that it interoperates, so that seems to say that the text is not horrible. I don't think this is one we would have a poll on. I think this is one where we should go back and check, essentially because Argon2 does have a bunch of parameters, and clearly at least one person on the list has found that confusing or something.

B

B

E

B

B

C

And if a third implementation could become interoperable, or if we could get proof on the test suite that these things are capable of being interoperable, then that would be useful evidence for clarity too. All right, we're getting through this, folks. This is good. So, second to last one; yeah, we're on the second to last. Thanks, everybody, for bearing with us. We've had some reports on the list recently about OpenPGP certificates that are fairly widespread and do not follow either RFC 4880 or the current...

C

C

One thing is, we could adjust the text in the revision to explain a little bit better how this metadata should be prepared, and to describe what an implementation should do if it discovers that the metadata has been ill-prepared. So that's just, like, documentation cleanup. Another thing, which we actually have a merge request for, is to strip out those pieces of metadata that are apparently ambiguous from v5 keys, so that they simply wouldn't be present in those structures, and you couldn't make those mistakes.

C

C

C

B

H

So for the first issue, I have one fairly orthogonal argument for removing it entirely, which is that if you have a crypto API which lets you hash and sign in one operation, then it's nice to be able to use that without needing to get the intermediate hash, which WebCrypto does. So again, it's fairly specific, but I think that's useful. And also, if implementations are not checking it anyway...

C

F

H

H

So again, the only real reason, well, two reasons I see for not checking it: one, you have such an API where you can hash and verify in one step; or, well, there are keys out there in the wild that are broken, so you ignore the bytes for that reason. So, I mean, both of those would, to me, speak for either removing it or saying that you should or must check it and reject the signature.

H

L

Again, I agree with everything he just said; it's becoming a bit of a theme. Yeah, if it's there, it should be checked; if it doesn't match, it should be rejected. I'm in favor of removing it. I'm not sure exactly of the impact on the keys in the wild based on that statement, but sometimes you make things that are broken, and you have to make new ones.

F

I

So my theory is that this is, or was once, an optimization, right? You could skip the heavy lifting of doing the asymmetric operation when you can determine from the hash prefix that the signature didn't check out. But I want to highlight that we have a bit of a heuristic based on that, where we use it to...

I

I

C

C

C

F

B

B

J

C

So the three arguments for keeping it that I've heard are: one, it allows you to reject signatures faster; two, this debugging argument, it gives you some extra hints about where the problem might be in the emitting implementation; and then three, Justus's point about being able to reorder certificates more efficiently without doing the heavy lifting of the crypto piece. I see Justus is out of the queue now.
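The "reject signatures faster" argument can be sketched as follows. This is a simplification under stated assumptions: the digest here is plain SHA-256 over the signed data, whereas a real OpenPGP signature hashes the data together with a signature trailer, and `expensive_verify` is a hypothetical callback standing in for the public-key operation.

```python
import hashlib
import hmac
from typing import Callable


def quick_reject(signed_data: bytes, stored_prefix: bytes) -> bool:
    """Return True if the stored two-octet hash prefix already rules the signature out."""
    digest = hashlib.sha256(signed_data).digest()
    return not hmac.compare_digest(digest[:2], stored_prefix)


def verify(signed_data: bytes, stored_prefix: bytes,
           expensive_verify: Callable[[bytes], bool]) -> bool:
    """Check the cheap prefix first; only then run the public-key verification."""
    if quick_reject(signed_data, stored_prefix):
        return False
    return expensive_verify(hashlib.sha256(signed_data).digest())
```

A matching prefix proves nothing by itself, since two octets collide by chance about once in 65536 tries; the full verification still has to run. It also requires access to the intermediate digest, which is the obstacle mentioned for one-shot hash-and-verify APIs.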

B

B

C

C

A

C

And then what we haven't discussed yet is these malformed MPIs in certificates, like the GitHub certificate, right? So the MPI specification is very clear but not necessarily always followed. Apparently it's not as clear as it could be. It says that it indicates length in bits...

C

...for some reason. It's supposed to indicate the largest bit that is set to one, but in this situation we're looking at certificates which contain MPIs, or make signatures that contain MPIs... is it signatures or certificates that we're only seeing this in? Anybody? Aaron, are you still in the queue from last time? Sorry.

C

C

So

it

seems

like

we

should.

We

ought

to

have

some

guidance

so

that

we

can

point

an

implementation

to

what

they

should

do

about

it.

It

seems

to

me

I

mean

in

terms

of

just

leaving

it

at

nothing,

doesn't

seem

very

useful

to

me.

I

don't

know

what

sort

of

guidance

people

would

want.

One

is

you

could

tell

people

to

reject

a

signature.

Another

one

is,

you

could

tell

people

to

clean

up

the

signature

if

the

mpi

has

this

particular

alternate

form.
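The two options just mentioned (reject, or clean up) can be sketched as below. This assumes the RFC 4880 MPI layout: a two-octet big-endian bit count followed by the big-endian integer, where the count should name the position of the highest set bit. The function names are illustrative, not taken from any implementation.

```python
def encode_mpi(value: int) -> bytes:
    """Encode a non-negative integer as a canonical MPI."""
    if value < 0:
        raise ValueError("MPIs are unsigned")
    bits = value.bit_length()
    return bits.to_bytes(2, "big") + value.to_bytes((bits + 7) // 8, "big")


def parse_mpi(data: bytes) -> tuple[int, bool]:
    """Parse one MPI and report whether its encoding was canonical.

    Returns (value, canonical). `canonical` is False when the declared
    bit count does not match the highest set bit, for example when the
    integer carries leading zero bits or octets.
    """
    if len(data) < 2:
        raise ValueError("missing MPI length")
    declared_bits = int.from_bytes(data[:2], "big")
    nbytes = (declared_bits + 7) // 8
    body = data[2:2 + nbytes]
    if len(body) != nbytes:
        raise ValueError("truncated MPI")
    value = int.from_bytes(body, "big")
    return value, value.bit_length() == declared_bits


def clean_up_mpi(data: bytes) -> bytes:
    """Option two: re-encode a parsed MPI in canonical form."""
    value, _ = parse_mpi(data)
    return encode_mpi(value)
```

A strict verifier would refuse any MPI for which `parse_mpi` reports a non-canonical encoding; a lenient one would pass the input through `clean_up_mpi` first and perhaps log a warning.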

L

B

B

B

M

I

think

daniel

beat

me

to

actually

getting

in

the

queue,

but

I

hit

unmute.

So

my

understanding

here

is

that,

for

these

problematic

signatures

you

can

modify

the

ciphertext,

or

well,

modify

the

metadata

really

so

as

to

make

it

a

valid

signature,

and

I

believe

that

that

would

not

really

be

something

that

can

constitute

an

attack

if

you

can

just

fix

it

yourself.

C

F

M

It's

not

like

a

cryptographic

failure

on

the

signer's

part,

it's

just

an

implementation

failure

to

respect

the

spec

properly,

and

because

the

cryptography

still

checks

out.

I

think

the

practical

approach,

in

favor

of

better

interoperability,

would

be

to

just

fix

it

and

maybe

complain

loudly

if

you

have

the

ability

to

do

that.

H

I

think

if

we

want

to

say

that

implementations

should

or

must

reject

it,

we

could

do

that

for

v5

signatures

and

keys,

but

for

v4

I

also

don't

think

we

can.

For

full

disclosure,

very

old

versions

of

openpgp.js

also

used

to

produce

malformed

mpis

in

some

cases,

particularly

if

there

were

leading

zero

bytes.

So

it

was

a

slightly

different

issue

than

this

one,

but

still

I

don't

think

we

can

be

super

strict

for

mpis

in

v4

signatures,

but

for

v5

we

could,

if

we

want

to.

I

So

my

concern

is

that

if

you

have

a

system

that

is

composed

out

of

multiple

components

and

they

use

different

implementations

and

they

behave

differently,

you

may

be

able

to

confuse

the

system

as

a

whole,

where

one

of

the

implementations

would

say

it's

a

valid

signature

and

the

other

one

may

think

it's

an

invalid

signature

and

that

may

be

able

to

create

problems.

N

N

That

the

old

signatures

are

there,

that's

true,

but

actually,

as

I

said,

I

don't

know,

if

there's

actually

any

way

of

you

know

like

somebody

was

saying

it

would

be

really

nice

to

get

a

warning

and

that's

actually

one

of

the

things

that's

been

saying

that

we

could

actually

fix

it

in

in

before

we

actually

keep

it.

So

we

could

actually

have

an

implementation,

actually

rejecting

the

signature

and

then

checking

that

if

it's

actually

oh,

it's

a

signature.

That

is,

if

we

fix

it,

it

actually

works.

N

That

would

actually

allow

you

know

user

interface

or

or

programs.

To

actually

way

of

you

know

having

a

separate

method

of

or

or

the

other

thing

is

to

just

reject

them

all

for

version

five,

but

actually

the

most

important

thing,

of

course,

would

be

to

get

you

know

the

people

who

are

doing

this

to

fix

them.

So,

unless

you

start

rejecting

them,

I

don't

think

github

is

going

to

be.

Is

this

still

generating

those

signatures?

N

So

I

I

think,

because

they

don't

see

any

reason

to

change

until

somebody

actually

starts

breaking

things,

and

I

think

it

would

be

better,

you

know,

not

to

have

these

kinds

of

corner

cases

because

they

usually

have,

as

as

was

pointed

out,

if

you

have

an

implementation

that

actually

checks

this

another

one

that

doesn't

and

it

might

be

happening

very

low

level

in

in

the

you

know,

you

know

crypto

library,

your

crypto

library

might

be

saying,

oh

no,

no,

N

This

is

not

an

mpi,

probably

nbn's,

because

it

doesn't

have

a

first

bit.

You

know

one

and

and

then

you

might

not

be

able

to

have

to

reject

it

in

that

that

might

cause

you

know.

You

know

this

kind

of

issues

that

some

you

know.

One

part

that

was

supposed

to

do

something

based

on

this

and

does

it

because

it's

a

valid

signature,

the

other

one

doesn't

because

it's

an

invalid

signature.

L

L

Some

implementations

will

fail

to

verify

the

signature,

some

won't

and

in

practice,

the

way

I've

defended

our

source

from

this

is

to

manually

lower

the

signature

to

low

res

before

emitting

anything

you

know

outside

of

our

library,

so

for

the

for

the

libraries

that

are

handling

this,

they

could

decide

every

time

I

see

this

thing

that's

a

problem,

I'm

gonna

fix

it

for

myself,

but

everyone

has

to

decide

to

do

that

and

it's

like

a

mess

in

the

code.

F

I

I

C

F

B

B

C

B

B

B

You

know,

so

if

somebody

finds

some

new

facts

or

comes

with

some

new

information,

then

we'd

have

to

look

at

things,

but

should

we

raise

the

bar

to

try

and

get

ourselves

done

by

essentially

encouraging

people

to

only

look

at

the

diffs

beyond

you

know,

from

seven

to

eight

or

whatever

draft

actually

resolves

these

issues.

Paul.

E

E

J

B

With

it,

but

it's

ok,

it's

a

question

of

you

know.

If

the

working

group-

and

I

think

so

http

did

this

a

few

times-

they

basically

agreed

that

they

would

they

wanted

to

get

stuff

out.

So

they

were,

they

were

asking

people

to

look

at

the

diffs.

If

people

look

at

other

things,

you've

got

to

deal

with

it,

but

I

think

we

can

do

it

if

people

want

to

do

it.

C

M

Sorry,

you,

you

called

so

passionately

for

people

to

be

in

the

queue.

Ben

Kaduk.

So

yeah.

I

think

it's

probably

worthwhile

doing

this

and

to

sort

of

Paul's

question,

you

could

certainly

frame

this

as

saying

we

did

a

working

group

last

call

on

this

previous

version,

and

we

believe

you,

after

whatever

evidence

that

it

has

consensus,

and

so

bear

in

mind,

when

reviewing,

that

we

have

a

presumption

that

this

other

stuff

already

has

consensus

and

so

focus

your

review

on.

B

F

C

So

if

folks

have

other

things

that

they

want

to

raise

about

the

crypto

refresh

beyond

the

issues

that

we've

just

gone

through,

now

is

a

good

time

to

raise

them.

If

you

don't,

then

we

want

to

give

the

remainder

of

the

time

to

some

discussion

about

these

potential

issues

that

would

come

up

after

a

recharter.

Does

that

sound

right?

B

H

Yes,

thank

you,

Stephen,

and

yeah.

So,

just

to

reiterate

this,

this

presentation

is

explicitly

out

of

scope

for

the

crypto

refresh;

it's

more

so

meant

to

provide

some

ideas

and

make

some

motivation

to

get

this

crypto

refresh

out

the

door

and

get

to

working

on

new

stuff.

Of

course,

that

only

works

if

there's

actually

interest

in

these

ideas

otherwise.

H

So

this

leads

us

to

the

conclusion

that

there

is

sort

of

a

missing

middle

or

a

missing

type

of

keys.

If

you

want

to

encrypt

stuff

with

a

symmetric

key,

if

you

don't

need

asymmetric

cryptography,

so

it

would

be

really

nice

if

you

could

store

a

symmetric

key

in

a

long-term

key

ring

to

use

to

to

symmetrically

encrypt

stuff,

but

maybe

also

to

symmetrically

sign

stuff

using

an

hmac

or

a

cmac

if

it's

just

for

yourself

or

local

storage.
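The idea of a long-term symmetric key that is stored in a key ring and used to "sign" with an HMAC could look roughly like this. It is only a sketch of the concept: the in-memory `keyring` dictionary, the way `key_id` is derived, and all function names are assumptions made for illustration and say nothing about the packet format being proposed.

```python
import hashlib
import hmac

# Hypothetical in-memory key ring: key identifier -> secret key bytes.
keyring: dict[str, bytes] = {}


def add_key(key: bytes) -> str:
    """Store a symmetric key and return an identifier for it.

    The identifier here is a truncated SHA-256 of the key, chosen only
    so that the example has something fingerprint-like to refer to.
    """
    key_id = hashlib.sha256(b"key-id:" + key).hexdigest()[:16]
    keyring[key_id] = key
    return key_id


def sign(key_id: str, message: bytes) -> bytes:
    """Compute an HMAC-SHA256 tag over the message with the stored key."""
    return hmac.new(keyring[key_id], message, hashlib.sha256).digest()


def verify(key_id: str, message: bytes, tag: bytes) -> bool:
    """Recompute the tag and compare it in constant time."""
    return hmac.compare_digest(sign(key_id, message), tag)
```

Because the same secret both creates and checks the tag, this only makes sense when signer and verifier are the same party, which matches the "just for yourself or local storage" use case described above.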

H

Next

slide,

please.

So

yeah,

the

use

cases

of

symmetric

encryption

that

we

have

in

mind

for

this

are,

yeah.

So

if

you

have

some

symmetric

file

encryption

or

you

have

some

file

storage-

that

you

want

to

store

files

symmetrically

encrypted

for

backup

or

long

term

storage

or

on

a

usb

stick

or

whatever,

or

if

you

want

to

symmetrically

re-encrypt

the

messages,

the

emails

that

you

got,

for

example

for

long-term

archival,

you

can

decrypt

them

asymmetrically

as

they

come

in

and

encrypt

them

symmetrically

and

store

them

like

that.

H

H

H

Our

idea

is

to

define

two

new

public

key

algorithm

ids,

namely

aead

and

hmac.

So

the

reason

we

are

proposing

that

is

because

the

the

semantics

of

public

key

cryptography

in

openpgp

today

match

much

more

closely

what

we

want

to

achieve

in

the

sense

that

you

encrypt

a

message

with

some

key

that

you

refer

to

by

a

fingerprint,

for

example,

or

you

sign

a

message

with

a

key,

whereas

for

symmetric

cryptography

in

openpgp

today,

you

encrypt

a

message

with

a

password,

and

you

derive

a

key

from

that.

H

There's

no

way

to

refer

to

a

long

term

key,

let's

say.

So

in

that

sense,

it's

much

more

convenient

to

be

able

to

stick

a

public

key

algorithm

id

in

a

key

packet,

a

signature

packet

and

a

public

key

encrypted

session

key

packet,

despite,

of

course,

the

name

not

matching

what

it

actually

is.

So

there's

one

idea.

H

So

we

could

rename

those

perhaps

to

persistent

key

encrypted

session

key

packet

or

and

derived

key

encrypted

session

packet

or

personal

key

and