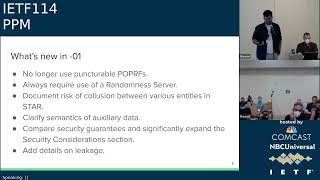

From YouTube: IETF114 PPM 20220728 1400


A

A

A

B

D

Yes, I mean, I'm in the queue to bash the agenda. There was no opportunity to do so. I would like to request that this be moved to the end of the session, so we give an appropriate amount of time to all the DAP-related issues that we have. We need to make sure we get through those prior to discussing non-working-group items at this time.

E

E

F

C

C

The central idea is that we would like to have k-anonymity for clients reporting potentially sensitive measurements to an untrusted server. A pretty important goal that we have is that it should be cheap, because Brave is a small organization, and there are other similar small organizations who would like to do privacy-preserving measurement but don't have infinite, you know, AWS money to spend on that. So low computational overhead and network usage for clients and servers is pretty important.

C

C

The central idea here, I won't go into too much detail; I think Alex's presentation at the last IETF did a good job. But essentially we're going to use Shamir's secret sharing. You get a symmetric key k through some deterministic operation on the initial measurement, and then you encrypt the measurement using that key. So the idea now is that you can only get x when you have k.

C

So you generate a secret share of k, and you send the encrypted measurement and the secret share to the server, and the server gets a bunch of these. But it doesn't know what m is until it can perform the recovery operation on the secret shares of k, and it can only do that once there are n of those. So in this way you get k-anonymity.

C

Where n is the minimum number of shares you need, and then you can use k, once you recover it, to decrypt them and get the original measurement back. And it's really important to have an anonymizing proxy here. I'll talk a little bit about this later on, but essentially, once the measurement is decrypted, you want to be sure that you still don't have access to the IP address of the people

C

Submitting this. Also, you have to use a randomness server. You could also do this locally, but we decided that it's just a lot better for privacy reasons if you use this. So the idea is that the client sends a blinded input value to the randomness server and it gets a salt back, and if you have the same input value, then all of those clients would get the same

C

Salt back. This is done to mitigate the server brute-forcing all possible input values, because if the initial measurement doesn't have enough entropy, then the server can very easily brute-force all possible values and then just see which values match up with the encrypted value. We also use an OPRF to make sure that the randomness server does not learn the input value as well.
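The threshold mechanism described in this part of the talk can be illustrated with a small sketch. This is not the STAR draft's construction: the field modulus, the hash-based coefficient derivation, and the names `poly_coeffs`, `make_share`, and `recover_key` are all assumptions made for illustration, and the encryption step and the OPRF exchange with the randomness server are omitted (the `salt` argument simply stands in for the server's output). The point it shows is that clients holding the same measurement land on the same polynomial, so the key is recoverable only once a threshold number of shares has arrived.

```python
import hashlib
import secrets

# Prime field modulus (2**127 - 1 is a Mersenne prime); an assumption for this sketch.
P = 2**127 - 1


def poly_coeffs(measurement: bytes, salt: bytes, threshold: int) -> list:
    """Derive `threshold` polynomial coefficients deterministically from the
    salted measurement. Coefficient 0 plays the role of the key k."""
    return [
        int.from_bytes(
            hashlib.sha256(salt + measurement + i.to_bytes(4, "big")).digest(), "big"
        ) % P
        for i in range(threshold)
    ]


def make_share(measurement: bytes, salt: bytes, threshold: int) -> tuple:
    """Evaluate the measurement's polynomial at a fresh random nonzero point."""
    coeffs = poly_coeffs(measurement, salt, threshold)
    x = secrets.randbelow(P - 1) + 1
    y = 0
    for c in reversed(coeffs):  # Horner's rule
        y = (y * x + c) % P
    return x, y


def recover_key(shares: list) -> int:
    """Lagrange-interpolate the polynomial at 0 from `threshold` distinct shares."""
    key = 0
    for j, (xj, yj) in enumerate(shares):
        num, den = 1, 1
        for m, (xm, _) in enumerate(shares):
            if m != j:
                num = num * (-xm) % P
                den = den * (xj - xm) % P
        key = (key + yj * num * pow(den, -1, P)) % P
    return key
```

With a threshold of 3, any three shares of the same salted measurement recover coefficient 0. A share that lies on a different polynomial makes the interpolation return an unrelated value, which is the bogus-share problem raised later in the discussion.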

C

C

G

C

Yeah, and we made a bunch of changes given the feedback that we got at the last IETF, on the mailing list, and through conversations. So we don't use puncturable OPRFs anymore, and we just simply rotate the keys. Like I mentioned, with the randomness server, the client only sends the encrypted value to the aggregation server in the epoch subsequent to the randomness phase.

C

We also require the use of a randomness server, just to, I guess, keep things simple, so you don't have to then make that decision yourself: do I need one, do I not need one? Apart from that, there's a bunch of documentation of the risk of collusion between various entities, and a lot more detail on leakage in the security considerations section, and stuff like that. And yeah, we think it's ready for adoption.

C

H

H

H

Well, what I want is to make it impossible for you to reconstruct a given value. So, for instance, you're collecting the top URLs, right, and I want to make it impossible for you to collect google.com among the top URLs. So what I do is I generate a thing that has the encrypted value for google.com but has a secret share that corresponds to a random point.

H

No, no, no. Because, if I understand it, you sort the inputs on the encrypted value, right? Good. So you bucket them up on encrypted value. You take the corresponding secret shares, right? Now you can reconstruct the key, and now you get a key and you try to decrypt them, and none of the values will decrypt.

H

And so, because if you reconstruct the wrong key, you've reconstructed a random key, right? So you've got to find some way to reject the bogus key, the bogus inputs. And if I remember correctly, there was some technique that Apple used in their system for this, but I don't know how to do it with this design. So I think this actually doesn't have an answer for this. I think it's not just, I mean, Sybil.

H

I mean, it's one thing to say we don't handle grand Sybil attacks, but the situation is that if I can get any small fraction of the keys that you can't decrypt, that's actually very serious. So I think you need a way to reject bad input shares.

H

I don't know how to fix it. As I said, my recollection would be that in the Boneh et al., you know, Myers, Apple CSAM thing, they had a Shamir secret sharing scheme, and they had some way to reject bogus values. So maybe you can steal that. Maybe you can't.

B

I

Hey, can you hear me? Okay. Yes, Alex. Yeah, I'm Alex, I'm a co-author of the STAR draft. So in answer to Eric's question, what we do in Brave is we take the minimum threshold number of shares and try to reconstruct, and then, if we can't reconstruct, we keep taking random sets of shares. So that's like one thing that we don't.

I

H

Say your threshold is 500 shares, right, and one percent of the shares are bogus. What is the chance that none of them is bogus? 0.99 to the 500 is an incredibly small number, so it doesn't work. It can't withstand an attack with any kind of power.
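The arithmetic behind that objection can be checked directly. The sketch below assumes each sampled share is bogus independently with the stated probability, which is a simplification of drawing a random subset from a finite pool; the function name `p_all_honest` is hypothetical.

```python
def p_all_honest(threshold: int, bogus_fraction: float) -> float:
    """Probability that a random set of `threshold` shares contains no bogus
    share, assuming each share is bogus independently with `bogus_fraction`."""
    return (1.0 - bogus_fraction) ** threshold


# Threshold of 500 shares with 1% bogus: roughly a 0.66% chance per attempt.
p = p_all_honest(500, 0.01)
print(f"{p:.4f}")
```

Under this assumption the retry strategy would need on the order of 150 random subsets, on average, before one reconstructs correctly, and the odds drop quickly as the bogus fraction grows.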

I

B

B

J

K

Here we go. Okay, let's see, and I've got buttons. All right, great, let's get started. So in this particular deck we're going to cover the current status of implementations of DAP. Then I want to talk about a small number of the notable changes in the most recent draft, 01, of DAP, and then we're going to use that to segue into the discussion of one or two open problems that the chairs have encountered and that we're interested in discussing in the working group. Before I move on.

K

K

Sounds good. All right, so, implementation status. As of right now we have two implementations of draft-ietf-ppm-dap-01 that are up on GitHub. First there's Daphne, which implements a DAP leader, helper, and collector and is written in pure Rust. Then Janus is another implementation of DAP server components, also written in pure Rust. So Daphne and Janus are independent implementations of DAP, though they do share some common dependencies, which we'll get to in a minute. And finally, there's divviup-ts, which is a client.

K

So, as I mentioned before, Daphne and Janus are independent implementations of DAP, but they both use libprio-rs to implement VDAFs. So it certainly would be nice to see more implementations, especially ones in some language besides Rust, if anyone out there is interested. So yeah, all this stuff is up on GitHub. Please, you know, go check it out, maybe deploy them.

K

K

K

So in DAP, a report gets uniquely identified by its nonce, and a nonce consists of the time at which the measurement was taken and then a random component that's intended to make the nonces unique. Nonces have to be unique because they are used for anti-replay by the aggregators, and they are timestamped so that the aggregators can decide whether a given report falls into a particular batch interval. So up until draft 01,

K

The nonce was the number of seconds since the Unix epoch and then eight random bytes. As it turns out, that timestamp is high enough resolution to leak some meaningful information about the client. So, as of the most recent draft, we now expect clients to round the timestamp down to the minimum batch duration, which is one of the long-lived task parameters, and we widened the random component to 16 bytes.
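The nonce change described here can be sketched as follows. The byte layout (an 8-byte big-endian timestamp followed by the random bytes) and the function name `make_nonce` are assumptions for illustration; the draft's own wire encoding is authoritative.

```python
import os
import struct


def make_nonce(measurement_time: int, min_batch_duration: int, rand: bytes = None) -> bytes:
    """Build a report nonce: the measurement time in seconds, rounded down to a
    multiple of the task's minimum batch duration, plus 16 random bytes.
    The layout used here is an assumption of this sketch."""
    rounded = measurement_time - (measurement_time % min_batch_duration)
    if rand is None:
        rand = os.urandom(16)
    if len(rand) != 16:
        raise ValueError("random component must be 16 bytes")
    return struct.pack(">Q", rounded) + rand
```

Rounding means every report taken within the same minimum batch duration carries the same timestamp, so the timestamp still places the report in a batch interval without revealing the exact second at which the client measured.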

K

K

Okay, turning back to the aggregation sub-protocol, let's just unpack a bit more what it actually does. At some point, aggregators are going to be holding some large number of input shares that have been uploaded by clients, and they want to aggregate them together. But in the taxonomy of DAP you can't actually aggregate input shares; you first have to obtain an output share from each input share, and that process of going from input share to output share is what VDAF refers to as preparation.

K

Hence we end up with the somewhat unhelpfully generic term of preparation. But one thing that we do expect is going to hold across all or most VDAFs is that preparation is embarrassingly parallel, since verifying the proof of one input's validity should generally be completely independent from another. So we want to enable the leader to schedule the preparation of lots and lots of inputs in parallel for efficiency, and this is why we introduced this notion of the aggregation job into the protocol.

K

Okay. So, as we discussed, at some point the leader is going to want to schedule the preparation of a big set of shares. In the Prio family of VDAFs, preparation can begin as soon as the aggregators receive inputs from the clients, because there isn't an aggregation parameter in those VDAFs. So maybe in that setting, every time the leader receives a thousand inputs, it'll dispatch an aggregation job, right? But in something like Poplar1

K

The aggregate results, sorry, aggregate shares, as quickly as possible. So either way, what the leader will do is generate random aggregation job IDs and assign a set of reports to each job. That mapping of one job ID to many shares gets transmitted to the helper in the aggregate initialize request illustrated here on the slide, and that job ID is going to be referenced in subsequent messages.
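The job assignment described above can be sketched as a simple partition of pending reports. The function name `assign_jobs`, the 32-byte job ID length, and the fixed job size are assumptions of this sketch and are not taken from the draft.

```python
import secrets


def assign_jobs(report_ids: list, job_size: int) -> dict:
    """Partition pending reports into aggregation jobs, each keyed by a fresh
    random job ID. The 32-byte ID length is an assumption of this sketch."""
    jobs = {}
    for start in range(0, len(report_ids), job_size):
        job_id = secrets.token_bytes(32)
        jobs[job_id] = report_ids[start:start + job_size]
    return jobs


# Example: 2500 pending reports, dispatched in jobs of up to 1000 reports.
jobs = assign_jobs(list(range(2500)), 1000)
```

Because each job's reports are prepared independently, a leader could hand the resulting jobs to separate workers and run them in parallel, which is the efficiency argument made for aggregation jobs.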

K

So again, you can check out the linked issue and pull request on the slide if you want to learn more about the context behind this change. All right, moving on. Now let's discuss how the aggregators authenticate to each other in this aggregate sub-protocol that we've just been discussing. In DAP, aggregation is coordinated by the leader aggregator, though actually, in the aggregate sub-protocol, the leader is acting as a client to the helper's HTTP server. Now, this channel between the two aggregators has to be mutually authenticated.

K

Now, we did this because it enables the deployments that we have in mind right now, but this isn't really a workable solution for the protocol. First off, long-term shared secrets between the participants are not desirable; we should do our best to avoid that, of course. And more to the point, because it's such a specific prescription, it makes it impossible for deployments to use any number of existing, well-established authn or authz mechanisms widely used in HTTP APIs.

K

So this, in particular, is something that we definitely want to change in a future draft. But at this point we should take a step back from the specific interaction between the two aggregators and look more broadly at how protocol participants are authenticating to each other when they communicate in DAP.

K

K

So in this case the design requirements are confidentiality, which we achieve by having the client encrypt each input share to an HPKE public key advertised by the respective aggregator. Of course, this is necessary because if a network observer could see the input shares in the clear, then that would defeat all the privacy goals of the protocol.

K

K

The working group today... Okay, where was I? Right, confidentiality. So we protect the input shares in flight by HPKE-encrypting them to a public key advertised by either aggregator. Okay. Then, of course, we need server authentication in this setting, because we want to make sure that the input shares are being transmitted to the authentic aggregators participating in a DAP deployment.

K

K

K

We also, in my view, don't want to specify how a deployment would do client authentication if it chose to, which I'm going to come back to. All right. The next row is the communication between the leader and the helper during the aggregate sub-protocol, which we just covered, so I don't want to spend a ton of time on it again. But yeah, confidentiality is generally achieved because they are communicating over TLS, and mutual authentication through this current pre-negotiated bearer token scheme and the server's TLS certificate.

K

So these aren't exactly the same because, while the collector makes direct HTTP requests to the leader, it never actually talks directly to the helper. The communication between collector and helper is tunneled through the leader, which coordinates the collect and aggregate protocols. So we achieve confidentiality in both cases.

K

Okay. So clearly we have a bunch of cases where what we do say about authentication needs to change, and others where we say nothing at all and maybe we should. Oh, excuse me, before I move on, I forgot one interesting piece of red text in the slide, which is in the collector-helper case. As it turns out, both of those actors already advertise an HPKE configuration and public key, so maybe the problem of mutual authentication there could be solved by using HPKE's mutual authentication mode.

K

Okay, where was I? Right. So clearly we have some inconsistent guidance and some missing recommendations about authentication in this protocol. So the question here, broadly, is: what should DAP say about request or response authentication? To advance a strawman claim and stimulate some discussion, I'm going to claim that, as much as possible, we should say nothing and stick to enumerating requirements for the security of the channels rather than solutions.

K

So, in my view, we should be aiming for composability with existing authentication schemes widely deployed with HTTP APIs, with an eye towards integrating nicely with the schemes already deployed by vendors who might want to operate DAP servers. So stuff like AWS request signatures, OAuth 2, or even TLS client certs, which a lot of people do use for authentication.

K

Now, of course, the exception there is the cases that I discussed where DAP mandates the use of HPKE. The distinction to keep in mind there is that we mandate that in those cases where we're tunneling a secure channel through some protocol participant, and so in those cases we can't rely on a security property of an underlying transport.

K

So, continuing on the topic of good use of HTTP, we're thinking about rewriting the HTTP API mandated by DAP to be a little more resource-oriented, if not full-on RESTful. So, for instance, instead of the upload endpoint being just upload, with all the meaningful parameters being encoded into the body of the request.

K

You know, into the URI that you're uploading to. We're also interested in looking at the relevant best current practices documents and aligning with their guidance where it makes sense, for instance to make use of better HTTP semantics, and maybe doing something like extending the HPKE config endpoint into something like ACME's API directory. And, as we were just discussing, we're interested in revisiting what the requirements are for authentication and what, if any, prescriptions we make. Okay, so that's it for me.

H

H

So, knowing what I know now, which is not much, well, I know how this document works, but I mean more generally, this seems like a good approach. But I think perhaps what we should do is reach out to the HTTP API working group, because they are specifying best practices for this, and I think they can, like... This is just like a straight-up

H

HTTP web app, right, or HTTP web service, and so I think we should take their guidance on how we do this, which I think would quite likely be this. But I think we should get their guidance on that rather than reinventing the wheel. I don't know who would be responsible for that. I suppose we could do it privately, or not, but that would be my recommendation for this. As I said, I think these are, like, I think you're

H

I think that your intuition here, that people are going to have their own mechanisms and we don't want to interfere with those, is entirely correct. I think that's also true for the next thing you said, about the ACME directory and stuff like that. Those are also questions which they might help us with. So that's my recommendation.

K

Yeah, I agree, Eric. Thank you. I think we should also talk to some of the prominent operators of ACME, and I know a couple of them, to see what their experience has been with this. You know, ACME specifically mandates the use of JWTs and the directory, and I know the people who run Let's Encrypt have opinions about those things. I'd love to hear from other ACME operators what their experience of that has been.

E

I kind of see the motivation, though, because basically this collect request from the collector goes to the helper via the leader, and if the leader is attacking privacy, then this is a problem. You should also stipulate, though, that the collector is also part of the threat model, so privacy should hold as long as one aggregator is honest. That said, I think authentication would be useful.

E

K

Yes, I think that's a good point, Chris. And on the topic of direct communication with either aggregator, that's also something we've been batting around on the upload side of the protocol. Right, like at the moment, the way uploads work is that the client sends one message... Sorry, Chris, we can hear your typing. Would you mind muting? Thank you.

K

At the moment, clients will create one message that contains both input shares and transmit that to the leader, and the leader is responsible for relaying the helper's share to the helper. So this has some problems. The main problem with that, which I think we discussed at the last IETF, is that it means a leader may incur significant costs for network egress. But it also forces us to deal with this tunneled channel through the leader.

F

M

K

Yeah, I agree. This is why I highlighted the problem of the collect request being authenticated all the way through to the helper. Otherwise, we do have a threat model in the back of the document that tries to enumerate what exactly the leader can do that the helper can't. I think it's out of date, though, and I think it certainly needs some attention.

H

Then there's a protocol design failure, because if you take the collector and leader and you split them apart, the collector talks directly to the helper, and then the collector colludes with the leader, you're back in the soup. So the protocol must resist that; it must be designed to resist that in either case. So, while I'm open to having the collector talk directly to the helpers, I do not believe that addresses the problem.

H

H

So I think if we do decide to do that, we have to have a mechanism that also allows you to have an ingest server, because otherwise there are all kinds of problems where, like, we send only one share and not the other, and you have to deal with that. So I think there's less of an issue for the helper, though it is a lot of burden on the helper to, you know, make it happen.

L

So I also want to echo some of DKG's concerns. But separately, I noticed in the draft that you specifically say that only one collector, or sorry, only one helper is supported. Is that still the case? Because I did not pick up on any real blockers for that, but I'm curious what's preventing that. Thanks.

K

All right, so my understanding is that there is nothing in the underlying crypto constructions, which is to say the VDAFs, that precludes additional helpers. Although Chris Patton is about to... because I think that's not true of Poplar. But in Prio you can have arbitrarily many helpers. DAP kind of makes the soft assumption that there's exactly one helper, though I think we're a little inconsistent throughout the draft about whether there's exactly one helper or not. But yeah, in my view, there's a trade-off between

K

E

If anyone's ever played that video game... Okay, so yeah, just to echo Tim's point: right now, we don't support more than one helper. However, we intended to design the protocol in a way that we could go in that direction if that's what people wanted to do. One leader, one helper is kind of the simplest thing. It adds protocol complexity to add additional helpers, but I don't think that complexity is impossible to address.

E

So, if folks want to add support for more aggregators, I think we can do it. I wanted to go back to DKG's point. Collector-to-helper authentication doesn't have anything to do with the extra power that the leader has. The extra power that the leader has has to do with Sybil attacks, because the leader gets to pick the set of reports that are aggregated.

E

We don't have a generic defense for Sybil attacks. That would probably be pretty hard, but it's definitely something we should find solutions for. I wanted to point out that the helper can also do Sybil attacks; anyone who can upload reports to the leader can mount a Sybil attack. They have to collude with the collector because, as Tim pointed out, the aggregate shares are encrypted under the collector's public key. So as long as one server's honest, they don't.

E

Actually, the attacker doesn't see the result, but we want to be able to deal with the case where the collector is malicious. So because reports are unauthenticated, anyone can do a Sybil attack, and I think the leader's relative strength is kind of minor. I would like to see defenses be more generic and not just apply to that particular situation.

A

J

B

N

J

D

On the topic of one helper versus multiple helpers, and I'm sorry to keep bouncing back and forth between different things: I wouldn't be surprised if we find out that Poplar in practice is just too expensive to run, given how many rounds it requires for every single bit of input that you actually want to aggregate.

D

So I don't know what that says about the fate of DAP as a, you know, generic thing for all VDAFs versus DAP as a Prio-specific, or whatever-specific, protocol. But I could see a future wherein DAP kind of gets less general, more specific to Prio, and maybe a heavy-hitters-like solution gets its own thing. Maybe that's STAR, maybe that's something else. But if that were the case, then accommodating multiple helpers would be rather straightforward in DAP for Prio.

D

But if it's super general, and we have Poplar with its constraint that it only works with one particular helper, it's not clear what the result in terms of complexity would be on the protocol. But I just wanted to note that we're not set in stone here; we might see that things get less general, or not, as we go forward.

L

So on the topic of the leader's power and control over the system, can you just clarify, just so I know I'm understanding it: the leader has the ability to reject shares, and therefore they are never processed by the helper, and therefore a colluding leader and collector can basically single out individual uploads. Is that correct?

K

Yeah, the leader gets to select which shares get prepared; as we saw earlier, it gets to assign reports to job IDs. However, the helper also can do this on a per-share basis, because the response the helper delivers to a leader's aggregation request is going to include essentially a list of per-input preparation messages, so the helper could simply choose to fail to prepare any individual input, right? So I'm not saying that that's good.

K

The other half-baked thought I have in response to that question is that I think ingestion servers, anonymizing ingestion servers, are probably a helpful mitigation here, in that one of the issues is that, in a deployment where clients are uploading directly to a leader, the leader gets to see all sorts of interesting metadata about a report, you know, client IP and stuff like that, on which basis it could choose to drop reports.

K

So we anticipate that a lot of deployments are going to use some kind of intervening ingestion server. Hopefully you could just stick an OHTTP server in front of the leader such that, I don't want to say it would be impossible, but certainly it ought to be harder for the leader to selectively drop reports.

E

A

E

E

So, as Tim mentioned, DAP centers around a particular class of multi-party computation schemes that we call VDAFs. These all have basically the same shape. We have a large number of clients, each with a measurement. Clients split their measurements into what we call input shares and upload these to a small number of aggregation servers.

E

The aggregation servers interact with one another in order to verify and aggregate the reports. At the end of this process, each computes a share of the aggregate result. Then, later on, a collector comes along and pulls aggregate shares from the aggregators and computes the final result. So yeah, VDAFs are being worked on in the CFRG.
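The shape described here can be sketched for the simplest case, a sum computed by two aggregators over additive shares. This is only an illustration: it omits the verification step that gives VDAFs their name, and the modulus and the function names `split`, `aggregate`, and `unshard` are assumptions of this sketch.

```python
import secrets

# Field modulus (2**61 - 1 is a Mersenne prime); an assumption for this sketch.
MODULUS = 2**61 - 1


def split(measurement: int) -> tuple:
    """Client: split a measurement into two additive input shares."""
    leader_share = secrets.randbelow(MODULUS)
    helper_share = (measurement - leader_share) % MODULUS
    return leader_share, helper_share


def aggregate(shares: list) -> int:
    """Aggregator: sum the shares it holds into one aggregate share."""
    return sum(shares) % MODULUS


def unshard(leader_agg: int, helper_agg: int) -> int:
    """Collector: combine the two aggregate shares into the final result."""
    return (leader_agg + helper_agg) % MODULUS


# Three clients report 3, 5, and 7; neither aggregator sees any of them.
pairs = [split(m) for m in (3, 5, 7)]
leader_agg = aggregate([p[0] for p in pairs])
helper_agg = aggregate([p[1] for p in pairs])
total = unshard(leader_agg, helper_agg)
```

Each individual share is uniformly random on its own, so an aggregator learns nothing about a measurement unless it colludes with the other aggregator; only the combined aggregate shares reveal the sum.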

E

The

input

shares

are

encrypted

under

the

public

key

of

each

of

the

aggregators

in

order

in

order

to

protect

them

and

at

the

same

time

the

aggregators

are

aggregating

reports.

So

this

process

begins

with

the

leader,

which

gets

all

the

reports.

It

picks

some

set

and

pulls

out

the

encrypted

input

shares

of

the

helper

and

sends

these

to

the

helper

in

an

HTTP

request,

and

then,

after

some

number

of

rounds,

they

have

computed

aggregate

shares

for

the

set

of

reports

they

were

able

to

verify.

E

How

do

we

choose

a

set

of

reports

to

aggregate?

When

you

think

about

it,

the

most

basic

requirement

for

this

is,

well,

the

batch

of

reports

needs

to

be

sufficiently

large

that

the

measurements,

the

set

of

measurements,

remain

private

and

what

this

means

is

kind

of

application

dependent,

but

at

least

intuitively

the

larger

the

batch,

the

more

privacy

that

you

get.

But

think

about

this

from

a

sort

of

a

usability

perspective.

What

are

the

expectations

of

the

collector

who's

grabbing

data?

E

E

E

So

a

collect

request

specifies

a

batch

interval

which

determines

a

sequence

of

time

windows

and

what

the

collector

expects

is

that

the

reports

aggregated

all

fall

into

one

of

these

time

windows.

Now

we

have

certain

restrictions

on

batch

intervals.

On

the

one

hand,

this

is

about

operational

stuff:

we

want

it

to

be

possible

for

both

aggregators

to

be

able

to

efficiently

pre-compute

aggregate

shares

in

advance

of

getting

a

collect

request,

and

also

there

are

privacy

considerations

here.
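
To make the time-window idea concrete, here is a minimal sketch of how an aggregator might bucket values by window ahead of time so that a collect request over a batch interval is cheap to answer. The window length, the alignment rule, and every name below are assumptions for illustration; plain integers stand in for the shares an aggregator would actually hold.

```python
from collections import defaultdict

# Hypothetical task parameter: length of one time window, in seconds.
WINDOW_SECONDS = 3600


def window_start(timestamp: int) -> int:
    """Return the start of the time window that contains the timestamp."""
    return timestamp - (timestamp % WINDOW_SECONDS)


def is_valid_batch_interval(start: int, duration: int) -> bool:
    """Assumed restriction: non-empty and aligned to window boundaries."""
    return (
        duration > 0
        and start % WINDOW_SECONDS == 0
        and duration % WINDOW_SECONDS == 0
    )


def precompute(reports: list[tuple[int, int]]) -> dict[int, int]:
    """Sum (timestamp, value) pairs per window before any collect request."""
    per_window: dict[int, int] = defaultdict(int)
    for timestamp, value in reports:
        per_window[window_start(timestamp)] += value
    return dict(per_window)


def collect(per_window: dict[int, int], start: int, duration: int) -> int:
    """Answer a collect request by adding up the precomputed windows."""
    if not is_valid_batch_interval(start, duration):
        raise ValueError("batch interval is not aligned to time windows")
    windows = range(start, start + duration, WINDOW_SECONDS)
    return sum(per_window.get(w, 0) for w in windows)
```

Because every valid interval is a union of whole windows, the per-window sums can be computed once as reports arrive and reused for any later collect request.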

E

But

the

problem

we're

working

on

right

here

is:

there

are

a

couple

of

use

cases

that

this

scheme

doesn't

support

very

well.

So,

for

starters,

you

might

want

to

select

a

batch

based

on

some

client

property.

So

this

was

brought

up

in

issue

183.

Basically,

what

the

collector

might

want

is

that

the

reports

are

grouped

by

say

user

agent

or

location,

so

the

collector

would

specify

some

predicate

that

defines

a

set

of

reports

that

go

in

the

batch.

E

Basically,

the

properties

of

reports

that

go

in

the

batch,

and

you

can

imagine

this

could

be

quite

simple,

like

give

me

the

aggregate

for

all

Chrome

users

or

all

Safari

users,

or

a

little

bit

more

complicated,

like

give

me

the

aggregate

for

all

Chrome

users

in

the

US,

or

all

Firefox

users

that

aren't

in

Canada

or

something

like

that.

So

the

problem,

though,

is

that

even

a

very

simple

version

of

this

kind

of

grouping

strategy

is

not

well

supported

in

the

protocol.
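
As a rough illustration of what such a grouping request asks for, the sketch below selects reports by a collector-supplied predicate and refuses to form a batch that is too small. It only shows the desired behavior, not how the protocol does or would support it: the metadata fields, the predicate type, and the minimum-size check are all hypothetical, and the sketch ignores where this metadata comes from and the privacy questions it raises.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class ReportMeta:
    """Hypothetical per-report metadata visible to whoever picks the batch."""
    report_id: int
    user_agent: str
    country: str


Predicate = Callable[[ReportMeta], bool]


def select_by_predicate(
    reports: list[ReportMeta],
    predicate: Predicate,
    min_batch_size: int,
) -> Optional[list[ReportMeta]]:
    """Return the matching reports, or None if the batch would be too small."""
    batch = [r for r in reports if predicate(r)]
    if len(batch) < min_batch_size:
        return None
    return batch


def chrome_in_us(report: ReportMeta) -> bool:
    """Example predicate: all Chrome users in the US."""
    return report.user_agent == "Chrome" and report.country == "US"
```

The more specific the predicate, the fewer reports match, so the minimum batch size is what keeps a narrow query from singling out a handful of clients.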

E

You

can

kind

of

hack

around

it,

but

we

don't

expect

that

any

solution

that

we

have

today

will

scale

very

well.

Now,

issue

273

brought

up

even

what's

arguably

a

simpler

use

case.

Maybe

you

actually

don't

care

that

reports

have

anything

to

do

with

each

other?

Maybe

what

you

need,

basically,

is

that

the

batches

are

disjoint

and

that

they

all

have

the

same

size

or

at

least

approximately

the

same

size.

E

E

Okay,

so

fixed-size

batches

are

useful

for,

you

know,

statistical

analysis

where

you

need

to

control

the

sample

size

and

for

applications

that

want

to

compose

DAP

with

differential

privacy.

This

is

also

going

to

be

important

for

tuning

noise.

And

then

there's

also,

you

know

waiting

for

the

current

time

window

to

expire

before

you

compute

an

aggregate

can

add

latency

to

the

system

that

might

not

actually

be

sort

of

necessary.

E

Okay,

so

where

we

are,

we

think,

is

that

we

need

more

flexibility.

The

question

is

how

much

and

we

need

to

stipulate

the

fact

that

collectors

in

DAP

are

going

to

be

more

constrained

than

in

a

more

traditional

database

or

telemetry

system,

and

this

has

to

do

with

some

privacy

issues,

which

Chris

will

talk

about

in

the

next

presentation.

E

Lots

of

open

questions

there,

but

even

from

like

a

functional

perspective,

we

need

to

figure

out

what

we

need.

One

question

is:

what

are

all

the

query

types?

So

I've

talked

about

three

here.

Basically,

the

time

series

thing

that

we

have

today,

grouping

by

client

properties

or

partitioning

things

into

fixed-size

chunks.

What

else

do

we

need?

E

Another

question

is:

do

we

need

to

be

able

to

compose

different

query

types?

This

can

get

quite

complicated.

I

imagine

not

all

query

types

would

necessarily

compose,

and

finally,

would

every

DAP

deployment

need

to

implement

all

query

types,

or

is

this

something

that

we

can

allow

folks

to

implement

incrementally

or

not

at

all?

So

yeah,

I

guess

I'll

leave

this

slide

up

for

the

discussion.

I

can

also

go

back

and

forth

as

needed.

E

My

proposal

for

draft

two

would

be

to

take

an

incremental

step

that

is

minimal,

but

is

sufficient

for

our

use

cases,

and

I

think

this

would

involve

enumerating

all

the

possible

query

types

that

we

want

to

support

in

a

way

that's

extensible,

and

then,

you

know,

I

would

add

some

additional

requirements

to

this.

Basically,

the

idea

would

be

that

the

collector

would

include,

in

its

collect

request,

a

query

that

the

leader

would

use

to

choose

a

batch

of

reports

that

satisfy

that

query.
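
One way to picture this proposal is a small set of enumerated query types that the leader dispatches on when choosing a batch. The sketch below is only a guess at the general shape, not the draft's wire format: the two query classes, their fields, and `select_batch` are hypothetical names, and an unknown query type is rejected so that new types can be added later without changing existing ones.

```python
from dataclasses import dataclass
from typing import Union


@dataclass(frozen=True)
class Report:
    report_id: int
    timestamp: int


@dataclass(frozen=True)
class TimeIntervalQuery:
    """Select reports whose timestamp falls in [start, start + duration)."""
    start: int
    duration: int


@dataclass(frozen=True)
class FixedSizeQuery:
    """Select exactly batch_size reports, in arrival order."""
    batch_size: int


Query = Union[TimeIntervalQuery, FixedSizeQuery]


def select_batch(query: Query, reports: list[Report]) -> list[Report]:
    """Leader-side sketch: pick the reports that satisfy the query."""
    if isinstance(query, TimeIntervalQuery):
        end = query.start + query.duration
        return [r for r in reports if query.start <= r.timestamp < end]
    if isinstance(query, FixedSizeQuery):
        if len(reports) < query.batch_size:
            return []  # not enough reports yet to fill a batch
        return reports[: query.batch_size]
    raise ValueError(f"unsupported query type: {type(query).__name__}")
```

A deployment that implements only one query type would simply raise on the others, which matches the question of whether query types can be adopted incrementally.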

E

H

I

want

to

just

talk

this

over

before

I

start

saying

what

I

think

we

got

to

do.

So

I

guess

I

want

to

make

two

observations.

One.

I

know

Chris

is

going

to

talk

about

the

privacy

implications,

but

I

think

we're

already

like

kind

of

out

of

the

zone

where

we

can

plausibly

make

privacy

assertions.

So

the

property

that's

nice

about

the

current

design

is

that

you

can

look

at

the

possible

queries

and

the

possible

outputs

and

draw

conclusions

about

the

privacy

properties

of

the

system

right.

You

got

some

k-anonymity

conclusion.

You

draw

some

conclusion

about,

like,

intersection

attacks.

Like

you

can

just

conclude:

it's

safe

or

unsafe,

but,

like

you

can

just

analyze

it

right,

and

so

I

suspect

that

the

minute

we

get

to

the

point.

B

H

But

if

it

doesn't-

but

I

mean

sorry,

you

know,

all

the

screwing

around

we've

done

is

trying

to

make

that

single

processing

requirement

easier

to

implement

on

the

helper

and

leader

right,

and

so

as

soon

as

you

get

out

of

that

mode,

and

you

say

you

can

make

one

query:

multiple

queries

on

the

same

submission,

which

is

not

quite

what

this

allows,

but

like

it

implicitly,

might

allow

and

certainly

would

be

much

harder

to

implement.

You

know

if

it

doesn't.

H

H

H

I

wonder

if

we

want

something

like

much

fancier

instead

right

and

so

what

I

mean

by

fancier

is

effectively

to

say

well,

the

collector

selects

any

subset

it

wants

by

any

mechanism

it

wants,

and

then

they

usually

analyze,

and

we

have

some

other

mechanism

for

ensuring

privacy

under

those

conditions.

H

But

anything

could

be

I'm

not

quite

sure,

but

to

say

once

we

have

any

kind

of

query

method

that

allows

overlapping

queries,

we're

already

in

the

soup

and

we're

going

to

have

a

flexibility

question

analysis.

So,

like,

here's

my

dumb

version

of

this,

which

is

effectively

that

the

collector

gets

to

upload

a

piece

of

JavaScript

that

the

leader

and

helper

execute

to

determine

whether

to

include

a

given

submission.

H

Another

version

of

that

would

be

for

the

leader

and

helper

to

provide

the

collector

with

the

entire

inventory

of

every

possible

submission,

and

the

collector

simply

says,

aggregate

these

ones,

these

ones

these

ones

right.

And

so

the

reason

I'm

saying

this

is

not

to

make

the

problem

harder,

but

to

make

it

easier

and

to

sort

of

admit

the

fact

that

we

already

are

sort

of

like

off

the

fairway

and

try

to

solve

the

problem

on

the

far

end

of

the

fairway.

E

E

E

To

support

something

like

this,

that

is

simple

and

already

kind

of

constrained,

but

your

point,

though,

is

well

taken.

Like,

whatever

we

do

here,

I

think

at

a

minimum

we

can

try

to

prevent

overlapping

batches,

like

that.

I

think

that

could

always

be

defined,

although

with

the

grouping

thing,

what

this

is

kind

of

about

is,

I

want

to

explore

the

data

yeah

yeah

well.

H

H

H

Then

you

like

a

counter

on

every

submission

and,

like

you

know-

and

I

don't

know

about

one

query

right-

and

these

are

some

other

rules

and

some

other

rule,

but

I

think

the

question

is:

what's

the

cheapest

way

to

allow

arbitrary

queries

and

not

design

a

whole

new

language

for

that,

rather

than

trying

to

design

that

language

for

that.

So

now

we

might

put

on

this

on

this.

H

M

The

reason

that

people

are

comfortable

participating

in

this

scheme

is

because

they

want

to

give

feedback

that

will

help

the

group

that's

developing

their

software

or

they

want

to

report

some

telemetry

without

risking

their

own

privacy

right,

and

some

of

these

types

of

disaggregation