
From YouTube: IETF115-SAAG-20221111-0930

Description

SAAG meeting session at IETF115

2022/11/11 0930

https://datatracker.ietf.org/meeting/115/proceedings/

B

B

Let's get started. All of the slides are in the Datatracker if you want to follow along there, and we also have meeting minutes, so if you want to help collaboratively edit those, that's a possibility as well. On the screen is the Note Well. Just a reminder: you've seen this at every meeting you've attended. We have various policies and procedures in place. Please make yourself aware of them.

B

B

We probably have as many remote participants as we have in this very small room here. I'm not sure why we got sized the way we did, so it will be kind of intimate. What we have up are a few tips about how to make the experience most productive. If you are in the room, please scan the QR code to bring up the Meetecho lite client, and please keep your mask on.

B

A

B

This is the agenda we previously published. We're going to go through the administrative items and summaries of the area. We're going to have Margaret Cullen give us a talk on significant adoption of a couple of key IETF technologies. Then we're going to talk about formal verification, a topic that has popped up in a couple of other places, and then Justin Richer is going to talk to us about HTTP Message Signatures, which is, I believe, in working group last call in HTTPbis. Pausing there for any agenda bashing.

B

Not hearing any, let's press forward. I want to call out a couple of things. Participating in the security area means a lot of different things. We welcome hearing more from interested participants about how you would like to participate. There is, of course, sitting on this side of the room: if you're interested in being a chair, please let us know. Another great way to get involved in the real business of how the working groups do things is to be a document shepherd.

B

This gives you a lot of insight into how to do document reviews and IETF process, and it is a tremendous help to the working group, to the working group authors, and to Paul and me. We're going to talk about this later: there are all sorts of errata in the security area where we need to do a little bit better job

B

at closing. This is another way to learn about how we do maintenance on the IETF protocols that we publish, some in active working groups, some not so much, and that's certainly a place where we need a lot of help. And then, of course, there is participating: if you can't make it in person, there is the virtual experience. Virtual participants are first-order participants as well, so please join, especially in BoFs, to help us understand what work we want to bring into the IETF.

B

So I will say you're going to see something a little bit different. Paul introduced an innovation here: maybe we don't need to run through all the different working groups, so from now on we are going to largely manage the queue by exception, if folks want to come forward and talk about things. So, first order of things: we've had some changes in working groups and some talk of new work.

B

Since we last met at IETF 114, we had a virtual BoF between 114 and 115, originally titled JSON Web Proofs, and then, if you jump to the next category, there was consensus out of the BoF. We had an in-person BoF, and then we had a virtual BoF. Coming out of that virtual BoF, we had consensus to move forward with chartering to reopen the JOSE working group, and that is in flight now. It's going to be looked at by the IESG in early December.

B

A thing to call out is what's at the bottom. The IETF overall introduced a new way to do BoFs, or brought back, I'm told by folks that have participated a long time, this notion of virtual interim BoFs; that is, you don't have to wait for the face-to-face meeting to have a BoF. We've now used that successfully three times: for TIGRESS, which is in the applications area with security considerations.

B

We used it to move SCITT forward, and we also used it to move forward what's getting chartered now (we'll see on the community feedback) in JOSE. So, bottom line, it looks like it's cutting out at least half a meeting cycle for new work to get chartered. It looks like a successful approach, and I see us continuing to use that.

B

So this is the compression of what normally would be 20 slides. Thank you, Paul. A lot of working groups met this last week, and I believe almost all of them sent a summary to the SAAG list. That's where all the details are. We invite any working group chairs or participants to come up to the mic now and tell us about hot topics or anything they think SAAG should know about from those previous meetings this week.

B

A

B

All right, well, perfect. I'm sure we're going to read everything that's on the mailing list. Okay, so, pulling us to some of the administrative updates that the ADs would like to share with you. First, we want to highlight that one of the ways in which working groups are successful really is the leadership of the chairs facilitating the technical work. So, to highlight some changes: we want to thank Mohit and Richard for chairing SECDISPATCH.

B

They have rotated out. If you're on the SECDISPATCH mailing list, one of the things that we did is really rethink what to do with standing working groups, that is, working groups that don't have an end to their work, like SECDISPATCH. When a working group chair signs up, the exit plan is that, unless we say let's rethink this or they tell us they're out, they will be working group chairs indefinitely.

B

We wanted to set up a rotation process, and so we've roughly created a policy in SECDISPATCH that it's about a four-year term, unless we think there's an issue or they would like to step down. Richard has been in that role for four and a half years and really launched the process for us. Rifaat is stepping in for him. Kathleen is three years into the term; this coming summer is IETF 117.

B

She is going to rotate out, and we would expect that the ADs at the time, Paul and whoever is the other AD at the time, will make that selection. So this is the idea: with standing working groups, we should rethink what happens there. And then we spun up the new SCITT working group, so thanks to Jon and Hannes for stepping in to lead us.

B

B

We didn't create any non-working-group mailing lists. Our queue of AD-sponsored documents is getting a little bit deeper. This is real-time as of at least SECDISPATCH yesterday, where we added the draft [unclear: "legit SP CAC"] for AD sponsorship, and we have not yet closed the action item from SECDISPATCH at 114 to land draft-eastlake-fnv, but we are going to work on it.

B

Are there any questions about the AD-sponsored drafts we are holding? We especially point them out here because, for folks a little less familiar with the process, AD sponsorship means a document is not going through a working group, so the review process is the formal IETF last call and whatever we as ADs trigger. So these documents are here primarily so you have visibility, and we would beg for your reviews on those documents to make sure that they leave the IETF in the best quality we can get them.

B

B

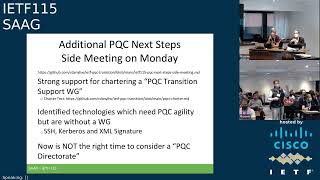

One unique thing that we did, that isn't part of this structural update that you've seen a number of times, is that we reconvened what we call the PQC next steps, or additional next steps, side meeting on Monday. The thinking there was that we saw a tremendous amount of energy on the PQC mailing list that we spun up a little bit before IETF 114.

B

Additionally, we had a SECDISPATCH result at 114 to pick up what we considered a transition support document around PQC. After thinking about the energy on the list and what we wanted to do with that document, we shopped around a charter for what we're calling the PQC transition support working group. This is a working group that is not intended to change existing protocols.

B

It is a forum in which to discuss design and transition choices that are relevant across the SEC working groups, and probably across the IETF, and all it's permitted to do is facilitate that discussion. When the discussion really is reusable, or it makes sense for it to be durable for archival purposes, that working group would have the ability to publish informational documents.

B

It appeared we had consensus for that from the mailing list, and it appeared there was really strong support for that from the side meeting. So at this point we are likely to proceed with chartering. The big blocker is, as with everything in the IETF, one of the most important things: choosing a name, which we have not done, and we've got to dial in the deliverables. So we welcome additional feedback and polish; please put that on the PQC list.

B

C

B

B

And we're not making changes to protocols. Yeah, please check the charter text. I believe we do say that, but if not, let's polish. We asked two other questions, and this is a little bit of: we see energy, and we've been coming back to you in SAAG, double-checking where we want to be as a community. We were trying to check where we think we stand: there is a bunch of working groups that are active, doing PQC kinds of things, and we wanted to check.

B

D

B

We heard Kerberos and we heard XML Signature. If you think there are more, please let us know. If you go to that GitHub site, which we're going to migrate to the IETF wiki, there's a place where we're capturing that list. And then the third question we asked was this: we often rely in the IETF on directorates to help us do document reviews for specialized things. By specialized I mean we have the security directorate, but we also have niche things like YANG.

B

So if you have a YANG document, you can go to the YANG doctors to help you specifically with that. The question was asked: do we think we need something for just post-quantum cryptography reviews, so a PQC directorate? The feedback from the side meeting was no: we're not ready, and now is not the time to do that. If you want more information, there are some references there.

B

We see the SECDIR reviews putting in feedback like "hey, you need to think a little bit more about that", or issues that Paul and I commonly discuss, and this is kind of a running list. This is something that we started a number of years ago. I just highlight that in case you have feedback on other things, perhaps if you are reviewing documents that have been worked on in the working groups.

B

One thing we just added to that list, because we are seeing it in a number of recent telechats, is that documents are coming to the telechat review saying "we are fielding this in a limited domain", and beyond that there isn't a lot of specification about what the security properties of that domain are, how you are bounding that domain, and how you are controlling what is in there. So that's an example of the flavor of common themes that we would put on that list.

B

If you want to get a sense for where we are with your documents, those are the pointers to the queue. There is fairly detailed insight into when we take the document for IESG processing, where we are with that process, and how far we have advanced it. Just a reminder: we have a SEC area wiki. The IETF is migrating its wiki technology from Trac to Wiki.js, so that URL is where we are right now.

B

In closing, we can't emphasize enough how helpful the security directorate is to the IETF, to help the working groups polish the documents, find issues, and really get us to the point where, when we go to RFC, the documents are as good as they can be and have as well-rounded an assessment as possible. So thank you to the security directorate.

B

They made this possible, and these are all the folks that helped us review documents across all of the working groups for everything that went to IETF last call since we last got together. Also a big shout-out to Tero, who is our secretary for the SECDIR reviews and literally every week to two weeks is making the assignments of those documents and tracking what needs to be done. If you are interested in participating in the security directorate, please email Paul and me, and then we can have a conversation.

B

D

E

So I'm going to talk about an implementation report on the use of EAP and RADIUS for US eduroam. eduroam is a service that's been around for a long time, 20-plus years, and US eduroam has been around for a long time. We did a new implementation of US eduroam in AWS, and it was new to us to operate and deploy this service, so we're going to offer some observations we had about those technologies while we were doing that. Next slide.

E

We worked with Internet2, who operates the US eduroam service, to implement and deploy a new Amazon Web Services-based US eduroam infrastructure. It went live in November of 2021, so we've been operating, monitoring, and maintaining it for about a year. It's FreeRADIUS-based, it's geographically redundant, and within each geographic region it is load balanced; I will talk more about that. The goal of this presentation is to share our experiences.

E

Those are experiences implementing and operating this service, and much of this presentation focuses on needs for improvement, but I want to emphasize that a lot of those are small things, and the overall experience has actually been very positive and highly successful. It's up and running, it's stable, and it's working well, but there are a lot of small things that we think could either be modernized or added that would make it a lot easier to deal with. Next slide.

E

So this is a little outline of the talk. The first thing I'm going to do is a quick review of what eduroam is, just because there may be people in the room who don't know what I'm talking about at all, and try to bring them up to speed. Then we'll do an overview of the US eduroam deployment, including how we deployed the infrastructure and some facts and figures about it, then talk about how requests are routed in eduroam and some things about how that works.

E

Those have been a struggle. Then there are some security challenges that we've run into, and also some operational challenges that we've run into. Next slide. eduroam is a widely used international roaming service for higher education and research. As I said, Josh, sitting in the front row, could probably tell us exactly how long it's been out, because I believe he did the first deployment of it, but it's been out for a long time: decades.

E

E

With that location proxying an authentication request into the central infrastructure, which goes back to the home institution and authenticates the user. Millions of students from thousands of home institutions access eduroam at tens of thousands of service locations throughout the world. It's a very large-scale service, and if you want to hear more about eduroam itself, you can go to that URL there or talk to Klaas, because I think he works at that organization and knows everything about the central eduroam service.

E

But if you go to the next slide, I have a little picture of how it works. If you look on the right-hand side... well, let's start with the user, and the user is john@institution.home. He once was at his home institution, over on the left, and he got identified there, got issued a student ID, and got issued credentials. He then traveled to another institution, institution.visit, where he wanted to get on the network.

E

E

E

There's a proxy hierarchy in eduroam that lets it run all over the world, and the way that this proxy hierarchy works is that the individual eduroam institutions have their own RADIUS servers. They could be the same RADIUS servers that they use to have people log in at other SSIDs, or they could be dedicated to eduroam. That's totally up to them.

E

So now we're going to talk about the US eduroam deployment. If I had thought to mention it on the previous slide, I could have said that one of those national servers is the US national server, and that proxy, the US national proxy, is run by Internet2 InCommon, and we work with them to run that service.

E

The US eduroam service has more than a thousand home institutions, or IdPs, more than 3,000 service access points, or RPs, and somewhere in the area of 2 million students and staff eligible to use the service. We can't say how many people are actually using it, because some people only use it locally. From running the national proxy, we can only see the people who roam, so we don't know exactly how many of those eligible students are using it. There's a URL here.

E

E

E

There's a sort of traffic engineering activity that happens there, where the traffic is NATted and sent over a VPN tunnel to AWS, where we run a VPN router to be the endpoint of the VPN and then the actual RADIUS proxies. This picture shows a primary and a backup because it hasn't been updated yet, but at this point we're actually load sharing between the two servers running on each coast and, as I said, it's then duplicated.

E

E

We see somewhere greater than 12,000 unique authentication requests coming through per minute. An authentication request involves several packet exchanges, so there's not any sort of one-to-one between messages and authentication requests. Of the authentication requests that complete, about two-thirds, 65 percent, of those made in the US get an Access-Accept and about 35 percent get an Access-Reject.

E

This is of authentication requests that complete without some sort of internal error. About 18 percent of the requests we receive are rejected or discarded by our proxy, and some of the biggest reasons that happens are: request looping, or proxy looping, where basically we're receiving back a request that we've already touched; a missing or malformed username or realm; an unknown client, one that just isn't registered with us or isn't known to us; an invalid authenticator; or a malformed message.

E

E

The EAP method distribution: I thought this was interesting when I first saw it. We see about 75 percent PEAP, 15 percent EAP-TLS, and 12 percent TTLS. This is from a report in Q1 of 2022. I haven't gone through and tried to look at it again to see if we have any trends, but that's where we were at that time.

E

E

If that sounds a lot like a 1980s internet hosts file, that would be because it is a lot like a 1980s internet hosts file. We say "we know these hosts", and basically the whole thing is being routed by files that are exchanged once a day saying "all these people are under me; please send their traffic to me". So if an institution's RADIUS server receives a non-local request, it goes to the NRO RADIUS proxy. The NRO RADIUS proxy may not have a matching realm registered.

E

In that case it forwards the request up to a top-level server. The way our servers work, if a country code is included in the realm name, we forward the request accordingly: we send Asian countries to Asia and European countries to Europe. What we do if the country code is not in Europe or Asia, or if there's no country code and it's just example.com, is some round robin between the top-level servers.
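The forwarding rule just described can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the configuration of the actual US proxies: the realm table, the country-to-region mapping, and the server names are all hypothetical placeholders.

```python
import itertools

# Hypothetical data for illustration only; none of these values come from the talk.
LOCAL_REALMS = {"institution.home": "radius.institution.home"}
REGION_BY_COUNTRY = {"jp": "asia", "au": "asia", "de": "europe", "nl": "europe"}
TOP_LEVEL_SERVERS = {"asia": "tlr-asia.example", "europe": "tlr-europe.example"}

# Round robin over the top-level servers, used when the realm gives no routing hint.
_round_robin = itertools.cycle(sorted(TOP_LEVEL_SERVERS.values()))


def next_hop(user_name: str) -> str:
    """Return the server a national proxy would forward this request to."""
    _, sep, realm = user_name.rpartition("@")
    if not sep or not realm:
        # Corresponds to the "missing or malformed username or realm" rejections.
        raise ValueError("missing or malformed realm")
    realm = realm.lower()
    if realm in LOCAL_REALMS:
        # Realm registered with this national proxy: send it to the home server.
        return LOCAL_REALMS[realm]
    country = realm.rsplit(".", 1)[-1]
    region = REGION_BY_COUNTRY.get(country)
    if region is not None:
        # Country code present: forward to the matching regional top-level server.
        return TOP_LEVEL_SERVERS[region]
    # No usable country code (for example a .com realm): fall back to round robin.
    return next(_round_robin)
```

With these placeholder tables, a realm such as `uni.de` always goes to the European server, while `example.com` alternates between the two top-level servers, which is why such a request may start in the wrong region.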

E

There are multiple servers in each of Europe and Asia, and if the top-level operator doesn't have a registration for the realm, they need to forward it over to the other top-level operator, so basically things can be forwarded several times. This isn't a completely flat structure. So if you look at the next slide,

E

And inefficient. This is about the most inefficient you could be in a real example: a user from example.com, which happens to be a Canadian institution in my example, visits a US eduroam service location and attempts to join eduroam. The service location's RADIUS server, the first RADIUS server that touches the request, determines that example.com is not local to that institution and forwards the request to one of the US eduroam proxies.

E

That proxy, the second RADIUS server to touch the packet, determines that example.com is not a realm registered in the US, finds no country code, and forwards the request to an Asian top-level RADIUS proxy, just by round robin. The Asian proxy, the third proxy to touch this message, determines that the realm example.com is not registered in Asia, so it forwards the request to a European top-level server.

E

The European top-level server determines that example.com is registered by Canada, which I am presuming registers with Europe, but I could be wrong, and forwards that request to Canada's RADIUS proxy. The Canadian proxy, that being the fifth proxy, forwards the request to one of example.com's RADIUS servers, and that is the sixth RADIUS server to actually see this request. So it can go through six servers, five hops, before it gets to the ultimate destination, and that could be a normal, successful authentication exchange.

D

B

E

So you could say, well, five hops through six servers is not that much, but it's actually the case that a successful eduroam authentication also requires several request/response exchanges. I've seen them range from three to seven, although I don't think we get three using the methods I just listed, and it depends on the EAP method, the size of the credentials, and a few other things.

E

But we usually see several message exchanges, like Access-Request, Access-Challenge, Access-Request, Access-Challenge, request/response exchanges before we get to an answer in a typical RADIUS request. So we're seeing a multiplicative effect there, right? We're going through six hops, we've got, let's say, five messages, and every time a message goes through a hop there's a cryptographic message authentication performed. So more efficient routing than this would be highly desirable. A lot of the time, people aren't waiting to get on the network.

E

But if you are waiting to get on the network, the delay from using RADIUS can be quite noticeable. It's hard to know how to get more efficient routing, though, because we don't do any sort of dynamic routing and we don't even have the equivalent of ICMP redirects. So even if Asia knew that example.com should have gone to Europe, there's no way for them to tell us, and there's no standard mechanism for loop detection or prevention.
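As a rough illustration of the multiplicative effect mentioned in the preceding remarks, the count of per-hop message checks can be estimated as below. This is a back-of-the-envelope sketch, not a measurement: it assumes every exchange is one request plus one response, and that each message is authenticated once on every hop it crosses. The function name is made up for the example.

```python
def per_hop_checks(hops: int, exchanges: int) -> int:
    """Estimate message authentications for one login.

    Assumes each exchange is a request and a response (2 messages) and that
    every message is checked once on each hop it crosses.
    """
    return hops * exchanges * 2


# Five hops through six servers, with three to seven exchanges per login.
low = per_hop_checks(hops=5, exchanges=3)
high = per_hop_checks(hops=5, exchanges=7)
print(low, high)
```

Under those assumptions a single roaming login costs dozens of message authentications spread across the proxy chain, which is the motivation given for wanting more direct routing.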

E

E

The other thing we found that was difficult for us is that there are very few testing or debugging tools for this type of multi-level proxy fabric. When a remote EAP RADIUS request is dropped or rejected, it can be very difficult to figure out why it didn't work, or even to figure out which server dropped or rejected it. A lot of things are silently dropped, and Access-Reject messages don't typically contain a useful error code.

E

Even if you do get an Access-Reject message back, you're often trying to figure out what went wrong. There's a Status-Server request in RADIUS, but that only goes one hop: you can only send it to the first server that you talk to, the one you have a shared secret with. So you can query the health of that proxy, but there's no way to query the health of a more remote proxy that's multiple hops away from you.

E

So there's no ping that would go across multiple hops, and there's also no way to trace the path that a request would take to see if the path is valid or looping or what. There's no traceroute-like functionality that we can use to debug these problems, which ends up with people literally calling each other on the phone and asking them to check their logs. That's how you debug a multi-hop RADIUS routing problem today. Next slide.

E

There are also some security challenges. eduroam is a security service, and it's based on RADIUS. RADIUS is good and works well, but its message protection is pretty antiquated by today's standards, and this comes up sometimes when you talk to people about eduroam. It consists of pairwise shared secrets and an MD5 hash for message protection, and the shared secrets are often typed by administrators into a UI or a plain-text file.
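For readers unfamiliar with what "a shared secret and an MD5 hash" looks like in practice, here is a minimal sketch of the Response Authenticator calculation as defined in RFC 2865: MD5 over the response code, identifier, length, the Request Authenticator, the response attributes, and the shared secret. The function name and the sample values are illustrative only; this is not taken from any eduroam deployment.

```python
import hashlib
import struct


def response_authenticator(code: int, identifier: int,
                           request_authenticator: bytes,
                           attributes: bytes, secret: bytes) -> bytes:
    """Compute the RADIUS Response Authenticator (RFC 2865, section 3)."""
    if len(request_authenticator) != 16:
        raise ValueError("Request Authenticator must be 16 bytes")
    # The length field covers the 20-byte header plus all attributes.
    length = 20 + len(attributes)
    header = struct.pack("!BBH", code, identifier, length)
    # The secret is simply appended to the hashed data; there is no HMAC here
    # and no way to negotiate a different hash function.
    return hashlib.md5(header + request_authenticator + attributes + secret).digest()


# Example: an Access-Accept (code 2) with no attributes and a made-up secret.
digest = response_authenticator(2, 1, bytes(16), b"", b"s3cret")
print(digest.hex())
```

The sketch makes the two points from the talk concrete: the protection depends entirely on the quality of the typed-in secret, and MD5 is fixed by the packet format.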

E

There's no consistently enforced minimum length for those keys, nor is there any requirement for cryptographic generation of those shared secrets, and there's no algorithm agility for the MD5 hash. I think this came up in the BoF on Monday, although when I first started working on these slides and talking to you guys, the BoF wasn't even a glimmer in Alan's eye, as far as I know. Next slide. There's also a trade-off that our subscribing institutions run into between privacy and secondary credentials.

E

User privacy is absolutely essential in eduroam, because the risk is exposing the physical location of an end user. You're not going to expose the fact that they went to this website; you're going to expose the fact that they're in a particular room at this time. So some of the people in the SAAG room are on eduroam.

B

E

You might want that too, but that's a whole other question that maybe I should have put on these slides and did not. But secondary credentials such as certificates can be valuable to allow passwordless authentication, or to protect primary credentials from being used in an environment where they might be exposed out on the internet. So your user may have primary credentials.

E

They use those for logging into university services, but you don't want them to use that same credential for eduroam, because they're going to go out and use that credential at Starbucks or wherever. So people want both of those things, but there's a trade-off. PEAP and TTLS are pretty good on the privacy side: as long as you configure it right, you can use an anonymous username in the outer method, so that the username portion is only ever transmitted over an encrypted tunnel.
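The anonymous outer identity mentioned above can be illustrated with a small helper. This is a sketch under assumptions: the `anonymous` placeholder is a common convention rather than something stated in the talk, and the function name is made up for the example. The point is that only the realm, which the proxies need for routing, is visible outside the tunnel.

```python
def outer_identity(inner_identity: str, placeholder: str = "anonymous") -> str:
    """Build the identity sent outside the TLS tunnel.

    Keeps the realm so proxies can still route the request, and replaces
    the user part so the real username only travels inside the tunnel.
    """
    _, sep, realm = inner_identity.rpartition("@")
    if not sep or not realm:
        raise ValueError("identity has no realm to route on")
    return f"{placeholder}@{realm}"


print(outer_identity("john@institution.home"))
```

A visited institution and every intermediate proxy then see only that someone from `institution.home` is authenticating, not which user.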

E

E

One

is

that

you

could

support

TLS

1.3

and

EAP-TLS

so

that

the

certificate

would

be

encrypted

or

have

wider

use

of

RadSec

to

encrypt

the

whole

session,

and

those

would

meet

both

requirements,

but

those

are

also

both

PKI

solutions,

and

there's

some

people

who

don't

want

PKI

solutions,

which

is

why

we

see

PEAP

and

TTLS

combined

being

a

much

larger

deployment,

at

least

in

the

US

than

EAP-TLS,

but

I

don't

know

of

any

combination

of

currently

available

methods

that

would

give

you

both

of

those

things

in

a

non-PKI

solution.

E

So

that's

where

we

are.

We

don't

actually

have

any

of

those

things

fully

today,

like

RadSec's

informational,

and

EAP-TLS

1.3,

I

think

there's

been

some

talk

about

that,

but

it's

not

in

implementations

and

I

don't

even

think

we

have

a

draft

that

would

offer

a

non-PKI

solution

that

would

let

you

do

both

of

these

things

at

once.

So

next

slide.

We

ran

into

some

operational

challenges

as

well.

E

E

Also

because

you

don't

want

the

response

to

end

up

looping

as

well,

and

we

have

a

way

that

we

notice

looping

with

a

vendor

attribute.

Other

people

have

other

ways

of

noticing

looping,

usually

requiring

a

vendor-specific

attribute,

but

there's

no

standard

method

for

detecting

looping

or

letting

another

server

know

that

anything's

looping.
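As a rough illustration of vendor-attribute loop detection, the sketch below models a request's attributes as a plain Python dict. The attribute name `X-Proxy-Path` and the function `forward_or_drop` are hypothetical; they are not the attribute the speaker's deployment uses and not part of any RADIUS standard.

```python
from typing import Optional

# Hypothetical vendor-specific attribute carrying the list of proxies visited.
PROXY_PATH_ATTR = "X-Proxy-Path"

def forward_or_drop(attrs: dict, my_id: str) -> Optional[dict]:
    """Return the attributes to forward, or None if a loop is detected.

    Each proxy appends its own identifier to the path attribute. Seeing
    our own identifier already in the path means the request has looped.
    """
    path = list(attrs.get(PROXY_PATH_ATTR, []))
    if my_id in path:
        return None  # loop: this proxy has already handled the request
    forwarded = dict(attrs)  # copy so the caller's dict is left untouched
    forwarded[PROXY_PATH_ATTR] = path + [my_id]
    return forwarded
```

Because the attribute is private to one operator in this sketch, other servers on the path would neither understand nor honor it, which is the gap the speaker describes.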

Also,

a

separate

issue:

Many

clients

will

retry

a

failed

connection

request

immediately

with

the

same

credentials,

so

they

get

back

an

Access-Reject

and

they

just

try

again.

E

They

had

a

",edu"

at

the

end

of

their

realm

instead

of

a

".edu",

and

their

supplicant

just

tried

to

authenticate

as

fast

as

it

could

37,000

times

every

five

minutes

until

somebody

went

and

blocked

that

particular

user.

I

don't

know

why

that's

the

case

and

would

like

to

figure

out

if

there's

something

better

that

we

could

say

that

would

get

them

to

back

off

or

stop.

E

We

get

a

lot

of

people

who

have

long-expired

or

obsolete

credentials

still

configured

on

their

devices.

Like,

you

might

say,

why

a

35%

error

rate?

Those

aren't

proxying

errors.

Those

are

the

IdP

saying

no,

those

credentials

are

not

valid

and

most

of

the

time,

that's

because

they're

obsolete

or

expired,

and

there's

no

way

for

the

IdP

to

signal

to

the

device

that

those

credentials

need

to

be

invalidated.

E

Supplicants

will

try

to

use

obviously

bogus

credentials.

You

know,

realms

with

",edu"

in

them,

things

with

no

realm,

things

with

whitespace

or

special

characters

in

the

username

or

the

realm,

missing

realms,

expired

certs.

Like,

supplicants

aren't

doing

anything

to

try

to

decide

if

this

is

a

reasonable

request

before

sending

it,

which

is

frustrating

because

we

get

a

lot

of

those

as

well.
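The kind of pre-send sanity check described here could look roughly like the following Python sketch. The rules are deliberately simple and are an assumption about what "reasonable" means; `plausible_identity` is a hypothetical helper, not part of any supplicant. It only checks the cases named in the talk (missing realm, whitespace, and realms such as `example,edu`).

```python
import re

# One DNS-style label: letters, digits, hyphens, not starting or ending with "-".
_LABEL = re.compile(r"^[a-z0-9]([a-z0-9-]*[a-z0-9])?$", re.IGNORECASE)

def plausible_identity(identity: str) -> bool:
    """Return True if the identity looks like user@realm with a sane realm."""
    user, sep, realm = identity.rpartition("@")
    if not sep or not user or not realm:
        return False  # missing realm or missing user part
    if any(ch.isspace() for ch in identity):
        return False  # whitespace anywhere in the identity
    labels = realm.split(".")
    if len(labels) < 2:
        return False  # e.g. "example,edu" has no dot at all
    # Every label must be well formed; this rejects commas and empty labels.
    return all(_LABEL.match(label) for label in labels)
```

A supplicant or an edge proxy could run a check like this before sending or forwarding a request, so that obviously bogus identities never reach the national proxies.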

E

That

I

think

was

third

down

on

the

list

of

reasons

why

we

have

to

throw

away

requests

and

then

the

last

one

I

have

and

I've

tried

to

research

this

to

some

extent,

is

that

we

receive

many

requests

per

second

with

realms

of

the

form

wlan.mnc<3 digits>.mcc<3 digits>.3gppnetwork.org

and

as

far

as

I

can

tell,

we

are

somehow

being

mistaken

for

a

3GPP

carrier

network,

but

I

can't

figure

out

how

to

make

it

stop.
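A proxy that wants to recognize these requests early could match the realm against the pattern just described. This is a small Python sketch; the three-digit assumption for both numbers follows the speaker's description, and `is_carrier_realm` is a hypothetical helper name.

```python
import re

# Realm shape described in the talk: wlan.mncNNN.mccNNN.3gppnetwork.org
_CARRIER_REALM = re.compile(
    r"^wlan\.mnc\d{3}\.mcc\d{3}\.3gppnetwork\.org$", re.IGNORECASE
)

def is_carrier_realm(realm: str) -> bool:
    """Return True if the realm looks like a 3GPP carrier WLAN realm."""
    return bool(_CARRIER_REALM.match(realm))
```

Matching only identifies the traffic so it can be dropped or counted; it does not address why devices send it in the first place, which is the open question raised here.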

So

those

are

my

random.

E

E

Some

of

them

are

in

the

radextra

BoF

and

the

radextra

chartering

effort

and

then

also

Josh

talked

about

eat

guy

and

EMU.

But

some

of

the

things

that

we're

struggling

with

aren't

actually

being

worked

on

anywhere

and

you

know,

I

would

be

interested

at

least

in

trying

to

contribute

if

somebody

had

a

good

way

to

solve

some

of

those

problems.

Next

slide.

E

So

these

are

the

three

people

who

talked

about

this

presentation

as

it

was

under

development.

It's

just

our

own

observations

and

opinions.

It

doesn't

represent

the

views

of

Internet2,

certainly

not

of

eduroam.org

who

none

of

us

work

for

or

any

other

company

or

organization.

So

there

you

have

it.

Those

are

thoughts

on

deploying

EAP

and

RADIUS

in

a

great

big,

multi-level

proxy

application.

A

F

Yeah,

hi,

Jan-Fred,

eduroam

enthusiast

and

national

roaming

operator

in

Germany.

Margaret,

you

talked

about

load

balancing

and

failover

mechanisms.

In

Germany,

we

had

tried

that

once

but

failed

spectacularly

because

of

course

there

are

the

two

layers:

RADIUS

and

EAP,

and

if

you

don't

recognize

the

EAP

layer

and

you

just

distribute

the

RADIUS,

then

you

end

up

with

failed

authentication

requests.

How

do

you

deal

with

that?

E

If

something

goes

wrong

with

them,

or

sometimes

they're

rebooted

for

operational

reasons,

updates,

stuff

like

that,

so

we

don't

get

perfect

load

balancing,

but

we

do

it

all

on

IP

address,

rather

than

trying

to

do

anything

out

of

the

RADIUS

or

EAP,

or

even

the

ports,

because

if

we

do

that,

we

end

up

with

traffic...

I'll

mention,

for

people

who

aren't

aware

of

what

he's

talking

about,

that

there

are

several

exchanges

for

each

authentication.
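Balancing on IP address, as described above, keeps every packet of a multi-round-trip EAP conversation on the same server as long as the sender's address stays the same. A minimal Python sketch of that idea follows; the function name `pick_backend` and the use of SHA-256 are assumptions for illustration, not details of the deployment discussed.

```python
import hashlib
from typing import Sequence

def pick_backend(source_ip: str, backends: Sequence[str]) -> str:
    """Choose a backend deterministically from the sender's IP address.

    The same source address always maps to the same backend, so all
    exchanges of one authentication land on one server. Load is only
    as even as the distribution of source addresses.
    """
    if not backends:
        raise ValueError("no backends configured")
    digest = hashlib.sha256(source_ip.encode("utf-8")).digest()
    index = int.from_bytes(digest[:8], "big") % len(backends)
    return backends[index]
```

Note that in this simple modulo scheme, adding or removing a backend remaps many sources, so conversations in flight during a reboot or update can still fail, which matches the imperfect balancing mentioned in the answer.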

E

F

E

Portion

of

especially

on

the

East

Coast,

because

more

people

use

the

East

Coast

servers

than

the

West

Coast

server

for

some

reason.

Having

all

of

the

stuff

people

sent

to

the

East

Coast

IP

address

going

to

one

AWS,

we

were

running

into

non-protocol-related

problems,

load

problems,

and

so

what

we're

doing

now

is

enough

to

actually

make

the

load

low

enough

that

we're

not

running

into

those

problems.

So

so

we.

G

G

H

H

There

are

kind

of

reasons

for

that

he's

talked

about,

but

in

any

case

I

mean

today,

if

you're

deploying

Wi-Fi

at

scale

and

you're

not

doing

mobile

management,

right,

then

doing

something

like

crossword

cross-domain,

cross-everything,

right,

it

is

a

challenge

because

platforms

do

not

align

here.

They

don't

share

like

common

APIs,

common

models,

even

anywhere

remotely

common

capabilities,

and

that's

a

problem

for

logical

rollout.

E

Gers

I

guess

I

didn't

mention

it

because

I,

you

know,

don't

play

product

managers

on

TV

or

anything.

But

you

know

if

you

like

talk

to

our

subscribers,

that's

the

biggest

problem

that

they

have

is

how

to

onboard

the

users

to

eduroam,

and

if

we

could

come

up

with

a

solution

that

would

actually

make

that

easy,

that

would

help

with

deployment.

It

might

help

RADIUS

be

used

in

other

large-scale

deployments

where

that

might

be

an

even

bigger

issue.

I

D

C

I

haven't

been

inside

in

a

long

time,

but

boy

would

I

encourage

people

who

are

thinking

where

are

hard

problems

that

touch

end

users

as

compared

to

the

network

to

work

on

this.

If

this

comes

to

them,

there

will

be

an

expectation

because

they

already

do

free

internet

service

through

local

internet

service

provider

and

stuff.

Helping

them

out

would

would

be

like

a

huge

thing.

C

E

This

came

up

a

lot

with

children

out

of

school

for

COVID,

at

least

in

the

U.S.

as

well,

that

they

would,

you

know,

end

up

outside,

like

Taco

Bell

or

something

trying

to

get

on

the

internet.

So

there's

a

big

desire

to

get

all

those

school

children

on

eduroam

and

have

a

lot

of

eduroam

service

points,

so

that,

if

something

like

that

happened

again,

they'd

have

somewhere

to

go

to

get

free

internet

access.

B

So

we're

switching

to

the

next

topic,

which

we

hope

will

be

a

little

more

interactive

because

we

want

to

get

feedback

from

the

group.

So

the

tee-up

is

that

we

in

the

security

area

have

relied

on

outside

help

to

do

formal

verification

of

security

properties

across

a

number

of

working

groups,

frankly

to

a

lot

of

great

success.

B

We've

had

really

great

success

to

do

one

of

two

things:

first,

it

has

helped

us

find

deep

vulnerabilities

that

we

would

not

have

otherwise

found.

It

has

also

helped

us

the

other

way,

which

is

again

gave

us

a

little

bit

more

confidence

that

what

we're

about

to

publish

for

broad-scale

adoption

and

operation

has

the

properties.

B

The

question

is:

okay,

that's

not

kind

of

unusual

to

have

to

take

in

our

different

practices,

but

we

have

a

really

kind

of

nice

method

to

give

us

a

little

bit

more

security

kind

of

assurance,

and

when

would

we

want

to

really

apply

it

fully?

Recognizing

that

we

don't

have

a

lot

of

that

expertise

here

in

the

IETF.

So

how

do

we

bridge,

perhaps,

the

communities

that

can

do

that

formal

verification?

B

The

other

motivation

for

this

is

that

there's

an

activity

in

flight

in

the

IRTF.

There's

an

initiative

very

much

how

we

have

a

charter

and

kind

of

process

to

create

new

working

groups.

There

is

a

proposal

in

flight

in

the

IRTF

to

spin

up

a

group

kind

of

more

broadly

that

helps

us

think

how

to

formally

specify

IETF

protocols,