►

From YouTube: IETF115-ALTO-20221111-0930

Description

ALTO meeting session at IETF115

2022/11/11 0930

https://datatracker.ietf.org/meeting/115/proceedings/

A

A

So

this

is

not

aware

apply.

So

probably

you

can

read

this

and

a

code

conduct.

Please

be

nice

to

the

colleague

in

the

room,

trivia.

Actually

we

make

sure

you

wear

a

mask.

If

you

want

to

you,

you

don't

speak

and

so

there's

a

agenda

for

today's

discussion,

a

pretty

tight

agenda,

and

so

we

will

focus

on

China

item

also.

A

A

A

Document

update,

so

we

have

two

new

offices.

You

get

a

published

since

another

item

meeting

one

is

the

past

Factor.

The

second

is

the

course

mode.

We

still

have

a

performance

performance

measuring

of

the

Q

that

dependency

to

the

tcbm

working

group

draft

and

for

working

with

John.

After

we

have

in

the

auto

working

group,

we

have

Auto

om.

This

will

be

presented

in

today's

meeting.

The

second

is

auto

new

transport.

A

A

B

Let's

say

the

current

document

into

two

documents:

additional

documents

next

slide:

please

I

just

wanted

to

recap

a

little

bit

what

we

had

this

customer

and

where

the

position

is

and

the

feedback

we

we

got

so

far

and

next

slide.

Please

we

have

a

couple

of

more

slides

and

we

got

also

feedback

from

HTTP

experts,

Martin

Thompson

Spence

at

Elkins,

Mark,

Nottingham

and

Martin

Duke,

and

we

more

or

less

put

it

in,

and

the

idea

to

split

the

document

into

support.

Parts

is

also

some

results

of

the

feedback.

B

We've

plot

next

slide.

Please

also

just

remember

so.

There

was

a

discussion

itf114

regarding

the

client

pool

until

long

full

mechanism.

We

saw

that

HTTP

one

dot

X,

which

is

related

on

a

strict

sequence

numbering

and

you

can,

as

you

can

see,

on

the

right

hand,

side.

This

makes

it

a

little

bit

inflexible

regarding

the

server

push

and

that

yes,

now

to

describe

the

push

promise

mechanism

that

says

in.

C

B

Separate

document,

so

we

need

to

split

a

little

bit.

The

idea

is

to

have

one

draft

that

is

talking

about

the

general

issues

and

then

anyway,

have

additional

draft

where

we've

describe

the

push

mechanism

and

I

have

also

slide

later

that

describes.

So

we

might

also

be

that

we

will

discuss

ourselves

or

put,

but

at

the

moment

this

is

out

of

scope.

We

are

not

focusing

so

much

on

this.

B

B

If

you

have

a

transport

the

queue

mechanism,

we

rely

on

strict

sequencing

of

the

number

and

strict

scheduling,

and

this

is

a

little

bit

inefficient

and

when

we're,

the

idea

is

that

the

server

can

also

push

multiple

in

information

so

that

we

are

get

advantage

of

the

features

that

HTTP,

2

and

HTTP

3

have.

That

is

a

basic

idea,

and

that

was

also

discussed

in

the

last

ITF

meeting.

B

Yeah,

so

discussion

must

go

on.

Can

you

move

on

please?

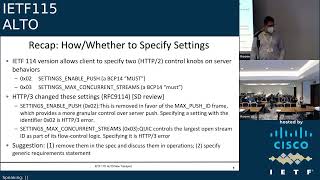

There

was

also

discussion

last

time

regarding

here,

the

HTTP,

2

control,

knobs

and

last

discussion

that

quick

or

HTTP

3

is

a

little

bit

different

and

the

idea

is

Yet

now

to

you

know

where

we

specify

or

discuss

the

these

operations

and

to

to

remove

them

and

yeah.

That

is

also

something

that

we

did.

This

discussed

so

far

and

I

think

the

idea

is

then

accepted.

B

The

style

guide

things

I

just

want

to

go

over

it.

You

can

put

this

slide.

I

have

not,

and

now

we

come

to

the

major

changes

and

the

ideas

that

we

have

and

where

we

need

also

the

feedback

from

this

group

regarding

the

idea

as

I

state,

so

the

major

structure

of

the

transport

document.

The

idea

is

to

change

this

to

split

the

current

document

into

separate

documents.

At

the

moment

the

idea

is

to

have

three

documents.

B

B

And

if

we

agree

here

in

this

group

at

some

of

the

part

of

the

first

document,

we

have

prepared-

let's

say

a

draft

version,

and

if

the

worker

group

accepted

that

we

will

move

these

sections

to

a

separate

draft

and

yeah.

And

let's

say

what

is

already

on

this

on

the

table

or

what

we

have

presented

so

far

and

already

uploaded

is

the

server

push

mechanism.

But

in

our

internal

discussion

regarding

the

move

of,

let's

say

because

of

the

pool

section

into

a

separate

document.

B

We

are

also

very

where

I

find

this

discussion

so

in

in

the

in

the

sense

we.

The

idea

is

then

to

have

multiple

documents,

one

describing,

let's

say

the

overall

mechanism

and

then

the

various

mechanisms

of

HTTP,

let's

say

I

get

or

a

mechanism

will

then

also

describe.

Let's

say

in

the

separate

document

and

the.

B

B

Here

you

can

see

it

here,

visualize

the

structure,

the

idea

that

we

had

we

had

in

the

document-

one

this,

let's

say

increment

Q

updates,

create,

read

Etc

then,

and

we

need

to

decide

whether

we

want

to

put

this

out

of

the

first

document

and

describes

the

prolongful

mechanism

of

zephyr

document.

This

is

something

that's

at

the

moment,

officially

included

in

the

current

transport

document.

We

need

to

decide

to

put

this

out.

B

Then

there

is

a

new

proposal

regarding

the

push

and

and

the

client

which

is

related

especially

to

our

hdb2

and

HTTP

3..

So

this

will

be

then

some

kind

of

version,

specific

document

and

at

the

moment

out

of

scope,

is

a

server

put,

but

that

could

be

also

an

document

for

yeah.

Let's

say

a

discussion

for

the

discussions,

then

okay

here

there's

a

short

record

collection

of

what

is

based

in

the

document.

B

One

document,

one

for

example,

you

have

the

definition

of

let's

say

the

transport

of

information

and

the

incremental

updates

are

described

there,

the

idle

server

as

a

master

of

the

updates.

We

have

this,

as

mentioned

before

the

CDR

operations.

We

are

related.

Let's

say

when

we're

using

this

document

to

a

strict

sequencing

and

and

only

the

auto

server

can

write

to

it

and

client

can

issue

command

sequency

or

in

parallel.

So

that

is

a

basic

stuff

that

we

have

discussed

so

far

and

yeah.

B

The

sequence

number

is,

let's

say

up

to

64

bits

as

described

here,

and

so

the

structure

is

a

pretty

similar

to

what

we

have

discussed

so

far.

Then,

let's

move

to

the

next

Slide

the

proposal

for

the

pull

document

and

Cloud

read,

updates

very

simple

design

and

then

only

the

get

mode

method

that

is

used

here

and

could

be

done.

It

could

be

used.

Also

for

caching

and

content

is

solution

in

that

scale

and

server

clients.

It.

B

B

Specify

attribute

transport

control

here,

but

this

more

lesson

also

transparent

to

the

also

design

next

slide,

please

so

the

server

push.

That

is

something

that

is

here

here

a

little

bit

new

or

different,

since

HTTP,

2

and

3

both

use

push

Province

mechanism,

and

this

was

a

main

advantage.

The

idea

is

to

put

here

a

separate

document,

and

this

would

also

be

done

from

the

transfer

perspective.

Then

also,

let's

say

one

additional

advantage,

and

the

idea

is

here

to

to

specify

this

mechanism,

which

was

also

in

an

early

stage,

defined

in.

E

B

B

So

I

don't

know

if

we

can

say

it

was

really

doing

the

same

same

thing.

Let's

do

the

same

thing

a

little

bit

differently.

So

the

push

promise

mechanism

they're

just

described

only

in

let's

say

in

this

document,

enveloped

for

two

and

three

and

let's

say

the

basic

document

gives

let's

say

more

or

less

a

general

overview

and

structure

of

of

all

the

concept.

Yeah,

that's

what

I

say

it

was

the

idea.

G

F

Yeah

I

I

would

I'm

strongly

disinclined

to

publish

two

different

solutions

to

this

problem:

okay

I,

if,

if

like,

unless,

unless

this

like

clearly

unless

one,

unless

they

address

different

use

cases

where,

like

you

know

use

case,

a

like

two-

is

much

better

because

of

these

certain

metrics

and

like

for

use

case

b

doc.

Three

is

much

better

and

like

that

would

have

to

be

pretty

strongly

motivated.

I

think

we

already

have

one

solution

to

this,

which

is

SSC

I.

G

Ssc

has

much

a

larger

overhead.

The

income,

actually

quite

a

complex

and

I,

could

2003

are

we

simpler,

I

think

essential.

Three

essentially

is

complete

replacement

of

SSE

using

a

much

more

modern

design.

Of

course,

if

you

really

push

SSC,

for

example,

and

and

really

for

example,

eventually

it's

really

built

up

like

you

said,

and

it

should

be

built

on

top

of

independent.

G

If

you

build

on

top

of

HTTP

three,

for

example,

then

actually

you

even

can

even

gain

performance

over

SSE,

because

your

fundamental

and

the

line,

supposedly

it's

a

single

using

essential,

total

serialization.

So

therefore

you

serialize

everything

and

about

here,

actually

you

can

even

have

concurrency.

Even

you

can

push

all

all

the

updates

concurrently.

So

therefore

you

can

get

latency

in

the

worst

case.

You

have

a

lot

a

lot

of

number

of

subscriptions

and

you

want

to

push

all

updates.

Docker

three

would

even

compete,

beat

the

performance

of

SSE

great.

F

All

right,

that's

what

I

thought

so

my

my

understanding

is

that

push

is

not

much

beloved

by

the

HTTP

community

and

maybe

not

supported

that.

Well,

so,

without

having

any

data

whatsoever,

I

I

would

be

inclined

towards

the

doctors

approach

rather

than

doc,

three

approach,

but

you

know,

if

you

guys,

have

the

data

at

like

what

like

three

better,

that's

great

I

I

would

actually

and

we're

on

the

private

space

of

like

server

updates

right

in

a

perfect

world.

F

We

would

have

either

doctor

I

mean

whether

you

want

to

combine

one

and

two

like

I'm,

not

gonna

like

if

you're

you

know,

I,

don't

I

haven't

looked

at

it

editorially.

If

that

works

or

not,

but

I

would

I

would

certainly

like

to

do

either

two

or

three

and

in

a

perfect

world.

Consider

like

obviously

an

SSC.

F

G

F

Two

and

Doc,

three

and

and

again

I

I'm

I,

don't

have

the

data,

but

from

what

I

know,

I

would

be

prejudiced

towards

doc.

Two

because

it

doesn't

use

push

and

then

like

as

whether

doc

one

is

merged

with

Doc.

Two

or

three

is

an

editorial

thing:

I,

don't

you

know

whichever

and

then

I

would

certainly

encourage

the

the

working

group

to

look

at

obsoleting,

SSC

and

I.

Don't

have

the

information

implementation

Wiki

like

in

front

of

me

right

now

on

how

like

how

installed

that

is

in

in

the

base?

F

F

Not

deployed

and

implemented

much

then,

like

all

the

more

reason

to

just

get

rid

of

it.

If

we

think

this

is

a

way

Superior

I

mean

this

is

not

a

such

a

widely

deployed

protocol

that

we

can't

make

really

sensible

revisions

to

it.

I

would

say,

but

that's

the

second

issue,

I

think

the

first

thing

is

to

down

select

of

all

this

stuff

figure

out

what

to

do

here

and

then

we

can

have

it

have

a

discussion

about

obsoleting

other

documents.

G

Okay,

so

how

do

proposal

we

proceed

so

we're

going

to

Real

Estate,

because

right

now,

by

the

way

very

quickly

at

high

level,

is

you

can

emulate

to

actually

get

ambulance

through

using

two

of

course,

then

assumption

is

you

should

there's

some

mechanism,

even

the

amulet

three

using

two?

Basically,

you

you,

you,

you

allow

the

client

to

essentially

pre-fetch,

essentially

put

a

large

number

of

pending

put

because

essentially

is

a

server

portal,

can

reduce

the

latency

even

below

a

single

one

round,

three

time

and

poor

you

really

conceptually.

G

If

you

don't

want

to

really

have

a

lot

of

pending

requests,

you

you

can,

it

can

have

a

large

number

of

pending

pull

requests

put

on

the

server.

So,

therefore,

you

put

a

load

on

a

server,

but

if

you

you

think

like,

for

example,

in

implementation,

we

think,

for

example,

overhead

on

a

server

side

may

not

be

a

major

problem.

We

can

essentially

ask

a

client

to

really

send

out

a

larger

number

of

pending

pull

requests

on

future

secret

numbers

to

emulate.

F

F

The

other

thing

I

will

say

about

two

versus

three:

is

that

if

we

pick

three,

then

I

think

we

have

to

keep

SSE,

because

that

we

need

an

HTTP

one

solution:

I'm

not

mistaken,

and

that's

okay

like

I

guess,

but

yeah

I

mean

I,

would

look

at

it

and,

like

I,

mean

other

people

in

the

work

whose

benefit

opinion.

Of

course,

I

I,

don't

I.

F

H

On

this

part,

I

think

what

is

what

will

be

really

useful

for

the

for

the

working

group

is

that

the

rational

about

the

I

would

say

the

the

need

for

these

three

functionalities

are

really

documented,

so

that

we

understand

what

our

the

scope

of

the

problem

and

then,

if

they

overlapping

to

justify

modif

and

motivate

why

we

need

a

further

solution,

or

rather

than

just

pick

one

of

them.

If.

D

H

Have

this

material

I

would

say

clearly

formulated

somehow

that

would

be

really

good

for

the

welcome

to

know

and

exactly

that,

this

can

be

included

in

the

current

draft.

You

have

without

proceeding

with

any

emerge

or

any

in

a

split

just

explain

this.

This

is

how

the

three

problems

we

are

trying

to

solve.

These

are

the

approaches

and

we

will

pick

or

select

all

of

them,

based

on

the

information

you

will

receive.

That

would

be

really

good

to

have

in

the

next

version

of

the

this

one.

D

E

B

H

Just

just

a

comment

about

the

I

would

say

more

logistical

aspects.

As

you

know,

we

are

out

of

school

you

from

this

milestone

for

this

one.

We

are

supposed

to

deliver

something

in

the

late

September,

I

I,

don't

know

if

you

have

a

plan

for

the

or

at

least

the

expected

scheduling

for

for

delivery,

I

would

say

the

pieces

we

we

have

so

far.

Do

you

expect

to

have

something

I

would

say

in

the

next

three

months:

that's

something

which

is

stable

or.

B

So

so

what

we

discussed

in

the

group

I

think

we

can

can

do

because

at

the

moment

it's

a

split

of

what

we

already

had

yeah

and

we

support

the

motivation

to

say.

Okay,

let's

describe

the

reasoning,

why

why

it

is

splitted

and

discusses

on

the

list.

I

think

that

could

be

a

growth,

good

progress

and

I

won't

think

that

there

need

to

be

so

much

change

regarding

the

in

also

the

the

content

or

how

it

works

in

the

draft.

I

think

that

is

pretty

much

this

described.

We

got

feedback

regarding.

B

H

I

I

I

And

for

the

new

revisions,

they

mentioned

it

to

resolve

a

the

three

open

discussions.

They

have

in

the

and

heard

that

at

least

about

the

discussion

on

mailing

list

pages

and

some

persecution

around

the

document

and

also

I

figurative

about

in

the

the

IIT

105

item.

So

they

have

popular

impacted,

the

Olympic

model

in

their

profile

implications

and

it

can

be

checked

and

they

have

to

message.

I

Yeah

from

this,

we

will

discuss

some

details

about

how

we

make

the

progress

on

the

three

other

three

of

them

discussion

topics

and

for

the

topic

one.

So

it's

about

how

to

handle

the

data

set

in

the

Auto

related

data

analysis

and

the

current

decision

is

to

use

the

identity

to

define

the

object

size.

This

can

allow

the

data

to

be

managed

in

a

modular

base

and

it

can

guarantee

some

backward

capability

compatibility

and

the

filter

documents

can

Define

the

new

tabs

by

adding

the

new

identities

in

some

extension

modules.

I

I

But

about

the

device

better

to

use

the

element

in

modules

working

group

has

a

different

opinion,

so

some

people

will

support

this

because

the

animating

modules

can

guarantee

the

compatibilities,

but

the

government

has

a

different

means

and

because

they

not

attacked,

have

the

equipment

changes.

So

the

element

in

module

system

cannot

be

overdone,

but

the

example

once

we

explain

so

if

they

use

their

animation

modules.

What

will

look

like

may

I

have

that

has

common.

H

Yeah

yeah

I

have

only

one

comment

about

the

the

what

you

have

mentioned

about

the

new

support

for

the

animated

model.

Did

this

actually

defeat

the

idea

of

having

an

Ayana

registry

for

the

protocol?

So

if

you

are

not

expecting

to

have

something

which

is

extensible

and

new

values

defined

in

the

future,

then

what

what

these

same

arguments

can

be

against

having

this

element

end

model,

so

I'm,

not

I,

don't

think

that's.

H

This

is

really

an

argument

to

take

to

take

into

account

the

key

one

is

that

if

you

want

to

have

something

which

is

really

I

would

say

open

to

new

implementation

without

having

to

say

heavy

changes

in

the

process.

The

animated

models

are

there

for

free

a

new

entry

once

it

will

be

added

to

the

previous

video

model

will

be

automatically

generated

and

there

is

no

cost

there,

but

the

other

one,

the

so

I

I

I'm,

not

sure

I

understand

the

the

the

other

argument.

I

Yeah

actually

I

agree

with

you.

So

in

the

example

we

show

the

how

they

are

limiting

modules

will

work

so

the

next

page

will

you

will

go

to

the

next

page,

so

you

can

say

once

a

experimental

notice

become

the

standard,

it

will

update

the

entire

modules,

but

because

the

basic

mother

depends

on

the

other

model,

we

don't

need

to

update

the

basic

model,

so

only

the

model

will

be

updated.

So

that's

a

I

think

that's

the

main

benefit.

I

And

that's

the

major

part.

We

have

a

lot

of

discussion

on

milliliters

so

to

achieve

the

server

to

server

communication,

we

identify

the

OM

may

need

to

have

the

following

three

part

of

the

configuration.

So

one

is

they

need

to

configure

how

the

server

to

be

discovered

by

another

server-

okay-

and

it's

probably

already

included

in

the

the

history

relations

and

we

have-

will

provide

the

new

grouping

of

the

auto

server

Discovery

grouping

and

they

can

have

the

different

kind

of

the

manners.

The

Safari

has

three

predefined

cases

for

this

respiratory.

I

And

the

the

second

part.

Actually,

we

also

need

to

configure

the

how

the

server

to

discover

another

server.

So

that

part

is

still

in

the

progress

and

we

have

the

kind

of

proposal

it

will

have

the

another

booking

and

this

grouping

will

include

the

contribution

about

some

parameters,

how

the

server

can

be

used

to

discover

another

so

like

for

the

reversing

ads,

and

you

configure

the

idea,

servers

and

yeah,

but

they.

I

I

I

Yeah

so

then,

so

this

will

also

invoke

the

start,

so

I

probably

like

the

immigration

parameters.

So

we

need

to

configure

how

the

server

to

connect

it

to

discover

servers.

So

that's

part

is

not

determine

whether

CP,

including

in

the

this

document,

because

they

it

has

a

multiple,

continuous

solutions

to

undo

this.

We

have

to

have

some

discussions

on

the

mailing

list

in

the

previous

ITF

meetings

and

we

have

some

individual

jobs

to

talk

about

this,

but

to

a

very

quick

summary.

So

this

can

be

the

three

kind

of

the

solution.

I

Yet

so,

actually,

in

the

next

talk

in

the

incentives,

we

will

have

some

discussions

about

more

details,

also

what

we

observed,

how

to

stop

the

subject

that,

in

this

talk

so

far,

the

the

very

observation

that

we

think

if

we

considered

using

the

First

Source,

it's

a

transfer

remote

to

using

the

auto

particle

to

do

the

set

of

some

multiplication.

So

that

can

be

the

simplest

approach

to

leverage

the

existing

Auto

Centers

and

to

support

this

so

OEM.

Can

it

tends

to

I've

got

so

far?

I

I

What

we

need

to

do

is

to

provide

the

basic,

unified

models

to

cover,

sell

the

common

application

status

and

the

economic

primary.

So

next

page

will

give

some

information

about

this,

but

from

our

practice

and

determine

so

consider.

The

OEM

and

there'll

be

some

slightly

different

from

the

consider

the

implantation,

the

department

from

the

information

Department

strategy.

I

Well,

they

need

to

progress,

is

how

to

handle

the

heterogeneous

format

of

the

data

sources

and

how

to

process

the

detected

from

the

difficulties,

but

for

the

OEM

actually

more

interest

in

how

to

handle

the

Heritage

mechanism

to

assess

this

result

and

how,

to

correctly

configure

the

the

calling

flow

for

the

infamous

resource

creation.

So

in

the

oriented

model

to

separate

the

information

of

creation,

to

scrape

up

part

of

the

data

model.

The

message

layers,

algorithm

layer

and

the

data

soft

layers

and

for

the

next

page,

we'll

be

a

current

example.

I

I

For

example,

the

result

ID

the

rate

of

type,

the

URI

probabilities

and

the

basic

algorithm

should

be

used

to

create

the

information

result

and

for

the

algorithm

need

to

configure

the

status.

You

will

be

used

to

generate

this

recovery

resource

and

they

also

have

some

implantation.

Investigative

parameters

like

the

network

map

may

need

to

configure

the

theatrical

analysis

and

for

the

cost

knife

you

need

to

configure

the

the

Precision

to

compute

the

cost

and

for

the

data

sources.

I

It

will

include

some

parameters

about

like

the

resource

bumper

codes

and

the

update

like

system

and

also

the

major

part

they

learned

from

our

practices,

the

complex

resolution,

so

the

negative

excuse

this

yeah,

so

that

will

be

a

major

license.

We

learned

from

the

real

implantation,

so

the

different

data

cells

may

have

to

accomplished.

F

H

F

So

right

so,

but

there's

no

server

to

server

discovery.

There's

no

server-to-server

communication

spec

in

Alto,

like

client,

discovery

of

server

versus

in

scope.

So

2.1,

that's

fine,

I'm

concerned

that

the

other

two

are

something

that

is

not

Alto,

that

we're

trying

to

configure

in

this

document

which

to

me

is

out

of

scope,

do

I,

misunderstand

What's,

Happening

Here,.

F

F

A

I

A

A

J

K

H

L

So

hello,

everybody

I

will

present

the

the

update

of

the

deployment

of

all

the

integration

of

Alto

in

in

telefonica,

so

in

such

a

way

that

we

can

expose

the

telephonical

network

information

to

the

telephonica

CDN.

So

it's

a

single

domain

environment

signal

administrative

domain

environment,

but

we

recommend

across

the

presentation

that

also

has

some

influence

in

in

other

domains

in

I

mean

for

users

in

in

other

domains

in

in

other

operators

at

the

end.

So

this

is

an

update

from

last

ITF.

So

next

slide,

please

just

a

quick

reminder.

L

So

the

objective

of

all

of

this,

for

all

of

these

work

is

to

improve

the

little

bit

of

traffic

from

the

telephonica

CDN

in

the

tunnel

of

the

network,

so

such

a

way

that

the

decisions

that

the

telephonica

CDN

the

logic

of

the

CDN

could

take

could

consider

also

the

network

information,

the

topological

information.

By

now

we

are

playing

with

the

number

of

hops.

The

idea

is

to

include

more

power

for

more

Rich

metrics

in

the

in

the

future.

L

That

could

be

maybe

the

the

occupancy

of

the

links

or

the

latency,

and

so

by

now

it's

just

simply

the

the

number

of

hops

or

the

igp

metric

you

wish,

so

the

project

was

already

presented

in

last

ITF

or

in

in

Alto

for

sure,

but

also

in

in

media

operations,

and

so

I

will

provide

an

update

here

next,

please

well.

Let

me

also

recommend

that

this

was

already

presented

this

in

this

itf2

media

operations

and

on

Monday.

So

this

is

just

I

know

that

you

are

very

familiar

with

that.

L

So

what

we

are

playing

the

pieces

that

we

are

playing

is

the

network

map,

which

is

essentially

a

groups,

the

different

prefixes

representing

them

points.

In

this

case

the

endpoints

will

be

on

one

hand

the

prefixes

allocated

for

the

end

users

for

the

consumers

of

the

streaming

content.

On

the

other

hand,

the

other

endpoints,

let's

say,

will

be

the

caches.

The

IP

addresses

of

the

caches

for

delivering

the

the

content.

L

L

Just

to

remark,

the

regular

map

is

obtained

through

vgp,

so

we

establish

a

session,

a

number

of

sessions

of

bgp

sessions

with

roof,

reflectors

in

charge

of

exposure

or

the

advertising,

the

preferences

of

the

end

users

and,

on

the

other

hand,

for

the

cost

map

we

established

bgpls

sessions

with

different

root

reflectors,

so

in

a

manner

that

we

can

build

the

topological

relationship

between

the

nodes

and

connecting

both

endpoints

in

each

side.

Okay.

So

next,

please

so

this

this

slides

summarize

the

process

is

follow.

L

Last

ITF

I

presented

the

the

initial

part,

so

we

started

playing

with

a

toy

environment

in

the

in

the

lab.

Just

for

validating

the

concept,

then

we

moved

to

the

pre-production

lab

of

one

of

the

operations

of

telefonica.

So

now,

I

started

playing

with

more

realistic

environments,

different

vendors

and

and

understandings

of

how

the

the

issues

of

that

the

real

architecture

could

bring

into

the

deployment.

For

instance,

in

the

lab

we

were

playing

simply

with

ospf

in

the

preproduction

lab.

L

L

So

the

time

in

in

the

Captivity

114

and

90f155,

we

essentially

prepare

all

the

environments,

so

they

plug

in

Alto

connect

the

financial

reflectors

doing

the

the

Field

Works

of

server

installation,

all

the

security

aspects

for

hardening

the

deployment

in

a

real

Network,

so

trying

to

be

sure

that

the

flows

between

the

road,

reflectors

and

Delta

server

goes

I

mean

where

they

should

go,

etc,

etc.

So

what

we

are

we

I

will

comment

is

the

result

of

this

POC?

Is

this

pilot?

So

it's

just

a

work

in

progress,

but

I

will

provide

you.

L

We

have

the

different

levels,

aggregation,

Regional

and

so

on

so

far,

so

we

have

a

restriction

between

the

connections

with

a

from

hierarchical,

Level,

2

to

hierarchical

level.

Three,

the

problem

that

we

have

is

for

a

specific

vendor.

We

have

an

issue

with

the

software

and

we

cannot

include

the

proper

information

in

ospf,

so

a

hierarchical

level,

two

with

hierarchical

level,

three

is

is

connected

through

ospf

in

this

case,

so

in

not

in

all

the

footprint

of

this

network,

we

we

have

solved

yet

this

this

issue

of

being

able

to

retrieve

the

information

later

on.

L

With

bgpls,

so

there

is

a

gap

in

this

respect,

so

we

have

a

small

area,

a

small

region

of

the

of

the

country

being

solved,

but

not

for

the

general

country.

So

this

is

known

in

advance

and

well.

This

will

take

the

time

to

be

solved

because

we

need

to

wait

to

the

news

over

release

and

so

on

so

far

next,

please

so

good

news

for

bright

news

about

the

the

deployment.

So

we

connected

the

ultra

server

with

the

BJP

speaker

to

the

Rock

reflectors

and

we

start

to

retrieve

a

number

of

summarized

iib.

L

Others

ranges

so

more

than

16

000

of

them,

and

these

ranges

corresponds

to

different

kind

of

users,

fixed

users,

mobile

users

and

Enterprise

users.

This

is

this

is

good,

because

we

can

then

start

differentiating

the

kind

of

flows

that

we

can

deliver

from

the

CDN

to

the

different

users

at

the

end,

according

to

the

type

of

user

that

we

could,

the

user

that

is

requesting

the

content.

L

Those

IP

ranges

are

both

internal

and

external,

so

the

majority

of

them

for

sure

are

internal,

but

there

are

also

some

external.

What

are

those

external

IP?

Prefixes?

Are

those

corresponding

to

the

National

interconnections

who

to

so

the

ones

that

we

are

getting

for

the

pitting

points?

Why

the

national

not

international?

Well,

all

the

international

connectivity

in

telephonica

is

handled

by

a

specific

career

from

the

entire

one

carrier

for

the

telephonica

group.

So

the

only

nodes

that

deal

with

interconnection

in

the

in

this

operation

are

the

ones

for

the

national

interconnection

why

this

is

relevant.

L

This

is

relevant

because

we

also

can

improve

the

delivery

of

towards

the

users

that

are

coming

from

other

operators.

They

can

note

that

the

CDN

is

delivering

for

live

streaming

is

delivering

Ott

traffic

right,

so

Sports

and

and

this

kind

of

things.

So

it's

also

important

for

us

to

have

to

understand

what

could

be

the

better

cash

to

serve

external

users

so

trying

to

identify.

Also

the

the

proper

interconnection

point.

L

L

Pretty

well

so

know

that

I

commented

before

that

we

cannot

build

all

the

cosmap,

but

we

we

retrieve

the

information,

so

we

don't

expect

a

higher

load

because

of

the

fact

that

we

could

resolve

that

point

for

the

cost

map,

so

the

the

load

should

be

the

the

one

that

we

have

now

more

or

less,

and

this

is

not

important

next

slide.

Please.

H

L

Just

wait

for

that:

let's

wait

for

that.

So

next

please!

This

is

just

an

example

of

the

information

retrieve,

so

you

can

see

there.

The

actual

pids

without

the

actual

IP

address

ranges

from

telephonica

and

also

the

course

map

with

the

relationship

of

the

of

the

igb

metrics

among

them.

Interesting

to

note,

I

will

just

highlight

one

point

that

I

will

comment

at

the

end.

You

see

there

in

the

apids

on

asterisks,

and

this

is

because

of

the

fact

that

the

realizing

I

mean

running

the

POC.

L

We

realized

that

this

information,

the

identifier

of

the

PID,

is

sensible.

So

this

is

why

somehow

we

could

hear

this

kind

of

simple

obfuscation,

but

I

will

elaborate

a

little

bit

more

at

the

end

about

this.

Has

a

potential

topic

to

address

as

well,

but

that

were

the

good

news

they're,

not

so

good

news,

so

that

the

things

that

we

need

to

to

get

fixed

is

there

is

no

information

of

Ip

ranges

about

the

five

percent

of

the

pops

of

the

political

on

present.

L

So

we

need

to

analyze

what

particularities

are

on

these

Pops

to

to

understand

why

they

are

not

advertising

the

the

information,

so

another

IP

Ranger

seems

not

to

be

retrieved,

so

we

have

a

good

number

of

them,

but

there

are

some

missions,

so

we

need

to

understand

what

is

happening

with

them.

Probably,

we

need

to

connect

some

to

some

further

or

reflector

to

understand

to

look

for,

for

where

these

preferences

are

being

advertised.

L

Only

27

pids

are

both

in

the

network

map

and

in

the

course

map.

This

could

be

the

result

of

the

known

issue

with

the

a

decoration

between

hierarchical

level,

two

and

three,

but

we

need

to

assess

that

we

are

not

just

zero

if

this

is

the

the

cost

and

finally,

the

pids

for

the

cdan

nodes

are

not

yet

captured,

and

this

could

be

just

a

matter

of

connecting

again

to

a

different

group.

Reflector.

L

The

the

this

operation

in

telefonica

has

a

number

of

reflectors

for

different

purposes,

so

it

could

be

the

case

that

we

are

missing

some

relevant

connection

bgp

for

this.

So

next

steps

not

taking

much

more

time

for

the

pilot,

so

we

need

to

understand

how

to

consume

the

health

information.

Okay,

we

now

have

the

information

about

how

we

should

consume

it.

So

how

often

we

can

retribute

that

map,

and

so

so

we

need

to

continue

analyzing

the

information

received

to

understand

the

Dynamics

in

a

pollution

Network.

So

there

will

be

changes.

L

Product

for

logins

for

a

log

registering

for

all

the

events

that

we

could

Monitor

and

so

on

so

far,

and

also

to

work

on

how

to

automatically

load

upload

the

topology.

So,

for

instance,

one

of

the

questions

that

are

rising

to

us

is

what

happens

if

the

in

some

point

in

time

the

topology

that

this

upload

is

quite

different

from

the

existing

topology,

which

what

should

we

do

to

consider

the

new

one

to

consider

the

old

one

to

discard

the

new

one.

L

So

these

kind

of

things

is

something

that

we

need

to

learn

yet

and

and

provide,

and

then

finally,

for

alto

media

operations

working

group,

the

idea

would

be

to

document

the

pilot.

So,

and

probably

this

could

be

also

interesting

for

for

mobs

to

identify

the

gaps,

issues

and

the

improvedness

in

the

solutions

that

could

be

worth

it

to

work

and

and

disrespective.

L

I

would

like

to

emphasize

the

point

of

the

security,

the

obfuscation

of

the

pids

and

so

probably

all

their

security

capabilities

in

in

the

ultra

environment

and

yeah

for

sure

to

provide

another

update

for

next

ITF

and

hopefully

with

all

of

these

solved.

And

let's

see

if

we

are

able

to

do

so,

so

there

is

a

question.

Thank.

D

You

Luis

for

your

presentation.

My

name

is

Ayo

misuse

I'm

from

physics,

research

of

Europe

I

found

this

presentation

very

very

interesting.

Can

you

go

back

please

to

the

hierarchical

scheme

that

you

showed

for

different

layers,

so

I'm

very

interested

in

this

topological

IP

transport

hierarchy?

My

question

is

that

our

security,

as

per

considering

this

hierarchy

security

aspect,

can

be

integrated

in

this

hierarchy.

L

Yes,

I

mean

well,

this

topology

is

how

internal

to

The

Domain,

so

how

is

is

hardened

is

secure.

What

we

would

like

to

prevent

is

to

to

reveal

sensitive

information,

as,

for

instance,

I

commented

in

the

pids.

The

pids

provide

an

identifier,

but

it's

quite

simple

from

that

identifier

to

derive

the

look

back

of

the

of

the

routers.

So

we

need

to

know

how

to

hide

that

information

in

in

when

the

application

is

internal.

L

It's

not

a

major

problem,

but

with

the

with

the

idea

of

exposing

this

information

to

external

parties,

it

could

be

for

sure,

sensible

information.

So

that

would

be

one

aspect

that

will

be

some

other

aspects

to

cover

in

security,

so

yeah

the

priority

is

a

matter

to

start

working

on

and

yeah

and

trying

to

identify

points

of

yeah

for

making

Alto

more

more

secure

and

more

robust

in.

D

L

L

A

E

A

E

J

Yeah

Jerry

Russia

from

Qualcomm

yeah,

so

I'm

I'm

gonna,

be

this

is

a

collaborative

work

and

Kai

Jensen

and

myself

are

going

to

be

doing

this

presentation

I'm

just

going

to

start

off

we're

going

to

be

talking

about

a

few

deployments

that

are

being

taking

place

on

alto

and

some

of

the

science

networks

in

Europe

and

the

US.

So

next,

thanks

yeah

I'll

skip

this

one,

so

yeah

the

context.

This

is

two

things:

one

is

the

open,

Alto

The

Source

Source

base.

J

That's

been

developed

as

part

of

the

open,

album

project.

It's

an

open

source

implementation

and

platform

with

an

MIT

license

it's

available

on

GitHub.

The

other

development

is.

The

openalto.org

domain

is

a

running

instance

of

the

deployment

of

open

Alto,

providing

Network

information

in

the

context

of

science

networks

like

I

mentioned,

such

as

LIC

one

LIC,

OPN,

CERN,

esnet

and

NRP,

and

so

on.

We'll

talk

about

these

deployments

in

in

this

conversation

and

yeah

it's

available

in

under

this

domain.

J

Next

yeah.

This

is

just

a

snapshot

of

one

one

instantiation

here

of

a

network

that

we

are

when

we're

deploying

open

Alto.

This

illustrates

different

regions

of

where

the

Network's

running

Asia,

Americas

and

Europe

This

is

in

particularly

the

LHC

one

network,

that's

connecting

the

experiments

at

CERN

in

Geneva

and

can

help

basically

dedicated

to

move

this

massive

data

sets

we're

talking

about.

You

know,

petabytes

of

information

that

come

from

the

from

the

LHC

collider

in

Geneva

have

experience.

J

You

know

this

is

foreign

Engineering

in

this

massive

data

sets

that

then

need

to

be

transferred

to

scientists

in

other

regions

of

the

world,

whether

it's

in

the

US

or

Asia

or

anywhere

so

yeah

just

to

get

an

idea

next

yeah.

This

is

the

specific

architecture.

That's

been

running

on

this

science

networks,

specifically

in

the

CERN

network

at

the

bottom,

so

this

sort

of

layering

a

layering

View

at

the

bottom.

J

Then

we

have

the

visibility

component,

which

is

implemented

using

open,

Alto,

that's

deployed

and

sort

of

monitoring

the

network

and

providing

then

the

maps.

Basically,

these

Maps

then

are

fed

into

the

next

layer,

which

is

the

what's

what's

called,

that's

called

TCN,

plus

FTS,

and

then

the

dashboard

control

Network

and

the

the

file

transfer

system.

Fts

is

science

networks,

terminology

or

technology

which

basically

schedules

data

data

transfers.

J

Basically,

it's

pulling

information

from

the

control

plane,

the

adjacent

metrics

subnets,

the

pgp

input

from,

and

then

generation

of

the

FIP

and

so

from

the

here

then

DDF

reconstructing

you

know,

topology

the

paths

and

so

on

and

creating

the

network

map

and

the

cost

map

another

path.

Another

source

of

information

is

that

the

data

plane

control,

which

is

control

information,

but

we

pull

it

from

the

devices

from

the

data

plane.

So

it's

specifically

looking

glass.

J

J

In

the

actual

you

know,

sampling,

packets,

so

Technologies

like

netflow

as

flow

personal

icmp,

and

this

is

an

integration

with

the

with

grading

graph,

which

is

running

one

of

the

deployments,

so

actually

the

development

of

of

a

Plugin

or

an

Asian,

an

open,

auto

plugin

that

actually

gets

this

data

from

G2

and

G2

actually

gets

it

from

the

net

flow,

S4,

icmp

and

so

on.

Then

this

is

our

component.

J

K

Thank

you,

okay,

so

here

is

basically

an

overview

of

the

art

deployment.

So

right

now

we

have

like

three

deployments

so

the

first

two

deployments,

mostly

based

on

our

server

deployment

efforts.

So

the

first

one

is

to

deploy

the

auto

server

inside

CERN

and

right

now

it

is

already

up

and

running

in

the

server

inside

the

server

internal

Network

which

but

which,

unfortunately,

is

not

accessible

from

the

internet.

But

then

we

also

have

a

public

mirror

hosted

at

auto.org.

K

It

has

Open

Access

in

the

internet,

and

but

we

also

have

another

puppy

mayor

hosted

at

openauto.org

and

we

all

have

implemented

RFC,

7285

and

also

RC

7240.

Basically,

the

unified

property

map

documents

and

the

less

deployment

is

some

update

from

the

client

side,

because

we're

not

only

developing

other

servers

for

the

LIC

one

use

case.

We

are

also

developing

some

applications

that

can

average

the

visibility

information

so

next

time,

please.

K

And

in

a

few

slides

in

the

next

few

slides,

we

basically

give

some

details

about

all

these

deployment

efforts

and

the

first

one

is

certain

deployment

update

and

what

effect

basically

fetch

information

from

the

quick

database,

which

provides

the

map

the

IP

addresses,

which

we

are

interested

in

of

because

in

the

air

HTML

Network,

it

does

not

actually

use

every

IP

address

in

the

whole

internet.

So

it

only