
From YouTube: IETF115-BIER-20221110-1530

Description

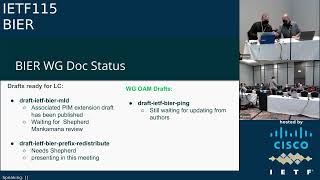

BIER meeting session at IETF115

2022/11/10 1530

https://datatracker.ietf.org/meeting/115/proceedings/

A

You just faded out. Man, fell off the chair. Okay, yeah. I need a button, a release button: if I pass out, I drop the button. You know, there are already questions about remote control of the slides. I mean, the PDFs are up there. I know there are two different buttons, allowing either they control it or you control it.

A

I want to add a note about the queue. The queue is a composite of people online and in the room, and to manage the queue properly, everyone should be using the tool. So even if you're in the room, get on Meetecho, and as you come to the mic, hit the queue button, so we can manage the queue order based on who hit it first, both in the room and online.

B

Much simpler here in the room: there is a note-taking tool, right? Did you manage to [unclear]? [Unclear] doesn't have Wi-Fi, so that sucks, so we only have one laptop with the material. The other one only has the queue. All right. How do we do note-taking now in this degraded environment? Old-fashioned sucks; give it another shot? [Unclear.]

B

All right, there's one which is completely open. Yeah, legacy. Nobody uses legacy except me and [unclear], so that's why it works. All right, let's see what [unclear] gets for the note-taking. So, let's start. I'll be flipping the slides, and then we'll see how the stuff works. New tooling, fine. The usual Note Well [unclear]. Please wear a mask.

B

We're not having any technical issues; otherwise you know where to report them. Good. So, the agenda: a bunch of updates on the previous stuff; a very interesting presentation on an efficient P4-based BIER implementation on the Tofino, which actually looks fascinating; and then Toerless is showing something towards recursive bitmasks, which looked like a pretty [unclear] proposal. We'll see what comes out of it. Then probably an FRR update and a TE extension by Sandy, if we find time. Okay, so what's the status? The TE stuff is an RFC. Congratulations! Yeah!

B

Oh, thanks to Jeffrey for actually herding that one along for quite a long time. All right, now comes the public castigation. The OSPFv3 stuff is not being taken care of. I was on people twice, I was promised updates, and the updates were not delivered. One of the co-authors is sitting here and looks properly bedraggled, right? So, gentlemen, I give you a last warning: two weeks, and otherwise I start to go look for another shepherd. Oh, I'm shepherding, but as far as I can see the stuff is not addressed. So I give you two weeks for a version that takes care of the stuff. Otherwise, you know, you lose the opportunity for eternal fame, and I will go and drag in another author, make him editor, and basically start over to get the stuff delivered. So once again: last call, but in a different sense. A bunch of things are moving forward. Nothing in particular here; a bunch are waiting for a shepherd.

I

The thing is about [unclear]; I'll make it very snappy. The problem we are trying to solve is that some providers are trying to go... next slide, please. Actually, some providers are trying to go to the next technology, which is BIER for multicast. They want to replace some parts of their network, so they buy brand-new hardware that is BIER-capable, and they want to replace one portion of the network, as an example. In this case the network is running multicast LDP (mLDP), and a small island within that network is going to get upgraded to BIER. With this draft, basically, what they can do is... I wouldn't use the term "stitch". They can signal mLDP through the BIER core, from one mLDP island to another mLDP island that is connected through it.

I

So basically, the way it's done is like PIM signaling. All that happens is that when mLDP FECs arrive at a BIER edge router, we grab that mLDP FEC and the mLDP signaling, if you will, use RFC 7060, and shoot it from the... let me get my terminology right: from the downstream BBR, which is the ingress BIER router, I guess, to the upstream BBR, and the upstream BBR keeps track of all these downstream routers.

G

All right, this is an update on the BGP extension for BIER signaling, on behalf of the co-authors. Next slide, please. There's a bit of history on this draft. It had passed working group last call before, and I was the shepherd; I did the review and had some comments. Then the main authors lost steam, so they couldn't address the comments.

G

If you had known that BFR2 can be used, you could have just tunneled to BFR2 only. With the new approach, when the BFRs re-advertise the BIER prefixes, they update the BIER information and also update a next-hop sub-TLV in the BIER TLV to themselves. This has two purposes.

G

The main purpose is this: in this example, when BFR2 re-advertises the BFER1/2/3 prefixes, it updates the next hop to itself. So now BFR1 sees that the next hop is BFR2, and it has the encapsulation information associated with BFR2. It therefore knows that BFER1, 2 and 3 can all be reached via BFR2. That handles the non-BFR routers very well. Another side benefit concerns all those BIER prefixes.

G

So here BFER1/2/3 originate NLRIs for their BIER prefixes, and it is assumed that everyone just re-advertises them everywhere. But there may be situations where you do not want to re-advertise the BIER prefixes everywhere. For example, BFR2 can say: I'm not going to re-advertise those BFER1/2/3 prefixes. Instead, BFR2 just advertises its own BIER prefix, but with a sub-TLV that is defined in the other draft, on BIER prefix re-advertisement (excuse me). In that new sub-TLV, you just list all the BFR-IDs reachable via this BFR. That's what I was talking about on that slide. Later, let's go back to that slide... You know, yeah. So, a summary of all the changes in this revision: we extended the scope for more general applicability.

B

Questions? Nothing on the queue. In my opinion, this needs to be bounced off IDR as well; it's a very significant change of procedure. And because you carry the stuff transitively through, I would like to have those guys look at it in terms of atomic attributes, aggregates, blah blah, right? You're triggering a couple of procedures. So I will fire something towards IDR, and then we'll see whether anything pops up on the list, and then a final working group last call again. And a shepherd, please, if anyone volunteers to do that stuff.

G

Just a quick review: we have three IGP domains in this topology. Even though they are running three different IGP instances, the BIER sub-domain itself is actually just a single one, covering all those domains. The question is: how do we redistribute the BIER information between them? That's one question.

G

The approach there is to have the border routers advertise their own BIER prefixes with that same proxy range sub-TLV I mentioned earlier, listing the external BFR-IDs that are reachable via them. That's the basic idea of how to handle the BGP-LU case, and that's basically what this draft is about. It's been there for quite some time. I will not get into the details.
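The talk does not show how the proxy range sub-TLV is encoded. Purely as an illustration, and assuming (this is not taken from the draft) that reachable BFR-IDs are carried as inclusive ranges, a border router could collapse the external BFR-IDs it proxies for into ranges as follows. `to_ranges` is a hypothetical helper name.

```python
from typing import Iterable, List, Tuple

def to_ranges(bfr_ids: Iterable[int]) -> List[Tuple[int, int]]:
    """Collapse BFR-IDs into sorted, inclusive (start, end) ranges.

    Hypothetical helper: assumes a range-based encoding, which the talk
    does not specify.
    """
    ranges: List[Tuple[int, int]] = []
    for bfr_id in sorted(set(bfr_ids)):
        if ranges and bfr_id == ranges[-1][1] + 1:
            # Consecutive ID: extend the current range.
            ranges[-1] = (ranges[-1][0], bfr_id)
        else:
            # Gap (or first ID): start a new range.
            ranges.append((bfr_id, bfr_id))
    return ranges

# Hypothetical: BFR-IDs 1-3 and 10 sit in another IGP domain behind a border router.
print(to_ranges([3, 1, 2, 10]))  # [(1, 3), (10, 10)]
```

A range form only pays off when the external BFR-IDs are assigned contiguously per domain. Otherwise an explicit list or a bitmask could be more compact.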

H

Okay, can I start? Okay, perfect. Hello, everyone. My name is Steffen. I'm a PhD student at the University of Tübingen, and today I'm going to talk about an efficient P4-based BIER implementation on Tofino. This is joint work with my colleagues Daniel Merling and Michael Menth. Next slide, please. Okay, I'm going to start my talk with a short recap.

H

Next slide, please. Okay. At IETF 108 we already presented the first implementation of BIER and BIER-FRR in P4 for the Intel Tofino. There we used an iterative processing approach in which one next hop was served per iteration. We receive a BIER packet in the first pipeline iteration and forward it to the first next hop of the BIER packet. Then we clone the packet and recirculate it; in the second iteration the second next hop is served, and so on. Next slide, please.

H

This approach has one huge drawback: we make heavy use of recirculation, and recirculation consumes capacity on the switch. For example, when we receive 100 Gbit/s of multicast traffic with five next hops, this results in 400 Gbit/s of recirculation traffic that needs to be processed on the switch. Look at the chart on the left side: we receive 100 Gbit/s of traffic, have only a single recirculation port, and want to send the packet to three next hops. This is the green bar.

H

Then we only achieve around 40 Gbit/s, because the pipeline is overloaded by recirculation, so we have heavy packet loss. We were able to solve this issue by adding dedicated recirculation ports to increase the recirculation capacity. With three recirculation ports, on the right side of the plot, we are able to serve up to four next hops at a line rate of 100 Gbit/s. However, these dedicated recirculation ports are physical ports of the device, so they cannot be used for any traffic other than recirculated BIER traffic. Next slide, please.
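The recirculation figures follow from a simple model. If each pipeline pass serves one next hop, every next hop after the first costs one recirculated copy of the full traffic. Under that model, 400 Gbit/s of recirculation at 100 Gbit/s input corresponds to five next hops. The sketch below works through the arithmetic. It assumes 100 Gbit/s recirculation ports and ignores cloning and header overhead, and the function names are illustrative.

```python
import math

def recirculation_load_gbps(rate_gbps: float, next_hops: int) -> float:
    """Recirculated traffic when each pipeline pass serves a single next hop.

    The first pass serves one next hop; each additional next hop needs one
    more pass, i.e. one recirculated copy of the full input rate.
    """
    return rate_gbps * max(next_hops - 1, 0)

def recirculation_ports_needed(rate_gbps: float, next_hops: int,
                               port_gbps: float = 100.0) -> int:
    """Dedicated recirculation ports needed to sustain line rate (simplified model)."""
    return math.ceil(recirculation_load_gbps(rate_gbps, next_hops) / port_gbps)

print(recirculation_load_gbps(100, 5))     # 400
print(recirculation_ports_needed(100, 4))  # 3
```

The model reproduces "three recirculation ports for up to four next hops at 100 Gbit/s". It does not try to predict the roughly 40 Gbit/s measured with a single port, which depends on how the Tofino drops traffic under overload.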

H

Well, this idea has some challenges in P4 and on the Intel Tofino. With BIER bitstrings of at least 256 bits, it's quite difficult to map the bitstring to the packet's set of next hops in a single table lookup when we do not have specialized hardware, as when we just have an Intel Tofino. A second challenge is that we only have a limited number of configurable static multicast groups.

H

So we cannot simply configure every possible combination of next hops as a multicast group, because there are just too many. So the question is now: how can we do this more efficiently? Next slide, please. Just as a very small recap: P4 is a high-level programming language for describing data planes, and a compiler maps the P4 program onto the programmable pipelines of targets, in our case the Intel Tofino. Next slide, please.

H

So our approach for a more efficient BIER implementation is the following. We have a switch with a certain number of ports, for example 32, and we divide all ports of that switch into so-called configured port clusters. For example, the first 10 ports are the first configured port cluster, here with the yellow border; another 10 ports are the second, here with the blue border; and the remaining 12 ports are another configured port cluster.

H

These configured port clusters can overlap, so they do not have to be disjoint, and of course the ports within a configured port cluster do not have to be physically next to each other; that's just for simplicity. All configured port clusters together have to cover all ports of the switch. Now we want to determine which configured port clusters require a packet copy. Say we get a BIER packet that, for example, should be sent through the first port of the switch and through the last one.

H

Then we need the first configured port cluster and the third configured port cluster. This may again be done iteratively, but only with a few iterations, like one to four, and not 1 to 32 as in our previous implementation. When we know that, for example, C1 needs a packet copy, we can use a suitable static multicast group to replicate the packet to all required next hops within this configured port cluster, and only towards the required next hops.

H

With this approach we need at most K-1 recirculations for K configured port clusters. For example, with a 32-port switch and three configured port clusters of size 11, 11 and 10, we need at most two recirculations, independent of the number of next hops, whereas in our last implementation this was the number of next hops minus one. So this is a drastic reduction in the number of recirculations. Next slide.
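To make the K-1 bound concrete, here is a minimal sketch that compares the recirculation count of the earlier one-next-hop-per-pass approach with the cluster-based approach. It assumes disjoint clusters and a set of required egress ports that is already known. With overlapping clusters, the CF-BM matching described in the talk would be needed instead of this simple membership test. All names are illustrative.

```python
from typing import Dict, List, Set

def clusters_needing_copy(required_ports: Set[int],
                          clusters: Dict[str, Set[int]]) -> List[str]:
    """Names of (disjoint) port clusters that contain at least one required port."""
    return [name for name, ports in clusters.items() if ports & required_ports]

def recirculations(required_ports: Set[int], clusters: Dict[str, Set[int]]) -> int:
    """Cluster-based approach: one pass per cluster, so every pass after the first recirculates."""
    return max(len(clusters_needing_copy(required_ports, clusters)) - 1, 0)

def recirculations_per_next_hop(required_ports: Set[int]) -> int:
    """Previous approach: one pass per next hop (egress port)."""
    return max(len(required_ports) - 1, 0)

# 32-port switch split into three disjoint clusters of size 11, 11 and 10, as in the talk.
clusters = {
    "C1": set(range(1, 12)),   # ports 1-11
    "C2": set(range(12, 23)),  # ports 12-22
    "C3": set(range(23, 33)),  # ports 23-32
}
required = {1, 2, 5, 30, 31}
print(clusters_needing_copy(required, clusters))  # ['C1', 'C3']
print(recirculations(required, clusters))         # 1
print(recirculations_per_next_hop(required))      # 4
```

Even if all 32 ports are required, the cluster-based count stays at K-1 = 2, whereas the per-next-hop count grows to 31.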

H

And the question is now: how can we determine which configured port clusters need a packet copy? To that end, we look at all combinations of these configured port clusters, here called S_i. In our example with three configured port clusters, we have seven combinations: each single configured port cluster, all combinations of two, and the combination of all three. Then we look at the so-called combined F-BM (CF-BM), which indicates all BFERs that are reachable through the subset.

H

All ports of a configured port cluster together build the F-BM of that cluster, and for a subset of configured port clusters we just bitwise-OR the individual F-BMs of those clusters. Now we receive a BIER bitstring, shown here in the example, with BFERs that are reachable through the first configured port cluster (in orange) and, for example, through the second configured port cluster (in blue). Then we apply a ternary match.

H

The ternary match operation is simply a bitwise AND of the bitstring with the complement of the combined F-BM, and we define a match when the result is zero. What does this mean? It means that the complement of the CF-BM has a zero at every bit position where the BIER bitstring has an activated bit, so the combined F-BM has a one at every bit position where the bitstring has an activated bit. This simply means that the CF-BM serves all BFERs of the BIER packet.
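The ternary match can be emulated in software to check the logic. The sketch below models F-BMs and bitstrings as Python integers, where bit i set means BFR-ID i+1. It tests `bitstring & ~cf_bm == 0` and searches the cluster subsets smallest-first. The smallest-first order is an assumption that stands in for however the real match table prioritizes its entries, which the talk does not specify.

```python
from itertools import combinations
from typing import Dict, Optional, Tuple

def covers(bitstring: int, cf_bm: int) -> bool:
    """Match condition from the talk: bitstring AND NOT(CF-BM) == 0."""
    return bitstring & ~cf_bm == 0

def combined_fbm(subset: Tuple[str, ...], cluster_fbm: Dict[str, int]) -> int:
    """CF-BM of a subset of clusters: bitwise OR of the clusters' F-BMs."""
    cf_bm = 0
    for name in subset:
        cf_bm |= cluster_fbm[name]
    return cf_bm

def select_subset(bitstring: int,
                  cluster_fbm: Dict[str, int]) -> Optional[Tuple[str, ...]]:
    """Smallest subset of clusters whose CF-BM serves every BFER in the bitstring."""
    names = sorted(cluster_fbm)
    for size in range(1, len(names) + 1):
        for subset in combinations(names, size):
            if covers(bitstring, combined_fbm(subset, cluster_fbm)):
                return subset
    return None  # some BFER is not reachable through any cluster

# Toy 8-bit example: C1 reaches BFERs 1-4, C2 reaches 5-6, C3 reaches 7-8.
fbm = {"C1": 0b0000_1111, "C2": 0b0011_0000, "C3": 0b1100_0000}
print(select_subset(0b0100_0011, fbm))  # ('C1', 'C3')
```

With three clusters the loop visits at most the seven subsets mentioned in the talk. On the switch, each subset would correspond to one ternary table entry, so no loop is needed there.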

H

Then we recirculate, and in the second step we can work with the remaining configured port clusters that need a packet copy. Next slide, please. Now that we know which configured port clusters need a packet copy, we only have to determine an appropriate multicast group, so that we can replicate the packet to all next hops within this configured port cluster in one shot, without recirculation. How do we do that? First, we configure all combinations of ports within each configured port cluster as static multicast groups.

H

We do that to gain some robustness against changes, for example when a BFER joins or leaves. Then we apply the same logic as before. We have a combined F-BM of the static multicast group, which indicates all the BFERs that are reachable through this group, or rather through the ports that are part of this static multicast group. Again, we apply the ternary match operation to the bitstring and the complement of the CF-BM, and there is a match when the result is zero.

H

We need around 5120 static multicast groups, which is well feasible on a device like the Intel Tofino. We implemented this approach, and it runs at 100 Gbit/s per port on the Intel Tofino. Next slide, please.
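The figure of around 5120 static multicast groups is consistent with configuring every combination of ports inside each cluster for the 11/11/10 split from the earlier example. That gives 2^11 + 2^11 + 2^10 = 5120 groups, or 5117 if the empty combination is left out. The check below also shows why configuring all port combinations of a 32-port switch would not fit into the 2^16 multicast groups that the speaker mentions for the Tofino in the Q&A of this talk.

```python
from typing import Iterable

TOFINO_MULTICAST_GROUPS = 2 ** 16  # figure quoted in the Q&A of this talk

def static_groups_needed(cluster_sizes: Iterable[int],
                         include_empty: bool = False) -> int:
    """One static multicast group per combination of ports inside each cluster."""
    per_cluster = (2 ** size - (0 if include_empty else 1) for size in cluster_sizes)
    return sum(per_cluster)

print(static_groups_needed([11, 11, 10]))                      # 5117
print(static_groups_needed([11, 11, 10], include_empty=True))  # 5120
print(static_groups_needed([32]) <= TOFINO_MULTICAST_GROUPS)   # False
```

Splitting the ports into clusters replaces one exponential term (2^32) with a sum of much smaller ones. That is what keeps the group count within the hardware budget.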

This approach can be further optimized as follows. Next slide, please. We have certain assumptions about multicast traffic. We believe that multicast traffic is not completely random; by that we mean that there is some correlation in the egress ports used.

H

The second assumption is that we only have a limited number of static multicast groups, so we have to comply with a self-chosen maximum number that we want to use. Our optimization idea is the following: we sample BIER packets locally, and based on the BIER bitstring we know which ports have to be used locally on the device to forward the packet. This information is stored in a graph structure, and then we can apply clustering methods to choose appropriate configured port clusters.

H

When we have highly correlated multicast traffic, meaning there's a high correlation between egress ports and BIER packets, we need almost zero recirculations with around 1000 static multicast groups; that's the bar on the right. When we have less correlated multicast traffic, meaning there's some randomness in the use of egress ports by BIER packets, we still need only around one recirculation with 1000 static multicast groups, instead of the 3.5 recirculations without our approach. Next slide, please.

H

So, to conclude this talk: we presented a mechanism to forward BIER traffic with only a few iterations instead of one per next hop. To that end, we choose so-called configured port clusters and then apply iterative processing in which, in each iteration, we select a configured port cluster that needs a packet copy and send the packet to all required ports within this configured port cluster in one shot. The second step may be achieved through predefined internal multicast groups, as in the case of the Intel Tofino. However, these multicast groups are static.

H

They are not dynamic, so we do not need any dynamic state, and this may also be done differently depending on the technology: when there's another replication mechanism that's more efficient than multicast groups, it can also be used with this mechanism. The maximum number of iterations is bounded by the number of configured port clusters, for example three instead of 32. We can optimize this approach even further by sampling packets and then applying machine learning to get appropriate configured port clusters.

H

Because maybe there are certain multicast groups, or a certain number of BIER packets, that need a subset of ports in the yellow configured port cluster, but there are also some BIER packets that need ports of the second one. Since we configure all possible combinations of ports, with disjoint configured port clusters we need at least one recirculation for a packet that needs ports of both clusters. When we can use overlapping clusters, there's a chance that we need no recirculation at all.

G

On some companies' internal implementations we can discuss further offline. Additionally, I guess it really depends on the implementation. I know there are some implementations that do not require a recirculation for each iteration of that lookup of a bit in the bitstring. So indeed, I guess, for some hardware each iteration does require a recirculation, and in that case this optimization will help.

H

Yeah, definitely. We are a bit limited in the number of table lookups we can do in P4 on the Tofino, so we have no way to do, for example, 30 lookups in one iteration. So we have to do these recirculations, and this is a way to optimize how we do them. But I'm really interested in more information about the line-card capabilities of existing BIER implementations.

J

You know, by doing just the recirculation, you know, the programming with P4: are you able to eliminate the pipelining, or eliminate recirculation? I think... have you tried it without having to deal with the randomness? In one of your last slides, I think, you mentioned that you're working on the optimization.

H

Well, yeah. Thank you for the question. Of course, you could do it all without recirculation by just copying the packet to each egress port that has a potential receiver and then dropping the packet in the egress when the receiver is not needed. But that way you block the resources. When you get 100 Gbit/s, each port has a receiver at the end, and we make a packet copy to each port, then the whole switch is full and we cannot carry any other traffic.

H

It's not a multicast address; it's an internal way of telling the Tofino to replicate a packet to certain ports. Basically, we tell the control plane: give us a multicast group for these ports. Then we get a number, and based on this number we can later tell the Tofino to replicate the packet to these ports. We have up to 2 to the power of 16 (65,536) multicast groups available on the Tofino, and in our example on one of the slides, with three configured port clusters, we used about five thousand of them.

L

So we continue to think that traffic engineering is really very important, right? We've got BIER-TE out, and we're getting back to all the good things that we could actually use BIER-TE for, for multicast. I'm not going to read through the long list; I think most people hopefully know them. And working through BIER-TE, I think we got to the point where I was happy.

L

Hopefully the visuals are somewhat helpful, but yeah, I think it will take more time to take in. The main point is: every router only needs to look at its own bitstring, and that bitstring only needs as many bits as that router has interfaces, so to speak, or neighbors. Next slide. Right, so this is a slightly different view of the same thing. Obviously, this could replace the BitString field of an RFC 8296 packet header, and then there's the cost of the serialization, right?
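The main point can be illustrated with a deliberately simplified model. Each router reads only its own local bitstring, with one bit per neighbor, and treats everything else as payload for routers further down the tree. This is not the RBS wire format. The nested objects stand in for the length and addressing fields, neighbor lists here contain only potential downstream neighbors, and delivery only at leaves is an assumption. The topology and all names are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List

@dataclass
class RecursiveBitString:
    """Hypothetical, simplified recursive bitstring (not the draft's encoding).

    local_bits: one bit per (downstream) neighbor of the router processing this level.
    children:   one sub-structure per set bit, in bit order.
    """
    local_bits: List[int]
    children: List["RecursiveBitString"] = field(default_factory=list)

def replicate(router: str, rbs: RecursiveBitString,
              neighbors: Dict[str, List[str]], delivered: List[str]) -> None:
    """Process one router: read only its local bits, pass each child downstream."""
    if len(rbs.local_bits) != len(neighbors.get(router, [])):
        raise ValueError(f"{router}: local bitstring must have one bit per neighbor")
    positions = [i for i, bit in enumerate(rbs.local_bits) if bit]
    if len(positions) != len(rbs.children):
        raise ValueError(f"{router}: need exactly one child per set bit")
    if not positions:
        delivered.append(router)  # simplification: leaves are the receivers
        return
    for position, child in zip(positions, rbs.children):
        # The child is opaque payload here; only the next router parses it.
        replicate(neighbors[router][position], child, neighbors, delivered)

# Hypothetical tree: R1 -> {R2 -> R6, R3 -> R7}; R4 and R5 are not on the tree.
neighbors = {"R1": ["R2", "R3", "R4"], "R2": ["R5", "R6"], "R3": ["R7"]}
tree = RecursiveBitString([1, 1, 0], [
    RecursiveBitString([0, 1], [RecursiveBitString([])]),
    RecursiveBitString([1], [RecursiveBitString([])]),
])
delivered: List[str] = []
replicate("R1", tree, neighbors, delivered)
print(delivered)  # ['R6', 'R7']
```

In this toy tree the routers read 3, 2 and 1 bits respectively. Bits for routers the tree does not pass through (R4, R5) never appear in the header.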

L

We love the fact that we only carry the bitstring of every router we need, not the bitstrings of any router the tree doesn't go through. But the cost, of course, is exactly these addressing fields that tell us the length of the subtrees, and then the global RU length and offset fields. So this is kind of the overhead for the savings we get. Next slide. And so we did some simulations and calculations for large-scale networks.

L

Okay, so the three big things, right? Replication efficiency for small trees and large networks. It's easier to make the comparison with BIER, because BIER-TE, with all its different optimizations, is very difficult to compare, right? Imagine you have a network with 10,000 BFERs and a bitstring length of 256. Even replication to a small set of 10, 20, 30 or 40 random destinations across that large network may require up to 40 packet copies in BIER and BIER-TE, because the destinations are all in different bitstrings: 10,000 BFERs in 256-bit bitstrings means 40 different bitstrings. So even for small trees, you may need a large number of copies. And why do we care about small trees in large networks? Well, that's what we started in 1994, right, with what we called PIM sparse mode.
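The figure of 40 bitstrings follows from flat BIER's set-identifier mapping. Following RFC 8279, BFR-ID n falls into SI (n - 1) div BSL, and the ingress needs one packet copy per distinct SI among the destinations. A small sketch of that arithmetic (function names are illustrative):

```python
import math
from typing import Iterable

def set_identifiers_needed(num_bfers: int, bsl: int) -> int:
    """Number of SIs a flat BIER sub-domain needs for num_bfers BFR-IDs."""
    return math.ceil(num_bfers / bsl)

def copies_needed(bfr_ids: Iterable[int], bsl: int) -> int:
    """Ingress copies in flat BIER: one per distinct SI among the destinations.

    BFR-IDs start at 1; BFR-ID n belongs to SI (n - 1) // bsl.
    """
    return len({(bfr_id - 1) // bsl for bfr_id in bfr_ids})

print(set_identifiers_needed(10_000, 256))       # 40
print(copies_needed([1, 256, 257, 9_999], 256))  # 3
```

The worst case is 40 destinations that each sit in a different SI, which costs 40 copies. If they happened to share one SI, a single copy would be enough.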

L

We want efficient delivery of small trees in large networks, and that hasn't changed just because we want to go stateless. That's why small trees are actually quite interesting. Next slide. Then we had this fun discussion with Dino (he isn't even here). Basically, I was trying to be really provocative, and that's the red warning: offensive technology, right?

L

So really consider: why don't we have bitstrings that are, you know, 10,000 bits long? What's bad about it? And then go through the reasons why it does or does not work. In the first place, I think it would work perfectly fine on the benefit side. Okay, it's one kilobyte worth of bitstring for one kilobyte worth of data, but we still only have an overhead of 50%: we're sending it to a large number of receivers, 10,000 receivers, and we have an overhead of two times.

L

If we do ingress replication, it's an overhead of ten thousand. If we go PIM sparse mode, sure, we only have an overhead of one, but then there's all the, you know, stateful operation. So why haven't we liked large headers so far? I don't think the MTU is the issue: it's one thousand bytes more of MTU. Starting with the data center, I've worked with so many crazy networks with really gigantic packet sizes. I don't even think it is a long-term issue.

L

Then there's the accessibility of the header. We started with access to 128 bytes of header; now we're at 512 bytes. I would say we can access one kilobyte of header in the forwarding plane in 10 years. But the problem we have is that we don't want to process 10,000 bits worth of, you know, stuff when we only need to replicate to 10 outgoing interfaces.

L

If we only want to replicate to 10 outgoing interfaces, we should only have to look at and process something proportional to the number of replications we really do, and that is exactly what RBS gives us. We're always only looking at a local bitstring, and all the rest of the header is just payload that some other router down the tree will look into. Next slide.

L

You know, the identifiers of routers across the different SIs: that problem goes away, and I think with this mechanism we could also get back to something that is a lot more, if not fully, auto-configuring. Next slide. Yeah, so, scale. That primarily comes from not doing this hard subdivision into fixed 256-bit (or larger) bitstrings, so we are not wasting any of the bits we carry in the bitstrings.

L

Scalability comparison; implementation feasibility, such as in P4: you saw that one of the co-authors is Michael, so we're working on that together. And then, of course, there may be other proposals looking beyond the flat bitstrings that we've done, and that we're hopefully starting to deploy successfully, for the next generation beyond that. And then, ultimately, when we feel we have something that enough people feel could work: what are the criteria that would make any such non-flat bitstring solution acceptable to the BIER working group? Obviously rechartering.

L

We've had to do that all the time, but what are the criteria? What are the bounds of what BIER ultimately would want to do? I'm not asking anyone to answer that question now, but I think it would be really good to have an answer within the next half year or year, so that we know where to put what works. I think the more working group support and interested people we get, the better the result will be.

G

There are some benefits you claimed on the slide that I will need to understand better, but the basic idea of using the recursive structure to save overhead by using local bitstrings, that's the key. It does work, and I like it. One suggestion on the name itself: to me it's really just BIER RBS; don't call it CGM2.

B

I would like to see that verified independently, because the gains are impressive. It looks simple enough to process in hardware. There are, of course, open things, like: how do you make sure that everybody computes the same tree? What will happen while the tree is converging, like loop prevention, the usual discussions? What will happen there? Nothing, because each node kind of has to see the same tree and know the offset: who is the next node?

L

Okay. My biggest challenge, of course, is this: we've done an evaluation in actual hardware, which, like for everybody else here, is vendor-proprietary hardware that researchers can't verify. That's why Michael is doing it in P4, and P4 has problems; I'm not sure how much that is going to tell us. So deciding what is actually feasible everywhere is, I think, a really difficult one in our space, and I hope we can all try to do that as well as possible.

C

Yeah, because I think some design choices were made to not put more state in the packet but to put it in the control plane, where it doesn't belong. So I do actually expect the header size to increase if you want to solve that problem, and the data you're showing here for 256 bits might not be as good as it seems today. Yes, definitely.

J

Yes, just a few comments. I really like the idea. It seems like an optimization: it kind of takes some of what exists today in terms of optimization ideas, and it seems like a really good improvement. As you said, small trees are important, as opposed to big trees, but it really is a nice optimization to BIER.

L

I mean, I described how it would fit into an RFC 8296 header, right? Obviously we only have power-of-two bitstring lengths right now, so we would need to do some padding, and ultimately we could use all the different lengths, depending on whether the hardware does it right. So yes, it could fit in there. Is it the best header? I don't think we can save a lot by trying to optimize that header just for, you know, saving every single bit.
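The padding mentioned above can be sketched against the RFC 8296 BSL field, whose values 1 through 7 denote BitStrings of 64, 128, 256, 512, 1024, 2048, and 4096 bits. The sketch assumes, hypothetically, that an alternative encoding of `encoded_bits` bits is simply placed in the smallest BitString that holds it and zero-padded; `fit_into_rfc8296` is an illustrative helper, not part of any specification.

```python
from __future__ import annotations

# RFC 8296 BSL field values 1..7 denote BitStrings of 2**(k + 5) bits (64..4096).
RFC8296_BSL_BITS = {k: 2 ** (k + 5) for k in range(1, 8)}


def fit_into_rfc8296(encoded_bits: int) -> tuple[int, int, int]:
    """Return (bsl_code, bitstring_bits, padding_bits) for the smallest
    RFC 8296 BitString that can carry `encoded_bits` bits of encoding."""
    if encoded_bits < 0:
        raise ValueError("encoded_bits must be non-negative")
    for code in sorted(RFC8296_BSL_BITS):
        size = RFC8296_BSL_BITS[code]
        if encoded_bits <= size:
            return code, size, size - encoded_bits
    raise ValueError(f"{encoded_bits} bits exceed the 4096-bit maximum BitString")
```

A 200-bit encoding, for example, lands in a 256-bit BitString (BSL 3) with 56 bits of padding.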

B

Well, I mean, there was a strong architectural and practical consideration to put the bitmask at the very end, right? So the power of two is an artifact, because the lookup algorithms work so well with it, but there is nothing that will, you know, put a crimp into the whole thing. It would make the bitmask a different length, so we'll have to think about how to record that in terms of the BIER encoding that we have today. We have a version field in it.

B

We can always bump it to version two, or we can think about how we semantically distinguish it, just like with BIER-TE: how do we semantically interpret the bitmask differently? So I don't think anything would actually prevent us from going and having yet another semantic interpretation of the bitmask on all the existing hardware, right? Yeah, yeah.

B

Whatever, I mean, in the control plane we can go wild, right? So we can synchronize who assigns which semantics to what kind of bitmaps in the hardware, so that we don't start to pile stuff up. So yeah, fundamentally I don't see anything architecturally that wouldn't fit into the encodings we have and the architectural degrees of freedom that we provided. Yeah.

M

IP FRR: so one question is, we already have IP FRR, so why do we need BIER FRR? This is because a BIER packet is forwarded without an IP header, I mean an outer header. So in this case IP FRR cannot apply to the BIER packet, because it targets packets with an outer IP header, which a BIER packet doesn't have. So when a BIER next hop has failed or is unreachable, the BIER packet will be dropped and lost. That's why we need BIER FRR. Now, regarding BIER LFA and IP LFA:

M

So for IP LFA we have different types: we have basic IP LFA, we have remote LFA, and we have topology-independent LFA. Those three types will also be reused, because we have an architecture to support the normal action, which is just forwarding the packet to the LFA, and then we also have a tunnel, and then we also have... So what is covered? Next page.
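The basic LFA listed above is defined in RFC 5286 by the loop-free inequality dist(N, D) < dist(N, S) + dist(S, D): neighbor N of source S is a safe alternate toward destination D if traffic sent to N will not come back through S. The sketch below checks only that basic condition on a made-up topology; remote LFA and TI-LFA need additional computation that is not shown, and the helper names are hypothetical.

```python
from __future__ import annotations

import heapq


def shortest_distances(graph: dict[str, dict[str, int]], src: str) -> dict[str, int]:
    """Plain Dijkstra: shortest distance from src to every reachable node."""
    dist = {src: 0}
    heap = [(0, src)]
    while heap:
        d, u = heapq.heappop(heap)
        if d > dist[u]:
            continue                          # stale heap entry
        for v, cost in graph[u].items():
            nd = d + cost
            if nd < dist.get(v, float("inf")):
                dist[v] = nd
                heapq.heappush(heap, (nd, v))
    return dist


def basic_lfas(graph: dict[str, dict[str, int]], s: str, d: str, primary: str) -> list[str]:
    """Neighbors of s, other than the primary next hop, that satisfy the
    RFC 5286 loop-free condition dist(N, D) < dist(N, S) + dist(S, D)."""
    dist_s = shortest_distances(graph, s)
    lfas = []
    for n in sorted(graph[s]):
        if n == primary:
            continue
        dist_n = shortest_distances(graph, n)
        if dist_n.get(d, float("inf")) < dist_n[s] + dist_s[d]:
            lfas.append(n)
    return lfas
```

In the test topology, N qualifies because its own shortest path to D (cost 2) does not pass through S, while M, which reaches D only via S, does not.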

I

Yeah, Hooman Bidgoli, Nokia. I mean, yeah, great idea, but I'm still scratching my head. Usually, in the deployments that we're seeing with BIER, unicast is enabled, so most of the deployments want to follow unicast. So I'm still kind of scratching my head: why do I want to separate my BIER fast reroute from unicast?

I

It is at the mercy of the IGP, and what we have realized, I mean in our implementation, is that you just turn on LFA in the network and BIER just follows it. There's nothing special about it, even from an implementation point of view. We didn't have to do much. We just turned on LFA under the IGP, and BIER just follows it.
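The point that BIER simply follows the IGP's LFA can be pictured at the forwarding layer. The loop below follows the RFC 8279 replication procedure (take the lowest set bit, send a copy masked with that entry's F-BM, then clear the F-BM bits) and, as a simplifying assumption, switches to the IGP's LFA neighbor when the primary link is down while reusing the primary F-BM. The `backup` field and the `link_up` callback are hypothetical; BIER FRR proposals may compute backup forwarding differently.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class BiftEntry:
    fbm: int                 # Forwarding Bit Mask; must include the entry's own bit
    primary: str             # next-hop BFR from the unicast/IGP route
    backup: Optional[str]    # hypothetical: the IGP's LFA next hop, reused for BIER


def forward(bitstring: int, bift: dict[int, BiftEntry],
            link_up: Callable[[str], bool]) -> list[tuple[str, int]]:
    """RFC 8279-style replication loop; returns (neighbor, bitstring) copies.

    Simplification: when the primary link is down, the copy goes to the
    backup neighbor with the primary's F-BM unchanged.
    """
    copies = []
    while bitstring:
        bfr_id = (bitstring & -bitstring).bit_length()   # lowest set bit, 1-based
        entry = bift[bfr_id]
        nbr = entry.primary if link_up(entry.primary) else entry.backup
        if nbr is not None:                # no usable next hop: these bits are dropped
            copies.append((nbr, bitstring & entry.fbm))
        bitstring &= ~entry.fbm            # clear the F-BM bits whether or not a copy was sent
    return copies
```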

M

As long as you have one. But I think, yeah, normally it is okay, but sometimes some nodes don't support BIER, and in that case we may have an issue. If every node supports it, it's okay. Yeah, every IP LFA is also a BIER LFA, and then we'll watch that go forward, right.

B

Jumping in quickly: I mean, we had kind of this discussion, not an ongoing discussion, but just to keep everybody open-minded, right. Yes, today we deploy following the IGPs, or unicast, and it works like a charm, but fundamentally we're actually not slaved to the IGP, right? We can run a completely different computation and build a completely different forwarding table installed via controllers, if we're not slaved to the IGP and IGP FRR is not good enough.

I

Well, okay, again, I just want to be very careful here. Even with the controller, like if you have SR policy or anything that comes down from the controller, from what we see in the implementation, it just follows what is installed in the data path, right? I mean, we don't see anything specific, but yeah, I mean, I'll...

D

Okay, I'd like to say this is a very simple draft. It is used for BIER-TE: BIFT-ID advertisement in BIER-TE calculation. Sorry, because the previous structure in the previous slides is not optimal, we would like to use a new advertisement for the BIFT-ID advertisement.