►

From YouTube: IETF-DETNET-20221212-1300

Description

DETNET meeting session at IETF

2022/12/12 1300

https://datatracker.ietf.org/meeting//proceedings/

B

C

D

B

B

It

is

a

formal

ietf

meeting,

so

our

note

well

applies

I.

Think

everyone

on

the

list

here

is

pretty

familiar

with

it,

or

else

you

wouldn't

have

made

it

into

the

interim.

If

you're

not

just

go

to

the

note

well,

please

do

try

to

practice

good

mic

control,

make

sure

you're

muted.

We

name

you

you

if

you're

not

supposed

to

be

talking.

We

also

are

going

to

use

the

queue

to

manage

the

discussion.

B

B

B

B

We

did

ask

to

rename

from

in

naming

the

the

sort

of

file

name,

we

removed

large

scale

and

put

in

scaling

some

of

the

discussion

during

the

adoption

and

before

the

adoption

pointed

out

that

a

number

of

the

requirements

are

just

about

building

scalingup.net

and

rather

than

argue

more

about

what

is

large

scale

and

what

is

not

we're

going

to

just

use

the

term

scaling.

We

do

expect

that

to

also

propagate

down

to

the

title

of

the

draft.

B

At

some

point,

we

have

one

liaison

when

there's

a

draft

response

that

has

been

discussed

on

the

list,

there's

been

very

little

discussion,

but

there

that

discussion

did

result

in

a

change

in

Reading

over

the

the

text

and

I

proposed

the

most

recent

change.

I

think

it

addresses

both

my

comment

and

the

comment

of

the

other

comment

that

was

raised.

B

B

Well,

we

do

have

the

enhanced

debt

net

data

playing

requirements

document,

that's

what

came

out

from

the

that's

what's

in

our

chartered

Milestone

and

you

know

we're

the

scaling

document.

We

think

fits

that

bill

on

this

session.

We're

going

to

really

focus

in

on

queuing,

addressing

queuing,

related

requirements

that

are

in

that

document.

B

B

There

are

a

lot

of

good

individual

drafts.

We

need

to

choose

how

many

proceed.

Are

they

combined?

Are

they

maintained

separately

or

some

things

more

interest?

Some

are

less,

you

know,

that's

the

normal

working

group

processing,

so

we're

going

to

do

that

normal

processing,

but

also

to

help

move

things

along.

We

expect

to

form

a

design

team

early

next

year.

The

the

charter

has

not

been

written.

Yet

we

expect

this.

B

C

A

Thank

you

and

it's

the

requirements

of

large-scale

determinist

network

and

for

the

name.

Maybe

the

security

is

a

better

choice

and

we

can

see

more

comments

from

the

quarters

because

before

that,

we

don't

really

know

the

the

changes

of

it.

So

my

person

personally

will

is

that

it

is

accept

acceptable,

yeah.

So,

first

thanks

for

all

of

the

people

who

review

and

support

this

draft,

it

was

adopted

and

got

so

many

good

comments.

A

So

this

document

has

absorbed

some

requirements

of

assumes

large,

secure

enhancement,

and

but

this

draft

I

mean

I,

mean

the

the

draft

option

also

updated

and

into

the

next

version.

So

some

content

of

it

is

changed

and

she

also

also

proposed

some

new

points.

We

are

also

working

on

this

on

this

work

here

wow.

A

Including

the

clock,

synchronization

or

no

clock

synchronization

in

different

domains,

the

combination

of

short

and

long

distance,

hops

of

the

end-to-end

path

and

the

virus

transmission

rate

and

the

large

number

of

flows

with

different

levels,

crossing

the

multi-domains

and

more

common

scenarios

of

topology

change

and

the

videos

of

Link.

And

this

mechanisms

used

to

ensure

the

boundary

latency,

maybe

multiple

or

have

different

configuration

or

parameters

in

multi-domains.

A

A

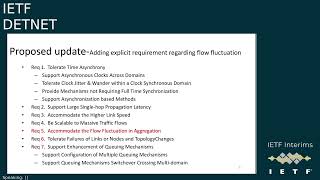

It

is

a

latest

submitted

version,

including

to

support

the

timer,

synchronously,

large,

large,

single

hope,

propagation

latency,

higher

link,

speed,

massive

traffic

traffic

flows,

videos

of

links

and

on

those

and

topology

change,

and

the

enhancement

of

killing

mechanisms

related

to

the

configuration

of

multiple

killing

mechanisms

and

switch

over

causing

body

demand.

And

following

balance

comments,

we

add

a

new

requirement,

so

we

can

find

them

in

the

next

slide.

Maybe,

and

here

that

data

plan

enhancement

requirements

to

support

the

aggregate

head

flow

identification

matter.

A

A

First,

one

adding

the

explicit

requirement

regarding

flow

fluctuation,

and

so

second,

is

to

some

scenarios.

Attributes

are

referred

to

the

heterogeneity

and

not

necessarily

the

skill

itself,

and

it

is

better

to

discuss

how

current

Internet

solution

doesn't

support

large-scale

scenarios

and

whether

this

is

still

in

single

demand

or

multiple

demands

and

clarify.

Using

C

graph

is

not

limited

to

two

queues,

and

it

refers

to

the

article

in

section

3.52.

A

A

And

now

most

of

them

has

been

addressed

in

a

proposed

new

version

of

the

draft,

but

it

has

not

been

posed

to

the

Middle

East

most

because

of

the

third

one,

because

there

are

some

texts

in

ASU

comment:

sub

section

to

see

what

caused

by

the

large-scale

damage

and

the

single

or

multi-demand

details.

However,

the

authors

think

about

maybe

some

paragraphs

to

brief

to

see

before

the

comment

section

will

help

people

to

understand

more

about

it.

A

A

To

address

the

comments

from

adding

explicate

requirements

regarding

flow

fluctuation,

we

propose

to

add

a

new

requirements,

after

request

for

a

common

dates,

flow

fluctuation

in

aggregation,

because

more

kind

of

traffic

flows

will

cause

more

Dynamic,

join

or

leaving

of

the

flows,

which

will

further

cause

more

flow

of

fluctuation,

as

well

as

more

brake

stability

of

the

Daniel

flows.

For

instance,

there

will

be

more

aggregation

nodes

which

receives

flows

from

more

Upstream

nodes,

which

adds

undeterministic

delay

of

the

package

treatment.

A

A

A

A

And

it

is

proposed

the

change

of

the

requirement

and

for

the

others,

comments

to

be

addressed.

We

write

them

in

the

new

version

of

the

of

the

of

the

draft,

so

The

Next

Step,

the

proposed,

updated

version

will

be

sent

to

the

mailing

list

and

I

hope

to

get

more

comments

and

we'll

find

together

of

it.

So

thanks

and

Link

comments.

E

A

C

F

Okay,

thank

you.

As

Tom

mentioned,

the

Gap

analysis

of

date

plane

was

submitted,

hope

that

will

help

to

make

a

clarification

for

the

scaling

networks

and

until

this

displaying

The

Wider

current,

that

letter

should

be

enhanced,

and

so

we

could

continue

to

discuss

later

of

nine

to

say

which

can

be

added

in

requirements

draft.

Thank

you.

A

B

G

C

H

A

G

C

G

C

B

C

H

B

B

D

J

J

This

page

is

for

some

background

about

this

work

in

Ikea,

104

and

105.

We

collect

some

suggestions

by

working

group

to

split

the

document

of

10

and

enhance

the

data

plan

into

two

independent

documented,

because

the

contents

contain

two

parts.

The

first

one

is

a

plan

requirement

and

the

second

one

is

solution.

So

I

I

just

also

see

some

comments

in

the

chat

box

to

ask

whether

these

are

the

same

topic.

J

Actually,

there

are

two

separate

Topics

in

this

committing

because

we

we

are

trying

to

separate

these

the

documents

into

two

parts,

and

my

presentation

is,

for

the

first

part,

first

I

I'm,

trying

to

provide

a

rough

understanding

of

what

is

enhanced

than

that.

From

my

perspective,

it's

just

give

some

thoughts

in

that

data

plan

framework

for

wording.

J

Sub

layer

is

supposed

to

provide

resource

allocation

and

explicit

routes,

and

before

we

Charter,

it

is

supposed

to

reuse

the

existing

ietf

and

the

attribute

mechanisms

of

to

provide

boundary,

latency

and

zero

congestion

loss

in

forwarding

sub

layer.

There

is

a

good

summary

in

RC

9320,

which

has

just

been

published

in

the

document.

It

gives

a

timing

model

to

compute,

end-to-end

latency

and

a

bond

different

and

the

bonds

of

different

queuing

mechanisms.

J

J

The

first

one

is

resource

allocation,

which

is

supposed

to

configure

and

allocate

Network

resource

for

the

the

Daniel

flows

exclusively,

and

this

part,

I

think,

is

supposed

to

be

covered

in

the

net

controller

data

plan

and

also

the

encapsulation

to

identify

the

resources

to

be

used

by

a

given

the

net

packet

in

the

data

plan

and

which

is

also

very

important,

is

to

provide

queuing,

shaping

and

scheduling

mechanisms,

which

is

the

the

detail

out

behavior

of

allocation

resources.

It

determines

the

transmission

queue

selection

and

the

the

hardware

in

the

device.

J

Considering

that,

as

I

have

mentioned,

the

resource

allocation

is

for

the

controller

plan.

Part

and

the

queuing

mechanisms

will

be

a

lot

of

different

variations

and

a

lot

of

possibilities

for

different

use

case

and

the

scenarios.

So

in

this

document

we

will

pay

more

attention

to

to

encapsulation

to

answer

the

question.

What

type

of

metadata

will

be

requested

for

the

enhanced

Dana

data

plan?

J

Basically,

we

we

give

three

kind

of

cases.

The

first

case

is

that

no

new

metadata

is

requested

because

they're

in

our

previous

work,

data

flow

ID

has

already

been

defined

and

that

is

could

be

used

by

both

sub

forwarding,

sub

layer

and

service

sub

layer.

So

it

can

also

be

used

if

the

the

enhance

the

then

that

mechanisms

is

flow

based.

Also,

some

existing

capsulation

in

ITF

could

be

reused,

for

example

the

sap.

So

in

that

case

we

need

no

no

more

new

metadata

and

there

are

also

two

other

cases.

J

The

first

one

is

we

can

explore

metadata

for

enhance

the

data

that

could

use

the

by

different

QE

mechanisms,

or

we

could

Define

different

metadata

for

different

query

mechanisms.

We

think

the

working

group

may

prefer

the

first

one,

because,

on

the

the,

the

other

option

will

bring

difficulty

for

interoption

when

we

introduce

different

kind

of

mechanisms.

Queuing

mechanisms,

especially

there

are

a

lot

of

different

query

mechanisms

that

are

being

discussed

in

working

group

and

there

also

a

potential

new

mechanisms

that

will

be

discussed

in

ITF.

J

C

J

J

So

yes,

if

we

think

the

the

working

group

believe

the

the

the

the

right

method

is

to

combine

the

all

these

documents

we

can,

we

can

try

to

just

work

on

the

the

only

document

that

has

already

been

adopted

and

the

working

group

think

may

we

need

another

document,

for

example,

for

the

a

design

team

that

has

been

mentioned

by

Lou

I

think

we

are

also

happy

to

contribute

on

a

new

one.

It's

it

both

work

for

us.

E

B

Not

knowing

what

you're

going

to

say

sure

I'll

jump

in

it's

really

brief.

So

we

have

a

a

working

group

document

now

that

covers

enhanced

data

plane.

It's

called

scaling

right

now.

If,

if

you,

if

anyone

has

any

additional

requirements,

they'd

like

to

see

brought

into

the

working

group,

please

just

work

with

the

work

with

the

the

the

the

working

group,

the

authors,

the

mail

list

put

put

your

suggestions

on

list.

B

You

have

a

document

here,

it's

great

work

with

the

authors

and

come

up

with

a

proposed

Edition

discuss

it

on

the

list

and

once

it's

agreed

to

on

the

list,

we

can

bring

it

into

the

working

group

document.

The

as

always,

authors

have

a

lot

of

flexibility

on

authors

of

working

group

documents

have

a

lot

of

flexibility,

whether

they

want

to

incorporate

text

and

then

bring

it

to

the

working

group

or

discuss

it

on

the

list

first

and

then

publish

it.

E

Okay,

so

I

was

I,

I

was

hoping,

Lou

would

say

something

like

that.

I'm

going

to

set

second

lose

remark,

which

is

there

is

an

adopted

requirements.

Document

for

for

scaling,

I

would

expect

the

design

team

to

work

from

a

revised

version

of

that

of

that

document

is,

is

early

version

of

revision

expected

and

another.

The

other

comment

I

wanted

to

make

is

the

lower

left

post

to

the

side.

We

use

existing

encapsulation

field

like

dscp.

E

That

is

something

I'd

like

to

see

working

group

discussion

on

whether

dscp

should

be

used

for

detnet

or

whether

net

ought

to

Overlay

existing

uses

of

dscp

of

for

other

purposes.

This

really

gets

into.

How

does

detnet

relate

to

the

to

the

diff

serve

architecture,

and

that

that

that

that

will

need

to

be

a

working

group

decision.

B

C

B

C

J

Yes,

actually,

we

are

very

willing

to

combine

the

all

these

requirement.

Considerations

into

the

existing

working

group

document

actually

I'm.

Also

the

co-author

of

the

working

group

document.

The

only

only

reason

why

we

reach

this

topic

again

as

a

separated

top

as

a

separate

separated

presentation

is

only

that.

Do

we

think

the

enhance

the

that's

not

equals

the

scaling

than

that.

If

we

think

it's

it's

the

same

concept,

I

think

it

the

there

is

no

no

reason

to

to

to

have

another

work.

J

B

C

D

B

Least

from

my

side

and

I

think

as

Jana

said

earlier

from

his

side

and

maybe

even

from

David's

side,

it

seems

like

these

are

all

overlapping,

and

so,

let's

put

it

in

one

document

and

if

we

need

to

separate

out

later,

we

can

do

that.

Keep

in

mind

just

because

it's

one

requirements

document

doesn't

mean

that

it's

one

solution.

Document

I

am

going

to

not

pronounce

the

person's

name

in

Q

correctly.

I

apologize

for

that.

But.

K

Yeah

thanks

hello,

so

I

as

one

of

the

course

so

Clauses

after

the

the

the

the

recently

adopted

requirement

requirement

document.

I

I

would

be

happy

to

take

this

relevant

part

into

discussion

and

to

be

included

to

be

combined

into

that

document.

I

think

that

would

be

a

as

one

of

the

contributor

of

the

working

group

I

think

that

would

be

a

great

choice.

I

want

to

clarify

a

little

bit

why

it

is

scaling

related

at

the

first

Point.

That's

because

we

are

kind

of

think

the

scaling.

K

Actually,

the

death

net

is

already

a

very

good

solution

right

now,

but

the

scaling

is,

we

think,

is

one

of

the

root

cause

that

we

probably

need

some

enhancements

to

that.

So

in

that

case

the

enhance

the

data

plane

is

part

of

the

considerations,

in

addition,

maybe

to

the

control

playing.

So

if

you

are,

if

the

causes

of

this

document

thinks

there

could

be

some

other

root

causes

other

than

the

scaling

I

think

we

can

have

discussion

offline

and

to

see

whether

what's

the

appropriate

text

can

be

included

there

thanks.

J

Yeah

actually

I

think

behalf

of

the

co-orders.

We

are

totally

fine

to

to

combine

the

contents

into

the

existing

working

group

document

and

the

reason

why

we

we

make

it

separately

is

because

it

is

suggested

by

working

group

to

to

separate

this

from

the

solution.

Part,

but

I

think

we

are

have

the

we

are

on

the

same

page

about.

J

J

C

C

C

B

J

C

J

Yeah,

this

page

is

for

how

to

design

the

enhanced

data

data

plan.

We

we

are

trying

to

introduce

them.

The

upon

the

latency

information,

which

could

be

divided

into

two

type.

One

is

for

resource.

One

is

for

requirement

which

aligns

with

the

the

definition

ERC,

86

and

55,

and

also

for

all

these

designs

for

different

types

of

of

the

query

mechanisms

that

will

be

discussed

in

the

following

sessions,

and

this

is

some

proposal

which

I

think

also

have

already

been

discussed,

which

maybe

we

need.

J

I

Okay,

thank

you.

So

this

is

a

little

bit

of

food

for

thought.

Given

how

you

know

the

the

past

git

itfs.

We

had

several

really

interesting

queuing

mechanisms

that

seem

to

require

new

data

to

be

carried

with

packets

and

how

that

is,

I

think

a

great

thing

that

we

would

want

to

have.

But

how

do

we

do

it

so

next

slide?

Let

me

hit

okay.

I

I

Just

you

know

one

routing

header

that

we

can

have,

and

we

would

also

want

to

have,

of

course,

pre-off

be

combined

with

the

queuing

mechanisms

and

if

every

mechanism

starts

with

its

own

header,

then

then

we

have

a

problem

with

that.

So

now

the

process,

I

guess

the

way

I

predicted-

is

that

we

can

perfectly

well

Define

in-depth

net

the

functionality

that

we

want.

I

But

when

it

comes

to

the

encapsulation

packetization

of

anything

we

want

to

have

new

in

the

package,

then

we'll

need

to

work

with

and

give

up

control

most

likely

to

mpls

six

men

or

a

beer

working

group.

If

it's

for

you

know

a

multicast,

so

there

are

working

groups

that

are

doing

packet

headers

for

their

realm

and

that

basically

means

that

we

also

need

to

explain

to

them

or

what

the

packet

header

needs

to

do.

I

I

Next

year

to

write

into

a

draft-

and

so

was

it

just

they're

trying

to

give

a

quick

rundown

of

this

to

give

an

idea

of

what

we

might

want

to

end

up

in

in

such

a

document

right

so

obviously

for

the

non-latency

things

we

already

know:

pre-off,

we

have

a

sequence

number.

We

have

a

flow

identifier.

We

have

right

now,

an

mpls

header

in

which

it

is.

Is

that

good

enough?

Can

we

use

it

in

the

other

forwarding

planes,

but

maybe

most

easily?

I

Then

an

example

of

a

queuing

mechanism

to

reduce

end

to

end

a

Jitter

is

that

we

really

just

have

a

play

out

function

right,

so

that

may

be

another.

You

know

even

draft

to

write

out,

but

we

haven't

done

this

so

far.

If

we

use

existing

queuing

mechanisms

that

are

available,

we'll

have

pretty

much

unbounded

Jitter

and

to

reduce

that

you

could

even

do

that

in

the

last

top

router

or

receiver

device

by

knowing

the

timestamp

when

the

packet

was

sent

or

when

it

needs

to

be

played

out

right.

I

I

So

there

are

things

that

we

can

use

like

the

experience

delay

on

a

perhap

basis

and

that

would

basically

be

updated

by

every

router

and

that

would

allow

the

receiver

to

also

perform

layout

buffering,

but

without

having

a

network-wide

clock

right.

So

that

is

then

different

in

so

far

is

that

it

gets

updated

on

every

router

hop

changed.

There

is

something

which

is

a

novel

and

would

be

similar

to

some

other

Advanced

queuing

mechanisms.

I

So

this

is

also

for

a

mechanism

that

hasn't

been

written

as

a

draft

right.

So

I

was

not

having

the

time

to

get

through

all

the

drafts

and

look

at

their

information

elements,

but

here

obviously

the

delay

time

on

a

per

hop

basis.

That

is

what

the

damper

function

would

require.

So

there

is

one

damper

draft

out

already.

There

may

be

more

damper

drafts

coming

and

then

obviously

for

tcqf,

which

you

have

heard

me

talking

about

repeatedly.

I

I

So

with

all

these

type

of

information

elements

right,

it

sounds

like

overwhelming,

but

I

think

when

we

start

to

think

reusing

the

basic

idea

of

the

dscp,

a

code

point

which

is

not

fixed

semantic

but

which

is

a

configured

semantic

right.

We

can

start

thinking

about

having

an

information

model

that

says

well

in

the

packet.

I

So

here's

basically

for

everybody

who

is

writing

one

of

those

queuing

drafts,

as

we

call

them

right

now

to

think

about

what

kind

of

the

you

know,

maybe

shareable

set

of

information

elements

in

packet

headers.

That

would

be

sufficient

for

you

right

and

so

I

was

really

thinking.

If

we

get

a

way

for

the

queuing

mechanisms

with

64

bits,

which

could

be

subdivided

in

two

times,

32

bits

read

writeable

and

then

we

would

also

have

only

the

sequence

number

and

the

flow

identifier

in

32

bits.

I

I

C

E

Hey

Carlos

so

I

like

what

like

I

like

a

lot

of

what

I

see

here,

though,

I

would

suggest

that

you've

got

you're

trying

to

do

two

things,

one

of

which

is

one

of

which

is

incredibly

useful

now

and

the

other

one

of

which

we're

going

to

be

arguing

about

for

a

while

to

come.

The

one

that's

really

useful

now

is

the

taxonomy

of

all

the

things

that

could

be

carried

between

nodes

or

that

taxonomy

trying

to

divide

things

up,

help

structure

the

sort

of

what

are

we.

E

I

I

You

know

these

derived

information

Elements,

which

are

code

points,

are

how

we

want

to

call

them

right

at

a

later

stage,

but

you're

right

I

would

like

to

start

with

this

semantic

information

elements

that

are

required

by

by

the

different

queuing

mechanisms

and

then

be

as

explicit

as

knowing

okay,

they're

rewrite

in

the

beginning

or

on

every

hop

on

the

receiver,

and

they

need

to

have

the

following

number

of

bits

for

the

following

size

of

network.

So

and

that's

painful

enough

work.

J

Actually,

I

I

want

to

provide

some

consideration

about

David's

question

because,

as

I

have

mentioned,

we

are

also

trying

to

introduce

some

metadata

for

the

for

different

kind

of

queuing

mechanisms.

So

that

is

also

a

question

we

have

considered.

That

is

why

we

we

Define

two

types

of

boundary,

latency

information,

one

of

them-

is

requirement.

K

I

Yeah,

just

a

quick

comment

from

from

me:

I

I

completely

agree.

The

the

only

thing

I

always

have

in

the

back

of

my

mind

is

that

we

do

have

limits

on

how

much

we

can

carry

in

packets.

The

more

we

want,

the

more

pushback

we'll

get

and

so

I

I.

Keep

that

in

the

back

of

my

mind,

when

looking

about

different

proposals

that

we

have,

how

much

would

they

need

in

the

packet

header?

How

difficult

would

it

be

and

I

think

that's

not

too

unprudent?

If

we

we

keep

that

in

mind,.

I

H

So

as

I

also

added

to

the

chat

window,

so

I

think

simplification

is

a

very

good

direction.

So

I

I

also

would

like

to

have

a

solution

that

we

are

limiting

the

information

we

are

adding

to

that

net

packets

regarding

the

Bandit

latency,

whether

it

is

only

one

or

two

parameter

or

just

a

class

information.

I

think

this

is

up

to

discussion,

but

I

definitely

intend

to

to

limit

the

volume

of

information

we

are

adding

to

that

net

packet.

So

I

think

that

that's

a

good

direction.

B

L

Of

minimum

and

maximum

and

to

understand

the

internet

plan

does

not

use

metadata

flow

ID

to

identify

the

detonate

floor,

but

didn't

Define

the

metadata

to

guarantee

the

network

and

lendency

that

there

are

other

requirements

of

that

standard

display

in

draft

requirements

for

that

scale.

Date

matter,

including

you

place.

The

inclusion

of

the

mixed

data

used

for

traffic

treatment

capability

to

different

underlying

Network

technique,

Technologies

to

to

meet

the

requirement

of

full

boundaries.

There

have

been

several

mechanisms

proposed

to

that

net.

The

list

on

the

left

is

the.

L

Information

that

is

currently

in

that

plan

used

to

facilitate

that

net

transit

in

order

to

guarantee

the

bonded

entity,

as

shown

in

this

figure

bonded,

let's

say,

is

for

information,

is

transmitted

across

multiple

detonate,

translate

node

and

used

by

that

title.

Follow

your

supplier,

so

this

page

discusses

the

design

principles

firstly

facing

the

body.

Language

information

should

be

carried

in

data

plan

to

facilitate.net

flow,

to

map

user,

forwarding

on

the

cellular,

cellular

resources

not

focus

on

the

local

mechanisms.

L

Secondly,

basically

is

a

good

is

good

to

have

a

uniform

formative

to

accommodate

a

various

other

scheduling

mechanisms.

Secondly,

the

class

of

the

classified

as

a

mechanism

mentioned

in

the

previous

page

into

two

categories:

why

is

requirement

which

summarizes

the

requirements

from

a

Detonator

service

on

the

map

to

the

resource?

L

In

the

entropriate

data

field,

we

use

the

VR

type

to

indicate

the

type

of

boundedness

information

and

the

BR

format

is

used

to

indicate

the

format

of

a

boundary

elements,

information

for

the

field

of

flag.

Currently,

we

we

don't

have

any

definition

for

it

for

future

study.

If

there

are

any

mechanism

or

algorithm

accepted

by

that

and

boundless

information

is

different

with

insertable

editor.

L

So

falter

rpv6

based.net

that

player

will

Define

the

new

IPv6

extension

Hardware

operation

called

the

Bri

operation

operation.

Jspr

Operation

can

be

encompleted

in

Azure,

IPv6,

hope

or

hope.

I

hope

audio

is

extended

harder

depending

on

the

processing

Behavior,

and

there

may

be

more

than

one

pound

difference.

Information

can

appear

in

about

VR

opinion.

L

L

For

the

second

comment,

which

Queen

mechanism

is

2,

it

depends

on

the

Node,

for

example,

the

timer

resource

ID

can

be

a

computer

present

or

second

mechanisms

is

used

on

when

that's

not

node.

If

there

are

several

several

clean

mechanisms

supported

by

one

node,

the

markings

scheme

depends

on

the

Node

local

mechanism,

foreign.

D

Don't

understand

why

it

is

necessary

for

the

trending

load

to

level

exactly

what

is

the

end-to-end

delay

budget

to

use

it

as

the

as

the

parameter

to

to

to

to

relay

and

and

forward

the

packing

and

the

flow,

because

the

end

of

the

end

service

requirements,

the

parameters

it

could

be,

it

could

be,

it

could

be

utilized

and

incorporated

into

the

and

the

policies

through

the

control

plane.

So

I

I

do

not

think

it's

necessary

to

put

this

kind

of

information

as

metadata

into

the

data

plane.net.

Thank

you.

L

J

J

You

know,

Transit

node

is

open

to

different

type

of

mechanisms,

but

the

basic

idea

is

that

if

we

have

end

to

end,

did

they

budget

and

when

we

Transit

through

a

packet,

a

Transit

through

a

node,

the

the

the

latency

that

has

already

been

expensed

in

this

node

can

be,

can

be

minused

and

the

the

end-to-end

delay

budget

will

be

reduced

to

the

left

delay

budget

and

which

could

be

used

to

direct

the

packet

forward

in

the

next

node.

That

is

the

one

possible

method

to

how

to

use

this

parameter.

J

The

most

important

thing

is

that

our

proposal

is

now

to

carry

some

specified

or

some

particular

parameters

in

the

encapsulation.

The

the

motivation

is

similar

as

a

tourist

presentation.

What

we

are

trying

to

do

is

just

to

find

some

common

metadata

that

could

be

used

for

different

mechanisms,

and

we

are

also

agree

about

the

balance

suggestion.

We

we

should

try

to

simplify

the

the

metadata

we

are

trying

to

carry

and

the

metadata

can

be

for

be

further

discussed

by

working

group.

J

I

Some

of

the

proposals

with

the

deadlines,

I

haven't

seen

and

I,

don't

think

even

jakov

or

so

was

claiming

that

they

are

deterministic,

latency

calculi

for

them

right

so

they're,

basically

based

on

heuristics

and

I.

Think

that's

another

point.

We

need

to

discuss

whether

we

want

to

include

them

in

our

mechanisms.

I

It

may

be

useful

to

to

share

packet

headers,

but

it

may

not

be

called

deterministic

so

that

that

was

what

came

to

mind.

Okay,

let

me

go

on

to

the

slides

here,

so

this

was

just

a

quick

follow-up.

Given

that

the

nodes

from

itf1415

said

we

ran

out

of

time

and

wanted

to

continue.

The

discussion

I

actually

didn't

want

to

repeat

anything

much,

except

that

I

felt

there

was

good

feedback

and

people

like

the

benefits

of

the

tcqf

mechanism

and

I.

Think.

I

The

main

distinguishing

aspect

is

that

it

is

the

only

queuing

mechanism

that

gives

us

a

low

Jitter

without

the

need

for

new

packet

headers,

because

its

metadata

can

fit

into

the

EXP

and

the

IP

dscp

if

I'm

wrong

or

not

I'd

like

to

be

reminded

what

competing

mechanisms

we

have

that

fulfill

these

requirements

of

no

packet

headers

and,

of

course,

that

is

also

meant

to

work

for

a

high-speed,

large-scale

networks.

All

those

good

things

I

had

a

great

talk

with

David

black

afterwards.

I

What

what

I

actually

wanted

to

to

to

maybe

ask

for

all

of

us

in

in

our

documents

is

right.

What

what

should

a

queuing

document

have

right,

because

I

was

trying

to

figure

out

what

it

is

and

that's

what

the

tcqf

document

has

configuration

data

model,

A

textual

description

of

perhap

processing

and

the

different

Ingress

and

egress

processing

as

necessary

description

of

the

calculus

to

determine

the

latency.

I

Of

course,

if

that's

a

predetermined

one

like

in

our

case,

I'm

pointing

to

the

the

pre-existing

predefined

calculus,

but

it's

very

simple,

of

course,

the

pseudocode

then,

as

as

as

a

more

formal

method

to

describe

that,

could

I

guess

in

other

drafts,

be

algorithms,

math

formulas

and

then

describing

the

scalability

and

performance

aspects,

and

you

know

specifically

for

for

this

document.

Of

course

that's

right

is:

do

people

feel

there

is

anything

missing

to

to

actually

adopt

it

right,

given

how

it

doesn't

require

different

packet

headers?

E

C

E

As

I

said,

I

would

like

to

separate

what

needs

to

be

done,

and

here

I

I

fully

agree

that

that

that

there

is

a

that

that

tcqf

gets

a

lot

of

Leverage

out

of

a

relatively

small

number

of

bits,

from

an

assumption

that

oh

we're

going

to

have

to

put

in

the

dscp

is

I

believe

much

as

you're

correct

about

implementation

constraints.

That

is

a

fundamental

architectural

question

that

I'm

sure

you'll

recall

in

the

first

round.

E

J

I

Yeah

I

mean

we

do

have

you

know

from

the

history

some

interesting

attempts

to

leverage

even

within

the

same

flows,

a

different

dscp,

like

you

know,

AF

41

a42

for

the

guaranteed

amount

of

traffic

versus

the

extraneous

amount

of

traffic

for

partially

congestion-controlled

traffic

flows

right.

So

this

wouldn't

be

the

first

time

when

we

logically

think

it

is

appropriate

to

use

more

than

one

bscp

for

a

flow

for

different

purposes.

So

I

I,

don't

think

I

have

an

architectural.

I

C

C

B

K

B

K

K

So

I

think

this

has

been

presented

before

so

I

will

try

to

move

quickly

for

the

first

few

slides.

Basically,

the

first

few

slides

is

a

brief

introduction

on

the

basic

or

fundamental

cqf

which

using

which

uses

two

buffers,

and

if

we

click

on

it,

the

buffers

will

rotate

for

each

of

the

cycle.

We

call

the

cycle

time

t

or

TC

in

some

of

the

slides,

so

it's

click

here.

They

need

to

move

the

next

hop.

Then

the

next

hop

until

going

to

the

sink.

So

this

is

a

well-known

feature.

K

I'm,

sorry,

well,

no

mechanism

for

the

called

the

fundamental

two

buffer

cqf,

and

it

has

a

formula

here.

Let

me

see

yeah

this

page,

so

the

total

queuing

time

the

minimum

should

be

the

number

of

hops

minus

one

times

the

cycle

time

plus

a

DT,

then

the

maximum

is

the

number

of

hops

plus

one

times

the

cycle,

time

TC

and

minus

DT.

K

So

the

that

time,

the

DT

plays

a

important

role

here,

because

the

DT

basically

is

used

for

to

absorb

all

the

types

of

time

variance,

including

the

the

the

variations

in

the

propagation

delay

and

in

the

Pro

in

the

processing

delay

and

other

other

variations

like

the

clock.

Drifting.

Something

like

that.

So

that's

the

fundamental

cqf

does.

K

So

the

we're

thinking

the

fundamental

cqf

has

a

potentials

to

to

be

widely

deployed

because

the

end-to-end

boundary

the

latency

can

be

easily

calculated.

It's

just

related

to

the

number

of

hops

and

the

the

cycle

time

so

giving

given

the

condition

that

we

have

more

and

more

link

bandwidth.

The

link

speed

is

higher,

so

there

is

potential

that

the

cycle

time

can

be

decreased,

and

so

it

can

be

decreased

from

a

few

hundred

milliseconds

to

a

few

milliseconds.

K

So

that's,

basically

what

the

left

hand

side

talks

about

and

in

that,

in

that

case,

let's

try

to

revisit

the

that

time

record

that

that

time

is

the

time

required

to

absorb

the

time

variation.

So

even

with

the

decreasing

of

the

cycle

time,

the

dead

time

it

Still

Remains

there.

So

basically,

the

dead

time

will

occupies

a

large

proportion

of

the

cycle

time.

K

So

that's

that

means

that

that

time

will

eat

up

the

cycle

interval

when

the

cycle

time

is

more

that

results

in

the

low

link

utilization

or

sometimes

it

becomes

impractical,

because

that

that

time

is

so

large

that

that

it

basically

is

100

or

even

more

of

the

cycle.

Time,

for

example,

when

we

take

the

cycle

time

to

be

like

a

10

millisecond,

then

the

dead

time

is

normally

like

a

a

few,

a

few

milliseconds

like

three

or

five.

Then

it

would

becomes

impractical.

K

So

that

gives

her

some

requirements

of

deploy

the

cqf,

the

fundamental

cqf

in

large

that

network.

So

there

are

one

two

three

four:

there

are

four

requirements

we

are

trying

to

address.

The

number

three

and

number

four

here:

number

three

is

the

longer

links

introducing

longer

propagation

delay

and

number

four

is

the

larger

processing

time

variance

variations

because

there

are

large

number

of

nodes

and

the

node

types

has

great

diversity,

they're

all

different

types

of

nodes,

so

the

variations

we

don't

know

up

front.

K

So

that

would

be

something

would

be

hard

for

the

fundamental

cqf,

because

it

requires

a

shorter

cycle

time

T

in

order

to

achieve

the

lower

end-to-end

boundary

latency.

At

the

same

time,

it

required

to

maintain

a

relatively

large

dead

time

in

order

to

absorb

the

time

variation

and

also

to

maintain

a

smaller

ratio

of

that

time

over

the

cycle

time.

In

order

to

achieve

better

link

utilization,

there

are

some

existing

ways.

K

Actually

we

call

it

a

cqf,

say

cqf

variant

that

to

use

more

than

two

buffers

so

fundamentally,

the

three

buffers

would

work

in

the

rotation

in

the

rotation

manner.

That

is

a

quite

straightforward

way.

I'm

not

going

to

introduce

it

in

great

detail

here,

it's

available

in

the

I

think

in

the

in

the

in

in

some

papers.

We

don't

think

the

new

standard

would

be

required

in

order

to

provide.

K

K

If

you

look

at

lamb

has

signed,

there

are

green

and

and

the

red

small

boxes.

So

basically

the

idea

is

because

there

there

exists

the

time

variation

in

processing,

so

the

receiving

window

will

swell.

So

when

the

cycle

time

is

so

small,

the

receiving

window

time

swells

spending

across

more

than

one

cycle,

then

that

will

give

the

difficulty

in

identify

which

one

is

the

right

side

right,

buffer

right,

output,

buffer,

a

particular

received

the

package

should

be

put

into

this

is

because

the

fundamental

cqf

use

the

time

demarcation.

K

However,

the

time

demarcation

may

not

work

so

well,

because

the

the

the

the

processing

time

variations

gives

the

gives

it

hard

and

to

make

the

right

time

demarcation

unless

the

DT

is

so

great,

so

that

we

can

clearly

separate

the

swelling

receiving

window

time.

So

the

the

way

out

is

to

make

sure

that

the

packet

carry

the

carry

the

cycle

ID

metadata,

so

that

at

the

receiving

part

it

use

that

cycle

ID

to

determine

which

one

which

output

buffer

is

the

right

one

to

be

put.

K

So

that's

the

help

to

help

remove

the

time.

Ambiguity,

so

I

think

this

one

is

some

simulation

result.

If

we

look

at

the

left

hand

side

the

picture,

we

have

a

flow

generator,

then

two

routers,

two

physical

routers.

Basically

a

flow

generator

is

the

both

the

source

and

the

and

the

both

the

top

talker

and

the

sink

for

the

flow.

Then

the

Red

Arrows

means

the

best

effort

flows

as

a

background

and

the

green

arrows

is

the

high

performance

or

the

dead

net

flows.

K

So

there

are

the

the

the

best

effort.

Flows

has

much

larger

Buster

size

and

basically

the

experiment

try

to

show

try

to

examine

actually

whether

the

large

burst

of

the

best

ever

flows

will

impact

the

net

flows

so

the

right

hand

side.

It

shows

that

the

the

Jitter

between

the

sorry,

the

children

of

the

Dead

net

flows,

using

the

the

cycle

ID

based

sqaf,

actually

is,

is

bounded.

K

So

here

is

the

summary

we

are

thinking

the

secret

of

attractive

features

and

the

potentials

for

wider

deployments,

and

the

cqf

Orion

is

straightforward

layer,

3

extension

from

the

fundamental

cqf

by

using

more

than

two

buffers

and

you

and

employ

the

explicit

cycle

ID.

So

the

thing

is

last

time

there

was

a

question

that

there

are

IEEE

has

a

project

802.1

qdv

which

trying

to

enhance

the

cqf.

So

what

we

look

at

it

and

also

why

this

we

this

we

still

want

to.

K

We

put

the

secret

variants

in

the

death

net.

This

is

one

of

the

reason

is

that

we

think

this.

The

second

ID

based

cqf

actually

is

a

simple

extension.

Sorry,

simple,

cqf

variety

to

the

current

published

standard,

which

is

a

fundamental

SQL

2x4,

3x4

cqf

it

doesn't

require.

It

does

not

require

the

major

change

of

the

current

cqf,

like

what's

being

proposed

in

the

ongoing

project,

IEEE

802.1

qdv.

So

that's

the

that's.

The

reason

why

it

was

put

here,

I

think

that's

all.

M

M

M

First,

we

can

guarantee

the

latency

on

end

to

end

the

basis,

not

per

hope,

since

the

E3,

latency

and

latency

bound

of

a

packet

could

be

less

than

the

sum

of

the

bounds

per

hop

here.

The

two

solutions

are

introduced:

regulators

and

The

Meta

Meta

metadata

based

forwarding

the

metadata

could

be

the

so-called

Global

finish

time.

It

will

be

introduced

in

the

next

slide

and

the

Jitter

guarantee

the

cheetah

guarantee

solution

can

utilize

the

already

guaranteed

LED

latency,

guaranteed

Network

and

time,

stamping

function

and

buffering

okay,

so

the

latency

guarantees

Solutions.

H

M

M

M

And

the

next

solution

I

would

like

to

introduce

is

the

fair,

which

is

the

flow

Aggregate

and

IR

is

combination

of

flow

aggregation

and

The

interleaved

Regulators

in

between

so

here

the

the

whole

network

is

divided

into

several

aggregation

domain.

We

call

at

and

the

only

at

the

boundary

of

the

AED,

the

IRS

are

placed,

the

IRS

are

operated

per

flow

aggregate

and

in

a

sense

it

is

a

general

generalized

ATS,

as

you

can

see

in

the

table

in

the

bottom

left.

M

The

fair

framework

even

encompasses

the

interserve,

which

is

which

doesn't

have

a

regulator,

but

the

scheduler

is

based

on

the

flows.

The

cues

are

assigned

per

flow

and

the

queues

are

served

accordingly,

but

maintaining

flows.

Maintaining

Q's

per

flow

is

too

complex.

So

if

you

do

not

accept

in

service,

we

do

not

accept

into

surveys

our

Solutions.

M

M

These

analysis

is

too

complex,

so

I

will

just

briefly

go

over.

So

the

basic

idea

is

is

that

the

network

is

very

large.

They

are

divided

into

several

parts

called

ad

and

the

the

topology

and

the

flows

Are

all

completely

symmetrical

and

the

flows

are

all

identical

in

that

case,

if

we

divide

the

network

into

proper

ads,

you

can

have

a

test.

M

M

It