From YouTube: IETF-T2TRG-20220504-1300

Description

T2TRG meeting session at IETF

2022/05/04 1300

https://datatracker.ietf.org/meeting//proceedings/

A

C

B

B

I'm Carsten Bormann. I'm chairing this group together with Ari Keränen, and I need to do a little bit of bureaucracy at the start. This is my version of the so-called Note Well slide, which tells you three things. You may be recorded; actually, you will be recorded, as you can see from the red recording blob down on the screen.

B

B

B

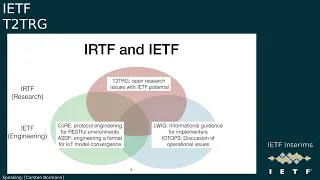

It's easy to confuse the IRTF with the IETF, and that's somewhat okay, because we are closely related organizations. But the Research Task Force focuses on longer-term research issues, while the IETF really focuses on creating standards: the short-term issues of engineering and standards making. So what we are not discussing today is making standards. We might be discussing what we would like to ask the IETF to make standards for, but we are not a standards development organization, which doesn't mean that we don't occasionally create RFCs.

B

B

We have a Jabber connection to the chat, but you can see the chat on the left part of your screen if you click the chat button, so you usually don't have to go there. And of course we have a mailing list in the research group, to which it makes sense to subscribe. You will not be totally drowned with mail; we have a relatively low-volume mailing list. We also have a GitHub organization, and we usually have a repository per meeting. So this is the May 2022 meeting.

B

D

Thank you. Since we have many first-time IETF attendees here, just a quick note on the Meetecho system. If you have any questions or comments on the presentation, you can use the join-queue button on the top left part of your screen. Just click it and you will join the queue, and then we will let you know when it's a good time for you to talk. That's the best way to join for comments.

B

B

It's meant to be discussing research issues that have become of interest, or still are open, in getting an Internet of Things going that is not just using the name Internet but really uses Internet technologies. There are many aspects to this, but one that's important enough to put on the slide here is that we are talking about low-resource nodes, constrained nodes, that need to be able to be part of the Internet of Things.

B

So we focus on issues that might have opportunities for IETF standardization, but of course catering to the general research community is of interest here as well. And with respect to the IoT, we really do this full stack: we start down at the IP adaptation layer, right above the radio, and we end with application-layer concerns, security concerns, and so on.

B

So, within the organizations IRTF and IETF, there are three blobs, and we are part of the IRTF blob. The green blob really is the working groups. There are, I think, 13 right now; I'm just mentioning two here. And there's also a blue blob, which are working groups that really look less at protocol development and more at: how do you actually run these things? How do you actually implement these things?

B

So I cannot do a full discussion of the organization here, but I think it's important to keep this in mind, and that's why I have another slide here. The IETF does the standards, from the IP adaptation layer higher on to transfer protocols and profiles for transport protocols, security mechanisms, application data formats, and data models. We have created 16 working groups since 2005, three of which are closed because they have completed their work. That's something we tend to do in the IETF when we are done.

B

B

So the plan is to do a quick introduction, and I'm already five minutes over time. Then we will have two talks from people who haven't had a lot of contact with the IRTF before, and there is the Digital Twin Consortium behind that, so I'm very interested in hearing this point of view. We will reserve a little time for clarifying questions after that. We probably don't want to go into the big discussion; we can do that at the end, but maybe we can.

B

B

E

Hi, I am starting to share my screen. You should see that now? Yes? Okay, great, thank you very much. I am Anto Budiardjo. I'm going to be presenting some work that we've been doing the last few years. By way of introduction, I have been developing integration platforms for building systems, which is really IoT, for about 30 years.

E

So I have a lot of experience in trying to bring systems together at that level, and this work on CNS/CP, also known as connection profiles, came out of work that we've been doing the last few years in developing an integration platform in the internet era, as it were. We came up with this mechanism, and it became clear to us, as we started to think about deploying and implementing it, that it should be something that is put in the public domain.

E

So we are in the process of doing that, making connection profiles open, and we can speak a little bit more about that. One of the things we've been doing the last couple of years is testing out this mechanism within the Digital Twin Consortium community, essentially sort of incubating this idea, and it's been a very, very interesting experience there, testing it out with a number of really different use cases that we may be able to talk about towards the end.

E

E

So it's about 10% of the world GDP. It's a big problem, and obviously there's value in trying to figure it out. The way we started to think about it is this: because the world economy is, by definition, extremely complex, I was trying to simplify what a system of systems is, and came back to nature and to thinking about a flock of birds, because a flock of birds is clearly a system of systems.

E

There are two types of entities here, a flock and birds. They obviously organize into a flock, which is a system, and the birds, as animals, are systems. So when we dig into this and start to understand how a flock of birds works (how do they know how to do this, and they do this over and over again), the thing that became interesting is really the relationships. The only relationship that really exists in a flock of birds is the relationship between one bird and another.

E

One bird can know that it's following another bird, and so on and so forth. All of the birds have some kind of mechanism like this, and that is essentially what makes the flock. There's nothing else. There's no other entity that's actually forcing the birds to fly in that way. It's actually the birds themselves.

E

So we then tried to take that to a sort of systems discussion, and this is the way we decided to depict it. We have essentially a system of systems, depicted by the gears that you see here, that is made up of these constituent systems, which are essentially equipment and other things in IoT and digital twins.

E

But we already know from the flock of birds that the gears aren't really there, so they're sort of invisible gears, and what you're left with is a whole bunch of nodes, which we refer to as interface nodes. Think about the interface nodes as the brains of the birds and the equipment here as the bodies of the birds. The brain of the bird knows about things. It knows about the system of systems, it knows what's around it, and it also knows about itself.

E

It knows about its health, etc. Once we start thinking about it this way, each node becomes unique, and once each node becomes unique, we start to be able to think about relationships between two entities, two birds as it were. So L and K are two entities, and this is really the relationship that we see as being valuable. A connection profile is a mechanism to model the behavior of that relationship, or the contents of that relationship, and that's really the premise of connection profiles.

E

The way connection profiles work is that the constituent systems, the client system and the server system (there's always a client and a server in the connection profile mechanism), instantiate themselves into the system of systems, creating these sort of virtual nodes. So you have the two virtual nodes as part of that instantiation.

E

They provide information such as the context that they're interested in: if you think of the birds, that particular flock of birds as opposed to the one that's five kilometers south of it. The mechanism then asks the systems to declare what they are able to do in terms of connection profiles. Connection profiles all have names, so the example here is proto.example.cis.

E

All the profiles are given names such as that. What this client is saying is that it can consume this profile, and the server says it can serve that profile in this particular context. So that's the premise of what needs to happen. What the system of systems does, as a broker, is look at that and say: okay, there are two compatible, complementary connection profile declarations in a particular context.
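
The matching step described here can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the CNS/CP implementation: the `Declaration` type, its field names, the node IDs `L`, `K`, `M`, and the context names are hypothetical; only the profile name `proto.example.cis` and the client/server roles come from the talk.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Declaration:
    node_id: str  # hypothetical identifier of the declaring system
    context: str  # which "flock" the system is interested in
    profile: str  # connection profile name, e.g. "proto.example.cis"
    role: str     # "client" (consumes the profile) or "server" (serves it)


def find_matches(declarations):
    """Pair each client with every server that declared the same
    profile in the same context."""
    matches = []
    for c in declarations:
        if c.role != "client":
            continue
        for s in declarations:
            same_scope = (s.context, s.profile) == (c.context, c.profile)
            if s.role == "server" and same_scope:
                matches.append((c.node_id, s.node_id))
    return matches


decls = [
    Declaration("L", "flock-north", "proto.example.cis", "client"),
    Declaration("K", "flock-north", "proto.example.cis", "server"),
    Declaration("M", "flock-south", "proto.example.cis", "server"),
]
print(find_matches(decls))  # [('L', 'K')]
```

The server `M` is not matched because it declared the same profile in a different context, which is the "flock five kilometers south" case.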

E

So we think about this as metadata; we think about this as a control plane of this whole system. In many cases, in most cases in fact, in IoT and digital twins, the systems themselves have some kind of protocol and some kind of need to communicate directly with each other. The connection profile mechanism allows this to happen, with the metadata potentially being communication parameters that will inform how the data flows directly between the client and the server.

E

So this is the mechanism itself in its simplest form. The other way to think about this is when it's not a digital twin. The mechanism is the same; everything above is the same. The difference here is that we can think about the connection instance as passing data rather than metadata. So both apply, and the mechanism is the same for both. As for the connection profile itself, this is an example of one. It's actually pretty simple. This is header information.

E

The most important part of this is the stuff on the right-hand side, because this describes what type of server this connection profile was created for, what type of client, what type of application, and what kind of purpose. So you can think of a connection profile as a codification of a use case, of why two systems need to communicate to do something. And the payload of a connection profile is these:

E

What we call properties. The properties are tagged as coming either from the server or from the client, and these properties need to be filled in at instantiation, not at the model stage. So here there are a number of properties, URI, cost; they can be any type of property that makes sense for that particular application that's defined in the header. The client also has to provide some properties, as per the use case.
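
As a rough illustration of properties that are tagged by provider and filled in only at instantiation, here is a minimal Python sketch. The class and field names are assumptions made for this example, as is the assignment of `uri` and `cost` to the server and the `account` property on the client side; the talk only says that such properties exist and are tagged as server or client.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Property:
    name: str
    provider: str  # "server" or "client": which side must supply the value


@dataclass
class ConnectionProfile:
    name: str
    properties: list  # the model lists property names only, never values

    def missing(self, role, values):
        """Names of properties that `role` must supply at instantiation
        but that are absent from `values`."""
        return [p.name for p in self.properties
                if p.provider == role and p.name not in values]


profile = ConnectionProfile(
    name="proto.example.cis",
    properties=[
        Property("uri", "server"),
        Property("cost", "server"),
        Property("account", "client"),  # hypothetical client-side property
    ],
)

print(profile.missing("server", {"uri": "coap://example.invalid/x"}))  # ['cost']
print(profile.missing("client", {"account": "42"}))                    # []
```

Keeping values out of the profile itself mirrors the distinction drawn in the talk between the model stage and instantiation.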

E

Obviously, there's a whole bunch of them, and the full picture is that there is then a connection profile registry, where all the connection profiles are put. There's some sort of analogy to DNS, so think about this as being very similar to how DNS servers work.

E

E

As I mentioned earlier, these are the open-source sort of mechanisms and licenses that we think are appropriate for the different components. All of this is done for innovation to happen on the client end, on the server end, or on the orchestrator itself, and obviously this is where we work. We think it's a very useful mechanism to make things connect with each other. So philosophically, this is really what's going on here.

E

We believe that we currently live in what we describe as an endpoint-centric world. If you take a diagram such as this, which we see all the time all over the place, what we do is think about the nodes; we think about the endpoints, because the endpoints are where computing is, where applications are, where data is, where people are, where things are. Everything that we as humans do is typically a node.

E

E

We think there's a better way of thinking about this, and we call it a relationship-centric view, where the endpoints are the same endpoints we had before; nothing has changed there. The difference is that the lines then become relationships, and what we're really proposing is that we should be managing and thinking about those relationships, because that's really where the value is.

E

I think we all know that static data, if it just stays there forever, is actually of no use. Data is useful when it gets used and when it's transferred from one system to another. So the thesis here is that we should really be managing that and extracting the most out of that. So, as a way of explaining this:

E

What we have to do today is have a whole bunch of other parameters, metadata, and other information to enable that data to go from one side to the other: everything from the URI of the server to keys and certs and all sorts of business rules and stuff like that. We need to have these on both sides, and they need to synchronize and match before data can actually flow. And there are really only two main ways that we have to do that.

E

One is through the very comms pipe that we want to put the data in, so we're using the comms pipe to control the comms pipe, which is not really a good way to think about it. That's the main way we're doing it. And for really, really critical information, we resort to ourselves, humans, sneakernetting it. Think about things like API keys: the way we do that is we email them or copy and paste them to each other. So this is the way that we have to synchronize this.

E

We think this is very, very expensive. All of this is very expensive, very risky, and actually creates a lot more issues than it solves, but it's the way we do things at the moment. So the first challenge is that synchronizing everything is hard. The other challenge is that this picture here of the network, as it were, is not normalized.

E

In this complex world that we live in, as we move to relationships, relationships are also complex, in a different way. Here we think about humans: when we have relationships, there are different layers of aspects between two humans. We have trustworthiness, so somebody would have a set of trustworthiness. Carsten, for example: I know that he's a member of the IETF and IRTF. So when we started to communicate, I knew who he was, and he knew who I was through other sorts of mechanisms.

E

We also have other ways of determining our trustworthiness, and when two individuals or two systems need to communicate, that then creates a level of trust between them that basically says it's okay to have this relationship. Then there are issues of capabilities. Carsten has a certain set of capabilities, and so do I, and when they match each other, there's compatibility, so there's some usefulness in a relationship between the two. And then there's state, which is the current state of the two entities.

E

The current state of Carsten is as he was presenting it in the opening, in terms of explaining this work group, etc., and I have a similar thing. There is a context that defines that there is some usefulness in us actually having a conversation. And lastly, we're actually having a conversation. So this works in the human sort of world.

E

E

It normalizes everything. The picture on the top is normalized using CNS, because I now know that this line here is made up of some CNS connections of a certain connection profile name. With connection profiles, it's all modeled, and each of the connection profiles is then modeled so that it is predictable and normalized, depending on the needs and the applications involved. And the whole thing is dynamic and composable. So we've gone from a completely unnormalized world into a very normalized world. So that's kind of the...

E

What we think is the proper value proposition of this technology. Getting to some tactical level, how does this work? Imagine a connection profile called test.abc, where the client is obligated to provide two properties and the server provides two different properties. When the client starts up, it does a registration. It publishes itself to the orchestrator, to the broker,

E

With a node ID, with the context that it is interested in, the connection profile that it's able to do, and the role, in this case the client, and the properties foo1 and foo2. It sends all of that to the broker. The broker says: thank you very much, I now know what you want and what matches I'm looking for, and have a nice day. That's kind of it.

E

Sometime later, a millisecond later or a year later, the orchestrator, the broker, finds a match, and it basically starts a flow of what we're thinking of as a subscription. Basically, "connected" is sent to the client: the information about the match, which is obviously a server, with those properties bar1 and bar2. From here on in, there is an ongoing bidirectional flow of information, one going from the client to the server and another one going from the server to the client, simultaneously.

E

So that's how the mechanism works. Put into a sort of JSON way of thinking about it, this is the same flow. This will be a publishing packet, the manifest, and this will be what comes back. Here is the node ID, and this is the connection, etc. Beyond that, ongoing, it's just the values flowing back and forth. Just sort of zoom...
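
The slide's JSON is not captured in this transcript, so the following Python sketch only illustrates the kind of messages the flow implies. Every field name (`type`, `nodeId`, `connectionId`, and so on), the node and context identifiers, and the placeholder property names `foo1`, `foo2`, `bar1`, `bar2` are assumptions for illustration, not the actual CNS/CP wire format; only the profile name `test.abc` and the client/server roles come from the talk.

```python
import json


def publish_manifest(node_id, context, profile, role, properties):
    """Message a node sends to the broker when it starts up
    (field names are illustrative only)."""
    return {"type": "publish", "nodeId": node_id, "context": context,
            "profile": profile, "role": role, "properties": properties}


def connection_notice(connection_id, peer_manifest):
    """Message the broker sends to one side once a complementary
    peer has been found."""
    return {"type": "connection", "connectionId": connection_id,
            "peer": peer_manifest["nodeId"],
            "properties": peer_manifest["properties"]}


client = publish_manifest("node-1", "plant-a", "test.abc", "client",
                          {"foo1": 1, "foo2": 2})
server = publish_manifest("node-2", "plant-a", "test.abc", "server",
                          {"bar1": "x", "bar2": "y"})

# What the client would receive about the matched server.
notice = connection_notice("conn-1", server)
print(json.dumps(client))
print(json.dumps(notice, indent=2))
```

After the notice, only changed property values would need to flow in each direction, which matches the "values flowing back and forth" description.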

E

And this is just sort of how this works. Think of an environment where there are two different contexts; you can think of this as a factory with a manufacturing plant and an admin plant, let's say. A system instantiates itself and says: here's me, I'm system one. I can do these four things, these four connection profiles, A, B, C, and D; A as a server and the others as a client. Right now there's nothing else in the environment, so it's not connected to anything.

E

E

So if system X goes away, it can actually go back to that state. You can then go on, and there are obviously other lines here that we can explore later. So this is the interesting thing about connection profiles: it creates this dynamic network. I'm starting to think about this as a network.
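
The dynamic behaviour described here, where links appear when a complementary system registers and disappear when it leaves, can be sketched as follows. The `Broker` class, its method names, and the roles given to `systemX` are hypothetical; the four profiles and the roles of system one follow the example in the talk.

```python
class Broker:
    """Toy broker: connections are derived from the current declarations."""

    def __init__(self):
        self.nodes = {}  # node id -> set of (context, profile, role)

    def register(self, node_id, context, roles):
        self.nodes[node_id] = {(context, p, r) for p, r in roles.items()}

    def unregister(self, node_id):
        self.nodes.pop(node_id, None)

    def connections(self):
        """All (client, server, profile) links that currently exist."""
        links = set()
        for cid, cdecls in self.nodes.items():
            for ctx, prof, role in cdecls:
                if role != "client":
                    continue
                for sid, sdecls in self.nodes.items():
                    if sid != cid and (ctx, prof, "server") in sdecls:
                        links.add((cid, sid, prof))
        return sorted(links)


broker = Broker()
broker.register("system1", "manufacturing",
                {"a": "server", "b": "client", "c": "client", "d": "client"})
before = broker.connections()   # nothing to connect to yet

broker.register("systemX", "manufacturing", {"a": "client", "b": "server"})
during = broker.connections()   # two links, one per complementary profile

broker.unregister("systemX")
after = broker.connections()    # back to the unconnected state
print(before, during, after)
```

Because the links are recomputed from the declarations rather than stored, removing a system automatically returns the network to its earlier state.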

E

Lastly, this has really got a big question mark on it. We're trying to figure out how this fits in with a seven-layer stack. We think it's somewhere like that, but we're not sure and would love to get feedback. I think that's some work that needs to be done. So that's basically my presentation.

B

B

B

F

Before that, I spent a good 15 years integrating every kind of control system that you can imagine: building systems, transmission systems, distribution systems, a large fluidized-bed coal plant, cogeneration systems, thermal storage systems. And just to make it more fun, all of this was at a US state university, which means every building was a low-bid government contract, which means nothing talked to anything else. There were no standards about what the systems were in there, so that made me focus very much on issues of integration and other stuff.

F

Before I do anything else, having done standards for years, I want to say a couple of things about what good standards are. I think anybody who's on this call knows this, but I think it's worth saying aloud anyway. Good standards are stable. You don't want to have a big, complex standard that changes all the time, every time something new comes. They have to be visible. People have to know how to use them and see them and build their own value on them.

F

iCalendar comes in as just another standard in the stack. We mix and match: if someone's using plain text instead of HTML, mail handles it. A few years back people were using RTF instead of HTML; standards just handle it. So you want modular standards that can compose and adjust as time changes, and that's very much my way of thinking about this stuff and how this works.

F

One of the things I sent ahead of time was, if you guys like, a sort of early draft of something that might be a standards-track RFC. It needs more work, but I shared it early, and I don't know whether that was shared with anybody other than Carsten when I mailed it out. We can talk about that later. I said I'd talk about more detailed things; I was probably assuming I was going to be talking about that too.

F

F

I was with the team that updated all the iCalendar standards in the last decade or so. I don't know if you've been tracking them as a group, but there's a new iCalendar, there's a new vCard, there's a new free/busy, and there's an entirely new standard, Availability, which is very important for digital-twin kind of stuff. Availability is: oh, this is available during business hours. Oh, but what are business hours for you? So it's repeating patterns over time for scheduled negotiations. Within smart energy,

F

As the editor of the Energy Market Information Exchange, which is used in and has appeared in IEEE standards, and Energy Interoperation, of which OpenADR, which is demand response in buildings, is a standard from a decade ago: I wish it was lighter and looser than it was, but now there's an installed base, so it's hard to clean it up. There's now Common Transactive Services coming out, which is very much lighter and looser profiles of those standards. I'm working with the Spatial Web, which is similar to the digital twin efforts.

F

But there was a while where we went to walled gardens, to AOL, to CompuServe, to bulletin boards, and then it got open; you could go everywhere. And then we've all fallen back to: oh, but the entire internet lives in seven data centers worldwide, which is a bad scenario for lots of things. So part of the goal of the Spatial Web is: how do we make it entirely decentralized again, including decentralized identifiers?

F

How can I establish an identity for this context, in this place, without going back and having my identity checked out from a central repository that knows where I'm going and using it? So there's a whole bunch of standards in that effort, which I may talk about later. And I've been working with Anto on connection profiles, trying to get that developed, trying to get that moved up to a point where it's light and loose and easy to use for multiple purposes.

F

F

So I want to talk for a minute about the challenge of the Internet of Things, which includes digital twins, which is that it's much more diverse than typical IT. There are more types of things. I mean, at some level, everything in typical IT is everything that was in a Novell network. You know, there's a database server and a file server and a web server and clients, and fine, the protocols have changed, but that's a pretty simple framework. But you get into things.

F

So that's another whole class of diversity that you get in the Internet of Things. Cyber-physical security is ill-defined at best for most people. You know, I could be hacking an autonomous car by having an LED flashlight on the side of the road and hitting the sensors in the right way.

F

The cybersecurity profile is much, much more complex than in a traditional IT system. Configuration often requires deep domain knowledge, and a lot of early attempts at doing the Internet of Things came as a result of people who thought they understood something because they were always the smartest guy in the room, but they didn't understand that other people had studied what they were doing for a long time. To me, with my smart grid background, one of my favorite examples of that is the disease that we now call Legionnaires' disease.

F

The reason it's called Legionnaires' disease is that the utility guys, who are the smartest because they're electrical engineers, told the hotel how to save energy in the wake of the 1970s energy price shocks. They told them: oh, you don't need to run the fans after a cooling cycle's over. That's just wasting energy.

F

F

This slide right here shows the cyber-physical security challenge, and it's not quite clear who that published surface is securing. Is it securing the crocodiles? Is it securing the researcher who's hiding in the crocodile mask? Because the server can be hacking the client, just as the client can be hacking the server. The Internet of Things and digital twins is just a much more complex environment.

F

F

The goal of that is to think: so, maybe I'm a company that's making a piece of equipment. I can publish what a connection looks like. Maybe I'm an integrator who does some special thing. I can publish what my interface looks like. And all those are universally available, like DNS. You know, the actual lookups are public, but the use of them is private, like DNS, so it's

F

F

So to me, one of the big, exciting things about the CNS/CP system is that it intrinsically provides documentation of the decisions you made about connections already. I want to talk about this one just a little bit. It's very easy to focus on connection profiles here: that this thing is connecting to that.

F

It's exposing that this is connecting to that, and there's a one-to-one connection. But this node here is potentially more interesting. This node's connected to two: is it adding them together, or having a voting system, before it exposes to something else? Can you build a framework of connectivity and knowledge and actors within a CNS/CP system to bring different connections from different places into new meta-information?

F

That's got new value, and I believe you should be able to compose this, and I think we've got a proposal here today that enables you to do that. So when describing it, it's easy to focus on this connection down here. But this connection going to that, where it's translating, distributed to that, where it comes in as one of the three voting aspects that go into that before it comes to that,

F

That is actually a more interesting fabric in many ways, because that's where you get additional value from the network, from the intentionality of the network, and from how it's applied. So I think whatever we do, we need to enable the capability of the network talking to the network being an additional new source of value.

F

I

think

cnc

scp,

which

I

sent

out

to

the

group

before,

but

again

I

don't

know

if

it's

distributed,

there's

a

seed

standard

for

other

efforts

that

are

trying

to

do

it.

Digital

twins

has

been

mentioned

clearly,

since

we've

been

working

with

the

Digital

Twin

Consortium

for

some

time

we're

already

embedded

in

them

but

say

P2874.

F

So

I

think

it

gives

you

an

idea

of

what

we're

thinking

about

and

what

the

what

the

pieces

are.

I

gave

these

slides

to

Carsten

in

advance,

which

means

they

can

distribute

them.

I

have

some

references

on

the

work

that

follow,

because

I

do

that

after

discussion.

Nobody

wants

to

see

them,

but

sometimes

people

want

to

look

up

what

I

was

talking

about

and

with

that

I

welcome

any

questions

or

comments

over.

B

A

B

G

F

E

Never

changes

is

immutable

forever

because

it

doesn't

describe

how

you

get

to

something

it

describes

the

the

function

or

the

the

information

of

a

connection

between

two

entities

between

client

and

server

and

the

intent

is

that

becomes

a

specification

that

never

changes

so

going

to

toby's

comment

that

once

you

install

something

20

years

later,

you

could

still

rely

on

that

connection

profile

to

mean

the

same

thing

as

it

was

20

years

previously.
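The immutability point can be illustrated with a small sketch. This is not the speakers' implementation: `ConnectionProfile`, `ProfileRegistry`, and the identifier string are hypothetical names chosen for illustration, and the only idea taken from the talk is that a published profile never changes, so a lookup years later returns the same meaning.

```python
from dataclasses import dataclass, FrozenInstanceError


@dataclass(frozen=True)
class ConnectionProfile:
    """Hypothetical immutable description of what a client and a server exchange."""
    profile_id: str    # illustrative identifier, not taken from the talk
    properties: tuple  # names of the values carried on the connection


class ProfileRegistry:
    """Hypothetical registry that accepts each profile identifier only once."""

    def __init__(self):
        self._profiles = {}

    def publish(self, profile):
        # A published profile is never overwritten; a change needs a new identifier.
        if profile.profile_id in self._profiles:
            raise ValueError(f"{profile.profile_id} is already published")
        self._profiles[profile.profile_id] = profile

    def lookup(self, profile_id):
        return self._profiles[profile_id]


registry = ProfileRegistry()
registry.publish(ConnectionProfile("example/ambient-room-temperature", ("temperature",)))
```

Under these assumptions, a changed interface would be published under a new identifier rather than by editing the existing one.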

F

G

E

But

the

the

the

ultimate,

the

creation

of

each

connection,

the

answer

is

yes,

but

really

in

in

practice,

what

happens

is

that

that

that

connection,

although

those

connections

or

connections

occur

after

somebody

or

following

somebody,

does

something

manually.

In

other

words,

I

install

a

piece

of

equipment

in

in

a

in

a

factory,

and

then

I

install

it

in

the

system

that

is

the

orchestrator

managing

that

factory

and

that

then

triggers

the

discovery

mechanism

that

is

done

by

the

the

system,

assistance

or

the

broker.

That

then

starts

to

create

the

connection.

F

Let's,

let's,

let's

explore

that,

let's

take

a

very

simple,

almost

trivially

simple

connection

type

ambient

room

temperature.

It's

only

one

value

it's

one

way,

but

maybe

I

want

to

know

it.

I

might

have

a

complex

enterprise

HVAC

system,

which

can

tell

me

that

for

every

room

in

the

building

I

expose

those

each

of

the

context.

The

context

might

be

a

room.

C

F

Or

something

else,

there

has

to

be

some

semantic

standard

that

is

developed

within

a

corporation

to

apply

to

that

profile,

and

then

I

can

say:

look

I've

got

all

these

room

temperatures

that

I

could

expose

to

you

well,

so

the

connections

are

created

to

this,

that

guy

to

this

system

that

wants

to

consume

ambient

room

temperatures

as

as

all

these,

these

connections

are

there,

but

maybe

it

says

I

only

want

to

see

the

lobby.

I

only

care

about

the

lobby,

I'm

not

going

to

actually

wire

anything

else

to

use

it.

F

So

then

the

connections

are

there

but

unused

the

system

has

discovered

them

and

said

you

could

use

any

of

these.

It

shares

the

context

so

they

can

be

used,

but

but

whether

anybody

wants

to

use

them

finds

any

value

in

using

them.

That's

another

thing:

so

connections

have

a

life

cycle

between

when

they've

been

discovered

versus.
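The room-temperature example suggests a simple life cycle: a connection is first discovered and only later, if anyone finds value in it, put to use. The sketch below is one way to model that under stated assumptions. The `Broker` class, the two state names, and the profile and room names are all hypothetical and are not taken from the draft mentioned in the talk.

```python
from enum import Enum


class State(Enum):
    DISCOVERED = "discovered"  # the broker knows the connection could be made
    IN_USE = "in use"          # a consumer has actually wired it up


class Broker:
    """Hypothetical broker tracking connections per (profile, context) pair."""

    def __init__(self):
        self.connections = {}

    def discover(self, profile, contexts):
        # Discovery registers every possible connection without using any of them.
        for context in contexts:
            self.connections.setdefault((profile, context), State.DISCOVERED)

    def use(self, profile, context):
        key = (profile, context)
        if key not in self.connections:
            raise KeyError(f"no discovered connection for {key}")
        self.connections[key] = State.IN_USE

    def unused(self):
        # Contexts whose connections exist but that nobody consumes.
        return sorted(ctx for (_, ctx), state in self.connections.items()
                      if state is State.DISCOVERED)


broker = Broker()
broker.discover("ambient-room-temperature", ["lobby", "room-101", "room-102"])
broker.use("ambient-room-temperature", "lobby")  # the consumer only cares about the lobby
```

Here `broker.unused()` lists the rooms whose temperatures were discovered but never consumed.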

F

Connections

themselves

in

this

system

are

universally

available

and

known

worldwide,

but

a

broker

might

be

private

might

have

any

kind

of

security

decisions.

I

don't

necessarily

want

to

expose

to

my

competitor

the

details

of

connections

that

I

have

in

my

internal

broker,

so

you

have

these

different

realms

of

security

for

different

things

and

that's

outlined

in

the

the

kind

of

early

draft

of

the

RFC.

I

just

I

I

send

out,

but

it

it

also

speaks

somewhat

to

your

your

question.

Carrie,

have

you

got

your

answer?

H

Yes,

okay,

it

showed

that

I

was

unmuted

or

yeah

that

I

was

unmuted

and

but

anyway.

Well,

I

have

a

question

for

my

understanding

and

that

is

for

you

for

your

two

presentations.

Is

this

something

that

is

being

worked

on

inside

the

DTC

as

some

sort

of

agreed

best

practices

on

way

forward

or

how?

How

do

I

interpret

what

you

just

presented

from

from

a

DTC

perspective?

E

H

E

The

way

the

best

way

to

think

about

that

is

a

system

of

systems,

because

in

a

system

of

systems,

environment,

the

com,

the

the

constituent

systems

that

make

up

the

system

of

systems

they

have

to

interact

with

each

other

in

an

interoperable

way.

So

that's

kind

of

how

I

would

link

the

interoperability

topic

with

digital

twin

and

system

of

systems.

I

hope

that

clarifies

things.

H

E

C

E

By

create

by

defining

it

as

a

shell,

the

connection

profile

is

focused

on

how

two

things

talk

with

each

other

and

therefore

defining

the

information.

That's

needed

from

both

ends

at

the

same

time,

and

that's

that's

really

what

this

thing

is

modeling,

it's

modeling

a

relationship

between

things

I

think.

As

far

as

we

are

aware,

all

of

the

other

sort

of

ways

of

modeling

things

is

actually

modeling

a

thing,

not

modeling

a

relationship

that

that

is

the

key

difference

here.

F

E

F

Some

people

believe

that

it's

a

three-dimensional

digital,

complete

representation

of

every

single

thing

completely,

and

you

need

that

for

some

things.

Some

people

believe

that

it's

a

a

complete

record

of

every

nut

and

bolt

that

was

put

into

a

complex

facility

or

was

constructed

it's

static.

But

but

that

way,

if

some

kind

of

bolt

rusts,

you

know

where

that

bolt

needs

to

be

replaced.

That's

one

definition.

F

H

F

H

A

B

So

basically

they

are

thinking

about

a

network

as

something

that

is

worth

having

a

digital

twin

of

for

for

a

number

of

reasons,

and

they

define

this

as

something

that

has

data

both

historical

and

real

time.

That

has

models

which

allow

you

to

find

out

what

something

should

be

doing,

what

something

will

be

doing,

and

maybe

what

something

is

doing

in

a

different

way

than

you

thought.

B

So

maybe

it's

doing

it

wrong

and,

of

course,

it's

a

number

of

interfaces

between

the

network

and

the

digital

twin

and

between

the

digital

twin

and

the

applications,

and

they

are

looking

at

this

from

a

network

management

point

of

view.

So

they

really

want

to

analyze

what's

going

on,

diagnose

deviations

that

they're

experiencing,

they

actually

want

to

be

able

to

decouple

the

digital

twin

for

a

moment

and

run

it

as

an

emulation

system

to

see

what

happens.

F

Knowing

that

the

relationship

that

things

have

are

complex

and

we

don't

know,

we

can't

look

necessarily

for

one

particular

thing

happening

to

hack

them,

but

we

can

notice

that

somehow

they're

being

dragged

out

of

the

right

performance

out

of

alignment

with

the

emulation.

So

this

is

a

key

cybersecurity

feature.

That's

that's

going

on

in

some

of

the

groups

I'm

meeting

with.
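The idea of noticing that a running system has drifted out of alignment with its emulation can be sketched as a comparison of observed and emulated metrics. This is only an illustration of the principle described here: the function name `find_deviations`, the metric names, and the 10% relative tolerance are assumptions, not anything specified in the talk.

```python
def find_deviations(observed, emulated, tolerance=0.1):
    """Return names of metrics whose observed value differs from the emulated
    value by more than `tolerance` (relative), or that are missing entirely."""
    flagged = []
    for name, expected in emulated.items():
        actual = observed.get(name)
        if actual is None:
            flagged.append(name)  # the twin predicts a value the system never reported
            continue
        scale = max(abs(expected), 1e-9)  # avoid division by zero
        if abs(actual - expected) / scale > tolerance:
            flagged.append(name)
    return flagged


# Hypothetical metric names and values, for illustration only.
emulated = {"latency_ms": 10.0, "throughput_mbps": 100.0}
observed = {"latency_ms": 10.5, "throughput_mbps": 60.0}
suspicious = find_deviations(observed, emulated)
```

A check like this does not say what went wrong; it only flags that behavior no longer matches the emulation, which is the signal the speaker describes.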

B

Okay,

so

that

was

my

two-minute

presentation

of

of

what

the

network

management

research

group

is

up

to

now.

I

quickly

want

to

talk

about

SDF

because

that

that's

actually

a

data

and

interaction

modeling

activity

that

has

been

going

on

for

a

while

and

which

we

make

use

of

in

the

next

two

talks.

So

I

I

must

admit

I

didn't

massage

my

slides

a

lot

here,

but

I

think

we

can

still

use

them.

B

So

it

makes

sense

to

talk

about

a

common

format

first

and

the

the

interesting

thing

that

happened

was

that

OneDM

decided

they

really

want

to

work

on

the

data

models.

So

the

the

definition

format,

discussions

were

outsourced

to

the

IETF

and

that's

great,

because

the

IETF

is

very

good

in

solving

limited,

well-defined

problems,

which

is

essentially

what

we

got

from

from

OneDM,

and

there

is

now

a

working

group

called

ASDF.

B

So

this

defines

classes

of

things

and

while

things

might

have

some

some

data

internally,

what

really

defines

them

is

their

interactions

with

their

peers

in

the

digital

world,

which

might

be

a

digital

twin,

but

it

might

also

be

something

very

different.

So

if

a

light

talks

to

a

light

switch,

that's

one

of

the

interactions

we

want

to

model

here,

and

we

do

this

by

borrowing

terms

from

from

the

human-computer

interaction

world

where

computers

or

computer-based

systems

have

affordances,

which

are

the

the

knobs

and

and

sliders,

and

so

on.

B

That

can

be

used

to

do

something

with

the

physical

item

and

the

physical

item

reacts

to

that

by

by

actually

implementing

these

affordances,

and

we

have

three

big

interaction

patterns

here:

property,

action,

and

event.

I'll

talk

about

that

in

a

bit

and

finally,

we

do

have

some

common

data

structures.

So

if

you

have

an

RGB

lamp,

you

probably

have

some

common

idea

of

what

the

color

is

and

you

can

define

that,

independently

of

any

specific

property,

action,

or

event.
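As a rough illustration of the structure just described, the sketch below builds an SDF-style model for an RGB lamp as a Python dictionary. The lamp, its `color` property, `fadeTo` action, and `overheated` event are invented for this example. The key names (`sdfObject`, `sdfProperty`, `sdfAction`, `sdfEvent`, `sdfData`, `sdfRef`) follow my reading of the SDF drafts and should be checked against the current ASDF specification before use.

```python
import json

# Illustrative model only; not an official OneDM or ASDF model.
lamp_model = {
    "info": {"title": "Illustrative RGB lamp"},
    "sdfData": {
        # Shared data structure, defined independently of any one affordance.
        "rgbColor": {
            "type": "object",
            "properties": {
                "red": {"type": "integer", "minimum": 0, "maximum": 255},
                "green": {"type": "integer", "minimum": 0, "maximum": 255},
                "blue": {"type": "integer", "minimum": 0, "maximum": 255},
            },
        }
    },
    "sdfObject": {
        "Lamp": {
            "sdfProperty": {"color": {"sdfRef": "#/sdfData/rgbColor"}},
            "sdfAction": {"fadeTo": {"sdfInputData": {"sdfRef": "#/sdfData/rgbColor"}}},
            "sdfEvent": {"overheated": {"sdfOutputData": {"type": "number", "unit": "Cel"}}},
        }
    },
}


def resolve(model, pointer):
    """Follow a local '#/a/b' style reference inside the same model."""
    node = model
    for part in pointer.split("/")[1:]:  # drop the leading '#'
        node = node[part]
    return node


color_ref = lamp_model["sdfObject"]["Lamp"]["sdfProperty"]["color"]["sdfRef"]
color_definition = resolve(lamp_model, color_ref)
serialized = json.dumps(lamp_model, indent=2)
```

The property and the action both point at the same `rgbColor` definition, which is the reuse that a common data structure is meant to allow.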

B

But

this

is

essentially

the

the

overall

structure

of

SDF

here.

So,

for

instance,

an

action

is

comparable

to

a

REST

POST.

It's

client-initiated.

It

has

some

input

data

and

some

output

data

and

we

have

other