ACM, IRTF & Internet Society Applied Networking Research Workshop 2017 - Part 3

Prague, Czech Republic

Saturday, July 15, 2017

A

All right, let's start the session. My name is Aaron Falk, I work for Akamai, and I'm going to be chairing the afternoon session. Our first talk is "Implementing IPv6 Segment Routing in the Linux Kernel". Olivier Bonaventure is presenting on behalf of David Lebrun, his co-author. Olivier is a professor at UCL in Louvain-la-Neuve, Belgium, where he leads the IP Networking Lab. His students have contributed to various implementations of IETF protocols, including shim6, LISP, Multipath TCP and now IPv6 segment routing.

A

B

B

The loose source route is inside an IPv6 extension header that contains a list of segments, and there are basically three types of segments. You can specify a node segment, so you can force the packet to pass through a specific router, and in this case you will use a router loopback address as the segment endpoint, so you can force the packet to pass through a specific router.

B

You can specify an adjacency segment, so you can force a packet to be forwarded on one egress link on one router, which forces you to use a specific outgoing interface. It's also possible to use an IPv6 address to encode a virtual function that will be applied to the packet on a router, to support NFV, for example. There are lots of use cases for IPv6 segment routing, and there are working group discussions within the IETF on that. So how do we do that?

B

We define an IPv6 segment routing header, so a new IPv6 extension header. And what do we find in this IPv6 extension header? Well, a list of IP addresses that are the intermediate segments. So we can have a list of segments inside the IPv6 extension header, and each segment uses one IPv6 address. Then, as with the traditional source routing implementation, you have the number of remaining segments and the index of the last segment in the packet, and there is extensibility with TLV objects that you can add inside the IPv6 segment routing header.
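
As an illustration of the header just described, here is a minimal Python sketch that packs a segment list into bytes. The field layout (routing type 4, a "segments left" counter, a "last entry" index, segments stored in reverse order) follows the published segment routing header specification as I understand it; the draft in use at the time of the talk may have differed in details, and the function name and addresses are made up for the example.

```python
import ipaddress
import struct

SRH_ROUTING_TYPE = 4  # routing type used by the segment routing header


def build_srh(path, next_header=6, tag=0):
    """Pack a segment routing header for `path` (first hop first).

    Hypothetical helper for illustration; no TLVs are appended.
    """
    if not path:
        raise ValueError("at least one segment is required")
    # Segments are stored in reverse order: entry 0 is the final segment.
    segments = [ipaddress.IPv6Address(s).packed for s in reversed(path)]
    last_entry = len(segments) - 1       # index of the last list entry
    segments_left = last_entry           # index of the active segment
    hdr_ext_len = 2 * len(segments)      # 8-byte units after the first 8 bytes
    fixed = struct.pack("!BBBBBBH", next_header, hdr_ext_len,
                        SRH_ROUTING_TYPE, segments_left, last_entry, 0, tag)
    return fixed + b"".join(segments)


srh = build_srh(["fc00::1", "fc00::2", "fc00::3"])
print(len(srh), "bytes, segments left =", srh[3])
```

Each router that owns the active segment would decrement the "segments left" field and copy the next segment into the IPv6 destination address; that forwarding step is not shown here.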

B

So nothing really special; what's important is that you have a list of segments inside each packet. What kind of use cases can you address with IPv6 segment routing? You can give full control over your network to the end hosts, and in this case the host will add the segment routing header to all the packets that it sends, so that the packets will be forwarded along a path which is chosen by the application or chosen by the network administrator who is configuring the network.

B

So you can do end-to-end path control inside a network. You can force packets to pass through a specific function, which is a software function that you have placed on one of the routers. So here there is a special function which is running on router 5, and you want this function running on router 5 to be applied to all the packets that are sent by a given client.

B

B

You can also do IPv6 segment routing at the routers only and not at the endpoints. So the endpoint is using plain IPv6. Sorry, it doesn't work with IPv4, but nobody is interested in IPv4. You can encapsulate the packet and add a segment routing header to the packet so that the packet follows a specific path in the network, and then you decapsulate at the egress, which means that you are doing IPv6 segment routing only between the routers and you don't have to mess with the endpoints. Security.

B

So I mentioned we had issues with IPv4; we had issues with IPv6. How do we get rid of those security issues with segment routing? Basically, you can add inside a segment routing header an HMAC TLV, which allows you to verify that the segment routing extension has been inserted by a trusted device. Typically the trusted device would have a special key and, knowing the key, you can compute the HMAC that authenticates the packet.
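
The idea of a keyed check over the segment list can be sketched in a few lines of Python. This is a simplified illustration, not the exact HMAC TLV computation: the real specification defines precisely which header fields are covered, whereas this sketch only hashes the source address, a key identifier and the segments. The function names, the key and the addresses are assumptions made for the example.

```python
import hashlib
import hmac
import ipaddress


def srh_hmac(key, source, segments, key_id=1):
    """HMAC-SHA256 over the source address, a key id and the segment list."""
    data = ipaddress.IPv6Address(source).packed
    data += key_id.to_bytes(4, "big")
    for seg in segments:
        data += ipaddress.IPv6Address(seg).packed
    return hmac.new(key, data, hashlib.sha256).digest()


def verify(key, source, segments, tag, key_id=1):
    """Recompute the HMAC and compare it in constant time."""
    expected = srh_hmac(key, source, segments, key_id)
    return hmac.compare_digest(expected, tag)


key = b"shared-secret"  # hypothetical key configured on the routers
tag = srh_hmac(key, "2001:db8::1", ["fc00::1", "fc00::2"])
print(verify(key, "2001:db8::1", ["fc00::1", "fc00::2"], tag))
```

A sender that does not know the key cannot produce a valid tag for a segment list of its own choosing, which is what lets routers trust the inserted header.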

B

So what's the typical use case? You will configure all the routers in your network with the HMAC key, and then some devices will be allowed to use some specific segments through the network. You give them the full segment routing header that they have to cut and paste into the packets that they send.

B

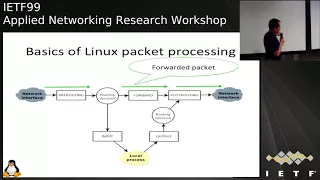

Second point: implementation in the Linux kernel. If you look at what's happening in a typical Linux kernel when you process packets, you go from one network interface to another, and the functions that you apply to the packet start with the prerouting step. Then you have the routing decision that decides whether the packet is local or whether it needs to be forwarded. If it's local, it goes to the input processing and then it goes to the local processes.

B

And then you have the output processing, routing decision, forward and postrouting, and typically you have three paths inside the Linux kernel to process packets. The first one is the horizontal path, where you forward IPv6 packets, where you have prerouting, routing decision, forwarding and postrouting. If the packet is destined to the node, then you will do prerouting.

B

You check that the packet is local and then you do the input processing. And if you send a packet from the node to the outside, then you have the output processing, the routing decision and the postrouting to send the packet. So what do we change with IPv6 segment routing? Well, you have to change the way the packets that contain a segment routing header will be processed. Let's look at two examples.

B

The first one is when the router is one of the segments in the list. So you have received an IPv6 packet that contains a segment routing header, and this segment routing header contains the loopback address of the router as one of the addresses of the segment routing header. So the packet was destined to this node, at least from a segment routing viewpoint. The packet will arrive, the destination of the packet is virtually the router, so it goes to the input processing.

B

So how can we configure IPv6 segment routing with the Linux implementation? Basically, for the control plane on Linux you have iproute2, which allows you to manipulate the routing tables and the configuration of the interfaces, and the kernel receives commands from it through rtnetlink. Basically, in the IPv6 segment routing implementation,

B

we extended rtnetlink to support what is required to manipulate and configure IPv6 segment routing. Here you have an example of the ip command, which applies, of course, to IPv6, where you specify all the packets whose destination is in fc42::/64. So you match the destination address. When you match the destination address, you will encapsulate the packet with IPv6 segment routing.

B

That's the second part of the command, and you will add the segments, which are simply specified as a list of IPv6 addresses separated by commas, to indicate the segments that need to be attached in the encapsulated packet. So this would typically run on an ingress router, where you would have to add a segment routing header to packets that match a given destination, and there are other ways to configure it as well.
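
The exact command shown on the slide is not captured in this transcript. As a hedged reconstruction, the Python sketch below assembles an iproute2 route of the kind described: match a destination prefix, encapsulate with a segment routing header, and list the segments separated by commas. The `encap seg6 mode encap segs ...` syntax is the one I know from iproute2; the segment addresses and the interface name are invented for the example, and the helper only builds the argument list without running anything.

```python
import ipaddress
import shlex


def seg6_encap_route(prefix, segments, dev):
    """Return the argv for an iproute2 route that encapsulates with an SRH."""
    ipaddress.IPv6Network(prefix)  # raises ValueError on a malformed prefix
    segs = ",".join(str(ipaddress.IPv6Address(s)) for s in segments)
    return ["ip", "-6", "route", "add", prefix,
            "encap", "seg6", "mode", "encap", "segs", segs, "dev", dev]


# Hypothetical segments and interface; fc42::/64 is the prefix from the talk.
argv = seg6_encap_route("fc42::/64", ["fc00::12", "fc00::89"], "eth0")
print(shlex.join(argv))
```

On an ingress router the resulting command would be run with root privileges, for instance through `subprocess.run(argv, check=True)`.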

B

Segment routing can also be used directly by applications, and in this case we modified the socket API so that, when you create the socket (for example, this is for a TCP connection), you specify the segment routing header that has to be used for all the TCP packets that are sent over that specific connection.
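
A sketch of what this could look like from an application, assuming the header is attached through the standard IPv6 routing header socket option (`IPV6_RTHDR`), which is my understanding of how the Linux implementation exposes it. The helper name is hypothetical, `srh_bytes` stands for an already packed segment routing header, and the numeric fallback 57 is the Linux value of the option for Python builds that do not export the constant.

```python
import socket

# Linux value of IPV6_RTHDR, used if the constant is missing from the module.
IPV6_RTHDR = getattr(socket, "IPV6_RTHDR", 57)


def attach_srh(sock, srh_bytes):
    """Ask the kernel to add `srh_bytes` to every packet sent on `sock`."""
    sock.setsockopt(socket.IPPROTO_IPV6, IPV6_RTHDR, srh_bytes)


# Intended use (requires a kernel with IPv6 segment routing support):
#   sock = socket.socket(socket.AF_INET6, socket.SOCK_STREAM)
#   attach_srh(sock, srh_bytes)
#   sock.connect(("2001:db8::2", 80))
```

Setting the option before `connect` means the whole TCP connection, including the handshake, would follow the chosen segments.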

B

So this is not very complex. For the HMAC, I told you that the HMAC allows you to verify the integrity and the authenticity of a segment routing header, and there are three knobs that can be controlled on a router to decide how the router processes packets with an HMAC. The first mode is to ignore the HMAC all the time.

B

You can also verify the packets that contain an HMAC and forward the packets without an HMAC. Or you can be strict: if the packet contains an HMAC, you verify it and you process it if the HMAC is valid, and if the packet does not contain an HMAC, then you discard the packet, so those packets would be discarded.
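
The three modes can be written down as a small decision function. This is a sketch of the policy as described in the talk, not kernel code; the enum and function names are made up. To my knowledge Linux exposes the same choice as the per-interface `seg6_require_hmac` sysctl with the values -1, 0 and 1, which the enum values mirror.

```python
from enum import Enum


class HmacPolicy(Enum):
    IGNORE = -1             # never look at the HMAC
    VERIFY_IF_PRESENT = 0   # check it when present, accept packets without it
    REQUIRE = 1             # check it, and drop packets that lack it


def accept_packet(policy, has_hmac, hmac_valid=False):
    """Return True if a segment-routed packet should be processed."""
    if policy is HmacPolicy.IGNORE:
        return True
    if has_hmac:
        return hmac_valid
    return policy is HmacPolicy.VERIFY_IF_PRESENT


for policy in HmacPolicy:
    print(policy.name,
          accept_packet(policy, has_hmac=False),
          accept_packet(policy, has_hmac=True, hmac_valid=False))
```

Only the strict mode protects against a sender that simply omits the HMAC, which is why it is the one that discards unauthenticated packets.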

B

There is a mistake on this slide. So everything has been implemented by David in the Linux kernel. It was initially in Linux 4.10, so it's part of the mainline kernel, and it has been improved, and now the improved version is part of Linux 4.12. So the next time you download a recent Linux kernel, you will have IPv6 segment routing for free and you will be able to play with it. And I've seen that at the hackathon

B

there is a group doing configuration with NETCONF on top of the IPv6 segment routing implementation already, so people are already playing with it. So let's look at the performance of the implementation. To test the implementation we used a lab: we took three Intel Xeon servers, each with four cores and eight threads at 2.3 to 2.5 GHz, with 10 Gigabit Ethernet cards.

B

So we first tried to see, in this setup, what the baseline IPv6 performance is, and we have a bit more than 1.1 million packets per second for 64-byte packets, and we use this as a baseline to compare the performance of the IPv6 segment routing extension. The first test we did was with inline insertion, so we add the segment routing header, or we do encapsulation. There is no significant difference in performance.

B

Sometimes it took the slow path, and it turned out that, since we have multiple CPUs, you had to take a spin lock to be able to do the free and so on, and this slowed down the performance a lot. So we fixed that and improved the performance, so that we now reach 1 million packets per second for inline insertion and almost the same for encapsulation. So we have the same result and we are pretty close to what we get with regular IPv6 forwarding.
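
To put the two figures side by side, here is a small back-of-the-envelope calculation in Python. The inputs are the rounded numbers quoted in the talk (the baseline was "a bit more than" 1.1 million packets per second), and the 20 bytes of per-frame overhead (preamble plus inter-frame gap) assume that the 64 bytes refer to Ethernet frames; both are assumptions of this sketch, not measurements.

```python
def relative_cost(baseline_pps, measured_pps):
    """Fraction of the baseline packet rate that is lost."""
    return 1.0 - measured_pps / baseline_pps


def wire_rate_gbps(pps, frame_bytes, overhead_bytes=20):
    """Bandwidth consumed on the wire for a given packet rate."""
    return pps * (frame_bytes + overhead_bytes) * 8 / 1e9


baseline = 1.1e6   # plain IPv6 forwarding, packets per second (rounded)
with_srh = 1.0e6   # segment routing insertion or encapsulation (rounded)

print(f"throughput lost: {relative_cost(baseline, with_srh):.1%}")
print(f"wire rate at 64 bytes: {wire_rate_gbps(with_srh, 64):.3f} Gbit/s")
```

With these rounded inputs the segment routing processing costs roughly a tenth of the single-core forwarding rate.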

B

The main difference is that we basically have to do two route lookups, because we go through the kernel again, so it's normal that we have lower performance, but the difference is not so huge anymore. So this is reasonable performance for a single core; we have one CPU doing that on a 10 Gigabit interface. We looked at whether there was a difference between long and short packets. In red you see the 1000-byte packets, and in blue you have the 64-byte packets that were the default.

B

There is no significant difference; the cost is the header processing, not the data movement, which is good. We looked at the HMAC: if you have to compute an HMAC with SHA-256 for each IPv6 header that you have to process, there is a cost, and that is no surprise. So you get a bit more than 200,000 packets per second with the generic implementation of HMAC SHA-256 in the Linux kernel. If you use the version which uses special Intel assembly instructions, then you get slightly better performance.

B

B

So, to summarize and to conclude, I can say that, thanks to the work of David during his entire PhD thesis, IPv6 segment routing has matured, so now we have a stable specification. You will see discussions within the IETF. There are many use cases for IPv6 segment routing beyond those that were discussed in this presentation.

C

Hi, so this is really cool stuff. I wanted to ask a question about the bottom one there: unsurprisingly, the HMAC TLV affects performance, because there's a lot more work that has to happen. With the algorithms, or the ciphers, that are used there, is that a thing that could be hardware accelerated in a Linux-based router, or does the design of this preclude that?

B

A

B

A

B

So the question: what happens when you have a waypoint that you cannot reach? It really depends on the use case, on whether you have an end-to-end solution or whether you have encapsulation. So if you have an end-to-end solution like this one, and you have specified that one of the addresses had to be used as a destination,

B

then if this one, say router 6, is not available, you should receive an ICMP message back at the source, and the source will know that there is a reachability problem for router 6. But you are not forced to use router 6: the segment that you use does not necessarily have to be an IPv6 address of one node, so you can have an IPv6 address that corresponds to multiple nodes.

D

B

In a software implementation like the Linux one, the number of segments that you use does not have an impact. But if you go to a hardware implementation, I know that for the MPLS version of segment routing there are some chips that are limited to a depth of three or four, and it's likely that it will be the same for IPv6 as well. But I don't know the specs of the hardware platforms that are used to support IPv6 segment routing.

B

A

E

A

Okay, everybody hydrated, caffeinated? Good, all right. So yes? No, thank you, I'm good, I've got coffee. So our next talk is "Managing Resource-Constrained IoT Devices Through Dynamically Generated and Deployed YANG Models", presented by Thomas Scheffler. Thomas has worked for 20 years in the field of IP-based data communications. Since 2008 he has taught data communications, network engineering and network security at Beuth Hochschule, a university of applied sciences in Berlin, Germany. He has participated in a number of European research projects in the area of IPv6 transition and deployment and network security issues.

A

E

F

Thank you, Aaron, for the introduction. So now on to something completely different. I feel it's much more application oriented, what I'm presenting here today, but maybe it has some implications for the general Internet area as well. This work is growing out of some work I've been doing with my students for quite a while. What we do with them is program these little devices here, which are sort of 8-bit microcontrollers, and try to do IPv6 on them.

F

So basically, this is probably something you've seen yourself. If you look at a normal IoT installation today, it probably looks something like this: you have a jumble of middleboxes and things which aren't really connected to each other. I mean, they may be connected to the Internet, but that's the only common feature you see here. So you've got a lot of different ecosystems, and each one sort of tries to keep its devices close together and to itself.

F

So the fight at the moment is really about who owns these devices, who is getting the data, and how you protect your investment, but it's not so much about making this stuff interoperable. On the other side, it looks like this: each of them comes with its own little app in order to manage it. So it requires a lot of user attention to actually manage such an IoT installation, and it may work in a home environment.

F

But if you're thinking about industrial deployments, that is definitely something which is maybe not the right way to do it, where you have a closed ecosystem with an IoT app on one side, on your mobile phone, and a closed device on the other side. So we really need to open this up a little bit more. That's my idea: the world would make much more sense if you had a much more open installation.

F

So our idea for this work was to ask what needs to be done to break this current status quo, where everybody is building closed ecosystems and everybody lives in their own little bubble, with a lot of supposed standards being developed. But each of these standards is basically just some way to lock you into a certain vendor and a certain set of vendor controls.

F

F

If we talk about IoT, it is an area where we have hundreds and thousands of these devices. You don't want to manage this manually. You don't want to take every single device through some onboarding or some bootstrapping procedure. You want these devices to announce their capabilities and their presence in the network, and so make this network discoverable.

F

So, the obvious xkcd comic. Everybody sort of talks about building new standards, and it doesn't work to go to this scene now and say: oh, we built a new standard, because what will happen is that we only add one more and in the end we get much more confused. And by the way, 15 competing standards in the IoT domain is a ridiculously

F

low number. I mean, you've got many more than this. So that's the current situation, or at least how I perceive it. So let's talk a little bit about NETCONF and how we could use it. NETCONF, as you all know, is kind of the big thing in the IETF. Everybody defines YANG models, everybody uses NETCONF, but if you look outside the IETF, my feeling is that nobody knows it.

F

So nobody really has an idea that there is something other than SNMP and that we could use it for lots of things. It has all these nice features built in: it distinguishes between state and operation, it can manage whole networks and individual devices, it has discoverability built in, and it has a data modeling language which could be used to define services, etc. So NETCONF actually is, I feel, a good starting point for doing a lot more than just managing routers and switches. So we have this protocol.

F

We have the YANG models, which we could use for describing data or describing capabilities of things other than just routers and switches. So what we wanted to do is use these kinds of protocols together with our very low-powered, very constrained devices, and what we found out is that there's actually not a good working model for using NETCONF on those devices. First of all, our devices will not be able to process XML; I mean, that's kind of obvious.

F

F

The other thing is that, if we want to make our devices discoverable, we need to think about data modeling, and if you look at YANG, it's a fairly static way of describing your data, because obviously a router implementation or switch implementation doesn't change much. So you can have a very static versioning system, and you basically know this in advance.

F

F

It was developed basically by IBM, starting in 1999, as a practical solution to monitor physical installations, so it's been through quite a few iterations and it kind of works. It uses TCP, which is maybe not the best solution for such constrained devices, but we've got some implementations running at the moment. So basically, we treat this as a way to push the device configuration and also to get commands back.

F

Why do we use it? Because it uses a publish/subscribe model, so we have a broker in between which decouples the publisher and the subscriber over a topic. A topic is a message channel which allows you to place whatever data you have on it, so maybe some unstructured data, or maybe some binary stuff, whatever.
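
The decoupling described here can be shown with a toy in-memory broker in Python. This is not an MQTT implementation (no network, no wildcards, no quality-of-service levels); the class and the topic names are invented purely to show that the publisher and the subscriber only share a topic string and never reference each other.

```python
from collections import defaultdict


class Broker:
    """Toy publish/subscribe broker: routes opaque payloads by topic."""

    def __init__(self):
        self._subscribers = defaultdict(list)

    def subscribe(self, topic, callback):
        self._subscribers[topic].append(callback)

    def publish(self, topic, payload):
        # The broker does not interpret the payload; it may be any bytes.
        for callback in self._subscribers[topic]:
            callback(topic, payload)


received = []
broker = Broker()
broker.subscribe("devices/sensor-1/config",
                 lambda topic, payload: received.append((topic, payload)))

broker.publish("devices/sensor-1/config", b'{"temperature": "celsius"}')
broker.publish("devices/sensor-2/config", b"nobody is listening here")
print(received)
```

A message published on a topic without subscribers is simply dropped in this sketch, which is also why the publisher never needs to know who is listening.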

F

So how do we put this together? We want to create dynamic YANG models. First of all, we needed to make some bootstrapping decisions, and that's what we did here. If you look at some of the protocols, they really do not have very good onboarding procedures. Even in areas like CoAP, I feel at least that there's something missing which allows you to quickly set up things. So what we did is define a command channel and a device configuration channel.

F

We get a profile, which we push to the NETCONF server. Here we translate this profile into a YANG model. Then we can control the NETCONF server, and this just bridges our commands to the MQTT broker, which again translates them or passes them on to the device which needs to be managed.

F

So the big question here was: what are the basic modeling requirements? What do we need to put into our data models? First of all, we need some sort of device description and identification, but we also have sensors and actuators on our devices, which need to describe what kind of data they are putting out and what kind of command values they are accepting.

F

So these need to be modeled, and we may also have some meta information, like the supported protocols and similar metadata, which needs to be managed, and all this gets written into these files, and I'll show you in a minute how this has been done. There's a lot of work in this area, and there's currently an IoT semantic interoperability workshop next door, and I felt that maybe what I'm describing here was more appropriate to their topic, but I think it's relevant for both of them.

F

So what we did is make an easy choice: we just sort of rolled our own. In order to get started, we simply developed our own JSON format, which fairly closely resembles what YANG is actually doing already. So we are very close to what the final YANG model would look like. You see an example here: we have a device which has a description and some sort of categorization, as you see at the moment.
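
The JSON format shown on the slide is not reproduced in this transcript, so the following Python sketch uses an invented profile to illustrate the general idea of turning a device description into a YANG module. Every key in the profile, the `urn:example:` namespace and the function name are assumptions for the example; only the YANG statements themselves (`module`, `namespace`, `prefix`, `container`, `leaf`, `type`, `config false`) are standard.

```python
import json

# Hypothetical device profile, loosely shaped like a YANG data tree.
PROFILE = json.loads("""
{
  "device": "sensor-1",
  "description": "Temperature sensor on an 8-bit microcontroller",
  "category": "sensor",
  "leaves": [
    {"name": "temperature", "type": "int16", "writable": false},
    {"name": "interval", "type": "uint16", "writable": true}
  ]
}
""")


def profile_to_yang(profile):
    """Render the profile as the text of a small YANG module."""
    name = profile["device"]
    description = profile["description"]
    category = profile["category"]
    lines = [
        f"module {name} {{",
        f'  namespace "urn:example:{name}";',
        "  prefix dev;",
        f'  description "{description}";',
        f"  container {category} {{",
    ]
    for leaf in profile["leaves"]:
        lines.append(f"    leaf {leaf['name']} {{")
        lines.append(f"      type {leaf['type']};")
        if not leaf["writable"]:
            lines.append("      config false;")  # read-only sensor value
        lines.append("    }")
    lines += ["  }", "}"]
    return "\n".join(lines)


yang_text = profile_to_yang(PROFILE)
print(yang_text)
```

Read-only sensor values map naturally onto `config false` leaves, while values that accept commands stay configurable.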

F

F

So what needs to be discussed? A lot of things, I think. First of all, our experience has been that device bootstrapping is far from automatic. Even if you look at drafts from CoAP, where they have something like a repository function where you could put your device configurations, it's not really defined how you get to the point where you can actually register and then query and so on.

F

Something is needed to make this much more painless than it is at the moment, and everybody needs to have a reference point for how to get this information. So maybe some sort of generic IPv6 multicast address, or defining something similar; we have enough addresses, so let's just use them for some good purpose.

F

Here you get about six times the amount of configuration data which needs to be pushed from the device. What we are currently doing is looking at whether you can do some translation from OWL to YANG. What we haven't looked at at all is something like device management. This was kind of a rapid prototype to make sure that things are working, but it's not a finished solution. Everybody is saying: yes,

F

we should further manage our devices. So there are a lot of things which others have looked at, which we would need to pick up in order to make it a real protocol. And there's another question which comes up: basically, we use YANG slightly outside of its original definition. As I said, you usually define YANG models as static models which are referenceable. Let's say they have a URI, they are versioned, so you can look them up later. I mean, they have a defined meaning. In our configuration,

F

this is just ephemeral. Whenever a new device onboards onto the network, or whenever something changes on the device through some firmware update or whatever, we may generate a completely new YANG model, which is then the current model, but it doesn't have any relation to the previous one. Also, our time granularity is a little bit different. I mean, there is versioning in YANG, so in theory we could say:

F

oh, just version these different things and store them in some data store. But our time granularity is something completely different from what the current model uses. Also, YANG, as I see it, is at the moment very device centric, and what we would like in the end is that we don't have to manage a thousand devices individually, or ten thousand, or however many devices we have in the end.

F

In the end, we want to have something where we aggregate our device information and make it a network which we manage, and this is maybe not so much out of scope as the other two issues, but still something which is currently not very developed in the current YANG standards. So my question is: could we use it like this, or is there some strong opposition to what we did? Who knows. Currently there's a working prototype. It was a moderate coding effort.

F

There are some very good existing libraries which you could use, and we basically try to use this as a further development step for other existing projects with our students. So this should hopefully be a good effort which we can work on in the future. If you have any questions, please state them now or drop me an email or whatever.

G

G

So you mentioned the ability to run NETCONF, or rather MQTT, over TLS, and you also told us that it's an 8-bit device. So how realistic is it that, in this realm of cheap devices, we're going to get some actual security? Do you have any thoughts on that?

F

We actually thought about this. It's a very good question: how do we manage security on such devices? In theory, these devices could support DTLS running over UDP or whatever. My personal view, and this is purely academic here, is that we could construct the security boundary here at the broker, where we say:

F

whenever we talk to something outside the network, we can use whatever encryption scheme is deployable, but whenever these devices are talking to the broker, we simply use a simple scheme and just make sure that they can't be reached from the outside. So we would need some very serious filtering here.

H

F

F

H

You had this on your issues list there: what should this model look like? Have you had any thoughts on how one could preserve the properties of the usual YANG models, being identifiable forever via a URI? Could you come up with a generation and archiving scheme or something so that those properties could be preserved, so that you have a fair chance to talk to the people without being shut down immediately? I'm just wondering whether there are architectural considerations or design considerations you could put forward here.

F

The

thing

is

for

me:

it

doesn't

make

much

sense

to

make

these

these

young

models

were

horrible,

I

mean

I,

don't

think,

there's

a

hard

architectural

constraint

here,

I

mean

we'd,

be

we

could,

whenever

were

sort

of

a

new

kind

of

model

is

generated

whenever

new

kind

of

data

comes

in

and

I

expect

these

kind

of

networks

to

be

very

flexible

and

very

very

rapidly

changing.

So

what

would

happen

here

is

that

you

may

generate

new

like

YANG

models

in

a

very

short

time

frame.

There

is

no

particular

reason

you

couldn't

store

these

devices.

F

Of

that

building,

yeah,

that's

fine.

Yeah,

thanks.

Thanks

for

this

comment,

and

you're

completely

right.

I

mean,

if

we

would

have

these

very

strong

requirements,

we

possibly

can

build

them

in

here.

I

was

just

thinking

in

order

to

align

this

with

the

current

YANG

specifications,

they

would

need

to

at

least

sort

of

augment

their

time

base,

because

at

the

moment,

I

think

the

current

YANG

granularity

for

versions

in

the

current

YANG

standard

is

a

day.

A

So

our

next

paper

is

No

Domain

Left

Behind:

Is

Let's

Encrypt

Democratizing

Encryption?

Authors

are

Maarten

Aertsen

from

Delft

University

of

Technology,

Maciej

Korczyński

from

Delft

University

of

Technology,

sorry,

Maciej;

Giovane

Moura,

SIDN

Labs;

Samaneh

Tajalizadehkhoob

from

Delft

University

of

Technology;

and

Jan

van

den

Berg

from

Delft

University

of

Technology.

Maarten

works

at

NCSC

Netherlands

as

an

advisor

on

the

security

of

inter

network

infrastructure

standards

and

protocols.

He

has

an

interest

in

cryptography

and

its

lack

of

deployment.

NCSC-NL

is

part

of

the

central

Dutch

government.

A

Its

mission

is

to

contribute

to

the

enhancement

of

the

resilience

of

Dutch

society

in

the

digital

domain

and

thus

to

create

a

secure,

open

and

stable

information

society.

NCSC

Netherlands

functions

as

an

information

hub

and

center

of

knowledge

for

information

security

and

also

hosts

the

computer

emergency

response

team

for

the

Dutch

central

government.

Maarten

holds

a

Master

of

Science

from

the

University

of

Twente

in

telematics

and

a

Master

of

Science

from

Leiden

University

in

cybersecurity

governance.

G

So,

thanks

for

the

first

laugh,

I

think

I'm

between

you

and

your

next

coffee

break,

so

in

order

to

keep

everyone

awake,

I'll

ask

you

guys

three

questions

and

we'll

do

some

exercise

lifting

hands.

So

this

talk

is

about

Let's

Encrypt.

Who's

heard

of

Let's

Encrypt?

Lots

of

exercise,

cool.

Who

has

personally

used

Let's

Encrypt?

Okay,

about

half.

Maybe

other

people

in

the

room

who

have

used

Let's

Encrypt

on

a

large

scale?

D

G

Cool

yeah,

so

this

talk

is

about

the

deployment

or

the

use

of

Let's

Encrypt

in

its

first

year.

I

did

this

as

thesis

work

last

year,

so

it's

also

data

from

last

year.

People

who

have

attended

the

Chicago

MAPRG

talk

of

Giovane

have

perhaps

seen

some

of

this

and

for

those

people,

I

expect

good

criticism,

because

you've

had

some

time

to

think

about

this.

So

please

do

save

those

for

later.

G

One

thing

we

got

asked

when

we

talked

about

this

is

whether

we

are

affiliated

with

Let's

Encrypt,

and

we're

not,

so

we're

independent.

This

research

was

kind

of

born

out

of

an

interest

to

find

out

whether

their

statistics

could

be

improved

or

what

another

group

of

people

would

think,

measuring

their

progress.

G

So

the

quick

motivation

was

basically

this

guy

and

the

revelations

he

brought

into

the

community

so

I

think,

since

the

news

broke,

we've

seen

more

widespread

encryption

on

the

internet

and

I

won't

go

into

that

too

much

detail,

but

you

can

also

plot

it

on

browser

telemetry.

So

if

you

look

in

the

same

period

at

the

adoption

of

HTTPS

on

the

web

for

basically

random

people,

we've

crossed

the

50

percent

mark

somewhere.

E

G

That's

really

cool,

because

that's

been

a

long

time

coming

and

it's

been

I

guess

the

hard

work

of

many

people.

On

the

other

hand,

it

also

means

that

there's

a

lot

of

people

on

the

internet

who

do

not

have

HTTPS.

So

one

of

the

questions

I

ask

myself

is:

why

is

this

the

case?

And

it

turned

out

that

lots

of

people

have

been

doing

work

in

this

area,

and

one

of

the

principal

reasons

that

were

identified

as,

well,

troubling

in

this

area

G

is

the

use

of

certificates,

so

barriers

to

ubiquitous

encryption

on

the

web

are

the

cost

of

purchase,

deployment

and

renewal

of

certificates.

And

it's

not

just

that.

It's

also

if

you

want

to

do

it

at

scale,

and

that

scale

is,

if

you

do

it,

for

people

who

are

not

in

the

business

of

running

IT

or

running

networks

or

doing

security,

like

maybe

the

bakery

on

the

front

of

your

street,

you

need

to

do

it

for

a

lot

of

people

and

then

you

run

into

the

complexity

issues.

G

They

do

not

charge

for

certificates

and

they

employ

automation,

I

think

there's

been

an

ACME

working

group

at

the

IETF

for

a

while

now

and

that

that

spot,

you

know,

there's

two

parts

to

this:

there's

a

protocol

to

actually

request

a

certificate

and

obtain

one,

and

there

is

software

implementing

this

protocol,

which

can

help

you

to,

for

example,

configure

your

web

server

or

deploy

these

certificates

at

scale.

So

that's

all

the

work

of

other

people.

G

What

I

was

interested

in

is

to

find

out

whether

the

first

year

of

Let's

Encrypt

helped

them

reach

those

people

that

would

need

this

most.

So,

if

you

think

about

this,

the

big

websites

already

had

encryption

in

some

cases,

and

they

were

slowly

moving

over,

but

at

least

they

would

have

the

money-

and

maybe

also

the

brainpower,

to

make

this

work.

So

the

other

part

of

the

web

is

is

end.

G

We

did

the

first

year,

September

2015

was

when

they

went

live

or

the

day

they

issued

the

first

certificates,

I

think

they

went

live

in

October

until

one

year

later

and

all

results

I'll

show

you

are

reduced

to

2LD

or

3LD

form.

So

for

TLDs

that

have,

well,

a

single

ending,

for

example

.nl,

we

look

at

domain.nl;

for

the

.co.uk

type

of

TLDs,

we

look

at

domain.co.uk.
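
(Editorial sketch, not from the talk.) The 2LD/3LD reduction described here could look roughly like the following in Python. The function name `registered_domain` and the tiny hard-coded suffix set are assumptions made for illustration only; the speaker does not say which tool was used, and a real analysis would need the full Public Suffix List rather than three sample entries.

```python
# Hypothetical, deliberately tiny stand-in for the Public Suffix List.
# Only multi-label suffixes need listing; everything else is treated as a
# single-label TLD such as .nl or .com.
MULTI_LABEL_SUFFIXES = {"co.uk", "org.uk", "com.au"}


def registered_domain(fqdn: str) -> str:
    """Reduce a host name to 2LD form (domain.nl) or 3LD form (domain.co.uk)."""
    labels = fqdn.lower().rstrip(".").split(".")
    # If the last two labels form a known multi-label suffix, keep three labels.
    if len(labels) >= 3 and ".".join(labels[-2:]) in MULTI_LABEL_SUFFIXES:
        return ".".join(labels[-3:])
    # Otherwise keep the last two labels (or fewer, if the name is shorter).
    return ".".join(labels[-2:])
```

With this reduction, `www.example.nl` and `mail.example.nl` both count as the single domain `example.nl`, while `shop.example.co.uk` counts as `example.co.uk`.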

G

What

data

sets

are

used?

For

the

certificates,

we

use

Certificate

Transparency.

Who's

familiar

with

Certificate

Transparency?

So

Certificate

Transparency

is

basically

an

append-only

log

of

all

certificates,

seen

as

submitted

by

various

parties,

and

the

nice

thing

about

the

guys

and

girls

at

Let's

Encrypt

is

that

they

decided

to

submit

all

the

certificates

they

ever

issued.

So

that

means

that

we

actually

have

data

to

offer.

G

At

Delft,

there

was

access

to

Farsight

DNSDB,

which

is

a

passive

DNS

data

set,

and

in

previous

work

they

used

MaxMind

GeoIP

and

WHOIS

data

to

bundle

IP

addresses

into

organizations,

and

organizations,

that

is,

hosters.

So

what

can

you

do?

And

now

I'll

just

walk

you

through

the

different

results

in

different

stages.

So

these

were

the

numbers

which

were

like

publicly

known

and

it's

just

the

growth

of

the

service.

So

you

first

see

the

growth

in

unique

FQDNs,

which

hits

10

million

around

September

2016.

G

If

you

look

at

the

domains,

so

you

get

rid

of

all

the

people

issuing

certificates

for

100

machines

under

their

own

domain,

it's

much

lower:

it's

around

4

million.

But

that's

a

nice

number

in

a

year.

But

what

does

it

really

tell

you?

You

can

get

like

relative

numbers

if

you

compare

it

with

all

known

domains

worldwide,

as

found

in

the

passive

DNS

data

set,

and

what

you

then

get

is

the

number

of

2%

of

all

domains

worldwide.

G

as

contained

in

this

data

set,

have

at

least

one

certificate

issued

by

Let's

Encrypt,

and

then

you

can

really

see

why

this

is

a

big

thing,

because

2%

in

a

year

is

pretty

nice.
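
(Editorial sketch, not from the talk.) The three numbers mentioned in this passage, unique FQDNs, unique domains, and the share of all known domains with at least one certificate, could be computed along these lines. The function name `coverage_stats` and its inputs are hypothetical, and the reduction to domains is a naive last-two-labels rule, which is an assumption and mishandles .co.uk-style names.

```python
def coverage_stats(cert_fqdns, known_domains):
    """Return (unique FQDNs, unique domains, share of known domains covered).

    cert_fqdns: host names seen in issued certificates (hypothetical input).
    known_domains: all domains seen in a reference data set (hypothetical input).
    """
    fqdns = {name.lower().rstrip(".") for name in cert_fqdns}
    # Naive reduction to the last two labels; an assumption for illustration.
    domains = {".".join(name.split(".")[-2:]) for name in fqdns}
    known = {d.lower() for d in known_domains}
    # Share of reference domains that have at least one certificate.
    share = len(domains & known) / len(known) if known else 0.0
    return len(fqdns), len(domains), share
```

Collapsing many host names under one domain is why the domain count in the talk (around 4 million) is so much lower than the FQDN count (around 10 million).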

So

that's

just

the

raw

numbers.

Now,

who

is

using

Let's

Encrypt?

So

if

you

look

at

popular

websites,

you

can

basically

see

that

it

stabilizes

in

the

summer

of

2016.

Every

time

you

increase

your

top

list

by

a

factor

of

10,

they

also

contribute

a

factor

of

10

more

to

the

overall

percentage

of

certificates

over

the

domains.

G

One

percent,

and

it

goes

up

in

tens

and

tens

and

tens,

and

what's

interesting

about

this

is

that

if

you

look

at

the

top

Alexa

1

million,

they

only

have

about

two

percent

coverage,

which

means

that

the

other

98

percent

are

not

for

popular

websites,

or

at

least

not

for

popular

websites

if

you

look

at

it

from

the

perspective

of

Alexa.

G

That's

kind

of,

well,

you

could

expect

that.

What's

more

interesting,

actually,

is

if

you

turn

it

around.

So

if

you

look,

within

each

ranking,

at

what

percentage

is

using

Let's

Encrypt,

you

get

to

the

point

where

you

see

that

the

most

popular