►

From YouTube: Technical Oversight Committee 2021/02/19

Description

Istio's Technical Oversight Committee for February 19th, 2021.

Topics:

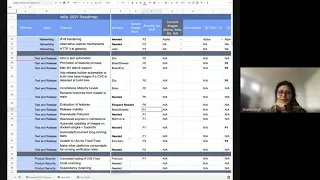

- Test & Release 2021 and 1.10 Roadmap presentation

- User Experience 2021 and 1.10 Roadmap presentatino

- Discussion of EnvoyFilter and restricting its usage.

E

A

B

G

H

B

I

B

K

Basically,

the

one

thing

we

wanted

to

pick

up

is

we

wanted

to

pick

up

the

istio.io

test,

automation.

I

think

now

that

the

documentation

work

group

has

sort

of

had

a

change

in

process.

I

don't

think

they're

doing

that

anymore.

So

I

think

that

it's

going

to

fall

back

to

the

test

and

release

work

group.

K

G

K

K

Okay,

I

know

mariam

is

doing

some

work

for

the

current

release

to

handle

what

I

want

to

say

staging

across

pages,

so

that

you

know

we

have

the

one

example

that

is

about

10

pages

long.

We

want

to

be

able

to

test

each

page,

you

know

building,

so

there

was

some

work

done

for

that.

I

don't

know

what

might

come

up

in

future

requirements.

G

Okay-

so

maybe

not

here

eric,

let's

take

it

offline,

but

there

are

certain

pages

where

we

were

looking

for

needs

consideration

where

we

need

to

understand

if

the

framework

can

support

it

and

does

not

what

it's

needed.

So

that

requires

some

efforts

just

because

there

wasn't

anybody,

so

we

were

not

looking

into

it.

So,

let's

just

discuss

that

offline

yep,

yeah.

M

B

K

K

K

We

want

to

handle

one

of

the

things

that

we

run

into

is

part

of

our

release.

Building

is

the

build

stop

if

we

detect

that

the

base

images

have

a

cve

and

then

you

know

we

need

somebody

to

go

and

build

us

new

base.

Images

run

some

scripts

and

today

that

has

to

be

somebody

from

google

to

do

it

manually

because

they're,

the

only

ones

with

the

cred.

K

L

B

J

C

K

K

L

Okay,

so

going

back

here,

there

was

a

request

for

the

definition

of

done

to

evaluate

all

of

the

features

that

we

currently

have

in

istio

to

make

sure

that

they

meet

the

requirements

for

beta,

etc

and,

if

not,

then

have

that

as

a

debt

in

the

seo

project.

So

this

really

encompasses

that

and

we

list

that

as

test

and

release,

but

really,

I

think,

that's

a

responsibility

of

all

the

work

groups

to

say:

hey,

here's,

the

features

that

we

have,

here's,

whether

they

meet

the

levels

or

not,.

D

So

there

needs

to

be

someone

who

is

the

owner

of

that,

as

in

they

are

ultimately

responsible

for

making

sure

that

happens,

even

though

you

know

the

way

they

do,

it

is

by

delegating

to

a

bunch

of

other

people

like

if

you,

if

you

leave

this

as

owners

to

you,

it

won't

happen.

Okay,

so

we

need

to

pick

someone

or

someone

needs

to

take

this

and

say

yes,

okay,

I

will

you

know,

track

everyone

down.

They.

K

C

I

Here,

sorry,

did

you

finish

right:

okay,

good

yeah,

so

I

I

totally

see

the

value

of

you

know

assessing

what

features

we

have

and

whether

the

maturity

level

actually

meets

the

bar

that

we

have

set

now

and

look

retroactively.

I'm

trying

to

understand

what.

How

do

we

communicate

this

to

the

users

and

what

action

do

they

take?

D

This

is

not

meant

for

users

right.

This

is

meant

for

us

to

identify.

We

have

those

gaps

and

then

it's

up

to

us.

I

think

in

toc

to

help

fill

those

right

so

find

an

owner

for

that

feature.

If

there

isn't

one

already

and

have

them,

you

know

go

through

the

process

to

get

it

at

least

to

where

it's

been

described

as

right.

B

I

Okay,

yeah

I'll,

maybe

talk

with

brian

offline,

because

I

understand

there

are,

there

will

be

gaps

identified,

I'm

guessing

those

gaps

because

there

are

gaps.

That

means

users

must

be

seeing

some

effects

of

it

now

right

and

that's

why

we

need

to

do

some

work,

so

I

would

like

to

outline

those

gaps

earlier

while

the

work

is

going

on

and

that's

where

the

communication

should

happen.

B

D

I

D

Yeah

right

because

I

think

my

preference

is

maybe

not

to

do

that,

but

it

depends

on

exactly

what

we

find

right.

So

absolutely

yeah,

okay,

but

I

think

to

your

point.

We

should

do

this

sooner

than

later,

so

maybe

it

should

be

higher

priority

than

p1,

and

should

we

work

to

find

someone

as

soon

as

we

can.

I

K

Camden

all

right

all

right,

let's

keep

going

yeah

and

we'll

we'll

touch

back

to

this.

I

guess

when

we

get

to

the

1.10

road

map,

maybe

so

yeah.

So

the

next

item

is

release

visibility,

and

this

is

basically

the

work

related

to

helping

the

release

managers

with

the

dashboard

and

making

sure

that

things

are

on

schedule

and

up

to

date,

so

that

we

can,

you

know

better,

identify

problems

earlier.

K

D

M

M

It's

definitely

a

mixed

bag

in

terms

of

usefulness,

however,

the

the

parts

that

are

the

most

looked

at

tend

to

be

the

parts

that

require

the

most

maintenance,

which

I

I

guess

is

a

good

thing.

Ideally,

they

wouldn't

require

that

much

maintenance

either,

but

the

the

areas

where

investing

work

are

areas

like

test

flakes

where

we

do

see

that

people

seem

to

be

using

the

dashboard.

M

D

K

So

similar

to

the

automation

around

the

base

images,

we

want

to

try

to

handle

some

other

automation

that

we

do

for

images

like.

I

know

the

book

info

images

we

get

prs

that

talk

about.

You

know

updating

some

of

the

sample

code

and

then

they

need

to

be

rebuilt

and

re-pushed

to

docker

and

tested,

and

that

sort

of

is

a

a

longer

process

that

involves

somebody

who

can

build

the

images

and

push

them

to

docker,

as

well

as

the

pr

originator

making

sure

that

they

test

the

various

things.

K

So

we

like

to

do

some

automation

in

that

space

as

well

to

try

to

limit

the

time

it

takes

a

developer

to

you

know,

do

the

building

and

pushing

as

well

as

starting

shortening

the

window,

that

the

prs

are

out

there,

one

of

the

things

we

we

had

done

earlier,

and

I

I

don't

know

how

much

of

this

is

is

done

at

the

at

the

moment.

But

we

want

to

make

sure

that

we

automate

and

document

the

long

tests.

K

I

think

it's

mostly

done,

but

the

problem

we

have

today

is

that

there's

only

one,

maybe

two

people

that

sort

of

always

do

all

the

long-running

tests.

So

whenever

we

want

to

spin

a

new

release,

it's

you

know,

go

talk

to

somebody

and,

and

have

that

done.

I

think

we

want

to

make

sure

that

we

can

get

that

better,

documented

to

let

more

people

be

able

to

do

it.

K

K

K

So

that's

sort

of

the

2021

roadmap

as

a

whole

in

terms

of

sort

of

documenting

what

we

were

going

to

do

for

1.10.

If

you

look

at

the

right-hand

side

of

the

of

the

share,

you

can

sort

of

see.

What's

under

the

quarter

two

column

you

can

see,

we

have

p

zero,

p

yep

right

there,

the

quarter

two

columns,

so

that's

sort

of

what

we

were

planning

on

doing

in

terms

of

1.10,

since

we

don't

really

have

anything

that

has

feature

stages

associated

with

it.

K

K

K

B

B

C

C

B

M

H

H

M

M

We

would

like

to

oh

sorry,

I'm

jumping

ahead

of

myself

in

row.

2

we're

talking

about

getting

analysis

data

put

into

customer

resource

status,

so

this

is

something

that

we

attempted

in

1

9.

We

hit

some

performance

challenges,

just

as

we

went

to

move

it

into

beta.

We

would

like

to

solve

those

problems.

E

The

proxy

status

item

is

merely

to

move

what

we

have

into

a

sub

command.

As

we

have

more

people

running

external

control

planes,

we

found

that

people

were

confused

about

what

permissions

they

had

and

we

wanted

to

make

it

more

obvious

that

this

command

might

not

be

available

depending

on

how

security

had

been

locked

down.

This

also

means

rewriting

the

help

text

and

things

that

come

with

the

steel

cuddle.

N

M

Okay,

rule

four

is

what

I

originally

started.

Talking

about,

all

of

brian

and

nate's

work

on

making

sure

that

all

of

our

annotations

and

apis

are

are

marked

with

a

maturity

level.

We

would

like

to

present

that

to

users

an

analyzer

seems

like

the

right

vehicle

to

do

that.

There's

a

little

bit

of

implementation

challenge

there

in

terms

of

whether

the

user

cares

to

make

sure

it's

alpha

or

higher

or

beta

or

higher,

etc.

M

But

bottom

line

is.

We

would

like

a

surface

in

istio

control

that

lets

users

know

the

maturity

level

of

features.

They've

used,

sort

of

a

stretch

goal

along

those

lines

that

that

may

or

may

not

make

it

into

110

is

also

a

way

to

block

using

features

so

that

you

could

set

like

a

control,

plane

level

flag.

That

says,

don't

allow

me

to

use

alpha

features.

E

D

B

So

quick

question

here

I

agree

this

is

going

to

be

very

useful.

Does

this

cover

like

the

field

levels

status

for

experimental

and

deprecated

or

just

the

overall,

like

is

your

operator

using

that

as

an

example

right,

it's

your

operator?

I

think

it's

alpha

today.

Like

certain

fields,

we

think

is

your

operator,

maybe

experimental,

would

the

user

be

able

to

kind

of

differentiate

those.

E

B

E

In

the

past

we

have

had

ad

hoc

code

written

for

individual

fields

and

that

has

always

broken

if

any

other

working

group

tells

us

that

a

particular

field

is

being

deprecated

or

is

not

recommended,

we

we

will

write

an

ad,

we

will.

We

have

an

existing

ad

hoc

analyzer

for

that

we'll

manually

do

it,

but

it's

often

incorrect

and

it

has

been

wrong

before.

I

M

So

the

idea

is

that

we

are

using

apis

to

enable

features

right

sure,

and

so

we

want

to

give

the

user.

The

the

long-term

view

is.

This

ought

to

be

a

comprehensive

understanding

of

what

various

maturity

levels

you

are

engaging

with

in

your

istio

installation,

whether

we're

talking

apis

features,

annotations,

etc.

C

M

I'm

not

sure

that

I

would

describe

it

as

a

linkage.

However,

the

the

intent

is

to

take

that

once

it's

done,

which

again

it's

it's

not

slated

for

110

at

this

point

and

to

expose

it

to

the

user.

So

the

idea

is,

you

may

be

using

a

particular

value

in

an

api

that

is

deprecated

to

turn

on

a

feature

that

is

still

beta,

in

which

case

we

want

to

be

able

to

communicate

to

you

hey

this

feature

is

good,

but

you

probably

want

to

enable

it.

This

other

way

right

right.

C

Yeah

yeah,

so

I

mean,

but

because

we

don't

yet

have

a

kind

of

well,

we've

been

working

on

a

feature

catalog

that

could

be

used

as

part

of

tooling

and

automation,

but

we

don't

yet.

We

haven't

yet

assessed

its

coverage

for

kind

of

creating

that

correlated

feedback

for

users

other

than

in

an

ad

hoc

way

right.

C

M

Do

think

it's

valuable,

I

think

what

you're

seeing

here

in

the

roadmap

is

our

intent

to

iterate

towards

that

goal.

Not

the

sort

of

thing

we'll

be

able

to

accomplish

in

one

release,

we'd

like

to

make

meaningful

improvement

this

release

and

then

be

able

to

reevaluate

where

to

go

next,

totally

makes

sense.

M

M

There

are

more

and

more

ways

that

we

can

give

helpful

information

to

the

users

and,

of

course,

with

analyzers

being

written

to

status

fields

that

help

becomes

much

more

proactive

and

visible

to

those

users.

So

we

see

sort

of

an

exponential

growth

in

the

value

of

investing

in

analyzers

for

our

users

and

we'd

like

to

continue

that.

I

M

That

is

not

a

bad

idea.

I

I

would

worry

about

the

cohesiveness

of

that

list

and

the

feasibility

of

the

list.

Generally,

we

approach

it

as

if

there's

a

problem

we've

run

into

in

configuring,

our

service

mesh

that

analyzers

didn't

help

with

that's

a

good

time

to

write

an

analyzer.

It's

a

bit

like

a

regression

test

for

a

bug.

I

A

O

M

D

Yeah,

but

so

like,

if

you

what

I

was

thinking,

is

if

you're

running

it

with

the

one

nine

code

base

before

upgrading

to

110,

you

don't

have

as

much

knowledge

as

if

you're

running

with

the

110

codebase.

So

do

we

already

do

something

like

that?

Do

we

have

a

an

upgrade

test,

but

yeah

exactly?

Can

I

upgrade.

B

E

M

N

It's

it's

a

bit

more

complicated

than

just

the

beta

status.

I

mean

if

you

are

in

a

cluster

and

you

applied

mesh

config.

You

know

you

have

uninstalled

version,

one.

Seven

you

apply

mesh

config

and

set

some

settings,

accept

a

different

ca

or

all

kind

of

other

things.

It's

not

trivial.

It's

not

sufficient

to

have

the

same

better

level.

It's

it's!

A

new

control

plane

needs

to

be

compatible

in

settings.

We

have

the

same

ca.

So

it's

it's

a

very

risky

thing

to

claim

that

it's

compatible

without

deeper

understanding.

D

M

M

N

E

D

D

E

Row

6

is

just

about

using

information

that

we

may

already

have,

but

not

be

using

to

find

the

control

plane

like

looking

at

the

injector

or

better

timeouts,

to

see

if

the

existing

debug

ports

are

blocked.

Row

7

is

about

adding

something

to

pilot

agent

so

that

you

could

exec

onto

a

sidecar

and

then

have

the

sidecar

ask

the

control

plane

about

proxy

status

which

may

be

needed.

We've

only

made

that

p2,

because

we

failed

at

that

for

the

last

two

releases

and

don't

want

to

fail

at

anything

higher

than

a

p2.

I

E

The

proxy

status

has

the

problem,

if

you're

using

an

external

control

plane

that

it

might

be

hard

to

contact

the

particular

issue,

the

instance

that

knows

the

status

for

a

particular

pod.

So

the

only

solutions

that

we

currently

have

are

based

on

implementation

trickery.

There's

no

previous

previous

releases.

We've

wanted

to

make

a

well-defined

service

in

this

2d.

That

knows

about

all

the

pods

that

could

be

used

for

these

things.

That

work

has

proven

to

be

too

difficult

for

any

one

person

to

do

so.

E

And

stuff,

like

that,

the

the

really

tricky

thing

about

this

was:

you

know

we

did

a

performance

improvement

and

we

stopped

using

cube,

cuddle,

exec

and

went

to

proxy

status,

or

we

went

to

proxy

proxy

forward

port

forward

for

performance

reasons.

A

few

releases

ago,

99

of

our

users

thought

that

was

great

and

it

was

faster

for

them.

E

N

One

comment

on

this

subject

is

not

only

for

external

studies.

We

have

a

feature

in

pilot

which

is

disables

the

debug

endpoint,

which,

with

a

comment

recommended

for

production

use,

because

without

that

option

in

production,

you

have

debug

available

to

everyone

wide

open

with

on

a

plain

http,

with

no

authentication.

E

And

constant,

if

they

do

said

that

currently

in

this

to

cuddle,

we

have

two

proxy

statuses,

the

one

the

regular

and

the

one

and

experimental

the

one

in

experimental

still

will

work.

But

it's

not

considered

a

fallback

strategy

for

the

mainline

one

and

that's

part

of

row.

Five

is

if

the

debug

port,

it

won't

work,

try

the

experimental

version

which

might

work.

N

E

M

Yeah,

but

the

bottom

line

is

that

the

functionality

that

we've

provided

historically

in

proxy

status

requires

a

very

high

level

of

privilege

that

we

don't

expect

most

users

to

have

maybe

control

plane.

Operators

will

have

that

moving

forward,

but

our

application

operators,

our

mesh

operators,

etc,

will

not

have

that

access,

and

so

this

experimental

version

that

we

would

like

to

begin.

Investing

in

more

will

not

have

the

full

functionality

the

proxy

status

brought.

We

will

be

implementing

whatever

bits

of

functionality

are

capable

of

carrying

over.

Without

that

privilege,

yeah.

B

Yeah,

I

guess

one

comment

I

would

make.

Is

we

actually

ask

around

at

ibm

before

I

leave?

You

know

how

many

people

are

using

proxy

status.

I

I

know

it

was

very

super

useful.

You

know

at

the

the

very

early

days

of

istio

when

we

have

a

lot

of

bugs,

but

I

think

these

days,

because

the

project

is

a

lot

better.

Now

I

honestly

haven't

used

proxy

status

for

a

long

time,

myself.

B

C

So

I

guess

one

suggestion

I

might

have

is

that

if

really

the

differentiation

between

the

kind

of

two

degrees

of

proxy

status

is

the

level

of

privilege

that

the

user

has,

can

we

make

that

distinction

clear

in

the

ux

of

the

what

we

want

to

provide

right,

where

you

have

istio

proxy

status

and

ixia?

You

know

privilege

proxy

status

right

and

we

basically

describe

the

difference

between

the

two

things,

and

so

people

understand.

M

I

Yeah,

okay,

good

and

lynn.

I

actually

disagree

and

I

think

you're

an

expert

user

of

istio

and

I

think

you

are

using

the

proxy

config

to

begin

with.

But

many

people

need

to

understand

by

looking

at

the

status

which

of

these

proxies

might

have

issues

and

then

go

into

and

debug

it.

So

I

still

find

it

very

useful

and

I'm

glad

that

we

are

making

it

less

privileged,

like

you

can

write

in

less

privileged

environments.

B

M

Well,

like

one

workflow

lin,

for

that

part

of

the

command

would

be

to

check

what

data

planes

are

still

accessing

an

old

revision

of

the

control

plane

before

shutting

it

down.

If

you're

safely

operating

your

control

plane,

you

want

to

make

sure

everybody's

migrated

off

before

you

turn

it

down.

That

would

be

one

year

where

a

control

plane

operator

would

be

very

interested

in

using

proxy

status

as

it

was

as

it

exists

today,

but

metrics.

M

A

B

M

You

have

the

one

last

line

item

here

and

that

is

that

we'd

like

to

continue

the

ux

research

that

we

started

in

the

fall

around

upgrades.

We

want

to

get

an

idea

of

how

much

we've

moved

the

needle

in

one

nine.

How

much

better

has

the

experience

gotten

and

particularly,

we

want

to

provide

feedback

on

that

before

we

make

decisions

about

end

of

lifeing

1-8,

so

that

we

can

make

an

educated

decision

based

on

our

user

experience.

M

So

I've

been

soliciting

many

of

you

individually

for

questions

to

put

on

that

survey.

I've

got

a

spreadsheet

going

where

anybody

can

put

suggestions

and

I've

shared

that

with

the

upgrade

working

group

ping

me

or

drop

a

note

in

the

spreadsheet.

If

you

have

a

particular

question

about

upgrade

usability

that

you'd,

like

answered

in

our

user

survey,.

D

C

C

I

G

D

N

C

N

I

N

C

I

I

was

just

going

to

say

the

second

case

is

interesting

right.

So

if

you

look

at

customizing

telemetry

labels

or

metric

labels,

exa,

for

example,

it

has

been

a

feature

from

the

first

release

of

histo

right.

So

that

has

been

a

stable

feature

when

we

moved

to

telemetry

v2,

we

kind

of

took

it

away

and

then

we

reintroduced

it.

So

the

progression

there

is

more

market

than

we

like.

I

N

Can

I

can

I

ask

the

question:

are

we

really

talking

about

people

editing

their

boy

filter

directly

or

about

putting

stuff

in

various.xml,

because

right

now,

every

time

you

do

install

upgrade,

we

override

the

voice

filter

with

whatever

is

important

to

the

cameras.

I

would

argue

that

the

actual

api

is

values.yaml

or

mesh

configure,

whatever

the

stop

gets

into

the

installer.

C

Yeah

nobody's

trying

to

rewrite

history

right.

Clearly

we

have

situations

in

this

state

we

they

need

to

be

addressed.

So

I

guess

the

question

is:

if

we

want

to

institute

this

policy,

we

should

also

institute

you

know

as

quickly

as

possible.

Creating

the

api

is

necessary

to

backfill

for

those

cases

where

we

have

exceeded

this

boundary.

N

C

D

D

N

C

No

custom-

that's

not

totally

correct

right,

like

mesh

config

is

a

way

to

it

is

an

api.

It's

perfectly

reasonable

for

there

to

be

data

level,

features

in

mesh

config

right

now,

because

it

is

the

the

global

api

and

we

have

things

that

make

sense

as

globals,

and

it

doesn't

always

make

sense

to

go,

create

a

new

crd.

Every

time

we

have

a

new

feature

right.

So

it's

more

nuanced

than

that.

A

I

D

I'm

starting

with

envoy

filter,

then

we

could

expand

it

to

other.

We

have

right

and

annotations,

but

yes

yeah.

We

need

to

be

careful,

we

need

to

think

about

it.

We

should

also

take

the

time

to

go

back

and

look

at

all

the

existing

beta

features

that

rely

on

alpha

apis

and

discuss

them

right.

Yeah,

not

today,

this

product.