Description

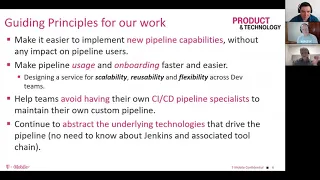

Ravi Sharma, Martin Krienke, and Larry Ogrodnek presented the POET pipeline framework for Jenkins including the concept of defining each step of the pipeline with a Docker container. Pipelines are defined with a YAML file and use Docker containers referenced in the YAML file to perform their steps.

T-Mobile has open sourced the POET pipeline and looks forward to ideas and insights from others.

A

We're recording. Welcome, everyone. It's time to start, and we'd like to start on time, thanks very much. This is a Jenkins Online Meetup. Today we have the privilege of having the T-Mobile development team present to us on POET, the pipeline system that they use to deploy to thousands of developers at T-Mobile. So Martin, would you like to take it and lead us off? We'll let you and Ravi go back and forth.

B

Yeah, sounds good. Thank you, Mark. We'll introduce ourselves in a moment. What we're going to do today is talk about the vision and approach we had for this pipeline, our strategy, and what motivated us to end up where we did. Then Ravi and Larry will take you through some of the high-level design and the implementation, and then get down to the core of the engine. So it's those three areas.

B

Here's what led us to this point, where I felt motivated to do things a little differently from how pipelines were traditionally viewed here at T-Mobile. Ravi was really a key person in helping us drive adoption and in working with our teams at the time. You'll see he now has a manager title; he just took on that role.

B

Just a few weeks ago he got that opportunity on another team, which is great. But really he was the person working with our customers and understanding what their needs were, which helped feed our product pipeline as we continued. Larry was really the guy at the heart of the engine, who spent all his time putting the core parts of our engine together, which everybody else then leveraged. As noted, I'm Martin Krienke, senior manager of product and technology at T-Mobile. Then there's Ravi Sharma.

B

As I noted, he's now a manager of product and technology at T-Mobile on one of our other pipeline teams, but with us he was basically a product manager; I called it product manager for customer support. How we looked at customer support, how we talked about our customers, treated them, and listened to them was so important that we actually had two product managers: one for the heart of the pipeline activities, and one focused just on the customer. We found that paid off quite well.

B

Larry, as I mentioned, was really our guy: if you wanted something technical built, you'd go to Larry. He was definitely the guy under the covers who did a lot of the core work. All right. So where all this came about was when we talk about managing a CI/CD pipeline.

B

There are going to be a lot of complexities just in building and designing that thing, especially from a Jenkins perspective. You have all the plugins you've got to get. If you're on VMs, you're keeping those going and you have to keep them updated. Then there's the variety of deployment types people want: do they want to start doing blue-green? Especially as we move in a DevOps direction, we want canary deployments. There are a lot of other workflows that you need to integrate.

B

You also have all these other things. I will say that customer support is overlooked a lot of the time in a shared service organization. As much as we think about the things we've got to do to provide tooling, are you really thinking about your customer? And then, of course, if you're 24/7, how are you going to handle that? Do we have sufficient documentation?

B

How are we training people? All of those are important. At least in our experience here at T-Mobile, especially when somebody was trying to build a more centralized capability that other teams could use, this category was really lacking. And if it's lacking, boy, everything else tends to suffer and frustrations mount. It becomes a challenge for everybody: the users of the platform, the people creating the platform, and management trying to make sense of what to do.

B

Another goal here: developers like to have their flexibility, right? You hand them something and it's, "But I've got this other thing." There's always a reason: "Hey, I can't quite do that, because I need this one other thing and you don't have that for me." So we really wanted to give them flexibility and adapt to the model they were using in their development, and not be too prescriptive. We really wanted to avoid that.

B

It's usually the downfall of a lot of shared service solutions when you become so prescriptive that people don't have that flexibility. Another key thing here is to let them focus on the development and testing of whatever software they are building, rather than spending time maintaining their pipelines. This one is interesting. It came up recently: our senior vice president commented that everybody wanted to show him their pipeline. He says of the teams he talks to here:

B

They go, "You've got to see our pipeline," and he's like, "I just don't know why." One thing I talked about was why they had been wanting to show him their pipelines. It's really because the pipelines were complex and the teams were very proud of them. They spent a lot of time working on those pipelines, so their reaction is:

B

"We want to show you this, because this is really cool." But our take was that pipelines should not be that big a deal. It should be just, "Oh yeah, I have a pipeline. We run our stuff, we can deploy it, we build all the things we need. It's a piece of cake." It should not be that big a deal. This is really where we at T-Mobile have started to shift, with the POET pipeline and some other activities that have been happening.

B

We've really been trying to move away from the idea that every team has to create its own pipeline, because it's not efficient. The senior VP has, I don't remember exactly, thousands of people under him. It's really not economical for him if every team is building its own pipeline and doing all the custom work.

B

So one of the other things we wanted to do was set some principles for how we were going to work on this. We do it for both: we have guiding principles for the team and how we work as a team, but also guiding principles that we were going to check ourselves against as we built this pipeline out. First of all: let's make it easier to implement new capabilities.

B

We don't want to have an impact on other users. What does that mean? Well, say I write all new code in my engine, and I've got everybody including it in a Jenkinsfile and running this code. Then I make changes to that library, I do a bunch of stuff, and I could be impacting those folks when they start loading the new version in. There's a lot of other testing that has to happen.

B

If I'm upgrading plugins, do I know all the plugins still work? One person told me he had come into a company that had over a hundred plugins running in its Jenkins instance. Well, from version to version those plugins can change. And we had a pipeline, not our team specifically, but here at T-Mobile there was a pipeline that was trying to be more of a global one.

B

So that was a challenge we were very concerned about. We wanted to make sure that when people come to us and we want to get somebody onto the pipeline, we help them: we make usage and onboarding faster and easier. We were going to be really conscious of that; again, it's about the customer. So let's think about scalability, reusability, and flexibility for the dev teams, and hit all those marks. Again, these are things we were checking ourselves against.

B

We did that as we went along and iterated on this. We also didn't want to require what we call a CI/CD pipeline specialist. We ran some numbers and calculations on how much money we were saving with some of these things. When you get down to it, on any given team in a larger organization where people have their own pipelines, you end up needing somebody who is more or less dedicated to that pipeline.

B

They may not be on it 24/7 or 40 hours a week, but when there's an issue with a pipeline, they have to drop everything and get right on it. That becomes very disruptive, I think, to your other planning and to other work you'd like that person to be doing. It goes back to the concept I mentioned of letting people focus more on developing code.

B

So we wanted to give more predictability. It's also hard to find those specialists. So we said, okay, let's see if we can reduce teams' reliance on that, which also saves them money and lets them focus where they want to be. And then, can we abstract away the underlying technologies that drive the pipeline? Yes, we use Jenkins under the covers. Jenkins does a lot of things for us and gave us a foundation to start on, so we didn't have to do everything from scratch.

B

There is value in some of these key plugins. Somebody has a plugin that lets me get to Git, or I need to send something to Splunk, whatever it is; I have these plugins I can leverage. But let's minimize them, and let's not make teams even have to know we're using them. Those were our key principles on that front. All right, I think this is my last slide right here.

B

What I want to note is that if you're a shared service supporting multiple teams with pipelines, remember it's not just about the technology. You can build a really cool, awesome mousetrap, but you need to focus on the customer. You might get the theme here: it's customer, customer, customer. If you don't focus on that, you'll have a real challenge.

B

You can think you're listening to them, but it's very easy to get into "Yeah, but I know what's best for you." Well, listen to what the person has to say and give them the chance to provide that feedback. That's really the third bullet. We always sent out a customer satisfaction survey, and we were running at about 4.9 out of 5 on customer satisfaction. It was a set of questions we put out, and we tried to be very open in the questions we asked.

B

We tried not to be leading with those questions. Sometimes people put questions out that are a little bit leading, with assumptions under them. It's just something to think about as you do this: you can do great things with technology, but if you don't remember who your customer is, the development teams and folks like that, you might have a bit of a struggle. So with that, Ravi, do I turn it over to you?

C

Yes. Everything Marty just explained is about the customer, and we really are very customer focused in whatever we have designed so far, whether it is the physical architecture of our pipeline engine (when I say pipeline engine, I'm talking about Jenkins here) or the pipeline library, which is the pipeline framework we have developed. That holds whenever we design any of these components.

C

We have kept our customer at the center of the table and checked whether each solution we design actually helps the customers or not. When you design a pipeline library in your organization, DevOps teams have certain questions when they start using it. The first is how easy it is to onboard onto the library. Say you have a new application and a centralized team in your company.

C

Is that team taking care of all the onboarding of applications in the company, or can a DevOps team scale by itself? How easy is that, and how much of a learning curve is required to understand the pipeline? These are actually our experiences from the last several years.

C

We have had our hands on a lot of different ways of working with Jenkins and creating the library, and that's why we have come to the conclusion that this is the best architecture and library we can design. Other questions: do we really need a dedicated resource? How can we extend our pipeline when we need to add features and capabilities?

C

A lot of duplication happens when you have a microservice kind of model, because the microservices follow similar methods of building, testing, and deploying on similar platforms. So we have a similar Jenkinsfile that we need to repeat over and over again for all the components. Can this duplication be avoided?

C

So those are the kinds of questions people ask you. The first thing I will talk about is the physical architecture. What we have done is deploy our Jenkins into a Kubernetes namespace. Jenkins itself runs in a container, and we leverage Kubernetes along with it.

C

As you can see in the picture here, the master itself runs in a container, and we have a persistent volume for the Jenkins home, which is right there in the Kubernetes space. We run with only a very few Jenkins plugins. Plugins are basically the heart of Jenkins, and you can keep adding more and more of them.

C

You get more and more features that way, but in our case we wanted a Jenkins with a smaller number of plugins, just enough to support our pipeline. So we use basically only four plugins in our pipeline engine. Then we have step containers. The build step containers, and the containers for the other components you use in your build file, spin up in the same Kubernetes space.

C

What else we have done here: we use Splunk for logging, and we use AppDynamics. We use Spring Cloud Config Server to store our encrypted passwords. The reason for doing it this way is that the whole setup is fully automated. When I say fully automated, I mean we are using Jenkins Configuration as Code and Spring Cloud Config Server. So it takes about two minutes and some seconds to bring up a new Jenkins.

C

With that in place, if your Jenkins instance goes away by any chance, you can restore it within the same two minutes and a few seconds. First of all, loading Jenkins is very easy for us because we use very few plugins, and all the configuration is stored in Bitbucket, the version control tool.

C

So it's easy to restore. Now, about the agents, which are the workers for Jenkins: they can be spun up either in the same Kubernetes space or in another Kubernetes environment. For that we use dynamic allocation and dynamic provisioning of the agents. We can do similar things on AWS.

C

In our case we use both. At the end we have a Grafana dashboard: for all the steps you execute in a pipeline, the metrics are collected and shown in the Grafana dashboard. Here are a few details. The complete infrastructure is on Kubernetes, including masters and agents. Each team gets its own pipeline engine, so the teams are isolated if anything happens to one of the masters.

C

The other teams are not impacted in that case, and it's very easy for us to spin up any number of masters in the Kubernetes space, so we don't worry about those things. We make heavy use of Jenkins Configuration as Code. We use a minimal number of plugins, and they are all pre-configured. We also have global credentials: within your organization you have some global credentials that can be used across teams, but there are also specific credentials that a team would like to use for itself.

C

We have provisioned folder-level access. With folder-level access given to the team, they create the credentials that are specific to their applications within those folders, so that nobody else can see them. That helps from a SOX compliance perspective as well.
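As an illustration of the folder-scoped credentials described above, here is a minimal Python sketch of a lookup that checks a team's folder first and falls back to the global scope. The store layout, folder names, and credential IDs are all hypothetical; this is not how Jenkins or POET implements it, only a way to picture the scoping.

```python
from typing import Dict, Optional

# Hypothetical store: folder path -> {credential id -> secret reference}.
# The empty string "" stands for the global scope shared by all teams.
CREDENTIALS: Dict[str, Dict[str, str]] = {
    "": {"artifact-repo": "global-artifact-token"},
    "team-a": {"deploy-key": "team-a-deploy-key"},
    "team-b": {"deploy-key": "team-b-deploy-key"},
}


def resolve_credential(folder: str, cred_id: str) -> Optional[str]:
    """Look in the job's folder first, then parent folders, then global."""
    parts = folder.split("/") if folder else []
    while True:
        scope = "/".join(parts)
        found = CREDENTIALS.get(scope, {}).get(cred_id)
        if found is not None:
            return found
        if not parts:
            # Already checked the global scope; nothing matched.
            return None
        parts.pop()
```

With this layout, a job under `team-a` never sees the `deploy-key` that belongs to `team-b`, while both teams can still use the global `artifact-repo` entry.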

We use four core plugins. You can have as many plugins as you want, and I remember that before we came up with this particular architecture we had 200-plus plugins, and that's 200-plus main plugins; you can imagine the dependent plugins that get installed with them. So note what I mean by four core plugins.

C

Those are the main plugins; some dependent plugins also get installed along with them. We have extended the set to 16 plugins, because we use Splunk as well, for example, and somebody may be looking for build times in the UI, which needs some plugins. So we have extended them to 16 plugins. We have also removed a dependency by storing the credentials in Vault.

C

What happens is that our credentials are stored in Vault, and we reach them through Spring Cloud Config Server. When a Jenkins comes up and is running, it pulls the encrypted credentials from Spring Cloud Config Server and stores them as global credentials in Jenkins. That's what I mean by almost zero maintenance. It used to be a huge pain when you had a single master.

A

So one of the questions is: are you using Jenkins Job Builder? My assessment was probably not; Jenkins Job Builder didn't look like it was involved in the structure you're describing. No? Okay. And are you using CloudBees Core, the product, or Jenkins, the open source component, for the Jenkins instances?

C

Okay, so now I'll talk about a pipeline execution example: what the pipeline looks like with this design. As you can see in this picture, there's a user who creates a pull request once he's done with his task on the source code. The source code in our case is in Bitbucket. We have something called a pipeline definition file, which is a YAML file.

C

This is where you store all of your steps, meaning all the different steps you would like to perform as part of your pipeline. You do some pre-build steps, then the build step, where you build with whatever tools, technology, or programming language you use, and then you go on to notification, SonarQube or testing, and then deployments. All the steps are listed in this pipeline definition file. Now, in the picture, all the clouds you see are containers.

C

Each step is a container in this case. The Java 8 build is one container. Slack notify is another container. Then there are SonarQube, UCP, notify, and deploy to K8s; K8s is just Kubernetes. The last step, if you look, is InfluxDB logging, which is basically the logging that feeds Grafana. That is how this whole set of pipeline steps runs.

C

Each of them runs as a container, and we have something called pre and post, which Larry will explain in detail. Once the pipeline runs, everything gets logged to Grafana, and it creates beautiful graphs in Grafana for us about the CI/CD pipeline itself.
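To make the "each step runs as a container" idea concrete, here is a small Python sketch that turns a step (an image plus a list of commands) into a `docker run` command line. It only builds and prints the command; it is an illustration under assumptions, not POET's actual execution engine. The step names echo the ones on the slide, while the image names and the `notify` command are placeholders.

```python
import shlex
from typing import Dict, List


def docker_run_argv(image: str, commands: List[str], env: Dict[str, str]) -> List[str]:
    """Build a `docker run` argument list that runs the commands in order."""
    argv = ["docker", "run", "--rm"]
    for key in sorted(env):
        argv += ["-e", f"{key}={env[key]}"]
    # Join with && so that a failing command stops the rest of the step.
    argv += [image, "sh", "-c", " && ".join(commands)]
    return argv


# Hypothetical steps; the image names are placeholders, not real POET images.
STEPS = [
    {"name": "build-java8", "image": "example/maven-jdk8:1.0",
     "commands": ["mvn -B clean package"]},
    {"name": "slack-notify", "image": "example/slack-notify:1.0",
     "commands": ["notify 'build finished'"]},
]

for step in STEPS:
    argv = docker_run_argv(step["image"], step["commands"], {"APP_NAME": "demo"})
    print(step["name"], "->", shlex.join(argv))
```

Because every step carries its own image, adding a new tool to the pipeline means adding a container rather than a Jenkins plugin.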

Now, the picture down here shows the shared, reusable step container library. This is something we created on our own, but there is open source code available for this step container library.

C

So far we have 40-plus different containers available for T-Mobile users. These are very generic containers built around different tools and technologies, and users can extend them: they can use the containers we have created as a base image and extend them for their own functionality in the pipeline. Now, on to this picture.

C

This is a sample of the pipeline code. With this sample there is not much for the development team to learn. The team should understand how to create Docker images and how to read or update a YAML file. Those are the only two things I expect from a development team when we say they can extend and use this pipeline by themselves. The file has three components. One is called the global section.

C

Another is called the global environment section, and the third is steps. That is all there is to this whole pipeline. In the global section you specify the application name, that is, which application or microservice you are going to build, and then the application version. As you can see, we have branch-wise versions here: one for master and, in this case, 2.4.1 for the feature branch. Here is the reason for having branch-wise versions.

C

Say you have a master branch and you create a child branch or feature branch from it. When you commit to the feature branch and your pipeline runs, it gets built as 2.4.1. But when you start merging your feature branch into master, you will sometimes get conflicts.

C

For example, if the feature branch has 2.4.2, it would overwrite the version on the master branch. With branch-wise versions you can keep the version separate for features and master. That's how the app version works.
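The branch-wise version idea can be sketched in a few lines of Python. The mapping below is hypothetical: the `feature/*` pattern and the master version number are placeholders chosen for illustration (only 2.4.1 for the feature branch comes from the talk), and the real POET file may express this differently.

```python
from fnmatch import fnmatchcase
from typing import Dict

# Hypothetical branch pattern -> application version mapping.
APP_VERSIONS: Dict[str, str] = {
    "master": "2.5.0",      # placeholder value
    "feature/*": "2.4.1",   # feature branch version mentioned in the talk
}


def version_for_branch(branch: str, versions: Dict[str, str]) -> str:
    """Return the version of the first pattern that matches the branch."""
    for pattern, version in versions.items():
        if fnmatchcase(branch, pattern):
            return version
    raise KeyError(f"no version configured for branch {branch!r}")
```

Because each branch pattern owns its own entry, merging a feature branch does not have to touch the version line that master uses.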

Then you have global environments. Think about a situation where you are writing a program, or a shell script, in any language.

C

You define a lot of environment variables that you want to use throughout your program, say across two different classes. You define the same kind of thing here. The next thing is steps. Steps are simply all the different activities you are going to perform as part of your pipeline, so you define them here. A step has a minimum of three components. The first is the name, here "jar build". I have a general recommendation for names.

C

To keep your UI looking good, avoid a space in the name here. Then you have an image, which is the step container. This image contains all of the tools required to complete that particular step. In this case I have a Maven build and I need JDK 8, so that is installed in this image. Then I have a commands section.

C

The commands section is a playground: think of it as a VM where you write all the different commands you would like to execute in that particular image when it starts up as a container. The example here is given with one command, but you can write multiple commands. If you understand YAML, the leading dash means it is an array of commands, so you can write as many commands as you want.
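The structure described above (global settings, a global environment, and steps that each have a name, an image, and a list of commands) can be pictured as a plain Python dictionary with a small validator. The key names (`appName`, `appVersion`, `environment`, `steps`, `commands`) and the image name are assumptions made for this sketch; the actual POET YAML schema may use different keys.

```python
from typing import Any, Dict, List

REQUIRED_STEP_KEYS = ("name", "image", "commands")

# Hypothetical pipeline definition, written as the dict a YAML parser would yield.
PIPELINE: Dict[str, Any] = {
    "appName": "demo-service",
    "appVersion": {"master": "2.5.0", "feature/*": "2.4.1"},
    "environment": {"MAVEN_OPTS": "-Xmx512m"},
    "steps": [
        {
            "name": "jar-build",
            "image": "example/maven-jdk8:1.0",    # placeholder image name
            "commands": ["mvn -B clean package"],  # a list, so more can be added
        },
    ],
}


def validate_pipeline(definition: Dict[str, Any]) -> List[str]:
    """Return a list of problems; an empty list means the shape looks fine."""
    steps = definition.get("steps")
    if not isinstance(steps, list) or not steps:
        return ["pipeline needs a non-empty 'steps' list"]
    problems: List[str] = []
    for index, step in enumerate(steps):
        for key in REQUIRED_STEP_KEYS:
            if key not in step:
                problems.append(f"step {index}: missing '{key}'")
        name = str(step.get("name", ""))
        if " " in name:
            problems.append(f"step {index}: name '{name}' contains a space")
        if not isinstance(step.get("commands", []), list):
            problems.append(f"step {index}: 'commands' must be a list")
    return problems
```

Calling `validate_pipeline(PIPELINE)` on the sample returns no problems, while a step named "jar build" without commands would be reported twice: once for the missing key and once for the space in its name.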

C

That is the third component. All the environment variables we defined at the global level are available for you to use. You can see this with the build container: the environment variable we defined in the global environment section is used in the image here.
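One way to picture a globally defined variable being used in a step's image is simple placeholder substitution. The sketch below uses Python's standard `string.Template`; the `${NAME}` placeholder syntax, the `BUILD_IMAGE` variable, and the per-step `environment` key are assumptions for illustration and may not match how POET resolves variables.

```python
from string import Template
from typing import Any, Dict


def resolve_step(step: Dict[str, Any], global_env: Dict[str, str]) -> Dict[str, Any]:
    """Merge global and step-level variables, then expand them in the step."""
    # Step-level values (a hypothetical extension) override global ones.
    merged_env = {**global_env, **step.get("environment", {})}
    return {
        "name": step["name"],
        # The image must resolve fully, so an unknown variable raises KeyError.
        "image": Template(step["image"]).substitute(merged_env),
        # Commands may contain shell variables such as $HOME; leave those alone.
        "commands": [Template(c).safe_substitute(merged_env) for c in step["commands"]],
        "environment": merged_env,
    }


GLOBAL_ENV = {"BUILD_IMAGE": "example/maven-jdk8:1.0"}  # placeholder image name
STEP = {
    "name": "jar-build",
    "image": "${BUILD_IMAGE}",
    "commands": ["echo $HOME", "mvn -B clean package"],
}

print(resolve_step(STEP, GLOBAL_ENV)["image"])
```

Defining the image once in the global environment means a tool upgrade is a one-line change rather than an edit to every step that uses it.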

In a similar way, say you have another step: you want to do a Docker image build once your jar file is ready, using the jar file to create an image out of it.

C

Then you write the next step, again with a name, an image, and commands. You keep writing steps like this, each using a step container, and your pipeline keeps growing. I think people here are very well versed in the pipeline library, which is Groovy code that we have to write.

C

We call this complete thing a pipeline framework because the framework doesn't need to change unless a major feature comes in that you need to distribute to all the development teams. Only then do you go back to your library and change it. For the rest of the features, for example, say you are going to add JMeter.

C

You don't need to go back to your library and add those dependencies to your Groovy code. Instead you just create a container, and that lets you extend the features and capabilities of your pipeline. Most of what I was just talking about is summarized here. It's a framework where the pipeline execution is defined by each team. You don't need a specific person or a specialist on your team just to work on the pipeline and extend its features.

C

No,

any

developers

can

do

that

in

the

UML

file

easier

and

faster

onboarding.

So-

and

this

is

proven

with

us,

that

any

application

or

any

micro

services,

if

you

have

all

the

information

in

hand,

you

can

actually

onboard

application

in

less

than

one

hour

on

point

pipeline

and

that's

what

we

have

been

doing

within

our

team.

So,

no

big

YAML

or

Groovy

C

script

to

maintain.

Yes,

the

same

thing,

which

I

just

explained

that

it's

just

you

don't

have

to

have

a

big

YAML.

In

this

case

also

we

are

using

YAML,

and

if

you

have

like

20

different

steps

to

perform,

you

will

absolutely

say

that,

like

you

know,

the

YAML

file

is

getting

bigger.

Again,

it's

a

big

YAML.

So,

to

solve

that

problem.

We

have

actually

introduced

templates,

which

is

the

next

step.

Reusable

templates,

so

the

templates

when

I

say

basically

is

every

step

which

you

are

writing

into.

C

The

YAML

file

can

be

converted

into

a

template

and

reused.

Let's

say,

for

example,

you

have

100

plus

microservices.

You

do

the

same

way,

a

Maven

build,

you

have

the

Docker

builds

again,

and

then

you

are

using

SonarQube

and

other

different

tools,

but

all

the

microservices

are

following

the

same

way

of

building

your

pipeline.

Now,

if

I

use

this

particular

YAML

file,

then

I

have

to

check

out

a

hundred

different

repositories

and

I

have

to

check

in

the

same

YAML

file

over

and

over

again

into

that.

C

Let's

say

another

feature

or

capability

needs

to

be

added

into

the

template.

Now

what

will

happen

again?

I

have

to

check

out

all

of

my

hundred

repositories

and

I

have

to

update

my

pipeline.yml,

which

is

a

definition

file,

but

what

if

I

have

a

template

if

I

create

a

template

out

of

the

repository

in

a

different

repo

altogether

and

maintain

all

the

templates

and

then

include

those

templates

into

my

main

pipeline.yml

file?

C

What

will

happen

is

tomorrow

if

any

change

happens

in

capabilities

and

features,

basically,

you

will

be

doing

that

in

a

separate

repository

altogether,

which

does

not

impact

your

current

pipeline.

In

some

cases,

I

have

seen

that

if

you

change

any

pipeline

specific

files,

it

starts

a

build

because

it's

an

auto

build

as

well.

You

check-in

into

a

code

repository.

C

It

starts

another

build,

which

is

not

required,

because

it's

it's

a

pipeline

specific

file,

it's

not

a

code

change,

but

when

you

have

a

separate

repository

altogether

to

maintain

these

templates,

it

means

we

are

not

changing

your

pipeline.yml

file

in

your

source

code

and

any

changes

to

these

files

will

not

trigger

a

build

of

your

application.

That's

how

these

templates

will

work,

and

I'm

going

to

actually

show

you

how

these

templates

work

and

how

you

can

easily

include

them

into

the

pipeline.
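The include idea described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the POET implementation: the `include` key, the `TEMPLATES` store, the `expand_steps` helper, and the image names are all hypothetical. The point it shows is that the application repository only references a template by name, so the template can change in its own repository without any commit to the application repository.

```python
# Hypothetical template store. In practice these would be files kept in a
# separate templates repository, not in the application repository.
TEMPLATES = {
    "maven-build": [
        {"name": "build", "image": "maven:3", "commands": ["mvn -B package"]},
    ],
    "sonar-scan": [
        {"name": "sonar", "image": "sonar-scanner", "commands": ["sonar-scanner"]},
    ],
}


def expand_steps(entries, templates):
    """Replace each {"include": name} entry with the steps of that template."""
    expanded = []
    for entry in entries:
        if "include" in entry:
            name = entry["include"]
            if name not in templates:
                raise KeyError(f"unknown template: {name}")
            # Copy each step so callers cannot mutate the shared template.
            expanded.extend(dict(step) for step in templates[name])
        else:
            expanded.append(dict(entry))
    return expanded


# What a service's own definition might hold: two includes and one local step.
pipeline_steps = [
    {"include": "maven-build"},
    {"include": "sonar-scan"},
    {"name": "deploy", "image": "deployer", "commands": ["./deploy.sh"]},
]

resolved = expand_steps(pipeline_steps, TEMPLATES)
print([step["name"] for step in resolved])
```

With a hundred services that all reference `maven-build`, a change to that one template entry reaches every service the next time its pipeline runs.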

C

The

pipeline.yml

file

is

a

definition

file.

Step

containers,

again,

I

already

spoke

about.

A

step

container

is

nothing

but

a

specific

container

for

a

specific

task.

In

our

case,

we

have

a

library

for

that

and

you

can

use

the

Docker

Hub

to

have

these

containers

to

include

in

your

pipeline.

Consistency

of

approach

and

application

across

services.

So

it's

the

same

thing

because

you

do

the

build

pipeline.

All

the

steps

are

similar

same

approach,

same

consistency

across

all

of

your

microservices.

C

How

you

basically

do

the

installation

of

the

pipeline

so

I

think

we

have

just

updated

a

pipeline

engine

masters

like

the

infrastructure

which

we

have

built

with

us,

which

is

a

complete

automated

system

for

us

to

stand

up

a

Jenkins.

All

the

steps

and

the

source

code

is

available

here

for

you

to

stand

up

the

Jenkins.

If

you

start,

you

know,

following

these

steps,

you

will

be

able

to

stand

up

a

Jenkins

within

minutes.

That's

for

sure,

and

then

how

you

do

the

library

setup.

C

So

you

know

that

there

is

a

plugin

called

global

pipeline

library

in

Jenkins.

You

just

need

to

have

this

code

ready

and

then

update

the

configurations

into

the

global

library

section,

and

you

will

be

up

and

running

in

your

Jenkins.

In

our

case,

basically,

this

is

again

automated,

along

with

our

Jenkins

when

we

stand

the

Jenkins

up,

so

these

things

are

prefilled

and

configured

for

us

when

the

Jenkins

comes

up.

Now,

the

how-to

section:

basically,

it's

the

getting

started

with

the

pipeline.

C

So

when

you

start

working

on

the

pipeline,

you

need

two

files.

One

is

the

Jenkinsfile,

another

one

is

the

pipeline.yml

file,

and

I

think

all

Jenkins

lovers

know

that

the

Jenkinsfile

contains

where

your

library

is,

basically,

which

you

defined

in

the

plugin

just

now

in

the

previous

section.

C

So

you

just

need

to

provide

those

details

here

and

the

Jenkinsfile

should

be

part

of

each

microservice

in

your

repository,

and

it

never

changes

unless

you

are

actually

changing

a

branch

or

any

other

information

related

to

that.

It

doesn't

change

frequently,

and

then

we

have

pipeline.yml.

The

pipeline.yml

is

nothing

but

it's

again

the

same

thing

like

you

have

the

global

section

and

the

steps

defined

within

the

pipeline.yml

file.

C

Now,

what

core

plugins

we

need,

so

this

is

I

think

in

between

it

came

in

the

step,

so

I

will

just

let

you

know

these

are

the

four

core

plugins

you

need

to

set

up

the

whole

Jenkins

and

to

make

the

pipeline

framework

up

and

running.

You

don't

need

more

than

that.

You

can

install

more

and

then

like

we

have

16

of

them.

It's

because

we

are

looking

for

different

other

features

in

the

Jenkins

to

work

on

like

Splunk.

We

are

logging

our

logs

into

Splunk.

C

Now

we

will

talk

about

what

different

things

you

can

write

into

the

pipeline.yml

and

thanks

to

Larry,

he

has

put

a

schema

together.

So

if

you're

not

sure

like

you

know

any

of

the

components

or

any

of

the

variables

which

you

are

writing

into

the

pipeline.yml,

whether

they

are

an

integer

type

or

a

string

type,

or

what

the

length

should

be,

or

different

validations,

I

think

you

can

go

through

with

the

schemas,

and

you

will

understand

more

about

it.

C

Now

we

have

different

sections

in

the

pipeline.yml.

One

is

the

header,

basically,

so

it

has

the

version

and

the

pipeline

section

here.

Then

you

have

the

global

section

where

you

define

the

application

name

and

the

application

version.

After

that,

we

have

a

global

environment

variable

where

you

specify

the

group,

the

environments

which

you

are

going

to

use

in

your

step

containers,

and

then

we

have

steps.

So

these

three

components

will

be

part

of

the

pipeline.yml.
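To make the layout just described concrete, here is a minimal Python sketch of the definition as a nested dictionary, with a check that the named sections are present. The key spellings (`version`, `pipeline`, `appName`, `appVersion`, `environment`, `steps`), the sample values, and the `missing_sections` helper are assumptions for illustration; the talk only names the sections, and the published schema is the authority on exact names and types.

```python
# Assumed key names for the sections mentioned in the talk.
REQUIRED_PIPELINE_KEYS = ("appName", "appVersion", "environment", "steps")

# The definition as it might look once the YAML file has been parsed.
definition = {
    "version": "1",
    "pipeline": {
        "appName": "demo-service",
        "appVersion": "1.0.0",
        "environment": {"DEPLOY_REGION": "us-west"},
        "steps": [
            {"name": "build", "image": "maven:3", "commands": ["mvn -B package"]},
        ],
    },
}


def missing_sections(doc):
    """Return the dotted names of the sections that are absent from doc."""
    missing = []
    if "version" not in doc:
        missing.append("version")
    pipeline = doc.get("pipeline")
    if not isinstance(pipeline, dict):
        missing.append("pipeline")
        return missing
    missing.extend(
        f"pipeline.{key}" for key in REQUIRED_PIPELINE_KEYS if key not in pipeline
    )
    return missing


print(missing_sections(definition))
print(missing_sections({"pipeline": {"steps": []}}))
```

A real setup would validate against the schema rather than a hand-written key list; the sketch only shows how the header, the global section, the global environment, and the steps nest.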

Now,

let

me

walk

you

through

the

step

section.

C

Basically,

what

different

components

or

variables

you

can

use

in

the

step

container.

Here

you

can

see

we

have

environment,

condition,

secrets

and

control.

So

I

will

walk

you

through

these

briefly.

So

a

minimal

step

container

looks

like

this:

you

have

a

name,

you

have

an

image,

which

is

the

container

that

will

spin

up,

and

you

have

the

commands

section,

where

you

can

write

a

command,

or

pipe

multiple

commands

into

a

single

one,

or

have

a

script

to

execute

in

this

section.
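As a rough illustration of what a minimal step amounts to, the Python sketch below turns a step with a name, an image, and a list of commands into a `docker run` argument list. It is not how the POET engine necessarily launches containers; the `docker_argv` helper, the field names, and the choice to join commands with `&&` inside `sh -c` are assumptions made for this example.

```python
import shlex


def docker_argv(step):
    """Build a docker run argument list for one step (illustrative only)."""
    for key in ("name", "image", "commands"):
        if key not in step:
            raise ValueError(f"step is missing required field: {key}")
    # Chain the commands so that a failing command stops the step.
    script = " && ".join(step["commands"])
    return [
        "docker", "run", "--rm",
        "--name", step["name"],
        step["image"],
        "sh", "-c", script,
    ]


# Hypothetical step: the image and commands are placeholders.
step = {
    "name": "build",
    "image": "maven:3",
    "commands": ["mvn -B clean package", "ls target"],
}

# shlex.join quotes the script so the line can be pasted into a shell.
print(shlex.join(docker_argv(step)))
```

Because each step carries its own image, adding a new tool to the pipeline means publishing one more container rather than changing shared library code.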

C

Basically,

now

we

have

something

called

step-specific

environment

variables,

which

you

can

define

within

the

step.

You

have

global

environment

variables,

which

you

can

use

across

multiple

steps,

and

then

you

have

specific

environment

variables,

which

you

would

like

to

use

within

a

single

step,

and

that's

what

this

section

is

about.

You

can

define

the

environment

variables

here.

You

see,

in

this

particular

image

tag,

I'm

using

something

called

pipeline_app_version.

Wherever

you

see

pipeline_

like

this,

these

are

the

naming

conventions.

C

These

environment

variables

are

already

exposed

by

the

pipeline

library.

These

are

not

user-defined,

but

you

can

override

them.

As

you

start

writing

your

pipeline

or

the

command

section,

you

can

start

overriding

them

and

that's

possible.
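The layering of library-provided, global, and step-level variables can be sketched as a simple dictionary merge. The precedence used here (step over global over library-provided) is an assumption for illustration, as are the upper-case spelling `PIPELINE_APP_VERSION`, the other variable names, the registry host, and the `effective_env` helper; the talk only says that the `pipeline_`-prefixed variables are exposed by the library and can be overridden.

```python
from string import Template


def effective_env(builtin, global_env, step_env):
    """Merge variables; later layers override earlier ones (assumed order)."""
    merged = dict(builtin)
    merged.update(global_env)
    merged.update(step_env)
    return merged


# Hypothetical values for each layer.
builtin = {"PIPELINE_APP_NAME": "demo-service", "PIPELINE_APP_VERSION": "1.0.0"}
global_env = {"DEPLOY_REGION": "us-west"}
step_env = {"DEPLOY_REGION": "us-east", "PIPELINE_APP_VERSION": "1.0.0-rc1"}

env = effective_env(builtin, global_env, step_env)

# Substitute the variable into an image tag, as in the example on the slide.
image = Template("registry.example.com/demo:${PIPELINE_APP_VERSION}").substitute(env)
print(env["DEPLOY_REGION"], image)
```

Here the step overrides both a global value and a library-provided one, while `PIPELINE_APP_NAME` passes through untouched.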

Then

we

have

something

called

condition.

Let

me

give

an

example

of

how

we

use

a

condition.

So

in

this

case

we

have

a

when

clause

which

says

branch

master.

The

meaning

of

this,

basically,

is

that

this

particular

step

will

only

execute

when

the

branch

name

is

master.
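The branch condition can be pictured as a small filter over the step list. The sketch below is a Python illustration, not the engine's code: the `when` and `branch` key names follow the spoken description, while the `should_run` helper, the step names, and the images are hypothetical.

```python
def should_run(step, branch):
    """Return True when the step has no condition or its branch matches."""
    condition = step.get("when")
    if not condition:
        return True
    wanted = condition.get("branch")
    return wanted is None or wanted == branch


# One unconditional step and one step restricted to master.
steps = [
    {"name": "build", "image": "maven:3", "commands": ["mvn -B package"]},
    {
        "name": "deploy-qat",
        "image": "deployer",
        "commands": ["./deploy.sh qat"],
        "when": {"branch": "master"},
    },
]

for branch in ("master", "feature/login"):
    print(branch, [s["name"] for s in steps if should_run(s, branch)])
```

The same definition file can therefore travel unchanged from master into a feature branch, with the deploy step simply skipped there.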

So

let's

say

C

You

are

working

on

multiple

features

and

you

have

taken

a

branch

out

of

it.

Now,

with

a

single

pipeline.yml,

you

don't

want

to,

you

know,

delete

or

update

any

of

the

steps

from

the

single

pipeline.yml,

which

got

inherited

from

master

to

that

feature

branch,

to

make

sure

that

I

just

wanted

to

execute

something

to

deploy

on

the

QAT

environment,

but

I

don't

want

to

do

it

in

my

feature

branch