From YouTube: Community Meeting, April 26, 2022

A

Hey everybody, today is April 26th, and this is the weekly kcp community meeting. Thank you all for joining and, as I mentioned before, I started recording. If you do have an agenda item, please feel free to add it to the issue here. And Stefan, if it's okay with you, can we come back to your item and go on to the other ones first? Yeah, as usual, that's the last one. Okay! So Paul, you have the first comment here.

B

A

B

A

Yeah, so maybe a label. I mean, I don't know if we do a good job of housekeeping and putting those labels on. Before I forget, one thing: a friend of mine who works on SIG Multicluster reached out a few weeks ago and asked if we could come and present to the SIG about kcp in general. So if anybody is interested in trying to put something together, or reusing some existing material that we have, I'm sure we could get on their calendar sometime.

A

A

C

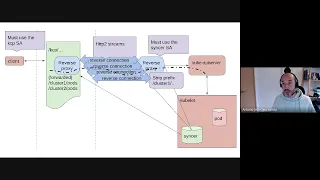

There you go, okay. That's to give you some context, because those are the requirements that I understood we have in kcp. So, as you see in the graph, we are going to have external clients. In the middle we have this green symbol, which is the kcp server, and we are going to have private clusters on the right side.

C

C

C

C

Why am I saying this? Because the trickiest thing here is to do kubectl exec and kubectl port-forward, since they use SPDY. So we need to upgrade the connection and reverse proxy those connections. With these reverse proxies we solve the problem of the different API calls that use SPDY. Then we have the problem that the client, the syncer in the private cluster, is the one that has to initiate the connection, and we need to be able to use that connection between these reverse proxies.

C

So there are different approaches that can be used: one is to create a TCP connection, or a gRPC tunnel, or to use WebSockets. The thing that I didn't like about those approaches is, for example, for WebSockets, multiplexing over WebSockets: I didn't find any library mature enough that allows multiplexing, and for scaling this solution we are going to need multiple reverse connections. gRPC seemed the best candidate, because gRPC already implements streaming by default.

C

But the problem is that for gRPC you need another endpoint, because, although it uses HTTP/2, serving it from the same server as the built-in Golang one is experimental and it has reported poor performance. So what I did is I created a small library to create reverse connections over HTTP/2 streams. I wanted to use this too, because that means that, if this works well, we could also implement it in Kubernetes upstream, moving away from SPDY to HTTP/2 streams and solving all the problems in question.

C

But that's the long shot. So conceptually the implementation is like this: you have a component that dials, an agent that connects to kcp, and then it establishes a control plane connection. When a client connects to a special URL in kcp, like the one that you can see, cluster one slash pods, the reverse proxy forwards that request through the reverse connection to the other reverse proxy and gets to the API server.
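The flow described above (the private side dials out, and the public side then sends requests back over that same connection) can be sketched in a few lines of Go. This is not the speaker's library: it is a minimal illustration under stated assumptions, using an in-memory `net.Pipe` in place of a real network connection and plain HTTP/1.1 rather than HTTP/2 streams. The names `oneConnListener`, `pipeAddr`, and `fetchOverReverseConn` are hypothetical.

```go
package main

import (
	"context"
	"fmt"
	"io"
	"net"
	"net/http"
)

// oneConnListener feeds already-established connections to http.Serve,
// so the side that dialed out can still act as the HTTP server.
type oneConnListener struct{ conns chan net.Conn }

func (l *oneConnListener) Accept() (net.Conn, error) {
	c, ok := <-l.conns
	if !ok {
		return nil, io.EOF
	}
	return c, nil
}
func (l *oneConnListener) Close() error   { return nil }
func (l *oneConnListener) Addr() net.Addr { return pipeAddr{} }

type pipeAddr struct{}

func (pipeAddr) Network() string { return "pipe" }
func (pipeAddr) String() string  { return "pipe" }

// fetchOverReverseConn simulates one reverse connection. agentSide is the
// end held by the agent in the private cluster (the dialer); serverSide is
// the end held by the public server, which uses it as an HTTP client.
func fetchOverReverseConn() (string, error) {
	agentSide, serverSide := net.Pipe()

	// Agent: serve HTTP on the connection it dialed out.
	ln := &oneConnListener{conns: make(chan net.Conn, 1)}
	ln.conns <- agentSide
	go http.Serve(ln, http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {
		fmt.Fprintf(w, "private cluster saw %s", r.URL.Path)
	}))

	// Server: instead of dialing the agent, reuse the connection it opened.
	client := &http.Client{Transport: &http.Transport{
		DialContext: func(ctx context.Context, network, addr string) (net.Conn, error) {
			return serverSide, nil
		},
	}}
	resp, err := client.Get("http://agent/api/v1/pods")
	if err != nil {
		return "", err
	}
	defer resp.Body.Close()
	body, err := io.ReadAll(resp.Body)
	return string(body), err
}

func main() {
	body, err := fetchOverReverseConn()
	if err != nil {
		panic(err)
	}
	fmt.Println(body)
}
```

A real deployment would need one such connection per concurrent request, or multiplexing over a single one, which is the motivation given in the talk for HTTP/2 streams.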

D

C

I think that is simple to see. So for the demo, what I did is, I have an instance in Google Cloud, and in Google Cloud I created a server that will play the role of kcp, so the kcp server would be this one. And the one on the bottom of the screen is the syncer. So when I run the syncer, this will dial against the URL of the kcp.

C

C

What it is doing right now is establishing a control plane connection, so that the server knows that it has a client attached. If it receives a connection, it sends a request to create a reverse connection and uses it to proxy the request from the client. So to use this, we can send requests directly. What I'm going to do is use kubectl directly, with a special URL.

C

34, this will be the base URL, and after this slash proxy, the part ending in 01 is the ID of my reverse proxy. You can see below that I'm dialing the kcp with this ID. That means that when I receive a request on that URL, I'm going to forward it through this reverse connection. The first time it always takes a while, because it has to do the discovery, and you can see that get pods works, all the requests work.
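The routing step described here, picking the reverse proxy ID out of the request URL and forwarding the rest of the path, could look roughly like the following. The `/proxy/<id>/...` layout and the function name `splitProxyPath` are assumptions made for illustration; the exact URL scheme used in the demo is not recoverable from the recording.

```go
package main

import (
	"fmt"
	"strings"
)

// splitProxyPath splits a path of the assumed form /proxy/<id>/<rest>
// into the reverse proxy ID and the path to forward to the remote
// API server. ok is false when the path does not match that form.
func splitProxyPath(p string) (id, rest string, ok bool) {
	trimmed := strings.TrimPrefix(p, "/proxy/")
	if trimmed == p || trimmed == "" {
		return "", "", false
	}
	id, rest, _ = strings.Cut(trimmed, "/")
	if id == "" {
		return "", "", false
	}
	return id, "/" + rest, true
}

func main() {
	id, rest, ok := splitProxyPath("/proxy/cluster1/api/v1/pods")
	fmt.Println(id, rest, ok) // cluster1 /api/v1/pods true
}
```

The server would then look up the reverse connection registered under that ID and send the rewritten request through it.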

C

C

C

C

Because I'm not taking the remote and I need to work on it, so we can have multiple connections, so we can have concurrent connections. The other tricky thing that we want to work is port-forward. So now this pod is an HTTP server. What I'm going to do is forward my local port 1899 to the port on the pod. So this is a bug that I have; the problem is here. You can see that only sometimes the body is received, and I need to look into that.

C

C

C

C

I don't know if you have another command. The trickiest one was the SPDY one, because you need to hijack the connection, and that's the thing that was tricky, because for that the implementation of the connection has to satisfy the Golang standard library. It does a tricky thing: you know that a Read call is blocking, but they have a special trick, which is to set the deadline in the past. It unblocks the Read call, and you need to implement that logic.

C

So when you want to create a fake connection and you want to make it hijackable, so that the server is able to hijack the connection, you need to implement the connection so that it is able to unblock a pending Read, and that is how it works. If you see the code, let me check that. The nicest thing here is that, as you can see, you don't need practically anything that is not in the standard library.

D

D

We should just sketch it out: what is the goal for P5? To get something in kcp like this, or is it a feasibility study? That's my understanding. So we have the tool, we have the library to do all of what we want, and now we want to integrate it and make a user story out of that, right? That's something to talk about.

C

D

What I'd say is, this URL will basically be like a virtual workspace. It's virtual because it's not a cluster workspace, but a real cluster. So we have to think about how to offer that to the user. I mean, you will not say minus minus server. You need some commands. We have to think about, maybe, kcp pods or something like that, which would then do those things you did here in the example.

D

A

A

A

B

A

E

Yeah, so we've got an open PR right now against the new kcp-dev client-gen repo with some clientset generators. I think that one's waiting for review. I've taken the changes to informer-gen that we made in upstream Kube and brought them into kcp, so there's another PR against the kcp-dev Kubernetes fork with the informer-gen changes. There's an open PR against kcp-dev apimachinery.

E

That's pulling in that round tripper that you wrote, Andy, with some changes to make it work with the new cluster stuff. I think that one's been reviewed, and I've responded to that. And we're in the process of getting the informer and lister gen scaffolds up; we're waiting to hook all that into the actual generator kit.

A

Cool, thank you for the update. I will say, for me, I am finding myself a bit swamped right now, and trying to get pull request reviews in is difficult at the moment. So if you want to err on the side of doing things, please feel free to proceed with the code on the other generators, if you're willing to. If you really want to wait for reviews on the first one, that's acceptable too. Just be aware that, at least for me, I don't have a bunch of spare time today.

E

A

Okay, yeah. I have a PR of my own that I need to clean up, and I have multiple PRs I need to review, so I will try to get to everything as quickly as I can. And if anybody else is interested, you don't have to be one of a select few to do reviews on any of our code, so please feel free to. Yeah, on the PRs, we welcome everybody to participate.

A

So if you want to take a look at stuff, the ones... actually, I don't know if you have... yeah, you're not in here, you're in a different repo. We'll get the links added to the agenda for review. But for anything in here, we would really, really appreciate it, folks: if you have spare time and you want to review, please don't feel like you need to stay away if you're a first-time contributor. We would love the contributions and the help.

A

All right, I'm going to move on to incoming issues. If anybody thinks of anything you want to chat about, please let me know. So it looks like we've got a few here. I'm going to start at the bottom. I filed this issue after a discussion with Stefan a month ago about having one kubeconfig and needing to split it up.

A

A

A

D

A

B

C

No, the problem with this is when the transport is not cacheable, because the client has an internal cache for the transports, and if you use a proxy, or you use something custom, I don't remember the details now, it's going to generate one connection for each version, for each group that you declare.

A

A

So I'm going to put help wanted on here, looking for someone to test and validate whether this is still something we need to fix or whether we're already sharing the underlying transport, and I'm going to put this in TBD. All right. Next is one that Kyle created about not recreating the admin token. I'm pretty sure that this is fixed, at least in my usage of it. When I stop and restart kcp locally, I keep the same token, I think. Kyle, do you?

A

F

A

Well, we were on a managed instance of kcp internally, and I know in that instance we were not preserving the .kcp directory, and so we were losing the root token. It was getting regenerated every time we restarted the service, because we'd restart a pod and we'd get a new .kcp directory. That we've solved. But that was a deployment issue, not a coding issue, so we just need to make sure that, basically, the admin token doesn't get regenerated.

A

D

D

Yeah, either that or we just build something in kcp. But the point is, the moment we start with just one SSO and people build RBAC rules on top of that, we are stuck in this world. I mean, we can make new names unique again, but then the names change. So the step from arbitrary usernames to something like SSO ID, colon, username will be hard anyway. It's something to think about for everybody interested in auth, if multiple SSOs should become a thing.

F

A

A username that happens to be identical, coming from something like that. All right, I'm going to put this in TBD. Different ways: we use Buildah to build the kcp image and we use ko to build the syncer image. I think long term we should unify. I'm going to put help wanted on this one, but priority-wise, it's not pressing.

A

A

Okay, and the last one is that if you run the kcp workload sync command multiple times, it fails every subsequent time. I do think it would be nice to either split this into... well, I do think it would be nice to be able to run this multiple times, especially if you want to reconfigure what resources you're planning on syncing.

A

A

A

All right. Well, I think this is a good first issue and help wanted, and this is the sort of thing I would tend to put into 0.5, coming up in the next milestone, because I think it's important. But I am going to put it in TBD, so we stick with the plan of actually doing milestone planning. So that's it for new issues. Since March 8th, where we