From YouTube: Scaling Down to Scale Up [Destination: Scale]

Description

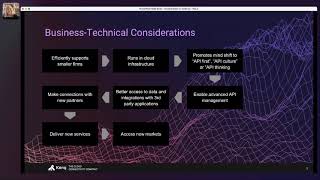

In this session from Destination: Scale, Jelena Duma, Sr. Director, Enterprise Architecture at NexJ, shares how they scaled down in order to scale up by moving to a microservices architecture. She also shares best practices for other organizations that are looking to scale and reach new audiences.

Learn more about Destination: Scale - https://konghq.com/events/destination-scale/?utm_source=youtube&utm_medium=video&utm_campaign=destination_scale

A

We are a software development company that primarily develops customer relationship management solutions for the financial services market. NexJ has been delivering CRM as the core of an advisor workstation for more than a decade. Now we have products specifically tailored to the sub-verticals of wealth management, private banking, commercial banking, corporate banking, sales, trading and research, and insurance, and each of these sub-verticals has its own specific requirements and required customizations.

A

These deployments are on-prem, deployed directly on our clients' systems. If you look at our biggest clients, for example Wells Fargo, Royal Bank of Canada, or UBS, you can see the large number of users and the number of systems that our applications needed to integrate with. For example, our CRM at Wells Fargo has over 35,000 users and 19 integrated systems.

A

The most important requirement for advisors in the financial industry is an uninterrupted workflow, which requires an easy transition from one system to another. We had to provide seamless integration points and make it so the advisor wouldn't even realize they were moving between systems. That's how our mindset started to shift to an API-first model, meaning that first we define APIs and integrations, and then we start to build the applications. An API's core functionality is all about connectivity.
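
As a rough illustration of the API-first idea described above, the contract can be written down before any service exists, and both the implementation and the integrating application are then built against it. This is only a sketch: the `ContactApi` interface, the `Contact` fields, and the in-memory service are hypothetical names invented for the example, not NexJ's actual API.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass(frozen=True)
class Contact:
    """Hypothetical payload shape agreed on before any service is built."""
    contact_id: str
    full_name: str


class ContactApi(Protocol):
    """The contract: defined first and shared with every integrating system."""

    def get_contact(self, contact_id: str) -> Contact: ...


class InMemoryContactService:
    """One possible implementation, written only after the contract exists."""

    def __init__(self) -> None:
        self._contacts = {"c-1": Contact("c-1", "Example Advisor Client")}

    def get_contact(self, contact_id: str) -> Contact:
        return self._contacts[contact_id]


def show_contact(api: ContactApi, contact_id: str) -> str:
    """An integrating application depends on the contract, not on the service."""
    contact = api.get_contact(contact_id)
    return f"{contact.contact_id}: {contact.full_name}"
```

Because `show_contact` only knows about `ContactApi`, the service behind it could be replaced without touching the integrating code.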

A

And, as we all know, competition is fierce when it comes to developing new applications. It's not only that apps have to be designed well, they also have to be shipped to the market in a timely manner, so we needed to remodel fast, and we had a technical challenge. Our biggest one was the large monolithic application that needed to be deployed on the cloud and integrated with standard cloud applications by using, for example, OAuth2 for authentication.

A

So we needed to implement that. We also needed to expose our APIs in a standard and secure way for those third-party integrations. We started to think about breaking down the monolithic capabilities into individual autonomous services that could be sold standalone, like adjacent applications that could be integrated with our CRM or external CRMs.

A

The Kong API gateway provided a uniform appearance for our APIs, no matter what technology stack we used in the back end. As we built more application features and made API version changes, the gateway hid away all structural API changes because the outward-facing endpoints stayed the same, and thus it provided stability for the client. And as our teams and developers are also our most important NexJ API clients, working with these new APIs provided a great developer experience.
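
The point about outward-facing endpoints staying the same while back ends change can be pictured as a routing table that only the gateway knows about. The following is a minimal Python sketch of that idea, not how Kong is implemented; the paths, host names, and function names are assumptions made up for illustration.

```python
# Hypothetical routing table: public paths stay fixed, upstream targets change.
ROUTES = {
    "/api/contacts": "http://contacts-v1.internal:8080/v1/contacts",
}


def resolve(public_path: str, routes: dict[str, str]) -> str:
    """Return the upstream URL for the longest matching public path prefix."""
    matches = [prefix for prefix in routes if public_path.startswith(prefix)]
    if not matches:
        raise LookupError(f"no route for {public_path}")
    prefix = max(matches, key=len)
    # Keep whatever follows the public prefix and append it to the upstream URL.
    return routes[prefix] + public_path[len(prefix):]


def release_new_backend(
    routes: dict[str, str], public_path: str, upstream: str
) -> dict[str, str]:
    """Repoint a public path at a new back end; the public path is unchanged."""
    updated = dict(routes)
    updated[public_path] = upstream
    return updated
```

A client keeps calling `/api/contacts/42` before and after `release_new_backend` repoints the route at a different version or technology stack; only the table held by the gateway changes.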

A

The Kong API gateway also gave us a good management tool for our APIs. We use the development portal to see the workspaces, services, and routes. We connected Kong to our EFK Elasticsearch stack for application monitoring, and we are looking into integration with Kibana for API tracing. And the most important feature that the Kong gateway gives us is security.

A

We use two-way SSL (mutual TLS) between our microservices. We hardened CORS by using Kong's CORS plugin. Since our microservices are running on Kubernetes, we use the Kong Ingress Controller to route our services and set up a load balancer per cluster, and we use Kong's Request Transformer Advanced plugin to transform our requests for health checks.
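
To make the CORS hardening step more concrete, here is a small Python sketch that builds a `KongPlugin` resource for Kong's CORS plugin and prints it as JSON, which `kubectl apply -f` accepts alongside YAML. The resource name, the allowed origin, and the specific methods and headers are assumptions chosen for the example; the talk does not say which values were used.

```python
import json


def cors_plugin_manifest(name: str, allowed_origins: list[str]) -> dict:
    """Build a KongPlugin resource that restricts CORS to explicit origins."""
    if not allowed_origins or "*" in allowed_origins:
        # A wildcard origin would defeat the purpose of hardening CORS.
        raise ValueError("list explicit origins instead of a wildcard")
    return {
        "apiVersion": "configuration.konghq.com/v1",
        "kind": "KongPlugin",
        "metadata": {"name": name},
        "plugin": "cors",
        "config": {
            "origins": allowed_origins,
            "methods": ["GET", "POST"],                   # assumed for illustration
            "headers": ["Authorization", "Content-Type"],  # assumed for illustration
            "credentials": True,
            "max_age": 3600,
        },
    }


if __name__ == "__main__":
    manifest = cors_plugin_manifest("cors-hardened", ["https://crm.example.com"])
    print(json.dumps(manifest, indent=2))
```

A plugin defined this way only takes effect once it is attached to an Ingress, Service, or consumer, typically through the `konghq.com/plugins` annotation.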

A

And each microservice in our infrastructure is built and deployed independently. As it is built independently, it is in its own Docker container, and these Docker containers then run in a Kubernetes cluster, which is an orchestrator, an internal network where the containers can communicate and make use of their resources.

A

So we use all the important Kubernetes objects, such as pods, which run our Docker containers; a master node that manages the other worker nodes; Kubernetes services that allow pods to communicate with each other; deployments that manage sets of pods; ingresses that allow pods to communicate with the network outside of the pods, in our case Kong; and config maps and secrets for external configurations. To set up the Kong API gateway and the Kong Ingress Controller, as well as all other resources, we used a declarative approach. We used YAML files to configure pods, services, and ingress resources.
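
The declarative approach described above means each resource is a plain data document that states the desired end state. The Python sketch below builds a Deployment and a matching Service for one microservice and emits them as JSON, which Kubernetes accepts in place of YAML. The service name, image reference, and port numbers are hypothetical placeholders, not values from the talk.

```python
import json


def deployment(name: str, image: str, port: int, replicas: int = 2) -> dict:
    """Deployment that manages a set of identical pods for one microservice."""
    labels = {"app": name}
    container = {"name": name, "image": image, "ports": [{"containerPort": port}]}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name, "labels": labels},
        "spec": {
            "replicas": replicas,
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {"containers": [container]},
            },
        },
    }


def service(name: str, port: int) -> dict:
    """Service that gives the pods of `name` a stable in-cluster address."""
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": name},
        "spec": {
            "selector": {"app": name},
            "ports": [{"port": 80, "targetPort": port}],
        },
    }


if __name__ == "__main__":
    # Hypothetical microservice name and image, for illustration only.
    for doc in (
        deployment("contacts", "registry.example.com/contacts:1.0.0", 8080),
        service("contacts", 8080),
    ):
        print(json.dumps(doc, indent=2))
```

Since the documents are just files, they can be kept in version control and re-applied, which is what makes rollbacks straightforward.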

A

We used custom resource definitions (CRDs) and Kubernetes-native tooling to configure Kong. That kind of approach is Kubernetes friendly. It supports version control and automation, and it is easier and faster to roll back. We used Kubernetes ingress resources to set up Kong's workspaces, routes, Kong services, and consumers.
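
As a sketch of how a route can be declared through a Kubernetes Ingress resource handled by the Kong Ingress Controller, the Python below builds one Ingress and prints it as JSON. It covers only a single route; workspaces and consumers are not shown. The Ingress name, path, backend service, and plugin name are hypothetical, and the `kong` ingress class assumes a default controller installation.

```python
import json


def kong_ingress(name: str, path: str, backend: str, port: int,
                 plugins: list[str]) -> dict:
    """Ingress that the Kong Ingress Controller turns into a Kong route."""
    annotations = {"konghq.com/strip-path": "true"}
    if plugins:
        # Comma-separated names of KongPlugin resources in the same namespace.
        annotations["konghq.com/plugins"] = ",".join(plugins)
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "Ingress",
        "metadata": {"name": name, "annotations": annotations},
        "spec": {
            "ingressClassName": "kong",  # assumes the default class name
            "rules": [{
                "http": {
                    "paths": [{
                        "path": path,
                        "pathType": "Prefix",
                        "backend": {
                            "service": {"name": backend, "port": {"number": port}}
                        },
                    }]
                }
            }],
        },
    }


if __name__ == "__main__":
    ingress = kong_ingress("contacts-route", "/api/contacts", "contacts", 80,
                           ["cors-hardened"])
    print(json.dumps(ingress, indent=2))
```

Keeping routes in resources like this one means a change to the gateway goes through the same review and rollback path as any other Kubernetes change.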

A

When coming from outside of our cloud, requests first reach the WAF (web application firewall). Then they go through the load balancer, which is configured by the ingress controller, and from the load balancer the requests are distributed over the gateway to the applications. In our Kubernetes setup, the Kong proxy service is exposed as a load balancer, and all access to the APIs is managed through the gateway and ingress resources.

A

And let's look at our roadmap. We are still looking into extending our Kong gateway setup. As I said before, we want to extend the usage of the development portal so that we can enable API tracing. Tracing of the API would increase observability and would be a great help in the troubleshooting process.

A

We are also looking into how to bring the developer portal to our teams so that they can subscribe to our own products and services, get a dedicated API key like regular clients, and use it for development of their applications. That would promote our engineering transformation to the API-first approach. And with the whole movement of GitOps and infrastructure as code, we are trying out Argo CD and automating our pipelines for easy cluster setup.