
From YouTube: Kubernetes SIG Apps 20230306

Description

No description was provided for this meeting.


A

Good morning, good evening, good afternoon, depending on where you are. Today is March 6th, and this is another of our bi-weekly SIG Apps calls. My name is Maciej, and I'll be your host. Today, one quick announcement: we are slightly over a week from the 1.27 code freeze, which is happening on March 14th (or the 15th, depending on where you are on Earth).

A

So that was all when it comes to the announcements. There were two main topics of discussion. The first one was already handled by Ken and me on Friday afternoon, when we synced prior to this call, and that leaves us with only the topic from Lucy Sweet about scaling Deployments up and down. So let's hear about the use cases and the idea behind it. Do you have a presentation that you want to share, or...

C

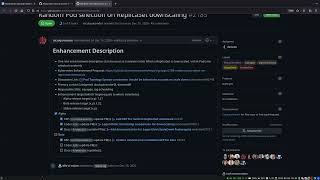

Yeah, just look at the issue; that'll work. Okay, so I'll give you the quick rundown of where this has come from. I work at Uber. Uber uses Kubernetes, I know, big surprise there, and we have a load of workloads running on K8s right now. While many of them are designed to withstand disruption from a load of instances being dropped all at once, or a load of instances appearing all at once, some of them aren't. Is that an anti-pattern? Yeah, probably, but it's an anti-pattern [inaudible].

C

What I was looking at doing is allowing end users to basically control the speed at which... so, right now, when you create a Deployment and you change the replicas number in it, that's immediately passed to the ReplicaSet, and the ReplicaSet instantly scales up or down, depending on what you changed the number to. There's no, what's the word, there's no slow rollout or anything; it's just instant in behavior.

C

So, effectively, it all happens in parallel. What I was thinking of doing is making it so that, if you opt into this behavior (opt-in, so it doesn't break people who already rely on the parallel behavior), you can scale up and down at a speed that you control. I guess the two things going on in my head are, first off:

C

Do we even want this? Because that's kind of important, obviously. And the second thing going on in my head is that there are quite a few approaches we could take to actually do this, so how would you do this? But I guess the first thing, before I say any more, is: do we even want this? Is this something that should even exist in the API? So I'll stop there for now. [laughs]
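
To make the opt-in idea concrete, here is a minimal sketch of stepped scaling under stated assumptions. The transcript does not specify any mechanism; `ScalePolicy` and `max_step` are hypothetical names, not Deployment API fields. With no policy the sketch jumps straight to the target (today's behavior); with a policy it moves toward the target by at most `max_step` replicas per reconcile pass. A real controller would presumably also wait for new pods to become ready between steps, which is not modeled here.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ScalePolicy:
    """Hypothetical opt-in policy; this field does not exist in the Deployment API."""
    max_step: int  # largest replica change allowed per reconcile pass


def next_replicas(current: int, desired: int, policy: Optional[ScalePolicy]) -> int:
    """Replica count to set on this pass.

    With no policy this mirrors today's behavior: jump straight to `desired`.
    With a policy, move toward `desired` by at most `policy.max_step`.
    """
    if policy is None or policy.max_step <= 0:
        return desired
    delta = desired - current
    step = max(-policy.max_step, min(policy.max_step, delta))
    return current + step


def scale_plan(current: int, desired: int, policy: Optional[ScalePolicy]) -> List[int]:
    """Intermediate replica counts a stepped controller would pass through."""
    plan = []
    while current != desired:
        current = next_replicas(current, desired, policy)
        plan.append(current)
    return plan


print(scale_plan(2, 10, None))                     # [10]
print(scale_plan(2, 10, ScalePolicy(max_step=3)))  # [5, 8, 10]
print(scale_plan(10, 2, ScalePolicy(max_step=3)))  # [7, 4, 2]
```

Keeping the policy optional mirrors the speaker's point that existing users who rely on the instant, parallel behavior should not be affected.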

A

Deployment and ReplicaSet have a safeguard that actually prevents scale-up or scale-down from being super excessive. Actually, I was checking the code earlier, before the call: when we're scaling up, we slowly speed up the scale-up operation. You start with one, and then, if everything goes well, we double the batch with every next iteration. So it doesn't scale immediately to whatever number you come up with; it goes one, two, four, and so forth.
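
The slow-start behavior described above can be sketched in Python as follows. This is a simplified paraphrase loosely modeled on the ReplicaSet controller's Go helper `slowStartBatch`; the Python names are illustrative. One detail matters for this discussion: a new batch starts as soon as the previous one succeeds, so when pod creation keeps succeeding, the whole scale-up still completes almost at once. The doubling limits how many API calls are wasted when creation is failing; it does not pace the scale-up over time.

```python
from typing import Callable, List, Tuple


def slow_start_batch(count: int, initial_batch_size: int,
                     fn: Callable[[], bool]) -> Tuple[int, List[int]]:
    """Call `fn` up to `count` times in batches of 1, 2, 4, ... (doubling after
    each fully successful batch) and stop after the first batch with a failure.

    Returns (number of successful calls, batch sizes attempted).
    """
    remaining = count
    successes = 0
    batch_sizes: List[int] = []
    batch_size = min(remaining, initial_batch_size)
    while batch_size > 0:
        batch_sizes.append(batch_size)
        # The Go controller runs each batch concurrently; sequential here for simplicity.
        ok = sum(1 for _ in range(batch_size) if fn())
        successes += ok
        if ok < batch_size:
            break  # a failing batch stops the remaining work
        remaining -= batch_size
        batch_size = min(2 * batch_size, remaining)
    return successes, batch_sizes


# Scaling from 0 to 10 replicas with every pod creation succeeding:
print(slow_start_batch(10, 1, lambda: True))  # (10, [1, 2, 4, 3])
```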

A

So I wonder, when you were testing it, what kind of scale-up and scale-down you were talking about: whether the numbers were so significantly big that this was, I don't know, DDoSing your cluster, and that's why you brought this topic up. In a similar fashion, there is indeed some unfinished work that I was actually looking at, and I just spotted it because the enhancement was closed. Previously we had the notion that a random pod was being chosen during downscaling, but that has changed with this enhancement, which is currently beta.

A

If I remember correctly, it wasn't promoted. We should probably pick it up and move it across the finish line, which would allow users to slightly affect the scale-down operation. So maybe, before answering those questions: what kind of problems do you run into with the current mechanism that led you to propose this enhancement?
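
The discussion does not name the downscale enhancement precisely. As one illustration of how a user-supplied signal can influence which pods go first on scale-down, Kubernetes offers the `controller.kubernetes.io/pod-deletion-cost` annotation, where pods with a lower cost are preferred for removal. The sketch below models that idea with a hypothetical `deletion_cost` field and a deliberately simplified ranking (not-ready pods first, then lower cost); the real ReplicaSet controller weighs more factors, such as scheduling state, phase, restart counts, and pod age.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Pod:
    name: str
    ready: bool
    deletion_cost: int = 0  # hypothetical user-supplied hint; lower = removed sooner


def pick_pods_to_delete(pods: List[Pod], count: int) -> List[str]:
    """Choose `count` pods to remove on scale-down.

    Simplified ranking: pods that are not ready go first, then lower
    deletion cost. sorted() is stable, so ties keep their input order.
    """
    ranked = sorted(pods, key=lambda p: (p.ready, p.deletion_cost))
    return [p.name for p in ranked[:count]]


pods = [Pod("a", ready=True), Pod("b", ready=True, deletion_cost=-5),
        Pod("c", ready=False, deletion_cost=100)]
print(pick_pods_to_delete(pods, 2))  # ['c', 'b']
```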

C

Okay, so I guess, very quickly: yes, when I was testing it on a cluster of my own, it rolled out in parallel. I guess my initial thought would be: does that progressive rollout happen if you change the pod generation? Maybe, but I don't know. Yeah, the actual problem we were dealing with is, what's the word...

C

Let's see if I can stay on the correct side of my NDA. It's basically that there are some services inside Uber, written by their service owners, where the pods communicate with each other. I've seen, for example, people run etcd on the service itself, and then, when you suddenly drop a load of them or introduce a load of them, the whole thing blows up in their face. Is that an anti-pattern? Yeah, sure, and it's definitely a challenge to deal with, but that's, that's...
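
To see why the etcd-on-the-service example is fragile, assume (as an illustration, not a description of the service discussed) that the pods form a Raft-style majority quorum, as etcd does. If more members than the cluster can tolerate disappear at once, before the membership is updated, the survivors can no longer form a majority. The arithmetic is sketched below.

```python
def quorum(members: int) -> int:
    """Majority required by a Raft-style cluster such as etcd."""
    return members // 2 + 1


def tolerated_failures(members: int) -> int:
    """Members that can vanish at once while a majority remains."""
    return members - quorum(members)


def survives_abrupt_removal(members: int, removed: int) -> bool:
    """True if `removed` members disappearing together (without first being
    removed from the cluster's membership) still leaves a quorum."""
    return members - removed >= quorum(members)


for n in (3, 5, 7):
    print(n, quorum(n), tolerated_failures(n))
# 3 2 1
# 5 3 2
# 7 4 3
print(survives_abrupt_removal(5, 2), survives_abrupt_removal(5, 3))  # True False
```

Under this assumption, an instant scale-down of a 5-member group by 3 pods loses quorum, while removing one pod at a time (and updating membership in between) would not.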

C

To give an example, it's mostly where pods talk to each other that it starts to become painful. And when I tested the behavior in minikube on, what was it, one point... I can't remember the latest version that minikube is supporting right now, it instantly brought them up in parallel, with no checks and no waiting at all, really.

A

Right, I would have to dig up what the API is called, because I can't remember, but basically there are options which allow you to decide how fast the rolling update happens. Have you tried changing those values? The only downside is that they take effect during a rollout and not during a scale operation, which is probably one of the issues that you might be running into.
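
The options being recalled are presumably the Deployment `RollingUpdate` strategy fields `maxSurge` and `maxUnavailable`, which are named later in the discussion. They bound how many extra pods may exist, and how many may be missing, while a rollout replaces pods; as noted here, they come into play during a rollout rather than a plain scale. Below is a Python sketch of how they resolve against the desired replica count, paraphrasing the Deployment controller's behavior: percentages for `maxSurge` round up, percentages for `maxUnavailable` round down, and if both resolve to zero, `maxUnavailable` is treated as 1. The Python names are illustrative.

```python
from typing import Tuple, Union

IntOrPercent = Union[int, str]  # an absolute count like 3, or a percentage like "25%"


def scaled_value(value: IntOrPercent, total: int, round_up: bool) -> int:
    """Resolve an int-or-percent against `total`, using integer arithmetic."""
    if isinstance(value, int):
        return value
    pct = int(value.rstrip("%"))
    product = pct * total
    return -(-product // 100) if round_up else product // 100


def resolve_fenceposts(max_surge: IntOrPercent, max_unavailable: IntOrPercent,
                       desired: int) -> Tuple[int, int]:
    """maxSurge rounds up, maxUnavailable rounds down; if both end up 0,
    maxUnavailable is bumped to 1 so the rollout can still make progress."""
    surge = scaled_value(max_surge, desired, round_up=True)
    unavailable = scaled_value(max_unavailable, desired, round_up=False)
    if surge == 0 and unavailable == 0:
        unavailable = 1
    return surge, unavailable


print(resolve_fenceposts("25%", "25%", 10))  # (3, 2)
print(resolve_fenceposts("10%", "10%", 5))   # (1, 0)
```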

C

Yeah, so to be clear, what I'm talking about here isn't a rollout. It's just changing the replicas number up and down, so it doesn't create a new pod generation, and it doesn't go through maxSurge, maxUnavailable, or anything like that. It's completely separate from, for example, changing something about the pod spec, and then the Deployment goes, "Oh, the pod spec changed, I need to roll out," if that makes sense. I mean, one of the proposals in this issue actually was to, yeah...