►

From YouTube: Kubernetes SIG Apps 20220613

Description

No description was provided for this meeting.

If this is YOUR meeting, an easy way to fix this is to add a description to your video, wherever mtngs.io found it (probably YouTube).

A

Hello

good

morning

or

potentially

good

afternoon

or

evening,

depending

on

where

you

are

in

the

world,

everyone.

This

is

the

July

or

sorry

June

13th

meeting

of

cigaps

I'm

going

to

be

hosting

I'm

Kenneth

Owens,

my

Jack

will

be

co-hosting.

I

think

you

might

have

to

give

out

a

little

bit

early

and

I.

Think

Janet

couldn't

make

it

this

time.

B

C

You

hear

me

now

for

work.

Yes,

there's

reason

it

is

when

I

mute

myself,

I

have

to

switch

devices

back

and

forth.

Well,

whatever

so

Ryan

put

this

into

the

agenda

last

time,

Philip

looked

into

the

test

itself

over

the

past

couple

of

days,

I've

already

approved

it.

Although

Philip

pointed

out

that

first

of

all,

we

can't

verify

the

serial

testing

even

manually

before

submission

and

their

there

appears

to

be

a

problem

with

this

particular

test

that

it

is

actually

failing.

C

Philip

tested

that

on

his

own

local

password,

so

the

test

is

approved

as

soon

as

and

Philip

is

working

on,

ensuring

that

we

can

actually

trigger

the

serial

test

in

a

pre-submits,

because

currently

it

is

not

possible,

assume

that

that

gets

sold

and

the

test

passes

to

the

passive

rights

to

to

merge

it.

So

this

should

be

handled

if

I.

Remember

correctly,

that's

one

of

the

last

pieces

for,

for

conformance.

A

E

E

E

The

motivation

behind

this

project

is

this

long-term

idea

of

migrating

a

staple

set

across

clusters,

so

we've

discovered

some

use

cases

for

certain

sets

of

users

that

may

want

to

move

safely,

sets

out

of

a

cluster

boundary

and

maybe

due

to

scalability

limits,

tenant

or

application

isolation.

So,

if

you

need

to

say,

move

out

of

a

shared

cluster

to

an

isolated

cluster

or

encountering

features

that

are

end

of

life

in

a

particular

cluster

and

are

only

available

on

new

clusters

when

they're

created.

E

So

the

goal

here

is

to

be

able

to

move

a

stapler

set

while

it's

still

hosting

an

application

in

it

piece

by

piece

from

a

cluster

a

to

Cluster

B.

A

lot

of

existing

Solutions

are

out

there

that

allow

for,

like

a

backup

of

a

staple

set

to

be

created

or

a

staple

application

to

be

created,

underlying

storage

to

be

snapshotted

and

then

rehydrated

in

new

cluster.

Unfortunately,

this

requires

schedule

of

Maintenance

and

downtime

for

an

application.

E

Next

slide,

please.

So

what?

In

order

to

do

this?

Building

block

well,

there's

several

building

blocks

that

are

required,

so

moving

an

application

over.

You

can

consider

a

process

of

say,

scaling,

scaling

down

a

stapler

set

and

cluster

a

and

scaling

it

up,

and

cluster

B

and

moving

PODS

over

so

replicas

of

the

application.

E

In

order

to

do

this,

though,

you

need

some

networking

configuration

in

order

for

an

application

of

cluster

a

to

talk

to

a

logical

application

of

cluster

B.

You

need

some

storage

orchestration

for

underlying

discs

to

be

moved

or

just

to

be

snapshotted.

If

we're

moving

across

zones,

for

example,

and

then

the

final

piece

is

the

actual

replicas

of

the

application

that

are

being

moved.

So

it

takes

a

a

three

replica

database.

E

That's

what

we're

talking

about

here,

moving

over

those

specific

instances,

so

maybe

moving

secondaries

over

performing

a

failover

and

then

bringing

up

a

primary

on

a

cluster

beat.

So

this

cap

is

just

focusing

on

the

third

problem

here.

I

know

that

there's

a

lot

to

be

solved

here

for

networking

and

storage,

but

just

focusing

on

creating

a

building

block

that

enables

this

third

problem

to

kind

of

be

solved

so

next

slide.

E

So

the

core

problem

here

that

can't

be

solved

today

with

staple

sets

if

they're

running

in

a

single

cluster,

is

that

there's

kind

of

this

split

playing

split

brain

control,

problem

where,

if

you

have

two

clusters,

they

have

separate

control

planes,

there's

no

way

to

really

have

a

logical

grouping

across

the

cluster.

So

in

order

to

run

a

logical

app

with

worker

nodes

in

both

clusters

need

a

way

to

be

able

to

have

a

global

view

of

the

resources

across

the

Clusters

so

either.

E

So

we

could

imagine

if

there's

a

three

replica

staple

set,

a

pods,

zero

and

one

and

then

cluster

a

and

then

pod

2

in

cluster

B

and

being

able

to

move

pod

by

pod

over

across

the

cluster.

And

you

can

imagine

you

know

if

you

have

more

replicas,

you

can

do

this

faster.

You

could

do

it

slower,

but

the

idea

is

having

a

mechanism

to

be

able

to

orchestrate

pod

movement

while

maintaining

application,

availability.

E

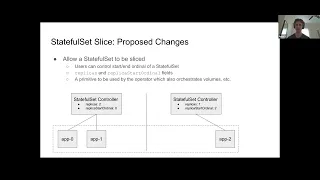

So

the

proposed

changes

in

the

skep

or

to

be

able

to

slice

a

staple

set

So

currently

there's

only

the

spec

replicas

field

enables

the

number

the

control

for

the

number

of

replicas

in

a

stapler

set.

But

the

proposed

changes

here

are

allowing

the

user

to

control

both

the

start,

ordinal

and

the

end

ordinal

So.

Currently

the

end

ordinal

control

already

exists

using

the

replicas

field.

The

proso

here

is

adding

a

new

field

called

replica

start

ordinal,

which

would

enable

kind

of

a

start

and

end

ordinal

to

be

defined

effectively.

E

E

You

could

do

this

by

using

the

replica

story.

Ordinal

field,

spinning

up

a

new

stapler

set

in

cluster

B,

adding

replicas

one

replica,

star,

ordinal

2

and

then

scaling

down

replicas

in

cluster,

a

so

that

you

have

the

same

replica

application

replicas

across

the

global

view

of

both

clusters,

but

you're

able

to

can

able

to

split

up

the

staple

set

using

those

fields

so

I'm

bringing

this

problem

to

cigaps

today

to

ask

for

some

discussion,

feedback

and

some

insight

into

this

idea

and

kind

of

presenting.

E

This

is

a

core

problem

rather

than

this

is

the

definitive

solution

we

want

to

take

to

kind

of

solve

this

problem,

I

just

kind

of

want

to

get

some

feedback

for

feasibility

of

this

idea.

If

people

have

major

concerns,

if

people

can

think

of

alternative

ways

to

kind

of

solve

this

problem

without

needing

to

add

this

particular

API,

so

I'm

going

to

stop

there

and

just

open

the

floor

for

a

discussion.

A

A

So

but

I

guess

the

the

thing

here.

It

would

help

if

you

could

probably

give

a

more

specific

example

of

what

you're

trying

to

do

in

terms

of

application.

Availability

under

these

constraints,

right,

like

I'd,

have

to

imagine

that

the

client

application

for

this

lives

outside

of

either

cluster

right,

because

you're

tearing

or

does

it

like,

I

I,

don't

understand

what

the

networking

would

look

like.

A

E

See

yeah,

that

makes

sense.

So,

let's,

let's

take

a

scenario

of,

say,

a

three

bucket

replica

database

that

we're

moving

over

and

for

ease

of.

Like

example,

let's

say

it's

a

primary

secondary,

so

one

primary

two

second,

two

secondaries,

so

the

orchestration

of

the

storage

would

kind

of

be

an

external

component

and

that's

still

something

that

we're

working

on

prototyping

and

kind

of

thinking

of,

but

it

would

require

orchestration

of

some

external

system.

E

That's

unrelated

to

this

specific

stapler

set

cap

that

we're

discussing,

but,

like

one

example,

could

be

say

you

initiate

this

process

from

a

staple

set

and

you

determine

which

volumes

are

actually

consumed

by

the

staple

set

Maybe

by

an

application

selector

that

the

user

specifies

there'd

be

potentially

some

agent.

That

would

be

migrating.

These

references

so

recreating

persistent

volume.

Persistent

volume

claims

in

the

new

cluster

that

point

to

the

same

underlying

storage.

E

A

A

So

you

have

to

detach

it

from

one

I

guess,

which

I

think

the

thing

that

that

I

struggle

with

is

that,

like

without

the

motivating

example

of

the

external

orchestrator,

it's

unclear

on

like

what

the

best

thing

to

do

a

stateful

set

is

in

order

to

help

and

without

like

some

details

about

the

system

that

would

actually

migrate.

The

persistent

volume

claims

into

consistent

way

like

it's

hard.

B

A

Reason

about

the

correct

behavior

of

Staples

set

in

this

context,

because

you

are

talking

about

probably

several

orchestrators

that

will

be

required

in

order

for

this

to

work

effectively,

leaving

networking

out

of

it,

which

I

don't

fully

understand,

like

I,

think

they're

if

you're

looking

at

replicating

across

geographic

regions

or

geopolitical

boundaries.

Aside

from

instead

of

just

clusters

in

the

same

like

data

center,

there

might

be

entirely

different

constraints

there.

E

E

So

I

guess

the

one

thing

that

for

for

networking

that

we're

considering

using

multi-cluster,

Services

I

know

this

cap

was

introduced

in

2020,

and

it's

gained

some

traction

kind

of

so

being

able

to

use

that

for

networking

across

clusters

would

enable

that

to

be

set

up

with

the

application

before

migration

is

initiated,

but

I

yeah,

I,

agree,

I,

think

the

the

major

unknown

here

is

really

the

storage

orchestration,

that's

something

that

hasn't

really

been

proposed

or

like

brought

to

the

community.

Yet.

A

On

the

plus

side,

I

do

think

that

the

implementation

for

really

straightforward

right,

because

all

you're

saying

is

give

me

an

extra

feel

that

determines

the

starter

number

of

the

orbitals

for

enumeration

and

then

like

instead

of

my

replicas

starting

at

I,

would

go

at

two

and,

if

I

scale

up

to

three,

for

instance,

in

your

on

the

second

screen

over

here,

it

would

be

at

two

at

three

at

four

right.

That's

basically

what

your

ask

is.

A

Yeah

that

seems

like

the

cap

has

the

benefit

of.

Like

I

mean

it

does

seem

fairly

straightforward.

The

the

kind

of

downsides

from

my

perspective

is

that,

like

the

motivation,

is

a

little

bit

weak

without

understanding

what

the

external

orchestrator

is,

that

it

would

actually

be

interacting

with

all

right,

because

you're

at

like

the

the

typical

thing

we

would

say

is

like

you

know,

you

could

always

do

this

work

out

of

tree.

You

can

always

Fork

Staples

set.

A

You

can

always

operate

with

your

third

party

controller,

using

custom

resource

definitions

and

just

have

a

blast

right

like

there's.

No,

nothing

stopping

you

from

doing

that

today.

If

you

want

to

put

it

as

a

feature

in

core

kubernetes

like

inside

of

a

V1

API,

it

would

be

helpful

in

terms

of

facilitating

that

to

have

some

strong

motivation

in

terms

of

the

systems

that

would

actually

benefit

from

making

the

modification

shepherding

it

in

and

then

maintaining

it

over

time.

E

A

I

mean

that's

my

two

cents,

but

my

two

cents

is

not

exhaustive

of

the

entire

kubernetes

community,

so,

like

I,

think

it's

worth

it's

worth

offering

it

it's

just

again

like

I'm,

just

giving

you

my

feedback

about,

like

you

know,

looking

at

it.

What

I

see

is

kind

of

like

the

strength

of

it

and

kind

of

the

weakness

of

it,

which

is

like

the

feedback

you

asked

for

the

feedback

that

I'm

seeing

is

like

the

feedback

that

comes

to

my

mind

is

like

it's

really

hard

for

me

to

wrap.

A

My

head

around,

like

like

I,

get

what

you're

trying

to

say

but

like

making

a

change

to

a

V1

API

that

we're

going

to

support

pretty

much,

because

once

it

goes

GA,

that's

it's

forever

and

not

having

any

client

systems

that

would

actually

be

able

to

leverage

that

to

provide

value

to

the

end

user

is

is

a

hard

thing

to

motivate

right

that

that's

kind

of

the

way

I

see

it.

There

may

be

other

people

like

you

know.

If

you

offer

the

cap,

there

may

be

like

a

bunch.

A

Maybe

there

are

pre-existing

systems

like

you

know,

VMware,

and

so

then

a

lot

of

the

other

storage

providers

have

existing

systems

that

will

attempt

to

migrate

storage

across

clusters

already.

So

maybe

this

integrates

well,

and

maybe

the

the

thing

you

can

do

is

just

by

offering

the

captain

the

community

get

more

motivation

and

other

people

saying

like

yeah.

This

would

really

help.

You

know

so

like

that.

A

That's

a

starting

point

that

would

maybe

motivated,

but

as

it

is

like,

if

you

were

saying

like

hey

I've,

got

this

system

and

I

think

there

are

some

other

systems

in

the

community.

That

would

benefit

from

this

feature

and

it

would

allow

us

to

actually

more

reasonably

like

lower

the

disruption

of

doing

cluster

upgrades,

because

a

lot

of

kubernetes

providers

do

encourage

effectively

blue

green

or

red

black

or

how

you

should

maintain

your

clusters.

Right,

like

they

don't

say

in

place,

upgrade,

is

the

the

happy

path.

A

They'll

tell

you

roll

up

a

new

cluster

and

then

migrate,

your

workloads

to

it

and

then

turn

the

old

one

down

and

I

totally

get

that

so,

like

you

know,

trying

to

do

things

in

that

space

to

facilitate

that

I.

Think

I

think

it's

worth

doing,

but

it's

just

hard

for

me

to

look

at

this

and

say

like

okay,

so

we're

gonna,

Commit

This,

take

this

cat

commit

this

patch.

Carry

this

patch

and

I:

don't

have

a

user

for

it

right.

F

So

like

the

only

way

we

could

see

you

know

doing

this

without

changing

stateful

set

is

basically

forking.

The

stateful

set

controller.

Anybody

who

wants

to

use

this

instead

of

using

a

stateful

set

for

their

workload

would

have

to

use

our

special

stateful

set

version

instead

and

you

know,

and

like

I

kind

of

have

a

complete

Fork.

So

a

part

of

the

idea

of

this

cap

is

like:

how

can

we

do

a

minimal

change

to

the

stateful

set

to

allow

these

extended

usages?

You

know,

but

still

have

as

much

as

possible.

F

A

F

So

we're

actually

in

the

process

of

doing

a

proof

of

concept

of

this

I

mean

I

I,

think

the

plan

we

have

currently

is

actually

to

have

a

fork.

Stateful

set

controller

I

mean

considering

that

we

want

to

do

our

proof

of

concept

in

the

next

few

months,

like

that's

kind

of

the

option

and

like

in

parallel.

We

are

per

proposing

this

cap,

which

is

our

current

plans

now.

F

You

know,

because

we're

still

in

the

proof

of

concept

phase

like

I

feel

like

there's

still

quite

a

bit

of

scope

for

changing

how

we

do

this,

which

is

the

you

know

that

this

is

the

motivation

for

starting

the

discussion

on

this

cap,

but

yeah

like

in

the

next

couple

months.

We

plan

to

have

a

POC

of

the

volume

controller

out,

which

I

think

will

maybe

help

clarify

this

use

case,

and

you

know,

could

could

help

more

concrete

discussion

when.

F

Or

that

is

the

ultimate

plan,

yes,

but

because

it's

like

we

don't

have

to

change

anything

core

to

do

like.

Currently,

at

least

as

we

see

it,

we

can

do

it.

You

know

purely

as

a

out

of

tree

controller,

so

we

haven't

sort

of

started

the

Upstream

process,

yet

until

we

I

mean

we

kind

of

need

to

get

an

implementation

and

actually

test

stuff

before

we

start

making

wild

speculations,

you

know.

A

Yeah

so

I

mean

like

I.

Like

again,

my

gut

reaction

is

the

touch

is

light

like

you're

asking

to

change

the

beginning

of

the

ordinal

numbers.

It

doesn't

seem

like

there's

not

a

whole

lot

of

things

that

would

break

for

current

customers.

I

can

figure

it

like.

It

can

think

in

my

head,

pretty

trivially

how

to

do

it

in

a

way.

That's

Backward,

Compatible,

so

releasing

it

is

fine.

The

main

thing

is

like

once

it's

out

there:

it's

it's

out

there

forever

like

and

you

you.

A

If

you

had

like

a

like

three

or

four

different

use

cases

where

it's

like

these

are

the

things

it

supports.

Then

you

know

that

would

be

a

strong

motivation.

You

don't

have

three

or

four,

though

you've

got

like

one.

It's

a

good

use

case

right

but

like

if

you're

already

like,

if

you're

already

like

look

I,

got

a

fork

it

in

order

to

make

this

work

for

my

POC,

then

I'm

gonna

do

the

volume

controllers

and

release

them.

A

Why

not

run

with

that

and

then,

like

you,

can

keep

the

cap

open

and

get

more

Community

feedback

and

kind

of

try

to

build

momentum

around

what

you're

trying

to

do

here

and

then

from

there.

You

know

when

you

have

something:

that's

a

little

bit

more

like

concrete.

We

can

look

at

like,

but

really

have

some

evidence

and

some

signal

to

assess

like

okay.

This

is

like

the

right

thing

and

then

we

can

look

at

bringing

in

and

trade

yeah.

F

F

F

A

It

wouldn't

be

too

bad,

like

you're,

never

going

to

be

in

that

case,

but

like

would

you

expect

orbiting

Behavior,

or

would

you

expect

deletion

Behavior

like

what

happens

if

you

set

this

field

if

it

hasn't

been

set

previously?

If

that's

not

in

there,

you

might

want

to

think

about

adding

what

the

desired

behavior

is

there,

because

I

could

get

a

little

bit

hairy,

but

I

even

don't

think

that,

in

terms

of

a

coronary

cases,

particularly

like

nasty

nasty,

yeah,

yeah

I.

E

It

can

lead

to

some

unexpected

if

the

user

isn't

aware

of

that.

Behavior

like

it

can

lead

to

some

application

like

effectively.

You

know,

if

you

had

replica

star

ordinal,

that

was

quite

High

say

like

three:

it

would.

You

know,

try

to

delete

pods

that

are

greater

than

a

certain

ordinal

and

then

recreate

the

ones

from

like

zero

to

two.

A

The

fidelier

bid

is

actually

with

the

pbcs

and

the

storage

creation

right,

the

storage

provisioning

because,

like

especially

with

a

staple

application,

your

expectation

is

you're

going

to

get

very

particular

volumes

associated

with

your

your

staple

set

right.

Yeah

you're

gonna

have

data

on

them,

but

now,

with

default

settings

you're

not

going

to

lose

that

data,

it

would

still

be

there.

You

should

probably

be

able

to

retrieve

it

by

scaling

the

staple

so

not

particularly,

but

associating

the

exact

volume

with

the

exact

pod

that

has

the

identity

you

want.

A

It

is

going

to

be

fiddly

if

you

roll

back

right

like

if

all

of

a

sudden

you

were

at

you

know

like

if

you

created

a

staple

set,

start

at

three,

give

me

three

replicas,

so

you

have

three

four

five

six

and

then

you

go

back

to

an

old

cluster.

You

have

three

replicas,

it

doesn't

say,

started

three.

You

have

zero

one.

Two

three,

those

are

gonna

be

brand.

New

hives

they'll

have

no

data,

so

whatever

the

application

does

on

initial

provision,

you

need

is

what

you

get

there.

A

You

should

be

able

to

recrate

reclaim

your

data

by

scaling.

It

up

to

like

six,

if

that's

possible,

but

in

any

case

you

could

be.

You

would

at

least

be

able

to

break

glass.

Get

your

PVCs

back

and

get

your

volumes

out.

The

default

behavior

isn't

going

to

be

a

data

loss

which

is

like

that

tends

to

leave

people

in

consolable

right,

like

that's

like

generally

unacceptable,

so

that

you

wouldn't

get

that

behavior.

A

So

I

mean

that

that's

why

I'm

like

this

doesn't

seem

like

super

risky

or

super

like

I'm,

not

looking

at

like

this

is

like

crazy

I'm.

Just

looking

at

it

like

you'd,

be

helpful

to

have

a

strong

motivation

for

it,

but

that's

the

feedback.

I

have

but

I've

opened

up

and

does

anyone

else

have

anything

to

add

or.

B

A

D

D

Let's

say

if

a

user

knows

a

particular

exit

code

to

consider

a

pod,

failed

and

and

not

recoverable,

there

is

no

way

to

immediately

fail

the

entire

the

entire

job

and,

on

the

other

hand,

there

is

no

way

to

control

when

a

pod

failure

is

due

to

infrastructure

errors

versus

the

the

user's

infrastructure.

Errors

would

be

things

like,

they

know

it

goes

down

or

they

know

it

is

preempted

or

keep

scheduler

had

to

preempt

the

Pod

things

like

that.

D

D

History,

the

stories

the

user

stories.

Okay.

Yes,

so

that's

that's

the

context,

so

we

are

proposing

this

this

API

that

precisely

allows

you

to

to

determine

specialized

certain

failures

by

by

exit

code

or

by

the

failure

reason,

and

to

take

two

decisions

either

to

completely

terminate

the

job.

For

example,

can

you

stop

there?

For

example,

here

we

have

the

rule

down

there

in

the

yaml

we

have

the

rule

terminates

when

the

exit

code

is

not

between

40

and

50..

D

This

is

more

useful

for

for

infrastructure

providers

because

they

don't

they.

If

somebody's

providing

a

job

system

right

for

researchers,

the

the

administrators

don't

know

what

exit

codes

the

the

application

might

have

so,

but

what

we

do

know

is

that

cubelet

or

other

controllers

insert

a

certain

reason

in

the

body

status

to

to

signify

why

the

Pod

was

terminated

or

to

explain

why

the

plot

was

terminated.

So

here

we're

adding

this

other

rule

the

for

example,

in

in

the

case

of

preemption,

or

no

no

pressure

eviction.

D

D

I

want

to

highlight

a

few

risks

that

one

of

the

risks

is

precisely

these

status

reasons

that

are

not

currently

fully

documented

in

kubernetes,

but

we

want

to

to

do

a

survey

of

all

the

the

reasons

that

we

are

currently

introducing

and

document

them

in

in

the

website

and

also

move

all

the

constants

to

the

core

V1

package.

So

they

they

are

more

discoverable

and

also

they

have.

They

are

subject

to

API

reviews

and

the

the

other

somehow

risk

is

that

you

know.

D

Currently

the

garbage

collector

removes

spots,

so

there

could

be

a

scenarios

where

we

lose

the

the

status

before

we

can

take

a

decision,

but,

as

you

might

remember,

remember,

we

have

already

decent

going

feature

for

for

accounting

job

failures

using

finalizers.

So

once

that's

completed

this

no,

this

will

no

longer

longer

be

a

an

issue,

and

additionally,

there

are

certain

back

to

reasons.

D

There

are

certain

reasons

that

are

currently

or

there

are

certain

components

in

kubernetes

that

currently

delete

pods,

and

they

don't

explain

why

one

of

them

is

keeps

scheduler,

for

example,

when

it

preamps

a

pod,

it

just

deletes

the

Pod.

It

doesn't

say

why.

So

we

want

to

also

survey

all

these

users

usages

usages

of

deletion,

delete

pod,

delete,

API

and

I'll

add

a

reason

for

it.

So

we

would

be

adding

a

delete,

an

option

for

the

delete

API.

So

we

can

include

that

reason

that

can

be

added

to

the

Pod

status.

D

D

C

I

started

thinking

now

that

I

was

listening

to

how

we're

explaining

whether

we

should

split

the

reason

into

separate

cap,

because

that's

kind

of

like

one

thing

of

the

proposal

and

the

other

one

is

just

just

a

job

related

changes

and

then

make

depend

on

the

other.

That's

probably

something

else.

My

main

question

I

already

left

at

Indica

as

well.

Is

there

any

particular

reason

why

you

went

with

slightly

complicated

API

approached

towards

defining?

C

D

D

Ultimately,

I

think

this

is

more

of

an

implementation

detail

that

we

can.

We

can

fix,

but

yes,

I'm

I'm

working

with

with

me.

How

was

writing

the

cap

to

to

try

to

find

the

closest

API

to

existing

label

selectors

and

your

so

back

to

your

first

question:

if

I'm

hearing

correctly,

you

are

suggesting

we

split

this

in

two

caps,

one

just

for

you

know

the

delete

options

and

adding

reasons

and

one

for

controlling

the

job

for

the

con.

The

job

failures

and

updates.

D

I

guess

that

would

just

need

a

extra

extra

review.

I

mean

we're

already

adding

seat

API

Machinery

to

the

to

the

to

the

cap

as

participating,

and

the

reasons

change

itself

is

not

that

big

and

it's

hard

to

justify

by

itself

so

kind

of

I

I.

Think

having

it

as

a

single

cap

provides

a

bigger

picture.

But

if

you

disagree

we

can

we

can

split

it.

C

That's

why

the

kind

of

similar

to

labels

selectors

approach

seemed

a

little

bit

more

simplistic

on

the

API

surface,

but

it

will

be

also

much

easier

to

extend

in

the

future

if

you

decide

to

add

additional

operators

or

additional

fields

on

either

side

of

the

of

that

condition.

I

I,

it's

probably

something

that

we

can

discuss

in

the

cap

itself,

but

it

yes.

B

D

Yeah,

it's

I!

Guess

it's

it's

simpler!

If,

if

we

can,

if

we

are

looking

at

extending

it,

but

it's

a

little

bit

more

work

for

the

user,

if

it's,

if

you

just

want

to

provide

Min

and

Max,

because

now

you

have

to

Define

an

array

of

conditions

of

comparisons,

I

guess,

instead

of

a

single,

a

single

field:

okay,

but

I'll

I'll

go

back

to

the

let's

say

to

the

Whiteboard

to.

D

C

Definitely

because

I'm

pretty

sure

that,

as

soon

as

we

expose

this

kind

of

API

a

lot

of

a

lot

of

people,

a

lot

of

people

will

start

relying

on

it.

I'm

positive

that

the

fact

that

you

will

be

just

implementing

this

and

using

this

in

the

job

controller

will

be

then

used

further

by

other

consumers

and

other

controllers

who

are

implementing

or

working

directly

with

bots

in

a

similar

fashion.

C

C

Having

both

the

reason

and

the

exit

code,

and

maybe

potentially

something

in

the

future,

is

one

of

the

reasons

that

I'm

thinking

of

being

able

to

easily

expand

the

the

API

surface,

but

rather

than

having

more

and

more

Fields

added

the

fact.

If

we

go

through

the

yeah

I,

don't

like

the

comparison,

but

it's

probably

the

closest

the

labels.

Electoral

life

mechanism

would

allow

us

for

a

little

bit

more

flexibility

when

it

comes

to

expanding

that

surface.