►

From YouTube: Kubernetes WG Batch Bi-Weekly Meeting for 20230216

Description

No description was provided for this meeting.

If this is YOUR meeting, an easy way to fix this is to add a description to your video, wherever mtngs.io found it (probably YouTube).

A

Good

morning,

good

evening,

good

afternoon,

depending

on

where

you

are

today

is

February

16th-

and

this

is

another

of

our

bi-weekly

worker

batch

calls.

My

name

is

Matthew

and

I'll.

Be

your

host

today

and

I

see

that

we

have

topics

on

the

agenda

already

from

Aldo,

so

I

guess

I'll

go

take

it

away

from

here.

B

B

B

C

B

Is

that

from

now

on,

we

will

have

a

backwards

company

backwards

compatibility

guarantees,

so,

from

now

on,

you

wouldn't

be.

You

will

have

time

to

migrate

and

proper

tooling

for

migration

and

an

important

feature

that

was

highly

requested

from

the

beginning

of

the

project

is

preemption

and

I'm.

Gonna

talk

also

a

little

bit

about

how

it

works.

B

B

B

B

B

If

you,

if

you

look

at

look

at

the

slides

later,

you'll

find

all

the

links

to

to

the

dogs

or

PRS

and

whatnot,

but

I

wanted

to

highlight

a

few

changes.

In

particular

this

one

we

GK,

we

actually

run

some

ux

research

studies

and

also

from

feedback

we

got

from

from

early

users.

We

we

learned

that

people

didn't

didn't

understand.

B

It

got

confused

with

these

two

terms

that

we

use

for

quora

mean

unbox,

so

we

we

did

a

bunch

of

brainstorming

to

figure

out

how

to

properly

name

these

fields,

but-

and

we

just

ended

up

with

these

two

names-

which

we

hope

are

more

understandable.

So

here

we're

saying

that

the

CPU,

the

CPU

resource

has

a

flavor

of

spot

and-

and

we

have

40

CPUs

available

of.

C

B

Now

the

next

field

is

a

borrowing

limit,

which

means,

once

you

have

reached

your

quora.

If

your

cluster

queue

belongs

to

a

cohort,

then

you

can

borrow

quora

from

these

other

cluster

queues

in

the

same

cohort.

So

and

then

this

is

the

the

limit

of

how

much

you

are

able

to

borrow

from

all

those

other

cluster

queues.

So

I

guess.

The

only

thing

to

note

here

is

that

now,

instead

of

Max,

we

are

doing

you

know

the

extra

which

is

60

so

then

60

plus

4

is

the

old

Max.

D

B

Servers

priority

on

furnace

if

you're

familiar

with

it,

which

also

deals

with

quotas

and

sharing

and

so

on.

So

they

already

had

decided

this

these

two

terms

and

we

found

out

that

they

fit

nicely

with

our

semantics

as

well.

So

we

are

doing

that

yeah

so

with

that

I

wanted

to

also

give

a

shout

out

to

Jordan

Liggett,

who

gave

us

a

quite

a

few

hours

of

review

of

the

API.

B

So

we

we

did

all

of

all

of

this

well

well

doing

this

proposal

for

a

for

a

beta

API.

Now

still

in

the

same

in

the

same

object,

we

went

a

bit

further,

so

you

can

see

here

on

the

left

kind

of

the

updates,

the

updated

spec

for

for

the

cluster

queue.

So

basically,

you

can

see

that

we

specify

multiple

resources,

CPU

and

memory

and

then

for

each

resource

we

specify

flavors

and

for

each

we

specify

the

quota.

Now.

B

What's

the

problem

here,

so

usually

things

like

CPU

and

memory

are

very

deeply

coupled

because

they

they

are

tied

to

the

to

the

node.

So,

usually,

you

want

the

same

flavors

to

be

listed

for

CPU

and

memory

and

of

course

you

can.

You

cannot

assign

a

flavor

of

spot

to

CPU

and

then

a

flavor

of

on-demand

to

memory

that

that

will

be

incompatible

so

in

in

a

way

we

actually

want

to

define

a

flavor.

Instead,

that

has

the

flavor,

gives

you

CPU

and

memory.

So

that's

that's

the

the

change.

B

We

theory

was

needed

to

give

a

contour

example

here

we

have,

for

example,

a

license

which

a

license

probably

is

not

tied

to

the

VM

right,

so

it

it

doesn't

have

the

same

flavors

as

the

other

resources.

So

now

you

have

here,

you

have

well,

we

just

put

a

some

boilerplate

here,

but

let's

say

you

have

license

for

the

operating

system,

so

you

have

only

disorder

for

Windows

and

this

much

quota

for

Linux

and

then

this

is

not

tied

to

do

the

other

resources

anyways.

B

B

B

B

A

B

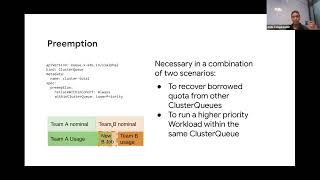

Okay

sounds

good,

sounds

good,

so

the

next

Feature

Feature

that

we

have

been

working

on

is

preemption,

so

impression

I

wanted

to

simplify

with

this

graphic.

Here.

Let's

say

you

have

this

nominal

quota

for

team,

a

on

the

left

on

the

green

and

then

this

nominal

quota

for

Team

B

in

Orange.

Now

you

have

this

usage

this

this

green

block.

So

this

these

two

cluster

cues

are

tied

together,

meaning

that

they

can

share

resources.

They

can

borrow

resources

from

each

other.

B

So

let's

say

team

a

is

currently

using

this

using

extra

quota

that

Team

B

is

not

using

right.

Team

B

on

the

right

is

using

less

than

its

minimal

quota.

Then

team

a

can

borrow

the

quota.

That

b

is

not

using,

but

now

we

have

a

new

job

coming

from

B,

so

B

wants

to

recover

its

quota

because

it

belongs

to

it's

part

of

his

part

of

its

nominal

quota.

B

So

what

should

happen

here

is

that

we

Q

needs

to

preempt

some

workloads

that

are

running

in

this

quota

to

accommodate

for

for

Team

B

for

the

new

workload

from

TB.

So

that's

that's

the

that's

one

scenario

of

of

preemption

that

you

want

to

recover

quota

from

from

cluster

queues

that

are

currently

borrowing

from

you.

B

B

You

can

say:

never

you

can

say

always,

or

you

can

say,

I

only

want

to

preempt

if

the

priority

from

in

the

priorities

I

only

want

to

print

workloads

that

have

lower

priority

than

me

so

and

and

just

as

a

reminder,

this

cluster

queue

is

an

object

that

administrators

set

up,

not

users

not

end

users.

So

this

is

setting

policies

right.

This

is

setting

policies

from

the

organization

whether

you

know

the

preemptions

should

respect

or

not.

The

priority

might

depend

on

your

needs.

B

Another

another

scenario

for

for

preemption

is

within

within

the

cluster

queue.

Let's

say

there

is

no

more

space

to

borrow

Team

B

is

using

everything

you

have

a

higher

priority,

high

priority

workload

coming

for

a

then.

In

that

case,

you

might

actually

want

to

preempt

some

workloads

within

that

are

running

within

the

quarter

of

a

so

that's

the

second

scenario-

and

this

is

the

second

field-

the

policy

that

this

second

field

is

controlling,

so

preemption

within

cluster

queue.

Again

you

can

say:

never

you

can

say

lower

priority.

B

B

B

Startup

configuration

field,

so

this

is

how

you

can

you

can

you

can

check

the

documentation?

Actually,

this

link

might

be

no,

it's

it's

the

new

olympius,

so

yeah.

So

this

this

is

a

configuration

field

for

for

the

future,

wait

for

pots

ready

and

you

can

enable

and

disable,

and

you

can

set

up

a

timeout

for

how

long

you're

willing

to

wait

for

pods

to

be

ready.

So

what's

what's

what's

this

for

so

when

you

enable

this

feature,

you

will

wait

for

the

pots

of

a

job

to

be

ready.

B

If

you,

if

you're,

if

you

follow

the

the

progress

in

the

job

API,

you

might

know

that

there

is

a

field.

A

new

kind

of

New

Field

called

ready

in

the

job

status.

That

tells

you

if

the

pots

are

well

scheduled

and

already

running,

so

we

use

that

field

within

within

Q

to

you

know,

gate

the

jobs.

So

we

wait

for

a

job

to

schedule

to

start

up,

and

only

then

we

schedule

the

next

job,

and

this

offers

All

or

Nothing

guarantees.

B

When

either

you

have

a

very

fragmented,

a

cluster

that

could

potentially

get

fragmented

easily,

namely

fixed

size,

cluster

and

or

if

you

are

prone

to

stockouts,

which

are

possible

in

it's

possible

when

you're

like

requesting

way

too

many

gpus

in

the

cloud

or

some

other

uncommon

or

highly

demanded

piece

of

hardware

so

yeah.

This

is

a

kind

of

a

first

first

attempt

at

offering

All

or

Nothing,

but

keep

in

mind

that

this

is.

This

is

not

what

we

intend

to

have

long-term

long

term.

B

We

want

to

have

deeper

integration

with

cluster

autoscaler

so

that

cluster

Auto

scalar

can

give

a

feedback,

a

feedback.

We

can

have

a

feedback

loop

between

q

and

cluster

Auto,

scalar

or

the

scheduler,

or

both

to

inform

you

that

a

scalab

was

not

possible

or

scheduling,

All

or

Nothing

scheduling

was

not

possible,

so

Q

can

back

off

the

next

jobs.

C

C

E

C

B

B

Framework

for

building

load

tests,

so

it's

very

easy

to

to

set

up

now.

It's

super

easy

to

set

up

new

scenarios.

You

know

with

with

the

jobs

the

number

of

bots

you,

your

organization

might

have

per

job,

and

then

you

can

tweak

all

the

parameters

and

then

it's

very

easy

to

run

the

the

tests

themselves

and

another

thing

I

wanted

to

highlight

about

q.

A

why

the

memory

consumption

is

is

low,

is

because

Q

takes

decisions

at

the

job

level.

F

B

B

B

B

Right

so

Q

would

call

the

jobs

so

that

there

are

no

parts.

Actually

until

Q

says

you

can

you

have

we

have

quota

for

this

job

and

then

the

job

controller

is

able

to

create

the

pots

and

only

then

keep

scheduler

would

take

over

so

yeah

that,

in

in

a

sense,

Q

will

reduce

the

memory

consumption

of

the

entire

cluster.

When

you

have

way

too

many

jobs

yeah,

because

there

are

less

spots

overall

to

process.

G

Okay,

thank

you

so

remember

that,

like

all

these

jobs

are

actually

created

in

the

API

server,

so

there

is

a

dependency

on

how

scalable

the

API

server

is

as

well

like.

If

you

create

it's

not

like

we

queue

is,

is

like

you

know

the

front

end

that

receives

and

stores

these

objects.

They

are

all

on

the

API

server.

D

B

Yeah

one

thing

we

did

notice

is

that

we

we

sometimes

run

into

the

limitation

of

of

the

API

server

itself

that

once

you

have

too

many

parts

there,

each

the

each

of

the

cubelets

is

sending

startups

of

days.

So

it

the

entire

API

server

is

slow.

So

you

you

need

a

beefy

master

or

a

BP

API

server

to

handle

those

kind

of

loads.

So

an

IQ

is

highly

dependent

on

on

that

size.

On

the

the.

B

F

Do

we

want

to

for

the

mod

apps

like

those

into

your

job,

and

so

so

so

we

can

choose

this

just

as

a

scaffold,

and

so

you

don't

need

to

build

your

control

from

scratch

and

to

achieve

this

we

we

defined

a

new

interface

named

We

name,

the

generic

job

I

think

the

name

is

there

a

bit

inside

and

it

will

shapes

the

default

Behavior.

We

need

to

integrate

with

you

and

you

know

the

most.

They

know.

F

F

B

I

have

one

thought

about

this:

I

forgot.

Let's

just

continue

so

as

usual,

we

are

always

looking

for

contributors,

not

just

continuous

in

code

but

documentation

or

now

the

website

and

just

general

feedback.

Oh

yes,

so,

for

example,

I

I

left

in

the

in

the

notes

for

today's

meeting

the

links

to

some

of

the

PRS

and

whatnot,

for

example,

the

custom

job

Library,

the

custom

job

Library.

B

Ideally,

you

want

10

10

parts

but

you're,

okay

with

five,

so

those

kind

of

scenarios

and

integrating

with

people

such

so

that

we

can

measure

a

higher

granularity,

the

performance

of

Q

and

some

like

usage

user

experience,

improvements

such

as

a

capacity

or

plugging

and

perhaps

even

grafana

samples

to

build

dashboards.

We

already

have

metrics,

but

we

don't

have

ready

to

use

grafana

dashboards,

which

we,

it

would

be

nice

to

provide,

and

there

are

some

like

people

asking

about

Helm

and

other

deployment

mechanisms.

B

C

B

It's

it's.

It's

touching

every

piece

of

queue,

so

once

that's

finished,

we

just

need

to

update

the

documentation

and

we

we

have

a

tech

writer,

help

us

with

the

with

those

changes

so

to

to

really

bring

the

best

quality

to

the

to

the

presentation

and

I

would

expect

you

know,

maybe

around

four

weeks

to

the

next

release.