►

From YouTube: Kubernetes Data Protection Workgroup Meeting 20210324

Description

Kubernetes Data Protection Workgroup Meeting - 24 March 2021

Meeting Notes/Agenda: -

Find out more about the Data Protection WG here: https://github.com/kubernetes/community/tree/master/wg-data-protection

Moderator: Xiangqian Yu (Google)

A

A

A

The

first

thing

is

that

shin

and

I

have

been

working

on

this

whole

container

notified

cap

for

a

little

while

today,

I'm

going

to

give

you

they

give

the

group

a

more

kind

of

look

back,

plus

current

status

updates.

This

will

take.

Probably

30

minutes

of

the

session

depends

on

how

many

questions

we

have

and

the

second

agenda

is.

We

will

talk

about

a

little

bit

about

the

white

paper.

Was

that

without

updates,

then

we

will

be

opening

the

opening

the

session

to

the

audience.

A

A

A

So

you

probably

have

been

hearing

this

topic

for

a

little

while

just

to

give

those

who

are

not

very

familiar

with

this

topic

a

little

bit

background,

so

that

in

total,

two

attempts

so

far

to

define

a

mechanism

to

send

a

command

to

an

container

in

kubernetes

world.

The

first

attempt

the

campus

list

over

there.

It

was

crd-based

controller-

will

reconcile

on

the

crd

and

it

needs

to

be

a

privileged

part

because

it

needs

to

run

arbitrary,

cube,

exact

comment

against

arbitrary

part

and

container,

and

it

mainly

was

targeting

and

solving

the

application.

A

A

There

were

good

amount

of

pushback

from

signal

and

and

also

as

api

reviewers

talking

about

the

possibility

of

moving

this

into

a

different

direction.

The

other

thing

is

that

this

seems

to

be

a

good

there's.

Some

there's

there

are

other

use

cases

which

can

fit

into

this

model

and

they

want

to

extend

the

scope

a

little

bit

so

that

use

cases

like

sending

signals

to

a

set

of

containers

or

parts

in

the

kubernetes

cluster.

A

A

The

scope

of

pre-reached

controllers

that

offered

by

the

community

cooperate

already

have

all

the

necessary

access

to

run.

Those

commands

against

any

arbitrary

part

and

container

on

that

particular

node,

and

that

is

also

to

support

generic

more

generic

to

support

other

use

cases,

and

this

is

a

second

attempt,

but

we

received

a

good

amount

of

feedback

from

the

second

attempt.

A

A

A

The

second

thing

that

drove

a

lot

of

discussion

is

the

whole

imperative

versus

declarative

concept.

Kubernetes

apis

are

mostly,

if

not

all,

declarative

right.

This

is

this

whole

base

level

triggering

versus

age

of

triggering

right.

Everything

is

about

level

triggering

kubernetes

work

and

signal

execution

hooks.

I

tend

to

be

more

imperial.

Basically,

you

send

something

and

you

leave

it

there

that

you're

wrong.

A

A

Yeah

application

requires

enquires

for

sure,

and

when

you

send

a

signal,

for

example,

if

you

want

to

temporarily

adjust

the

lock

level

to

debug,

you

don't

want

to

do

this.

You

know

you

don't

want

to

leave

it

there

forever,

because

that

probably

will

you

know,

write

too

much

block

into

your

system

and

make

your

disk

full

very

soon.

A

So

how

to

deal

with

the

newly

created

or

newly

deleted

part

in

a

kubernetes

world,

it

remains

a

problem

to

be

solved.

Basically,

pods

can

go

and

get

the

again

go

and

come

at

any

time

in

this

in

the

kubernetes

environment,

even

nodes,

so

how

we

process

that

is

another

major

discussion,

topic

question

so

far.

A

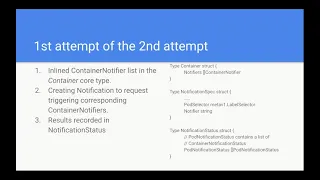

No,

I

then

I

will

move

on

so

so

we

have

two

attempts

in

the

second

attempt

that

this

is

the

first

attempt

in

the

second

attempt

the

call

thing,

so

the

idea

is:

have

a

inlined

container

loaded,

notifier,

a

list

of

actually

container

notifiers

in

the

container

core

type,

and

this

is

not.

This

is

not

an

api

object,

it's

just

a

united

type,

so

the

structure

of

the

notifier

is

pretty

straightforward.

A

A

You

can

take,

you

can

see

that

the

notification

status-

the

first

thing

it

has-

it

is

a

list

of

pod

notification

status

and

each

part

notification

status

contains

potentially

multiple

container

notification

status,

because

each

part

can

have

multiple

containers

and

all

these

containers

can

have

that

notifier

defined

this

making

sense.

So

far.

A

A

A

B

B

B

Wondering

like

if,

if

we

just

like

cube,

cuddle

exec

lets,

you

run

commands

and

the

reason

we

don't

like

it

is

because

it's

just

you

know,

if

you

don't,

if

you

don't

put

any

limit

on

the

commands,

you

can

run,

people

can

use

it

to

perform

attacks.

So

this

mechanism

lets

the

guy

who

defines

the

pod

sort

of

enumerate

a

set

of

commands

that

can

be

run

from

you

know

from

other

controllers.

D

E

B

D

But

you

don't

really:

where

do

you

save

your

you're?

Not

really

saving

those

in

your

ikea

objects

right

the

results

when

you

run

that,

where

are

you

saving

this,

so

you

need

to

have

your

own

controller,

take

care

of

those

right

here.

We

are

talking

about

we're,

seeing

that

in

an

api

object

status.

So

that's

the

concern.

B

D

D

D

D

D

D

D

G

G

G

D

D

So

so,

if

you're,

using

cube

and

also

what

I'm

saying

is

we're

talking

about

scaling

issue

right

when

you're

running

that

cube

color

command

you're

running

one

one

command,

so

you

are

logged

into

one

container

at

a

time

right

here,

we're

talking

about

we're

doing

a

program.

Then,

when

we

select

a

lot

of

we

use

the

part,

the

the

selector,

then

it

could

be

thousands

part

and

we're

running

that

at

one

time.

That's

a

concern.

Well,.

D

We

need

to

yeah,

but

we

need

to

get

the

results

right,

but

you

can

yeah,

so

you

can

achieve

all

of

this

by

using

cube

cuddle.

Yes,

but

then

you

still

have

to

figure

out

like

how

to

store

that

yourself

right

so

right

today,

I

think,

like

the

backup

vendors,

some

some

of

them

they

well,

I

think

most

of

them

should

be

already

doing

this,

so

they

have

to

handle

that

themselves.

Maybe

actually

we

should

probably

ask

someone

to

answer

this

one

other

than

we

talk

about

it.

D

B

D

D

I'm

just

trying

to

understand

I'm

just

saying

what

I'm

trying

to

say

is

that

the

the

problem

is

the

potent

potentially

you

could

have

thousands

of

parts.

So

I

think

the

concern

is

the

scalability.

I

think

that

probably

actually

exists

today.

If,

if

you

are

doing

a

if

your

class

and

then

you

actually

have

to

require

thousands

of

parties,

you

also

have

that

problem.

D

A

D

D

H

H

D

H

H

D

A

D

A

Are

making

this

engineering

only

solving

the

application

is

not

a

problem.

I

don't

think

scalability

is

really

a

concern

because,

as

tom

was

suggesting

normally

you

won't

have

over,

let's

say

a

dozen

of

parts

for

a

specific

application

that

we

need

to

requires.

But

if

we're

talking

about

signals,

then

this

is

a

good

chance.

We

will

bridge

the

thousands

we're

getting

to

the

southern's

kind

of

domain.

H

For

the

signal

case,

you

won't

have

that

much

output

being

sent

back,

though

right

I

mean,

because

you're

essentially

just

running

a

single,

a

single

command

that

won't

have

too

much

output.

Unless

what

output

are

you

thinking,

you'll

have

to

collect

for

from

sending

a

signal

to

a

thousand

pods

sure.

A

A

And

I

think

this

is

a

valid

concern

in

many

cases,

so

we

let's

see-

let's

see

we

have

some

kind

of

you

know

solution.

Let's

see

whether

we

can

solve

this

problem,

but

I

want

to

discuss

with

the

plane

offline

a

little

bit

and

to

get

where

his

idea

is

because

I'm

interested

to

learn

about

that

ben

I'll

ping.

You

later

on.

B

A

B

B

A

I

I

Back

to

the

previous

discussion,

I

think

basically,

the

problem

here

is

this:

has

the

potential

you

know

for

people

to

use

it?

However?

They

want

so

outside

of

you

know,

snapshot

use

case

and

quieting

people

can

use

this

like

ansible,

you

know

just

to

run

commands

at

a

bunch

of

parts.

You

know

which

is

can

be

more

frequent

than

running

application,

consistent

snapshots

or

you

know,

as

far

as

scalability,

I

think

it's

same

as

you

know

the

kubernetes

model,

where

people

just

write

custom

controllers

in

their

pods,

you

know

and

they're

watching

and

updating

crs.

I

So

I

think

what

this

enables

is

that

people

don't

have

to

write

custom

controllers.

They

can

just

abuse.

This

mechanism,

to

you

know,

run

commands

across

a

bunch

of

pause,

look

kind

of

like

answerable.

You

know

you

just

unleash

the

command

on

a

bunch

of

nodes.

I

think

this.

This

is

this.

This

is

where

scalability

can

get

affected.

I

A

A

There

will

also

be

the

ones

who

label

the

parts

the

way

they

want

it,

so

that

the

correct

parts

are

selected

and

there

will

be

also

the

ones

defining

the

execution

hook

command.

So

if

this

is

a

purely

kind

of

you

know,

application

consistency,

snapshot

use

case,

then

I

would

be

less

concerned

about

the

scalability,

but

extend

this

to

a

more

generic

way

opens

the

door

at

least.

A

This

proposal

brings

that

concern

because

kubernetes

is

already

kind

of

busy,

so

it

is

possible

that

many

parts

are

scheduled

on

a

note

so

having

coupled

to

run

all

these

notifications

in

once,

you

may

may

I'm

saying

not

necessarily

for

sure

but

may

bring

them.

You

know

too

much

burden

to

the

cuprate.

A

A

This

thing

is

for

user

friendly

cases,

especially

for

signal

you

don't

want

to

manually,

send

every

single

signal

to

every

single

part

using

part

notification

define

that

bob,

the

dice

lana

can

actually

scale

and

also

high

level

controllers

may

utilize

this

one.

But

on

top

of

that

we

add

a

couple

of

things,

one

or

two

things.

Majorly.

One

thing

is

this

whole

policy

thing

it

defines

one.

Should

this

part

this

selector

should

cut

the

scope

so

right

now

there

were

two

possibilities:

one,

it's

all

parts.

A

It

means

that

at

the

creation

time

of

your

notification,

this

is

the

time

that

you

market

in

the

api

server

for

your

notification

object,

use

that

timestamp

to

find

any

existing

parts

that

is

up

and

running

which

have

which

have

a

smaller

timestamp

than

the

notification

creation.

Time

way,

any

newly

created

parts

after

the

creation

of

the

notification

will

not

have

a

pod

notification

created.

A

The

another

field

we

add

is

called

parallelism.

If

you're

familiar

with

the

drops

or

batch

jobs

api,

you

will

find

that

the

parallelism

similar

mechanism

over

there.

This

is

majorly

targeted

at

avoiding

users

from

creation,

creating

a

bunch

of

jobs.

Let's

say

a

thousand

jobs

to

be

kicked

off

at

the

same

time

which

crashed

the

system.

A

A

If

e,

either

the

part

notification

will

fail

or

it

will

succeed

right,

there's

the

the

result

should

be

deterministic,

but

the

problem

is

in

the

in

the

paired

execution

case

where

the

first

part,

a

notification

actually

succeeded

for

choirs,

they

say

using

fs,

freeze

and

the

second

before

the

second

one

is

send

the

part

get

deleted.

Then

your

file

system

may

be

locked

in

this

case.

I

have

to

be

honest

with

you.

We

don't

have

a

solution

for

that.

A

No,

it

is

possible

at

the

quietest

time.

The

part

is

still

up

and

running

right

and

then,

if

you

imagine

a

lot

of

level

controllers

say,

oh

now

requires

my

application.

No,

no!

I

get

exited

execute

it.

You

go

ahead

and

do

your

volume

snapshot

or

volume

backup

or

your

application,

backup

whatever

it

is.

It

takes

some

time

right

before

you

execute

inquires

against

the

part

the

could

delete

it.

D

D

F

A

In

that

case,

I

can

imagine

the

the

the

way

the

a

viable

approach,

upper

level

controller

creates

and

notification

sees

a

notification

object

and

it

creates

pod

notifications

right

and

a

part

notification

to

parts

are

one-to-one

mapping

they're

one-to-one

mapping.

So

that's

can.

Let's

say

this

is

a

choice

and

when

you

do

an

inquiries,

you

should

be

expecting

exactly

the

same

universe

of

your

quiet

comment.

A

A

Did

I

answer

your

question?

Surely

I

think

so?

Yeah:

okay,

the

last

piece

is

that

notification

status

will

no

longer

hold

a

jargon

list

of

pod

notifications

and

container

notifications

results,

but

rather

it

offers

high

level

aggregates

the

completion.

Time

and

start

time

are

both

kind

of

optional.

In

this

case,

the

completion

time

will

only

be

marked

if

the

policy

is

pre-existing

parts.

Only

imagine

the

word.

If

it's

all

parts,

you

will

never

have

a

completion

time.

Basically.

I

A

Controller

logic

will

not

be

encrypted

right.

So

in

this

case,

cooper

will

be

not

the

ones,

selecting

the

path

to

be

executed.

That's

what

the

other

one

and

then

you

know

it.

At

least

you

know

somehow

shift

the

concern

from

sick

nodes

to

more

or

less

upper

level

controller.

Where

this

is,

I

don't

know

yet.

That's

one.

The

second

one

is

the

aggregated

results.

Is

it's

recorded

instead

of

detailed

ones

in

the

upper

level?

A

A

I

A

I

I

mean,

I

guess,

the

the

way

we

currently

control,

that

is,

that

we

have

coders

per

name

space,

so

we

can,

for

example,

prevent

the

user

from

creating

too

many

parts

or

too

many

pvcs

or

you

know,

launch

a

denial

of

service

attack.

But

here,

if

users

can

set

their

parallelism

field

to

arbitrary

large

values,

then

nothing

can.

I

A

A

A

I

A

You

are

absolutely

right,

so

we

imagine

this

to

have

multiple

cases

right,

so

we

imagine

the

application

controller

most

likely.

It

is

reasonable

to

for

them

to

use

the

part

notification

directly

so

that

they

can

control

the

universe

for

choirs.

You

have

this

universe

for

enquires,

you

use,

you

have

another

universe.

A

A

B

A

B

A

A

A

A

D

D

D

D

Right

so

yeah,

so

this

is

a

container

right,

so

we're

adding

this

kind

of

notifier

and

then

in

each

continent,

notifier,

so

each

kind

of

notifier.

We

have

this.

We

have

this

name,

so

this

name

right.

So

this

one

so

the

basically

the

container

these

kind

of

content

notify

in

the

container

must

have

a

unique

name.

And

then

you

move

down

move

down

a

little

bit.

I

think

it's.

D

B

D

A

C

B

Right,

there's

both

there's

both

use

cases.

You

may

want

the

ability

to

just

broadcast

a

message

to

all

of

your

containers

and

all

of

your

pods,

in

which

case

the

convenient

thing

to

do,

is

define

your

notification

with

the

same

name

on

all

of

your

containers.

But

then

you

may

have

a

different

use

case

where

you

want

to

just

do

a

one-off

and

the

the

fact

that

you

can't

target

one

container

forces

you

to

like

make

a

second

notification

that

runs

the

same

command

with

a

different

name

or

something

lame

like

that.

B

F

Yeah,

I

also

have

another

question.

I

think,

while

we're

talking

about

this

it,

if

I

have,

I

can

have

three

containers

and

each

container

has

a

container

and

a

photo

with

the

same

name

would

create

a

pod,

a

notification.

Spec

that

says

go

run

this

notifier

in

that

pod

and

I

would

just

get

a

success

or

failure.

A

A

No

problem:

it's

here

see

the

status

is

a

container

notification

status,

but

ben's

point.

I

I

think

it's

a

very,

very

important

idea.

The

typical

case

I

can

think

of

is

you

have

multiple

parts

in

and

sorry

multiple

containers

in

the

pot.

Maybe

some

of

them

is

the

main

container

you

don't

want

to

mess

up

with,

but

you

want

to

adjust

local

levels

with

other

containers.