From YouTube: Kubernetes WG IoT Edge 20220223

Description

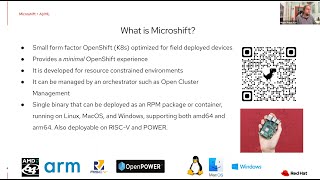

AI on the Edge with MicroShift

A

Hitting record. Welcome to the February 23rd meeting of the Kubernetes IoT Edge Working Group. For today's meeting, we have on the agenda a presentation on Red Hat's recently announced MicroShift project, which is a solution for running workloads at the edge. Specifically, the speaker we have today, Miguel, is going to address using it for AI use cases. With that said, I'll turn it over to Miguel.

B

Thank you, Steve, and thank you for having me. So yes, it was interesting. My plan was to play a video of the presentation that we gave for DevConf, because I thought it was nice: we did a technical demo of running a workload on the edge. Let's see if that works. If it doesn't, I will fall back to just slides. Oh, I don't hear you now, sorry.

A

I know you intend to go into AI use cases, but speaking for myself, I'm not all that familiar with MicroShift other than reading a couple of blogs and poking around a bit on the GitHub source code site. If you could start with an intro to what MicroShift is and why the project was done, that kind of thing, before we get into AI, I think it'd be useful.

A

Hey, while we're waiting: Dion, I know that you were working on that report for the steering committee and shared a document. I just wanted to bring up that all the users of this group are welcome to add whatever comments they'd like to have go to steering. If you've got any feedback on this group, good or bad, we're open to your comments and encourage them. As long as they don't violate the code of conduct, I think we'll forward them into the report to steering completely unedited.

B

Yeah, that part, and then we can get into MicroShift. MicroShift is a small form factor of OpenShift. We have reduced the footprint while still retaining some of the features native to OpenShift, so people running workloads on OpenShift can still use some of the additional security features and other APIs embedded in OpenShift.

B

So, as you know, OpenShift has a lot of operators to make everything work, and in MicroShift there are no operators. It's a simple setup that you can customize. We use a few images from OpenShift, from the OKD distribution. For the Intel platform, those are the same images, so we don't need to recompile anything. For other platforms like ARM, RISC-V, PowerPC, and so on, we recompile those images, for example for ARM64.

B

Yes, so we are working on bringing all the pieces together, filling the gaps, and making sure that those can be used with MicroShift. Some things are still not closed and not clear, like the final steps on this side. But the idea is that, if you know Secure Device Onboard, you can have the manufacturer pre-initialize the device for you and have a voucher.

B

Maybe you could even put the container images of the final application, a specific version of course, in the device. If, for example, the device is not going to have network access, you could still do that after the onboarding. Instead of depending on pulling images, you could depend on simply updating the OSTree operating system image, which is supposed to be atomic.

B

The access point is running, and the cameras are connected to this access point. At this point they are trying to find our MicroShift application. If we look at the Wi-Fi adapter, we will find them asking via mDNS for our microcam registry, but nobody is responding because there is no application for that yet. So the next thing we are going to do is start our camera service.

B

Okay, sorry, I was trying to stop it there; I'm not used to Zoom. I don't want to take more time on that. In that presentation we didn't go through the whole life cycle of managing workloads on the edge, which is probably important to this group. I'm not familiar with the topics that you normally discuss, but I suspect that those are of interest.

B

And they register, and then the server will connect back to the cameras with the token that the camera has. You could do it the other way around; this was simpler to program for the demo. But technically you could have the camera connect and then stream video into the server, or have the server find the cameras and then connect to them.
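
The registration flow described here, where a camera registers with a token and the server then connects back presenting that token, could be sketched roughly as follows. This is a minimal in-memory illustration and not the demo's actual code: the `Camera` and `Registry` classes, the token format, and the addresses are all hypothetical, and the mDNS discovery step is left out.

```python
import hmac
import secrets
from dataclasses import dataclass, field


@dataclass
class Camera:
    """Hypothetical camera that only accepts connections presenting its token."""
    address: str
    token: str = field(default_factory=lambda: secrets.token_hex(16))

    def accept_connection(self, presented_token: str) -> bool:
        # Constant-time comparison avoids leaking token contents via timing.
        return hmac.compare_digest(self.token, presented_token)


class Registry:
    """Hypothetical server-side registry of cameras that announced themselves."""

    def __init__(self) -> None:
        self._tokens = {}  # maps camera address -> registered token

    def register(self, address: str, token: str) -> None:
        # Step 1: the camera registers its address and token with the server.
        self._tokens[address] = token

    def connect_back(self, camera: Camera) -> bool:
        # Step 2: the server connects back, presenting the token it was given.
        token = self._tokens.get(camera.address)
        if token is None:
            return False  # this camera never registered
        return camera.accept_connection(token)


registry = Registry()
cam = Camera(address="192.0.2.10")  # documentation-range IP, illustrative only
registry.register(cam.address, cam.token)
connected = registry.connect_back(cam)
unknown = registry.connect_back(Camera(address="192.0.2.99"))
```

The reverse direction mentioned in the talk, with the camera connecting and streaming into the server, would swap which side initiates the connection; the token check itself would stay the same.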

A

The other questions I had were about MicroShift itself, for example when it gets installed on a device that gets shipped out to an edge site. I saw in the GitHub repository that it says the binary is 150 megabytes. Is that pretty much all that you'd have to send over the wire to provision a device, or is that on top of an OS that you'd have to get down first?

B

Any images that MicroShift would need for that specific version. Then the final owner of the device, who will run an application inside, could provide the deployment configuration. That's picked up when the system boots, and it's going to make sure that the deployment is running and ready. But they could also include their own images in the operating system.

B

So they could rebuild the image of the whole thing and have it. Those are the things that we want to analyze. For example, in manufacturing you could have an operating system image that has the basic operating system with MicroShift almost ready, or MicroShift plus the basic MicroShift images, but no user application.

B

And then, when you onboard that at the final destination, the additional layer of the operating system with the final images and the configuration is added, and that's what needs to be transmitted over the wire to the device. So yeah, we are looking at all the possibilities. There are many very important factors on the edge: for example, maybe you don't have good network access and you cannot download a lot of data.

A

So is it fair to say MicroShift attempted to take on the mission of just being a compliant Kubernetes with a small binary, and it really is just that, so that if you wanted other features like, I don't know, device discovery or remote federated management of multiple clusters, you would add them on just like with any other Kubernetes? That's sort of what I'm picking up here, but maybe I'm missing something.

A

That was my question, and I only ask because there are a few other edge-optimized Kubernetes distributions that attempted to bundle in more functionality. That could be good or bad. You know, there's an argument against being a monolith, in favor of letting people plug and play components just the way they'd want to. In the end, as long as you can put in what you need and get the functions you want, you're fine. But yeah, that was my question.

D

Yeah, we also tried to provide some extra APIs from OpenShift, so developers that use OpenShift in the cloud and so on can have their applications be somewhat portable to the edge: develop once and run everywhere. But yeah, MicroShift is mainly an edge-optimized Kubernetes distribution. That's right.

B

Yes, probably in most cases it will be fine. But, for example, if your application is doing some sort of artificial intelligence workload, maybe the GPU on a single board is not enough and you need to add more, or whatever your application needs to do spreads over multiple places.

A

So how opinionated is it with regard to the operating system it runs on? I obviously understand that, being done by Red Hat, you're going to support Red Hat flavors of OSes first. But say I had something in my home lab to play around with: I've got Raspberry Pis, which in my experience seem to be better supported by the Debian variants of OSes. Is there enough there that I could find a way to run it in the documentation, or is it at this stage pretty much expecting a Red Hat OS?

D

Yeah, our first vision was to have MicroShift on these edge-optimized operating systems like Fedora IoT or RHEL for Edge. They use OSTree, rpm-ostree, right, so most of the file system is read-only and you cannot just edit some of the files that are embedded into this OSTree. It's like an immutable tree with packages and so on. So our initial idea was to have CRI-O embedded in the OSTree, and MicroShift as a container running on Docker.

D

The idea with this is that you can upgrade MicroShift without interruption for the workloads. You have these extra services running as containers in CRI-O, and you have your workloads, your applications, and so on, so you could easily replace MicroShift without any service interruption for the workloads. The reason for not embedding CRI-O in MicroShift is that if you upgraded MicroShift with CRI-O in it, there would be some service interruption. So that was the main, initial idea.

A

I don't have one of the Jetson devices that I saw you use in your demo, but I do have some other things, so I'd probably want to take it onto a Raspberry Pi or something like that. Now, where can I go? Is there a community formed where people who are using this get together, chat, and ask questions?

D

There is a Slack organization. It's not within the Kubernetes Slack; it's separate. We have our own free-tier MicroShift Slack, and there is, for example, a specific room for ARM enablement and how to run MicroShift on Raspberry Pis and all these kinds of devices. There are a couple of blog posts from Red Hatters who have tried, well, who have managed to deploy MicroShift on Raspberry Pis.

D

Regarding our demo, I bet you could run the demo on MicroShift on a Raspberry Pi, but you would probably need to do some tweaking because, of course, there are no GPUs in the Raspberry Pis. And, correct me, Miguel, if I'm wrong, there's a specific parameter to change the machine learning model from GPU to CPU only, right?
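
A switch like the one mentioned here, forcing the model onto the CPU when no GPU is present, might look like the sketch below. The environment variable name `INFERENCE_DEVICE` and the `select_device` helper are hypothetical; the demo's actual parameter is not named in this discussion.

```python
import os


def select_device(requested: str, gpu_available: bool) -> str:
    """Return "gpu" only when it is both requested and available, else "cpu"."""
    requested = requested.strip().lower()
    if requested not in ("cpu", "gpu"):
        raise ValueError(f"unknown device: {requested!r}")
    if requested == "gpu" and gpu_available:
        return "gpu"
    # Fall back to CPU when the GPU is missing or CPU was explicitly requested.
    return "cpu"


# Hypothetical setting: default to GPU, overridable on boards like a Raspberry Pi.
requested = os.environ.get("INFERENCE_DEVICE", "gpu")
device = select_device(requested, gpu_available=False)
```

Falling back silently keeps the same deployment usable on both kinds of boards; a stricter variant could raise an error instead when a GPU is requested but missing.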

B

It's actually something like 700 megs, but still, yeah. We didn't explore optimization too far. At this point we just trimmed down all the operators and everything that we thought unnecessary for the edge, like image building or anything else.

B

You have something that you cannot extract. Technically, if the TPM is not inside the processor and is external, I suspect that they could just rip out the TPM and use it to authenticate, but it makes it harder. I think that maybe at some point we could have a standard method to do authentication in Kubernetes with TPMs.

C

So it seems like it was already considered that that should be in the workflow, and I think the implementation is the tricky part, right? The devil's in the details, and if you solve it, then you're solving it for the whole industry. So I would say the discussion right now is a really, really big one. And, as usual, Steve asked the pointed questions that caused really good group discussion.

C

On the Eclipse side, at the Edge Native Working Group, we happen to be putting together an article right now opening the discussion on this topic: how to use X.509 certificates as a potential stack that would give you the ability to stack your identities. But it all begins with a hardware root of trust, and then the question is, you know:

C

How do you leverage that properly? And maybe you didn't build the device, so then you also need to say: if we acquired it, but then we put our software stack on it, how do we certify ours? Anyway, I'm basically rambling, but I wanted to say that this discussion is about a 16-hour discussion in itself with no final answers. I thought it was cool that you had the device onboarding in your flowchart.

B

Sorry, the FIDO onboarding protocol: the manufacturing server, the rendezvous server, and the onboarding server, along with the application that you have to run on the device. I was getting those pieces and getting familiar with them. FIDO defines the protocol for how you do that, and you can move the device and authenticate the status of the device during the whole process.

A

Yeah, this group had a presentation from Intel on this. Secure Device Onboard, I think, was to a fair extent initiated by Intel, and then they moved it into the foundation. That presentation happened about a year ago in this group, and it's up on YouTube. I thought it was a good presentation that covered the subject well as of that time. Now, like all things, this is a rapidly changing area.

A

You know, either Docker images or, as some of these talks have even covered, WebAssembly modules, potentially packaged in an OCI form factor. At that point your image registry also becomes another thing you need to care about. There are a lot of these scenarios where people invest in a container image registry that does security scanning and things.

C

This is great, I completely agree, and I'm going to add another log onto the fire. How about those cameras? Whatever you're connecting to probably needs to be able to be certified as well, as a disconnected-but-connected information source, right? It's not built into the board, and therefore, even if it's over I²C or SPI, it could be something that is a plug-in. So then how do we certify it? Or USB: it's USB-attached, right?

C

The hairball mess of certification and attestation is a wonderful playground and a great place to start a company right now. But the thing is, it would be very difficult to solve it in a universal way. So my guess is that open discussion is going to be going on for years. And you're right, Steve.

C

It was a year ago that we saw the Secure Device Onboard presentation, and I think it was good for everything it covered. Then there are probably about a dozen more things that need to be added, such as the container registry, and I would bring sensors or devices to the list.

A

Yeah, bringing devices. You're saying it's a good idea for a startup or something, but it would make a great movie plot too, for those heist films where they go in and patch out the security cameras in ways that are probably implausible. But then again, when you look at this, what would stop that, really? You think you're monitoring all your security cameras, and somebody hooks in a pipe of recorded video that isn't really what's going on. That's right, because you can...

C

Mimic a camera feed, right, because it's the same network traffic. That's the exciting version, the James Bond hack. The non-exciting version is that you go to something like a weigh scale for vehicles coming through, and the equipment certificate is out of date because it has not been calibrated recently enough, or the same for things like fuel pumps and so on. That's not movie-worthy or exciting.
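
The unexciting case, a calibration certificate that has simply gone stale, comes down to a date comparison. A minimal sketch, assuming a hypothetical `calibration_valid` helper and an assumed 365-day calibration interval (real intervals depend on the equipment and the regulator):

```python
from datetime import date, timedelta


def calibration_valid(last_calibrated: date, today: date,
                      max_age_days: int = 365) -> bool:
    """Return True if the last calibration is not in the future and not too old."""
    age = today - last_calibrated
    return timedelta(0) <= age <= timedelta(days=max_age_days)


today = date(2022, 2, 23)
recent = calibration_valid(date(2021, 9, 1), today)   # about six months old
stale = calibration_valid(date(2020, 1, 15), today)   # more than two years old
```

A reading from a device that fails this check could be flagged rather than silently trusted.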

A

An interesting thing just popped into my head for cameras. Given that some of these are legacy things with long device lifetimes, meaning that people aren't likely to change them out for things with TPM chips, if those were even available, there might be a way to close the loop by having a device with a TPM engage in an activity that would be picked up by the camera, so that at least you'd know it was connected end to end. Let's say I had,

A

I don't know, a laser projector that could project a pattern on the ground at a random time, and you verify that it comes in through the video stream. At least you'd know that at that point in time the video feed was authentic and the camera was where it should be. You could have a laser projector, or maybe a drone that flies in the area, or who knows what. I'm just daydreaming here.
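
Closing the loop with a randomly chosen pattern shown at a random time is essentially a challenge-response check. A rough sketch, assuming a hypothetical trusted projector that displays a numbered pattern and a hypothetical detector that reports which pattern numbers were seen in the video and when:

```python
import secrets
from dataclasses import dataclass


@dataclass(frozen=True)
class Challenge:
    pattern_id: int    # which pattern the trusted projector displays
    issued_at: float   # when it was displayed, in seconds


def issue_challenge(now: float, num_patterns: int = 1024) -> Challenge:
    # A CSPRNG keeps someone replaying old video from predicting the pattern.
    return Challenge(pattern_id=secrets.randbelow(num_patterns), issued_at=now)


def verify_feed(challenge: Challenge, observations, max_delay: float = 2.0) -> bool:
    """Check that the expected pattern shows up shortly after it was issued.

    `observations` is a list of (timestamp, pattern_id) pairs produced by a
    hypothetical detector running on the video stream.
    """
    for seen_at, pattern_id in observations:
        in_window = 0.0 <= seen_at - challenge.issued_at <= max_delay
        if in_window and pattern_id == challenge.pattern_id:
            return True
    return False


challenge = issue_challenge(now=100.0)
live_feed = [(100.5, challenge.pattern_id)]      # pattern appears promptly
replayed_feed = [(40.0, challenge.pattern_id)]   # right pattern, wrong time
live_ok = verify_feed(challenge, live_feed)
replay_ok = verify_feed(challenge, replayed_feed)
```

Both the pattern and its timing are checked, so a recording that happens to contain the pattern at some other moment is rejected.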

C

This is really cool. What you're actually talking about, Steve, you could generalize as two-factor authentication for things that exist in the physical world, sensors, etc. It's kind of like: what's the source of entropy that we would expect to hear from? Same as the key fob I used to have in 2005 to get into my banking system or whatever.

B

So yeah, I will paste the PR if I know how to do it. There you have a list of the included APIs and the ones not included. Mostly, we include the binding restrictions; the builds, images, and proxies APIs; image and registry configurations; and then cluster resource quotas and security context constraints.

A

Okay, well, we're one minute past the top of the hour, but thanks. That was a great presentation; I appreciate the effort. Again, if you can get me the link to the presentation that was in the recording afterward, and whatever else you want to give me, I'll cut and paste it into the meeting notes so that people who catch this on the recording can find it later.