From YouTube: Kubernetes WG IoT Edge 20190424

Description

April 24 2019 meeting of the Kubernetes IoT Edge Working group - discussion of security concerns in industrial IoT context

C

For my thesis, where I'll be focusing on developing edge infrastructure for some specific IoT use cases, I got to know about KubeEdge a while ago during my literature review, and this was, I think, the first nordic project specifically for IoT. So I'm interested to learn more about it and possibly contribute at some stage later on. Thank you. Great.

A

So, as I said in the email, I don't have any fixed agenda, since nobody proposed any specific material for this particular call. I know we have a couple of things people have been working on lately, so I was thinking maybe we can go through them and see where we are and what is interesting, and maybe get a little bit organized about where we want to go as the next item. So, things on my mind: I know Cindy...

A

D

D

D

D

So it's working end-to-end, the code is going to be merged soon, and I can share the two links as well. Regarding the upcoming KubeCons, there are maintainer KubeEdge talks, including an introduction and a deep dive. We are going to do some demos, and there are demo booths as well. Huawei is also hosting an in-person meetup, so you're all welcome to attend. Basically, we want to welcome the whole community to talk about edge computing: what are the scenarios?

D

E

So we had planned during the last meeting to start integrating some of the comments and additions provided by the community, and I have not yet had the time to go in and start integrating those contributions. But I should have the time this week and next, and our plan was to reach a point, in very short order actually, where we felt that the paper had received enough contributions and had enough time out there that we were ready to say:

E

"Okay, it's good." We discussed that possibly being not this meeting but the next. Any thoughts around sticking with that or extending it? I'm good either way. I definitely think we can finish the comment integration over the next two weeks, given the amount of activity on it. But if anyone would like it to be out there longer and to do a bit more, that's okay too.

F

No, I mean, I myself haven't taken a look. I'm not looking to reopen anything; I'd love to take a look. And on this idea of security, I've had a couple of recent customer conversations. Since Steven's on the call, I don't know how well he can speak to the Harbor piece, but where did you land on trusting containers to run on the edge? Is that topic addressed in the paper?

E

Right, the exact scenario is being described as a net security challenge, or you can add to it if you would like. The way that I structured the paper was, instead of being a one-year-effort-ish thing where we had to get everything just right and it had its particular vocabulary and so on.

E

It's really more of a way of exposing what it is that we need to be looking for regarding security at the edge, and therefore a lot easier to finish and get out to the world. In that regard, I think it's come together quite easily, and it's got a good amount of material in it. But again, it's not a 400-page paper.

E

G

Yeah, I'm present. I heard you mention my name with regard to Harbor, so, where that would fit in: I think that, whether it's Harbor or some other private container image registry, when you're at the edge, I would contend that if you have the resources to host it, you probably want something to host your container images locally so that you can survive a network outage.

G

But then, on top of that, once you get that going, Harbor, and I think a few other potential solutions, would engage in static analysis of the content in a container, to analyze it against CVE databases. So that would be useful. On top of that, if you've got the resources, there might be additional solutions that would do runtime analysis of container behavior.

G

Now, those things can be kind of costly, so whether everyone could afford them at an edge, I'm not so sure. They often engage in the network underlay as well, because they're monitoring the behavior of these things. An example might be ascertaining whether something that nominally is an inbound-only service suddenly appears to be attempting outbound connections.
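Editor's sketch of the runtime check described above: flag any outbound connection made by a service that is declared inbound-only. The `Connection` record, the `INBOUND_ONLY` policy set, and the service names are hypothetical; a real tool would obtain the observations from the network underlay rather than a hard-coded list.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Connection:
    service: str    # workload that owns the socket
    direction: str  # "inbound" or "outbound"
    remote: str     # remote address, kept for the report only


# Hypothetical policy: services expected to accept connections but never initiate them.
INBOUND_ONLY = {"sensor-api"}


def find_violations(observed, inbound_only=INBOUND_ONLY):
    """Return observed outbound connections made by inbound-only services."""
    return [c for c in observed
            if c.service in inbound_only and c.direction == "outbound"]


observed = [
    Connection("sensor-api", "inbound", "10.0.0.7:51522"),
    Connection("sensor-api", "outbound", "203.0.113.9:443"),
    Connection("uploader", "outbound", "198.51.100.4:443"),
]
violations = find_violations(observed)
```

Here only the second record is flagged; the `uploader` service is allowed to dial out because it is not in the inbound-only set.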

F

So, I wasn't planning on this, but it's interesting because, like I said, I've had a couple of conversations, and I had to put together some slides for an oil and gas customer who had custom devices with TPMs on board and was looking to figure out how they would use the TPM to verify containers. That led me down a deep dive into what we're doing in the binary authorization space and where that intersects with the open source side.

G

If people of the images is important, and then the other factor that I think you generally want is audit logs, because a lot of these vulnerabilities get discovered and it turns out they've been in there for months or years. There's great value, potentially, in at least being able to get measurements on the worst-case potential exposure: where were they actually used, and in what time frame?
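Editor's sketch of the audit-log question raised above ("where were they actually used, and in what time frame?"). The record layout, image references, and node names are hypothetical; the point is only that a log of start and stop times per image lets you bound the worst-case exposure after a vulnerability is found.

```python
from datetime import datetime

# Hypothetical audit records: (image reference, node, started, stopped or None if still running)
AUDIT_LOG = [
    ("registry.example/app:1.0", "edge-01", datetime(2019, 1, 5), datetime(2019, 2, 1)),
    ("registry.example/app:1.1", "edge-01", datetime(2019, 2, 1), None),
    ("registry.example/app:1.0", "edge-02", datetime(2019, 1, 20), datetime(2019, 3, 10)),
]


def exposure(log, image, now):
    """Summarize where an image ran and the widest window in which it was in use."""
    runs = [(node, start, stop or now)
            for ref, node, start, stop in log if ref == image]
    if not runs:
        return None
    return {
        "nodes": sorted({node for node, _, _ in runs}),
        "first_seen": min(start for _, start, _ in runs),
        "last_seen": max(stop for _, _, stop in runs),
    }


report = exposure(AUDIT_LOG, "registry.example/app:1.0", now=datetime(2019, 4, 24))
```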

F

E

E

You know, some other papers have historically stayed out there for a while. If we're all good with it, I think the deadline of two weeks from now, having all comments integrated and having it a little bit more polished, is still fine by me. So I'm saying I'm happy to do that unless anyone's opposed. Ship it? All right.

F

D

E

F

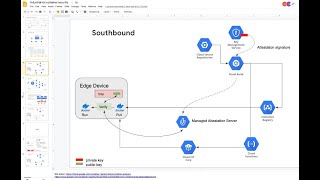

Is this presenting well enough for folks? Yeah, I can see it. Okay, I'm going to just keep it in this mode rather than go full screen, and I don't want to go through all of these. This is the main one I wanted to talk to, which is that they had this challenge: they had invested in putting hardware-based security on the device and were looking at that.

F

You know, sort of "now what?" from the rest of the DevOps workflow. All the blue hexagons are, to some degree, a managed Google service, and what I also want to show is what those functional components look like in open source, because they operate in Saudi or China or places where we can't run these services. And in general, I'm always in favor of saying let's avoid lock-in but try to offer what we can as far as managed stuff, just for its value.

F

F

Is that in your build process, you can have multiple steps in the CI/CD pipeline that can each use a separate PGP key to attest to their part being done on an image. This is open source as a project called Grafeas. When each of these steps completes its task, it adds a note into the metadata server saying "this image URI has passed my qualification," and then in GKE...
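Editor's sketch of the per-step attestation flow just described: each pipeline step signs the image URI with its own key and records a note in a metadata store. This is not the Grafeas API. The in-memory `notes` dictionary, the step names, and the keys are hypothetical, and HMAC is used only as a standard-library stand-in for the PGP signatures mentioned in the talk.

```python
import hashlib
import hmac

# Stand-in keys, one per pipeline step (the talk describes separate PGP keys).
STEP_KEYS = {"build": b"build-secret", "vuln-scan": b"scan-secret", "qa": b"qa-secret"}

# Hypothetical in-memory metadata store: image URI -> list of notes.
notes = {}


def attest(step, image_uri):
    """Record that `step` has qualified `image_uri`, signed with that step's key."""
    sig = hmac.new(STEP_KEYS[step], image_uri.encode(), hashlib.sha256).hexdigest()
    notes.setdefault(image_uri, []).append({"attestor": step, "signature": sig})


def verified_attestors(image_uri):
    """Return the steps whose note for `image_uri` carries a valid signature."""
    valid = set()
    for note in notes.get(image_uri, []):
        key = STEP_KEYS.get(note["attestor"])
        if key is None:
            continue  # unknown attestor: ignore the note
        expected = hmac.new(key, image_uri.encode(), hashlib.sha256).hexdigest()
        if hmac.compare_digest(expected, note["signature"]):
            valid.add(note["attestor"])
    return valid


image = "registry.example/app@sha256:0123abcd"  # hypothetical image reference
for step in ("build", "vuln-scan"):
    attest(step, image)
```

A deployment gate could then require that a chosen set of steps appears in `verified_attestors(image)` before the image is admitted.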

F

However, this customer wanted to do something just with containers, without Kubernetes, on the edge, which I think is also a bit of a proxy for asking: what does this look like in a pure Kubernetes, or Kubernetes-like, or edge environment? And why this took me some getting used to is that when you look at secure boot or edge security around trusting what content runs, you're usually looking at signatures of the content itself, whereas in this situation, all that's being attested to is the URI of the registry.

F

So the idea is that, without the admission controller and without Kubernetes, you'd have one task that pulls. You'd have to receive some sort of command, say through our IoT service, that says "hey, pull and run this image." You pull it by verifying it: what you're doing is retrieving the signature of this URI from this metadata service. Again, this would have to be trusted.

F

You have to trust that, but then the public keys of anything that is signing these, that is attesting to these containers, would be stored in the TPM. That's where the hardware trust is applied, in the sense that you're not going to have a malicious process on the device mucking with this verification step. Now, I mean, in order to trust something as rigorous as a TPM, everything around it has to be rigorous.
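Editor's sketch of the device-side gate described above, for an edge node without Kubernetes or an admission controller: on a "pull and run" command, fetch the notes for the image URI, verify them against trusted keys, and only then start the container. Everything here is hypothetical: `TRUSTED_KEYS` stands in for verification keys that the talk says would be held in the TPM, HMAC stands in for public-key signatures, and `fetch_notes` and `run` are injected callables rather than real service or runtime APIs.

```python
import hashlib
import hmac

# Stand-in for verification keys that, in the design described, would live in the TPM.
TRUSTED_KEYS = {"build": b"build-secret", "qa": b"qa-secret"}
REQUIRED = {"build", "qa"}  # hypothetical policy: both steps must have attested


def sign(key, image_uri):
    """Produce the stand-in signature over the image URI."""
    return hmac.new(key, image_uri.encode(), hashlib.sha256).hexdigest()


def may_run(image_uri, fetched_notes):
    """Allow the image only if every required attestor validly signed its URI."""
    seen = set()
    for attestor, signature in fetched_notes:
        key = TRUSTED_KEYS.get(attestor)
        if key and hmac.compare_digest(sign(key, image_uri), signature):
            seen.add(attestor)
    return REQUIRED <= seen


def handle_command(image_uri, fetch_notes, run):
    """Handle a 'pull and run' command: verify first, run only on success."""
    if not may_run(image_uri, fetch_notes(image_uri)):
        return "rejected"
    run(image_uri)
    return "started"


uri = "registry.example/app@sha256:0123abcd"
good = [("build", sign(b"build-secret", uri)), ("qa", sign(b"qa-secret", uri))]
started = []
result_ok = handle_command(uri, lambda u: good, started.append)
result_bad = handle_command(uri, lambda u: good[:1], started.append)
```

The gate denies by default: a missing note, an unknown attestor, or a bad signature all lead to `"rejected"`.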

F

F

F

F

So, I tried to keep that quick, because I'm not here to give a long talk. But how do other people think about this? The other options that I've come across out there are the Docker TUF/Notary route of signing image content, and then Red Hat. Deon, I don't know if you know much about the simple image signing project in Red Hat.

G

F

G

G

F

Right, right. The difference between that, which is still a pipeline of states, versus a single image in any one of those that has passed a list of checks. Does the Notary system support, for a given container, retrieving the list of all of the signatures? I would expect it to, yeah.

G

I believe it does, but I'm speculating. I can't say I've ever done it, but I believe the signatures are cumulative. Another potential issue is just having somebody in your organization vet the licensing terms on components in there, so there are orthogonal things that relate to components that might not have been done by your own organization at all. You don't want the college intern to choose what things come off of Docker Hub, for example.

F

And that, in some ways, is where the new world just needs to go copy the old world of golden images: golden VM images that have been blessed by an organization's policy requirements. Like, start your app image from this blessed, corporate-maintained golden base image.

G

Yeah, but it is nice to have if you can tolerate the potential storage bloat from these signatures accumulating. I think in the big picture, in today's world, maybe at the edge you've got limited enough resources that you'd worry about that, but in most places in traditional IT you don't. You might as well have that tracking go along for the ride, because when bad things happen, you often want to do forensics to figure out how to make it better.

G

Next time, it's like: hey, this thing supposedly got signed and vetted, but it turned out that maybe it wasn't examined carefully enough; let's try to improve how we work this in the future. Having that audit log facility can be of great value. I suppose you could have the signatures accumulate, or you could simply write everything to logs that could be analyzed later. Either one, I suppose, gets you to the same end state, potentially.

E

Steve, on the note of being concerned about storage at the edge for all of this: in an ideal world, your edge is not a single edge node in a constrained network environment talking up to the cloud backend, but rather you have a spread. It's pretty easy to have a localized...

E

You know, a gateway that has more storage, that can serve as a resource for the really small stuff as well. So I agree with you. I think in extremely constrained environments you might care, but otherwise I'd say you'd probably rather have this data. That's based on everything I've heard out in the market. That makes sense to me too.

D

D

F

And so the verification step was making a cloud-bound call to check in with that metadata service to say: do I have notes that tell me this is trusted? Now, I think there's a scaling down of the performance cost of doing a full content-based signature. When you verify a content-based signature, you've got to read every byte of the image to calculate the signature, which potentially could be raised as a performance issue.

F

If you had to do that for large images. But I think on the edge you're already trying to ship relatively small images, so that reduces the burden of scanning every byte. This system does have, I think, potentially a relevant performance advantage, in that since you're only signing the URI, it's very, very little content that you actually have to hash and sign and then compare, but...
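Editor's sketch of the cost difference discussed above: a content-based check has to read every byte of the image, while a URI-based check hashes only the short reference string. The 5 MiB buffer and the image reference are hypothetical stand-ins, and SHA-256 is an assumed choice of hash.

```python
import hashlib
import io


def digest_stream(stream, chunk_size=64 * 1024):
    """Hash an image by reading every byte; returns (hex digest, bytes read)."""
    h = hashlib.sha256()
    total = 0
    while chunk := stream.read(chunk_size):
        h.update(chunk)
        total += len(chunk)
    return h.hexdigest(), total


def digest_uri(image_uri):
    """Hash only the image reference; returns (hex digest, bytes hashed)."""
    data = image_uri.encode()
    return hashlib.sha256(data).hexdigest(), len(data)


image_bytes = b"\0" * (5 * 1024 * 1024)       # stand-in for a 5 MiB image
uri = "registry.example/app@sha256:0123abcd"  # hypothetical reference

_, content_cost = digest_stream(io.BytesIO(image_bytes))
_, uri_cost = digest_uri(uri)
```

The trade-off is that a signature over the URI says nothing about the bytes by itself; it is only as strong as whatever binds that reference to the content actually pulled.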

E

Yeah, and then if you're not changing out containers too frequently, then, you know, these steps are executed, and as long as you can protect that what's running is still what was scheduled, and you don't have a lot of changes there, then that activity, the scanning that you're describing, is not too frequent.

G

F

F

F

G

F

G

C

F

F

D

G

G

Not only this: there's a bigger-picture issue here if you establish working technology and really evaluate this. I know I, among others, have been kicking around the idea that when you look at a container image registry, one could argue it's a catalog of blobs, and that if you build such a thing, like a Harbor, there's no reason you couldn't embellish it going forward to also host things like firmware images, which you're going to find at the edge.

G

They could be firmware images for compute devices, network switches, whatever you might have, even embedded things not running Linux or containers at all but that still need updates out at the edge location. Signing those firmware images is as important as signing the container images. It's all runnable code, and you need to establish provenance and not allow Trojan-horse, manipulated, hacked versions of these to get put into production.

E

F

There does seem to be, in this tech constantly reinventing itself, a limit to how general a scope certain tools can be built for, in the sense that the attestation of containers, to be pragmatic, has to assume in a few places that we're dealing with building containers, and a fully factored-out signing pipeline may be too difficult to apply to specific cases. I don't know, it's just something I've seen in terms of the limits of patterns that are applicable but aren't easily portable.

E

Oh yeah, I think the implementation of it is a nightmare, to be able to do the checking across different resource types. But there are plug-in architectures that could be used, or maybe it's the ends: the end goal, which is the attestation verification, is universal, and then things feed into it.

E

But yeah, point taken. It's just like everything when you get down to it, just like the edge hardware stuff: above the abstraction layers, everything seems really nice and well-formed, and underneath it's a nightmare of pieces of code that actually do the work, because it's different across different boards, etc. So yep, it's true: if it was super easy, I guess we'd have universal attestation in place right now for a variety of things flowing. So yeah.

H

H

F

G

G

G

G

Documents, but when you authenticate to utilize the registry, is that what we're talking about? Yeah, I believe that authentication is pluggable. There's a section of the documentation on RBAC, so you can use whatever authentication source is in common use within your organization.

F

We use our standard credentials for service accounts into our managed container registry. But what I'm thinking of, and this is wandering off topic for the edge, is that I might look at doing a Harbor replication of a Google Cloud container registry, mirroring for on-prem use, where the primary cloud-based registry is our managed registry, but the on-prem...

F

G

Yeah, I think one of the values of that replication is to constrain your network path. You don't want a scenario where, let's say, I've got data centers that are much larger than others and that merit scale-out of these registries. A big city like Los Angeles might need five registries locally, but you don't want all five to be pulling from central. Maybe one should pull it and then share it with peers. Something like that.
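Editor's sketch of the replication arithmetic in that example: count how many pulls hit the central registry for one image, with and without one registry per site pulling and sharing with its local peers. The `sites` layout is hypothetical apart from the five Los Angeles registries mentioned in the talk, and this models only the pull count, not any real registry's replication feature.

```python
def central_pulls(sites, peer_sharing):
    """Count pulls against the central registry for one image.

    `sites` maps a site name to its number of local registries.
    With peer sharing, one registry per site pulls and the others copy from it.
    """
    if peer_sharing:
        return sum(1 for n in sites.values() if n > 0)
    return sum(sites.values())


# Hypothetical deployment; the five Los Angeles registries follow the example in the talk.
sites = {"los-angeles": 5, "small-town": 1}

direct = central_pulls(sites, peer_sharing=False)
shared = central_pulls(sites, peer_sharing=True)
```

With these numbers, pulls against central drop from one per registry to one per site.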

G

F

G

E

I'd like to make a request: if anybody here on the call surfaces something that is not listed as a particular challenge of security at the edge, even as it gets toward the cloud backends, would you kindly drop a note into the security white paper? Even just two or three sentences that we can expand upon. Contribute this into that paper; its purpose is to, as Cindy mentioned, get the list and then dive deep.

E

G

H

Yeah, no, maybe at this time, from my side also: I started to work from the cloud towards the edge, and for you guys, my comments are made in green. So if you find a green section, this is done by Bernhard. I recently reviewed section six, and four and five were already quite comprehensive.

H

Interestingly, I also came yesterday to the conclusion that we still miss some sort of updates of the host operating system on the edge node or gateway node. So I'm currently thinking about how this is related, for example, to the initial setup, which is section 2 of 4.2, running processes and binary attestation.

E

Sure, I'd say let's give it a shot. I mean, there's a lot of material there. So we can open a section just for that, and if it turns out to only have one or two items in it, maybe we merge it into other sections. But I'd say anything more than three sub-points and you've got yourself a whole section there. Makes sense to me. Okay, fine.

E

E

G

E

Because that tends to be the way security discussions go, I thought a master list of what it is everyone's concerned about would be a great way to level-set exactly what people need to dive into. Then you essentially have each section or subsection as its own rabbit hole. Well, that's fine! You can take it from...

G

G

G

G

F

D

F

That then has a bridge over to the Knative eventing system, and it gets very Inception-y, because I think you can have brokers that link to brokers that link to brokers, and at each stage you can choose how wide a path you want. I think with traditional brokers you could always do this, in RabbitMQ for example, where you could have exchanges, and then within exchanges you could draw like a lasso around these namespaces, or these topics could then...

G

F

Edge with k3s running Knative on the edge, and then having a Knative deployment running in response to an event where the origin of the event is a device on the LAN that's publishing to an MQTT broker on a node or on the cluster, and that MQTT broker is then bridging the event into a Knative event. As a Knative...
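Editor's sketch of the bridging step described above: wrap one MQTT message in an event envelope that could be forwarded into Knative Eventing, which delivers events in the CloudEvents format. The topic, payload, `source` convention, and `type` string are hypothetical, and the sketch only builds the envelope; it does not talk to a real broker.

```python
import json
import uuid
from datetime import datetime, timezone


def mqtt_to_cloudevent(topic, payload, broker_id="edge-mqtt"):
    """Wrap one MQTT message in a CloudEvents 1.0 structured-mode envelope."""
    return {
        "specversion": "1.0",
        "id": str(uuid.uuid4()),
        "source": f"/mqtt/{broker_id}/{topic}",  # hypothetical source convention
        "type": "com.example.device.reading",    # hypothetical event type
        "time": datetime.now(timezone.utc).isoformat(),
        "datacontenttype": "application/json",
        "data": json.loads(payload),
    }


event = mqtt_to_cloudevent("plant1/pump7/temperature", b'{"celsius": 71.5}')
body = json.dumps(event)  # what a bridge would send on to the event sink
```

A bridge process would subscribe to the MQTT broker, call `mqtt_to_cloudevent` for each message, and POST `body` with the `application/cloudevents+json` content type.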

G

G

F

G

That would actually, you know, coming up, not only be good for a meeting, but at the very end of the year we'll have KubeCon North America, where this SIG is probably going to get an opportunity to have intro and deep-dive sessions again, and that might be a good session, probably in the deep-dive category. But I'm really curious about this subject: would doing that at the edge make the Istio service mesh mandatory as well, or is that only an option?

F

G

D

F

Yeah, the main role is that Knative is an approach to standardizing the business logic author's contract. So if all I'm writing is a function that responds to stuff, and I can deploy that function on the cloud or on here, I only have to learn one way to respond to requests or events, and that's kind of the Knative developer contract, in some ways.
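Editor's sketch of that developer contract: the author writes one function that responds to a request or event, and the same container can run in the cloud or at the edge. The `handle` logic and response shape are hypothetical; the wrapper uses only the standard library and reads the listening port from the `PORT` environment variable, which is how Knative Serving tells a container where to listen.

```python
import json
import os
from http.server import BaseHTTPRequestHandler, HTTPServer


def handle(event):
    """Business logic only: takes a decoded event, returns a response dict."""
    return {"ok": True, "received": event.get("type", "unknown")}


class Handler(BaseHTTPRequestHandler):
    def do_POST(self):
        # Decode the JSON body, run the business logic, and reply with JSON.
        length = int(self.headers.get("Content-Length", 0))
        event = json.loads(self.rfile.read(length) or b"{}")
        body = json.dumps(handle(event)).encode()
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def make_server():
    """Build the HTTP server on the port given by PORT (default 8080)."""
    return HTTPServer(("", int(os.environ.get("PORT", "8080"))), Handler)
```

Calling `make_server().serve_forever()` starts the service; only `handle` would change from one function to the next.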

F

D

F

H

F

D

G

A

G

Yeah, let's not change it frequently, because I've still been noticing people trying to go in at the old times. I think whenever a Kubernetes SIG or working group changes time, there's a lag of about six months until the last person notices the new time.

A

But what I'm thinking is, if the APAC time is not convenient for everybody to join, and people think we need more things, even temporarily for a couple of months to work on some topic, we can also schedule new meetings. So maybe we can have this meeting every two weeks at this time on top of the APAC one. Whatever people think makes sense to keep things going properly.

G

The other thing that we might get an opportunity for coming up, and I think we missed the window for Europe and maybe China too: at the KubeCon events we can request a birds-of-a-feather-like room from the CNCF. So maybe at the end of the year, if we have enough people likely to go to the North America KubeCon, we can ask for a room and just get together in a physical meeting.

G

D

A

G

And just putting a stake in the ground, by the way, for this series, which is for the convenience of North America: I don't think it's fully stated in the group charter for the meeting time, but I'm hoping that this will shift with daylight saving time for the U.S., because that's the normal expectation in all the other Kubernetes groups. Yes, so I...