From YouTube: Kubernetes Contributor Summit 2018 - State of Networking

Description

A survey of 2018's achievements, some of the in-progress work, and a bit of exposition on forward-looking efforts.

Presenters:

Bowei Du, Google

Tim Hockin, Google

A

Good morning, everybody. Thank you all for coming out. We are here to talk about what's new in networking. These are the SIG Network chairs. Well, we've got Dan here, and Casey's somewhere in the audience. There's Casey. And we've got Bowei and the other Dan, who are some of our top contributors in SIG Network. So we're here to sort of tag-team on you today. We're only gonna have 25 minutes, so we're gonna kind of burn through this. If you have questions, please raise your hands and jump up and scream, or we can talk afterwards.

A

First I want to talk about a few of the things that we got done in 2018. Since last year, when we were here, we have merged a whole bunch of changes, in no particular order. We've got traffic shaping in our CNI network spec now, so traffic shaping, which was sort of this thing bolted on to pods, is now actually a first-class thing. We have support for network policy egress and CIDR rules, which went to GA in Kubernetes 1.12. We have the IPVS version of kube-proxy, hallelujah.

A

It scales much better than the iptables version. So for those of you who are running these massive 8,000-node clusters, you can actually do this now. I know there's a lot of you out there. We've also merged CoreDNS as a default replacement for kube-dns. So for all of you who have had some DNS pain, hopefully it's a little bit better.

A

We've got some more on that later, and on how life is getting even better in the DNS space. And then we have a cool feature that lets you figure out which IP addresses you want to expose your node ports on. These are just a few of the things that popped up through the release notes over the last year. I'm sure there's more, and I apologize if I missed those. A bunch of things are also in flight. This is where the exciting stuff is.

A

We have IPv6 support in Kubernetes. I don't know if you knew that, but it's been alpha in Kubernetes since 1.9. The team that's been working on this has been working on continuous integration development and on the follow-on to this, which is dual stack: v4 and v6 at the same time. We also have custom pod DNS policy, so for those of you who don't like the default DNS in Kubernetes from your pod's point of view, you can change all the settings that you have for your pods in flight.
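To make the custom pod DNS point concrete, here is a minimal sketch of a per-pod DNS configuration and the resolver file it roughly corresponds to. The dictionary mirrors the `dnsConfig` shape of the pod spec (`nameservers`, `searches`, `options`); the `render_resolv_conf` helper is hypothetical and only approximates what ends up in the pod's `/etc/resolv.conf`.

```python
from typing import Dict, List


def render_resolv_conf(dns_config: Dict) -> str:
    """Approximate the resolv.conf a pod would see for a given dnsConfig.

    This is an illustration only, not the kubelet's actual code path.
    """
    lines: List[str] = [f"nameserver {ns}" for ns in dns_config.get("nameservers", [])]
    searches = dns_config.get("searches", [])
    if searches:
        lines.append("search " + " ".join(searches))
    options = []
    for opt in dns_config.get("options", []):
        # Options may be bare flags ("edns0") or name/value pairs ("ndots:2").
        options.append(f"{opt['name']}:{opt['value']}" if opt.get("value") else opt["name"])
    if options:
        lines.append("options " + " ".join(options))
    return "\n".join(lines) + "\n"


# Example values below are made up for illustration.
example_dns_config = {
    "nameservers": ["1.2.3.4"],
    "searches": ["ns1.svc.cluster.local"],
    "options": [{"name": "ndots", "value": "2"}, {"name": "edns0"}],
}
print(render_resolv_conf(example_dns_config))
```

In a real pod spec this block would sit under `spec.dnsConfig`, typically together with `dnsPolicy: "None"` when the cluster defaults should be ignored entirely.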

A

That API has been beta since 1.10. I don't see anything that would stop that from going to GA. We also have a really cool feature coming up. As of 1.11 it was beta. It's called pod readiness gates. What this lets you do is have dynamically adjustable readiness for your pods. So if you have a load balancer that needs to sync with your pods, you can actually have it, out of tree, feed back into the readiness loop of pods, so that your rolling updates of your deployments or whatnot can wait for your load balancers to catch up.
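The readiness-gate idea can be sketched as follows. This is a simplified model, not the kubelet's implementation: a pod counts as ready only when its containers are ready and every condition named in its readiness gates has been reported as `"True"`, possibly by an external controller. The `Pod` class and the condition name `example.com/load-balancer-synced` are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Pod:
    # Condition types that must be "True" before the pod counts as ready.
    readiness_gates: List[str] = field(default_factory=list)
    # Whether every container has passed its own readiness probe.
    containers_ready: bool = False
    # Conditions reported on the pod, including ones written by
    # external controllers such as a load balancer integration.
    conditions: Dict[str, str] = field(default_factory=dict)


def pod_is_ready(pod: Pod) -> bool:
    """Ready means: containers are ready AND every gate condition is "True"."""
    if not pod.containers_ready:
        return False
    return all(pod.conditions.get(gate) == "True" for gate in pod.readiness_gates)


pod = Pod(readiness_gates=["example.com/load-balancer-synced"], containers_ready=True)
print(pod_is_ready(pod))  # False: the external controller has not reported yet
pod.conditions["example.com/load-balancer-synced"] = "True"
print(pod_is_ready(pod))  # True: the load balancer has caught up
```

Because a rolling update waits on pod readiness, holding the gate at anything other than `"True"` is what lets the rollout pause until the external system is in sync.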

A

This is very excellent for external infrastructure. We also added support for SCTP. You know, that other IP protocol. Apparently people still use it. It's actually not that uncommon, I'm surprised. So we've got support for it. It's alpha in 1.12. I don't see anything that would stop that from going to GA. And as of 1.13, which was, like, you know, 5 minutes ago, we've got support for node-local DNS caching, which should help a whole lot for people who are having DNS problems on lossy UDP networks.

A

So this is a huge deal, and this sort of catches you up. Again, there's a ton more going on. These are just a few of the things that are in flight right now. And then, coming up, we've got a whole bunch of things that are also sort of at the beginnings of being in flight, and these guys are going to talk about each of these things in detail. We're working on ingress. We spent a lot of time at KubeCon last year talking about ingress.

A

We've got an update on where we are with that. We've got dual-stack support, which is v4 and v6 at the same time, multiple IPs per pod. Node-local services: this is something people have been asking for for a long time. How do I talk to the copy of the daemon that's running on my machine? We're talking about possibly a revamp of services and endpoints. Anybody who was paying attention to Brian's talk saw him start to allude to some of the pain points around there. We've got some work around multicast.

B

So I'll talk about ingress. Ingress is currently the lowest-common-denominator API, and our thought is to sort of change that and change the strategy by which we express L7. It's very clear from the survey earlier this year, I think, oh gosh, it was January, that users are not happy with it. There are too many annotations, and most are not portable. I think we found in the survey that about 60% of users use annotations, and probably more. And in 2018, you know, we expect more from an L7 proxy than just prefix matching, for instance. So this was a hot topic at KubeCon 2017. There was a lot of conflicting input from the survey. It's still not resolved, but we have a couple of ideas, so please catch us in person for a conversation. I think this is a great place to sort of get all those ideas out and kind of understand where the community sits on this. Next slide.

A

So, IPv6 and dual stack. Like I said, we have single-stack v6 now, which means you can bring up a Kubernetes cluster in pure v6 mode or pure v4 mode, but not both. There's a KEP, a big KEP, and it's very detailed. It's approximately done for the dual-stack support. It requires some really significant API changes, actually, which required us to argue a lot within SIG Architecture about how you actually evolve bits of our API in compatible ways.

A

For example, how do you take a single IP address and make it a list of IP addresses in a way that is rollback-safe, forwards and backwards? It's actually a fairly complicated topic. I was a little bit surprised at the depth of it. There's also adding multiple IPs for endpoints, and how do you disambiguate whether you're speaking through v6 or v4?
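One common way to evolve a singular field into a list without breaking older readers is to keep both fields and require that they agree. The sketch below illustrates that pattern only; the talk does not say which approach the KEP settled on, and the field names `ip` and `ips` are hypothetical.

```python
import ipaddress
from typing import Dict, List


def normalize_ip_fields(status: Dict) -> Dict:
    """Return a copy of `status` in which the legacy and new fields agree.

    `ip` (singular, legacy) and `ips` (plural, new) are hypothetical names.
    """
    ip = status.get("ip", "")
    ips: List[str] = list(status.get("ips", []))

    if ip and not ips:
        # Written by an older component that only knows the singular field.
        ips = [ip]
    elif ips and not ip:
        # Written by a newer component: mirror the primary address for old readers.
        ip = ips[0]
    elif ip and ips and ips[0] != ip:
        raise ValueError("ip must equal ips[0]")

    return {**status, "ip": ip, "ips": ips}


def ip_family(address: str) -> int:
    """Return 4 or 6 so callers can tell which family an address belongs to."""
    return ipaddress.ip_address(address).version
```

With this rule, a rollback to a version that only understands `ip` still sees a valid primary address, and a newer version can pick an address per family by calling `ip_family` on each entry of `ips`.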

A

It also requires kube-proxy, which has always run in one of these modes, to now run in both of these modes, which is going to be an interesting bit of code to write, and for kubelet to handle host ports in multiple IP families. We're not adding IP family conversion, so you can't come in as v4 and get converted to v6, but we will allow you to come in through either protocol. Honestly, this is a huge effort. It's gotten a lot bigger than I thought it would.

A

This is a place where I'm calling for help. Anybody in our community who wants to help develop and test this, or even just give feedback on the ideas: read the KEP. We could totally use your help on this one. Sorry. So the other topic that I think is really exciting right now is node-local services. This was actually a proposal several months back by a community member, which was fantastic.

A

They proposed a very simple API for this, and I said, hold on, hold on, I think it's bigger than that. We spent a whole bunch of time exploring how big it could get, and we came back to this: the simplest solution is actually the best, which is awesome. So the KEP is in progress now. I think it actually just got merged last week, which means the development is going to happen. It, like so many things in Kubernetes, is surprisingly complicated. So even though it's one field in the API, it's actually fairly complicated.

A

We're gonna need a new resource type to describe some of the forwards and backwards lookups. So we're gonna start with a sort of limited alpha. It won't have all the functionality, but it will let you finally say, "I would like to talk to the logging agent or the monitoring agent on the same machine as me," which is a pretty huge deal, I think.

B

As Brian alluded to earlier, it looks like it's time to revisit how services and endpoints are designed. At least concretely, we know that the Endpoints kind, the way it's structured today, results in an N-squared scaling factor, which doesn't work if you have, say, thousands of service endpoints. And the other piece that's relevant is that services themselves are kind of this mashup of all the various features, and you could imagine the resource being more orthogonal in its design. So we haven't...
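One plausible reading of the N-squared remark can be put as back-of-the-envelope arithmetic. The sketch assumes (these assumptions are not stated in the talk) that a single object lists all N endpoints of a service, that every pod change rewrites that whole object, and that each watching node receives every full update. Under those assumptions, replacing all N pods once costs on the order of N × N endpoint entries per watcher.

```python
def endpoint_entries_sent(num_endpoints: int, num_watchers: int) -> int:
    """Estimate endpoint entries transmitted during one full rolling update.

    Assumes one object per service holding every endpoint, rewritten in full
    on each pod change and fanned out to every watcher.
    """
    updates = num_endpoints             # one rewrite per replaced pod
    entries_per_update = num_endpoints  # the whole list is resent each time
    return updates * entries_per_update * num_watchers


# Doubling the endpoint count quadruples the traffic per watcher.
print(endpoint_entries_sent(1000, 1))
print(endpoint_entries_sent(2000, 1))
```

The numbers are only illustrative, but they show why a service with thousands of endpoints becomes expensive under that structure.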

C

So I just submitted a KEP for multicast. Some people, especially with things like JBoss, for instance: if you have a high-availability group, the servers use multicast to find each other, and so people are doing things in Kubernetes that want multicast support. We don't actually specify what happens at all when they try to do that. Most plugins don't support it.

C

We're trying to find a good way of configuring it, given that not everybody will be able to support it, because some of the clouds use an underlying network that has no multicast. But we're just sort of trying to figure out what to do. The KEP actually got closed when they all got migrated, and I have to reopen it.

D

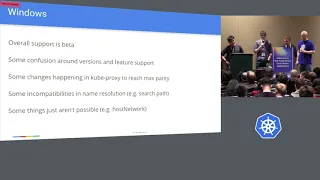

All right, Windows support. It turns out that people actually want to run Windows-only clusters and mixed Windows and Linux clusters. So over the last year, there's been a lot of work from Microsoft, Cloudbase, and a couple of other places that have added Windows support at a bunch of layers in the Kubernetes stack: kubelet, kube-proxy, the network plugin, and the CNI layers. The overall support is currently at beta, but there's a little confusion around what levels of support there are for different Windows versions.

D

The earlier support didn't quite work like all of us expected it would, because we just weren't familiar with how Windows dealt with containers. But it seems like that's converging a little bit more as different Windows versions come out and different updates are happening on the Windows side, so I think that will become a little bit more streamlined, a little bit more like the Linux side of things. There are some differences, for example, with DNS.

D

There are some peculiarities with how Windows needs the DNS to happen because, guess what, its networking just doesn't look like or work like Linux does, and some things aren't possible that Linux has supported for a while. So that's continuing to be work in progress, and we expect a little bit more convergence in how Kube runs across different OSes.

D

So if you want to contribute there, that's also a good place to help out, because the PRs are very large, or at least they have been in the past, and it's hard for those of us who don't work on Windows daily to actually review those PRs in a sane way. So if anybody has comments, talk to us, or jump on PR reviews on GitHub as well, please.

D

Two years ago... last year at KubeCon in December, we kind of formed a working group to work through these issues, a place where we could talk about them and not necessarily conflict with, or put these discussions into, SIG Network, so they just have a more focused working group. What came out of that was that, over the last year, we developed a specification for tackling some of the problems that people in that space, in the network function virtualization or media function virtualization use cases, had. We wanted to keep the solutions simple. We did not want to change the Kube API. We wanted to keep these things outside of Kube and just prove out those use cases without making too many changes to Kubernetes. So there was a specification developed over the last year about how pods can use multiple network attachments. Essentially, you get multiple IPs or multiple kernel interfaces in your pod, and your pod exists in multiple data planes.

D

And also, the previous multi-network working group was a little bit more focused on, like, the next year or two, just trying to get something going. Network Service Mesh is kind of a slightly longer-term-looking project that also tries to tackle that problem and a couple of other problems in the networking space.

D

For my pod, it's more abstracted, in terms of: I want to talk to the service, and here's a little bit about my requirements for talking to that service. And when we say service, we don't necessarily mean a Kubernetes Service, but something in the cluster that requires networking. Network Service Mesh is really a project about how you get your pods to talk to that other thing, and there's a lot of underlying infrastructure there, and they're kind of...

A

And just to say again, all of these current topics are being developed out of tree. So these are using custom resources and extension APIs to extend and build out the Kubernetes API server, which is one of the topics that Brian talked about earlier this morning. I just want to call out, as a really sort of awesome point, that these teams can do this work without having to be subjected to the rules of Kubernetes and the pace of the overall project. Yeah.

B

To follow on, not just at L3/L4, we find that people are looking to service meshes to solve a lot of their problems. At the same time we see that, for example, if you layer Istio or a service mesh on top of Kubernetes concepts, some of them are fairly overlapping. You can think of network policy, the definition of a service, and so forth. So we're trying to look into how to make these things mesh more cleanly, or at least define, or resolve, some of these overlaps.

A

To get towards the end here, we've got a couple of speculative, forward-looking things that we're talking about maybe building more into Kubernetes. Multi-cluster is a topic that has come up over and over and over again. We've gotten away for the last four-plus years with saying that Kubernetes is a cluster abstraction. Well, it turns out that in the real world, people have multiple clusters and they want to be able to work across them.

D

Currently, the network plugin API for Kubernetes is based on CNI, which is a process-and-exec model. So one of the things we're looking at is: how do we transform that into being a gRPC-based model? Most of the other plugins in Kubernetes use gRPC, so why should the network plugin model be different? And so there are opportunities for tighter integration there, and perhaps better reliability as well. So that's definitely going to come up in the next year or so.
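For readers unfamiliar with the exec model mentioned here, the following is a rough sketch of how a runtime calls a CNI plugin: it runs the plugin binary as a child process, passes parameters through `CNI_*` environment variables, writes the network configuration as JSON on stdin, and reads a JSON result from stdout. The helper names, the example paths, and the example network configuration are assumptions for illustration; real runtimes use a CNI library rather than hand-rolled code like this.

```python
import json
import os
import subprocess
from typing import Dict


def build_cni_env(command: str, container_id: str, netns: str,
                  ifname: str, plugin_dir: str) -> Dict[str, str]:
    """Build the environment a CNI plugin expects for one invocation."""
    env = dict(os.environ)
    env.update({
        "CNI_COMMAND": command,           # e.g. ADD or DEL
        "CNI_CONTAINERID": container_id,  # runtime-assigned container ID
        "CNI_NETNS": netns,               # path to the network namespace
        "CNI_IFNAME": ifname,             # interface name to create inside it
        "CNI_PATH": plugin_dir,           # where plugin binaries are searched
    })
    return env


def invoke_plugin(plugin_path: str, net_conf: Dict, env: Dict[str, str]) -> Dict:
    """Exec the plugin once: config goes in on stdin, result comes back on stdout."""
    proc = subprocess.run(
        [plugin_path],
        input=json.dumps(net_conf).encode(),
        env=env,
        capture_output=True,
        check=True,
    )
    return json.loads(proc.stdout)


# Hypothetical values; invoke_plugin is not called here because it needs a
# real plugin binary and a real network namespace.
example_conf = {"cniVersion": "0.3.1", "name": "example-net", "type": "bridge"}
example_env = build_cni_env("ADD", "abc123", "/var/run/netns/abc123",
                            "eth0", "/opt/cni/bin")
```

Every operation is a fresh process with no long-lived connection, which is the contrast with a gRPC-based model that the speaker is drawing.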

B

One other thing we're looking at is how to sort of make the DNS schema better. There are a couple of issues right now. For example, you can alias names if you use the correct namespace and name, and how do we do a migration while we preserve compatibility? So the DNS spec definitely needs a re-examination. Also, in terms of DNS, we need to look at how this intersects with policy. For instance, should all names of all services be available to everyone, which is the case today?

A

So truthfully, there's probably a hundred other things in flight that we haven't talked about here. I apologize to people if I missed your favorite topic. The reality is SIG Network is a fairly big SIG. We get a lot of people who attend our calls every couple of weeks, but we have a relatively limited contributor base. So the call to action here today is: if networking is something that's interesting to you or your company or your teams, we could sure use help.

A

We have a lot of code that needs better tests, we have a lot of refactoring that can be done, and we have a lot of catch-up work around things like component configs and component isolation. We could totally use help in SIG Network. Otherwise, we will continue to chew away at it over the course of the year. I hope that we have more stuff to talk about next year, but I also hope that your pet projects will be on here.

E

Question about multicast. You've been saying that it's something that depends on the actual implementation of the provider. I just wanted to know if there are maybe any plans to try to support multicast at a higher level instead, for those that don't provide that. I know it's difficult.

C

So Kubernetes, like, Kubernetes core only has limited control over networking functionality. It's all delegated to the network plugins. So the idea that we're looking at is that we will provide a way for an application to say, "I need multicast to work here," and then the network plugin deals with actually implementing that. Some of them, like Weave, currently implement multicast. It is one of the few that does, and it will implement it even if you're on top of a network that doesn't implement multicast underneath.