
From YouTube: Multi-Network community sync for 20230614


A

Okay, welcome, everyone, to the multi-networking community sync meeting. Today is June 14th, and we are continuing our discussion on multi-network. Please add your agenda items if you have other topics to discuss. Could they go in front of the discussion, because it's probably going to take most of the rest of the meeting? I think, Pete, did you add that one? I'm not sure who added the first one.

A

B

I'm happy to give you a few minutes if you want, or I can just leave it. But I think the key thing is, it does feel like DRA should work pretty well, and I think if we write the right kind of controller, we could save ourselves writing a lot of core Kubernetes code that maybe we don't need, and that would be great if...

A

B

Absolutely. Okay, I don't want to turn this into lots of bikeshedding, because there are lots of technical details here that aren't 100%, and it's better to discuss them offline. But okay, let me start explaining. I think the key thing... sorry, I'm fighting Zoom a little, which has decided to make itself really small, which doesn't help. Give me a moment.

B

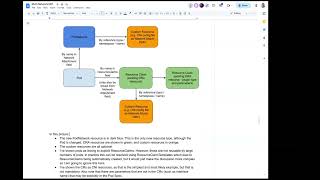

There we go. Right, so a couple of things here. Firstly, there's a bit of a link here between all the different pieces that exist. We've got pods and pod networks. Pods have some kind of link to a pod network; that's fairly uncontroversial. Pod networks have got some custom resource, and I've got a CNI implementation in my mind, so the custom resource would have some kind of NetworkAttachmentDefinition-type stuff in it,

B

in that case. The other piece that exists now is that pods have resource claims, which say, "I want to have a resource like this," which could be a GPU device driver. In this case, the resource claim would correspond to "I want to be on this network," and then there are various links from that resource claim which can point to custom resources and provide arbitrary parameters. All of those things are provided by DRA, and the flow that I am thinking we could make work

B

is: you instantiate a pod. At the point where you create a pod, there are already some flows that say, if you've got resource claim templates, go and add resource claims to the pod. We could extend that so that, if you're attached to networks, we add a resource claim for each network, potentially with custom resources. You might want to have the ability to put your own resource claim in there rather than having a resource claim template from the custom resource. That then gives you a couple of things.

B

It gives you scheduling, because that resource claim can affect where the pod is scheduled, and because this is relatively arbitrary, it can do all kinds of arbitrary scheduling complexity. It also gives you some interaction with the kubelet, because the kubelet does have access to these fields. At the moment, it's just passing them across into a plugin.

B

The minimal way of making this work would be that we write a driver (I'm going to say CNI again, because that's the way I'm thinking about things). The data that it would set up and the scheduling it would do would be very minimal. It would read the relevant custom resources. It would then put them into the data blob that is going to be passed to the plugin on the kubelet; when it gets to the kubelet, that data is passed across. So no core changes yet; we've just written some drivers.

B

The core changes to this would be, well, we need to have the pod network fields to actually set this stuff up, and then there are some questions about how the resource claims go in there. And at the far end we actually have to set the networks up on the node. But we've got the information onto the node and done the scheduling stuff, again with what's already in DRA.

B

B

One of the more interesting points is that DRA is problematic if your cluster needs to be functional before DRA is up and running. Because DRA requires you to install controllers, there's a kind of chicken-and-egg problem here. The right answer for that is probably to say: well, you can have a simplified network, where the default network would always be a simplified network that just doesn't use any of this. The way that works is, if you've got a simplified network, it doesn't use DRA at all.

B

B

A

The pod network becomes the resource claim, yeah. That's kind of what I would think in the long run. So the special resource handling, let's say QoS, which is not anywhere on the roadmap yet, but bear with me, that is what I would imagine, right? Because the scheduler already... I see a path without any of this. What I'm saying is, in the scheduler, if I see a pod network in a pod spec, I ask this special controller that we write for this purpose,

A

basically. And then what you're saying about cluster creation having the chicken-and-egg issue, that goes away, because we create that controller, the reference controller for the default network, or a very basic one, it shouldn't be for default. It would do the basic thing that we do today, which would be just: okay,

A

this network is available or not. And there is some way in this controller that can tell you this node has it or this node doesn't. So basically that is all there, and we leverage the whole code that DRA created. The DRA code understands: oh, if it's a pod network, I will go and ask this driver, which is hard-coded because it's standard, right? So there is that one, and then the same way in the kubelet: oh, it's a pod network, I'll just use the DRA path to pass

A

that part. Yes, that part will be there, because you always have to have KCM before you do anything. KCM has to work, usually as a static pod, and this is where the controller would run, because this will be a default controller inside core Kubernetes. It will not be some... or maybe it would be, but then you would have to start that guy some other way. So, something to discuss. I'm not saying... you might be right.

A

B

If you have a pod network... I mean, all I'm thinking is that if you have a pod network, you're going to have to have something that says what resources it needs. Now, you can have a default: if you just have a pod network and no resource claim attached to the pod, then you have a controller that puts that resource claim in and says, "You're on this network; I'm going to build a resource claim based on this."
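
A minimal sketch of the defaulting idea described above, in Python for brevity. Every name here (`Pod`, `ResourceClaim`, `ensure_network_claims`, the `<pod>-<network>` naming) is hypothetical and is not part of Kubernetes or DRA; a real version would be a controller written against the Kubernetes API.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ResourceClaim:
    name: str
    network: str  # hypothetical: the pod network this claim stands for


@dataclass
class Pod:
    name: str
    networks: List[str]
    claims: List[ResourceClaim] = field(default_factory=list)


def ensure_network_claims(pod: Pod) -> Pod:
    """Add a default claim for every attached network that has none."""
    claimed = {claim.network for claim in pod.claims}
    for network in pod.networks:
        if network not in claimed:
            # The user supplied no claim for this network, so build one.
            pod.claims.append(ResourceClaim(f"{pod.name}-{network}", network))
            claimed.add(network)
    return pod


pod = Pod("web", ["default", "fast"], [ResourceClaim("mine", "fast")])
ensure_network_claims(pod)
```

In this sketch a user-supplied claim (`mine`) is left alone and only the missing one (`web-default`) is generated, which mirrors the "put your own resource claim in there" option mentioned earlier.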

A

A

At one point we can extend it. What you're saying, maybe that should be the case, right: the pod network creates a resource claim, so that we don't have to do some special code under the hood. We just already have the resource claim for it, and we create one per, maybe, interface, per attachment to a pod, something along those lines. Maybe, right? I would not want to... I don't like this arrow here, this guy.

A

C

C

C

That addresses one of your concerns. The other comment that I would like to make is that if you do only one resource claim per pod network, you basically allocate resources for the entire network, and then you may get some limitations like "this network is available on these nodes." But then your ability to influence pod scheduling is fairly limited.

C

You can basically say any number of pods can run on this node using this network, but simulating things like a certain number of pods per node becomes tricky, and allocating additional hardware, that kind of thing, becomes harder. That's why a per-pod resource claim does make sense for, I guess, at least some drivers.
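
To illustrate why a per-pod claim helps here, the following sketch filters nodes by how many attachment slots remain for one network. It is an assumption-laden toy: `feasible_nodes`, the `capacity` map, and the `allocated` map are invented for this example and do not correspond to any scheduler or DRA API.

```python
from typing import Dict, List


def feasible_nodes(capacity: Dict[str, int],
                   allocated: Dict[str, List[str]]) -> List[str]:
    """Return nodes that still have a free attachment slot for one network.

    capacity:  node name -> maximum number of pods on this network
    allocated: node name -> pods that already hold a per-pod claim there
    """
    return sorted(
        node for node, slots in capacity.items()
        if len(allocated.get(node, [])) < slots
    )


capacity = {"node-a": 2, "node-b": 1, "node-c": 1}
allocated = {"node-a": ["pod-1"], "node-b": ["pod-2"]}
candidates = feasible_nodes(capacity, allocated)
```

With a single claim per pod network, only the keys of `capacity` would be known ("the network exists on these nodes"); counting per-pod claims is what lets `node-b` be excluded once its one slot is taken.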

A

A

C

A

That's the idea, yeah, I think that's the idea. Okay, I hear you. Let me read through that, because that might be it as well. But then what will be the option for a user? You're right, because then you can only have one for that network, so then you're kind of screwed if you want to have, I don't know, something based on bandwidth, QoS, and some other pieces, right? Do you have to have a separate resource claim for each parameter?

C

So the resource claim uses the same concept that you have in your proposal. There is a reference to parameters, and those parameters are specific to the driver that handles the resource claim. So if there is one pod network type implemented by someone, they define which parameters they accept, and those parameters need to get passed through to the resource claim, and then through the resource claim to the driver. Those parameters can be an arbitrary CRD, with whatever fields make sense for that pod network.

A

Okay, but is it... basically, I think it can be true, because it's all up to your controller. What I'm getting at is, let me maybe do a better analogy: today I can request CPU or memory, right? Can a single resource claim gauge it all based on those two parameters at once? Yeah, and because it's custom, your own controller, basically, yeah, you're right, because then it can just see who has what resources and then list and return

A

those nodes that fit both conditions, right? So what I'm getting at is that it will boil down to what sort of controller for DRA we will create, what the default one will be that comes out of the box, because I think with this we will have to have one. And then there has to be the ability for you to point your pod network to a different controller for DRA.

A

Let's call it... I'm calling it a DRA controller, whether that's true or not. But let's say a pod network can point to a different DRA controller that will say: okay, now use this guy for this pod network, because this guy is my own and it includes stuff like QoS, bandwidth, and additional stuff that will identify which network is available.

C

C

Even if it doesn't do much beyond what the default network does, it would just be for testing purposes, and then that is the solution that we pass on to vendors and say: here's how you can do it, here's an example, now implement your own custom hardware logic, or whatever other parameters you need, using these new APIs.

A

So, for that reason, yeah, that's something that we need to think about: whether we automatically create these resource claims, or a pod network can reference one, and that will indicate which path I want to use, the more complicated one or not. All right, I think I'm going to cut it here; I don't want to get into this further. Let's read through this doc, everyone, and let's start commenting on it and adjust what we are discussing.

A

B

A

So, basically, let's move to that. Okay, to resume what we discussed last time, the first basic thing is the pod spec changes. We are thinking of adding the pod network attachment, and that's a list of structures, each of which has the reference to a pod network name, an optional name of the interface that you want to add to your attachment, and whether the specific network is primary or not.
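
The list of structures described above could look roughly like the following. This is only a sketch of the three fields mentioned in the discussion; the class name, field names, and the helper `primary_attachments` are assumptions for illustration, not the actual proposed API.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class PodNetworkAttachment:
    pod_network_name: str                  # reference to a pod network by name
    interface_name: Optional[str] = None   # optional interface name in the pod
    is_primary: bool = False               # whether this network is primary


def primary_attachments(attachments: List[PodNetworkAttachment]) -> List[str]:
    """Return the names of the networks marked as primary."""
    return [a.pod_network_name for a in attachments if a.is_primary]


# Hypothetical pod spec fragment: two attachments, one of them primary.
attachments = [
    PodNetworkAttachment("default", is_primary=True),
    PodNetworkAttachment("dataplane", interface_name="net1"),
]
```

Keeping `is_primary` on the attachment rather than on the network itself matches the point raised later in the meeting that the same network may be primary for one pod and secondary for another.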

A

D

D

So maybe the user wants to add... Let's imagine, as you mentioned, having a way to add a static IP or MAC address, this kind of stuff. So maybe this should not be in the pod network attachment object itself, but I suppose it should be put in as additional information inside the pod network attachment. Right there we have the string-to-string map, all right? So by using the string map we could add some parameters in there. I'm just proposing that here, to add the additional stuff.

A

A

A string-to-string map is... I'm not sure anyone would allow us that. This is usually reserved just for labels; I'm not sure whether someone would allow us to just have arbitrary parameters like that. Yeah, it is convenient because it handles everyone's case, but on the other hand, I'm not sure that will be okay, and I'm not sure whether we will be able to push that through in the API.

A

C

D

By the way, I also have a question about the primary. This design is expecting that one pod network should take care of IPv6 as well as IPv4. But sometimes some users want IPv4 to use eth0, I mean, for IPv4 the default network is used, but for the IPv6 case they want to use the data plane.

D

A

E

D

A

A

F

A

A

F

D

Yeah, I understand what Mike is saying. But I also understand that you are thinking of a different type of IPv6, right? So maybe in the KEP you should explain this: in this KEP, IPv6 is a little bit different from the usual IPv6, such as that we do not care about router advertisements and similar details, so that should be specified.

A

It's not that normal; it's up to the implementation. And Michael, let's sort that out... this should be a separate KEP. If that's an issue in Kubernetes, I don't want multi-networking fixing that, because this is a completely separate KEP. What you're saying is an issue today in the default network, so let's resolve it there, not here, please, because I think that's a separate KEP that has to be there to say the default gateway comes from the router advertisement, but Kubernetes does it differently.

A

Let's resolve it as a separate KEP in the default network, not here, please. I see what you're saying, I know what you mean, but I don't think multi-network should, or can, resolve that, because that's something that needs to be resolved in the core component; then we can inherit that. So maybe what we can do is...

A

I agree with you, but what can I tell you? I don't think we can resolve that because, as I said, it's a completely different story, resolving how V6 should be done. It's not a multi-networking issue; it's a V6 issue and how it's supported. I agree with you. Then let's say we don't support V6; is that what we should say? I don't think so; that won't fly either.

A

F

A

A

A

That doesn't work, because I can have multiple, so it will not cover the case where I want to have multiple networks, one per namespace. Each separate pod network will then be the default, the primary, for each of those namespaces. So I will have to configure every pod network as primary, because I want all of them to be primary in their respective namespace. So with what you're saying, I cannot have one network saying "you're primary" and the other one not, because then I can attach those two to my pod, and then what?

B

B

A

No, but I think this is the most generic across the board of what we are trying to support. And I'm going to throw at you SR-IOV for DPDK: you don't have the name, you don't even have the primary at that point. The primary might be there because your implementation might use the interface, but then it's up to your application, the DPDK application, how you're going to make sure that this interface is the default.

A

And we want to support such a case, right, where this network is, let's say, a DPDK-type network, in which you just pass around pointers to a PCI device. So this is an extreme case: an interface name already has no meaning here. And this is just an example. That's why I'm saying, if you want to say, "Okay, I want to specify a MAC address here," someone's going to say, "I'm not using Ethernet, I'm using something else, so let's now add InfiniBand."

E

I think it is a fairly common use case, and it's just the reality of how people's networks are. There are exceptions, and just because something like DPDK says, oh, all this stuff is kind of optional, right? Like, you nailed it: the interface name is meaningless, the MAC address is potentially meaningless, or whatever, because it's all handled on a different IP stack, right?

E

Then you could potentially have something to say, like, "Hey, I need a static MAC address for this thing, because it exists on a network that has port security or whatever, and I need to have one that matches what's already in that external equipment," or something. So I do think that we need something to express that you may need some particular detail here. And yes, I realize CNI is an implementation detail, but this is something that, in the CNI world, we handle with CNI args, right?

A

D

A

D

So, I mean, okay, it's not only CNI; it's implementation-specific. As you mentioned, almost every implementation would introduce additional fields on a per-pod basis. So I think, not a specific data structure, but we should provide some string map or something like that, and then the implementation can consume this field however they want. Maybe some implementation may put JSON in the string; that's okay. Some implementation may consume just a string; that's okay.

D

A

D

D

A

G

A

Okay, before we go there, because I think we are moving to another topic: I think we didn't finish the primary discussion, and I want to finalize that first. I want to talk about the network interface that I am proposing, or not proposing, that I was initially thinking of having, and maybe this answers all our questions and concerns. But before we go there, can we finalize on the primary?

D

A

Yeah, let's conclude on the primary. I understand that today we have only one network, so there is no way to split V4 and V6. But that's a fair point, right? We can have that. And putting aside, Michael, the case that it's completely incorrect and the default gateway is coming from router advertisements...

A

F

A

A

That's okay, that's fine, yeah. So basically your name defines it: okay, I'm using this name, this network is the primary, so it's going to be the default gateway. That's what you're signing up for, and that's what I want to avoid. I want the network to be more flexible, so it says: okay, on this pod you're going to be the default, but on the other pod you're not, because you're just a secondary.

F

A

That's correct, but then you can define the 0.0.0.0 route, and then the next hop is up to your implementation. Imagine you have an implementation where the next hop... I'm going to bring up Google and our GCP: our whole infrastructure under the hood doesn't rely on any of what you're saying, because we're using endpoint to endpoint. So basically, my next hop is: okay, just make sure that the packet goes out of the node. The rest...

A

A

A

F

A

Sure, but then you're tied: the single one is tied to be the only one, or I need to create two versions of the same network, because depending on what I'm doing, I want one network to be the primary in one case and not in the other. That won't support my cases, or I have to create double versions of every network: one that has the default set, and another one where it is not.

A

A

E

F

A

A

...with you. Then I agree with you, you mess things up. But if I'm not mistaken, Calico doesn't do that, Cilium doesn't do that. Those are the two bigger ones I know that do not do that: they don't rely on external things to configure your pod's namespace, because, as you're saying, there is no control over it then. But all those...

A

And that's a choice, right? I understand that, and there can be various ways to implement that, Michael. But I'm talking about what 90% of Kubernetes networking is today; that's what they do. I understand your case and I understand your networking approach, but this is probably five or ten percent of Kubernetes usage, and it doesn't cover the 90% that Kubernetes uses today.

D

A

D

A

That's okay. Yeah, I said that, and now I have to swallow that; you're kind of right. But what I'm getting at is, I am trying to make this fit into today's standard of Kubernetes. I don't want to completely break the standard that Kubernetes has today, because that's what you're asking for, and I don't want to go there. Let's do that, then, in Kubernetes, in the standard network, because I...

F

A

That's fine, but that combination doesn't assume whatever you do in the pod... what do I want to say? It's up to that CNI how you manage the inside of your pod. And now you want to push onto the spec, onto the API, more of those concepts, so that you have to address all the dynamics as well, and do all that over there in the CNIs, and basically boil it down to the CNI conf, how it's being done.

A

D

F

F

F

B

A

D

G

A

G

A

A

A

A

F

A

So that's what I was referring to, I think that was last meeting. I think Multus is working towards the same thing, where the CNI is just a stub, just a proxy, because you have to have one, given how the API is constructed. You create a binary in that path that you mentioned, and it's a stub.

A

It will call into a socket (or there are some other ways), a socket local to the node, and that binary knows it; it's hard-coded in that binary. Basically it calls that socket, and that socket is part of an agent that runs as a pod on that node, as a DaemonSet across all the nodes, so that's why it's available everywhere. That DaemonSet has full knowledge of the Kubernetes API, and basically this is like another controller and a fully fledged pod.

A

A

A

E

What's the disconnect here? In today's Multus and CNI world, and there are a number of other CNI plugins that work this way, we have an architecture that we call a thick plugin. Your typical CNI plugin would be a thin plugin, which runs as a one-shot: it's a binary on the host, it runs in one shot, and away you go. Now, a thick plugin replaces that one-shot with a shim that passes the information to a resident daemon.
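
The shim-plus-daemon split can be sketched as follows. This is a toy model, not how Multus or any real plugin is implemented: `socket.socketpair()` stands in for the node-local socket, and the JSON message format and the function names `shim_send` and `daemon_handle` are invented for this example. Only the `ADD` command name and the idea of passing a container ID and network namespace path come from CNI itself.

```python
import json
import socket


def shim_send(sock: socket.socket, command: str,
              container_id: str, netns: str) -> None:
    """Thick-plugin shim: forward the invocation, do no network setup itself."""
    request = {"command": command, "container_id": container_id, "netns": netns}
    sock.sendall(json.dumps(request).encode() + b"\n")


def daemon_handle(sock: socket.socket) -> dict:
    """Resident daemon: read one request and report what it would act on."""
    with sock.makefile("rb") as stream:
        request = json.loads(stream.readline())
    # A real daemon would query the API server and configure the netns here.
    return {"handled": request["command"], "netns": request["netns"]}


# socketpair() plays the role of the node-local socket in this sketch.
shim_side, daemon_side = socket.socketpair()
shim_send(shim_side, "ADD", "abc123", "/var/run/netns/example")
reply = daemon_handle(daemon_side)
shim_side.close()
daemon_side.close()
```

A thin plugin would instead do all of its work inside the one-shot process, using only what arrives in its configuration.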

E

E

That's not, to me at least, exclusive of what Mike is saying, because the way that operates today is with the same config that Mike is talking about, right? So at least the way that I understand it, Mike is saying: here's what we have today; what's our equivalent of that for the future? Mike, did I totally butcher that? Help me.

A

Yeah, and Michael, in that case, this thick CNI that Doug is mentioning has full knowledge of Kubernetes. So imagine that agent: it just needs the name of the pod, and it can get all the data it needs from the API server, outside of whatever the kubelet provided to it. The only thing it needs is the ID of the network namespace, because that's a hash that the kubelet basically passes to CNI, and this is not something available anywhere publicly that you can grab from the API.

A

So that's the information you definitely need from the CNI, right? I need to get the namespace, or the hash of the namespace, and then the agent looks at that namespace. It has privileged access to enter that namespace and do whatever it wants with it. And on top of that, it can do it based on the Kubernetes API: I grab my pod; oh, the pod has those pod networks, I will grab the pod networks. What's in the pod networks? Oh, it has parameters,

A

I will grab the parameters. I don't have to pass all that information through the CNI config; I just pull it from the API myself. I just need the references, and then based on that my implementation knows what to do, and it can do whatever it wants: set the default gateway, set all the routing, put the names on the interfaces. The sky's the limit at that point, basically. So that's what I'm trying to support as well, right?

A

Your case is valid as well, and I agree with you: the case where I'm limited to just the thin CNI, where the whole configuration and the whole logic is contained in the binary, it has no access to the Kubernetes API, and everything it needs is inside the config. That's a valid use case, I agree with you. The primary in this case is completely unusable, because it's over there. But then there are other cases, with the thick CNI, where I don't care what's in the config; it's just a stub, it's just a shim layer.

A

D

A

D

D

A

A

No, I agree with you. So maybe at that point, for those use cases, we use what Michael said: it's just the name of the config (they are named), and basically, as Michael said, the pod network has to match the CNI config. If you don't specify anything, that's what it can default to. Maybe we should look into that, right?

A

How to support what you're saying, I agree with you. How we do that: we point to the CNI, and maybe that should be the default. And maybe this is the vendor we would have to support from the get-go, the default vendor, the provider. Basically, it will be the CNI vendor, right? The default CNI vendor, which...

A

D

We should think about more than that: not only CNI, but something else could also be used. So the KEP should provide not only a way to access the data, but also a way to pass additional implementation-specific parameters for each area, I think.

A

A

So I think this is a fair point from you, Tomo, on how we would support that. Maybe that's something that we should look into, and have a means to support the default, like the current config, because today it is just the first on the list. Maybe this can change that, and this is what Michael was saying: this name identifies the CNI conf list, and then the only thing that will distinguish it will be that

A

we define, hard-code, a specific provider that will just say: okay, just use simple CNIs, and those are the names for them. Maybe that can be it, and then there are no parameters, nothing. You can expand them if you want to. But something to think about. All right, let's cut it here. I will add this as a continuation point for next week, and if you have a chance, please read what Pete wrote about DRA, so we can continue next week as well.