From YouTube: Kubernetes SIG Network 2018-01-25

Description

Kubernetes SIG Network meeting from Jan 25 2018

A

B

A

C

The kubeadm ones, unfortunately, are all based on a project called kubernetes-anywhere, which started out as just someone wanting this type of thing, but it became kind of the de facto for cross-cloud testing. I've been trying to add some fixes, but there aren't a lot of owners in there beyond the one owner I know who works on this. So I can't test things out very easily and get the high degree of certainty needed.

C

I mean, it was always like an instance of kubernetes-anywhere, like a SHA, was brought over into test-infra. As for tests for the project itself, the PR that added that got closed, so now I'm kind of stuck in the same process. It got closed because kube-deploy or the Cluster API was supposed to be the way forward.

C

A

A

B

D

So, I have more on that. On our end, we've been working on migrating all ingress jobs to pull from a dedicated project pool with a lot of quota, and that migration has spawned a couple of unforeseen issues. We're aware of them and we know how to fix them. It's just a matter of fixing them, so those should be completely fixed, hopefully by the end of today.

D

A

C

A

B

I mean, the concern I have is that there might be a lot of noise in some of these results, because if it's flaky, we don't know whether it's flaky because of our problem or because of somebody else's. And even if it is somebody else's problem, it'll still be showing as flaky for probably at least a day.

B

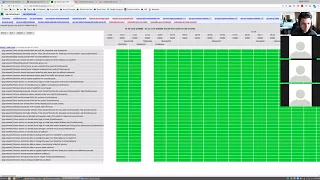

So it's a little hard, from these results here, to tease out what we really do need to pay attention to consistently. I mean, obviously you can go through the list once and be like, "Okay, this test is not our problem, this one's not our problem," but then how do we know, the next time we go through a couple of days later, that... Yeah, I don't know. It just seems like there's kind of some...

F

B

A

B

B

Maybe this is just me not quite understanding the presentation of the tool here. So if we go back to the... yeah, you're in the right spot, go back there, and you'll notice the "DNS should provide DNS for pods" test. That's the one from the most recent build, and it failed, looks like this morning. How do we actually get to the test results for that specific test?

B

B

B

A

C

So I think that's the change that I made. I noticed the same thing that Dan did: it'll be a SIG Storage test that failed, and for a given job, if that happens, the whole thing is marked as flaky. So I had used some of the regex that I saw to filter to just SIG Network stuff, but that apparently doesn't have an effect on the whole suite if you're just filtering, because it only changes what's displayed, I guess.
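The difference between filtering what is displayed and changing how a whole job is rolled up can be sketched in a few lines. This is a minimal illustration only, not how the dashboard tool is implemented: the `TestResult` type, the `roll_up` rule, the run counts, and the storage test name are all assumptions made for the example. Only the `[sig-...]` tag style in test names follows the Kubernetes e2e naming convention.

```python
import re
from dataclasses import dataclass


@dataclass
class TestResult:
    name: str
    passed_runs: int
    failed_runs: int


def roll_up(results):
    """Hypothetical roll-up rule: one test with mixed results marks everything FLAKY."""
    if any(r.failed_runs and not r.passed_runs for r in results):
        return "FAILING"
    if any(r.failed_runs and r.passed_runs for r in results):
        return "FLAKY"
    return "PASSING"


def filter_by_pattern(results, pattern):
    """Keep only the tests whose name matches the regex; the input list is not modified."""
    rx = re.compile(pattern)
    return [r for r in results if rx.search(r.name)]


# Hypothetical sample data: one clean network test, one flaky storage test.
results = [
    TestResult("[sig-network] DNS should provide DNS for pods", 10, 0),
    TestResult("[sig-storage] Volumes should be mountable", 7, 3),
]

network_only = filter_by_pattern(results, r"\[sig-network\]")
whole_job = roll_up(results)            # status computed over every test in the job
network_view = roll_up(network_only)    # status computed over the filtered subset only
```

Under these assumptions the job as a whole still rolls up as flaky because of the storage test, while the same rule applied to the filtered subset reports the network tests as passing. A display-only filter gives the first behavior; a per-SIG roll-up would need the second.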

F

A

B

B

A

B

A

B

B

Also, do we have any idea which tests are more important to concentrate on? I mean, is there a set of tests that we should be looking at every single day, or every other day, to make sure that we actually work to get them fixed, and then some others that we don't care quite as much about? Because clearly we have limited manpower, or person power, as it is.

A

B

That's some feedback that we could send to the tool people: whether there's a way to sort the tests by importance, by what the SIG thinks is important. So they show up on top, as opposed to having to scroll through the list to find them. Then at least you can take a look at the top five tests or something and be like, "Hey, our most important tests are failing," and that's a lot easier to see quickly.
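As a rough sketch of that feedback, sorting by a SIG-maintained ranking could look like the following. Everything here is hypothetical: the `importance` mapping, its ranks, and the test names other than the DNS one are invented for illustration, and nothing in the discussion says the tool supports this.

```python
def sort_by_importance(test_names, importance):
    """Order tests by a SIG-provided rank (lower rank first); unranked tests go last, alphabetically."""
    unranked = len(importance)
    return sorted(test_names, key=lambda name: (importance.get(name, unranked), name))


# Hypothetical ranking a SIG might maintain.
importance = {
    "DNS should provide DNS for pods": 0,
    "Services should serve a basic endpoint": 1,
}

dashboard_order = sort_by_importance(
    [
        "Some unranked test",
        "Services should serve a basic endpoint",
        "DNS should provide DNS for pods",
    ],
    importance,
)
top_five = dashboard_order[:5]
```

With a ranking like this, checking the first few entries of `dashboard_order` is enough to see whether the tests the SIG cares most about are failing.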

B

A

G

A

B

Does that mean that's me? All right. So there's an issue listed there, and one of the things that we had been looking into was running the pod data plane on a separate network interface. Now, this doesn't really have anything to do with multiple networks in Kubernetes at the moment. It's more about having the masters and nodes be able to contact each other on one network, or one IP network, and having the pod data plane run on a different one, and it turns out that there are some...

B

It's not particularly easy to do that, and there are a lot of dependencies in the code on, for example, the node IP or the host IP. I think it's the node IP, yeah. So when you start a pod, the pod gets a pod IP, but the pod also has a node IP, and some things in Kubernetes use that node IP where they probably need some other kind of address if the pod's data plane is on a different network. I'm probably not describing it well.

B

We were proposing one for the control plane, I believe, because there's enough stuff that uses the node's current address for data-plane-type things, like kube-proxy, for example. So specifying a new address for the control plane, e.g. nodes talking to the master or the master talking to nodes, was kind of a way to not have to modify a ton of Kubernetes.
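The shape of that proposal, as described here, can be sketched as a node that keeps its existing address for data-plane use and gains an optional second address for control-plane traffic. This is an illustration only: the `Node` type, the `control_plane_ip` field, and `address_for` are hypothetical names, not Kubernetes API fields.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Node:
    name: str
    node_ip: str                            # existing address, already used by data-plane components
    control_plane_ip: Optional[str] = None  # hypothetical new address for master<->node traffic


def address_for(node, traffic):
    """Pick the address for a kind of traffic ("control" or "data").

    Falls back to the existing node IP when no separate control-plane
    address is set, so single-interface nodes behave as before.
    """
    if traffic == "control" and node.control_plane_ip is not None:
        return node.control_plane_ip
    return node.node_ip


# Hypothetical two-interface node.
split_node = Node("node-1", node_ip="10.0.0.5", control_plane_ip="192.168.1.5")
single_node = Node("node-2", node_ip="10.0.0.6")
```

The design choice being illustrated is that only control-plane callers need to change; anything that already reads the node address for data-plane purposes keeps working unmodified.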

B

A

F

(Go ahead, Mike.) Yes. At IBM we always, well, usually, use nodes with two interfaces, and we care about what goes over which one. I'm trying to remember what I've done about this, and I don't really remember the specifics. I don't quite understand why it's a Kubernetes issue at all. I mean, the node address is used for platform communications and the pod addresses are used for data, and it's up to the routing rules, which are not something Kubernetes manages anyway.

B

B

Usually that's going to be, I think, whatever the host's IP address is, or whatever you've set the node IP to for the node. But the node IP is also used, I think, when the master wants to talk directly to the node, and there are a couple of cases when that happens, because there are two ways that nodes and masters can communicate. The master... sorry, the node can actually contact the master, and by this I mean the API server, essentially, keep the connection open, and register itself.

B

B

A

F

B

B

...you know, your private network. But neither of these is exactly right, because in the split control-plane and data-plane case, both of these addresses could be private. Neither one of them needs to be publicly accessible, like no external address, and yet you want certain traffic going over one or the other. So...

B

F

F

F

F

B

Yeah, I mean, the issue happens here when... well, anyway. What this has made clear to me is that we need to be more specific in the issue about where this boundary is crossed or violated. So let's start there. I'll go do that and then update that issue, and if anybody else on the call is interested in this, that issue is there and you can continue to discuss.

A

H

So I wrote this down, and this has been a problem since, like, the birth of Kubernetes. Basically, pod readiness is defined as all of its containers being ready, and whether its containers are ready or not is based on the feedback from the runtime and the readiness probe. So pod readiness is essentially determined solely on the kubelet. On the other hand, services use service selectors.

H

That means services have implicit backends, and that further encouraged the workload APIs to basically ignore services in their decision-making. Workloads like Deployment or DaemonSet generally only look at the status of the pods they're managing. So this creates a gap between the service lifecycle and the pod lifecycle.
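A toy model of the definitions given here may help: a pod is ready when all of its containers are ready, a service picks its backends implicitly by label selector, and a workload controller looks only at pod status. The types and function names below are hypothetical simplifications for illustration, not the Kubernetes API.

```python
from dataclasses import dataclass


@dataclass
class Container:
    ready: bool  # as reported to the kubelet by the runtime and the readiness probe


@dataclass
class Pod:
    name: str
    labels: dict
    containers: list

    def is_ready(self):
        # Pod readiness as described: every container is ready.
        return all(c.ready for c in self.containers)


def service_backends(pods, selector):
    """Implicit backends: ready pods whose labels match every selector key."""
    return [
        p.name
        for p in pods
        if p.is_ready() and all(p.labels.get(k) == v for k, v in selector.items())
    ]


def rollout_can_proceed(managed_pods):
    """A workload controller's view: it consults pod status only, never the service."""
    return all(p.is_ready() for p in managed_pods)


# Hypothetical pods for the example.
pods = [
    Pod("web-1", {"app": "web"}, [Container(ready=True)]),
    Pod("web-2", {"app": "web"}, [Container(ready=True), Container(ready=False)]),
]
```

Note that nothing in `rollout_can_proceed` knows whether load balancers or other network components have actually picked up a pod as a backend, which is the gap between the two lifecycles.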

F

H

H

H

So that's why this is sort of a big API change in Kubernetes. If we want to fix this properly, to make the workloads more service- and network-aware, we have to introduce an extra state somewhere. Either we delegate the workloads' decision-making to something external, or we need to allow external feedback into the pod status. There's basically no way around it; there are only two ways forward. And both of these options are a big change to the Kubernetes API.

H

And since this mostly impacts the networking folks, I first wanted to send out this problem statement and the survey, and see whether folks are feeling the pain of this problem, and then how much support and how much determination we can get from the community to push this API change forward.

F

H

H

Either something like the deployment controller has an extension saying, "When you're making this decision, you have to talk to somebody else and get more feedback about the pod," or the pod status itself needs to allow external feedback. Right now, the feedback loop stays within Kubernetes, which basically means only the kubelet updates it.
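The second option, letting something other than the kubelet feed into pod status, could be sketched roughly like this. It is only an illustration of the idea under discussion: the `required_conditions` and `reported_conditions` names and the condition strings are hypothetical, not an existing API.

```python
def pod_ready(containers_ready, required_conditions, reported_conditions):
    """Hypothetical readiness rule with external feedback.

    containers_ready:     what the kubelet already computes today.
    required_conditions:  names of extra conditions the pod declares it needs.
    reported_conditions:  mapping of condition name -> bool, written by
                          external components (e.g. a load balancer controller).
    A required condition that has not been reported yet counts as not met.
    """
    external_ok = all(reported_conditions.get(name, False) for name in required_conditions)
    return containers_ready and external_ok


# Containers are up, but the (hypothetical) load balancer has not reported yet.
before = pod_ready(True, ["example.com/lb-programmed"], {})
# The external component reports that it now routes to the pod.
after = pod_ready(True, ["example.com/lb-programmed"], {"example.com/lb-programmed": True})
# A pod that declares no extra conditions behaves exactly as today.
unchanged = pod_ready(True, [], {})
```

A pod that opts out by declaring no extra conditions keeps the current kubelet-only behavior, which is closer to an open-ended approach than one that forces every component to participate.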

H

F

So one of the things that I find troubling as I start to think through this: it seems to me that what matters is that everything that points at the pods needs to track pods coming up and going down, including all sorts of load balancers. And I remember Kelsey Hightower, a couple of KubeCons ago, emphasizing how services are training wheels: you don't have to use them, and you can use whatever you want to load balance.

F

So that's really an open set of things that need to be tracking pods coming up and going down, and whenever you have an open set, well, there's got to be some responsibility on the members. These things that are tracking pods have got to be updated to let Kubernetes know that they've tracked it. And whenever you're talking about making a change in an open ecosystem, you can't expect it to get done in any particular amount of time.

H

Yes, yes. So that basically brings us down to the proposed solutions. Do we want a very disruptive proposal that basically forces everybody to consider these cases, or do we want more of an open-ended solution where you can say, "Okay, if you care, then you consider it; if you don't care, then you just keep doing whatever you're doing"?

F

There are two sides of caring, right? One is, in some sense, the consumers of the pod's service. They want to know when the pod really is ready and when it really is not. The other is the intermediate providers of load balancing. They are the ones in this unbounded set, where we're never going to find them all, and they all need to get updated. But we can't require that they all do it, because they never will.

F

It's that there are consumers that go indirectly, through load balancers, and load balancers take some time, and policy enforcers, and various intermediaries. All right, so there are consumers that go through intermediaries, and so the pod isn't really ready when it's coming up, or it starts to go out of service as it goes down, until those intermediaries have changed their handling of the traffic.

H

That can be one case, yes. What are the other cases? For instance, pods come and go, and you have network policy in place or not in place, and then you have security gaps. Or the iptables rules are not in place, and then you don't know how to debug it, and whatnot.

H

H

So first I wanted to ask folks: do you see this as a very severe problem, or is it a livable problem, where it's okay whether it gets solved or not? And must it be solved in Kubernetes, or should it be solved in some higher-level construct, some kind of service mesh that's built on top of Kubernetes?

F

F

F

H

I

If I may add something: when there is a problem, it's also sometimes hard to report what kind of problem occurred and to let the user know that a pod is not able to come up for a specific reason that the kubelet may not be aware of, like some failure in the networking backend. It would be very useful to be able to provide status updates for this reason.

H

G

H

H

A

A

H

So we have a bunch of users who run into similar problems, for which we can't get a proper root cause because, let's say, there's no logging, no snapshotting of the iptables state at the time the problem showed up, or it's an intermittent problem, or whatnot. That's what we're facing with this kind of mismatch between the pod lifecycle and the network or service lifecycle.

H

F

I'll also add that I've seen colleagues at IBM basically saying, "I get these occasional 500 errors, or request drops." Those are problems that could be explained by this. I don't know whether they actually were, but clearly this kind of thing is going to give interruptions in service, and I have colleagues who were reporting interruptions in service.