From YouTube: Kubernetes SIG Node 20220830

Description

SIG Node weekly meeting. Agenda and notes: https://docs.google.com/document/d/1Ne57gvidMEWXR70OxxnRkYquAoMpt56o75oZtg-OeBg/edit#heading=h.adoto8roitwq

GMT20220830-170256_Recording_3838x2118

B

B

B

If not, I'll just start talking; there's not too much on the slides anyway. As you all know, we've presented this in the past. We start with dynamic resource allocation, which is a mechanism in Kubernetes, and an enhancement proposal, that rethinks how devices are handled by Kubernetes, because there are quite a few limitations.

A

B

A

B

We came up with an approach that is basically a bit like volume handling, but it gets a bit more complicated because the API is so customizable that we actually have to integrate a resource driver with the scheduler. The scheduler just doesn't know anything about these claims and what the parameters mean, so we have to have some kind of back and forth between the scheduler and a custom, vendor-provided driver for custom resources. But we have a solution. We wrote down a KEP, and this is the overall diagram.

B

B

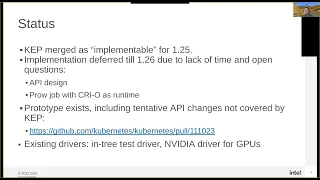

The KEP got merged as implementable for 1.25. That was already a big milestone for us, because it really showed that the Kubernetes project is behind this, and that it's not just some wild idea that some random guys have, but actually something that the project wants to do. I think that's very valuable. That gave us a lot more visibility and also support going forward.

B

On the other hand, this KEP merge happened really close to the KEP deadline, and then there wasn't that much time left, given that some people, including myself, went on vacation. So we decided to give the actual implementation a little bit more time and push it back to 1.26. That's why you didn't see anything landing in 1.25.

B

We also, and that's where you guys come in again, try to run or enable end-to-end tests for this. We do have end-to-end tests in that prototype and we can run them locally, for example with local-up-cluster. That's how I currently do my development: I just compile Kubernetes, bring up a one-node cluster, and run the tests against that cluster. But we certainly would like to have something that runs in Prow, ideally with multiple nodes, and last week I found that there isn't really any existing job.

B

B

Containerd will have it soon, but it hasn't done a formal release, so we would have to pull the master branch of containerd to get CDI support. CRI-O is currently the one that's most usable for us. I think I actually saw some projects that were using the right version already; I just couldn't find a configuration where I get a full cluster.

B

D

Hey Patrick, about running e2e tests with multiple nodes: I have done that for the resize work using GKE clusters. You use kube-up to deploy a cluster of the size that you desire; I use two workers and one master node. And instead of local, and I can work with you on this one, you use skeleton as the type for running your e2e tests. As for CRI-O, that I don't know.

D

If you do kube-up and install the cluster, then you can do it post-installation for a small cluster, or you could modify the kube-up script. It's some work. When it deploys the kubelet, I believe it uses containerd by default, and I don't know if there is a flag to switch that to CRI-O. It's a feature that certainly could be added, and it would be useful for others as well.

A

B

We need support for the Container Device Interface (CDI), which is a spec and needs some code in the runtime to read JSON files. That's how it works: the runtime is basically told to modify the container based on a JSON file, and that is the so-called CDI support. CRI-O has it in a released version. Containerd has it in the master branch, but we are still waiting for a formal release. So if there is a way to do a Prow job with containerd master, that should also work.
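As a rough illustration of "the runtime modifies the container based on a JSON file", here is a minimal Python sketch. The field names (`cdiVersion`, `kind`, `devices`, `containerEdits`, `env`, `deviceNodes`) follow the published CDI spec as far as I know it; the vendor `example.com/gpu`, the device name, the paths, and the `apply_cdi_device` helper are hypothetical, and a real runtime does considerably more (mounts, hooks, validation).

```python
import json

# A CDI-style spec as a runtime might read it from a JSON file.
# Vendor, device name, and values are made up for illustration.
CDI_SPEC_JSON = """
{
  "cdiVersion": "0.5.0",
  "kind": "example.com/gpu",
  "devices": [
    {
      "name": "gpu0",
      "containerEdits": {
        "env": ["EXAMPLE_VISIBLE_DEVICES=0"],
        "deviceNodes": [{"path": "/dev/example-gpu0"}]
      }
    }
  ]
}
"""


def apply_cdi_device(container, spec, qualified_name):
    """Return a copy of `container` with the edits for one CDI device applied.

    `qualified_name` has the form "<kind>=<device name>".
    This helper is hypothetical, not code from any container runtime.
    """
    kind, _, device_name = qualified_name.partition("=")
    if kind != spec["kind"]:
        raise KeyError(f"spec does not describe kind {kind!r}")
    for device in spec["devices"]:
        if device["name"] == device_name:
            edits = device.get("containerEdits", {})
            return {
                **container,
                # Append injected environment variables to the existing ones.
                "env": container.get("env", []) + edits.get("env", []),
                # Collect the device node paths the spec asks for.
                "devices": container.get("devices", [])
                + [node["path"] for node in edits.get("deviceNodes", [])],
            }
    raise KeyError(f"unknown device {device_name!r}")


spec = json.loads(CDI_SPEC_JSON)
container = {"image": "busybox", "env": ["PATH=/bin"]}
edited = apply_cdi_device(container, spec, "example.com/gpu=gpu0")
print(edited["env"])
print(edited["devices"])
```

The point of the design is that the runtime needs no vendor-specific knowledge: everything device-specific lives in the JSON file that the vendor's driver writes.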

F

B

So, I mentioned the prototype a few times. It's basically a branch in my own Kubernetes repo, but it is also an official pull request against Kubernetes. If you have any comments on the existing code, that would be the place to comment. I myself already left some comments where I have questions for Tim; we just need to get answers to those now. This pull request also has some instructions in the description.

B

We also have a test driver that doesn't do much because it's hardware agnostic. It basically just injects env variables in a custom way, without relying on the normal functionality or the normal API for that, and we are also using that driver for end-to-end testing, for example for tests that do failure injection. A more realistic driver will be the one from Nvidia for their GPUs. Nvidia is our fellow traveler here, also motivated by limitations, by things that they can't do with the device plugin interface for their GPUs right now.

They have a possibility to put Nvidia cards into a virtual mode where you can split up the hardware fairly flexibly, the so-called MIG mode, where you can allocate virtual hardware, basically a subset of the hardware, with a certain amount of RAM and certain compute units. They want to make that available to Kubernetes workloads, because right now they have to do pre-partitioning into certain chunks and then hope that these pre-sized chunks fit the workload. This MIG mode will be more flexible. Kevin has already demoed what he has right now and it's fully working. He showed us a demo where he was actively on the console, creating claims and running pods.

B

In that case, I'll just hand it over to you; we weren't sure whether you had joined. With that said, that's all I had. Oh, there's one more slide. We do have a DRA channel on the Kubernetes Slack, where we are now doing all of our discussions. We haven't decided about doing regular meetings yet. We were doing some among the people who were interested, running up to the KEP submission, and we keep doing that. But I think we should start doing regular, open meetings.

B

C

B

People have asked for one, but I don't know how popular that meeting will be. Right now we are fine with just having a meeting between Nvidia and Intel, but it certainly would make sense to open it up. I don't have a problem with that, except for the logistics of officially hosting that meeting.

C

B

C

Yeah, I don't know, I would find it interesting. It seems like there's sometimes this tension of wanting to be involved in the SIG and then being kind of hidden away from the SIG, and the best thing, I would say, is to just make your involvement as open as possible. And I guess I didn't know that channel existed, so thanks for that.

C

E

Also, unless there is too much traffic and people cannot discuss other SIG Node topics, I would rather we converge together, because people might want to know the background anyway. Also, you don't need to repeat the same thing, right? That's better than discussing things all over the place.

B

B

C

C

G

H

G

Okay, great. Yeah, so I don't want to take up too much time, but I just wanted to show the Nvidia driver that we've written against this new dynamic resource allocation framework from an end user's perspective. I'm not going to go into the details of how we built the driver; I just wanted to show how, as someone that might deploy pods against the Nvidia driver for DRA, you would actually ask for and consume GPUs. So, just really quickly.

G

If you're familiar with Nvidia GPUs, the next thing I'm going to show you will be obvious, but for those of you that aren't: I'm currently on a machine that has eight GPUs on it. Four of them currently have this mode called MIG mode disabled, meaning that these GPUs can be used in full mode; the full GPU can be consumed to run workloads. The last four are in what's called MIG mode, which means that these GPUs are partitionable.

G

They can be partitioned into smaller sizes, and those smaller sub-GPUs can then run workloads on top of them. The output that you're seeing down here is just showing that currently even those last four that are in MIG mode don't have any of these partitioned GPUs configured. If and when I actually create a partition, you'll see some sub-GPUs embedded underneath these; right now, there's nothing there.

G

Obviously, this whole framework is much more powerful, but in the simple case it needs to at least be able to do what the existing device plugin does, right? So in this example I'm basically creating an instance of a GPU claim, I'm calling it one GPU, and saying that if anyone ever grabs a reference to this claim, they'll be granted one GPU. Then I have two separate pods that I've created that each have their own separate resource claim that references that GPU claim and then tells the container running inside of it to grab hold of that resource claim and make sure that all the devices associated with it get injected into it. So when these two pods launch, we expect them each to get access to a separate GPU, because there are two separate resource claims created from that one GPU claim spec.

G

G

That only creates one instance of this claim, and then within my two containers of that pod I'm grabbing access to the same GPU, so it ends up having the effect that you have shared access to a GPU rather than exclusive access for each of them. The third one that I'm showing here is just taking this one level further: instead of having shared access across two containers within a pod, you can actually share access to a GPU across pods.

G

G

G

This one also works, so long as my driver's not crashing, and this is one that actually gives me the ability to partition these GPUs up such that I have a subset of the GPU that I can get access to. Once again I have this global GPU claim that I create, and then I also have these MIG device claims that I can create that reference the GPU claim that I want access to. And so in a setup like this I can have.

G

G

G

E

E

I

E

G

B

B

So this logic would have to be configurable in the device driver. When it allocates, it knows which namespace that is for, and that namespace might have a configuration object that says "this many GPUs", for example. But then that would be specific to that particular driver, which knows what a GPU is.

C

I did a quick pass through and I didn't see anything proposing changes to quota or adding new quota'd resources. So maybe it's in a separate doc. But yeah, I guess, Patrick and Kevin, maybe that's the next step: the demo is great, and we can see a quota concept with it too. But this is really cool to see.

G

Yeah, so everything came back up. I'm not sure what went wrong; it was just a transient thing. So, just to wrap this up: as we expected, for the first deployment that I showed, the two different pods have access to separate GPUs; for the second test that I showed, the two containers have shared access to the same GPU; and likewise in the third test, the two separate pods have shared access to the same GPU.
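The three outcomes in this wrap-up can be captured in a toy model, assuming the simplest possible rule: each distinct claim is backed by one GPU, and everything that references the same claim sees the same GPU. The `ToyAllocator` class and all claim and GPU names below are hypothetical; this is not the DRA API or the Nvidia driver, only a sketch of the sharing semantics shown in the demo.

```python
from dataclasses import dataclass, field


@dataclass
class ToyAllocator:
    """Hands out one free GPU per distinct claim; repeat lookups reuse it."""

    free_gpus: list
    allocations: dict = field(default_factory=dict)

    def gpu_for(self, claim_name):
        # Allocate on first use of a claim, then keep returning the same GPU.
        if claim_name not in self.allocations:
            if not self.free_gpus:
                raise RuntimeError("no free GPU left")
            self.allocations[claim_name] = self.free_gpus.pop(0)
        return self.allocations[claim_name]


alloc = ToyAllocator(free_gpus=["gpu-0", "gpu-1", "gpu-2", "gpu-3"])

# Test 1: two pods, each with its own claim, end up on separate GPUs.
test1 = [alloc.gpu_for("pod-a-claim"), alloc.gpu_for("pod-b-claim")]

# Test 2: two containers in one pod reference the same claim and share a GPU.
test2 = [alloc.gpu_for("pod-c-claim"), alloc.gpu_for("pod-c-claim")]

# Test 3: two pods reference one pre-created claim and share a GPU.
test3 = [alloc.gpu_for("shared-claim"), alloc.gpu_for("shared-claim")]

print(test1, test2, test3)
```

In this model, whether access is exclusive or shared falls out of how many consumers point at one claim, rather than being a separate setting.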

I

G

G

G

G

I

G

G

You can see that, as we expected, all of the container zeros have access to the partition that has three compute units inside of it, container one has access to the partitions that have two compute units inside of them, and so on, configured the way that I specified in the spec there. Cool.

J

J

A

L

K

The answer is probably "write a KEP retrospectively", but I just wanted to bring it up in case there was any context that's not written down that anyone remembers, or whether I should just take it away and attempt to backwrite a KEP for it. I mean, if anyone had any suggestions either way.

K

A

If I remember correctly, this was, as you said, for nesting things, and at some point there were even discussions of dropping this entirely. So I think your best bet, if you want to revive it, is to come up with a KEP and then present here why it makes sense and how it fits into the current user namespace efforts, like what the use cases are that it addresses. And yeah, I'm going to write a KEP for it.

K

Yeah, I mean, I don't really want to take up any more time if there's not any detail; it was just in case there was anything that people remembered. You might be interested that I recently implemented support for it in CRI-O, so it is now supported in CRI-O and containerd. That might be of interest to someone, but other than that I don't really have anything else to report right now.

E

But David, thanks for picking up this one. So there are a couple of things. If we have valid use cases, and also if this works with the current user namespace efforts and can reduce those security concerns, then please propose those things, and then we can move forward for the better. If there are no other use cases, you are also welcome to remove this.

E

M

M

Yeah, and this is only for non-root users, so an argument could be made that those users shouldn't really have been able to get those capabilities in the first place. But basically, when you run a container with a non-root user and a capability added, the old behavior was that the inheritable capabilities would have been passed, and that would have basically merged with the permitted and bounding sets that the container is still given, and that effectively makes it.

M

M

You can use the Dockerfile to change the binary. It's like changing the capabilities on the file itself. What I did was use the Dockerfile, because the default Alpine image doesn't have those capabilities by default, so you have to add them, the inheritable capabilities or the permitted and effective capabilities, to the binary, and you can do that through the Dockerfile.

A

I feel like this is something that ambient capabilities were supposed to help solve, and I think we stalled that some time ago. Maybe we can revive that and see if it can work around this issue, because I don't think that changing the capabilities of the file is a good workaround here if you can solve it with ambient instead.
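The interplay of inheritable, file, and ambient capabilities discussed here follows the `execve(2)` transformation rules documented in capabilities(7). Below is a minimal Python sketch of those rules for a non-root user. It is a model of the kernel rules only, not code from CRI-O or containerd; the class and function names are hypothetical, set-user-ID binaries and the root special cases are ignored, and the mapping of the four scenarios to "old behavior", "new behavior", "Dockerfile workaround", and "ambient" is my reading of the discussion.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProcCaps:
    permitted: frozenset = frozenset()
    inheritable: frozenset = frozenset()
    effective: frozenset = frozenset()
    bounding: frozenset = frozenset()
    ambient: frozenset = frozenset()


@dataclass(frozen=True)
class FileCaps:
    permitted: frozenset = frozenset()
    inheritable: frozenset = frozenset()
    effective_bit: bool = False


def execve_caps(proc, file):
    """Capability sets after execve(2) for a non-root user (simplified)."""
    # Executing a file that carries capabilities clears the ambient set.
    file_is_privileged = bool(file.permitted or file.inheritable)
    ambient = frozenset() if file_is_privileged else proc.ambient
    permitted = (
        (proc.inheritable & file.inheritable)
        | (file.permitted & proc.bounding)
        | ambient
    )
    effective = permitted if file.effective_bit else ambient
    return ProcCaps(permitted, proc.inheritable, effective, proc.bounding, ambient)


NET = frozenset({"CAP_NET_BIND_SERVICE"})

# Old behavior: the runtime sets inheritable; a binary with +ei file caps gains it.
old = execve_caps(ProcCaps(inheritable=NET, bounding=NET),
                  FileCaps(inheritable=NET, effective_bit=True))

# New behavior: inheritable is empty, so the same binary gains nothing.
new = execve_caps(ProcCaps(bounding=NET),
                  FileCaps(inheritable=NET, effective_bit=True))

# Dockerfile workaround: put permitted + effective caps on the binary itself.
workaround = execve_caps(ProcCaps(bounding=NET),
                         FileCaps(permitted=NET, effective_bit=True))

# Ambient capabilities: no file capabilities are needed at all.
ambient_case = execve_caps(
    ProcCaps(permitted=NET, inheritable=NET, bounding=NET, ambient=NET),
    FileCaps())

print(old.effective, new.effective, workaround.effective, ambient_case.effective)
```

The last scenario is why ambient capabilities are attractive here: the image does not have to be rebuilt, because nothing on the binary needs to change.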

E

M

A

K

M

Yeah, we could definitely investigate that. I'm sympathetic, and that's why I didn't just reject it automatically. It does technically solve a CVE. The CVE, as far as I understand it, basically was created because CRI-O and containerd were making a non-idiomatic Unix environment by giving the inheritable capabilities. Apparently process managers, or processes like daemons and such, drop the inheritable capabilities at the beginning, technically. So I didn't really find anything where this is a direct security issue. It was a low CVE.

M

E

If we can't find a fix on the runtime side, then in the end people have to work around this, and they have to run as root, right? Or they have to recompile and regenerate the binary. The problem is that a lot of users are using binaries that are not under their control; a lot of people are using existing images. I just don't know how many have that. It is a problem, so the risk analysis is based on no data at this moment.

E

So I just don't know how many already have that capability built into their binary, and so how many users will be impacted. And even if we find a solution, if the solution is not... Anyway, that could be like a push for everyone to say, "OK, run as root", and that's even more of a problem, right?

M

I was worried originally that that was the only workaround. I mean, the existence of the Dockerfile change doesn't really change it for people who aren't capable of changing their image, but at least it is a workaround that doesn't involve capability escalation. But yeah, I agree.

H

H

H

M

H

M

The one thing about an annotation is that you need a way for the admin to be able to configure who can use that annotation, which CRI-O has the capability for, but it's not the most idiomatic way to go about it. Or else it basically is effectively like everyone just gets it anyway.

M

Okay, so it sounds like we want to keep this behavior, but we want to find a way to help people migrate to it rather than just make the change and break a bunch of people. I think that's a good compromise, and that's good with me. Unless someone else has anything else to talk about there.

M

C

M

No, actually. This was a testing gap in the CRI tests. Downstream, with my Red Hat hat on, the downstream OpenShift tests did have a test testing this, which we changed, but they just tested the capabilities; they didn't test what the result of giving the capabilities to a pod was. So there's nothing upstream that prevented us from making this change, because we did, and nothing broke until people started opening issues.

C

I was trying to think, if we had a conformance test, what we would want the post-mitigation behavior to be, and I think that's probably the case, but then you wouldn't really want to make people that were previously conformant no longer conformant either. So it's just a tricky issue either way. It seems like, Peter, we have a clear path on this. Cool.

L

Hi, it's the first time I've been here as well. I'll try to keep it quick. Basically, the downward API has not allowed node labels or annotations historically, and there's an issue going back about six years about this and a PR trying to implement it. My general question is: is this something that can be revisited after six years?

L

I think that a lot of users generally run their clusters in a way where information like labels and annotations from the nodes is pretty much readable by anything. For example, we don't prevent our users from being able to get that particular information. So I guess my question is: is this something that could be revisited, and if so, what's the right way to go about that?

N

L

A

It's a cluster-scoped resource, and we don't by default grant access to it to anything through RBAC, so it makes sense not to automatically assume that everything is going to be able to read it, or that pods are going to be able to read it, because pods are a namespace-scoped resource. I believe that is the justification.

C

So that would be my recommendation: start with your minimal need, and then let's see if that is fine. Speaking with my own Red Hat hat on, there are users I'm aware of who would treat labels as sensitive, because the nodes that things are operating on might be in particular security zones that the workload can't be aware of. So I do think going with an allowlist-type approach might be reasonable, and labels have been more normalized since 2017, so we might have good success for your use case.
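An allowlist-type approach, as suggested here, could be as simple as the following sketch: only node labels that an admin has explicitly listed are ever made visible to a workload. The function name, the `example.com/security-zone` label, and the values are hypothetical; no such downward API feature is described in this meeting beyond the idea itself.

```python
def exposable_node_labels(node_labels, allowlist):
    """Keep only the node labels an admin has explicitly allowed pods to see."""
    return {key: value for key, value in node_labels.items() if key in allowlist}


node_labels = {
    "topology.kubernetes.io/zone": "zone-a",
    # A label some operators would treat as sensitive (made up for illustration).
    "example.com/security-zone": "restricted",
}
allowlist = {"topology.kubernetes.io/zone"}

visible = exposable_node_labels(node_labels, allowlist)
print(visible)
```

Starting from an empty allowlist keeps today's behavior, which fits the "start with your minimal need" advice.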

A

A

F

N

A bigger update will come next week or the week after on what the updates have been there. One heads-up: I was advised to write a blog post about this stuff, back in the springtime or early summer, and I finally did it, for Kubernetes developers, and submitted a PR.

N

It was in June, if I remember correctly. But now there are good review comments, including comments from Peter Hunt and others, that it probably doesn't make sense to publish the blog post until the KEP has at least been accepted and there is a clear way it will be supported in Kubernetes, if it will be.

A

D

D

It is in master now. I think what we want to do is get a sense of when the next containerd release will be and when that will get picked up. Once that is in, I think we can move forward with testing and merging the rest of the PR, because we'll have the full loop in CI and can address any issues that are there. At this point, I saw your comments on cgroup v2; you did the review. Thank you very much. I think I'll add that unit test.

D

A

D

Yeah, I'll address them, and then I'll do an update with the unit test; that was one of the known items that was missing. And finally, I think Marion lubber from the GKE team in Warsaw has offered to help with this effort, and I think he can get us good test coverage in alpha. He's got a very good use case. Please welcome him, and I believe that covers it.