
From YouTube: Kubernetes SIG Node 20210420

Description

Meeting Agenda:

https://docs.google.com/document/d/1j3vrG6BgE0hUDs2e-1ZUegKN4W4Adb1B6oJ6j-4kyPU

A

A

B

C

Yeah, I noticed there were tons of cherry-picks sitting in the triage column, and then we had another urgent one come in yesterday, which I think is good to go. I don't think we're going to have another patch release until next month, because the cherry-pick deadline for April was a couple of weeks ago, so we'll see. But yeah, those have gone through. Other than that, I think the PRs are definitely growing right now, but we will get through them.

D

D

Okay, hopefully you can see my slides okay. Thanks. So the work we would like to propose is basically a new policy for CPU manager, and this sparked a lively discussion about how we extend CPU manager in the first place, but I will get to that in a minute. First of all, I would like to cover what this new policy is about.

D

We need a bit more. The biggest thing we want to avoid is the noisy neighbor scenario, in which a physical core or mid-level cache is shared among different containers. Let me just quickly illustrate the case. Let's consider this very simple case with, let's say, eight physical CPUs, each of them with two virtual CPUs, which we allocate with the static policy. We allocate a Guaranteed quality of service container which requires five CPUs.

D

Of course you get five virtual CPUs, but you also get three physical cores, one of them being shared because it's only partially allocated. So other containers can be running there, because the remaining CPU is in the shared pool, so everything can run there. You can have noisy neighbors, so you can have latency spikes, for example, in latency-sensitive applications. That's the worst case, well, not really the worst, but there are more, different cases.
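
As a rough illustration of the example just described (not something shown in the meeting, and not kubelet code), the following Python sketch models the assumed topology of eight physical cores with two virtual CPUs each. The packing strategy and all function names are hypothetical and chosen only to make the arithmetic visible.

```python
# Hypothetical illustration of the scenario described above; not kubelet code.
# Assumed topology: 8 physical cores, each exposing 2 virtual CPUs.
from collections import defaultdict

THREADS_PER_CORE = 2
PHYSICAL_CORES = 8


def build_topology():
    """Map each virtual CPU id to the physical core it belongs to."""
    total = PHYSICAL_CORES * THREADS_PER_CORE
    return {cpu: cpu // THREADS_PER_CORE for cpu in range(total)}


def allocate(topology, count):
    """Naive packing: hand out virtual CPUs in id order, core by core."""
    return sorted(topology)[:count]


def core_usage(topology, cpus):
    """Return (fully allocated cores, partially allocated cores)."""
    per_core = defaultdict(int)
    for cpu in cpus:
        per_core[topology[cpu]] += 1
    full = [c for c, n in per_core.items() if n == THREADS_PER_CORE]
    partial = [c for c, n in per_core.items() if n < THREADS_PER_CORE]
    return full, partial


topology = build_topology()
cpus = allocate(topology, 5)
full, partial = core_usage(topology, cpus)
print(f"virtual CPUs: {cpus}")
print(f"fully allocated cores: {full}, partially allocated cores: {partial}")
```

With a request for five virtual CPUs, two cores are fully allocated and a third is only half used; its sibling thread is the one a noisy neighbor could land on.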

D

So in this sense this is an extension of the static policy: it's an additional guarantee. We believe the most natural extension is a new policy, because we want to preserve the current guarantee of the static policy. It's unlikely that every workload which is already using the static policy could benefit from it. So we propose an extension. It could be a new policy; it could be, for example, an extended behavior of the static policy that pods could opt in to, or even a kubelet option, but it should still be opt-in behavior.

D

How do we do that, and why is it a problem? For all intents and purposes, let me actually go back: the workload requests a number of CPUs. If you have a workload which requires a number of CPUs such that every physical core is fully allocated, no big deal, no issue at all, actually. But if that is not the case, how do we prevent the sharing? We said we need to over-allocate, let's say, allocate full physical CPUs.
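
To make the over-allocation idea concrete, here is a minimal sketch of the rounding involved, assuming a fixed number of virtual CPUs per physical core. The function name and the default of two threads per core are assumptions for illustration only; the transcript does not say how the proposal implements this.

```python
# Hypothetical sketch of "over-allocating" to full physical cores.
# The helper name and the default of 2 threads per core are assumptions.
import math


def cpus_to_reserve(requested_cpus: int, threads_per_core: int = 2) -> int:
    """Round a CPU request up to the next multiple of threads_per_core."""
    if requested_cpus < 1 or threads_per_core < 1:
        raise ValueError("both arguments must be positive")
    return math.ceil(requested_cpus / threads_per_core) * threads_per_core


for request in (4, 5):
    print(request, "->", cpus_to_reserve(request))
```

A request that already fills whole cores is unchanged, while a request for five virtual CPUs would reserve six, so that no core is left half allocated.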

D

For this approach there are alternatives we considered, but which we consider less ideal. One is an extended resource, much like, by the way, what CPU Pooler is doing. But if we had an extended resource to convey the fact that you want physical cores, well, first of all, it clashes with the existing CPU resource. You would need to specify both, and if you don't specify the CPU resource you cannot obtain the Guaranteed quality of service class, which is a problem, because that is a nice property we want to keep. So this does not seem ideal.

D

Another option could be to let the CPU resource itself specify that we actually want physical cores, a physical core being capable of hosting two or more virtual CPUs. We could maybe stretch the definition and consider a physical core as a multiple of virtual cores, but this relationship again depends on the hardware settings and on the specifics of the hardware, and it really doesn't feel like a good direction at this moment.

D

But I started a conversation to get feedback about how the community feels about the noisy neighbor problem, and which other consumers we can see besides the very specific low-latency scenarios, like telco workloads or even high-frequency trading, or something like that. The very first question I'm going to raise, though, is:

D

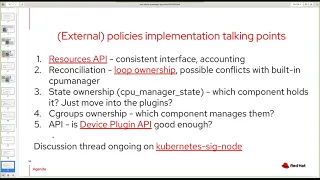

Do we even extend the CPU manager? Because the very first answer we got was like, hey, let's consider adding external policies and making the CPU manager work with external plugins, much like we have for device plugins, but for CPU manager. So this is actually the very first question I believe we should consider in the first place. So what we can do is:

D

Just add a new built-in policy or make changes to the existing policy, or we can have external policies. On that, I was told (I was not involved in Kubernetes back then) that we kind of covered this already. Last week we had an initial discussion about having those external policies, but that approach was not really gaining traction last time.

D

D

If we move out of tree, how do we still use the core resource? Do we use an external resource like CPU Pooler does? Again, which API can we use, and who owns the cgroups, who sets them? But again, the very first question I think we should discuss is: what's the preferred way going forward to extend CPU manager, to enable, for example, the policy we are looking forward to implementing, or policies in general? Or does it depend, for example, on the magnitude of the policy?

D

A

A

A

So that's why, in the end, we decided to start from the built-in, but again, we didn't completely rule out the external daemon side. For example, Intel has the new management, a lot of things which basically follow that idea, and they have their own external resource management that even collaborates with the built-in CPU and memory. So yeah, I'm just sharing some background context here. Does anyone else have comments on this topic?

E

Well, my comment will be more generic. If you're talking about extending CPU manager, we cannot do it alone. If we really fight the noisy neighbor situation, CPU is not enough. You always need to consider how the memory is connected, what kind of memory is used, what kind of buses are connected, and so on and so forth. So if we really do external APIs, then it means something like full resource management, or a topology manager API, which needs to be taken into account.

D

Thanks, Alexander. I have just a follow-up question, because I was following, even though not as involved as I would like, the efforts around, for example, the container device initiative. My question is: I understand the long-term goal is to have all the resource management be, in some way, modular and external, but what's the migration path? Because, in my opinion, having external policies for CPU manager could be a nice middle-ground step in that direction. I was under the impression.

E

E

D

D

I understand that we will have all resources in a modular fashion, but my question was just: okay, I get that a CPU manager external policy could cause issues in this case, but, granted that, what will the transition look like? If going modular and doing small changes, let's say starting with CPU manager, for example, and then maybe extending to memory manager, is not the ideal direction, then how?

F

D

Yes, we do have a prototype. Yes, we are in the process of testing that. But the reason why I'm asking in the first place is that Kevin's first answer was, hey, let's evaluate the external policy again. So I will actually ask the community as much as I can.

F

G

D

D

A

I agree with Alexander. When we talk about an external resource API, this is something we discussed in the past, and we passed on that decision. The reason is use cases: back then we didn't have those rich use cases, like customers and users having gotten to this stage. Obviously we predicted we would get to today's stage, but back then Kubernetes adoption was not that high.

A

So we didn't yet have those real-time use cases, or even the topology-aware use cases and noisy neighbor use cases; customers and users hadn't gotten to that stage yet. At the same time, we also realized one thing, which is exactly what Alexander mentioned earlier: they have the background from Intel, we have the background from Borg, and Red Hat also has the background from OpenShift. So we know about those resources.

A

Several resources have to work together to meet customer requirements, but to settle on that resource API for all three types of resource, and there is even some disk-related stuff, was a hard decision for us at that time. And here, what you propose is still just the CPU. So if we go with the external API, that's the concern, the maintainers' concern. It's not like, okay, we cannot maintain it.

A

It is that once you only focus on the CPU, a lot of vendors may just go and implement their own based on that API, and it's really hard for us if we have to revise the API. If you look at the CRI, the container runtime interface, today, even though it is really well guarded and well scoped as an API, it still poses problems. The same thing goes for today's CSI storage API: every time, there are problems in production if you're using different vendors' implementations of the CSI.

A

D

D

So I think we will just post the PR to let people review and make comments, and polish the KEP, which is already almost done, so people can review it. Regarding the external policies, Kevin seems to have some ideas, so I will just reach out to him and, in parallel, work with him to see what it could look like. But for the real deal, which is the policy we would like to do, we'll just stick with built-in. Does that seem fair to everyone?

E

D

I

Just before we wrap up, Francesco, I just want to mention, since the prototype came up in the discussion: we do have a prototype, and maybe next week we can come and show a demo. We have it already working, and the implementation is pretty much complete, so it might give people clarity as to what we are proposing, and it might help with the reviews as well.

F

F

We could evaluate any externalization approach against the present state, but I just want to make sure that this isn't the only use case that would allow us to iterate and evolve, and I wasn't sure if that was understood. I look at this more and just ask: was it an error, when we did the static policy, that we didn't ask:

F

Is it hyperthread-aware or not? And is it an error that, when we defined the policy flag, we only did it with one flag, which was static, none, or whatever, and we didn't provide a secondary flag which would say something more nuanced, like hyperthread-aware, or some opaque string or something? So I don't know, that's kind of how...

I

I

Yeah, I think one comment I have on that is that CPU manager does a best effort of first allocating on the basis of sockets, then tries to get cores, and then the remaining threads. The decision that was made was that it did a best-effort job, and in scenarios where it's not able to do that, it wouldn't fail. Whereas here, what we are trying to emphasize is that if the request cannot be fulfilled from whole cores, it ends up in an error.

F

Or it's best effort. And basically, should we have had a policy knob on CPU manager that we didn't have in mind, that would let us express this without having to feel we need to get all of externalization perfect? My thought is that we can learn as we go. It seems like we would want maybe two pieces of information where we have one now. But anyway.

I

And one more thing: even in the implementation, we are not exactly copying what the static policy already does. We are trying to reuse static and add, essentially, an admit handler with the additional check that we care about. So it's not replacing it, or entirely replicating static and making a minor change. Implementation-wise, we've kept that in mind. But again, I think with the demo, and the KEP polished a bit more, it will become clearer, and we can follow up next week on this.
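
The kind of additional admission check described here could look roughly like the sketch below. This is only an assumption about its shape, written in Python for brevity rather than in the kubelet's Go; `PartialCoreError` and `admit` are hypothetical names, and the real handler was not shown in the meeting.

```python
# Hypothetical sketch of an admission-time check that rejects requests
# which would leave a physical core partially allocated. Not kubelet code.


class PartialCoreError(Exception):
    """Raised when a request cannot be satisfied with whole cores only."""


def admit(requested_cpus: int, threads_per_core: int = 2) -> int:
    """Return the number of whole cores needed, or raise if not aligned."""
    if requested_cpus % threads_per_core != 0:
        raise PartialCoreError(
            f"{requested_cpus} CPUs is not a multiple of "
            f"{threads_per_core} threads per core"
        )
    return requested_cpus // threads_per_core


for request in (4, 5):
    try:
        print(f"{request} CPUs admitted on {admit(request)} whole cores")
    except PartialCoreError as err:
        print(f"{request} CPUs rejected: {err}")
```

The contrast with the best-effort behavior discussed earlier is that the unaligned request fails outright instead of being placed on a partially shared core.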

A

Thank you, Francesco, for this one. Let's move to the next topic; we can follow up next week, with even more detail and the demo, with Swati, Alexander and Francesco. The next one is another one carried over from April 13. Do you want to talk about this one? Extend the pod resources API with the memory manager metrics.

J

Yeah, hi folks. In general, as part of the promotion of the memory manager to beta, Derek requested that we provide some kind of metrics, and I believe the best way is to provide such metrics under the pod resources API, because we already have the endpoint and we already provide some CPU metrics under it, regarding the node and regarding the pod and container.

J

F

J

A

K

Yeah, sure. I think last week we went through a first round of planning. We talked about all the features in the document, and one thing we wanted to do is prioritize all of them. So I was thinking maybe we can quickly go through the list and attach a high, medium or low to each item as a group, so we know what we want to focus on first and what we can drop if we don't have enough bandwidth.

K

A

K

K

C

K

So I think we need to clarify, from a priority perspective: we are talking about priority not just for stuff that is graduating, but also in terms of the bandwidth we'll assign to work that's ongoing, like cgroups v2. CRI graduation is high, but it may not make it. So I don't know, Derek, Dawn, what's your perspective? Do we want to only talk about things that we want to change the level of, or also ongoing work?

C

B

K

You know, for this one, what we said was that it will stay beta, but we'll probably add more features here, like on the value of the priority class. So what do we want to do? Do we also want to give a priority to everything, including things whose level is not going to change but that we're still going to work on, or no? And then Sergey suggested we could do a beta 2, which doesn't sound bad to me.

N

M

F

F

A

What does high mean, or what does medium mean? High means, okay, we want to meet our target, so if we can't do it, then we will shift some engineering resources to make sure we finish this one. Otherwise it is a little bit... It's like, for example, CRI graduation. I know that in open source it is hard to shift internal resources to do something, but at least let's kind of indicate our intention, right? So I'm not sure about this one.

K

Maybe just an order, like just ordering this list. Right now this list is unordered. Dawn, in our planning we also have a blocker category, which means we absolutely won't ship without this, but, as you said, in open source that is going to be hard, right? We can't, like...

F

The community to kind of express our interest in the shared outcome, right? And obviously there are some things that folks individually have higher interest in than others. But I feel like part of this list was just a grab bag from a wide variety of areas, and maybe it's not people's immediate interest, right?

K

F

L

F

C

Yeah, from my perspective, I would read low as things that are safe to drop from the release, medium as things that I'm going to rely on the owners to drive, and high as things that we as a SIG have decided on, and therefore, if I see one lagging, I should jump in to try to help. I don't know if we have anything that could be considered a blocker for this release.

C

K

A

K

So the next one is memory QoS for cgroups v2. I think, from what we said earlier, the author is interested and present. So should we put this in the medium bracket? But we still need to get the KEP merged, so this would be definitely not high, more like low to medium, depending on what folks think.

C

M

K

M

K

A

G

F

G

A

K

A

K

C

K

K

A

A

B

A

J

K

K

B

B

K

K

F

O

M

K

B

I

D

Yeah, so on the size side, the T-shirt size, it's small-ish, because the code that we are checking in right now is one thousand lines of code, 80 to 90 percent of it tests. So it's really S, effort-wise. Priority-wise, sorry, for us, we have use cases, so I'd say high; it's important for us. It's self-contained. So.

K

I

C

C

D

F

A

F

A

F

I

D

A

I

O

C

K

K

H

This is somewhat similar to the KEP for user namespaces, but it's different. The user namespace proposal is just for running containers as a non-root user; rootless mode, on the other hand, means running everything, including kubelet, containerd and runc, as well as containers, as a non-root user. So it's different from the user namespace proposal, and they do not conflict. And actually the project is very, very small, because almost all of the work is handled by RootlessKit, which is already used by both Docker and Podman, and the container runtimes already support rootless mode, so the part on the Kubernetes side is really, really small.

The kubelet needs to be patched to ignore some errors when setting sysctl values, and we also need to ignore errors when setting rlimit values, so the changes to Kubernetes are very small, just a few lines of code. And actually kind, Kubernetes in Docker, already supports rootless mode without patching Kubernetes.

H

F

Yeah, so just one question I had here was, as a goal, I wanted to make sure that Kube is always set up to be able to pass conformance. I know in earlier iterations of the KEP I had asked if we felt that running rootless would actually require us to change how we evaluate conformance tests.

F

I think in a past comment you noted that NFS and block and other storage tests might prove problematic. The note you had on sysctls, given that I know sysctls just got promoted to conformance in the last release, kind of gives me similar pause. So I guess what I was wondering was: if we proceed with this work, how do you guide us to ensure that we can be conformant with that work?

H

So I think we can use Sonobuoy (sorry, I don't know how to pronounce it), and there's, you know, a switch to skip tests. I think we need to skip tests related to sysctls and rlimits anyway, but other tests should work, and we can use kind for testing rootless mode. We use rootless Docker or rootless Podman as the provider of kind, and we can use kind for running most of the conformance tests.

F

Yeah, this is the thing that I think is an unclear cost, so when we size this, I think we need to make sure that we have those testing things called out. And I wasn't sure if you wanted to approach this from the perspective of a different conformance profile. You know, I think about earlier conversations we had where I think Jack came forward to the SIG and was like, I want to change the visibility of mount propagation points, and we had a lot of back and forth on...

F

O

F

H

I

O

F

F

K

K

K

H

H

G

G

So the idea is, you know, in the secure-by-default context, you would think that when pod A pulls an image that required a secret to get, pod B would not be able to use that image if pod B didn't have that secret, right? But unfortunately we're in the current situation where you can configure things so that pod B will use, you know, never pull, or, you know, use the image that's already on there.