
From YouTube: Kubernetes SIG Node 20200512

Description

Meeting Agenda:

https://docs.google.com/document/d/1j3vrG6BgE0hUDs2e-1ZUegKN4W4Adb1B6oJ6j-4kyPU

A

So welcome, everyone, to the May 12th SIG Node meeting. We have a number of items. Just a reminder that meetings are recorded and later uploaded to YouTube for posterity and subsequent viewings, so please make sure to generally be kind and be all on our best behaviour. So with that brief intro, the first topic today was advanced resource management experiences.

D

Jay is not able to attend today, but we had the test kickoff. We had great attendance; I think there were about 25 folks there. For the first meeting it was pretty much an introduction to TestGrid and the node tests. Some of the folks were new and not familiar with that, so we spent the majority of the time there.

D

And then we looked at the spreadsheet and talked about how to correlate a test with the test code and where the job is defined. Dims was there and he gave us a great overview, and I just want to give him a big shout-out and say thank you for attending. Thank you for sharing. That was really good. That's just the heart of open source, and I really appreciate that, and I'm sure others on the call did too. So thank you, Dims, for that. I shared the recording.

D

So we did record the meeting for folks that were not able to attend. The next thing we're going to do is complete the spreadsheet, which has a list of all the tests. There's a little feedback, and maybe we'll do a little cleanup, and then volunteers can sign up for the tests to investigate. There are already some that have signed up, and I want to say thanks for that; I appreciate it. And I think that's it.

B

All right. So, colleagues, my name is Alexander Kanevskiy. I work for Intel, and in the past year or so, engineers from our team and I have been doing different proof-of-concept work for our customers, all related to hardware resource management. When we recently saw some of the discussions regarding the Topology Manager, and especially this memory manager, we realized that they overlap with what we were doing as well, and with some of our experiences.

B

What we have might actually be useful for others, because we have a wide range of use cases. What I want to do today: first I will show a demo, and then I will talk about specifics of hardware, specifically CPU and memory, what we currently know about it, and some of the underwater stones that we have already stepped on, so that when we are designing new things we can try to avoid those. The last part is about user experience, based on what we have tried.

B

And also what we think might be better. So, first of all, the demo. It's a short recorded screencast. It's stock Kubernetes, nothing modified, and stock containerd, nothing modified, plus a few components in between. The demo will be focusing mostly on CPU and memory, but we can also do some things related to block I/O, cache management, memory bandwidth, and a few other small things.

B

So, let's start with the demo. The demo shows a single-node cluster, where the machine is a standard Dell server: two sockets, Intel CPUs, many cores. There are a few things that are a bit tricky about this machine. It has a configuration enabled for memory latency specifics, and it also has so-called persistent memory, or Intel DC memory, which allows you to have a lot more memory, and cheaper memory, than DRAM.

B

So let's look at what happens by default if you try to run an application. We have one pod with multiple containers, each of them running a very simple application. That application just reports where exactly it runs: which memory nodes are enabled, which CPU pools are enabled, where it's located (socket and so on).

B

For the first container, we can see that it's located on DRAM node 0. It has a shared pool of CPUs, and you can see that it's on CPU socket 0 and NUMA node 0. The second container is on NUMA node 2, again with a different shared pool of CPUs: still within the same physical socket, socket 0, but on a different NUMA node in terms of memory. The third container is spread to the next socket, again with a separate pool of shared CPUs and separate memory nodes.

B

And the fourth container is again in the second socket, with another memory node and another set of shared CPUs. So you might ask: okay, we have containers which fit nicely into a small shared pool of CPUs, a part of the system. What happens if I have a workload which tries to use more than that? How is it going to be placed? For that we have the second part.

B

The next thing is one of the scenarios that we discussed: what happens if I have workloads which want to run together, for example because they use shared memory? We experimented with this and came up with a mechanism for affinity and anti-affinity. As you can see in the metadata, we have an annotation which says that container 1 and container 3 should be close together.

B

Container 3 has affinity towards container 1, so it also landed in the same shared pool as container 1: socket 0, and NUMA node 0 in terms of memory. Container 4 doesn't have any preferences, so the system balances it out to another socket, just to spread the load between all parts of the system.

B

All right. So those are the CPU and memory resources that you can optimize. The next thing is about devices. Right now, the Topology Manager is only capable of aligning resources when you explicitly request some devices. What we tried and implemented is the possibility to detect whether a container is using something.

B

In this YAML example, container 3 is using a volume which is located on a local physical disk. We know that it's local storage and that there is a PCI device behind it, so we can try to place the container close to that device, to avoid data transfer between different parts of the system and to improve the performance of disk operations. Practically, we can detect which PCI device the volume is located on.

B

We can detect which cores are close to it, and that means the container will be placed close to those cores. This works across different devices. We tried it with storage. We tried it with an NFS share, so we can detect whether it's connected to some network. And obviously, if your container is using a device exposed via a device plugin, or any device node, and we can detect the topology of that device from the Linux kernel, then we do the same thing. So that is container 3.

B

The last part of the demo is something that is not very common in the world yet, but that will soon be available in big data centers. Practically, this is memory tiering. It's the scenario where you have different types of memory in the same system. As I mentioned, we have DRAM, we have a bit slower persistent memory that is used in volatile mode, and we have a container which will be utilizing both of those.

B

And practically, this is not limited only to PMEM. We are also able to detect high-bandwidth memory and do exactly the same thing. So if you have some specific network workload which is really sensitive to memory bandwidth or latency, you most probably want to use the high-bandwidth memory, and then your application would use the CPUs from the region closest to this memory type.

B

Some devices are attached. When people talk about NUMA nodes, they assume that one socket equals one NUMA node, and they try to use the two as synonyms. The thing is, this picture was accurate maybe about 15 years ago, but even about 10 years ago it had become obsolete. The current generation of hardware that people are using nowadays in the data center is a bit more complex. We still have multiple physical packages of CPUs.

B

We have one or more dies, or chips, inside the physical packages, and those chips contain multiple cores. Many of those cores are actually not equal: some might be running faster, some might be slower, some might have a turbo frequency, some might be located a bit closer to some interconnects, and so on. You might also have interconnects between the physical packages, and the topology of those interconnects really depends on the vendor and on the type of motherboard.

B

It also depends on the BIOS settings and many other things. When we talk about memory, modern CPUs usually have more than one memory controller, and each memory controller has multiple channels. So the NUMA node that the Linux kernel sees might come in different variants. One variant, the standard and most commonly used one, is where the two controllers work in parallel.

B

But you might instead have two NUMA nodes visible in the Linux kernel. And as soon as you start to add more memory types, the Linux kernel will start to see additional NUMA nodes which don't have any CPU cores. So when you plan to use the NUMA node for something, you need to think about what it actually represents and how it's actually connected. Then we have devices: the Linux kernel reports that a device is attached to some NUMA node.

B

In reality, each PCI device has locality to some CPU cores, because the PCI controller is somewhere closer to one core than to another. It gets a bit more complicated for devices with inter-device connections, which can be connected by other means than PCIe. So you might want to detect additional hardware details, but that's out of scope for today. What I also want to mention is that there is more than the devices covered by accelerators.

B

You usually have a bunch of devices that are simply on your motherboard: all kinds of I/O, NVMe, chipset features, all kinds of network interfaces. So when you think about how a workload is actually optimized for the hardware, you need to think about all of those interconnects between the devices, and that easily becomes vendor-specific, unfortunately. So, what did we learn when we tried to manage CPU-based resources?

B

First of all, as I mentioned, the CPU is a complex thing, even though Kubernetes right now makes a lot of simplifications: cores, threads, all CPUs are equal. In reality, they are not equal. Then we have different usages of CPUs, shared and exclusive, which is possible with the current CPU Manager. But even for exclusive usage, what is of interest for different high-performance workloads is to utilize isolated CPUs. Isolated CPUs are the ones on which the Linux kernel does not automatically schedule tasks.

B

So the workload needs to be specifically pinned to utilize those. Of course, we can try to introduce new resources for that, but it doesn't really make a lot of sense; it would complicate the user experience. So in the new policy that I showed (unfortunately it was not part of the demo), a container may request a full physical CPU while we detect that the system has CPUs isolated from the Linux kernel scheduler.

B

In that case we would give this Guaranteed container an exclusive isolated CPU, again transparently, in the current implementation. But besides that: the CPU as we know it is just shared time, used in different ways, but still one big slice of shared time, whereas the CPU actually has a few more resources. We have cache control and we have memory bandwidth, and those things are harder to represent with the current resource model.

B

For example, with memory bandwidth you can say that for my workload I want to limit it to 50% of memory bandwidth. So it's not an exclusive allocation. It's a kind of gauge type of resource, which is elastic, and it also depends on the memory controller: if you have multiple controllers, you need to specify the limits on each of them.
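As a rough illustration of why such a limit is awkward to express as a countable resource, here is a minimal Python sketch that turns per-controller percentage limits into a line in the format the Linux resctrl interface uses for memory bandwidth allocation (`MB:<domain>=<percent>;...`). Whether the demo relied on resctrl is an assumption, and `mb_schemata` and the domain ids are hypothetical names chosen for this example.

```python
def mb_schemata(limits_percent):
    """Build a resctrl-style 'MB:' line from {domain_id: percent}.

    Each domain id stands for one memory-bandwidth control domain
    (roughly, one socket or memory controller group). The mapping is
    an illustrative assumption, not taken from the talk.
    """
    for domain, pct in limits_percent.items():
        if not 0 < pct <= 100:
            raise ValueError(f"domain {domain}: {pct}% is outside (0, 100]")
    # One "id=percent" entry per domain, joined with ';' as resctrl expects.
    parts = [f"{domain}={pct}" for domain, pct in sorted(limits_percent.items())]
    return "MB:" + ";".join(parts)


# A 50% cap has to be stated once per domain, not once per container.
line = mb_schemata({0: 50, 1: 50})
```

Note that the value is a throttle level rather than a quantity that is subtracted from a pool, which is the "gauge" behaviour described above. Real hardware also imposes a minimum value and a step size, which this sketch ignores.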

B

The next thing is the interconnect. As I mentioned, it's vendor- and hardware-dependent: how many interconnects you have, and how much traffic you have on those. When people say "I have a NUMA problem, and because of that I have a performance problem", it usually means that the interconnect between the CPUs is busy moving data between the memory controllers and the actual CPU core which processes it. And that leads to the next thing: again, a misconception is that NUMA is related to the CPU.

B

NUMA is really about memory, and different vendors give NUMA a different meaning. It's really configurable via BIOS settings: on Intel it's called SNC, on AMD processors it's called NPS, nodes per socket, and it can be 1 to 4 in different configurations. It's something you need to keep in mind if you start exposing this to end users. The good news concerns the cloud.

B

In the cloud it's a bit less important: it depends on how the hypervisor under the cloud exposes the information to the kubelet. For example, I saw a presentation about VMware Tanzu, and they implemented a very good scenario: when a virtual node is dynamically provisioned, they create a node which the hypervisor places nicely inside some memory and CPU domain, so you get automatic optimization of the topology, but it's done by the VMware hypervisor.

C

Yeah, and also on Google GCE we have similar things. We optimize the VM for the customer, so they benefit from the optimization of NUMA, CPU, and memory on the host for that particular VM. So a lot of those details are hidden inside the implementation. I just wanted to share that here.

B

And actually the whole reason why I'm talking about this, and the reason why I showed the demo, is that the user experience is important. Back in November, after KubeCon, I spoke with Tim Hockin, and he also mentioned that the Google Cloud philosophy, which was used when Kubernetes was created, was to hide the complexity from the user. That's why we are also trying to use the same principles. So, looking at CPU resources, please note what I wrote here.

B

This is how we look at the topology. Whether it's bare metal or a cloud hypervisor which exposes it, from the Linux kernel we can find out the socket number (the physical package ID), we can find how many CPU dies, or chips, are present in one physical package, and then we have groups of cores which are close to each other within one die or socket.
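The kind of detection described above can be sketched in Python by reading the CPU topology files that Linux exposes under sysfs. This is a minimal illustration and not the project's actual code; `read_cpu_topology` is a hypothetical helper, and the fallback to die 0 when `die_id` is missing (older kernels) is an assumption made for this example.

```python
from collections import defaultdict
from pathlib import Path


def read_cpu_topology(sysfs_root="/sys/devices/system/cpu"):
    """Group logical CPU ids by (package id, die id) as reported by the kernel."""
    groups = defaultdict(list)
    # Matches cpu0, cpu1, ... but not cpufreq or cpuidle.
    for cpu_dir in Path(sysfs_root).glob("cpu[0-9]*"):
        topo = cpu_dir / "topology"
        pkg_file = topo / "physical_package_id"
        if not pkg_file.exists():
            continue  # no topology information for this entry
        package = int(pkg_file.read_text())
        die_file = topo / "die_id"
        # die_id is only exposed by newer kernels; assume a single die otherwise.
        die = int(die_file.read_text()) if die_file.exists() else 0
        groups[(package, die)].append(int(cpu_dir.name[3:]))
    return {key: sorted(cpus) for key, cpus in sorted(groups.items())}
```

Calling `read_cpu_topology()` on a Linux host returns a dictionary keyed by `(package, die)`, which is enough to see whether the machine has more than one die per socket.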

B

What we do is create this kind of virtual tree of resources. We literally split the whole node into multiple zones, and for each group, each of these leaf nodes, we have a group of CPUs, a group of memory, and the workloads that could end up in this group. Inside the group we have something similar to what the CPU Manager currently has: a shared pool, and exclusive allocation if the workload requires exclusive CPUs.

B

But we are also able to create dynamic pools: for example isolated CPUs, or a special case, throttled. For example, if you run heavy AVX-512 instructions, the CPU cores start to throttle in order to do these big vector operations. So as not to affect other workloads, we create a throttled pool for the AVX workload, and the rest of the workloads are moved out of that shared pool, so they are not affected by this scale-down of frequencies. And what happens if we cannot fit into this small group? It's easy.

B

We just go one level up. This helps us to eliminate scenarios where we get into a situation in which we cannot fit and we need to reject the pod. And this whole tree structure is built dynamically. For example, if we detect that the system has only one die per socket, the whole layer of dies will be missing, so the tree will not be that deep.
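The "go one level up" behaviour and the optional die layer can be illustrated with a small Python sketch. It is a simplified model under stated assumptions (only CPU counts, no memory or devices, no shared versus exclusive distinction); `Pool`, `place`, and `build_tree` are hypothetical names, not the project's API.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Pool:
    name: str
    free_cpus: int = 0  # only meaningful for leaf pools
    parent: Optional["Pool"] = field(default=None, repr=False, compare=False)
    children: List["Pool"] = field(default_factory=list)

    def add(self, child: "Pool") -> "Pool":
        child.parent = self
        self.children.append(child)
        return child

    def capacity(self) -> int:
        """Free CPUs: own count for a leaf, otherwise the sum over children."""
        if not self.children:
            return self.free_cpus
        return sum(c.capacity() for c in self.children)


def place(leaf: Pool, cpus: int) -> Optional[Pool]:
    """Start at the preferred leaf and go one level up until the request fits."""
    pool = leaf
    while pool is not None:
        if pool.capacity() >= cpus:
            return pool
        pool = pool.parent
    return None  # does not fit even at the root, so it would be rejected


def build_tree(sockets: int, dies_per_socket: int, cpus_per_die: int) -> Pool:
    """Build node -> socket -> die pools, omitting the die layer if there is one die."""
    root = Pool("node")
    for s in range(sockets):
        socket = root.add(Pool(f"socket{s}"))
        if dies_per_socket == 1:
            socket.free_cpus = cpus_per_die  # the socket itself is the leaf
            continue
        for d in range(dies_per_socket):
            socket.add(Pool(f"socket{s}/die{d}", free_cpus=cpus_per_die))
    return root
```

With `build_tree(2, 2, 4)`, a 3-CPU request stays in one die, a 6-CPU request is placed at the socket level, and a 12-CPU request only fits at the root, which corresponds to the node-wide view.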

B

So that's CPU. As soon as we add memory to the picture, it becomes a bit more complicated. As I mentioned, most people right now know only DRAM, a nice, good thing, but on the market, in current hardware architectures, there are two other types of memory which are also present in some scenarios. One is persistent memory used in volatile system mode, which I call PMEM in this presentation, and the other is high-bandwidth memory.

B

Then you have two types of memory inside the Linux kernel: one is normal, like your normal DRAM, and the second is movable. The interesting thing about the movable part is that the kernel will not allocate any special pages inside movable memory. So if you try to run a workload and only allow it to allocate memory from movable nodes, you will end up in trouble: the kernel will not be able to run it, because it simply cannot load the shared libraries into that memory.

B

So if you have a system where some of your memory is represented as movable, you always need to give the workload at least one normal DRAM node plus the movable one. The workload should then be able to distinguish between those and do the balancing inside the user-space application. And that leads to the problem of NUMA. When we talk about NUMA, and specifically the memory part of it, we need to understand what kind of memory it is. Is it normal? Is it movable? How is it connected?

B

Does it have CPUs or not? And even if it doesn't have CPUs, what is the closest NUMA node with CPUs, so that you can define how your workload should be placed? Then you have distances. Distances can help you; they can give you a hint about how things are connected. But you can't trust those distance numbers for any performance validation, because they vary between BIOSes and vendors.
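The lookup of the closest CPU-bearing node can be sketched in Python using the per-node `distance` and `cpulist` files that Linux exposes under sysfs. This is a minimal sketch: `closest_cpu_node` is a hypothetical helper, and it assumes that the entries in `distance` follow the order of the node directories sorted by id. As noted above, the distances are only a hint and not a performance measurement.

```python
from pathlib import Path


def closest_cpu_node(node, sysfs_root="/sys/devices/system/node"):
    """Return the id of the nearest NUMA node that has CPUs, or None."""
    root = Path(sysfs_root)
    node_ids = sorted(int(p.name[4:]) for p in root.glob("node[0-9]*"))
    # One distance value per node, in node-id order (assumption stated above).
    distances = [int(d) for d in (root / f"node{node}" / "distance").read_text().split()]
    candidates = []
    for other, dist in zip(node_ids, distances):
        # CPU-less nodes (for example PMEM or HBM nodes) have an empty cpulist.
        has_cpus = (root / f"node{other}" / "cpulist").read_text().strip() != ""
        if has_cpus:
            candidates.append((dist, other))
    return min(candidates)[1] if candidates else None
```

For a node that has CPUs of its own, the function returns the node itself, because the local distance is the smallest entry in its row.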

B

If you have a workload and you want to migrate it from one region to another, and you do it within one physical chip or one physical package, it's a costly operation, but it's doable. But as soon as you start to cross the inter-socket link to move the pages, it becomes a really high load for the system. The kernel will lock your application, you will get latencies, and you will get interruptions in your workload, so try to avoid those.

B

Related to that, there is one modification as soon as we add memory to the picture. We can detect the topology of the system, we can detect how the memory controllers are located, and then we can determine where the CPUs, DRAM, PMEM, and HBM are located. So practically, even if they are represented as different NUMA nodes, we group them into the same pool, and then we allow the workload to specify what kind of memory it will be able to use.

B

By default, we allow both DRAM and PMEM, and the reason for that is how it's implemented in the Linux kernel. If you have this scenario where multiple NUMA nodes are enabled for a workload, and some of them are DRAM and some of them are PMEM, the kernel will start to do something similar to the AutoNUMA scenario, but instead of CPU locality it will analyze how cold or hot your data is. The colder data is the data that is less used or used read-only.

B

It will be migrated in the background to persistent memory, while what is more actively used stays in DRAM. So again, the kernel helps to optimize the performance of your application. All right, almost at the end: user control and UX. As I mentioned a bit earlier, we really want something that is suitable for public clouds, for VMs, for bare metal, and so on, especially for all the non-trivial resources, and again with a good user experience. Now suppose you start to track all of those and start placing your workloads.

B

Sooner or later you end up in a situation like: okay, can I fit it into the node? There are two scenarios. One is rejecting; that's practically the only thing that is currently possible with the Topology Manager, because all the placement is done inside the admission loop. The second is rebalancing, meaning: okay, we got something new, can we adjust something at runtime to make it fit? It's the famous story of a jar and rocks of different sizes.

B

If you put them in the wrong order, you end up with a problem where you need to reject. But if you start reshuffling, you might be able to fit more. Based on this reshuffling and rebalancing, what we figured out is, again, that assigned devices are something that you definitely cannot move.
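The effect of ordering can be shown with a small Python sketch. It uses plain first-fit placement over a list of zone capacities as a stand-in for admission-time placement; this is an assumption made for illustration, not the algorithm of the Topology Manager or of the presented project, and `first_fit` is a hypothetical name.

```python
def first_fit(requests, zone_capacities):
    """Place each CPU request into the first zone with room; return the rejects."""
    free = list(zone_capacities)
    rejected = []
    for cpus in requests:
        for i, avail in enumerate(free):
            if avail >= cpus:
                free[i] -= cpus
                break
        else:
            # No single zone can hold this request any more.
            rejected.append(cpus)
    return rejected


zones = [4, 4]
arrival_order = [1, 1, 3, 3]
# Small requests arrive first and fragment zone 0, so the last one is rejected.
rejected_as_arrived = first_fit(arrival_order, zones)
# Reshuffling so that the large requests are placed first lets everything fit.
rejected_reshuffled = first_fit(sorted(arrival_order, reverse=True), zones)
```

The same four requests fit or do not fit depending only on their order, which is why rebalancing at runtime can avoid a rejection that admission-time placement would force. Anything pinned to a device would have to be excluded from such reshuffling.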

B

So why am I talking about all of this? It's UX, mostly UX. The scenario where we try to expose all this NUMA awareness to the end user is, I think, not great. What the user expects by default is to press a button, deploy, and have it run. Some of the patterns that we have seen go beyond defining "I want this amount of memory from NUMA node 0 and another amount of memory elsewhere".

B

Okay, how the device is connected to the system matters, but in many scenarios it is also interesting to have a device pipeline: for example, a GPU connected to an FPGA, connected to some network card, which is connected to a specific network, and so on. So it's less about how it's connected to the CPU and more about how it's connected to out-of-band services. And, of course, the future: we shouldn't be doing something today that we know will be made obsolete by the hardware.

B

How will hardware evolve in the future? And the main thing is: do we really want to have all this complexity in the kubelet, especially now that we have a lot of VM-based runtimes and hypervisors like VMware Tanzu and so on? Maybe we need to think about what part should be done in the kubelet and what part should be done in the runtimes, so that we can enable competition between runtimes and make it more pluggable and more advanced.

B

I would say it should be based on the actual use case. You can have a generic kubelet for the whole cloud, and where you have resource-specific needs, the runtime will be handling them. All right, that's what I wanted to show. I have more slides on how it's actually implemented, but I don't have time for them, I guess.

B

Well, let me show one more slide. Everything I demonstrated in the demo is done by our proof-of-concept project, CRI Resource Manager. We used it for prototyping all of these additional things. How does it work? It's just a proxy between the kubelet and the actual container runtime.

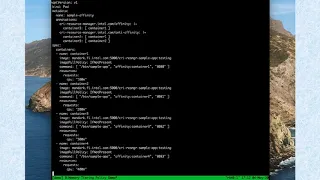

B

So we implemented it, but the main point of the implementation, and the main reason why I showed this tree structure, is that we probably want to revisit how we think about managing resources in the system. We can go both ways. We can try to make the kubelet more hardware-aware, or we can do the opposite.

B

The opposite is that the kubelet will be a bit less hardware-aware, but the container runtime itself will be trusted more with the actual allocation of hardware resources. But then we need to enhance the CRI messages to expose what the container actually wanted: the real memory requirements, not only the limit, and the same for CPUs, the same for huge pages, and so on. So the container runtime would act similarly to what Virtual Kubelet is doing.

A

Yeah, I was going to say, I feel like each week we're getting to explore a different tension, and all designs require a sacrifice on some dimension. So I think, with a broader community and the set of users you're looking to support, it's inherent that you have to make a sacrifice that upsets some balance in the system. And I think what you presented here is really cool to see.

A

I would say that probably the sacrifice that it doubles down on is the cluster-wide scheduler not being node-local aware. I could see how, for many folks, that is perfectly reasonable, and then I could see how some others wouldn't appreciate that sacrifice. So I guess, in closing: I know Victor was looking to set up a more recurring discussion on these topology-aware problems, so we can get all these ideas out. And so, Alex, a big thank you for this.

A

I think in that forum it would be good to talk through what the design sacrifices are that are made. Personally, I feel better if we can keep the kubelet in a space where it doesn't have to be the actor that makes that sacrifice. So your presentation today is one that I'll have to reflect on and think through, but I appreciate the deep detail.

B

What I was trying to say is that even with splitting the system into multiple smaller buckets, if we cannot fit into a small bucket, we can bubble up to the upper layer. On the upper level, because it's just a summary of the available resources, it will exactly match what the scheduler thinks about the node. So we end up with fewer rejections from the node for a container.

B

The only scenario where we go to rejection is when the container has very strict criteria set by annotation, like alignment with a device or with other containers, and we simply can't fit it even if we rebalance the existing workloads. So again, UX matters, and we are trying to make sure that stock Kubernetes, with the stock user experience, is hiding all this complexity.

D

Sure,

thank

you,

Derrick.

Alex,

that

was

a

great

presentation,

I

think

you

know

I

learned

a

lot

on

that

too,

very,

very

good.

So

what

Derrick

is

referring

to

is,

you

know,

there's

an

effort

to

get

everyone

together

for

topology-aware

discussion

we

have

been

doing

this

for-

and

you

know,

topology

manager

for

several

months

now,

but

primarily

it

was

focused

on

getting

to

beta.

D

So

now,

what's

red

of

that

we're

looking

at

NUMA-aware

scheduling

so

I'm

just

like

this

meeting,

it

takes

a

very

long

time

to

present

for

people

to

absorb,

and

so

the

idea

is

we

would

have

a

separate

meeting

to

focus

on

this.

What

would

propose

is

that

we

well

first,

we

better

channel,

create

a

channel

topology-aware

scheduling,

that

these

are

in

the

SIG

Node

meeting

minutes,

but

we

could

propose

having

a

meeting

on

Thursdays

9:00

a.m.

D

Eastern

Daylight

Time

and

I

know

that

won't

work

for

everyone

and

if

we

need

to,

we

can

do

a

survey

and

try

to

find

a

time.

But

up

until

this

point

it's

been

some

folks

from

Samsung

Chris

and

B

GE

and

Nvidia

and

Intel.

Looking

at

some

of

the

previous

proposals

and

I

think

this

fits

right

in

so

yeah.

That's

what

we

want

to

do.

Take

it

sort

up

to

a

different

meeting.

B

It

was

already

like

for

a

long

time,

discussion

about

like

let's

bring

up

a

working

group

for

resource

management

for

which

topic

specifically,

but

as

they're

excited

a

couple

of

weeks

ago,

we

can

do

it.

It

lasts

for

now,

and

yes,

I

am

also

from

Intel,

and

we

are

working

closer

to

two

colleagues

like

Connor

Nolan

xyt

in

the

past.

So

we

all

on

the

same

team,

we're

just

looking

from

different

perspective,

one

of

the

same

problems

and

like

some

things

more

than

some

things,

more

simple

use

cases.

F

Did

well

I,

don't

know

what

to

what

degree

you

want

me

to

go

into

details.

We

probably

don't

have

time

for

a

lot.

In

eight

minutes,

I

can

say

that

I've

been

down

a

very,

very

deep

rabbit

hole

over

the

last

three

or

four

weeks

when

it

comes

to

the

combination

of

Postgres,

with

Kubernetes

and

the

Linux

kernel

and

out

of

memory

killer

and

all

of

that

Postgres,

because

it,

you

know,

has

very

high

guarantees

when

it

comes

to

durability

and

correctness

and

availability

all

at

the

same

time

has

for

actually

found.

F

You

know

you

see

in

that

write-up

I

did

there's

a

footnote

linking

to

the

original

mailing

list.

Discussion

about

the

Linux

kernel,

OOM

killer,

describing

it

basically

as

a

bug

in

Linux

kernel.

So

that's

kind

of

that

summarizes

the

way

the

Postgres

community

has

felt

about

that

facility.

For

many

years

it's

been

17

years.

F

Although

many

of

our

customers,

like

the

idea

of

running

guaranteed

pods,

the

downside

of

running

a

guaranteed

pod

is

that

because

there

is

a

memory

limit,

if

you

exceed

that

memory

limit,

the

OOM

killer

is

what

enforces

it,

and

that

has

a

number

of

bad

effects

on

Postgres,

which

I

also

outlined

and

I.

Does

that

kind

of

summarize

it

at

all?

Yeah.
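
For readers less familiar with the term, a pod is Guaranteed when every container sets CPU and memory limits and its requests equal those limits, which is why a Guaranteed pod always carries a memory limit that can be exceeded. A simplified sketch of that classification is below. The `qos_class` function and the plain-dict container shape are hypothetical, and quantities are compared as raw strings, so "1Gi" and "1024Mi" would not be treated as equal here.

```python
from typing import Dict, List

Resources = Dict[str, str]  # e.g. {"cpu": "2", "memory": "4Gi"}


def qos_class(containers: List[Dict[str, Resources]]) -> str:
    """Classify a pod from its containers' 'requests' and 'limits' maps."""
    anything_set = False
    guaranteed = True
    for container in containers:
        limits = container.get("limits", {})
        # A request that is left unset defaults to the limit for that resource.
        requests = dict(limits)
        requests.update(container.get("requests", {}))
        if limits or requests:
            anything_set = True
        for resource in ("cpu", "memory"):
            if resource not in limits or requests.get(resource) != limits[resource]:
                guaranteed = False
    if not anything_set:
        return "BestEffort"
    return "Guaranteed" if guaranteed else "Burstable"


postgres = [{"requests": {"cpu": "2", "memory": "4Gi"},
             "limits": {"cpu": "2", "memory": "4Gi"}}]
print(qos_class(postgres))
```

Dropping the memory limit in this example would avoid the limit-triggered OOM kill but would also move the pod to Burstable, which is the trade-off being described.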

C

Do

things

or

internal

region

QoS,

we

are

not

using

the

OOM

score.

Disabled

won't

kill

the

disabled

like

the

negative

one.

Sorry

it

is

because

if

you

specify

making

especially

like

the

database

type

of

the

workload,

if

you

are

using

those

kind

of

things

and

you

end

up

the

kernel,

maybe

couldn't

kill

anything

became

memory,

you

could

end

up,

have

the

whole

node

crash

and

the

end

up

have

database

could

be

have

the

corrupted

data;

that's

even

more

dangerous.

C

I

just

want

to

share

it

here,

and

so

this

is

the

life

Swindon

in

the

kernel

before.

But

of

course,

the

new

kernel

I

know

they've

returned

of

the

more

memory

and

earlier

neck.

Alex

also

mentioned

something

at

the

touch

base

a

little

bit,

but

not

really

so

they

learn

more

memory

for

those

kind

of

things.

So

this

this

you

could

peek

here.

So

we

have

experience

before

not

even

Cronos

ride

and

reclaimers

ride

cannot

run

and

ii

decided

to

which

container

to

cry

to

kill

so

that

all

can

do

is

just

crash

the

whole

machine.

H

On

this

is

Vinay

I

think

we

touched

upon

this

earlier

in

our

prototype,

where

we

experimented

with

the

enabling

OOM

kill

disable

for

specific

pods

via

annotations.

And

if

we

combine

this

with

vertical

scaling,

I,

think

there

might

be

a

solution

where

the

application

is

paused

just

until

the

resizer

can

determine

you

need

or-

and

you

can

get

more

instead

of

killing

the

pod

it.

It

goes

into

a

pause

state

until

VPA,

for

example,

is

able

to

resize.

F

That's,

that's

probably

true.

Let

me

just

add

one

one

little

bit

of

information

about

this,

and

that

is

that,

unlike

a

lot

of

workload,

something

like

Postgres,

a

user

by

writing

a

bad

query

or

by

specifically

writing

a

query

that

needs

a

lot

of

memory.

Can,

if

you

allow

arbitrary

SQL,

can

consume

a

lot

of

memory.

F

So

it's

not

like

the

workload

can

be

guaranteed

to

be

within

a

certain

set

of

limits

very

easily

unless,

unless

you

only

run

a

well-written

application

against

it

and

then

you've

you've

benchmarked

it

and

you

know

how

much

memory

it

needs,

there's

really

no

way

to

prevent

the

memory

usage

from

going

up.

So

you

know

Postgres,

I

think,

is

a

little

bit.

You

know

unique

in

that

regard

compared

to

a

lot

of

typical

containerized

applications,

but

I.

You

know,

I

also

think

that

persistent,

you

know,

database

type

workloads

are

becoming

more

and

more

common.

F

So

that's

also

different

than

a

lot

of

workloads

where

you're,

better

off

just

killing

the

container

and

spinning

up

a

new

one

in

Postgres's

case.

You

don't

really

want

to

do

that.

You

really

would

rather

either

do

a

SIGINT

or,

worst

case,

a

SIGTERM;

in

either

of

those

cases,

you'll

you'll

quell

the

memory

usage

and

you

will

not

have

any

dalire.
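
The gentler escalation described above, SIGINT first and SIGTERM only if that does not help, could look something like the sketch below. This is an assumption-laden illustration rather than anything Postgres or the kubelet ships: the `quell` function, the `still_over` callback (a caller-supplied check for whether memory pressure persists), and the grace period are all hypothetical. For a Postgres backend process, SIGINT cancels the running query and SIGTERM ends that session.

```python
import os
import signal
import time
from typing import Callable


def quell(pid: int,
          still_over: Callable[[], bool],
          grace_seconds: float = 5.0,
          send: Callable[[int, int], None] = os.kill,
          poll_seconds: float = 0.05) -> str:
    """Escalate SIGINT then SIGTERM until still_over() reports relief.

    Returns the name of the signal that resolved the situation,
    "not needed" if nothing had to be sent, or "still over" if
    neither signal helped within the grace period.
    """
    if not still_over():
        return "not needed"
    for sig in (signal.SIGINT, signal.SIGTERM):
        send(pid, sig)
        deadline = time.monotonic() + grace_seconds
        while time.monotonic() < deadline:
            if not still_over():
                return sig.name
            time.sleep(poll_seconds)
    return "still over"
```

The `send` parameter defaults to `os.kill` and exists only so the escalation order can be exercised without signalling a real process.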

A

First,

for

those

who

can

stay

on

2

or

3

minutes,

extra

I

wanted

to

just

ask

one

other

question:

that's

ok,

so

that

I've

seen

this

similar

feedback

for

other

applications

that

I'm

a

cannabis

problem

to

clear

like

in

analytic

spaces

and

especially

where

they're

running

multiple

processes

within

a

container.

The

one

thing

I

was

curious

about

was.

F

There's

a

couple

of

pieces:

there

I

think

worth

addressing

one,

the

OOM

score

adjust

minus

a

thousand

only

applies

to

the

postmaster,

which

is

really

just

a

listener.

It

doesn't

use

much

memory

and

part

of

the

reason,

for

that

is

it's

orchestrating

all

of

the

other

processes,

the

other

processes

we

normally

score

it.

F

We

normally

have

an

OOM

score

adjust

of

zero,

which

allows

them

to

actually

have

an

OOM

score,

and-

and

that's

so

that

the

runaway

and

the

other

thing

that

maybe

I

didn't

say

but

I

should

have,

is

that

Postgres

is

multi-process

architecture.

So,

every

time

a

client

connects

there

is

another

process

that's

spawned

by

the

postmaster.

The

postmaster

just

basically

grabs

the

incoming

connection

to

a

socket

and

forks

a

child

process.

F

The

child

process

does

all

the

work

so

and

and

if

you've

got

you

know

in

a

typical

scenario,

you've

got

two

or

three

hundred

maximum

connections.

You

might

have

three

hundred

Postgres

processes

running

inside

that

one

container,

each

one

handling

a

different

client

back-end,

but,

as

I

said,

only

the

postmaster

is

scored

at

minus

a

thousand.

The

rest

of

those

child

processes

and.

F

All

at

zero

for

OOM

score

adjust.

The

other

thing

I

will

say

is

our

experience

that

you

know

the

company

I

work

for,

we

have

a

Postgres

operator

that

is

actually

I,

think

probably

the

farthest

along

of

any

of

the

ones

that

are

out

there.

We

have

many

customers

that

are

running.

You

know

some

cases,

thousands

of

instances

of

Postgres

in

containers

on

Kubernetes

already

we

haven't

seen

a

widespread

problem,

but

we

have

had

people

complain

about

things

getting

you

know

OOM

killed

in

the

field

because

it

does

happen.
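
The postmaster-versus-backend split described in this discussion can be inspected on Linux through `/proc/<pid>/oom_score_adj`, where a value of -1000 exempts a process from the OOM killer. The sketch below is a rough illustration under that assumption. The helper names and the `proc_root` parameter (there only so the code can be pointed at a fake directory tree) are hypothetical.

```python
from pathlib import Path
from typing import Iterable, List

# Value the Linux kernel treats as "never pick this process as an OOM victim".
OOM_SCORE_ADJ_MIN = -1000


def read_oom_score_adj(pid: int, proc_root: str = "/proc") -> int:
    """Read the OOM score adjustment for one process."""
    path = Path(proc_root) / str(pid) / "oom_score_adj"
    return int(path.read_text().strip())


def killable_pids(pids: Iterable[int], proc_root: str = "/proc") -> List[int]:
    """Return the processes that are still eligible to be OOM-killed."""
    return [
        pid for pid in pids
        if read_oom_score_adj(pid, proc_root) > OOM_SCORE_ADJ_MIN
    ]
```

With a postmaster at -1000 and its child backends at 0, `killable_pids` would return only the backends, which matches the description that a runaway backend can be killed while the postmaster survives.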

A

Again,

I

apologize

that

we

had

a

lot

of

topics

on

today's

agenda

and

so

we're

trying

to

get

more

bandwidth

to

have

time

to

discuss

complicated

topics

for

the

crunchy

topic.

I

think

if

it's

okay,

I'd

like

to

do

a

deeper

dive

on

it

next

week

to

see

like

what

we

could

do

and

maybe

what

that

model

may

or

may

not

look

like,

and

thanks

again

for

joining

today

and

sharing

experience,

I

appreciate.

A

Yeah

I

guess

it

just

gives

us

time

to

think

through

it.

The

only

thing

I

I

feel

like

earlier

iterations

are