►

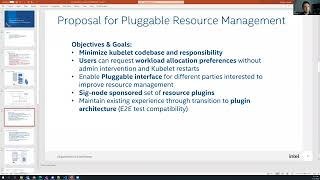

From YouTube: Kubernetes SIG Node 20220913

Description

SIG Node weekly meeting. Agenda and notes: https://docs.google.com/document/d/1Ne57gvidMEWXR70OxxnRkYquAoMpt56o75oZtg-OeBg/edit#heading=h.adoto8roitwq

GMT20220913-170500_Recording_3840x2160

B

C

D

A

E

F

F

So

in

terms

of

agenda

for

for

the

talks

today,

I

will

start

with

short

summary

of

the

current

state

of

Technology

what

we

have

inside

cubelet,

covering

mostly

CPU

management

memory

management,

topology

management-

how

it's

done

today,

very

short,

one

slide

overview

of

that,

and

then

we

will

go

through

our

proposal.

How

this

can

be

extended

or

basically

can

be

done

as

a

plugable

resource

management

mechanism,

we

will

have

some

overview

of

the

possible

architecture

and

and

what

kind

of

steps

we

are

planning.

F

So

it's

and

the

final

two

points

of

the

agenda

are

actually

how

we

want

to

proceed.

If

the

feedback

is

positive

of

the

approach,

what

we

are

planning

we

are.

We

would

like

to

open

a

cap

and

basically

start

a

PR

process

for

that,

and

to

show

you

that

this

is

actually

a

feasible

idea.

What

we

have

in

mind

I

prepared

a

small

demo,

basically

with

with

which

we

will

cover

at

the

end,

if

we

have

enough

time

feel

free

to

interrupt

me.

F

Basically,

as

you

know,

those

are

the

CPU

manager,

memory

managers,

topology

manager

and

Device

Manager,

and

this

already

shows

the

one

of

the

problems.

What

we

have

we

have

four

pieces

managing,

sometimes

the

same

Hardware

part

of

them

are

managing

different

Hardware

like

device

manager,

but

basically

one

of

the

issues.

What

we

see

three

of

them

are

not

extendable,

not

really

extendable,

they

are

hardly,

they

are

integrated

inside

cubelet

and

if

vendors

want

to

put

New

Logic

new

CPU

logic,

if

there

are

changes

coming,

this

is

very

hard.

F

So

keeping

up

the

up-to-date

with

Hardware

becomes

more

difficult

with

the

time

right,

and

this

led

this

kind

of

three

managers

they're

somewhat

limited.

They

are

not

exposing

the

hardware

up

to

the

level

what

we

we

would

actually

like

with

future

architectures,

and

this

led

also

in

in

the

community.

You

see

a

lot

of

custom

made

solutions

from

users

which

saw

those

limitations

of

of

a

CPU

manager

and

memory

manager

and

others.

F

So

a

lot

of

solutions

exist

out

there

and

yeah

we.

Another

drawback

is

basically,

if

you

do

configuration

of

of

this

components

like

the

managers,

usually

you

need

to

restart

cubelet

and

a

lot

of

the

configuration

is

done

through

from

administrator

and

the

the

level

of

configuration

which

the

user

can

do.

It's

quite

limited

and

right

and

always

has

to

go

through

this

mechanism,

the

other

drawback.

F

What

we

see

is

if

we

look

in

today's

topology

manager,

it

has

also

limits

already

on

today's

Hardware

or

the

hardware

coming

this

year,

basically

to

topology

manager

runs

into

limitations

because

of

more

complex,

triplet

architectures,

which

are

currently,

you

can

hardly

basically

work

with

with

the

ownership

manager

and

must

probably

lose

performance

right,

and

the

main

problem

for

us

is

actually

having

those

four

pieces.

And

if

you

want

to

push

let's

say

new

code

inside

this,

is

this

will

blow

up

cubelet?

It

will

make

it

bigger.

F

It

will

make

it

more

complex

right.

So

it's

really

hard

for

us

basically

to

extend

CPU

manager

memory

manager

having

it

ready

integrated

inside.

That's

why

we

are

looking

to

propose

a

plugable

architecture

where

vendors

can

propose

or

basically

write

their

own

plugins

or

for

CPU

management

and

the

other

kind

of

resource

management

resources.

F

So,

just

as

a

short

overview

of

the

architecture

of

current

cubelet,

we

looked

inside

the

code,

the

main

building

blocks

of

the

current

architecture.

You

have

a

a

container

manager

which

takes

care

for

the

lifetime

events

and

basically

inside

cubelet,

you

have

the

four

different

managers

and

the

lifetime

events

are

called

for

all

these

four

managers

between

the

managers.

You

have

also

separate

connections,

usually

they

are

going

mostly

from

from

the

topology

manager

calling

or

other

managers

and

the

other

interesting

piece.

F

What

what

we

see

is

device

manager

is

the

only

one

clickable

so

which

has

some

sort

of

register

mechanism

and

device

plugins.

As

we

know,

those

are

demon

sets

when

allocated

when

basically

created,

they

call

the

registration

of

device

manager

and,

and

they

can

be

basically

instantiated

in

the

runtime.

The

the

size

has

some

meaning

here.

We

we

denote

with

the

size,

actually

the

complexity

of

those

Miniatures.

If

you

look

inside

the

code,

topology

manager,

CPU

manager,

number

of

lines

of

code

of

Dozer

is

growing

and

they're

becoming

more

and

more

complex.

F

The

other

kind

of

important

message

of

the

current

or

important

aspect

of

the

current

architecture.

What

we

currently

see

yeah

one

one

drawback:

if

you,

if

we

look

into

the

topology

manager,

you

have

the

bit

life

cycle,

basically

for

for

containers

and

the

issue

what

we

saw

when

looking

inside

the

code,

it

actually

has

a

lot

of

side

effects.

F

This

kind

of

calls

inside

the

topology

manager

there

in

the

mid

life

cycles,

they're

they're,

a

lot

of

allocations

happening

already

on

devices

and

other

managers

which,

which

makes

it

hard

to

understand,

makes

it

hard

to

predict

from

from

what

what

happens

from

in

terms

of

later

extensions,

if

you

extended,

a

lot

of

things

can

break

almost

probably

so.

This

is

one

of

the

design

things

with

which

we

currently

also

found

out.

F

So

basically,

we

would

like

those

managers

and

and

those

resource

managers

basically

to

live

outside

of

cubelet

to

be

pluggable.

So

if

you

have

a

CPU

resource

manager,

basically

this

this,

the

idea

is

to

be

outside

of

cubelet

and

similar

to

device

plugins.

It

connects

to

a

to

certain

registration

service

and

it

becomes

visible

for

cubelet.

F

F

F

H

F

F

F

We

would

like

to

keep

existing

technology

the

existing

existing

kind

of

implementation

lodging

still

inside

kubernetes.

So,

basically,

we

propose

to

guard

to

introduce

Gatekeepers

for

the

New

Logic,

which

will

be

basically

which

will

disable

the

standard.

Cpu

managers

topology

managers,

when

enabled

so

basically

they

will

be

mutually

exclusive,

and

this

will

be

controlled

through

a

gatekeeper,

router,

end-to-end,

test

compatibility.

This

will

be

our

Target.

We

already

started

working

on

that

in

the

Prototype

and

we

showcased

that

it

is

feasible.

F

Performance

impact

is

also

important

for

us.

As

this

flexibility

comes

with

a

price,

we

would

want

to

know

that,

and

we

would

like

to

put

the

cards

open

on

the

table

for

the

community

to

see

what's

the

price

having

a

plug

texture.

Basically

and

last

but

not

least,

we

want

to

support

device

manager,

make

a

device

manager

capabilities

as

they

are

existing

today.

So

we

don't

want

to

change

anything

there.

F

G

F

F

F

You

have

basically

some

sort

of

admission

cycle

done

with

with

allocate

at

container

functions,

remove

containers

when,

when

you

are

basically

deleting

containers

and

some

of

the

managers

like

CPU

manager,

have

an

internal

reconciled,

Loop,

which

we

want

to

cover

through

a

special

reconcile

event,

those

events,

basically,

after

certain

allocation

happens,

we

we

return.

If

the

allocation

was

successful,

we

will

return

the

CPU

set

or

the

certain

resource

or

location.

F

This

is

usually

a

set

of

yeah,

the

the

structural

set

of

assigned

CPUs.

No,

the

idea

is

that

actually,

our

plugins

do

not

do

any

allocations.

They

return

the

desired

allocation

Set

to

the

cubelet

and

cubelet

then

basically

calls

the

runtime

service.

The

runtime

service

provides

an

interface

to

allocate

all

the

desired

resources

and

we

use

it

inside

kubernet.

So

the

this

this

makes

it

nice

in

terms

of

plug-in

perspective.

F

This

is

the

main

mechanism.

What

we

are

thinking

about,

how

to

cover

basically,

this

kind

of

applicable

resource

management

in

terms

of

implementation

of

possible

plugins,

we

started

with

basically

something

like

which

covers

the

existing

functionality.

What

you

have

today,

we

have

a

plugin

which

had

combines

the

the

current

CPU

manager

memory

manager

and

to

biology

manager

together,

they're

one-to-one

the

same

code.

What

you

have

in

the

current

kubernetes

and

they

are

not

duplicated,

so

you

don't

have

a

code

duplication.

F

It's

just

instantiation

of

those

managers

inside

the

plugin,

so

actually

code

is

not

being

moved.

Code

is

not

being

duplicated

in

that

sense,

as

we

know

the

Poetry

manager

in

in

this

space,

we

we

want

to

structure

the

approach

in

several

phases.

In

the

first

phase,

we

basically

will

need

some

sort

of

integration,

because

topology

manager

basically

relies

on

hints

from

devices

and

other

topology

resources

and

yeah.

F

F

So

we

want

basically

a

single

point

of

contact

manager

to

introduce

a

single

point

of

contact

measure

inside

cubelet

for

this

kind

of

pluggable

resources

and

to

maintain

compatibility

with

Device

Manager

and

basically

to

to

not

to

change

the

code

we

just

want

to

instantiate

device

manager

inside

and

all

the

the

logic

basically

will

remain

the

same

as

you

can.

It's

in

device

manager,

no

code

changes

are

required

here.

F

F

So

this

would

be

our

phase

two

suggestion.

If

we

can

come

up

with

the

approach

where

we

can

handle

all

all

resources

through

a

single

point

of

contact

single

manager

inside

inside

cubelet,

we

call

it

resource

manager.

It

supports

basically

the

same

kind

of

events,

what

we

discussed

so

far,

and

it

is

Backward

Compatible

with

any

device

plugins.

What

you

have

currently

and

you

can

then

add

new

resource

plugins,

and

you

have

also

backward

compatibility

with

with

with

the

standard,

CPU

manager,

memory

manager,

topology

manager,

logic.

I

I

So

we've

tried

to

do

an

exercise

similar

to

what

you're

doing

now

at

some

point

in

the

past,

I

wouldn't

say

it

failed,

but

it's

always

a

big

undertaking,

because

you

know

there's

so

many

things

that

are

in

place.

We've

been

doing

things

the

way

they

are.

You

know

I'm,

not

necessarily

happy

with

the

current

architecture

that

we

have

today.

I

It

doesn't

feel

like

plugins

for

any

of

this

stuff

really

actually

belong

in

kubernetes

in

the

kublet

anywhere

at

all

and

actually

belong

down

at

the

container

runtime

level

kind

of

how

the

cni

stuff

has

now

moved

down

to

be

completely

runtime

plug-in,

rather

than

something

that's

that

runs

in

a

pod

inside

kubernetes,

and

we

can

talk

about

the

details

of

some

of

that

later

and

I'm,

not

necessarily

saying

that

we

don't

want

to

go

this

direction,

because

this

definitely

will

clean

things

up.

Quite.

J

I

If

we

decide

this

is

the

right

thing

to

do

and

there's

people

to

actually

put

in

the

effort

to

work

on

it,

but

I

do

think

we

need

to

sit

down

and

have

a

conversation

about

whether

we

even

want

to

continue

maintaining

these

components

the

way

they

are.

You

know

the

one

thing

that

that

jumped

out

of

me

when

you're

talking

about

you

know

making

sure

we

have

backwards

compatibility

with

the

existing

CPU

memory

manager,

topology

manager,

CP.

F

Correct

so

we

are

on

the

same

page,

so

basically

here

or

our

goal

would

be

also.

This

new

architecture

should

not

carry

this

baggage.

What

you

have

with

the

old

architecture,

but

the

plug-in

mechanism

allows

you

to

have

a

single

plugin

which

which

can

emulate

more

or

less

the

architecture.

So

if

people

want

the

old

architecture,

they

can

instantiate

a

single

plugin

which

does

it

and

It's

Made

Simple

for

them,

but

yeah.

F

Our

idea

here

is

keep

the

interface

or

Define

a

new

interface,

which

is

not

driven

by

the

alt

architecture,

but

it's

actually

driven

by

yeah

a

meaningful

management

of

this

kind

of

plugable

resources,

and

then

we

we

want

to

emulate

more

or

less

that

the

current

state,

so

that

users

still

can

use

what

they

are

used

to

it.

So.

I

I

Obviously,

because

not

everyone's

going

to

be

able

to

move

off

of

this

style,

Resource

Management

to

dra,

maybe

they

don't

even

want

to

move

off

of

the

old

style

onto

dra

going

forward

right,

so

we're

always

going

to

have

to

have

some

way

to

have

these

two

things

coexist,

and

so

you

know

if

this

is

the

way

to

clean

up

the

old

style,

Resource

Management

that

I'm

that

I'm

all

for

it.

If

that's

what

the

community

decides

that

we

want

to

do

so,.

C

C

C

C

To

ignore

this

entire

processing

piece

and

if

a

customer,

for

instance,

has

10

nodes

of

which

they

want

to

do

a

special

resource

plugin

they

can

so

when

you're

talking

about

research

like

it's

CERN

with

Ricardo

over

there,

then

you

can,

they

can

say,

oh

well,

we

want

to

do

some

new

examples

to

see

if

we

can

get

speed

ups

for

certain

types

of

resource

managers

and

do

there.

This

also

gets

some

of

the

community.

C

You

know

the

vendors,

because,

where

I

didn't

tell

right

we're

not

going

to

hide

that

and

but

it

lets

Avengers

start

releasing

specialty

resource

plugins

for

CPU

Etc

without

complicating

the

community,

so

the

community

no

longer

has

to

deal

with

it.

So

it

lets.

You

know

the

maintainers

sleep

at

night

and

focus

on

keeping

things

simple

and

running

and

lets

the

vendors

deal

with

the

the

complexity.

A

So

that's

actually

not

true

my

co

for

all

those

things.

It

is

a

favor

of

those

vendor

which

is

like,

for

example,

storage.

Vendor

here

is

the

different

type

of

the

resource

computer

resource

or

whatever

resource

offer

winter,

not

really

necessary.

Fever

of

the

kubernetes

offer

right,

so

they

also

have

different

type

of

the

platform

vendor.

So

there

are

so

many

of

the

different

plugin.

So

then

there's

the

compatibility

integration,

all

those

kind

of

things.

A

So

my

my

actually

the

presentation

here

I,

because

we

try

to

have

the

separate

of

the

resource

management

from

day

one

actually,

but

because

that's

over

complicated.

So

we

want

to

make

that

simple.

So

that's

why

we

have

next

building

but

from

day

one

we

think

about.

Maybe

we

should

separate.

We

even

talk

to

Docker

packages

say

that

switches,

a

name

is

Kevin

earlier

said

we

from

day

one

we

basically

start

to

say:

oh,

can

we

push

down

that

one

to

The,

Container

level

but

the?

A

But

that

time

we

really

believe

push

it

down

to

down

level

will

be

helpful,

but

after

over

seven

years

on

this

kubernetes

actually

sometimes

I

feel

like

what

you

just

say

actually

the

different

level

of

the

problem.

But

the

one

comment

earlier

try

to

say:

oh

this

is

simplified,

two

things.

The

motivation

I

disagree

here

clearly

disagree

when

it

is

restart

kubernetes

most

time

it

is

the

event

winter

offer

or

maybe

like

the

platform

offer

or

whatever

kubernetes

offer.

A

The

uid

will

endorse

what

kind

of

the

plugin

what

kind

of

the

resource

they

are

going

to

support

offer

to

their

customer.

So

anyway,

they

are

going

to

promising

those

nodes

province

in

those

resource

and

the

promising

those

clusters,

so

restart

kubernetes

is

really

cheap

things.

It's

super

cheap.

It's

not

like

the

restart

note

right.

So

when

you

pack

into

some

new

resources,

sometimes

you

to

craft

the

kernel,

reboot

not

even

mentioned

kubernetes

restart.

So

that's

not

the

problem

for

customer

actually

for

user.

A

A

lot

of

cases

that's

hidden

next,

also

just

the

hidden

things

like

the

kubernetes

have

will

restart

the

node.

When

certain

error

detector,

then

we

restart

the

node.

We

could

restart

the

container

D,

also

maybe

next

kubernetes,

so

they

have

the

different

complexity

to

the

customer,

but

that's

hidden

to

the

customer,

restart

the

kubernetes

most

cheap

one.

So

analysis.

What

happens

in

that?

Oh

that

just

solved

that

to

make

the

kubernetes

easy.

Actually

it's

not

I

think

about

the.

A

So

when

you

have

the

more

things

you

know

you

have

to

combine,

if

you

look

at

everyone,

look

at

the

cncf

that

huge

gland

and

then

people

keep

asking

me

my

top

question.

Actually

I

received

from

the

kubernetes

user

today

is

down.

Can

you

tell

me:

what's

the

opinionated

offer

from

the

kubernetes

I

can't

because

we

have

so

many

plugins

we

have

so

many

we

are

have

that

one

way

is

the

good.

Is

the

ecosystem

for

the

other

way,

users

don't

know

what

they

should

be

choose.

F

Oh,

in

any

case,

you

will

not

have

some

a

double

number

of

plugins.

So,

as

you

as

you

see

today,

for

example,

you

have

one

one

GPU

plugin,

you

have

one

I,

don't

know

adapter

network

adapter

plugin,

so

they

are

very

clear

what

what

kind

of

plugins

you

will

have

there

are

not

thousands

of

them

and

in

terms

of

performance,

okay,

you,

you

have

a

little

bit

better

performance

if

you

live

inside

cubelet

and

all

stuff

calling

the

managers,

but

the

actual

performance

is

the

workload

performance.

F

C

That

that

maybe

is

partially

slanted

by

my

experience

with

kubernetes,

because

my

initial

thing

I

was

working

at

a

AI

startup

equivalent

and

we

had

a

hell

of

a

time.

We

started

couplet

because

we

have

complex

networking

and

so

every

time

you

restarted

Google

it

didn't

play

in

with

the

network.

So

actually

we

started

Kublai.

It

meant

I

had

to

restart

the

node,

which

meant

to

my

neckline

and

I.

C

Don't

think

maybe

that

environment

was

unique

but

I

don't

think

it's

necessarily

unique

when

you're

talking

about

complex

things,

so

I

think

if

you're

talking

just

regular

couplet

with

you

know

a

very

simple

infrastructure,

maybe

that's

true,

but

for

us

deploying

a

Daemon

set

was

easy.

If

we

were

going

to

have

to

change

anything

on

the

Kublai,

it

stopped

us.

So

we

didn't.

Does

that

make

sense.

A

So

once

you

have

this

plugable,

so

you

are

going

to

have

the

so

many

different

demon

side

to

different

implementation,

but

I

agree

with

the

atnas

earlier

say

anyway.

Today,

even

today,

we

have

the

different

of

the

type

of

the

plugin

for

this

device

plugin

right,

so

we

already

have

that.

My

point

is

to

see.

A

This

is

why

we

are

simplify,

actually

is

not

I

just

want

to

make

them

more

clear,

because

once

you

have

that

API

there

will

be

count

on

the

vendor

right

to

implement

as

well

so

end

up

which

one

for

customer

to

using

that's

the

cut.

Kubernetes

Community

always

try

to

answer

that

question,

especially

on

the

story.

Network

notice

that

there

will

be

have

tons

of

those

questions

you

need

to

answer

so

customer

access,

the

user

kubernetes

user

today

is

actually

most

problem

is

too

complicate,

because

so

many

choices.

G

C

A

B

B

Topology

manager,

what

is

the

ideal

plan

is

Kublai

will

be

configured

with

configuration

specific

for

resource

manager

and

then

how

admin

will

synchronize

like

resource

manager

set

and

like

Google

configuration.

That

will

be

a

little

bit

complicated

task.

So

maybe

what

Kevin's

suggesting

to

move

this

entire

logic

to

continue?

F

G

F

Being

able

to

choose

policies,

basically,

if

you

think

about

we,

we

spoke

with

some

some

different

customers

like

Telco

companies

and

so

on.

They

expressed

the

need

to

change

policies

like

between

static

and

I.

Don't

know

different

topology

policies.

They

want

to

express

it

in

in

a

spec

or

through

annotation

or

something

they

don't

want

to

express

it

through

a

configuration,

so

some

admin

might

might

for

some

admin

might

make

sense

to

configure

the

cluster

to

have

a

certain

policy,

and

then

users

can

deploy

on

that.

F

F

F

I

I

You

know

for

these

additional

fields

that

were

very

specific

to

topology

management,

and

then

the

proposal

came

on

the

table

where

we

could

just

use

annotations,

and

then

you

know

the

argument

always

against.

That

is

that

we

don't

want

the

you

know.

Kubernetes

code

base

itself

to

be

inspecting

these

opaque

types

that

can

be

embedded

in

annotations

that

don't

have

any

meaning

to

kubernetes

itself.

I

B

This

is

what

you

get

and

that

there

is

no

like

back

door

with

the

plugin

model.

I

think.

Maybe

we

need

to

and

I

I,

don't

I

don't

have

anything

against

it

like

I

know

that

there

are

many

problems,

but

maybe

we

can

come

up

with

idea

like

how

to

like

split

people

on

roles

and

decide,

which

shows

will

do

what

and

then

have

put

at

least

some

limitations

on

what

the

resource

plugins

will

do.

Otherwise,

we'll

get

into

what

Kevin

describes

annotations

yeah.

E

G

I

Is

actually

one

of

the

things

dra

tries

to

help

with

because

with

the

flame

parameters

they're

defined

by

the

driver,

they're

checked

as

part

of

a

crd?

Rather

than

being,

you

know,

opaque

annotations.

But

you

know

it's

a

completely

different

mechanism

using

something

like

dra

than

this

simple

device

and

CPU

and

memory

management

that

we

have

in

in

this

architecture.

C

I

D

H

Obviously

we

need

to

be

able

to

manage

resources

across

pods

CPUs.

You

know

other

resources,

gpus,

we

get

a

lot

of

requests

for

this

it

we

want

to

be

able

to

run

small

pods

or

long

running

pods

with

small.

You

know

quickly,

running

Services,

fast

services

in

a

container

and-

and

that's

it's

a

primary

case

even

in

kubernetes

today,

right,

it's

not

just

all

about

long

running.

You

know

pods

that

are

scaled

across

a

cluster

right.

A

I

have

to

do

the

time

check

here

so

so

can

we

convert?

Can

you

share

the

the

slide

back

to

the

meeting

agenda

and

also

can

we

cover

this

to

the

cap

and

continue

discussing

there

and

Analysis

Kevin

and

we

need

we

have

the

several

proposal,

especially

for

the

dynamic

resource

allocator.

So

can

we

converging?

Can

we

see

what

is

clearly

defined?

What's

the

scope,

because

at

this

moment

there's

some

overlap

here?

A

Can

we

can

we

agree

on

what

kind

of

things

and

who

is

going

to

address

what

one

thing

otherwise

we'll

be

I

can

say

that

immediately

we

have

to

slow

down

both

right

because

until

we

figure

out

because

we

don't

want

two

things

over

liable

to

address

the

same

problem,

and

so

can

we

address

that

first

and

and

then

say

the

both

different

type

address

the

what

kind

of

problem.

What's

the

goal?

I

also

agree

with

the

currency,

the

needs.

A

If

we're

doing

this

kind

of

things,

we

may

not

want

to

carry

off

the

old

baggage

here

and

but

I

think

there's

a

certain

feature

parity.

Maybe

we

need

to

consider

you're,

not

unnecessary.

You

have

to

choose

executive

feature

parody,

but

do

we

need

to

consider

say

okay

well,

for

customer

ask,

for

it

hasn't

used

to

ask

for

this

kind

of

things

how

we

are

going

to

equivalent

something

I

think

that's.

G

F

D

I

think

don

what

we

can

do

is

like

we

can

probably

move

the

planning

to

the

top

of

the

list

next

week

and

meanwhile,

what

Reuben

and

I

are

asking

everyone

to

do

is

take

a

look

at

the

document

and

then

just

tell

us

that

you

have

the

time

to

work

on

that

list.

I

know

that

the

top

seven

that

that

are

carried

over

from

125

like

people

are

actively

working

on

it,

but

that's

the

second

table.

D

A

K

A

Oh,

if

you

are

okay

with

the

move

to

next

week,

oh-

and

that

will

be

wonderful

if

you

want,

but

otherwise

I-

think

about

that

now

we

can

go

over

one

by

one.

Hopefully

we

can

have

some.

Sometimes

if

we

don't,

we

can

see

what's

what's

what

we

do

to

do

with

demo.

Yeah

I

definitely

want

to

see

the

diamond

also,

but

we

can

do

through

some

other

channel

yeah.

K

Yeah

so

for

the

windows

here,

I

sandbox,

Fields,

I,

think

this

is

there's

still

some

active

discussions

going

on

in

that

in

that

pull

request.

I

think

that

my

question

isn't

James

isn't

here

today,

but

it

seemed

like

a

couple

of

weeks

ago

we

were

settling

on

having

the

same

sets

of

fields

for

the

CRI

stats

and

then

last

week

there

was

a

demo

of

a

whole

bunch

of

new

Linux

specific

stats,

getting

added

which

made

me

kind

of

reconsider

and

say.

J

L

L

We

have

a

lot

of

other

metrics

today

that

people

use

VSC

advisor,

and

so

one

of

the

ideas

we're

coming

up

with

in

that

cap

was

that

we're

going

to

add

basically

the

missing

fields

that

were

served

by

sea

advisor

in

the

CRI,

and

so

that

way

the

container

runtime

could

serve

them

and

then

the

kublic

could

basically

expose

them

as

Prometheus

metrics

kind

of

for

backwards

compatibility

for

C

advisors.

So

that's

kind

of

the

context

of

why

we're

wanting

to

add

those

the

rest

of

those

Linux

stats.

J

So,

given

the

change

in

scope

of

the

that

we're

thinking

of

passing

these

metrics

up

through

the

CRI,

rather

than

having

the

runtime

report,

the

C

advisor

metrics

directly

I

do

think

that

it

makes

sense

to

have

separate

separate

objects.

We

just

have

to

kind

of

figure

out

what

the

oh

like,

if

we're

going

to

have

any

overlap

between

those

objects

or

if

we're

going

to

have

them,

be

totally

distinct

and

have

the

handling

be

totally

distinct.

Between

platforms.

L

L

A

H

G

E

K

Update

the

CRI

probably

update

the

CRI

API,

because

the

all

of

the

name

spacing

options

are

under

the

Linux

run,

pod

sandbox,

config

field

and

I

think

we

would

want

to

have

some

options

either

more

generic

or

on

the

Windows

run,

pod

sandbox

config

field,

and

then

the

rest

of

the

cubelet

updates

are

just

filling

in

those

those

fields.

If

the

pod

spec

says

to

use

the

host

network

mode,

so

I

was

wondering

if

anybody

had

any

concerns

with

moving

forward

with

that.

K

E

I'm

sorry

I

was

in

mute.

So

what

what

do

you

have

like

two

minutes

left

so

I

had

a

like

short

update

on

the

QR

spices

that

has

been

updated

lately

and

I'm

kind

of

trying

to

get

it

now

included

in

one

126,

but

I

think

we

can

adopt

it

next

week

if

we

are

now

running

out

of

time,

because

I

have

a

few

slides

of

that

kind

of

what

has

been

happening

there.

So.

A

So

Atlas-

and

we

only

have

the

one

minute

sorry,

but

I

can't

stay

longer

anyway.

I

asked,

can

you

stay

longer

and

also

another

possibility?

Is

this

we

record

the

demo

and

put

the

link

record

of

the

link

in

here

and

the

next

week

we

make

another

announcements,

so

people

make

sure

people

didn't

miss

those

your

demo.

A

F

Yeah,

let

me

share

so

I

hope.

You

still

see

my

screen

right

in

terms

of

demo.

What

you

will

see

is

I

have

a

plugin

where

I

reference,

two

kind

of

containers,

one

container

has

CPU

manager

set

to

none,

and

one

container

has

a

basically

one

plugin

that

will

be

with

CPU

manager

and

on

the

other.

Plugin

will

be

CPU

static,

CPU

manager

with

one

one

kind

of

reserved

CPU,

the

the

change

is

just

changing

the

container

it's.

F

F

F

Right,

you

will

see

something

like

that

in

terms

of

picture,

not

in

your

spins

course

can

move

around

or

loads

can

basically

Linux

scheduler

decides

which

chord

to

ticket,

then

I

delete

the

workloads.

I

delete

the

the

plugin

and

and

I

do

a

small

modification.

I

use

the

static

CPU

manager,

just

by

changing

the

container

choice.

F

If

we

look

at

the

Lots,

basically

you

will

see

that

becomes

more

yeah

it.

This

time

it's

a

static,

CPU

manager

and

yeah

I

can

now

try

again

the

workload.

You

will

see

a

little

bit

different

Behavior

so

that

the

course

will

not

move

around.

They

will

be

actually

compact

placed,

but

it

takes

some

time

until

the

workload

Comes

live

foreign.

F

From

the

original

CPU

manager,

it

just

lives

in

a

plugin,

so

nothing

changed

what

what

we

are

running

but

yeah.

This

is

the

the

static

CPU

manager

Behavior.

What

you

could

expect

so,

just

just

to

demonstrate

that

you

can

change

policy

without

need

to

restart

anything.

You

just

instantly

the

new

plugin

and

it's

done.

A

Thank

you

so,

just

like

what

earlier

discuss

what's

the

next

step

right

so

there

we

can

have

the

work

group

and

the

discussing

this

one,

and

especially

on

the

what

we

should

converge

in

what

we

should

separate

from

the

dynamic

the

resource,

allocator

and

then

another

one.

It

is

then

covered

this

into

a

cap.

Then

we

move

forward.

Let's

see,

what's

yeah.

I

And

so

I'm

happy

to

take

part

in

the

networking

group

and

I

know

that

Dynamic

resource

stuff,

pretty

well

and

I,

also

know

the

existing

components

that

they're,

trying

to

you

know

abstract

out

here

really

well

so

yeah

I

think

that

that

sounds

like

a

good

plan.

That's

strength,

Converge

on

what

how

these

two

things

differ

and

what

the

goals

from

each

of

them

are

separately

and

we

can

move

forward

from

there.