
From YouTube: Secrets Store CSI Community Meeting - 2022-03-17


A

Hello and welcome to the Secrets Store CSI call, sponsored by the CNCF. Today is March 17th, 2022. Just to let everyone know, this call is under the guidance of the CNCF and the code of conduct is in use. If you're not familiar with the code of conduct, please visit the repo and view the code of conduct markdown file. All right, so welcome, everyone.

A

Almost a couple of weeks ago, I believe 10 days ago, so that's the latest release on the driver and, I believe, on the provider side too; correct me if I'm wrong. There's not much of an agenda today, so I think what we'll do is open it up. If there are any comments or anything anyone wants to discuss, bring them up; if not, we can just go through the issues list and see if there are any issues out there that we need to talk about or highlight to the community.

B

Yeah, I think a quick update: we merged, I think, about six PRs for the Helm charts, and then also a CVE fix. Yeah, so the third PR, 898, we merged that, and I have a PR open to cherry-pick it to the release branch. We also merged a PR to fix a CVE in the base image. So we were actually thinking, if there's nothing else, we can cut 1.1.2 next week, so that we have a patched version available.

A

Okay, is this the cherry-pick that you were referencing there? That's right, yeah. Okay. And then the first PR is also ready for review. It's from a new contributor. I had opened an issue about documenting all the command-line flags that we have for the driver; today, the only way a user would know about them is to go look at our main.go. So basically we're adding them to our documentation. So, yeah, whenever anyone gets a chance.

A

All right, you initially mentioned there was one particular issue that you wanted to chat about? Yeah, and I may also mention the other one that I created. So the first two issues, I think, are the ones we should discuss. The first one is specific to the driver. The second one is more of a general Kubernetes enhancement, I guess, but we can go through them in the same order.

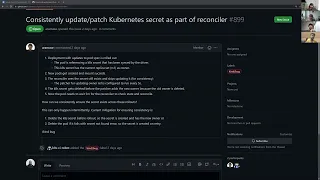

Yeah, yeah, so: consistently update, patch, community, reconcile. Okay, yeah. So I tried to summarize what the problem is.

A

So the thing is, let's say a user creates a deployment with one replica today, references the CSI driver, and also syncs it as a Kubernetes secret. When that deployment gets created, we do everything: the pod gets created, the provider gets called, the provider calls the external secret store and returns the secret, and then at that point, in our NodePublishVolume, we mount it and say everything is good. Then we create the SecretProviderClassPodStatus.

A

Then we have a reconciler which watches the SecretProviderClassPodStatus. It says: okay, this is created now; let me go check if the user also wants to sync as a Kubernetes secret. If the secret does not exist, it will go create it, but it doesn't update it, because we don't want to introduce any kind of inconsistency across the data. The only controller that updates an existing Kubernetes secret today is the rotation reconciler.

A

So this is without rotation in the picture. After they set up everything, everything works. Also, when this Kubernetes secret gets created, we add the ReplicaSet as the owner of the Kubernetes secret, because we try to tie their lifetimes together. Now let's say they go and do a rolling update of this deployment: they've made changes to their application, so they're doing a rolling update.

A

When they do a rolling update, a new ReplicaSet gets created and the new pod comes up, and then the old pod goes away: the new ReplicaSet comes in and the old ReplicaSet goes away. During this time, what happens is that when the new ReplicaSet gets created, the mount succeeds and our secret sync controller triggers.

A

It sees that the old secret exists, so it skips creating the secret. But then, after the check is done, the secret actually goes away, because the old owner gets deleted. Then the pod, when it's trying to start up, says: hey, look, I need the secret, but I can't find it, so I'm just going to be stuck in the ContainerCreating state until the secret gets created. And our reconciler only checks every five minutes whether the current state of the world matches the desired state.

A

So after five minutes it eventually sees the secret missing and creates it, but there is a five-minute delay that the user can run into. This is a very subtle race condition that doesn't happen all the time; it's very intermittent. If your cluster is under really high load, that is when garbage collection takes a long time, and that is when this can happen. Right now, the only way to mitigate it and get deterministic behavior is a step they take before doing a rolling update.

A

They delete the existing secret, so that when the new pod comes in, the secret will not exist and will be created. The second option is, if they see the pod in the failed state, they go and delete the pod; that triggers the whole flow for the mount and sync and everything, and that works. So I created an issue. I tried to repro this and was not able to, but I did find one user who hit the issue.

A

The new pod... the pods reference this Kubernetes secret as environment variables, so when the secret doesn't exist, the pod just doesn't start running at all, because the container runtime says: hey, I didn't find the secret. Yeah, and then it just gets stuck. And the weird thing is, when it gets stuck in that state there is no retry, so the pod stays stuck like that forever until it's manually deleted. Wow, okay. So even if you create the secret, they don't see it, so they have to go and restart it? Sometimes.

A

Another question: a deployment has one behavior, but Kubernetes allows you to change how new things are deployed, right? Like, you can say delete everything and then start it up; it's not always a rolling update. Is that true? We can't just assume that the new pod will be created before the old pod is deleted, right?

A

If we don't assume that, that's actually good, right? If the new pod gets created only after the old one is deleted, then that is very deterministic: in that case the secret goes away, then the new pod comes in, doesn't find the secret, and we create it. But I think only for deployments do the ReplicaSets have this revision history limit.

A

So if you roll out a new version of the deployment, it just creates a new ReplicaSet, and once the ReplicaSet conditions are met, it goes and deletes the old replicas, but the old ReplicaSet itself doesn't necessarily get deleted. Even there, you have a configuration option for how many revisions to keep. So if you say keep 100 revisions, the old ReplicaSets will never get deleted.

A

So if you do a completely new deployment, like what Tommy said, where you delete and create a new one, you're good, because it's a brand-new deployment. Or if you have a revision history, you're actually safer, because Kubernetes won't delete the older ReplicaSets, so your secret will still exist. But if you set it to zero, then basically there's that race where Kubernetes is deleting it while we are checking it: we see it, but it gets deleted after we see it.
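
The revision-history point can be illustrated with a simplified Python model. This is a sketch, not the Deployment controller's real cleanup logic: it assumes the controller keeps the current ReplicaSet plus up to `revision_history_limit` older ones, and that a synced secret is garbage-collected once none of its owner ReplicaSets remain. The ReplicaSet names `rs-v1` and `rs-v2` are hypothetical. For reference, a Deployment's `spec.revisionHistoryLimit` defaults to 10.

```python
"""Simplified model: does a synced secret survive a rollout?

Assumptions (hypothetical, for illustration only):
- revisions are listed oldest to newest; the last one is the current ReplicaSet
- cleanup keeps the newest `revision_history_limit` old ReplicaSets
- the secret is deleted once none of its owner ReplicaSets are kept
"""


def kept_replicasets(revisions: list[str], revision_history_limit: int) -> list[str]:
    """Return the ReplicaSets that remain after old revisions are pruned."""
    current, old = revisions[-1], revisions[:-1]
    kept_old = old[-revision_history_limit:] if revision_history_limit > 0 else []
    return kept_old + [current]


def secret_survives(owners: set[str], kept: list[str]) -> bool:
    """A secret survives garbage collection while at least one owner is kept."""
    return bool(owners & set(kept))


if __name__ == "__main__":
    revisions = ["rs-v1", "rs-v2"]  # rollout from v1 to v2
    owners = {"rs-v1"}  # secret still owned only by the old ReplicaSet
    for limit in (0, 10):
        kept = kept_replicasets(revisions, limit)
        print(limit, kept, secret_survives(owners, kept))
```

With a limit of zero the old ReplicaSet is pruned and the secret it owns goes with it, which is the window the race needs; with the default of 10 the old owner is retained and the secret stays.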

A

So this scenario happens. It's a very weird race condition, and it happens when none of these things are in place. It goes like this: the controller checks, the secret exists, so it skips creating it, and then the secret gets deleted right before the patcher runs to add the owner reference.
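
The interleaving just described can be reproduced with a toy, single-threaded Python model. This is a sketch under stated assumptions, not the driver's code: the API server is a dict, ownerReferences are modeled as a set of ReplicaSet names, garbage collection is one explicit function call so the ordering can be chosen by hand, and the names `app-secret`, `rs-old`, and `rs-new` are hypothetical.

```python
"""Toy, single-threaded model of the secret sync race during a rollout.

Assumptions (hypothetical, not the driver's code): the API server is a dict,
ownerReferences are a set of ReplicaSet names, and garbage collection runs
as one explicit step so the interleaving can be chosen by hand.
"""

from dataclasses import dataclass, field


@dataclass
class ToyCluster:
    # secret name -> names of the ReplicaSets listed in its ownerReferences
    secrets: dict[str, set[str]] = field(default_factory=dict)
    replicasets: set[str] = field(default_factory=set)

    def garbage_collect(self) -> None:
        """Delete every secret whose owners have all been deleted."""
        for name, owners in list(self.secrets.items()):
            if not owners & self.replicasets:
                del self.secrets[name]


def sync_create_only(cluster: ToyCluster, secret: str, owner: str) -> str:
    """Create the secret if it is missing; never update an existing one."""
    if secret in cluster.secrets:
        return "skipped"
    cluster.secrets[secret] = {owner}
    return "created"


def patch_owner(cluster: ToyCluster, secret: str, owner: str) -> bool:
    """Add an owner reference; returns False if the secret is already gone."""
    owners = cluster.secrets.get(secret)
    if owners is None:
        return False
    owners.add(owner)
    return True


def rolling_update(gc_before_patch: bool) -> tuple[str, bool, bool]:
    """Run one rollout and report (sync action, patch ok, secret exists)."""
    cluster = ToyCluster(secrets={"app-secret": {"rs-old"}}, replicasets={"rs-old"})
    cluster.replicasets.add("rs-new")  # new ReplicaSet; its pod mounts fine
    action = sync_create_only(cluster, "app-secret", "rs-new")  # sees old secret

    if gc_before_patch:
        # Bad ordering: the old ReplicaSet and its secret vanish first.
        cluster.replicasets.discard("rs-old")
        cluster.garbage_collect()
        patched = patch_owner(cluster, "app-secret", "rs-new")
    else:
        # Good ordering: the new owner is recorded before the old one goes.
        patched = patch_owner(cluster, "app-secret", "rs-new")
        cluster.replicasets.discard("rs-old")
        cluster.garbage_collect()

    return action, patched, "app-secret" in cluster.secrets


if __name__ == "__main__":
    print("patch first:", rolling_update(gc_before_patch=False))
    print("GC first:   ", rolling_update(gc_before_patch=True))
```

In both orderings the create-only check skips the secret; only when garbage collection wins does the patch find nothing to update, leaving the new pod without its secret until a later reconcile pass recreates it.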

A

So could that potentially avoid that weird timing situation? Probably. Today we would have to try to see which different secrets or ReplicaSets to go look for in order to delete the secret, and within that processing time it might already have been deleted or created, something like that. So we don't do any deletion today at all: we only add the owner, and then when the owner gets deleted, the secret gets garbage-collected by Kubernetes itself.

A

Yeah, I mean, one way I can see is that we tune the timings so that the user doesn't have to wait a long time. Also, this is not a very common issue, right? This is probably the first report of it that we have gotten. So I was thinking we can get ahead of it and see if there's a better way to solve this in the upcoming release, maybe for 1.2.

A

Yeah, all right, so that was 59. And then we have "Pods using CSI volumes fail to terminate if driver pods have been evicted." Yeah. So this one, I don't think, is actually a CSI driver issue per se; I think this is just a general Kubernetes issue, and there are two scenarios. The thing is, your CSI driver pods need to be running on your node.

A

That's if you want your pod volume mount to succeed. Similarly, you need the CSI driver pods to be running on the node if you want your pod volume unmount to succeed. So in both scenarios, you need the CSI driver pod to be running, so that your pod can get created with a new volume and your pod can also get deleted if it is using a volume. The problem arises when there is a scale-up event or a scale-down event.

A

When the new node gets created, there is no way to guarantee that your CSI driver is the first pod running on the node. So when your new node comes up, if your application starts running before the driver pod is running, then the volume mount will fail, because kubelet will try to talk to the CSI driver and it will say: hey, I don't find any driver running here. And it'll just fail.

A

The volume mount in kubelet has internal retries, but the thing is, kubelet also backs off exponentially after the first few errors. So if the driver is still not running after two minutes, kubelet backs off and retries after a certain time. So essentially your pod might be stuck in the ContainerCreating state because it's not able to mount the volume for maybe five or ten minutes.
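
How capped exponential backoff stretches a handful of failed mounts into minutes of ContainerCreating can be sketched as below. The constants are assumptions chosen to resemble kubelet's volume-operation backoff (500 ms initial delay, doubling, capped at 2 minutes 2 seconds); check the kubelet source for the Kubernetes version you run before relying on them.

```python
"""Sketch of capped exponential backoff for repeated volume mount failures.

Assumed constants (resembling, not copied from, kubelet's volume backoff):
initial delay 0.5 s, multiplier 2, maximum delay 122 s.
"""

INITIAL_DELAY_S = 0.5
MULTIPLIER = 2.0
MAX_DELAY_S = 122.0


def backoff_delays(failures: int) -> list[float]:
    """Delay applied after each of `failures` consecutive failed mounts."""
    delays = []
    delay = INITIAL_DELAY_S
    for _ in range(failures):
        delays.append(delay)
        delay = min(delay * MULTIPLIER, MAX_DELAY_S)
    return delays


def total_wait(failures: int) -> float:
    """Time spent waiting between retries, ignoring the mount attempts themselves."""
    return sum(backoff_delays(failures))


if __name__ == "__main__":
    for n in (5, 9, 10, 12):
        print(f"{n} failures -> {total_wait(n) / 60:.1f} min of backoff")
```

Under these assumptions, ten consecutive failures already add up to about six minutes of waiting and twelve to just over ten, which is in line with the five-to-ten-minute delay mentioned on the call.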

A

When your node is actually getting deleted, there is no way to tell the scheduler what order to evict the pods in. So in the worst-case scenario, if your CSI driver pod is the first one that gets evicted when the node is being deleted, then all the pods that used the CSI driver to mount the volume are stuck unmounting forever.
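
The worst case on node deletion can be made concrete with a toy model of eviction order. It is a sketch under a simplifying assumption, not a description of kubelet internals: a pod's CSI volume can only be unmounted while the driver pod on that node is still running. The pod names are hypothetical.

```python
"""Toy model: which pods get stuck unmounting when a node drains?

Assumption (simplified): unmounting a CSI volume needs the node's driver
pod to still be running; pods without CSI volumes are never blocked.
"""


def stuck_unmounting(eviction_order: list[str], driver_pod: str,
                     pods_with_csi_volumes: set[str]) -> list[str]:
    """Return pods evicted after the driver pod that still need an unmount."""
    driver_running = True
    stuck = []
    for pod in eviction_order:
        if pod == driver_pod:
            driver_running = False
        elif pod in pods_with_csi_volumes and not driver_running:
            stuck.append(pod)
    return stuck


if __name__ == "__main__":
    csi_pods = {"app-a", "app-b"}
    worst = ["csi-driver", "app-a", "web", "app-b"]
    best = ["app-a", "app-b", "web", "csi-driver"]
    print("driver first:", stuck_unmounting(worst, "csi-driver", csi_pods))
    print("driver last: ", stuck_unmounting(best, "csi-driver", csi_pods))
```

Since the eviction order cannot be controlled, the model simply shows that every CSI-backed pod evicted after the driver is left waiting on an unmount nobody can serve.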

A

So both problems are about scheduling, and I think they opened the issue here to say: maybe we can use systemd, similar to CNI drivers, so basically install the driver on the node as a host process and keep it running with systemd. I don't think that's a good long-term solution, so I added that as a comment, because CSI drivers can be deployed and deleted.

A

It's not necessarily running all the time; it's totally up to the user when they want to install it and remove it. That's the convenience. And we also rely on all these sidecars, right? CNI doesn't have all the sidecars. So if we have to go into systemd and all that, we have to re-architect the whole thing and also come up with a way to do this on Windows, because, again, Windows doesn't allow you to just throw anything on the host.

A

Well, okay, so I get it on creation of the node, but does it reverse the order on deletion? Okay, so that one was only handling creation. And even for creation, there are multiple ways we can accomplish this; a lot of people have just developed their own solution for it. But there's nothing for deletion so far. I think Tommy had something? I can go through the solution.

A

Yeah, this was the link to the KEP. This was the node readiness gate, and it was specifically for creation. And you're right: creation is actually not a big problem. I think it's just a better user experience, because it does eventually resolve today. But on the delete path, yes, there is no resolution. It just needs someone to go and delete it manually, and even when they delete it manually, it's still not a clean way to do it.

A

Do you think our effort should be to just enhance the KEP to handle this? I just want to clarify that. Yeah, so actually, this KEP eventually got closed, I think. But one of the solutions people are using for the create path today is that when you deploy a cluster, you can bootstrap it with a certain set of taints, custom taints that say something like add-on-critical=false, and then you can make all your add-on pods tolerate that taint.
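
The bootstrap-taint approach rests on Kubernetes toleration matching, which can be sketched in Python. The matching rules below follow the documented semantics for key, operator, value, and effect (simplified: `tolerationSeconds` is ignored). The taint key `addon-critical` with value `false` is a hypothetical stand-in for the example from the call, not a standard Kubernetes taint.

```python
"""Simplified taint/toleration check for a bootstrap taint on new nodes.

The taint key/value mirror the example from the call and are hypothetical;
the matching rules follow the documented Kubernetes toleration semantics
for key, operator, value and effect (simplified: no tolerationSeconds).
"""

from dataclasses import dataclass


@dataclass(frozen=True)
class Taint:
    key: str
    value: str
    effect: str  # "NoSchedule", "PreferNoSchedule" or "NoExecute"


@dataclass(frozen=True)
class Toleration:
    key: str = ""
    operator: str = "Equal"  # "Equal" or "Exists"
    value: str = ""
    effect: str = ""  # empty effect matches every effect


def tolerates(tol: Toleration, taint: Taint) -> bool:
    """True if this toleration matches this taint."""
    if tol.effect and tol.effect != taint.effect:
        return False
    if tol.operator == "Exists":
        # An empty key with Exists matches every taint.
        return tol.key == "" or tol.key == taint.key
    return tol.key == taint.key and tol.value == taint.value


def schedulable(tolerations: list[Toleration], taints: list[Taint]) -> bool:
    """True if every hard (NoSchedule/NoExecute) taint is tolerated."""
    hard = [t for t in taints if t.effect in ("NoSchedule", "NoExecute")]
    return all(any(tolerates(tol, t) for tol in tolerations) for t in hard)


BOOTSTRAP = Taint(key="addon-critical", value="false", effect="NoSchedule")
ADDON_TOLERATION = Toleration(key="addon-critical", value="false", effect="NoSchedule")

if __name__ == "__main__":
    new_node = [BOOTSTRAP]
    print("driver pod:", schedulable([ADDON_TOLERATION], new_node))
    print("app pod:   ", schedulable([], new_node))
    print("app pod after taint removal:", schedulable([], []))
```

In this pattern something still has to remove the taint once the driver pod is ready, and, as discussed on the call, it only helps the create path.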

A

So that handles the create path. I think there are a couple of custom implementations for it today; there is one from uSwitch, a node taint controller. But for the delete path, there is no way to do it. We can see if the community has anything for it, or if their recommendation is that each driver has to handle this on its own, because I think for us it's still pretty simple, right?

A

Now for 878, I think they signed the CLA, but they're having issues, so I'm going to point them to the CLA support team. They have a PR open in test-infra with their GCP Secret Manager instance, and I think Tommy also looked at it. It mostly looks good, so if they get through the CLA stuff, we can help merge that PR and then help them with the second set of PRs to add jobs to test it out.

A

Yeah, and then I think there are a couple of our documentation items too, so maybe we can add those to 1.2. I think they've been open for a while, so we can get them done. It's not a blocker, but I think we can plan it for this milestone so that we have assignees for each effort. We'll just add all the stuff to the doc.

A

There are actually some recommendations on that issue about what can be done. Then maybe we can just go ping and ask: hey, is this going to happen? And we can follow the same process for other stuff too. Yeah, I think the general thing is that it's unlikely to happen; it's been open for a while and hasn't been picked up. That's how it is.

A

I'm sorry, go ahead. No, no! I was just going to say, with that thought: if someone had the right level of permissions to exec into the pod and see the plaintext secret that's mounted, is that what that would fix? Or... I think, again, it mainly protects against things like a vulnerability that gives you file read permissions but not necessarily execution permissions. So, yeah, path traversal vulnerabilities and things like that.

A

We have not set a date yet, but I think for RequiresRepublish, I already have most of the changes, so I can get that going. Once this and the other PR land, I think we can actually start planning for the release. Apart from that, I think most of the other things we want to do are doc updates and stuff like that. So.

A

Hey, yeah, nothing for me, thanks. All right. Ray, I'm not sure I'm familiar with you; do you want to introduce yourself to the community? Sure. My name is Raymie Abraham; I go by Ray. I'm fairly new to the Kubernetes platform industry and kind of picked up this group as one to participate in frequently, and I always have the desire and hunger to learn.