►

From YouTube: 20210325 SIG Architecture Community Meeting

Description

No description was provided for this meeting.

If this is YOUR meeting, an easy way to fix this is to add a description to your video, wherever mtngs.io found it (probably YouTube).

A

A

B

B

I'm

laura

I'm

visiting

from

sig

multi-cluster

brought

some

of

my

posse

with

me,

so

jeremy

ot

is

on

the

call

chair

of

a

signal

type

cluster

paul

morey

is

on

the

call,

also

a

chair

of

sig

multicluster,

and

then

what

I

want

to

talk

about

today

is

a

cap

that

sigmulti

cluster

has

been

working

on

about

cluster

id,

so

also

on

the

call

david

eads

is

our

production

readiness

reviewer

for

that,

and

I

think

that's

everybody

who

I

I'm

rolling

with

today.

So

just

fyi

brought

some

of

my

friends.

B

B

So

I'm

going

to

talk

a

little

bit

about

both

of

them

and

explain

why

we

need

both

of

them

and

motivate

a

little

bit

that

we

something

we've

run

into

in

the

sig

that

this

cluster

id

thing

that

we've

been

working

on

seems

to

have

some

more

utility

outside

just

the

bounce

of

sig

multi-cluster,

and

bring

up

that

there's

been

kind

of

like

this

timeline

inside

the

sig.

Where

we've

been

talking

about

you

know,

should

we

implement

this

as

a

crd,

which

was

our

original

design?

B

B

We

need

to

be

worried

about

and

then

kind

of

like

tear

down

from

there

and

see

what

sig

arch

thinks

about

this

in

general,

so

just

to

give

a

little

background

on

what

we're

talking

about

in

sigma

multi

cluster,

there's

kind

of

two

big

caps

we're

working

on

well,

one

super

big

cap

that

we're

working

on

and

one

that's

related

to

it.

So

cap

1645

the

multi-cluster

services

api.

B

This

is

a

kept

to

describe

how

services

can

be

shared

between

multiple

clusters

right,

so

there's

two

implementations

and

one

in

progress

based

on

the

on

this

api,

but

the

kep

itself

still

needs

a

few

more

pieces

and

one

of

those

pieces

is

cluster

id,

particularly

for

the

purposes

of

multi-cluster

dns,

which

I'm

going

to

briefly

go

over

in

a

second.

So

this

cap

cab

2149.

This

is

the

one

that

I'm

working

on

and

kind

of

advocating

right

here

today,

but

it's

very

close

to

this

other

cap.

B

It's

a

blocker

for

this

other

cap

is

why

I

bring

that

up

and

this

one's

stolen,

provisional

status.

We

have

a

pr

open

to

put

it

into

implementable

status,

as

we

get

all

of

our

final

reviews

through

and

by

by

design

right

now,

since

we're

from

sig

multicluster

right

it

targets,

non-multi-cluster,

use

cases.

So

in

the

cap

we

talked

about

multi-cluster

logging

and

debugging,

and

we

talked

about

multi-cluster

dns.

B

We

had

a

survey,

and

that

was

the

thrilling

original

name

that

we

came

up

with

for

what

this

is,

so

just

to

briefly

show

you

what

this

crd

looks

like

it's

very

basic

in

general,

really

it

just

these

objects

just

have

a

value,

and

the

idea

is

to

provide

some

type

of

generalized

storage

for

any

arbitrary

properties

about

a

cluster

and

in

particular

for

sig

mc

there's.

These

two

properties

we

need

to

have

that

we

think,

should

go

in

here.

The

cluster's

name

and

the

cluster

set

that

the

cluster

is

in.

B

So

like

the

name

of

the

multi-cluster

group

that

this

cluster

is

a

member

of.

So

this

is

the

way

that

we

think

that

that

might

look.

We

have

some

stuff

in

the

in

the

cap

that

describes

that

the

name

should

the

name

of

the

cluster

should

be

in

a

cluster

property

object

that

has

a

metadata

name

id.case.io

and

might

have

a

value

like

this.

This

is

a

cubesystem

ns,

namespace

uuid,

but

you

know

it

could

be

banana

or

it

could

be

like

my

favorite

cluster

whatever.

B

B

So

we

have

a

proposal

out

also

about

handling

multi-cluster,

dns

and

kind

of

the

big

case

that

I

want

to

highlight

here

where

this

cluster

name

shows

up

for

us.

Is

that

and

when

we

want

to

make

a

dns

name

for

headless

services,

if

there's

a

headless

service

over

in

cluster,

a

that

has

the

host

name

one?

B

So

all

that's

kind

of

background

about

why

multi-cluster

thinks

this

is

important,

but

something

that

has

come

up-

and

this

is

what

I

really

want.

The

insight

from

sig

arch

architecture

about

is

that

we've

started

to

get

some

information

from

other

cigs

and

then

just

like

a

feeling

from

from

various

people

in

the

in

our

own

cig

that

this

would

be

useful

outside

of

multi-cluster.

So

a

couple

pieces

of

evidence

that

have

collected

over

the

time

here

there

was

actually-

and

maybe

everybody

here

already

talked

on

this

thread.

B

This

is

actually

where,

as

far

as

I

know,

where

lore

was

where

the

idea

of

using

the

cube

system

namespace

uuid

as

a

cluster

id

kind

of

first

came

to

as

an

idea

and

in

the

process

of

working

on

this

cap,

I

went

to

talk

to

sid

cluster

lifecycle,

particularly

the

cluster

api

working

group

about

you

know.

If

they

need

something

like

this,

it

turns

out

that

they

do.

B

It

also

turns

out

that

they

implemented

something

on

their

own

to

kind

of

handle,

the

situation

that

they

needed

right

now,

but

that

they

would

prefer

to

use

something

centralized

kind

of

more

official

in

their

case

they're,

using

annotations

on

the

node

resource

in

their

namespace

and

then

in

the

process

of

talking

to

cluster

api.

I

also

heard

from

someone

on

the

virtual

cluster

project,

who

also

needs

something

along

these

lines,

so

it

started

to

seem

like

there

are

other

people

who

might

need

this

too.

B

So,

as

a

result,

this

conversation

started

going

on

so

should

we

should

we

make

this

a

crd,

or

should

we

try

and

pursue

putting

this

as

a

core

kubernetes

api?

Is

this

something

that

you

know?

There's

kind

of

this

general

sense

in

in

the

sig

architecture

thread

that

it

should

be

somewhere.

You

know

core,

but

I

don't

know

if

that's

necessarily,

you

know

what

we

feel

now

I'll

come

back

to

this

slide

later.

B

If

we

want

to

look

at

it,

but

basically

we

have

an

understanding

in

sig

mc

of

what

the

pros

and

cons

one

side

or

the

other

would

be.

But

of

course,

this

whole

chart

is

predicated

on

the

assumption

that

we

do

want

it

to

be

ubiquitous

and

have

a

default

always

set,

and

that's

you

know

why

we

would

like

the

yes

side

versus

the

no

side

of

a

pro

con

list

here

so

happy

to

take

any

questions

on

any

of

the

stuff

I

just

went

over.

B

I

wanted

to

make

sure

we

were

on

the

same

page,

but

this

is

the

slide

of

the

real

questions

that

I

have

for

sig

architecture

and

would

like

to

open

the

discussion

so

kind

of

step.

One,

I

feel

is

whether

we

agree

that

a

cluster

local

representation

of

a

cluster's

name

should

be

consistently

available

in

kubernetes

clusters,

so

we're

kind

of

like

operating

under

this

assumption.

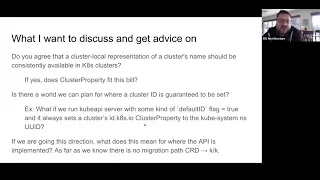

B

As

we've

been

talking

to

people,

but

that's

kind

of

the

baseline

assumption

that

everything

else

falls

out

of

so

I

want

to

open

the

discussion

for

that

and

then,

if

so,

is

this

api

that

we

are

suggesting

this

cluster

property

kind,

something

that

would

fit

the

bill

for

that

and

then

everything

else

falls

out

of

that

too.

So

you

know:

is

there

a

world

where

we

can

plan

for

a

cluster

id

being

guaranteed

to

be

set

like?

B

Could

we

run

cube

api

server

with

some

sort

of

flag

that

always

sets

this

cluster

property

to,

like?

Maybe

the

cube

system,

namespace

uuid

right?

This

is

more

like

details,

but

like

is

there

a

world?

We

would

want

to

go

towards.

That's

something

like

that

and

if

we're

going

in

that

direction,

what

does

this

mean

for

where

we

choose

to

implement

the

api

and

in

particular,

as

far

as

we

know,

we

don't

know

that

there

is

any

migration

path

to

go

from

something

that

started

out

as

a

crd

and

then

actually

gets

into

core.

A

One

of

the

issues

we

discussed

a

long

time

ago

here

was

that

we

don't

have

any

facility,

and

I

believe

this

is

still

true

and

david

may

be

able

to

say

something.

We

don't

have

any

facilities

sort

of

to

automatically

load

crds

in

a

sort

of

default

manner,

and

so

that

that

adds

to

a

lot

of

complexity

in

you

know:

aha

setups,

for

creating

your

your

bootstrapping,

your

cluster,

basically

so

making

it

a

built-in

really

helps

alleviate

some

of

those

concerns

as

well.

So

I

will,

I

will

yield

my

time.

C

C

D

C

Yes,

for

the

record,

and

for

the

record

I

do

believe

tim

is,

is

an

approver,

so

I

don't,

I

don't

think

it's

a

very

high

bar

for

you,

but

I

do

want

to

make

sure

that's

reflected

here

on

this

chart.

I

had

another

question

about

functionality.

Is

there

any

actual

shortcoming

of

crds

that

are

known

that

that

prevent

this

other

than

your

deployment

capabilities?

B

I

think

where

it

becomes

questionable

to

us

is

really

in

the

space

of

do

we

need

do

we

want

to

as

a

larger

architecture,

idea

and

kubernetes

have

this

available

more

broadly,

so

I

think

for

the

mcs

api

implementation

and

feel

free

anybody

else

to

hop

in

from

sig

mc2.

The

language

in

the

cap

is

very

like.

If

you

are

an

mcs

api

implementation,

you

must

follow

xyz.

You

know

things

about

how

this

sets

up,

so

that

puts

the

burden

on

the

implementations

for

mcs

api.

B

A

I

think

the

bootstrapping

thing

david,

that

I

mentioned,

and

the

fact

that

you

would

want

some

default

set

sort

of

at

cluster

creation

time.

It's

something

you

can

easily

do

with

a

crd,

so

every

client

has

to

rely

then

upon

it

has

to

have

the

logic,

to

notice

that

there

isn't

one

and

either

fail

or

generate

one

in

some

way.

C

I

had

I

had

one

other

comment

on

this

with

regard

to

the

ubiquity

and

the

always

available,

and

I'm

interested

in

what

distinguishes

this

from

other

apis

that

are

crds

right.

So

I

have

you

know

those

basically

seem

to

be

true

for

every

api

and

would

be

true

of

things

that

people

are

looking

at

in,

say,

segoff

projects

around

around

resources

for

describing

policy

right

and

policy

auditing

capabilities-

I

I

guess

I'm

looking

to

say

like

is

there

some

line

we

can

draw

here?

That

says

this?

A

Yes,

that's

true

it's

it's

just

I

mean.

I

think

this

is

sort

of

a

a

sort

of

a

fundamental

property

or

of

a

cluster

is

being

able

to

identify,

but

you're.

It's

a

valid

point.

I

don't

want

to

dominate

here.

We

do

have

other

hands

and

I

so

unless

so,

if

you're,

okay,

david,

that's

a

good

point.

I

think.

E

Yes,

thank

you

so

me,

and

nikita

have

been

talking

about

the

bootstrap

problem

and

we

might

have

something

you

know

we

have.

Nikita

is

prototyping

something

that

we

can

bring

to

this

team

here.

So

that

should

help

with

you

know

this

problem

of.

Do

we

need

to

do

it

as

a

crd

or

does

it

need

to

be

built

in?

E

E

I

haven't

thought

about

that

one

yet,

but

you

know

we

can

talk

about

that

a

little

bit,

but

I

do

want

to

say

that

this

would

this

cap

would

go

well

in

hand

in

hand

with

what

is

prototyping

and

use

use

that

as

a

thing

to

like

get

support

for

the

bootstrap

problem

into

kubernetes.

Really

soon.

That's

you

know

hopeful,

that's

my

hope.

With

with

this

back

to

you.

B

I

do

want

to

hear

I

do

think

it's

interesting

that

there's

just

other

work

going

on

about

making

crds

boots

droppable,

so

I

think

we

definitely

should

sync

up

and

see

where

the

overlaps

are

there,

but

yeah.

I

think

I

don't

know

exactly

the

details

of

that,

but,

as

you

had

just

mentioned,

if

we

want

like

an

idea,

a

situation,

I'm

thinking

about

is

if

cluster

api

definitely

wants

to

use.

This

and

mcs

definitely

wants

to

use

this,

but

we

offload

the

setting

of

the

default

to

an

mcs

controller

implementation.

B

Then

now

anytime,

cluster

api

wants

to

use

this.

The

cluster

has

to

have

the

mcs

controller

installed

right,

so

I

think

figuring

out.

If

we

can

have

that

default

value

set

thing

happening

more

generally

and

again,

whether

that's

part

of

the

work,

that's

going

on

with

the

crd,

installers

or

not,

is

an

interesting

point.

F

This

and

there's

there's

a

couple

elements

of

this.

Actually,

I'm

a

little

concerned

about

tying

too

many

of

these

elements

together

too

early

because,

like

the

point

of

an

api,

is

that

it

has

a

consumer.

The

api

means

something

cluster

claim,

I

think,

makes

sense

from

you

know

I

this

cluster

belongs

to

this.

It

has

a

well-known

name:

it's

a

singleton

you're,

making

a

statement

in

that

api

that

this

means

something

and

the

consumer

has

a

meaning,

and

there

might

be

other

consumers

right.

F

So

if

the

mcf,

if

a

controller

off

cluster,

is

looking

at

it

and

saying

I

look

at

this

cluster,

I

know

what

it

means.

I

know

who

it

is

saying

it

is:

that's

still

not

the

same

thing

as

the

actual

unique

identifier

of

the

cluster,

it's

just

a

convention

that

they

both

overlap.

I

get

a

little

uncomfortable

for

that

because,

in

the

use

case

of

cluster

claim,

you

could

actually

have

other

types

of

systems

where

you

say

a

cluster

actually

has

two

different

names

that

are

imposed

at

the

higher

level.

F

They

both

map

to

something

underlying

someone

might

actually

have

a

different

api

for

cluster

claim

that

we,

wouldn't

you

know,

eliminate

it.

They

can

go,

do

that

today,

there's

nothing

that

stops

them

when

you

have

to

create

the

cluster

claim

that

has

a

specific

meaning,

and

that

has

a

bunch

of

behavior

on

the

other

system.

I

get

a

little

nervous

about

that,

because

I

agree

with

the

use

cases

that

a

cluster

should

have

an

identifier

that

identifier

should

mean

something

I'd

probably

like

to

keep

those

more

orthogonal,

but

have

a

clean

way.

F

For

someone,

like

you

say

to

say

like

this

is

what

the

cluster's

idea

is.

It

should

propagate.

I

don't

think

they

have

to

be

the

crd

necessarily

they

could.

The

way

controllers

are

booted

kind

of

leans

towards

that,

so

that

part's,

a

little

uncomfortable,

there's

also

a

disclosure

aspect

to

this,

which

is,

if

we

create

it

by

default.

F

We

put

it

on

the

cluster

that

may

actually

be

information,

that's

confidential

to

an

operator,

and

so,

like

derek's

question

about

download

api

like

I

could

see

that

potentially

having

a

few

impacts,

which

is

yeah

sure

you

gotta,

protect

it

with

our

back,

but

then,

if

it

shows

up

silently

in

events

and

someone

forgets

and

puts

it

in

an

event,

that's

another

thing

that

might

actually

disclose

some

information

that

the

end

user

might

not

actually

be

privileged

to

so

a

few

wrinkles

around.

Like

that,

I

don't

I

I

like

the

idea.

F

D

B

A

if

a,

for

example,

cluster

is

unregistered

from

a

cluster

set.

Then

we

effectively

delete

this

object

for

that

cluster

and

then

they

could

re-register

even

under

the

same

name

and

that's

the

operation

that

actually

makes

them

be

able

to

have

a

different

cluster

set

value.

So

I

guess

that

another

way,

no

in

this,

in

the

sense

that

we

expect

these

to

be

deleted

and

re-registered

as

when

they're

edited,

but

they

are

repeatable.

A

B

F

B

I

think

there's

two

sort

of

points

here

and

again

me

coming

from

sig

multi-cluster.

These

are

very

related

to

each

other,

but

the

cluster

name

itself.

Ideally,

we

would

like

it,

especially

if

we're

going

this

trend

of

it

being

generally

useful

and

available

in

default,

et

cetera,

et

cetera,

be

unique

beyond

the

properties

of

beyond

the

bounds

of

a

cluster

set.

B

But,

as

we've

talked

about

it

to

date,

it

has

a

relationship

in

the

sense

that

a

cluster

can

only

join

a

certain

cluster

set

if

it

has

a

unique

name

inside

that

cluster

set.

So

if

this

cluster

was

called

banana

and

it

joined

banana

cluster

set

and

then

some

other

cluster

tried

to

say,

their

name

was

banana

and

joined

the

banana

cluster

set,

they

either

can't

join

the

banana

cluster

set

or

they

can

once

they

try

and

change

their

name

away

from

banana.

D

If

I

derrick

had

a

second

question,

but

I

I

would

like

to

get

to

it,

if

I

can

just

remind

the

group

and

the

record

of

some

of

the

history

here,

we

added

a

cluster

name

field

to

object,

meta,

ostensibly

to

handle

this

ish

problem

and

then,

as

a

group,

we

decided

not

never

to

use

it

and

there's

code

somewhere

in

the

rest

path.

That

says,

cluster

name

must

be

empty

and

blast

it

with

an

empty

string

and

a

lot

of

the

reasoning

there

was.

D

We

just

couldn't

drive

consensus

on

how

the

cluster

name

came

into

the

cluster

and

who

gets

to

set

it.

Was

it

mutable?

Was

it

a

uuid?

Was

it

a

human

friendly

string?

Does

it

change?

Are

there

aliases

to

it

and

at

that

point

we

kind

of

punted

honestly,

we

just

didn't

solve

this

problem,

but

we

agreed

that

coming

in

being

able

to

read

it

at

that

level

was

not

the

important

part

we

did

discuss,

passing

a

flag

into

like

controller

manager

or

api

server

rather

and

saying

this

is

this?

D

Is

your

cluster's

name

change

it

at

your

own

risk

right

and

being

a

flag?

You

know

is

unlikely

to

get

changed

very

often,

and

that

was

one

way

that

we

could

have

gotten,

that

particular

datum,

but

this

api

is

sort

of

the

hyper-generalized

form

of

that

right,

because

it's

not

just

the

cluster

identifier

that

can

be

stored

in

one

of

these

cluster

properties

right.

We

can

store

other

information

in

them,

which

is

an

interesting

way.

I

think

to

assert

information

about

the

cluster

from

the

administrator

or

or

provider's

point

of

view.

D

One

thing

that

this

is

missing

and

I

know

laura's,

probably

tired

of

hearing

me

say

it,

but

at

some

point

we

will

need

to

deal

with

like

validating

the

like

a

signature

on

these

right,

like

as

a

as

a

hosted

provider.

I

would

like

to

be

able

to

make

an

assertion

about

the

cluster

that

lives

within

the

cluster

that

I

can

then

validate

that

it

was

in

fact

me

who

made

it

right,

because

we

don't.

We

don't

have

a

concept

really

of

truly

immutable

immutable

apis

there.

D

Our

back

for

specifically

named

resources

does

exist,

so

you

know

we

can

encourage

people

to

use

that

if

they,

if

they

need

to

last

point

to

the

question

of

ubiquity

and

autoloading

crds

the

the

last

time

we

had

a

discussion

about

autoloading

crds,

it

kind

of

came

to

a

dead

end

and

I'm

partly

guilty

for

that.

I'm

just

having

gotten

busy

and

not

founding

not

having

found

consensus

on

it.

So

I'm

super

eager

to

hear

dims

what

you've

got

up

your

sleeve

and

nikita.

D

B

B

That's

really

only

relevant

to

the

cluster

set,

a

cluster

set

name,

the

clusterset.case.io

value

in

its

relationship

to

a

cluster

locals

representation

of

its

own

cluster

name,

so

they're

very

connected

for

me,

but

in

reality

the

cluster

id

it

can

can

be

centralized,

and

it's

only

when

it

reacts

has

to

react

to

other

clusters

in

the

situation

of

multi-cluster

api

that

we

have

a

definition

of

how

it

should

behave

externally,

but

you

have

another

hand

so

go

ahead.

Clayton.

F

Like

like,

we

always

kind

of

took

like

so

if

brian

were

here,

brian

would

be

like

maximum

orthogonality

like

good

orthogonal.

Apis

were

great,

that's

partially,

why

I

brought

that

up,

but

also

like

it

lets

people

react

and

put

the

systems

together.

We've

started

to

like

we've,

been

in

more

opinionated

about

deployment,

but

we

still

take

a.

We

have

a

cautious

line

like

cube.

Admin

is

a

default

and

it's

reasonable

and

it

we

try

to

drive

consensus

on

deployment

patterns.

We

have

caps

for

that.

F

I

think

there's

a

very

useful,

like

I

really

do

agree

that

the

idea

of

all

of

the

things

we

talked

about

that

are

internal

to

a

cluster

like

cube,

has

a

name

for

what

this

is

that

is

used

in

the

systems

that

have

to

go

connect,

it's

a

hard

problem.

It

feels

like

it

deserves

its

own

topic

and

discussion,

and

maybe

we

just

run

on

ground

on

it.

I

actually

would

probably

argue

it

feels

like.

F

I

don't

know

that

just

given

the

scope

of

kubernetes

today,

if

everybody

has

to

have

a

cluster

idea

id

and

it's

always

immutable,

and

it's

always

per

cluster

there's.

So

many

different

things

that

it's

going

to

touch

that

it

driving

it

through,

would

either

oversimplify

and

close

the

door

for

some

flexibility

down

the

road

or

it

would

lead

us

into

places

where

we

get

halfway

down

and

then

realize

that

we

gotta

stop

and

so

having

a

nice

like

cluster

claim

seems

awesome.

F

Most

people

from

sanity

perspective

would

love

to

set

a

cluster

id

that

shows

up

in

the

logs

and

diagnostics

or

audit,

especially

like

this

is

a

great

field

to

go

and

audit,

and

if

we

can

figure

out

a

way

to

line

up

each

of

those

so

that

they

overlap

where

they

need

to

they

touch

loosely

and

that

it's

very

natural

to

use

them

in

the

same

way.

That

sounds

awesome.

F

I

do

worry

about

the

you

create

a

crd

that

we

invited

in

the

project

and

then

a

bunch

of

things

read,

and

then

we

realized

halfway

down

we're

like.

Oh,

we

didn't

actually

want

that

to

be

the

same

for

this

use

case.

That

was

mostly

what

they

were

talking

about

and

even

tim's

points

like

all

of

this

is

it's

good.

Each

of

these

individually

is

good,

putting

them

together

too

soon.

We

should

just

try

to

figure

out

how

to

line

them

up

just

so

they

touch

where

they

need

to.

F

F

We

have

crds

and

we

have

config

maps.

Cluster

property

feels

like

a

different

kind

of

config

map

and

at

least

for

validation

and

signatures.

There's

an

argument

of

you

know:

are

we

just

not

doing

enough

to

support

use

cases

that

config

maps?

We

care

about

like

scientific

maps

that

was,

that

was

a

side

separate

topic

again

maximum

orthogonality.

Could

you

use

an

existing

concept

effectively?

We

use

config

maps

to

carry

data

so.

D

So

once

upon

a

time

we

talked

about,

should

we

have

an

in

cluster

cluster

object

and

use

that

as

the

sort

of

schematized

version,

and

then

we

don't

have

any

api

machinery

for

like

singleton

resources,

and

so

we

had

to

deal

with

well,

it's

it's

enery,

whether

we

like

it

or

not,

right-

and

so

I

think

cluster

claim

has

a

nice

property

there.

That

it's

natural

n

airiness

is

is

a

is

a

useful

property,

but

I

also

I

do

hear

your

point

clayton.

D

You

know,

config

maps

aren't

that

different,

although

we

don't

have

cluster

scope,

config

the

I.

I

find

it

interesting

and

useful

to

sort

of

assert

but

not

enforce

that

a

cluster

property

is

a

single

value

and

should

be

a

single

data

point

people

who

want

to

bend.

It

will

find

ways

to

bend

it,

but

you

know

we

can

encourage

people

to

say

that

this

is.

This

is

supposed

to

be

one

thing.

D

I

I

still

like

the

object,

meta

cluster

name

field,

and

I

think

it

would

be

really

useful

to

return

some

some

information

on

every

api

object,

and

I

think

it

gives

an

interesting

way

to

start

to

build

more

multi-cluster

apis

right,

like

we

use

the

namespace

field

to

route

within

a

cluster,

I

could

use

the

cluster

name

field

to

route

within

a

cluster

set

right

like

that.

I

think

that's

an

interesting

property,

it's

unexplored.

D

I

would.

I

would

be

happy

to

reopen

that

can

of

worms

if

I

thought

that

there

was

actually

a

path

forward

on

cluster

name

as

a

distinct

thing.

So

you

know

if

anybody

feels

like

that's

its

time

has

come

like

that.

I

think

it's

orthogonal

to

cluster

property

overall,

because

cluster

property

is

useful

as

a

generalized

form,

but

maybe

promoting

cluster

name,

to

be

a

very

specifically

special

thing

that

we

embed

in

object

meta.

F

The

requirements

for

logs

might

include

uniqueness,

and

differentiation

and

the

the

requirements

for

cluster

name

and

object.

Meta

might

be

clarity

for

end

users.

Similar

have

overlapping

properties.

Is

it

worth

it

forcing

them

all

to

be

the

same,

I

think,

would

be

the

the

key

question.

Is

they

feel

related

and

important

to

get

consistent

where

possible,

but

not

forced

too

hard.

D

Yeah

one

of

the

things

that

we

discussed

early

on

with

the

cluster

claim

cluster

property

was

like

if

we

can't

get

people

to

agree

on

the

globally

or

non-global

uniqueness

of

a

built-in

name,

let's

just

give

them

some

guidelines

and

tell

them

what

we're

going

to

do

with

it

and

then

give

them

the

responsibility

of

doing

the

right

thing

with

it

right.

So

one

of

the

things

that

we

really

swirled

on

when

we

talked

about

using

cluster

name

metadata

that

cluster

name

before

was

well.

Some

people

will

need

it

to

be

globally

unique.

D

So

let's

enforce

it

to

be

globally

unique,

in

which

case

it's

just

a

uuid,

and

we

already

have

uuids

they're

attached

to

objects,

so

let's

just

create

an

empty

object

and

use

that

uuid

and

but

that's

super

not

useful

in

dns,

like

I'm,

not

sure

I

want

to

see

you

know:

fubar

dot,

xyz

one,

two,

three,

four,

five,

six

dash,

seven,

eight

one!

You.

G

D

So

then

we

have

then

we

swirl

on

aliases,

again

right

and

and

we're

back

to

oh,

my

gosh.

We

got

more

than

more

than

one

of

these

things.

So

one

of

the

things

I

really

liked

about

the

cluster

property

proposal

that

laura's

putting

forward

is

it's

optional

and

it

tells

you

how

we're

going

to

use

it.

It

lets

you

decide

if

you

want

to

put

banana

in

there

like

that's

on

you.

If

that's

unique

enough

for

you

bully

and

if

it's

not,

then

you

can

do

something

else.

B

F

To

get

cluster

property

to

be

useful,

does

everyone

have

to

buy

into

it,

and

I'm

always

cautious

about

saying

everyone

has

to

buy

into

our

net

new

thing?

For

it

to

be

useful,

it

doesn't

mean

we

shouldn't

try

in

some

cases

is.

It

is

just

optional

that

you

can

set

if

you

want

to

buy

into

the

schema,

and

we

can

try

it

out

like

doesn't

have

to

be

enforced

for

us

to

find.

F

B

B

I

think

the

question

about

whether

the

service

provider

is

the

right

boundary,

which

david

also

asks

after

that

is

a

good

question

like

we

wouldn't

want

to

risk

collisions

on

gk

and

eks.

I

think

the

conversation

in

sig

multi-cluster

is

definitely

more

on

the

uuid

side.

If

we're

going

to

go

for

the

like

much

more

globally

unique

side

and

not

just

hide

in

the

cluster

set

world,

that's

that's

what

we

want,

then.

The

next

hand

is

from

paul.

H

Yeah,

I

was

gonna

say

that,

like

here's,

my

own

version

of

what

I

think

the

like

the

sweet

spot

would

be

to

just

riff

a

little

bit

on

clayton

so

like

in

in

the

possibility

that

clayton,

like

just

articulated

verbally

of

like

having

something.

That's

optional,

that

maybe

like

a

cloud

provider

or

two

could

enable

bugs,

could

enable

on

you

know,

clusters.

They

were

running

themselves

without

consuming

a

star

ks

product.

H

That

kind

of

thing

where

the

sweet

spot

is

for

me

is

like,

in

those

scenarios

enough

utility

being

derived

from

it,

that

you

would.

You

would

have

folks

lobbying

their

star

ks

provider

that

didn't

enable

it,

because

it

was

so

useful

if

that

makes

sense.

I

think

that

would

be

a

good

place

to

be,

but

definitely

agree

that

you

know

it's.

It's

probably

a

better

approach

to

have

like

demand

side

pressure,

rather

than

like

forcing

people

to

use

something.

H

I

Yeah,

I

think

paul

paul

touched

on

what

I

was

going

to

say

so

I'll

skip

to

that

part

but

clayton.

I

think

your

last

comment

about

the

warm

feeling

for

domain-specific

uniqueness.

This

is

basically

how

we

got

to

some

of

these

requirements

because,

like

we,

we

wanted

globally

unique

in

time

and

space

because

it

felt

right,

but

but

in

terms

of

actual

use

cases

like

that's

really

hard

and

and

doesn't

work

for

everybody.

So

you

know

within

the

that

cluster

set

for

multi-cluster

services.

I

It

makes

sense,

and

maybe

over

time

you

know

if

other

projects

adopt

cluster

id.

You

know

as

a

concept,

then

maybe

that

expands

and

eventually

we

see

so

much

demand

that

that

it

should

be

enabled

by

default

and

follow

some

convention,

but

I

just

yeah,

I

don't

think

we'd

have

enough

information

to

make

any

statements

about

that

today.

E

Yeah,

so

we

can

look

at

this

two

ways

right.

Well,

it

feels

like

there

are

two

caps

in

here,

not

just

one

right.

One

is

about

doing

something

in

a

cluster

that

can

be

used

in

logs

and

audit,

and

things

like

that

clayton

was

talking

about

the

second

one

is

like:

how

can

an

external

api

like

mcs

or

cluster

api

be

allowed

to

set

and

play

with

the

things

that

are

on

a

specific

cluster?

E

Yeah,

the

first

one

is

okay.

Then

there

needs

to

be

something

in

a

cluster

that

needs

to

be

persisted,

and

then

we

have

this

question

of,

like

logging

and

auditing

and

basic

stuff,

that

we

need

to

do

for

the

life

cycle

or

within

a

specific

cluster

right.

So

that

would

be

like

one

set

of

work

that

a

bunch

of

people

will

have

to

end

up

doing

to

make

it

make

it

work

well

with

a

single

cluster

right.

So

that

would

be

like

one

body

of

work,

the

other

body

of

workers.

A

B

Great

question:

I

did

get

a

lot

of

ideas

and

I

think

I

get

the

sense

that

sig

architecture-

I

guess

generally,

if

everybody's

speaking

here

is

representative

of

this-

think

that

something

like

a

cluster

name

should

exist

and

I'm

definitely

getting

the

feeling

that

there's

potentially

multiple

use

cases

that

might

want

to

be

separated

out

a

little

bit.

So

that's

not

all

has

to

be

fit

into

this.

B

B

A

Yeah

and

in

the

chat

that

seems

to

be

so

no

no,

of

course,

you're

talking.

How

do

you

read

chat

and

talk,

so

it

sounds

like

the

the

consensus

would

be

sync

up

with

dims

see

if

we

can

resolve

these

bootstrapping

issues

and

then,

if

that

works,

we

go

with

crd

and

we

we

move

on

from

there.

So

thank

you

laura.

That

was

a

great

discussion

and

thank

you.

Everybody

who

participated.

B

A

So

I

didn't

move

some

things

around

on

the

agenda,

because

there

was

an

item

around

a

for

a

working

group

formation

and

so

han

or

or

whoever

it

is

that

wants

to

talk

about

that

lori.

I

know

you

have

an

item

on

here.

I

don't

think

this

is

actually

that

the

enhancements

leads

on.

This

is

the

right

forum

for

that.

I

think

that's

a

release

team

concern.

We

talked

about

that

on

slack,

I

believe

and

rihanna.

A

J

Sure

yeah,

so

this

is

regarding

a

working

group

formation.

We

blasted

it

out

on

the

sig

arch

google

group.

Specifically,

we

are

proposing

forming

a

working

group

for

the

structured

logging

effort.

It

was

pretty

involved

in

the

last

release

and

involved

a

large

number

of

people

across

a

number

of

sigs

and

the

migration

is

still

occurring,

so

it

seems

to

make

sense

to

form

a

working

group

for

this.

E

A

J

E

So

the

only

guidance

I

would

give

is

like

like

what

happened

in

121.

You

know

cubelet

was

targeted

and

we

a

bunch

of

people

like

swarmed

over

it

and

got

it

done.

So

that

would

be

like

a

good

pattern

to

follow

and

like

hit

one

after

another.

You

know:

do

q

proxy

controller

manager,

you

know,

go

in

that

or

you

know,

go

go

in

some

order

where

you

can

like

feel

the

progress,

and

you

know

full

components,

get

moved

that

that's

the

only

thing

I

can

think

of,

but

clayton.

F

I

actually

wanted

to

riff

on

that

dim.

So

like

there

is

an

element

of

this

is

like

a

working

group

or

something

like

a

channel

like

it

seems

like

a

reasonable

thing.

The

feedback

loop

of

we

did

a

component.

Is

it

working?

Have

we

soaked

it?

Have

we

absorbed

feedback

from

all

the

operators?

Did

structured

logging

and

cubelet

accomplished

the

goals?

Did

we

regress

performance?

Did

we

miss

logs?

Do

we

need

to

change

our

process

for

next

time?

That's

actually

an

area

which

I

know

in

other

bits

of

feedback.

F

I've

received

around

structured

logging.

It's

you

know

it's

moving

just

fast

enough

and

we

swarmed

cubelet

and

we

changed

some

stuff

that

it's

the

it's

right

on

that

edge

of

like

it's

introduced

change.

That

is

impactful

that

it

might

be

good

to

say,

like

a

more

formal

thing

of

like,

let's

gather

the

feedback

and

take

the

like.

Did

we

succeed,

I'm

less

concerned

about

how

fast

it

rolls

out

than

did

we

did

we

nail

structured

logging

or

did

we

would

we

go

back

and

do

a

second

pass

on

cubelet

based

on

learnings?

F

D

D

G

So

at

the

big

like

that,

when

we

concepted,

where

the

original

concept

for

the

group

was

created,

was

mainly

focused

on

collecting

people

to

to

to

do

the

migration,

but

I

think

the

the

finishing

the

point

like

doing

depletion

touches

so

some

long-term

changes

that

technically

were

discussed

like

constant,

removing

the

k-log

or

like

changing

the

character

to

logger

or

contextual

logging.

This

is

something

that

we,

the

same

group,

would

be

capable

to

tackle

like

long-term

and

planet.

With

the

same.

G

D

D

We

don't

just

need

to

change

client

go,

but

you

need

to

like

find

the

edge

where

client

go

reaches

out

into

other

code

and

either

adapt

with

an

api

like

logger

or

something

else,

and

and

like

seal

the

edges

of

that

boundary.

I

think

that

would

be

a

super.

Awesome

delivery.

That's

one

track!

The

other

track

would

be

I'd,

love

to

see

somebody

tackle

like

q,

proxy

or

cubelet

and

say

it's

completely

converted

to

structured

logging

and

we

swapped

out

zap

for

for

k,

log

right

and

the

logs

got

faster

right.

D

So,

but

again

to

do

that.

It's

like

you

know,

because

our

code

is

so

highly

intertwined.

It's

there's

a

lot

of

ripple

there,

so

you

have

to

define

sort

of

an

edge,

I'm

supportive

of

the

working

group.

I

would

love

to

see

client

code.

I

think

is

like

the

most

return

on

investment

and

then

individual

components,

I

think,

would

be

hot

on

its

tail.

E

An

important

thing

to

you

know

bring

additional

people

into

the

community

as

well

as

introduction

to

the

code

base,

and

you

know

giving

them

context

on

on

what

we

do

here.

That's

definitely

a

good

thing,

but

again

to

watch

out

for

is

fatigue

and

be

mindful

of

that

and

try

to

recruit

more

leaders

in

the

community.

So

you're,

not

the

only

one.

D

K

Think

I

raised

my

hand,

like

my

house,

isn't

really

noisy

right

now.

So

if

you

can

hear

this

derrick,

what

does

the

I

generally

like

the

idea

of

the

work

group?

I

guess

what

I'm

trying

to

figure

out

is

like

what

does

the

working

group

fix

that

was

felt

right

now,

just

so

that

we

can

state

it

back

clearly,

and

one

of

the

things

I

was

wondering

is

like:

does

the

formation

of

the

work

group

are

the

participants

going

to

be

able

to

like

take

over

dominant

approval

flow?

K

Like

one

of

the

things,

I

wondered

when

looking

at

a

lot

of

the

structured

logging

changes

was

like

man.

I

wish

I

wish.

I

could

separate

the

approve

on

this

change

by

just

seeing

something

that

said

literally.

This

pr

did

nothing

but

change

the

log

statement

and

nothing

else,

and

so

what

I'm

wondering

is

like.