►

From YouTube: Kubernetes SIG Cluster Lifecycle 20180425 Cluster API

Description

Meeting Notes: https://docs.google.com/document/d/16ils69KImmE94RlmzjWDrkmFZysgB2J4lGnYMRN89WM/edit#

Highlights:

- Discussion about new clusterctl tool and development location

- Presentation on configurable machine installation/setup on GCE

A

B

Cool

yeah,

so

a

couple

of

weeks

ago,

I

presented

the

proposals

or

what

the

tool

that

cluster

API

comes

with,

look

like

and

more

or

less.

We

converged

on

the

experience

and

the

field

for

that

we

did

not

converge

on

the

name

for

it.

So

I

did

a

boat

and

I've

linked

to

the

issue

for

that

cluster,

cuddle,

1

or

cluster

control.

B

However,

you

want

to

pronounce

it.

Chris

was

a

close

second

for

what

it's

worth,

but

I.

Think

Tim

brought

up

some

interesting

questions

about

planning

for

a

new

repo

I

know.

We

have

discussed

to

have

a

new

repo

for

this

tool,

but

Tim

had

questions

like

what

does

release

look

like

what

this

testing

looked

like,

who

manages

it

and

stuff

like

that

and

honestly

I'm,

not

in

a

position

to

answer

all

of

those

questions.

B

Just

yet,

I

haven't

been

able

to

come

up

with

good

enough

reasons

for

one

way

or

the

other,

except

to

say

that

I

think

it

makes

sense

until

cloud

specific

providers

are

split

from

the

main

repo.

We

should

keep

this

tool

in

the

main

repo

to

prevent

unnecessary

complexity

around

releasing

or

vendor

or

lockstep,

releasing

and

stuff

like

that.

A

C

Don't

disagree

with

what

you're

proposing

I

think

the

original

disagreement

was

to

is

to

create

yet

another

repo

and

I

thought

that

would

add

way

too

much

extra

complexity.

Until

we

had

logistics

worked

out

which

were

proposing

sounds

fine

to

me.

I,

don't

have

a

problem

with

it

I

think

to

add

an

extra

command,

though,

to

as

part

of

the

release

you

might

need

to

I,

don't

know

who

actually

controls

that

anymore.

C

That

then

might

be

sig

release,

or

that

might

be

say

gark.

You

might

need

to

verify

with

them

before

we

can

do

that,

but

it

seems

reasonable

at

this

point

either

that

or

to

have

that

tool

live

or

the

API

proper,

because

you're

still

gonna

have

to

vendor

back

and

forth.

Okay,

so

the

vendor,

a

back

and

forth

bit

I

think

is

gonna,

be

the

issue

until

it's

stable

right.

B

I

think

yeah

the

vendor

in

the

API

will

still

be

something

we

need

to

be

careful

about,

but

we've

done

one

thing

to

worry

about

at

least

cloud

been

during

the

cloud

directories

for

releases

I

don't

know,

is

it

possible

that

one

of

us

and

the

sailor

in

the

owners

file

can

just

go,

build

and

upload

the

release,

but

do

we

have

to

serve

like

a

kubernetes

way

of

doing

releases

for

something

that's

early?

It.

C

Depends

how

what's

the

level

of

support

if

it's

a

if

it's

a

first

class

release

artifact

as

part

of

communities

proper

that

will

be

supported

as

part

of

Brittany's

proper?

Then

it

would

be

part

of

the

bundle.

If

it's

not,

then

it's

dealer's

choice,

and

that

means

the

sub

projects

can

do

what

it

desires

and

to

really

set

its

own

whim

and

folly

so

Justin's.

Here

you

know

the

cop's

folks

release

at

their

own

cadence

and

own

interval

right.

So

that's

that

their

sub

project

that

can

do

their

own

things.

C

So

it's

up

to

it's

up

to

folks

to

determine

whether

or

not

they

want

to

promote

it

to

be

a

first-class

thing

as

part

of

kubernetes

they'll

be

incorporated.

If

so,

you

know,

you

probably

should

make

sure

that

it's

at

a

certain

age

and

stage

when

we

want

to

do

this

and

then

then

to

be

part

of

the

really

spunda

love

kubernetes,

proper

yeah.

C

So,

for

somebody

who

manages

separate

projects

outside

of

kubernetes,

proper

I,

absolutely

love

go

releaser

yeah.

We

have

our

own

travis,

build

artifacts

and

set

up

for

outside

of

kubernetes

proper,

so

some

of

the

stuff

we

do

for

arc

interest

and

ibly

and

for

other

things

that

help

your

products,

create

it's

actually

a

very

nice

facility

to

have

the

auto

CI

set

up

and

build

and

published

artifacts

as

long

along

with

the

entire

release

chain

of

dev

notes

and

everything

else

together.

C

C

D

A

E

So,

as

Chris

I

implemented

this

for

GCE,

this

is

just

kind

of

presenting

the

design,

so

we

can

get

feedback

on

it

and

you

ways

to

improve

it.

It's

kind

of

a

wanted

to

present

it

as

a

suggestion

for

other

cloud

providers

that

could

follow

this

pattern,

but

it's

not

going

to

be

like

a

defect

away.

We're

going

to

be

implementing

it.

Karan

did

mention

that

he

was

going

to

switch

over

his

terraform

controller

to

implement

this

pattern

as

well,

so

to

start

I've

put

a

link

to

the

design

doc

in

the

meeting

notes.

E

So

if

you

want

to

follow

along

there,

you

can.

This

is

why

they're,

basically

just

copied

and

pasted

from

there

and

there's

links

to

the

PR

that

I

have

that

implemented

all

of

this.

So

the

objective

here

was

to

modify

the

GCE

actuator,

so

I

could

handle

a

configurable

kubernetes

versions,

OS

image

and

startup

scripts.

E

So

the

way

it

was

before

this

PR

got

merged

in

the

GCE

actuator,

it

would

compile

a

hard-coded

script

template

which

would

install

kubernetes

on

Ubuntu,

and

so

basically,

if

you

wanted

to

ever

change

the

script,

you

would

have

to

go

into

the

go

file,

change

the

template

and

then

recompile

and

redeploy

the

entire

machine

actuator

so

that

wasn't

very

user

friendly

I

couldn't

really

change

things

easily.

So

you

wanted

to

switch

to

using

a

structured,

config.

F

E

E

And

this

would

do

a

few

things

for

us:

we'd

be

able

to

support

multiple

target

os's

without

having

to

edit

the

controller

binary.

We

could

use

pre-loaded

images

in

this

way.

We

would

just

have

a

separate

config

that

would

specify

this

image

and

then

the

startup

script

would

just

skip

any

of

the

installation.

Steps

admins

would

have

a

list

of

vetted

setup

combinations.

E

The

idea

here

was

that

we

would

have

a

Hamel

file

that

would

basically

list

out

all

the

configs

and

so

then

admin

to

be

able

to

see

what

we

support

and

they'd

also

be

able

to

bring

their

own

OS

and

machine

setup

and

just

be

able

to

add

it

to

this

yellow

file.

Instead

of

having

to

rebuild

their

own

controller.

E

Right

so

to

achieve

all

this

I

added

a

few

types

they

live

in

this

machine

setup

package

here

the

base

type

is

the

config,

so

it

has

a

slice

of

config

params,

an

image

and

the

metadata

and

right

now

the

metadata

just

contains

the

startup

script.

These

config

params

are

the

OS

name,

roles

and

version

info,

and

these

all

have

corresponding

fields

in

the

machine

object.

E

E

So

we'll

have

multiple

of

these

configs

contained

in

a

config

list

and

the

way

whenever

you

want

to

spin

up

a

machine

will

extract

the

the

OS.

The

roles

and

the

version

info

out

of

the

machine

object

to

create

params

object

and

then

we'll

iterate

through

these

configs

and

try

to

find

one

that

has

a

matching

params

and

if

we

find

a

match,

then

we

know

we'll

be

using

that

image

and

metadata

for

the

machine

that

we're

trying

to

setup

and

if

you

don't

find

a

match.

E

E

So

the

way

it

works

is

that

you

would

have

a

config

watch

whenever

you

wanted

to

make

a

new

machine.

You'd

have

to

call

a

method

called

valid

configs,

which

would

return

two

valid

configs

right

now.

It

just

looks

at

the

path

grabs,

the

amal

and

parses

it

out

into

the

configs

one

of

the

future

optimizations

we

could

do

here

and

the

reason

we

set

up

this

config

watch

was

instead

of

having

to

parse

the

amal

each

time

we

wanted

to

create

a

machine

and

each

time

we

needed

to

look

up

a

config.

E

E

So

this

is

just

kind

of

a

temporary

work

around

until

we

can

get

rid

of

the

GCP

deployer.

Once

we

have

actual

bootstrapping,

we

can

create

this

config

map

as

part

of

that

process

and

yeah.

And

so

then,

this

volume

mounted

file

is

what

we're

gonna,

what

the

master

is

going

to

be

using

whenever

it's

provisioning

new

nodes.

E

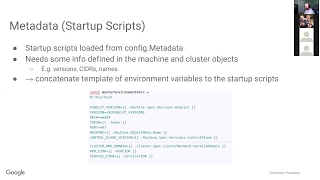

And

for

the

startup

scripts

they're

loaded

from

the

config

metadata,

but

they

need

some

of

the

information

that

are

in

the

machine,

machine

and

cluster

objects,

so

currently

I'm

just

concatenate

in

this

template

of

environment

variables

to

the

beginning

of

startup

scripts.

This

isn't

an

ideal

situation

because

it

limits

it

requires

the

users

to

have

bash

start

at

scripts.

E

So

one

of

the

alternatives

was

to

put

these

values

into

the

GCP

metadata,

but

that

would

make

this

extremely

GCE

like

specific.

So

another

alternative

was

just

to

have

the

startup

script

itself

be

a

go

template,

so

it

could

access

these

these

values

and

then

it

would

just

be

compiled

in

that

way,

and

then

they

can

use

they'd

have

more

flexibility

in

what

language

they

want

to

write

their

script

in

and

it

would

be

more

extensible

to

other

cloud

providers

as

well.

E

And

so

I

added

a

few

command

line

flags

one

command

line

flag

when

to

the

machine

controller

and

one

to

the

GCP

deployer

and

this

flag

just

passes

in

the

Amol

file

location

so

that

they

can

fig

watch

can

be

created

to

look

at

this

file

in

the

machine

controller.

It

should

always

be

that

volume

mounted

path

and

then

in

the

GCP

deployer

it's

just

wherever

you

save

that

file.

E

So

some

of

the

future

work,

as

I

mentioned,

using

a

better

solution

for

the

environment

variables

for

the

startup

scripts.

We

want

to

create

the

or

convert

the

gamma

file

format

to

an

API

type,

so

we

can

have

versioning

for

it

and

then

also

use

the

file

watcher

or

they

can

fig

watch

and

I

think

that's

all

I

have.

D

E

Yeah,

so

here's

the

machine,

setup,

config

CMO.

This

is

the

one

that

I

added

so

here's

the

list

they're

starting

with

the

machine

params.

This

is

for

Ubuntu

1604

LTS

for

the

master,

and

then

we

have

different

other

versions

that

it

supports.

So

if

you

wanted

to,

for

example,

if

your

startup

script

would

support

multiple

kubernetes

versions,

you

would

just

create

a

new

like

copy.

This

put

another

machine

parameter

and

just

change

the

versions

to

whatever

it

would

support.

E

This

image

is

the

full

path

that

you

can

find

it

on

JCP,

and

so

it's

just

the

image

family.

It

can

be

a

specific

custom

image

if

that's

what

you

wanted

to

use

also,

and

then

here's

the

startup

script

down

here,

so

the

machines

yeah

mol

this

and

they

configs.

This

OS

has

to

match

the

machines

OS.

So

this

is.

E

This

can

basically

be

arbitrary,

but

you

know

for

clarity's

sake,

you

can

just

make

it

the

family

name

or

if

it

was

like

a

custom

image,

you

could

say

Ubuntu

1604

custom

or

something

like

that,

though.

As

long

as

this

OFS

can

be

found

in

that

configs

OS,

then

that's

oh

good,

here's,

the

versions

that

it

pulls

out

and

also

the

roles.

So

these

three

things

together,

what

gets

pulled

out

to

make

the

machine

params

when

we're

searching

for

the

match?

C

I'm

kind

of

I'm

new

to

this

area,

but

it

seems

like

you're

pretty

much

doing

provisioning

on

the

fly

is

what

it

sounds

like,

because

otherwise

you

wouldn't

specify

the

parameters

for

some

controller

to

rectify.

On

the

back

end.

Is

there

a

reason

why

you'd

want

to

support

this

capability

versus

saying

you

did

explicitly?

Have

the

other

folks

bake

the

images

proper

with

a

separate

tool

like

separation

concerns

right,

because

you're

basically

conflating

the

notion

of

a

machine

along

with

provisioning

of

its

course

and

components.

C

Sorry,

what

was

that

last

that

you

said

you're

conflating

the

notion

of

a

machine

with

the

provisioning

of

its

good

individual

components.

So,

like

the

the

provisioning

aspect,

is

the

thing

that

I'm

I'm

wondering

about

because

you're

a

machine

could

be

pre-baked,

as

you

mentioned

earlier

on.

This

is

to

provide

the

ability

to

do

provisioning

as

part

of

it.

E

E

D

Guess

you

might

have

I

think

you're

you're

pointing

to

him.

It

might

be

more

about

to

know

how

the

API

is

structured

or

how

our

don't

lose.

Object

is

actually

yeah

he's

actually

taking

care

of

both

provision

in

the

infrastructure

right,

creating

the

machine

and

also

making

sure

everything

like

installing

everything.

If

it's

not

there,

you.

C

H

C

Yeah

I

mean

I'm,

just

wondering

you

do

you

want

to

support

this

because

the

pieces

that

you

adhere

in

a

long

term

of

being

able

to

support

these

individual

components

that

you

want

to

bake

into

your

image

could

be

computationally

expensive

and

could

expand

or

shrink

based

upon.

However,

people

want

to

make

their

images

it's

a

wonderland

out

there,

so

GCE

can

do

whatever

they

want

to

do

and

control

that

environment,

but

Red

Hat

might

want

to

do.

A

At

that

point

to

hand

us

back

a

valid

kubernetes

node,

and

that

is

I,

think

the

only

contract

we

really

care

about

is

that

they

give

us

a

valid

kubernetes

node

that

ostensibly

meets

the

version.

Requirements

like

it

can

be

patched

versions

or

whatever,

but

ostensibly

meets

that

kind

of

requirement.

Let.

A

C

A

Correct

the

only

thing

we

will

probably

do

later

on,

as

is

when

we

actually

get

the

note

object

back

in

the

Capades

API.

We

may

check

like

the

cubelet

version,

is

the

incorrect

version

like

it's

reporting,

though

it

is

1.9,

dot,

4

and

if

I'm

not

even

sure,

if

it

reports

contain

a

runtime,

but

we

would

probably

verify

that

as

well.

G

Yeah

out

of

curiosity,

why

do

we

need

to

even

expose

this

kind

of

versions?

Why

does

it

matter?

I

mean

I?

Guess,

assuming

that

the

author

either

created

an

image,

was

whatever

versions

they

decided

to

create

and

as

long

as

it

satisfies

some

API

from

a

kubernetes

perspective,

why

does

one

has

to

specify

you

know

container

runtime

versions

at

the

top

level.

E

A

A

Going

so

this

is

yeah

more

of

a

question

with

the

machines

object

is

not

this

mapping,

given

that

the

versions

are

there,

I

think

having

the

versions

appear

in

the

mapping

makes

sense,

because

you

may

need

to

install

a

different

version

of

docker

a

different

way.

So

you

may

need

a

different

script

or

even

just

point

to

a

different

image

that

already

has

that

version

installed.

So

the

question

going

back

to,

why

does

this

even

exist

in

the

machine?

A

I'm

not

entirely

certain

on

what

the

needs

for

that

was,

but

being

able

to

lock

into

certain

versions

of

docker.

I

can

definitely

see

being

helpful

from

a

just

support

ability.

Like

I

know,

all

my

machines

are

running

on

this

version

of

docker,

because

that's

all

I

support

given

given

the

evolution

of

this

config

map,

I

see

how

that

can

be

easily

just

put

in

here

and

not

have

to

be

specified

on

the

machine

when

you

have

like

because

it

at

first

we

had

just

the

Machine

as

the

input

and

one

generic

install

script.

A

That

would

take

the

version

of

docker

from

the

machine

and

try

to

install

that

version

from

some

apt

repo,

and

this

allows

more

flexibility

of

just

having

multiple

being

able

to

easily

support

multiple

images

that

are

already

set

up

with

versions

of

docker

or

even

multiple

scripts

that

are

hard-coded

to

so

to

install

certain

versions

of

docker.

And

it

may

be

something

we

need

to

revisit.

D

You

know

kind

of

a

changed

version

on

the

object

and

the

controller

reconciles

you

get

down

on

that

version.

If

you

want

to

have

that

fine,

fine,

grained

control

over

that

particular

part

of

the

notice

software

that

you're

updating,

for

example,

it

could

be

upgrading

just

a

continual

runtime,

nothing

else

and

that's

kind

of

like

if

you

take

a

look

at

this

sort

of

these

other

kind

of

a

use

case

as

well.

It

kind

of

makes

sense.

You

know

it's

kind

of

lease

provides

an

interesting

interface.

I

Can

I

guess

a

clarifying

question,

so

I

mean

how

I

imagine

this

being

used?

Is

that

probably

will

start

off

with

one

of

these

objects

with

multiple

sets

of

machine

params

for

the

different

cubelet

versions,

for

example,

and

that

the

script

would

that

up

and

install

the

correct

cubelet

version,

but

down

the

road?

We

might

pre-baked

images

for

each

of

the

different

configurations

more

like

what

Tim

described

and

just

be

able

to

very

rapidly

install

exactly

the

particular

combination

where

we'd

only

have.

C

I

was

just

separating

the

concerns

so

like

I,

envisioned

setting

up

the

VM

pre-baking

the

image

and

just

referencing

the

images

as

as

you

know,

any

person

can

make

the

images

in

any

way

they

want

to,

because

there's

a

million

tools

to

do

that,

and

they

set

up

the

stack

right

and

then

just

reference

that

image

versus

taking

on

that

domain.

Space

yeah.

I

I

mean

there

are

I,

think

you're

always

gonna

need

to,

even

if

it

becomes

very

short,

but

it

is

certainly

convenient

to

not

have

to

fake

it

on

every

minor

cubed

up

version.

I

would

I

would

say,

particularly

for

CI

right.

If

we

want

to

start

doing

CI

here

where

the

cubelet

version

is

per

PR

or

an

IV

Berkeley

up

for

revision

of

the

PR,

then

you

definitely

want

you

don't

want.

We

don't

I,

don't

think

I

suggest

we

don't

want

to

think

an

ami

/

PR

or

in

the

trip

yeah.

E

H

For

Wordsworth

we've

encountered

something:

we've

done

something

quite

similar.

That's

on

the

redhead

side,

with

a

solution

that

we

have

that's

work.

It's

gonna.

It's

interrupts

it's

working

with

this

API

effort

as

well

like

we

integrate

sort

of,

but

we

have

a

layer

above

and

we

encountered

the

similar

issue

with

what

we

called

cluster

versions

and

they

were

just

pre

baked

images.

Or

do

we

assume

that

everything

in

there

is

just

ready

to

go

in

it?

A

H

C

H

C

A

Justin

brought

up

a

key

use

of

CI

and

that's

something

we

definitely

wanted

to

support

and

we

didn't

want

to

hamstring

people

by

forcing

them

to

pre

build

every

image.

For

every

doctor.

We

want

to

be

able

to

support

a

variety

of

use

cases,

even

though

in

for

GC

and

for

where

we

support

we're,

probably

gonna,

go

heavily

down

the

pre-baked

image

route.

J

The

control

plane

version

or

the

version

they

can

be

somatic

semantics

semantic

version

ranges.

So,

for

example,

this

image

has

support

kubernetes,

1.9,

X

or

or

support

docker

version,

1.2

12x,

or

something

like

this

all

version

between

1.12

and

I,

don't

know

how

they

call

it,

but,

for

example,

one

1.16

or

something

or

something

like

this,

so

I

think

this.

The

better

approach

then

just

simply

start

calling

like

one

a

single

value.

E

A

Yeah,

one

of

the

other

things

that

we

bet

this

brings

us

is

like

a

clip

like

if

you

only

give

a

cluster

and

like

administrator

access

to

this,

but

you

allow

other

users

to

be

able

to

create

machines.

For

some

reason,

if

you

have

an

explicit

list

of

versions

like

this

supports

one

dot,

9.41

dot

9.5

and

your

team

hasn't

qualified

19.6,

yet

it

can

prevent

other

users

from

creating

1.9

dot

six

nodes

until

the

cluster

administrator

updates

us

and

says:

ok,

yes,

we

actually

support

this.

A

No,

and

so

that

was

another

use

case,

but

there's

definitely

ways

to

just

repeat

specific

point

matches

and

I

think

version

ranges

and

version.

Wildcards

are

reasonable

enhancements.

Once

we

get

a

little

more

solid

on

this

and

I

would

want

to

have

this

actually

converted

into

a

version

API

type

before

we

start

adding

stuff

like

that.

D

I

Would

like

to

bring

up

one

thing,

which

is

the

bash

issue

it

is.

It

is

hard

to

get

things

robust

in

bash.

In

my

experience,

like

you

know,

like

the

curl

has

to

be

wrapped

in

a

retry

like

everything

goes

wrong.

Every

single

command

fails

and

I,

don't

know

if

we

can,

if

we

can

do

anything

like

what

we've

tried

to

do

in

cops,

is

try

to

push

people

towards

like

darker

images,

but

of

course

we

have

to

get

the

first

thing

up.

So

we

have.

I

E

E

And

so

this

is

where

the

environment

variables

are.

So

basically

there's

this

metadata

params

here.

So

if

we

just

have

the

startup

script,

be

a

go

template.

They

can

just

use

any

of

anything

in

here

that

they

need

and

just

add

those

as

the

go

template,

and

that

way

they

can

use

a

different

scripting

languages.

That's

what

they

want

to

do.

I

A

We

could

we

shouldn't

shy

away

from

trying

to

do

better,

because

I

I

really

don't

like

that.

It's

forcing

us

to

use

batch.

Ideally,

we

would

just

be

able

to

populate

or

to

find

some

sort

of

structured

parameters

to

the

script

and

say

here

is

like

a

Hamel

serialization

of

the

structure.

Read

it.

However,

you

like

do

whatever

you

want

and

make

it

work.

I

We

have

a,

we

have

a

the

bash.

Script

basically

has

a

curl

to

download

the

magic,

reliable

tool

that

has

retry

logic

built

into

it

and

written

in

go,

but

that

I

think

what's

good

about

this.

Is

it

lets

us

figure

that

out

later

and

it

doesn't

like

some

people

really

want

to

use

chef

on

their

machines

and

we're

like?

No,

you

can't

really

doesn't

really

work,

but

this

the

bash

is

the

sort

of

lowest

Combinator.