From YouTube: Kubernetes SIG Multicluster 2022 Feb 8

C

Yes, looks great. Okay, so hi, I'm Donald Hunter and I work at Red Hat, and in collaboration with some IBM Research colleagues (I think Eti is on the call as well) we are exploring a number of hybrid cloud use cases, which I suppose is the best umbrella to describe them. I think all of these use cases start to strain the definition of a cluster set the way it's currently defined in the MCS spec, and certainly, I think, break the concept of namespace sameness. So, just as an outline, I'll describe a little bit of the motivation for what we're trying to achieve and then talk about some of the challenges I think we face with the current MCS spec as it stands.

C

I'll give you a little bit of detail, just sketch out the way it looks from the perspective of a number of use cases, and then move on to at least sharing some ideas about proposals for moving forward. At any point, please do ask questions. I'm interested to hear what conversations have happened in this space before, what may have led to existing MCS decisions, and whether or not what we're talking about makes sense in the context of MCS.

C

Okay. So, from a motivation perspective, I think one of the key points that we've started to explore with hybrid clouds is that we need autonomy for multiple DevOps teams to carry on the way they're already functioning with single clouds and with multiple clouds or multiple clusters, where they potentially have deployment namespaces that have already been allocated to them. They want to operate within those namespaces.

C

So, from what I understand of the MCS capabilities at the moment, this bumps up against a number of limitations. The way I see it, the cluster set is really defined as a homogeneous cloud, and sharing is blanket across the cluster set, with namespace sameness assumed and possibly even required within the cluster set. I'm not sure if I would go as far as saying it's required, but it's certainly assumed.

A

There's a little nuance to both of those points, and we've been talking about it. I don't think a cluster set is a homogeneous cloud. Yes, namespace sameness is required, but that doesn't mean that the namespace needs to be in every single cluster. It just means that, for the clusters it's in, it's treated the same.

A

"The same" can be that, in all the clusters it's in, the namespace is not multi-cluster aware. What we don't want is a situation where a namespace means one thing in clusters A and B and a different thing in clusters C and D, because that makes it really hard to reason about. It's totally fine for a namespace to be in every cluster and mean a different thing in each cluster, so long as they are basically cluster-local namespaces, or for a namespace not to exist in a significant portion of clusters. That's absolutely fine, too.
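
The rule described above can be sketched as a small check. This is only an illustration: the data model (a mapping from cluster name to `{namespace: owner}`, where "owner" stands in for what the namespace means in that cluster, plus a set of multi-cluster-aware namespaces) is an assumption made for this example and is not part of the MCS API.

```python
from collections import defaultdict


def sameness_violations(clusters, multicluster_namespaces):
    """Return namespaces whose meaning differs across clusters.

    clusters: {cluster_name: {namespace: owner}} (hypothetical model).
    multicluster_namespaces: namespaces that are multi-cluster aware.
    """
    owners = defaultdict(set)
    for namespaces in clusters.values():
        for namespace, owner in namespaces.items():
            owners[namespace].add(owner)
    # Cluster-local namespaces may differ freely; only multi-cluster-aware
    # namespaces must mean the same thing wherever they exist.
    return sorted(
        ns for ns, who in owners.items()
        if ns in multicluster_namespaces and len(who) > 1
    )


clusters = {
    "a": {"payments": "team-pay", "scratch": "alice"},
    "b": {"payments": "team-pay", "scratch": "bob"},
    "c": {"payments": "team-other"},
    "d": {},  # a namespace may simply be absent from some clusters
}
print(sameness_violations(clusters, {"payments"}))
```

In this toy data, `scratch` differs between clusters but is cluster-local, so it is not reported; `payments` is multi-cluster aware and means something different in cluster `c`, so it is.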

D

Donald, sorry, this is Tom. From past discussions, from what I recall, what you're trying to achieve is that the namespace may be named differently in different clusters. But is it true that it's still actually the same logical entity, and it's just named differently?

C

I'm not sure it's as simple as making that statement, no. I think one of the challenges that we're encountering, or exploring, is a brownfield environment where you've got clusters that you want to incorporate into a hybrid cloud. In other words, you want private trust between those clusters, rather than having to expose things publicly for interconnect. There's a high chance of namespace overlap, which you need to deal with.

C

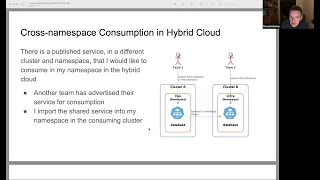

So, for example, here there's a published service, provided by a different team in a different namespace, that I want to consume in my existing namespace, from my existing workloads that I deploy in my DevOps process, without asking either me to redeploy into a new namespace or the other team to deploy into a new namespace. We have a namespace difference that needs to be resolved.

C

Yes, I probably could arrange to deploy a namespace that matches my existing workload namespace, but I need to do it in such a way that the originating namespace... I would prefer not to have to redeploy my application to be able to consume something that is provided in both my local and remote namespaces. I don't know if this is something that MCS can achieve today, but it's something that we certainly want to try and achieve.

A

That first use case is actually something that MCS mostly supports today. We did make the decision to require changing the service name from cluster.local to clusterset.local, just so there was no accidental changeover; basically, as a consumer, you opt in. But aside from that, yes, you can absolutely spread the service across as many clusters as you want.

C

Yeah, I think the opt-in to DNS naming or service advertisement is potentially one of the challenges. It feels like you have to reconfigure and redeploy your workloads in order to consume something that has moved. If that was a control choice in MCS, I think that would be a useful thing to consider.

A

Yeah, no, that's a good point. It also just mechanically gets difficult, because your DNS implementation may be different for multi-cluster than for single cluster. But also, to be clear, it's entirely possible that you just deploy your service consuming the multi-cluster [name] from the start, and it's just deployed in your local cluster. That's kind of what we might recommend for future compatibility: just use clusterset if you are interested in the multi-cluster world.

A

Oh, then that existing service could be exported. It doesn't impact the existing service at all. MCS is entirely additive, right? So in whatever cluster is exporting that service, it would still be available as cluster.local, no change, but by exporting it, it now becomes available elsewhere as clusterset.local.
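
To make the additive naming concrete, here is a minimal sketch of the two DNS names involved. The name layouts follow the usual `<service>.<namespace>.svc.cluster.local` and `<service>.<namespace>.svc.clusterset.local` forms; the `resolvable_names` helper and its two boolean parameters are hypothetical and only model the behaviour described in the discussion.

```python
def cluster_local_name(service, namespace):
    """Single-cluster DNS name; unchanged when the service is exported."""
    return f"{service}.{namespace}.svc.cluster.local"


def clusterset_name(service, namespace):
    """Multi-cluster DNS name that consumers must opt in to."""
    return f"{service}.{namespace}.svc.clusterset.local"


def resolvable_names(service, namespace, *, has_local_service, imported_here):
    """Names a workload in one cluster could use (hypothetical model).

    has_local_service: a plain Service exists in this cluster.
    imported_here: the service was exported somewhere in the cluster set
        and is imported into this cluster.
    """
    names = []
    if has_local_service:
        names.append(cluster_local_name(service, namespace))
    if imported_here:
        names.append(clusterset_name(service, namespace))
    return names


# Exporting adds the clusterset name without removing the local one.
print(resolvable_names("db", "shop", has_local_service=True, imported_here=True))
```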

A

Well, I mean, or you can create services that represent them. You're right, this doesn't solve all those use cases, and I'm not really sure it necessarily should. At some point, the Kubernetes API is incredibly powerful and you can do whatever you want with it. The set of things we can easily automate and make trivial needs to have some kind of bounds.

A

With MCS, we could potentially extend it to support importing services from other environments that have no cluster set awareness. I'm not necessarily opposed to that. I think that would need to be an add-on, and we'd need to be very careful, because the other risk is that we try to make MCS too powerful and the surface gets massive. Then it's no longer easy to use, and it's really hard to actually...

A

Well, I mean, I think it needs its own URL. If it's not going to be part of the cluster set, and it doesn't want to opt into sameness, it needs to provide its own identity, right? So I want to be clear, too: I think the Gateway API is the right answer here.

A

MCS, right, is just literally stretching the Service object across clusters. It's not clever. If you want any configuration, if you want traffic shaping, traffic splitting, advanced routing, any L7 capabilities, Gateway's the answer to all of those, and it would live above MCS. MCS just says: these two services are in these two clusters, and I want them to be considered the same service by anyone who can consume them.

A

It does that as well, but only with the same capabilities you get with Service, right? You get a cluster IP; it's not intelligent. You don't get traffic shaping, you don't get any layer 7 routing capabilities; you get a cluster IP. So, if all you want is a cluster IP service that you can call from anywhere...

E

The focus of MCS is on services with backends split across clusters. Not that it cannot address services that are external or services within a single cluster, but those can be tackled through other means, including the Gateway API. And you would perhaps like to see a stronger case if we want to extend the MCS scope to include those services, because at the moment you're suggesting that those can be addressed via other means and not via the MCS API.

A

Yeah. Has it been thought about? I don't know how much it's been thought about, but that seems pretty reasonable. I guess what it comes down to is that MCS does all these things automatically because it has hard assumptions about how things work. The idea of being able to import, with MCS, some external service that doesn't otherwise adhere to namespace sameness...

E

So even if the Gateway API has a class that exports an L4 service, it is not doing service advertisement, which is what MCS is doing. So maybe it would be useful to see MCS and Gateway as complementary, in that MCS can do service export for all services regardless of domain name, which is more appropriate for MCS to do, and Gateway can do load balancing but not export of services. I feel like these are two separate functions.

A

Anyway, yeah, I think this probably needs more discussion. I guess the concern, again, is that MCS is a narrower API and makes a lot of assumptions, and if we're saying that we want it to do more than that, I wonder if MCS is the right home for it. If what's missing in Gateway is service discovery or service advertisement... Donald, you threw out the idea of an extension.

A

I think maybe that makes sense; maybe there's a way to say "advertise this gateway". Because MCS doesn't only do advertisement, that's just a piece of it, I wonder if it's really the right place for that to live, if we'd basically be saying: don't do most of what MCS does, just do this service advertisement, and most of what MCS does would then be handled by Gateway.

F

My perspective is that some of the concepts that MCS has introduced, like ServiceExport and ServiceImport, would be useful in the shape of a gateway guide, to do something like advertising a catalog of services which could be selectively imported and consumed. So not the north-south case, but the Gateway API as the east-west base.

A

Right, yeah. Also, I just want to throw out that there's absolutely nothing stopping you from creating a ServiceImport that actually points at a gateway. I don't know if it's something that we want to encode in the spec, but it would definitely work; it basically just gives you an IP with a name. Although encoding that in the spec gets scary, because then you start looking at things like ExternalName services, which have their own warts.

A

That's just the L7 gateway class, right? Yes, most Gateway use cases today sit in front of some very heavy infrastructure, but I don't think that is at all a characteristic of the API. I think that's just what the use cases that drove the API are. I think the API is pretty lightweight, if you want it to be.

C

Yeah, no, it's an incredibly useful discussion, and I think this is what I was just starting to realize as I was reading more about Gateway: that there's definitely some meshing between what MCS is doing and what Gateway can do in future. So I think this is a conversation that we'll come back to.

C

Yeah. I've put a lot of thought into whether or not we can move forward effectively and retain namespace sameness, and there are a number of cumbersome ways we could potentially do it. But it seems to me that, from a simplicity perspective, and from what expectations are in a relatively modestly sized cluster set, having some degree of namespace separation might be helpful.

C

Some of that is just with some simple policy, and the policy would be around defining the behavior that you want for service import. I was initially thinking just on the service import side, but potentially also on the service export side. "Import all" is kind of the current behavior; then "import only from specific namespaces"; then "import with some form of namespace mapping", to overcome existing namespace differences; and then "no automatic import at all". Those were the layers of behavior that I could envisage.
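
The four layers of import behaviour listed above could be modelled roughly as follows. This is a sketch under stated assumptions: `ImportPolicy`, `ImportRule` and `local_namespace_for` are hypothetical names invented for illustration, and nothing like them exists in the MCS API today.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class ImportPolicy(Enum):
    ALL = "all"                          # current behaviour: import everything
    FROM_NAMESPACES = "from-namespaces"  # import only from listed namespaces
    MAPPED = "mapped"                    # import with namespace mapping
    NONE = "none"                        # no automatic import at all


@dataclass
class ImportRule:
    policy: ImportPolicy
    namespaces: frozenset = frozenset()          # used by FROM_NAMESPACES
    mapping: dict = field(default_factory=dict)  # exported ns -> local ns


def local_namespace_for(rule: ImportRule, exported_namespace: str) -> Optional[str]:
    """Return the local namespace an export lands in, or None if not imported."""
    if rule.policy is ImportPolicy.ALL:
        return exported_namespace
    if rule.policy is ImportPolicy.FROM_NAMESPACES:
        return exported_namespace if exported_namespace in rule.namespaces else None
    if rule.policy is ImportPolicy.MAPPED:
        return rule.mapping.get(exported_namespace)
    return None


# A brownfield consumer maps another team's namespace onto its own.
rule = ImportRule(ImportPolicy.MAPPED, mapping={"team-b": "my-existing-ns"})
print(local_namespace_for(rule, "team-b"))
```

Only the `MAPPED` layer departs from namespace sameness; the other three keep the exported namespace name or import nothing.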

A

Well, the original spec, the early draft, actually did have a mapping concept, and we backed it out because we wanted to try not to have that, not because it was a "don't ever do this". But one of the things that kept coming up is that brownfield is a real problem with MCS, there's no question. If you...

A

[To move] existing clusters to MCS, work is required, one way or another, and that's not ideal. The concern I keep coming back to (and this isn't to say that we shouldn't keep having this discussion and maybe change what we're doing today)... But optimizing for that transition from brownfield to MCS is certainly good.

A

The namespace sameness idea was always supposed to be bigger than MCS. If we can make this assumption, all kinds of things get easier. Some things get harder, but we have a very straightforward answer to those hard things, which is: it sounds like we're describing two separate namespaces, and maybe they shouldn't have the same name. And so, what is actually a better outcome?

E

And Jeremy, I think the other thing is that MCS can become, in my view, a generic service discovery API, right? I still feel that's something that shouldn't be overloaded onto the Gateway API. But in order to do that, it also has to address non-Kubernetes clusters, which don't even have the concept of a namespace, or, you know, brownfield.

E

So if we want MCS to be an enterprise-wide service discovery mechanism, especially for something like retail edge with thousands of clusters, you really cannot expect to have namespace sameness. So having an annotation which is the alias, something like that could even work, right? Here's an annotation where this namespace is called foo, but its actual name is really, you know, foo prime, and then you use foo prime as your alias.
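
A minimal sketch of the alias idea, assuming a namespace object shaped like the usual Kubernetes metadata dictionary. The annotation key `example.com/clusterset-namespace-alias` is purely hypothetical; no such annotation is defined by MCS or Kubernetes.

```python
# Hypothetical annotation key, invented for this illustration only.
ALIAS_ANNOTATION = "example.com/clusterset-namespace-alias"


def clusterset_namespace(namespace_obj):
    """Return the name a namespace goes by across the cluster set.

    Falls back to the namespace's own name when no alias is set, which is
    the plain namespace-sameness behaviour.
    """
    metadata = namespace_obj["metadata"]
    annotations = metadata.get("annotations") or {}
    return annotations.get(ALIAS_ANNOTATION, metadata["name"])


local_ns = {
    "metadata": {
        "name": "foo",
        "annotations": {ALIAS_ANNOTATION: "foo-prime"},
    }
}
print(clusterset_namespace(local_ns))
```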

A

Yeah, I mean, I'm just not sure MCS is the right place for a generic service discovery API. That is a problem, for sure, but it's not necessarily the problem we set out to solve. And I think having a nice, easy way, with a platform-agnostic API, to expose services in Kubernetes that may not even live on Kubernetes is really useful.

A

Yeah, but we could just make one. The other thing is, we have a bunch of MCS implementations (this is actually what I wanted to talk about, so I'll stop in a second). There are a bunch of implementations now, and everything we're talking about with MCS seems to be additive, and I would really like to graduate MCS to beta, so we can call it...

A

I also think that if MCS is beta and we have a simpler API that looks like MCS for generic service discovery, it'll probably be easier to move along with that, because we'll have that endorsement of the basic concepts. So I'm very happy to explore service discovery in general; for sure, I think this is important. I'm just worried that this becomes the infinite problem space, we try to grow MCS too big, and we're perma-alpha.

C

I did have a couple more slides. One of them covers other things that could move towards this, and that is using labels and selectors as an alternative to relying on namespaces as the unit of policy enforcement. And then (I know this is somewhat outside of MCS, and much more like a Submariner implementation, or possibly some of the implementations that Google has for fleets) you actually have some kind of active broker or control cluster that decides what gets shared.

A

Yeah, that's a really good question. To the first question, "is there any work going on?", I think the answer right now is no. Should we move ahead in this forum? Absolutely. The history of SIG Multicluster started with basically trying to define the cluster registry and all those pieces, and it was too broad. So with MCS we wanted to step back and start with something very purpose-built to solve problems that we know everybody has. I think at some point, a control plane...

C

...balancing perspective, etc. And obviously this would impact multi-cluster network policy, which nobody's even really... well, I'm sorry, Andrew is probably pushing that in a different context. So yeah, it's definitely open for further discussion, and I guess we'd all like to be involved; there's quite a crowd of us. Well, then...

A

But yeah, I've always thought of MCS as being an API that serves two different groups. They need to work together, but one role is not really interacting with both sides, right? There's the consumer, who just cares that the service is exposed to them and their application can consume it.

A

They may care where it comes from, but frankly I don't think that's their role. I think that's more the role of the platform administrator or the service owner, to make sure it gets to the right places. If a service is imported to me as foo, I don't really care if it was originally named bar. I just care that it's now named foo, and that somebody made the decision that that is a good identity for it...

A

Because most users of the MCS API live on either the exporting or the importing side for a given service, they're not necessarily concerned with making sure their exports and imports always line up. "I want my service to be exposed to everyone in my cluster set" is a decision I make as a service owner. I'm not consuming my service as a service owner; somebody else is. That's not entirely true...

A

...when you have a multi-cluster application, it's all one team, and you do want that local exposure: I want my service to spill across a couple of clusters for failover, but it's only consumed by a front end owned by the same team. But that actually doesn't seem very likely to bump up against namespace sameness, because you're talking about a team as a unit here. So, all this to say, kind of getting to the topic I wanted to bring up today...

A

I think this is actually really helpful, in that we have a bunch of problems in this space, and I think there's a lot of future discussion that we need to have around this deck. Thank you, Donald, this is really good. What I'm not seeing here is anything that wouldn't be an add-on to MCS. I'm not seeing anything that suggests that the MCS API isn't a fine starting place.

E

So, yes, I agree that namespace non-sameness can be an add-on, starting with namespace sameness. But one thing that, in essence, is kind of not good with the current API (separate topic) is the almost slightly artificial separation of Service and ServiceImport. For example, in the Gateway API we are suggesting that if the backend reference is a Service, then you're load balancing within the cluster; if the backend reference is a ServiceImport, then you're load balancing across the entire global service. That requires applications to change their manifests from Service to ServiceImport.
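
The distinction described above can be illustrated with a small helper that inspects a Gateway API `backendRef`. The `group` and `kind` values are the usual ones for core Services and MCS ServiceImports; the function itself and the `"cluster"`/`"clusterset"` labels are assumptions made for this sketch.

```python
MCS_GROUP = "multicluster.x-k8s.io"


def load_balancing_scope(backend_ref):
    """Classify a route backendRef by the scope it load balances over.

    backend_ref: dict with optional "group" and "kind" keys. Following the
    Gateway API defaults, a missing group means the core group and a
    missing kind means Service.
    """
    group = backend_ref.get("group", "")
    kind = backend_ref.get("kind", "Service")
    if group == "" and kind == "Service":
        return "cluster"      # endpoints in the local cluster only
    if group == MCS_GROUP and kind == "ServiceImport":
        return "clusterset"   # endpoints across the whole cluster set
    raise ValueError(f"unsupported backendRef: {group!r}/{kind!r}")


local_ref = {"name": "store", "port": 8080}
global_ref = {"group": MCS_GROUP, "kind": "ServiceImport", "name": "store", "port": 8080}
print(load_balancing_scope(local_ref), load_balancing_scope(global_ref))
```

The two references differ only in `group` and `kind`, which is exactly the manifest change the speaker is objecting to.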

E

Ideally, a ServiceImport should automatically create Services, so that applications can treat Services and ServiceImports the same. That doesn't work very cleanly with the current API. So, I don't know if I'm saying this right, but applications have to change the DNS name from cluster.local to clusterset.local.

A

And so that's where we said that just overriding cluster.local, which other platforms have decided to do in the past, can cause problems when all of a sudden you're spraying traffic across regions and you didn't know that was even an option. And so opting in is a safer approach.

E

So, two points on that. One is that opting in could have been done by retaining, let's say, cluster.local, or by retaining the Service object instead of the ServiceImport, but having an additional field in the Service object saying, "oh, by the way, this service is actually a multi-cluster service". So, just extending the Service there.

A

Aside from the whole headless versus cluster IP thing (these are essentially unrelated concepts that are exposed through the same resource), we have NodePort, then we have LoadBalancer, then we have ExternalName. There are a few there that do kind of build on each other, and then there are a few that have almost nothing to do with each other, except that they also rely on a pod selector.

A

So, yes, ServiceExport is another resource that in many cases could just be a label. But you can stick your ServiceExport in the same file as your Service if you want, and now it's nice and easy to list all your exposed services, and we don't have to extend Service, which I think is actually a pro, because Service is a big mess.

A

Yeah, we also talked with Gateway about having the selector just apply to both. I think that's a point of alignment with Gateway. It would be easy enough to say "select all Services or ServiceImports". I agree that it doesn't seem entirely necessary to have specific selectors for ServiceImports and Services.

A

I don't have a really strong opinion either way. Gateway went for the separate selectors. I think network policy should align with Gateway regardless of the choice, but I don't think that necessarily precludes having a separate ServiceImport. The other thing is that things use Services today, and using a multi-cluster service may not always be safe.

A

There are services in the multi-cluster space that do this; Istio is one that already kind of takes over cluster.local. Now, mechanically, MCS is an API with no implementation, right? We have a bunch of implementations, but there's no in-tree implementation. Mandating this behavior and including it as part of the core spec would be really hard, because CoreDNS and kube-dns already work the way they do, and we would basically be saying you have to use multi-cluster services.

A

Yeah, that's a blunt way of aliasing. I mean, some things will get slower. I think it would normally work mechanically; implementations do this, so it's not a problem, but they don't front it with the same Service. So the big problem there is: since the Service that's being exported in a single cluster is untouched, if you were to import it as a Service with the same name, to take it over, how do you actually handle the case where a cluster both exports and imports?

A

That's the real problem we haven't really been able to wrap our heads around. So even if we made it cluster.local, we might want to make it cluster.local with a different name, which doesn't solve the fact that you often have to change your configuration. That's not ideal, and it's why we kind of go with the message: hey, maybe just start with multi-cluster services from day one.

A

Then you don't have to worry about this opt-in. Even if you're only cluster-local, multi-cluster services can be cluster-local. That's not a perfect answer, because a lot of people are using Kubernetes and day one is long past, so that's difficult. But I always worry about optimizing for the transition at the expense of the long term.

E

...cluster set membership to physical clusters. Meaning that maybe you have logical clusters be part of the cluster set, and a physical cluster can consist of multiple logical clusters, so that physical cluster A has got logical cluster A.1, which has these namespaces, and logical cluster A.2, which has these other namespaces.

A

Yeah, so the problem there is that namespace sameness becomes transitive, but awareness of other namespaces doesn't, right? If namespaces one through three are in this cluster set over here, and namespaces four through six are in this cluster set over here, you still can never create namespace one in this second cluster set, because the joint cluster has them both. But this cluster set actually doesn't know namespace one exists. And so you might just want them all to be connected, and then have network policy.
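
The transitivity problem described above can be sketched with a toy model. Everything here is an assumption made for illustration: cluster sets are modelled as sets of namespace names, and a physical cluster that hosts logical clusters from several cluster sets is modelled as a membership set. The helper computes, for each cluster set, the namespace names it can no longer use freely even though it has no knowledge of them.

```python
def hidden_reserved_namespaces(cluster_sets, memberships):
    """Find namespaces a cluster set cannot reuse but does not know about.

    cluster_sets: {set_name: set of namespace names known in that set}
    memberships: {physical_cluster: set of cluster set names it joins}
    Returns {set_name: set of namespace names reserved via shared clusters}.
    """
    hidden = {name: set() for name in cluster_sets}
    for joined in memberships.values():
        for name in joined:
            for other in joined:
                if other != name:
                    # Namespaces of the other set already exist on the
                    # shared physical cluster, so they are taken here too.
                    hidden[name] |= cluster_sets[other] - cluster_sets[name]
    return hidden


cluster_sets = {
    "x": {"ns1", "ns2", "ns3"},
    "y": {"ns4", "ns5", "ns6"},
}
memberships = {
    "joint": {"x", "y"},   # physical cluster split into two logical clusters
    "only-x": {"x"},
}
hidden = hidden_reserved_namespaces(cluster_sets, memberships)
print(sorted(hidden["y"]))
```

In this example cluster set `y` can never safely create `ns1`, because the joint cluster already has it, yet nothing in `y` records that `ns1` exists.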

A

And it's not perfect. We definitely have people saying, "well, what if I want to stitch cluster sets together?", and the blanket answer has been Gateway. But I don't think we have solid community best practices for exactly how, which probably should accompany that if that's the answer.

A

I agree. I think Gateway is designed to be a lot more flexible and have a lot more room for growth than MCS. MCS is kind of the opposite: MCS is super opinionated and simple, whereas Gateway is a lot less opinionated and a lot more flexible. But I imagine that advanced use cases with Gateway are going to have a learning curve. With MCS, we specifically wanted no learning curve, just "make your service multi-cluster", but obviously this has caused problems.

A

We are out of time today, and I want to respect everyone who's got other meetings after this, but this has been an amazing conversation. Maybe we can continue this in two weeks with some more thought. I think this is a really, really good topic. I know that Laura, who wasn't able to make it today, is going to be putting together some final notes on hopefully graduating both MCS and the cluster property proposal to beta in the next week or so. We'll make sure that gets sent out to the mailing list, and if anyone has any comments there, please join in. Hopefully we can continue this conversation, because I think there are some really good points brought up here and some problems we need to solve, which is kind of what the SIG is looking for next anyway. So, yeah.