Description

Kubernetes Storage Special-Interest-Group (SIG) Object Bucket API Standup Meeting - 22 February 2021

Meeting Notes/Agenda: -

Find out more about the Storage SIG here: https://github.com/kubernetes/community/tree/master/sig-storage

A

A

So the reason it was hard to do with the existing model is, one, because only the admin can do it, since the BR cannot do that as it is now; and two, if we were to allow mutation on any one bucket when we share that bucket, we end up in a situation where one bucket has the current data and the others don't. Well, hold on...

C

B

As bucket requests and buckets are bi-directionally bound, you just have a controller that propagates the changes directly from the bucket request to the bucket, and you're done. The problem is that once it ends up on the bucket, you have the same problem as if the administrator did it, which is: what's the source of truth? The self-service aspect of it is not a problem.
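
The propagation step described here can be sketched roughly as follows. This is only an illustration: the `Bucket` and `BucketRequest` classes, the `parameters` field, and the `propagate` function are hypothetical stand-ins, not the actual COSI types or controller.

```python
from dataclasses import dataclass, field


@dataclass
class Bucket:
    """Cluster-scoped object; hypothetical shape, not the real COSI type."""
    name: str
    parameters: dict = field(default_factory=dict)


@dataclass
class BucketRequest:
    """Namespaced object bound one-to-one to a Bucket (hypothetical shape)."""
    namespace: str
    name: str
    bucket_name: str
    parameters: dict = field(default_factory=dict)


def propagate(request: BucketRequest, buckets: dict) -> bool:
    """Copy the request's parameters onto its bound Bucket.

    Returns True if the Bucket changed. Any edit made directly on the
    Bucket is overwritten, which is the source-of-truth question.
    """
    bucket = buckets[request.bucket_name]
    if bucket.parameters == request.parameters:
        return False
    bucket.parameters = dict(request.parameters)
    return True


buckets = {"b-1": Bucket(name="b-1", parameters={"versioning": "off"})}
br = BucketRequest("team-a", "my-br", "b-1", {"versioning": "on"})

changed = propagate(br, buckets)
# An admin edit made directly on the Bucket...
buckets["b-1"].parameters["versioning"] = "off"
# ...is reverted on the next reconcile pass.
reverted = propagate(br, buckets)
```

In this sketch the request always wins, so a direct edit on the bucket does not survive. Choosing which side wins is exactly the source-of-truth decision raised in the discussion.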

A

B

A

Yeah, so one of the approaches we talked about will actually address a bunch of problems that we've been facing. One of the reasons we came up with the approach that we have today is that we wanted to avoid any manual steps or admin-related steps in the process.

A

B

No, no. It's talking about the fact that the admin has to be a part of the process. No, but only once, up front, when they create their bucket class, and they always have to be involved in creation of the bucket class. So there's just a different decision they have to make up front, to say "allow mutations". But who makes the mutation, other than the end user, on the object?

B

Don't have to go down that route, but my argument would be that these things that are going to be represented as first-class objects in the API shouldn't be in the storage class. They should always be on the bucket request, and then you just always set it on the bucket request and you always get what you want.

B

C

B

A

Yeah, so it's good that you brought it up. If you go through the list of things that are configurable... and let's first define what an application developer is. The person who's requesting a bucket is someone who is concerned with how their application works, whose job is to work on their specific application. Now, there are obviously outliers, people who do everything, but that's more the exception than the rule.

A

B

A

B

Can I take a swing? Yeah, go ahead. So the reason something like "object locking enabled" matters is if my application is designed around the assumption that it's going to be able to lock objects, because it can't function correctly otherwise. Say I install this on a particular Kubernetes cluster and it works. When I take the same application and install it on a different Kubernetes cluster, it had better work exactly the same, or I've lost portability, right? So, like, you're not saying...

A

Because there's an even more important reason why the user should not do it: the user doesn't have any visibility into how other namespaces use the bucket, or which applications use the bucket. If a user can go and mutate these policies, and, let's say, a different application was relying on one of these parameters...

B

But the case I'm concerned about is this: if the underlying storage system doesn't support object-level locking, and I try to run my application on that particular Kubernetes cluster, where that particular driver and storage system is installed, it's not going to work well, right? Because I'll get the bucket, my pod will start up, and my pod will begin making object lock requests through whatever the COSI standard way is that we say you should do that.

C

B

They're going to fail, because the guy on the other end doesn't know what you're talking about. You want to have a way of formally saying, "This bucket I'm asking for needs to have this feature that I'm going to depend on." And then, if the cloud can't do it, or maybe the cloud has two different storage systems and it's got to pick the one that can do it... I mean, you know, those kinds of things.
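
One way to picture "formally saying" which feature a bucket needs is a check at provisioning time, so the request fails early rather than the pod failing later. The sketch below is an assumption-laden illustration: the `Backend` type, the feature names, and `pick_backend` are all invented for the example and are not part of any COSI API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Backend:
    """A storage system behind a driver; names and features are made up."""
    name: str
    features: frozenset


class UnsupportedFeature(Exception):
    """Raised at provisioning time when no backend can satisfy the request."""


def pick_backend(required: set, backends: list) -> Backend:
    """Return the first backend that supports every required feature."""
    for backend in backends:
        if required <= backend.features:
            return backend
    raise UnsupportedFeature(f"no backend supports {sorted(required)}")


backends = [
    Backend("basic-store", frozenset({"versioning"})),
    Backend("locking-store", frozenset({"versioning", "object-locking"})),
]

# The cluster has two storage systems; only one can do object locking.
chosen = pick_backend({"object-locking"}, backends)

# A feature nobody supports is rejected before any pod starts.
try:
    pick_backend({"bucket-snapshots"}, backends)
    failed_early = False
except UnsupportedFeature:
    failed_early = True
```

The point of the sketch is only the timing: the mismatch surfaces when the bucket is requested, not when the application first issues a lock call.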

B

C

B

...will work or not work depending on whether the feature is present, then it feels like it needs to bubble up all the way to the top. Assuming that it's not some proprietary thing that only one vendor supports, right? It would have to be something that would be supported across the industry. Right, right.

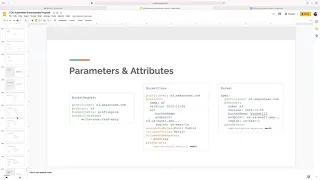

A

Exactly, yeah. This is the list on the left. So coming back to that... let me think about this. What was I thinking? I had a really good point here. Okay, anyway, if we were to look at these options right in front of us, none of them... yeah, this is what I was thinking about. An application works with the data APIs. An application almost never cares about...

A

B

B

...was, if it's something that's supported by multiple vendors and we want to standardize it, that's when it gets promoted. And the reason you do that is because it has to flow all the way through the API, from the user saying "I want this" all the way through to the downward-facing part where the pod says "I got the thing that I asked for." And it's because it changes what the pod can or cannot do. Yeah.

C

C

B

If we don't put that in the spec, then it's the Wild West, right? Anyone can do anything. I don't know if object-level locking is the thing that will be an example of this or not, but it feels like one to me, because who's going to use it, if not the application running in the pod?

A

B

A

A

B

B

C

B

B

A

B

B

There was this theoretical workflow where you want to create a bucket that's initially not public, and you want to run some workloads and sort of fill it up. Then, after it's filled up, you want to flip it to public, so that people, I guess outside the Kubernetes cluster, can start to use it. And yeah.

A

Something like that, you know, you're talking about a manual operation there. Either you don't go through COSI for a bucket like that, or, if you have workloads that are going to fill up something like that, the switching is still manual. It's not like the workload decides to do that. I can't think of a scenario where that needs to be automated.

B

It seems perfectly reasonable to say, "Just go flip the switch on the bucket yourself," because you're already not in Kubernetes anymore. And to be clear, even if it's a greenfield bucket, you can still find the actual bucket through Kubernetes, right? It'll tell you how to go to the actual management plane for that cloud provider and flip the bits you want. So yeah, I am on board with saying you do that outside Kubernetes.

A

Yeah, exactly. Bucket tagging, again, is similar to that: you put tags on the bucket to be able to filter it and all that. I need to figure out what bucket this might be. I don't know what this policy is. Oh, another thing, coming back to anonymous access: there is a more important thing about anonymous access. Anonymous access is a feature that is being deprecated, so people don't want us to use anonymous access at all. It is actually a security...

A

It's easy to get into security loopholes or vulnerabilities using this. It's an ACL, really, for the entire bucket. AWS supports something like three types of authentication: ACLs, IAM, and the token service. AWS wants us to stop using the ACLs. ACLs are, just by design, a catch-all kind of access control, and the lack of granularity makes it really easy for someone to assume that, say...

A

B

D

A

...readable to the whole world, that's only for static provisioning. Ideally, take any database, for instance. You have the database always fronted by some kind of application, and the link between the application and the database is private, but the application is the one that's front-facing.

B

B

I guess the argument there is that you would never create that bucket through COSI. You would just have a bucket, and then Kubernetes would write stuff into it, but you wouldn't automate the creation of that bucket. Which I totally buy, because the bucket is necessarily exposed outside Kubernetes. So why would you create it inside Kubernetes, right? You would create it through the normal way.

A

A

Yeah, so "acknowledge", and then it says "Block public access to buckets and objects granted through new access control lists." Once you do this, there's a permanent, you know, one of these caution signs that shows up above your bucket, saying that this ACL is set and you should start using IAM instead. Even with IAM, you can have a policy where you allow access for everyone, but they themselves discourage it.

B

B

...get buckets and do it. That's why S3 became popular initially, and then it got all these other use cases added to it over the years, and maybe those became more of their core business. But the whole point originally was, "I just need a place to push stuff out on the internet," right?

A

C

B

But there could... I mean, S3 doesn't, but there's no reason that a decent object system couldn't. Sure, the future of object storage is very bright, and stuff like real object-level locking will come someday. Bucket-level snapshots are coming; they already exist.

B

A

A

B

C

C

B

A

Agreed. I was coming more from the point of whose responsibility it would be within the organization to configure. Assuming the application has the provisions to do it, but it needs to be configured, who would be responsible for doing it? Yeah, so like I was saying, I think it's fair to make the delineation between provisioning-time concerns and access-time concerns. Provisioning-time concerns are what we're dealing with here, and access-time...

A

A

For instance, they might want to make the bucket that they create write-only for a particular directory, or not listable, or whatever, and we can configure all of that through the bucket access request. That's the access policy, the authenticated access policy. So again, going back to this delineation, calling it provisioning-time concerns and, like, I/O APIs, I'm...

B

A

Yeah, for that it can be multiple connections, but for that IAM user, right. So if we looked at it that way, provisioning time versus access time (maybe there are better words to put it), again, bucket mutation: since we only deal with provisioning, and bucket mutation is a provisioning-level concern, I'm actually more convinced now that we should not be doing bucket mutation.

A

B

No, I mean, yes, we can always say you do that out of band, right? That's what we do for PVCs. There's a million options you can set on your volumes, and Kubernetes doesn't understand any of them other than the size, the access mode, and the volume mode. Those are the three that matter, and everything else is sort of out of band. So that's a good starting point. But then along came resize on the PVC, where we're like, "Oh, okay."

B

Now you need to be able to take an existing PVC and change its size, and that means we've got to write all these new controllers and all this new logic to deal with that. And someone is going to ask. That will inevitably happen here, where there's at least one thing that you will decide you want to be able to change after the fact.

B

A

A

So there's only one BR and one B, and that BR will be in the user's namespace, and that BR can actually go and change, or will have the options for, the parameters that go into the bucket. The bucket is still in the global namespace, cluster scoped. And for brownfield...

A

A

A

C

B

If I have a greenfield BR in one namespace, and it does the whole provisioning workflow, out pops a bucket. Now I want to share with other namespaces. I envision that there's some controller at some point which says, "Okay, I'm going to take it and copy it from namespace foo to namespace bar." So if it was created in foo, I'm just going to create another BR in namespace bar. I'm using bad example names.

B

Like BAR. But the controller could automate the whole process of duplicating the B, with a different deletion policy and everything, and it could duplicate the BR into the other namespace, and it could cause them to bind. And you could do this all in a very safe, controlled way, with appropriate access control and appropriate prevention of races, et cetera, et cetera. No?

A

B

We started from the presumption that the user can't see outside his namespace, right? So whatever exists out there is invisible to him. So the idea is, you've got to give him something that is visible. And if you were in the greenfield namespace, the thing that you would bind your BAR to would be the BR, because you created it. You picked the name. So to mirror that pattern in somebody else's namespace...

B

You said, well, you just give them a BR that happens to point to the same thing in some brownfield way, and then their BAR creation process is identical. But you're saying no, you actually do it differently if it's not the original: you're given some string and then you just directly refer to it.

A

A

C

A

B

A

B

C

A

B

B

If I have to be able to summon up the bucket name, then you have to wait for a bind to occur before you can fill in your BAR. So I feel like at the very least you're going to have to allow either the bucket, if it's brownfield, or the bucket request, if it's greenfield. And then you're going to have to deal with the ugliness of an API object where it's one or the other.

A

A

A

So a controller will take the BR from the original namespace and create a new BR in the new namespace, wherever it's meant to be shared. So when you create a BR, what does that other BR refer to? It directly has a fixed reference to the original bucket. There's only one bucket instance. So now you have many-to-one.

A

It's only a problem if you're doing that matching algorithm. If you're dynamically provisioning and there's no matching going on, it's not like PVs and PVCs, where a PVC can be matched with one PV, but before the PV is actually matched back to the PVC, someone else could match to this PV.
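
The difference between matching and a fixed reference can be shown with a toy model. This is a deliberately simplified sketch: `find_match` and `bind` are hypothetical helpers, and the real Kubernetes PV/PVC binder is considerably more involved.

```python
def find_match(volumes: dict):
    """Return the name of the first unbound volume, or None."""
    for name, claimed_by in volumes.items():
        if claimed_by is None:
            return name
    return None


def bind(volumes: dict, volume: str, claim: str) -> bool:
    """Record the binding on the volume; fail if someone got there first."""
    if volumes[volume] is not None:
        return False
    volumes[volume] = claim
    return True


# Matching: two claims each look for a free volume before either binds.
volumes = {"pv-1": None}
match_a = find_match(volumes)
match_b = find_match(volumes)                 # same volume: the race window
bound_a = bind(volumes, match_a, "claim-a")
bound_b = bind(volumes, match_b, "claim-b")   # loses and has to retry

# Fixed reference: each request names the one bucket created for it,
# so there is no search step and nothing to race over.
bucket_for = {"br-a": "bucket-a", "br-b": "bucket-b"}
```

With dynamic provisioning, the second mapping is the whole story: the bucket exists because of the request, so the lookup cannot land on something another request also selected.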

A

A

A

A

Yeah, so anyway, wherever that matching algorithm is, it clearly says that the real problem is with that matching. It's not because it's bi-directional; bi-directional by itself is not bad. What's bad is the fact that we rely on that to do this matching algorithm, and matching, I can tell you, stops it from being declarative. It works most of the time, but it doesn't work every time.

B

A

Okay, so is there any other reason? Because if that's the only problem, that's a non-problem, right? You can have the main created BR, the first one, be the one that does this. On clones, we mark it as a clone. We have a special marker, say an annotation.

B

C

B

A

A

B

A

No, we have allowed namespaces in the original BR. When the controller sees that the original BR has been created with more than one allowed namespace, I mean other than the one where it's originally created, then we go and create BRs in those new namespaces. And in those namespaces, say, the BR names are predictable.
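
A rough sketch of that cloning idea follows, using plain dictionaries in place of API objects. Everything here is assumed for illustration: the `allowedNamespaces` and `bucketName` field names, the naming scheme, and the annotation key are hypothetical, not a settled COSI design.

```python
CLONE_ANNOTATION = "example.io/cloned-from"  # hypothetical annotation key


def clone_requests(original: dict) -> list:
    """Build one clone BucketRequest per allowed namespace.

    `original` is a plain dict standing in for the API object. Clones get
    a predictable name and an annotation that marks them as clones.
    """
    source_ns = original["namespace"]
    clones = []
    for ns in original["allowedNamespaces"]:
        if ns == source_ns:
            continue  # the original already lives here
        clones.append({
            "namespace": ns,
            "name": f"{source_ns}-{original['name']}",
            "bucketName": original["bucketName"],  # same single Bucket
            "annotations": {
                CLONE_ANNOTATION: f"{source_ns}/{original['name']}",
            },
        })
    return clones


original = {
    "namespace": "foo",
    "name": "photos",
    "bucketName": "bucket-1234",
    "allowedNamespaces": ["foo", "bar", "baz"],
}
clones = clone_requests(original)
```

Because the clone name is derived from the source namespace and request name, a user in the target namespace can predict what to reference without seeing anything outside their own namespace.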

B

C

C

A

A

A

B

A

B

So the thing I liked about having lots of copies of the B was that, over time, as you decide to share with different people, it's very obvious what to do: create a new copy and expose it to someone. Yeah, you end up with lots of copies of the B, which is the ugly part of it, but every other aspect of it is kind of nice.

B

And furthermore, I want to emphasize that this is what we have done with snapshots. When you want to share a snapshot with multiple namespaces, you have to create multiple copies of the VolumeSnapshotContent and another copy of the VolumeSnapshot in the other namespace, and then let them bind.

B

And that pattern exists today, and again, it's ugly for the same reasons. Having lots of copies of the VolumeSnapshotContent that all point to the same snapshot is weird, because if you get the deletion policy wrong on one of them, somebody can delete a snapshot that's in use by another guy.
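
The deletion-policy hazard can be illustrated with a small model of several copies pointing at one backend snapshot. The `Content` class and the helper functions are hypothetical; they only mimic the "Delete" versus "Retain" behaviour being discussed and are not the snapshot controller's actual logic.

```python
from dataclasses import dataclass


@dataclass
class Content:
    """One cluster-scoped copy pointing at a backend snapshot (hypothetical)."""
    name: str
    handle: str            # identifier of the snapshot on the storage system
    deletion_policy: str   # "Delete" or "Retain"


def delete_content(name: str, contents: dict, backend: set) -> None:
    """Remove one copy; with policy "Delete" the backend snapshot goes too."""
    content = contents.pop(name)
    if content.deletion_policy == "Delete":
        backend.discard(content.handle)


def usable(content: Content, backend: set) -> bool:
    """A copy is only usable while its backend snapshot still exists."""
    return content.handle in backend


backend = {"snap-42"}
contents = {
    "copy-foo": Content("copy-foo", "snap-42", "Retain"),
    "copy-bar": Content("copy-bar", "snap-42", "Delete"),  # the mistake
}

delete_content("copy-bar", contents, backend)
still_there = "copy-foo" in contents
still_usable = usable(contents["copy-foo"], backend)
```

After the deletion, the other namespace's copy still exists as an object but no longer works, which is the broken-but-present failure mode rather than an object vanishing from someone else's namespace.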

B

So you have to be very careful about the deletion policy. But aside from the ugliness of the multiple copies of the object and having to get the deletion policy right, everything else about it is pretty tolerable. Yeah, agreed. And I was trying to push the COSI design in that same direction, because it seems like the least of all evils to me. I agree.

A

Agreed. No, there are two problems here. One, I'm not a hundred percent sure that this one-to-many mapping is going to be just fine. Two is, again, we talked about this allowed-namespaces thing: it gets tricky and it gets weird. Yes, so...

B

B

C

B

C

B

B

C

F

B

...created stuff across a bunch of namespaces, and then a deletion in somebody else's namespace causes an object in my namespace to go away. It's very weird. It's almost better to have just a broken copy, where I still have my object, but it doesn't work anymore because the object is gone.

B

D

B

...would happen with the snapshot today. If you cloned a snapshot to another namespace and then someone deleted it out from under you, you would still have your VolumeSnapshot object. You just couldn't use it anymore, because the snapshot's gone. True. And that's not a horrible failure mode, from my perspective.

A

I can get behind that. So, you see, I'm bringing this up to show that we've been thinking of alternate solutions. Maybe this is not the one that will work, but if we do end up in a situation where an alternate solution is needed, where we need to be able to mutate some option, I think the best answer we have is that the admin can still do it: go to the bucket objects and directly just do it, and we'll react.

B

C

A

A

A

B

A

When they do join, I'm going to preface it by saying that, through all these discussions, it's pretty clear to us that mutation policy is not something we should be supporting. But in case mutation is something that's absolutely needed for a new field that we completely didn't anticipate, we do have an answer.

B

C

C

C

A

A

B

Well, I mean, if that's what they're proposing and we like it, then that's a huge deviation from the current plan, right? That's too much of a deviation? No, I'm not saying it's too much. I'm just saying we'd better find out about that soon and start changing our plans if that's actually the right answer, because it's going to be a huge amount of work.

A

B

B

Oh, I don't know about the discovery aspect of it. That's an interesting question. But I could see that, for workloads where you never needed to share, you could just create buckets in your own namespace and use them in your own namespace and be happy forever, never sharing. And then for ones where you did need to share...

B

You would either force people to create it outside of the cluster and then use the cluster bucket mechanism, whatever that ends up looking like (I'm curious about it), or you'd have a way to promote a namespaced bucket to a cluster bucket through some process to make it shareable, if you wanted to do that.

A

D

A

Yeah, anyway, I think this is a good discussion. There was one other concern I wanted to bring up, which is provisioner registration. We never talked about having an API object for that. CSI has the concept of a CSIDriver and a CSINode. We don't have something similar for buckets, so I had a proposal here. We don't have time today, but I'll bring it up on Thursday, if we haven't...