
From YouTube: Kubernetes SIG Storage 20180524

Description

Kubernetes Storage Special-Interest-Group (SIG) Meeting - 24 May 2018

Meeting Notes/Agenda: https://docs.google.com/document/d/1-8KEG8AjAgKznS9NFm3qWqkGyCHmvU6HVl0sk5hwoAE/edit#heading=h.dru4072fukqt

Find out more about the Storage SIG here: https://github.com/kubernetes/community/tree/master/sig-storage

Moderator: Saad Ali (Google)

Chat Log:

N/A


Right, good afternoon, everyone. This is the May 24th meeting of the Kubernetes Storage Special Interest Group. Remember, this meeting is recorded and public. So, what would you say?

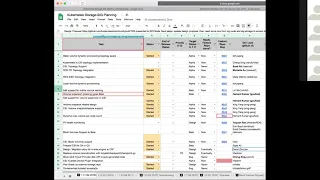

Let's run through our agenda. I'll start with the planning spreadsheet, and then we'll go through any design reviews or any PRs that people want to talk about.


Deep has been working on both the GCE PD and AWS parts of this. Right now he's looking at making some changes to the existing admission controllers to add PV node affinity by default to new PV objects. A PR is out for that and we are reviewing it, and that's probably the most we are going to be able to get in for 1.11; there's still a ton of other topology integration items.
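To make "add PV node affinity by default" concrete, here is a minimal Go sketch of the idea: derive a node-affinity requirement from a PV's zone label. It uses simplified, hypothetical stand-ins for the Kubernetes types (the real API nests requirements under `nodeAffinity.required.nodeSelectorTerms[].matchExpressions`) and the 1.10/1.11-era `failure-domain.beta.kubernetes.io/zone` label; it is not the admission controller code from the PR.

```go
package main

import "fmt"

// zoneLabel is the zone topology label used in the 1.10/1.11 era.
const zoneLabel = "failure-domain.beta.kubernetes.io/zone"

// NodeSelectorRequirement is a simplified, hypothetical stand-in for the
// Kubernetes type of the same name.
type NodeSelectorRequirement struct {
	Key      string
	Operator string
	Values   []string
}

// PersistentVolume is a hypothetical, trimmed-down PV: only its labels and
// a flat list of required node-affinity requirements are modeled.
type PersistentVolume struct {
	Name         string
	Labels       map[string]string
	NodeAffinity []NodeSelectorRequirement
}

// addDefaultNodeAffinity adds a "zone In [<zone>]" requirement when the PV
// carries a zone label and has no affinity yet. It reports whether the PV
// was changed; user-specified affinity is never overwritten.
func addDefaultNodeAffinity(pv *PersistentVolume) bool {
	zone, ok := pv.Labels[zoneLabel]
	if !ok || len(pv.NodeAffinity) > 0 {
		return false
	}
	pv.NodeAffinity = []NodeSelectorRequirement{{
		Key:      zoneLabel,
		Operator: "In",
		Values:   []string{zone},
	}}
	return true
}

func main() {
	pv := &PersistentVolume{
		Name:   "pd-1",
		Labels: map[string]string{zoneLabel: "us-central1-a"},
	}
	changed := addDefaultNodeAffinity(pv)
	fmt.Println(changed, pv.NodeAffinity[0].Values)
}
```

Leaving an existing affinity untouched is a design choice in this sketch, so a defaulting step never fights with what a user or provisioner already set.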


And obviously we need the Kubernetes part to be done as well: a controller, plus plugin changes in the CSI in-tree plugin and Kubernetes interface changes. So just like we first had to get the CSI plugin model in and then make the in-tree changes, I don't think we'll be able to do both of these in 1.11.


Okay, yeah, there's a PR out for review. Yanis has reviewed it and I've tested it. Yogesh is writing some e2e tests for it; he has already written them. So I think we just need an LGTM by today or maybe tomorrow, and we should be ready to merge. But it's been tested, yeah.


There's a PR open, and the CSI proposal was merged. For CSI, I think we've got the 0.3 release. I'm writing code for the CSI implementation of the same thing, but I think these two pieces will go in as separate PRs. I would like to keep them separate: the volume counting for CSI will be a separate PR, and for in-tree plugins there is already a PR open.
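The per-node volume counting discussed here can be pictured as a scheduler-style check: count the distinct volumes each CSI driver would have on a node and compare against that driver's limit. This Go sketch is hypothetical; the `Pod`/`VolumeRef` types, the per-driver limit map, and the rule that a volume shared by several pods counts once are assumptions for illustration, not the logic in the PRs mentioned.

```go
package main

import "fmt"

// VolumeRef is a hypothetical reference to a CSI volume used by a pod.
type VolumeRef struct {
	Driver string // CSI driver name
	Handle string // unique volume handle within that driver
}

// Pod is a hypothetical pod with the CSI volumes it uses.
type Pod struct {
	Name    string
	Volumes []VolumeRef
}

// fitsVolumeLimits reports whether placing newPod on a node that already
// runs the existing pods keeps every driver at or below its limit.
// A volume used by several pods on the node is counted once.
// Drivers absent from limits are treated as unlimited.
func fitsVolumeLimits(existing []Pod, newPod Pod, limits map[string]int) bool {
	attached := map[string]map[string]bool{} // driver -> set of handles
	add := func(v VolumeRef) {
		if attached[v.Driver] == nil {
			attached[v.Driver] = map[string]bool{}
		}
		attached[v.Driver][v.Handle] = true
	}
	for _, p := range existing {
		for _, v := range p.Volumes {
			add(v)
		}
	}
	for _, v := range newPod.Volumes {
		add(v)
	}
	for driver, handles := range attached {
		if limit, ok := limits[driver]; ok && len(handles) > limit {
			return false
		}
	}
	return true
}

func main() {
	limits := map[string]int{"example.csi.driver": 2}
	existing := []Pod{{Name: "a", Volumes: []VolumeRef{{Driver: "example.csi.driver", Handle: "vol-1"}}}}
	newPod := Pod{Name: "b", Volumes: []VolumeRef{{Driver: "example.csi.driver", Handle: "vol-2"}}}
	fmt.Println(fitsVolumeLimits(existing, newPod, limits))
}
```

Keying the count by driver is what makes it natural to keep the CSI counting separate from the in-tree plugins' counting.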


So, there's a bunch of different items that went under this one. The two big items that we're tracking for this quarter are, first, bringing block support to CSI, which is on track, thanks to Vlad. The second big item that we wanted to get in was driver plugin registration with the kubelet. We want to have a standard mechanism by which CSI plugins are registered, so that there's a discovery mechanism for the kubelet.
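As a rough picture of what "a standard mechanism by which CSI plugins are registered" gives the kubelet, here is a minimal Go sketch of a driver registry keyed by driver name. The `Registry` type, its methods, and the socket path are hypothetical; the plugin registry being designed at the time (with socket-based discovery) is more involved than this in-memory table.

```go
package main

import (
	"fmt"
	"sync"
)

// Registry is a hypothetical, in-memory table of CSI drivers known to a
// kubelet, mapping driver name to the endpoint the kubelet should dial.
type Registry struct {
	mu      sync.RWMutex
	drivers map[string]string
}

func NewRegistry() *Registry {
	return &Registry{drivers: map[string]string{}}
}

// Register records a driver. Re-registering with the same endpoint is a
// no-op; a different endpoint under an existing name is rejected so two
// drivers cannot silently shadow each other.
func (r *Registry) Register(name, endpoint string) error {
	r.mu.Lock()
	defer r.mu.Unlock()
	if existing, ok := r.drivers[name]; ok && existing != endpoint {
		return fmt.Errorf("driver %q already registered at %s", name, existing)
	}
	r.drivers[name] = endpoint
	return nil
}

// Lookup returns the endpoint for a driver, which the kubelet would need
// before calling the driver's node service for a volume.
func (r *Registry) Lookup(name string) (string, bool) {
	r.mu.RLock()
	defer r.mu.RUnlock()
	ep, ok := r.drivers[name]
	return ep, ok
}

// Unregister removes a driver, for example when its socket disappears.
func (r *Registry) Unregister(name string) {
	r.mu.Lock()
	defer r.mu.Unlock()
	delete(r.drivers, name)
}

func main() {
	reg := NewRegistry()
	// Hypothetical socket path, for illustration only.
	_ = reg.Register("example.csi.driver", "unix:///var/lib/kubelet/plugins/example/csi.sock")
	ep, ok := reg.Lookup("example.csi.driver")
	fmt.Println(ep, ok)
}
```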


Unfortunately, that work is blocked on this plugin registry. The plugin registry stuff is also something that Vlad's helping drive, so those two projects are looking good. The driver registrar work is going to have to wait until we have this plugin registry, so we're going to tackle that next quarter, if we can get the registry stuff done this quarter. And then topology was another big feature that we all wanted to get into this quarter.


The CSI aspect has a PR open against it, and there's ongoing discussion on that. It's probably going to get in, but the wiring between Kubernetes and CSI is probably not going to go in this quarter; it would go in next quarter. So it's a work in progress: we're probably not going to hit a hundred percent of the goals that we set for this quarter, but it's in progress.


I can give a quick update. At the face-to-face meeting, David did a presentation of the plan that he's been working on to migrate the in-tree volume plugins to CSI. There was a lot of discussion and a lot of good feedback that he got from that. He's going to update the design doc, which is currently a Google Doc, and he's going to prototype some of the ideas that he has laid out, and once he's...


...is started. Vlad's been doing a lot of the heavy lifting and trying to get the conversation going. He set up a meeting yesterday with me, Sergei, Luiz, and a few other folks. I think we are comfortable with what the proposal is, and Vlad's going to help shepherd that PR toward getting merged, and then Sergei is helping work on the CSI integration for that. So it's started. It's probably still a little bit tight, but we'll see where it goes by Monday.


Containerized kubelet issues and testing: I have found an owner. I found a group in Red Hat to own this, but I haven't nailed them down to actually owning it. Basically, their manager agreed, but they haven't yet. So this is still in progress. Next: hostPath and reconstruction support.


That's a good point: that's not something that we do, so our users can never go back; there's no going back to the old version. So we would like someone with experience in upgrade and downgrade to exercise it and help improve it. I think that's why we haven't had any approvals from our side. That makes sense. You know, I know Pamela wasn't overly familiar with the workings; I wanted someone who knew the downgrade functionality to actually do the review.


Yeah, so for the past five months, I've been working on a project called Container Native Virtualization, which is like the turducken of VMs and Kubernetes; that's how I like to explain it. It's running a VM inside a container inside Kubernetes, and my team was brought in to oversee the storage aspect of it. Some interesting tech has come out of it that needs to be contributed back to the community.


They're using whatever technology exists on the storage array to create a copy of a PV on the back end. This is done to take a golden image of a VM, save it in a persistent volume, and then copy that volume to instantiate another instance of the virtual machine. So that's the premise. They want to do a couple of things that could be problematic, based on our discussions around snapshotting, and one of them is host-assisted cloning.


So, what's the SIG Storage change? These are SIG Storage features. We should run them through the planning process, and I'm guessing that you'd want this as part of 1.12, so you probably want to create features for it against 1.12 and then add them to the planning spreadsheet to track, along with the design documents.


Right, I mean, I guess I think they want to make sure they're doing things properly, and that's all goodness. With things moving out of tree, I think we have less control over how things are done, but certainly if it's a controller that is doing things like copying volumes across namespaces, I feel like SIG Storage should be part of that discussion and weigh in on it. So this...


So this is Adam. The Cinder provisioner is over in the OpenStack cloud provider repo, and there's a PR out for it there. We also have folks working on the Gluster provisioner who want to implement the same cloning annotation on PVCs, and I also spoke with NetApp, and they're doing exactly the same thing using a different annotation for Trident.


So one of the things that we would want is an agreed-upon standard annotation for this, and maybe some conventions around what happens if a provisioner does or does not support the annotation, as well as looking into how you might do something like this in CSI, maybe with a different volume source type, which is another volume. Those are the things where I think the touch points with the SIG are.


And ultimately, yeah, it's out of tree, Brad, but I think these are methods that other storage providers are going to want to use, and it would be nice to contribute them back to the community. That's really my intent. Even if it's something that exists in an external provisioner, I think cloning is a very powerful feature that SIG Storage should be made aware of.


From our point of view, if you guys want to get a project in as an official SIG project, that means it would live under one of our organizations, really kubernetes-sigs, and we'd have a sig-storage-<your project name> repository. I think it's probably too late to put it in external-storage. I know there's some stuff there, but we're trying to get everything out of there.


If there's a CSI touch point and it's a CSI project, we have a kubernetes-csi organization that can host CSI-specific stuff. To me, it sounds like this stuff is already being hosted in Cinder, outside of the Kube sphere of influence. I mean, we can definitely list it as a project and point to the external repo.


So I think where the SIG can contribute here is that we have a bit of a Wild West with all of the out-of-tree storage plugins and provisioners: everybody's using different annotations and different ways you might hook this up. Basically, what I think you tend to want is storage back-end-based smart cloning, as I've been calling it, with a fallback to host-assisted cloning.


So I think there is an opportunity to have an ecosystem around this, especially with security best practices, and one of the things that I'm hunting around for is this: in an out-of-tree world, how do we have that organization, or take away the anarchy there, if there's a way to do that?


I'm aware of the process, Brad, and I appreciate you bringing that up, but I think it's in our best interest to come up with something that is consistent. I think Adam hit the nail on the head: with everything moving out of tree, it's scary, and we've talked about how we validate or certify it.


I think that out of the snapshots discussion, what people want as the next step is migrating data around and being able to import and export volumes. That is going to be a real challenge, and we may not be able to get to that point, but this discussion overlaps with that, and it's worth having the discussion rather than not. Yes, I thought...


I was just going to say quickly, that's a really great point, because that's another one of our features. We were looking into that; I brought up the import/export ideas at KubeCon as well, and I think the same exact framework and rules apply, because you can implement it entirely as an application, but maybe there's a standard to be established in that way too.


You know, we have some stuff. We have code for CDI, and I have PRs for the cloning, which has actually been implemented in multiple provisioners. But I think one of the things we recognized is that we made a little mistake: we wanted to see if it could work first, but now we really want to engage the community on a proper design. So...


Let's start the process off: let's get a design doc written, and then use that to focus further discussion about cloning, or discussion about import/export. See if there are common things that can go into the core of Kubernetes or if everything is going to be external, and then once we've locked down the design, we can talk about next steps: if there are code artifacts, where they should be moved to or where they should live. Okay.


And there'll probably be three things. Adam mentioned CDI; that's our Containerized Data Importer. Today it's specific to VMs, but it could actually be used as a method to import into PVs, for instance if you wanted to back up a database onto a PV that's started in your namespace; it could be a method for that. So there's definitely some useful tooling, I think, that we want to contribute, and I think there are probably going to be three different pieces to this.


So this is the iSCSI deletion change, to prevent data corruption in cases where LUNs get reused on the back end. When I was down there hacking on the iSCSI code, I didn't see any locks, and I assumed that some sort of locking was happening at a higher level to prevent race conditions with the iSCSI sessions, because iSCSI login sessions are a shared resource across multiple PVs.


So I was talking to some people about it last week, and I understand now that there aren't any locks, so there are probably just race conditions around this. If you happen to detach the last volume that's using a particular iSCSI session at around the same time as you attach a new one, there's the potential for them to race and for things to blow up. So...

I don't think my change makes this any worse, so we could just treat it as a separate issue: merge this fix and then deal with the races separately. But if you wanted me to deal with the races, there's a bunch of different approaches, and I didn't know what we stylistically prefer in Kubernetes for dealing with race conditions. So I wrote up a couple of different options for doing this; the options are written in the agenda document. Right.


There are different layers. There is the core Kubernetes volume layer, where we work very hard to make sure that multiple operations are not started on the same volume, but we don't really do anything about the same plugin having multiple volumes mounted or unmounted at the same time; the plugin itself has to take care of that. Okay.
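The core-layer guarantee described here (at most one in-flight operation per volume, while different volumes proceed independently) can be illustrated with a small Go sketch. It is a simplified, hypothetical model loosely in the spirit of the pending-operations tracking in the Kubernetes volume code, not that code; `OperationGuard`, `TryStart`, and `Finish` are invented names.

```go
package main

import (
	"fmt"
	"sync"
)

// OperationGuard is a hypothetical tracker that allows at most one
// in-flight operation per volume name.
type OperationGuard struct {
	mu      sync.Mutex
	pending map[string]string // volume name -> running operation
}

func NewOperationGuard() *OperationGuard {
	return &OperationGuard{pending: map[string]string{}}
}

// TryStart marks op as running on volume, or returns an error if another
// operation is already in flight for that same volume. It never blocks.
func (g *OperationGuard) TryStart(volume, op string) error {
	g.mu.Lock()
	defer g.mu.Unlock()
	if running, busy := g.pending[volume]; busy {
		return fmt.Errorf("volume %s: %s already in progress, refusing %s", volume, running, op)
	}
	g.pending[volume] = op
	return nil
}

// Finish clears the in-flight marker so the next operation can start.
func (g *OperationGuard) Finish(volume string) {
	g.mu.Lock()
	defer g.mu.Unlock()
	delete(g.pending, volume)
}

func main() {
	g := NewOperationGuard()
	fmt.Println(g.TryStart("pv-1", "attach")) // succeeds
	fmt.Println(g.TryStart("pv-1", "detach")) // fails: pv-1 is busy
	fmt.Println(g.TryStart("pv-2", "attach")) // succeeds: different volume
}
```

Because the key is the volume, two different PVs that happen to share one iSCSI session are not serialized by a guard like this, which is exactly the gap left for the plugin to handle.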


That would be okay. The challenge, as far as the core Kubernetes code is concerned, is that the way it's factored right now, even if a mount or attach operation hangs, the core code can continue to run without issue. So yes, it's okay, but you'd have to do it very carefully to ensure that you don't have any deadlocks or anything like that. Okay.


So I'll outline the ideas that have been suggested so far. Okay. Humble, in the PR, actually suggested that maybe we should just never log out of these sessions and let them leak. That would solve the problem, but it could create a significant resource leak over time, because if we never log out, the iSCSI sessions are going to consume kernel memory; they're going to consume... I think the challenge...


...here might be that having a single lock object that you share across multiple attach/detach or mount/unmount operations is probably going to be the most challenging part. But I believe there is a plugin-interface-level object that you could theoretically put things in, and it's initialized once per plugin, so theoretically you could put a locking mechanism there, and that would allow you to coordinate between different operations.
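Following that suggestion, here is a minimal Go sketch of a per-plugin locking mechanism for shared iSCSI sessions. It is hypothetical: `SessionManager`, `targetKey`, and the `Login`/`Logout` hooks (stand-ins for the real `iscsiadm` calls) are invented for illustration. It reference-counts attached LUNs per portal and target IQN and takes a per-target lock, so detaching the last volume and attaching a new one on the same session are serialized instead of racing.

```go
package main

import (
	"fmt"
	"sync"
)

// targetKey identifies an iSCSI session: one login per portal+IQN is shared
// by every LUN, and therefore every PV, exposed through that target.
type targetKey struct {
	Portal string
	IQN    string
}

// targetState holds the per-target lock and the number of attached LUNs.
type targetState struct {
	mu   sync.Mutex
	refs int
}

// SessionManager is a hypothetical object created once per plugin instance.
type SessionManager struct {
	mu      sync.Mutex // guards the map only; never held while calling out
	targets map[targetKey]*targetState
	Login   func(targetKey) error // stand-ins for iscsiadm login/logout
	Logout  func(targetKey) error
}

// state returns the (lazily created) lock and counter for a target.
func (m *SessionManager) state(k targetKey) *targetState {
	m.mu.Lock()
	defer m.mu.Unlock()
	if m.targets == nil {
		m.targets = map[targetKey]*targetState{}
	}
	s, ok := m.targets[k]
	if !ok {
		s = &targetState{}
		m.targets[k] = s
	}
	return s
}

// Attach logs in only when this is the first LUN on the target.
func (m *SessionManager) Attach(k targetKey) error {
	s := m.state(k)
	s.mu.Lock()
	defer s.mu.Unlock()
	if s.refs == 0 {
		if err := m.Login(k); err != nil {
			return err
		}
	}
	s.refs++
	return nil
}

// Detach logs out only when the last LUN on the target goes away.
func (m *SessionManager) Detach(k targetKey) error {
	s := m.state(k)
	s.mu.Lock()
	defer s.mu.Unlock()
	if s.refs == 0 {
		return fmt.Errorf("detach of %v with no attached LUNs", k)
	}
	if s.refs == 1 {
		if err := m.Logout(k); err != nil {
			return err // keep the reference; the session may still be up
		}
	}
	s.refs--
	return nil
}

func main() {
	var mu sync.Mutex
	logins, logouts := 0, 0
	m := &SessionManager{
		Login:  func(targetKey) error { mu.Lock(); logins++; mu.Unlock(); return nil },
		Logout: func(targetKey) error { mu.Lock(); logouts++; mu.Unlock(); return nil },
	}
	k := targetKey{Portal: "10.0.0.1:3260", IQN: "iqn.2018-05.example:storage"}
	var wg sync.WaitGroup
	for i := 0; i < 100; i++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			_ = m.Attach(k)
			_ = m.Detach(k)
		}()
	}
	wg.Wait()
	fmt.Println(logins == logouts)
}
```

Locking per target rather than with one plugin-wide mutex is a deliberate choice given the concern about hangs: a stuck login on one target does not block operations on other targets. The map mutex is released before the per-target lock is taken, which keeps lock ordering trivial and avoids deadlocks. Per-target entries are never removed, a small leak a real implementation would need to address.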