From YouTube: Kubernetes SIG Storage 20170216

Description

Kubernetes Storage Special-Interest-Group (SIG) Meeting - 16 February 2017

Meeting Notes/Agenda: https://docs.google.com/document/d/1-8KEG8AjAgKznS9NFm3qWqkGyCHmvU6HVl0sk5hwoAE/edit#heading=h.vfa89xk0z3sk

Find out more about the Storage SIG here: https://github.com/kubernetes/community/tree/master/sig-storage

Moderator: Saad Ali (Google)

Chat Log:

09:42:45 From serge : will do

09:56:46 From Chris Dragga : I think to really make this work for replicated file systems, you may need storage plugins specific to the file system.

09:59:04 From stephenwatt : Got to drop. Good discussion!

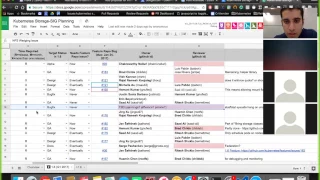

A

A

Items that folks are working on for this quarter. As a reminder, release code freeze for 1.6 is one week away: we have next week, and then the following Monday is code freeze. So if you have features in flight, please make sure that they get in on time. To make sure we check in on what we're planning for this release, let's just go over the spreadsheet. I'll see if I can share my screen.

B

A

Cool, so out-of-tree volume plugins were supposed to be in design this quarter, so the announcement here is that it is currently in the works. A lot of folks, me, Michael, Tim, have started talking to folks from Mesos, Cloud Foundry, and Docker about having some sort of standard interface, a container storage interface, that we can all agree on as a standard model, and then, kind of as a result, we're hoping the implementation falls out of that. So those discussions are ongoing, and we're going to start sharing the notes from that more widely.

A

B

F

A

G

A

H

So I put out a design proposal is paying it's like the coaching yourself. I've barely started, but there's a lot of. We are getting close to a final design; that should be done by today, I hope. And I should be able to present the work sometime, like, you know, maybe Wednesday next week, actually the first cut of the code for that. Okay.

A

And so I think the last open question there was whether we were okay bumping that field from volume-specific to a first-class field globally, yeah, in the pod definition, and I think the answer to that is it should be fine. It is different from, for example, fsType, but it's not completely unheard of. For example, mount path currently is a first-class field that gets interpreted by the volume plugin, so having mount options be a first-class field that gets interpreted by volumes should be OK as well. OK.

I

Michelle's got this amazing proposal. Just go one item back on this: persistent storage for local disk and block devices. There's loads of feedback on it, but I'm noticing the usual characters in the community that are trying to run data management platforms and stuff are not commenting on it, but.

E

I

In any case, yes, maybe they just don't sort of know about us. So I'm trying to take a second: if you're working on something like running Cassandra in Kube using local disks, or Gluster or Ceph, or, you know, any kind of storage platform or something that uses local disks and provides an API for consumption of that storage, this is a freaking massive proposal. It's hugely important, as big as dynamic provisioning, in my opinion. You didn't my vacation too, yeah.

G

A

J

I think we've made some really good progress with the testing: getting rid of flakes, getting quite a few PRs merged. Since the last meeting, I've also had some more people ask to be invited to the meeting through the community, so I think we're getting more involvement and more buy-in. We're continuing to work on the destructive testing and some of the high-priority tests, including the wedging. So we're on track, and I look forward to seeing some new members tomorrow at the meeting.

A

H

So Prasad payment, oh yeah, like we'd CFS of working on this; we have calls Thursday 1pm jealous. We have consulted some items like access to track on, and they're working to, so the things working on the caching proposal. I opened a proposal for bulk volume polling, and I'm going to have a call today to discuss that proposal, so that we eliminate some extra calls that we're making in a SS.

K

L

L

H

A

So some of the items that are purely bug fixes could theoretically be done after code freeze, but it sounds like a lot of it is feature work, so I'd definitely bring that up at the meeting today, if you can. OK, sure. A reminder to everyone. All right, cool. Next up is the NFS wedging issue. This, I think, we may have dropped the ball on a little bit. Does anyone know if anyone took ownership of this?

J

A

A

A

N

F

A

Not expand some of your reviews to John, yeah. That would be great. Yeah, cool, OK, thank you, Brad. All right, so next up is default storage classes for cloud providers. Jan already had the PR out for this. I think the only open items here were coming up with a better mechanism for being able to disable the default storage classes, because they're add-ons and they require access to the master to delete. I think Michelle had a bug that she was tracking. Michelle?

G

G

A

H

A

O

A

P

Q

A

A

That would be awesome, and we've got a week left, so we may need to scramble some resources to get this done, and then we need to decide if it's a blocker or not for going GA for storage classes. The other open issue for going GA for storage classes is whether we are allowed to promote the storage class field to a first-class field in PVCs. I think Brian Grant had some input there, but he's out in Tahoe at the open source leadership convention. He should be back next week and we'll have his input.

A

P

There was another discussion around the attribute stuff, which is that right now, with anybody being able to set the attribute for PVs to be belonging to a storage class, that basically reduces the storage class down to a label. And I don't know if the SIG feels that there should be a stronger relationship, similar to what exists between claims and PVs, where the relationship between the PV and a storage class is more hardened, so that it actually needs to be something that really belongs, so the stuff not just 0e?

P

A

P

A

A

Storage classes to GA, we already went over. Metrics. Now, capabilities I have not started working on, but it's supposed to be in design for this quarter, so I plan on starting on it after code complete or code freeze. Snapshots. Do you know what the status of this is? I believe we're punting on this.

N

N

I

Also, just to address where, you know, for OpenShift we're pretty big on trying to get snapshots in sooner rather than later, so we've also had internal design discussions on the Red Hat side. So maybe it might be an idea just to get together with the folks that are designing it and design together, just to move the ball forward on this. Yeah.

A

L

B

H

L

A

Thank you, do that. Next up is bringing storage classes to GA. We've already discussed it. There are some open items that may put this at risk. We'll carry this discussion offline and then hopefully have a better answer next week. Doing metrics on volume controllers: I thought we already reviewed that. Wasn't that tracked above somewhere?

M

A

Let me mark it as orange as a reminder to myself to go in and review the proposal, but yeah, it's non-critical for this release. Great if we can get it in; if not, no big deal. Next up is the Dell EMC ScaleIO volume plugin. I think this has been blocked on me for code review, but I've assigned it to, I believe, Jing or Michelle to take a look at it.

A

R

A

A

A

A

I

L

I

I

A

A

G

A

G

A

A

I

The late you can exchange slips up the color interesting, like, I looked at James' proposal. Initially I had a misconception that I discovered later, which was that with things like Gluster, when you take a snapshot, it's immediately available as an additional volume, so you can mount that snapshot right away, which isn't true for a lot of, you know, apparently all the cloud providers and a lot of other technology, so the axe phoenix.

I

What I was thinking of before I discovered that was sort of being able to support some sort of annotation on a claim or something that would kick off the semantic profit profit snapshots of work possess the back ends of life I associated with the persistent volume, and create a new persistent volume and claim for that claim. So that the developer could initiate the snapshot themselves, and when they do, you know, kubectl get pvc, then see the snapshot in the list.

I

You know, label claims as snapshots or something like that, but that all got a bit I'm aroma when, you know, it's not as simple as just creating; it's like a two-phase process: create a snapshot, and then, you know, turn that snapshot into an EBS s-, or, you know, something to that effect. So anyways, I guess I'm just interested in hearing what Jing is thinking about her approach around that problem, and, you know, just moving the ball forward over the line. I wonder.

A

I

L

I

T

I

L

M

Yup. I mean, no, I did want to our final touches on that. Okay, massage just photos of who is actually working at the young. The storage side on and go back inside. So when we are forced to PR, we know who should contact you for the review? Sorry, three working, my god is actively owner storage side, not here. So what we are now communicates with you. You know, who have the bandwidth to review and well.

A

Who should be reviewing? So it should be pretty well distributed across the storage SIG. I think pretty much everyone who's been contributing code can be a reviewer, and I think they're automatically reviewers. There are a handful of approvers, but as far as I understand, approvers are more or less rubber stamps, so in the usual case, once the reviewers say it looks good, the approver just comes in, takes a quick look at it, and approves it. So you should be able to spread the bandwidth across pretty much the whole team.

A

A

I think so. We have these OWNERS files, and inside the OWNERS files there are approvers and reviewers defined for each directory, and the system will automatically distribute code reviews across the reviewers. That reviewer list is fairly large, and if you'd like to be added to that reviewers list, please just create a PR, add yourself to it, and assign it to me or Tanner. All these folks, OK.

A

T

S

S

A

L

A

C

A

B

A

D

A

J

I've made sure on our side that it would not be a huge impact. OpenShift product management agreed that they could just say in the current release that it would be deprecated in the next. So the business impact on our side, which I think was where we were worried it was going to be the greatest, is minimal. So I'm happy to just, I guess, what do I need to do then? Do I need to go to Brian Grant and ask if we can have an exception, or what's the.

A

So, just to put this into context for everyone else: the proposal here is to accelerate the deprecation process for the recycler policy, because we've technically been trying to deprecate it for a longer period. Also, I think Brad had an additional deprecation proposal that was up and merged. Brad, thanks.

F

A

So regardless, I think the issue is that we may not have followed the official process yet, so the official countdown clock, which is a year or a major release, is basically screwing us over, and we're going to see if we can get around that. But yeah, Brian Grant would be the right person to talk to about that. Aaron, take the lead on that? That'd be great. Yep.

A

O

L

A

A

U

A

G

U

U

A

G

I

But then you have to carefully curate it. Look, right now there's a sort of adaptive, self-healing capability within Kube that you can leverage with that pattern. So if we can take local file systems, you know, on direct-attached storage, and expose them as persistent volumes, then we can hook up things like StatefulSet. Then, basically, I believe the idea is to update the scheduler with some additional scheduling intelligence that says: look, this pod or replica in the StatefulSet is using a persistent volume that happens to be a local disk.

I

And then for a StatefulSet, so for self-healing capability, the use case is to say I have a six-node Cassandra cluster. So it's my StatefulSet Cassandra, and I've got, you know, six persistent volume claims that are all local disks associated with each replica in the StatefulSet. That works, that's awesome, but if one dies, we obviously don't have the capability to dynamically provision. So we just have, like, several additional persistent volumes that can then be used for that replica in the StatefulSet. Yeah.

G

V

G

O

G

G

G

I

And then varying levels of forgiveness, right? So, you know, you want to avoid creating a data replication storm every time a node goes down. So you could say, look, if a pod's been unavailable for one minute in the StatefulSet, do nothing; if it's been unavailable for one hour, do nothing; but if it's been unavailable for one day, replace it. Yeah.
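The forgiveness idea described here can be sketched as a simple threshold check. This is a hypothetical illustration, not a Kubernetes API: the function name `decide_action`, the `REPLACE_AFTER` constant, and the returned strings are assumptions, and the one-day cutoff is taken only from the example given in the discussion.

```python
from datetime import timedelta

# Assumed threshold, taken from the "one day" example in the discussion.
REPLACE_AFTER = timedelta(days=1)


def decide_action(unavailable_for: timedelta,
                  replace_after: timedelta = REPLACE_AFTER) -> str:
    """Return "wait" for short outages and "replace" once the outage
    reaches the threshold, so a brief blip does not trigger re-replication."""
    if unavailable_for >= replace_after:
        return "replace"
    return "wait"


if __name__ == "__main__":
    # Hypothetical outage durations mirroring the minute/hour/day example.
    for outage in (timedelta(minutes=1), timedelta(hours=1), timedelta(days=1)):
        print(outage, "->", decide_action(outage))
```

A real policy would probably make the threshold configurable per workload, since the cost of a rebuild differs between storage systems.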

G

I

I

But your StatefulSet might only be five, so it'll just pick five and go run them according to whichever, maybe, quality of service you specified for the local disks that you want, and it'll just go run them. And if five of those die, it'll go find five other nodes with similar local disks and automatically make sure it starts up and runs over there. So that's a huge step forward in self-healing capabilities.

K

I have a question. So it looks like, if the node goes down, I mean, chances are, for many of these local storage systems, the data is replicated, and if a node goes down, you don't just want to grab a new PV. You want to be able to grab a PV that points to a replica, so your application, you know, can resume where it left off. Is that something.

K

Yes, yes. So say there's, you know, an instance that's running on a machine, but with many of the storage systems, you know, they have the data replicated on different nodes, and when that machine crashes, we can launch that instance on a different node that has a replica of the data. So, instead of grabbing a new PV that points to scratch space, you want to be able to point to a volume that has a copy. OK.

G

L

O

That's right. I mean, I concur with what the earlier person said. It may not have a pod on it. So, for instance, with Portworx, we may have another replica set placed on a different node. So let's say there are 10 nodes in the cluster. Three of those nodes may actually already contain the data, so it would be preferred to take any one of those over the others; that way the application will just resume again. And that we do accomplish today by way of node labels and node selectors.
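The placement preference in this example (10 nodes, three of which already hold the data) can be sketched as follows. This is only an illustrative sketch under stated assumptions: `pick_node`, the node names, and the fallback to the first candidate are hypothetical, and it does not represent how the Kubernetes scheduler or any vendor product is implemented.

```python
from typing import Iterable, Optional, Set


def pick_node(candidates: Iterable[str],
              nodes_with_replica: Set[str]) -> Optional[str]:
    """Prefer a candidate node that already holds a replica of the data;
    otherwise fall back to the first candidate (None if there are none)."""
    ordered = list(candidates)
    for node in ordered:
        if node in nodes_with_replica:
            return node
    return ordered[0] if ordered else None


if __name__ == "__main__":
    # Hypothetical cluster of 10 nodes; three already contain the data.
    nodes = [f"node-{i}" for i in range(10)]
    replicas = {"node-3", "node-6", "node-8"}
    print(pick_node(nodes, replicas))
```

Expressing the same preference with node labels and selectors, as mentioned in the discussion, moves this choice out of application code and into scheduling constraints.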

O

I

That makes sense, yeah. So I get it, but with data replication you usually want to maintain some sort of quorum, right? You know, you want two replicas or three replicas. So if you've lost the node, you're down a replica, so you want to basically be able to, you know, generally speaking across most data management platforms, whatever it is, spin up a new pod on a new host with a blank disk, have that be available, and then rebuild.

L

So that's actually one of the questions that I have on the replication document, because obviously it is sort of similar, but not exactly so. If it's a MongoDB kind of application, then you're right, it's exactly like that: a replica is down, which means the MongoDB, or whatever the database is, is one shard fewer. You need to create a new shard and repopulate it. But if Akitas volumes, you might want to start with that replica as a seed for the new shard. Yeah.

I

L

I

More like block replication than application replication, right? So if I'm understanding you correctly, we're talking about, like, hey, I have, you know, /dev/sdb on server one, and maybe I have a file system on it, and then I want to use some mechanism to replicate the contents of this /dev/sdb to /dev/sdb on server two. Is that right? Yeah, for its Bella.

L

K

I

L

T

A

G

G

Okay, yeah. Thanks for the explanation. We were mostly targeting use cases where applications do application-level replication, and not so much volume-level replication. We can definitely think about how this can support those use cases also. Maybe you can send me some more details about that use case, specifically if we have anything existing that's doing that. That would be good to see.

R

E

R

Q

R

Time so you have the biscuits: actually, there is only one PV, logically; it just changes its location from the previous active node to the new active node. So you don't have to express it as two PVs. You can express this as one PV that currently has a location on node A and now switches location to node B. That makes things simpler, if.

L

I

L

A

D

Thanks. Yeah, I think that this is a hybrid between local storage and volume mount, because you've got two things going on in here: it's not an open-ended external attach, because there are constraints on what compute node it might be attachable to, and potentially, with some forms of replication, it is lossy, so that you'd view it as essentially an image copy that doesn't have hundred percent fidelity, but maybe the app could take advantage of that and save effort on the rebuild. Yeah.