
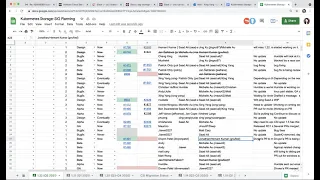

From YouTube: Kubernetes SIG Storage - Bi-Weekly Meeting 20210701

Description

Meeting of Kubernetes Storage Special-Interest-Group (SIG) Bi-Weekly - 01 July 2021

Meeting Notes/Agenda: -

Find out more about the Storage SIG here: https://github.com/kubernetes/community/tree/master/sig-storage

Moderator: Xing Yang (VMware)

Hello, everyone. Today is July 1st, 2021. This is the Kubernetes SIG Storage meeting. Today we will go over our 1.22 planning spreadsheet, and after that I think we have a couple of things on the agenda, so we will look at those after our regular planning. Also, as a reminder, next Thursday, July 8th, is the code freeze. So if you have something that you want to get into 1.22 and the code is in-tree, then you need to get that merged. Okay, so let's look at the spreadsheet.

Okay, it's probably the same. I think I'll just say no update, unless someone knows, because I think Young is not here, and Patrick's not here today. Okay, so this one, yeah: spreading over failure domains. This one depends on the next one, volume group. I don't have an update on this one, so I'll try to update the KEP.

I have not yet gotten it to pass Prow, because I've discovered that when you add a new API field, there are all kinds of other things you need to do to keep tests from failing, and I'm working my way through that list. The PR is there, and I've added Tim Hockin for review. I haven't gotten reviews yet, but I'm still focused on fixing everything that fails in Prow as a result of adding a new API field.

Ah, yes. Sid is rewriting the KEP. For those that don't know, the original author of the KEP had transitioned off the project and Sid inherited ownership of it. He was just sort of tweaking the existing KEP, but after receiving review on it during the 1.22 cycle, he decided it would be better to start over and redesign the KEP from the ground up, to focus on the right things and incorporate feedback from API reviewers. A lot of the old KEP was focused on stuff.

Hey, this is Deep. We just released RC1 last week and are marching towards v1, so things are looking pretty good. If anyone has feedback from using it, maybe with vSphere CSI on Windows, or with AWS, that would be great. We already have quite a bit of feedback from GCE PD and Azure.

Okay, no update. Then we have a few CSI migration related items. Maybe we don't have an update; Joey's not here, so maybe they're just the same as before. If anyone knows, just speak up. Otherwise I'll just put no update here.

The next one is vSphere. What I know is that Divyen has a PR to update the topology label; that's merged now. Block support: we'll add that as alpha really soon. Windows support is still outstanding.

So I will ping him later and see if he found out anything. I'm also looking at the graceful node shutdown KEP and implementation to see if there's anything we can leverage there, because I think they actually added a node shutdown manager in the kubelet, so it actually knows whether it's really a shutdown. If we are going to narrow down the scope, as we discussed in our previous meeting, then maybe we could look into that and see if we can add anything.

No? Okay. I think even in the previous release this one was getting very close as well. Morning. Extension for Stefan said so. I think it's Shalini who is looking at this? I think she has some questions; she's still working on updating the KEP. And the next one is ContainerNotifier.

I'll just say no update. The next one is prioritization on volume capacity. I'm not sure. Okay, there are some PRs that have been updated, but I don't have an update on this one. Let me show... it's not here, so I'll just put no update here. And the last one is CSI service account token. I think we are trying to bring this to GA. All right, I'm not sure what the status is, since Michelle's not here.

Do you want to talk about this?

Yeah, hi, everybody. I'm Alice and I'm from Red Hat. I'm currently working in the KubeVirt community, and I'm trying to solve a problem that I would call PVC locking; you can see the current proposal there. To briefly summarize the problem: we are creating a Tekton pipeline, or creating pods that have VMs inside, where the disk is stored on a PVC.

We are trying to find a mechanism to lock the PVC to avoid data corruption. I know that there is the new ReadWriteOncePod access mode. However, KubeVirt uses shared volumes for virtual machine migration, so this access mode is a little bit too restrictive and doesn't apply to this use case.

Internally, do we... I guess my question is, when I was reading this: let's say we have a controller, hosted by us, but then I was wondering who is going to use it. We can of course have a controller to apply the locks, but in your case, I know you said KubeVirt will be using this, but in our case, that's the part I'm not quite sure about. I see you have this example of a Deployment here.

You could add a custom task that creates this resource. Then, in order to perform some disk operation on the PVC, you check whether the lock has been acquired. If the state of the PVC lock is successful, you continue with the pipeline, and your PVC stays locked for the entire pipeline.
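The locking flow described above (acquire, check, run the disk steps, release) can be sketched as follows. This is a minimal illustration under stated assumptions: `PVCLockRegistry`, `try_acquire`, and `run_pipeline` are hypothetical names, not part of the proposal or any Kubernetes API, and an in-memory dict stands in for what would presumably be a resource stored in the API server.

```python
import threading
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional


@dataclass
class PVCLockRegistry:
    """Hypothetical stand-in for a PVC lock resource.

    Maps "namespace/pvc-name" to the owner holding the lock. A real design
    would store this in the API server and use optimistic concurrency
    (resourceVersion) instead of a process-local mutex.
    """

    holders: Dict[str, str] = field(default_factory=dict)
    mu: threading.Lock = field(default_factory=threading.Lock)

    def try_acquire(self, pvc: str, owner: str) -> bool:
        # Succeed if the PVC is free or already held by the same owner.
        with self.mu:
            current = self.holders.get(pvc)
            if current is None or current == owner:
                self.holders[pvc] = owner
                return True
            return False

    def release(self, pvc: str, owner: str) -> bool:
        # Only the current holder may release the lock.
        with self.mu:
            if self.holders.get(pvc) != owner:
                return False
            del self.holders[pvc]
            return True

    def holder(self, pvc: str) -> Optional[str]:
        with self.mu:
            return self.holders.get(pvc)


def run_pipeline(
    registry: PVCLockRegistry,
    pvc: str,
    owner: str,
    disk_steps: List[Callable[[str], str]],
) -> List[str]:
    """Run disk operations only while holding the PVC lock (hypothetical flow)."""
    if not registry.try_acquire(pvc, owner):
        raise RuntimeError(f"{pvc} is locked by {registry.holder(pvc)}")
    try:
        # The PVC stays locked for every step of the pipeline.
        return [step(pvc) for step in disk_steps]
    finally:
        registry.release(pvc, owner)
```

For example, while `pipeline-a` holds `default/vm-disk`, a second pipeline's `try_acquire` returns `False` until the first one releases it.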

Yeah. So I think normally we need to have a use case inside Kubernetes to bring this one in. That's the one thing I still can't figure out, because I can clearly see a use case for KubeVirt, right, but we don't know exactly how that is used. If we can say, hey, there is a use case for Deployments, then I think that makes sense.

Yeah, that's exactly it. If you can see, or are aware of, any possible use case... Of course I'm aware of the KubeVirt one, so I could see maybe some analogy with workloads that use shared storage: use cases where ReadWriteOncePod is too restrictive because the storage may have to be attached to multiple nodes, or where you want to guarantee that the PVC is owned by a controller and the pod level is too restrictive.

Oh yeah, thank you, Xing. This is something I wanted to socialize among this group. It's not a specific initiative that anyone's working on yet, to my knowledge, but over the last couple of years we've had various problems with user ID ownership of files in PVCs, and with pods being able to read and write files in a PVC, mostly, I think, on container runtimes that do UID shifting.

There have been some workarounds implemented in Kubernetes, like the recursive chown of files, fsGroup, and the security context, where you can run pods as specific users and specific groups. All these workarounds mostly just deal with the fact that if you have multiple pods accessing a PVC over the lifetime of the PVC, you don't want a situation where the second, third, or fourth pod can't access the files that the first pod wrote.
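As a rough illustration of the recursive chown workaround mentioned above, the sketch below walks a volume directory, hands every entry to one group, grants that group read/write, and sets the setgid bit on directories so new files inherit the group. `apply_fs_group` is a hypothetical helper that approximates the fsGroup behavior rather than reproducing kubelet's code; on a large volume, this per-file walk is exactly what makes the workaround slow.

```python
import os
import stat


def _grant_group(path: str, gid: int) -> None:
    st = os.lstat(path)
    os.lchown(path, -1, gid)  # -1 leaves the owning UID unchanged
    if stat.S_ISLNK(st.st_mode):
        return  # chmod would follow the symlink, so leave links alone
    mode = stat.S_IMODE(st.st_mode) | stat.S_IRGRP | stat.S_IWGRP
    if stat.S_ISDIR(st.st_mode):
        # Group may traverse the directory; setgid makes new entries inherit gid.
        mode |= stat.S_IXGRP | stat.S_ISGID
    os.chmod(path, mode)


def apply_fs_group(root: str, gid: int) -> int:
    """Hypothetical fsGroup-style pass; returns the number of entries touched."""
    _grant_group(root, gid)
    touched = 1
    for dirpath, dirnames, filenames in os.walk(root):
        for name in dirnames + filenames:
            _grant_group(os.path.join(dirpath, name), gid)
            touched += 1
    return touched
```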

The underlying problem is that the way containers work is just sort of badly designed with regard to this specific aspect. I've been looking for a long time for a better solution in the Linux kernel that solves the problem at its root, which is that when you shift a user ID in a container, you can't see the user ID that you're actually writing on the files.

You think that you're root inside the container, but you're actually something like UID one million outside the container, so when you write files to the file system, they're written as user ID 1,000,000. But you don't know that. That's really just a bad design in how the container runtime deals with file systems.
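The UID shift described above is just an offset lookup in the user namespace's ID map, which Linux exposes as lines of `inside outside count` in `/proc/<pid>/uid_map`. The sketch below assumes a typical map of `0 1000000 65536` (the numbers are illustrative) and shows why container root lands on disk as UID 1,000,000.

```python
from typing import List, Optional, Tuple

# One entry per line of /proc/<pid>/uid_map: (first ID inside the namespace,
# first ID outside it, number of IDs in the range).
IdMap = List[Tuple[int, int, int]]


def parse_uid_map(text: str) -> IdMap:
    entries: IdMap = []
    for line in text.strip().splitlines():
        inside, outside, count = (int(f) for f in line.split())
        entries.append((inside, outside, count))
    return entries


def to_host(idmap: IdMap, uid: int) -> Optional[int]:
    """UID the host (and an ordinary on-disk file system) sees for a container UID."""
    for inside, outside, count in idmap:
        if inside <= uid < inside + count:
            return outside + (uid - inside)
    return None  # not mapped: the process cannot act as this UID at all


def to_container(idmap: IdMap, host_uid: int) -> Optional[int]:
    """UID shown inside the container for a host UID, if it is mapped."""
    for inside, outside, count in idmap:
        if outside <= host_uid < outside + count:
            return inside + (host_uid - outside)
    return None  # unmapped host IDs appear as the overflow UID (usually 65534)


uid_map = parse_uid_map("0 1000000 65536")
print(to_host(uid_map, 0))       # container root -> 1000000 on the host
print(to_container(uid_map, 0))  # host root is not mapped inside -> None
```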

So what I wanted to bring to people's attention is that, finally, in Linux 5.12, which shipped this April, about two and a half months ago, a facility was added to Linux that makes the file system behave the way you want when you access it: if you think that you're root, the file system should treat you like you're root, at least for that particular bind mount.
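The facility referred to here is idmapped mounts, merged in Linux 5.12. The toy model below, a simplification that ignores nested user namespaces, shows the effect: when a bind mount carries the container's ID map, the kernel translates the writer's host UID back through that map, so container root creates files owned by UID 0 on disk. The function names are illustrative, not kernel or container runtime APIs.

```python
from typing import List, Optional, Tuple

IdMap = List[Tuple[int, int, int]]  # (inside, outside, count), as in uid_map


def _translate(idmap: IdMap, uid: int, inward: bool) -> Optional[int]:
    # inward=True maps outside (host) IDs to inside IDs; False goes the other way.
    for inside, outside, count in idmap:
        src, dst = (outside, inside) if inward else (inside, outside)
        if src <= uid < src + count:
            return dst + (uid - src)
    return None


def disk_uid_for_write(mount_map: IdMap, writer_host_uid: int) -> Optional[int]:
    """On-disk owner of a file created through an idmapped mount (toy model).

    The writer's host UID is translated back through the mount's ID map;
    None means the write would be refused because the ID has no mapping.
    """
    return _translate(mount_map, writer_host_uid, inward=True)


def owner_seen_through_mount(mount_map: IdMap, disk_uid: int) -> Optional[int]:
    """Host UID that an on-disk owner appears as when viewed through the mount."""
    return _translate(mount_map, disk_uid, inward=False)


container_map = [(0, 1000000, 65536)]
print(disk_uid_for_write(container_map, 1000000))  # container root writes UID 0
print(owner_seen_through_mount(container_map, 0))  # seen as 1000000 (root inside)
```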

If we could take advantage of this facility in Kubernetes, I believe it would solve all of the problems around user IDs and pods not being able to access files in volumes. But it's going to require changes, potentially at the CRI level, to fix these file system ownership problems. I'd like to recruit some people who are interested in solving this to look at some kind of design to take advantage of it in Kubernetes, because it's in Linux now, we can start playing with it, and it'll soon be widely available.

It's just a matter of figuring out how we can use it in Kubernetes to really solve the problem of pod file system access with PVCs. So yeah, I just wanted to socialize this and make people aware that the real solution to this problem is finally available to us, and it's just a matter of implementing it in Kubernetes.

As for Linux kernels, it depends on the distro. Some distros ship relatively modern kernels; other distros only ship LTS kernels. But I believe, and someone from Red Hat could correct me here, that Red Hat will backport features from new kernels into old kernels if they're useful enough.

What they won't do is backport a feature that hasn't merged into the mainline kernel yet. But now that it's in 5.12 and committed, presumably someone could backport it to an LTS kernel, or some more stable kernel that ships on, say, a Red Hat OS, or maybe it'll start showing up in CoreOS or one of the container-optimized distros. There are all these different Linux distros with different approaches to kernels, but it's just a matter of time.

So I'd like to help, but I'm time-constrained like everyone else, so I don't know how much I can commit right now. It's something I've started to look at a little bit, at least. One thing I noticed when reading through some of the docs on this feature is that it needs file system support, so it's implemented for ext4 and FAT right now.

Yeah, yeah, it is file system specific, but I thought they had covered ext4, ext3, and XFS. I could be wrong about exactly which file systems have been covered, but I think the big common ones have been. And you're right, we may need a fallback for esoteric file systems where this can't work. But we already have file system type checks in kubelet when we decide whether or not to do the recursive chown, so we could just modify those checks to opt out.
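One way to picture the fallback decision discussed here is a small selector that prefers an idmapped mount when the kernel and file system support it, and otherwise keeps the recursive chown. The sketch is hypothetical: the strategy names are made up, and the set of capable file systems is a parameter because, as the discussion shows, exactly which file systems are covered depends on the kernel version.

```python
from enum import Enum
from typing import AbstractSet


class OwnershipStrategy(Enum):
    NONE = "none"                        # no fsGroup requested: leave files alone
    IDMAPPED_MOUNT = "idmapped-mount"    # let the kernel translate IDs per mount
    RECURSIVE_CHOWN = "recursive-chown"  # existing fallback: walk and chown


# Illustrative only; real support depends on the kernel and should be probed
# rather than hard-coded.
DEFAULT_IDMAP_FS: AbstractSet[str] = frozenset({"ext4", "vfat", "xfs"})


def choose_strategy(
    fs_type: str,
    kernel_supports_idmap: bool,
    fs_group_requested: bool,
    idmap_capable_fs: AbstractSet[str] = DEFAULT_IDMAP_FS,
) -> OwnershipStrategy:
    """Hypothetical selector for how kubelet might fix volume ownership."""
    if not fs_group_requested:
        return OwnershipStrategy.NONE
    if kernel_supports_idmap and fs_type in idmap_capable_fs:
        return OwnershipStrategy.IDMAPPED_MOUNT
    # Unsupported kernel or esoteric file system: keep the existing behavior.
    return OwnershipStrategy.RECURSIVE_CHOWN
```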

You'd have to have some way of detecting whether the feature is even available on the platform where kubelet is running. Then, if it is, you could use it for the file systems where it works, and if it isn't, you could just use the fallback. It would have to be a runtime check, because it's not widely available in kernels yet.
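A crude version of that runtime check could compare the running kernel's version against 5.12. This is only a heuristic sketch: as noted earlier in the discussion, distributions may backport features into older kernels, so a version comparison can give false negatives, and a more reliable probe would presumably attempt the actual operation (for example `mount_setattr(2)` with `MOUNT_ATTR_IDMAP`) and check for failure. The function names are hypothetical.

```python
import platform
import re
from typing import Optional, Tuple

MIN_IDMAP_KERNEL = (5, 12)  # idmapped mounts were merged in Linux 5.12


def parse_kernel_version(release: str) -> Optional[Tuple[int, int]]:
    """Extract (major, minor) from a release string like '5.12.0-1-amd64'."""
    match = re.match(r"(\d+)\.(\d+)", release)
    if match is None:
        return None
    return int(match.group(1)), int(match.group(2))


def kernel_may_support_idmapped_mounts(release: Optional[str] = None) -> bool:
    """Version heuristic only: misses backports, so treat False as 'unknown'."""
    if release is None:
        release = platform.release()  # same string as `uname -r` on Linux
    version = parse_kernel_version(release)
    return version is not None and version >= MIN_IDMAP_KERNEL
```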

Good point. So I gave a little bit of thought to how exactly you would implement this. I don't want to rule out the involvement of the CRI or CSI interfaces, as I mentioned, but I suspect we probably don't need them; I suspect it could all be done in kubelet. I want to keep an open mind about that until we have a PoC that shows how it can be done, in particular because it relies on Linux namespaces.