
From YouTube: Kubernetes SIG Storage Meeting 2022-03-10

Description

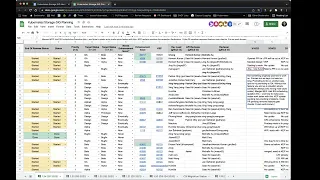

Kubernetes Storage Special-Interest-Group (SIG) Meeting - 10 March 2022

Meeting Notes/Agenda: https://docs.google.com/document/d/1-8KEG8AjAgKznS9NFm3qWqkGyCHmvU6HVl0sk5hwoAE/edit#heading=h.88ry3bnhqkwc

Find out more about the Storage SIG here: https://github.com/kubernetes/community/tree/master/sig-storage

Moderator: Saad Ali (Google)

A

Okay, today is March 10, 2022. This is the meeting of the Kubernetes Storage Special Interest Group. As a reminder, this meeting is public, recorded, and posted on YouTube. So today we're going to go through the agenda. As usual, we're going to do the 1.24 planning spreadsheet to figure out what items the SIG is working on, what the status of each one of those items is, and upcoming deadlines to be aware of.

B

Yeah, so I opened a PR yesterday for cleaning up some of the resizing GA work, removing some deprecated features. We used to call node expand between node stage and node publish. We just deprecated that, because we are going to call node expand always after node publish. So this was confusing, so I opened a PR for that. I'm also working on ReadWriteMany: for ReadWriteMany volumes, we did not use to call node expand on every node.

B

I had a PR for that a long time back, like a year back. Actually, I'm just reworking that PR. I'm almost done, but I have to fix it up for the recently changed recover-from-resize feature that merged in the last cycle. So I'm just updating that. I should be opening that second PR by maybe today or tomorrow. And then after these two, I think I will open another PR to flip the feature gate to GA.

A

B

I have not. I have to work on a slight design item for that one, because previously we only allowed recovering to a value higher than the previous value, so it has to be at least one byte, or a little bit, higher than what was the previous actual volume size. Now, Michelle and I discussed that it should be possible to recover all the way back to the original size. So for that I have to tweak the design a little bit.
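As a rough editorial illustration of the rule described in this update (not the actual Kubernetes validation code), the difference between the earlier behavior and the discussed change can be sketched as a single comparison. The function name, the `allow_original` flag, and the assumption that "original size" means the volume's actual size before the failed expansion are all hypothetical.

```python
GIB = 1024 ** 3


def can_recover_to(requested: int, actual: int, allow_original: bool = False) -> bool:
    """Return True if a failed expansion may be retried at `requested` bytes.

    Hypothetical sketch: `actual` is the volume's real size before the
    failed expansion. The earlier rule requires a strictly larger value;
    the discussed change would also accept the original size itself.
    """
    if allow_original:
        return requested >= actual
    return requested > actual
```

Under these assumptions, `can_recover_to(10 * GIB, 10 * GIB)` is rejected by the earlier rule and accepted when `allow_original=True`.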

B

B

A

C

So the original author is not... I don't see any comment on the next-step work yet. I am thinking to ping him to see whether he plans to work on that. Otherwise, we can find some help, or I can pick it up. So basically, we need to, I think, import the new version of the mountinfo utility, and then we can utilize the MountedFast function in Kubernetes.

D

The open item was still around integration with the autoscaler. There was a PR opened for that, but it hadn't been reviewed for a while. Given the choice between promoting it to GA and doing another beta, we opted to try the GA promotion, because it is working: when you don't use the autoscaler, it does what it's supposed to do. You get the ability to schedule pods that use all the storage capacity in the cluster.
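As an editorial illustration of the idea behind capacity-aware scheduling (a simplified sketch, not the scheduler's actual code), a node is only a candidate if the storage reachable from it can hold the requested volume. The function name and the per-node capacity map are hypothetical.

```python
from typing import Dict, List


def nodes_with_capacity(capacity_by_node: Dict[str, int], requested: int) -> List[str]:
    """Return the nodes whose reported free capacity can fit the request.

    Hypothetical sketch: `capacity_by_node` maps a node name to the free
    storage (in bytes) a driver reports for it. Without such a filter, a
    pod could be placed on a node whose storage is already exhausted.
    """
    return sorted(
        node for node, free in capacity_by_node.items() if free >= requested
    )
```

For example, with `{"node-a": 5, "node-b": 20}` and a request of `10`, only `node-b` remains a candidate.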

D

Without this feature, in this scenario, you end up with a situation where the pod can't be scheduled because the autoscaler, or rather the scheduler, is always picking the wrong node. So I updated the KEP. Well, we merged the KEP. We then had folks from SIG Scheduling questioning that decision a little bit, and they started to look at what would be needed to make autoscaler integration work better, and that's where we are at the moment.

D

I think I've convinced those people who were a bit skeptical about going GA that we are okay with doing it. There's a KEP update pending with further instructions, documenting the limitations. There is a code PR pending that does the API change and removes the feature gate check. So from that perspective, it's fully implemented.

D

It all just needs to be merged to be completed for 1.24, and then we know where future work might be needed if it really affects users, which at this point is probably the big stumbling block for me. I don't know who is going to deploy local storage in a cluster with the autoscaler, how they are doing it, or how they want it to work, and if I don't have user feedback, it's fairly low priority compared with the other things that I could be working on. So that's currently where I am.

D

No, not anymore. It looked like they would for a while, but I've been working with Aldo in particular intensively the last one or two weeks, running tests, scale tests again, to show him that it works and how it works, and discussing the open autoscaler PR again and how it could be improved. So I think they are now accepting that we move ahead with this. They weren't too happy, because they would have liked a much different solution.

D

But I'm not sure whether that other solution that they have in mind is practical, or what other drawbacks it will have. That's definitely an area that would need some more investigation, and my feeling is that we shouldn't block the current state of the feature for that, because it's useful as it is.

E

Yeah, that's me. So I'm working on a bug fix right now. Someone raised this in Slack: Taoshen mentioned that fsGroup does not get applied on CSI inline volumes. So I'm working on addressing that. I think I'll have a fix out for review shortly. I'm just testing that today. There's still an open bug on volume reconstruction that I need to look into, and I still have some work I need to do to make sure that the tests are all covered.

A

A

G

A

A

A

A

J

Okay, good. The metric support in the library is almost done, and then after that we'll be able to do the beta release. There is an in-tree component of this, which is updating the feature gate to beta. I'm going to go ahead and push that PR this week, even before we have the other release out, just so people can review it and make sure there are no problems.

J

K

J

A

F

A

F

A

L

A

D

H

H

A

A

F

B

F

I don't know, so we'll have to see, because it's confusing. I see there are different documents in different places. We have different versions, so I'm just trying to sort that out. If it's still the same as what we declared before, which is 67.3, then we don't have to do anything and can continue.

A

G

G

F

A

F

A

A

A

H

A

I

A

A

A

Okay, sounds like we're still looking for an owner, so if anybody is sitting on the sideline looking for an item, this might be a good one to jump into. We may not be able to get it done for 1.24, but you can start investigating and figuring out what needs to be done, and then we can pick it up for 1.25.

A

A

G

Yeah, so this is staying in alpha for 1.24. I think our goal will be to get it in beta in 1.25, but until we start that cycle, I don't know if there's going to be more updates here. Okay, the gap here is that I think we didn't actually end up having a great plan for how to validate this in alpha, which I will work on, although if any of the old-timers have any suggestions around that, I'd love to talk with them.

A

A

F

A

F

All right, so we talked about this in the Data Protection Working Group yesterday as well. So we have several phases for the volume snapshot API. 1.20 was the first phase, when we added v1 support, and phase two was in Kubernetes 1.21: we changed the storage version from v1beta1 to v1, and then we also deprecated the v1beta1 API.

F

G

I guess the plan for a ratcheting webhook seems to have worked quite well. I think that was a good strategy for this. We only had one cluster where someone had a v1beta1 snapshot resource that would be invalid in v1, so I think that's good news. And yeah, we should probably proceed with the deprecation before anyone...
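For readers unfamiliar with the term, a ratcheting check can be sketched as follows: new objects must pass the stricter rule, while objects that were already invalid may still be updated so existing users are not locked out. This is an editorial illustration, not the snapshot webhook's code. The `is_valid` rule (exactly one source field set) and both function names are stand-ins chosen for the example.

```python
from typing import Optional


def is_valid(snapshot: dict) -> bool:
    """Stand-in strict rule: exactly one source field may be set."""
    source = snapshot.get("source", {})
    set_fields = [name for name, value in source.items() if value]
    return len(set_fields) == 1


def admit(new: dict, old: Optional[dict] = None) -> bool:
    """Ratcheting admission: never let a valid object become invalid.

    - Create (old is None): the new object must be valid.
    - Update: a valid new object is always fine; an invalid one is
      tolerated only if the stored object was already invalid.
    """
    if is_valid(new):
        return True
    return old is not None and not is_valid(old)
```

With this shape, an object that sets both `persistentVolumeClaimName` and `volumeSnapshotContentName` is rejected on create, but an update to an object that was already stored in that state is still admitted.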

F

G

F

F

F

A

F

So yeah, I sent this out. I got feedback from Humble. He actually wrote pretty detailed notes here, so it looks like they are still using that. So that's fine. I think we can keep those two, the last projects. That means, for the remaining, I think there are five remaining projects that we will submit for archive. So if there are any concerns, please speak out. Or should we wait a little bit longer? We can wait a little bit.

F

So, okay, it's actually four. So it's mainly just two, because the other one, the CSI API one, we know, because that's just no longer used. So basically, it's just the CSI driver image populator and csi-lib-fc. For those two, it looks like we have not got any response saying people need them.