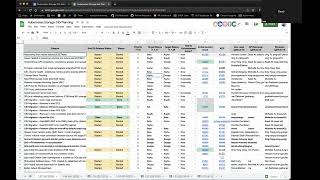

From YouTube: Kubernetes SIG Storage Meeting 2023-01-12

Description

Kubernetes Storage Special-Interest-Group (SIG) Meeting - 12 January 2023

Meeting Notes/Agenda: https://docs.google.com/document/d/1-8KEG8AjAgKznS9NFm3qWqkGyCHmvU6HVl0sk5hwoAE/edit#heading=h.yilfuaafqpay

Find out more about the Storage SIG here: https://github.com/kubernetes/community/tree/master/sig-storage

Moderator: Saad Ali (Google)

A

All right, let's go ahead and kick it off. So today is January 12, 2023. Happy New Year, everyone, and welcome back. Today we're going to go over the 1.27 planning spreadsheet. We are at the beginning of the 1.27 planning milestone. If you have enhancements that you want to go into 1.27, please ensure that your enhancement ends up with a lead-opted-in label.

A

You can reach out to Michelle, myself, Xing, or Jan to help get that done, and the important dates to be aware of are listed here. The upcoming date to be aware of is February 2nd, which is the Production Readiness freeze, which means all the features that we want to go into 1.27 must have an enhancement issue and have their KEPs approved by this date. So if you have features that you want to get in, please keep those dates in mind.

A

Okay, I'm gonna drop it for now, and then the remainder here are, actually, let's pull these two up. So we'll put the cross-SIG ones at the bottom. So, better default StorageClass: wait for one more release before moving to GA. Do we want to do anything else here this release, or is this just for tracking some work next release?

I

Actually, there's a note in the agenda. I think we'd like to do a KEP for 1.27. We should have a draft of that available there. As you all know, we've been having a discussion and a meeting on that. We, you know, haven't reached consensus, but I think we've explored the ideas enough.

A

For volumes. Okay, cool, thanks, Matt. So the rest of these are co-owned between SIG Storage and other SIGs. First up we have SIG Node: non-graceful node shutdown. I think this was adding end-to-end tests. We'll wait one more release for GA, so I think this is just for tracking this cycle.

C

So yeah, we've got a customer who wants to have a custom policy for when to garbage collect released PVs. They want to have it fairly configurable, and that's probably out of scope of this call, but they need to know when a PV was released, like a timestamp or something like that. There are many ways to do it.
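
As an illustration of the kind of policy described here, the following is a minimal Python sketch, not anything proposed in the meeting. It assumes a hypothetical `released_at` timestamp on each PV record; the `PV` class, the `released_at` field, and the `expired_released_pvs` helper are all made up for this example and are not part of the Kubernetes API.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import List, Optional


@dataclass
class PV:
    """Simplified stand-in for a PersistentVolume (hypothetical model)."""
    name: str
    phase: str  # e.g. "Bound" or "Released"
    released_at: Optional[datetime] = None  # hypothetical release timestamp


def expired_released_pvs(pvs: List[PV], retention: timedelta, now: datetime) -> List[str]:
    """Return names of PVs that have been in the Released phase longer than `retention`."""
    return [
        pv.name
        for pv in pvs
        if pv.phase == "Released"
        and pv.released_at is not None
        and now - pv.released_at > retention
    ]


now = datetime(2023, 1, 12, tzinfo=timezone.utc)
pvs = [
    PV("pv-old", "Released", now - timedelta(days=30)),
    PV("pv-recent", "Released", now - timedelta(days=1)),
    PV("pv-bound", "Bound"),
]
# With a 7-day retention policy, only the PV released 30 days ago is selected.
print(expired_released_pvs(pvs, timedelta(days=7), now))
```

Without a recorded release time, a policy like this would have to track the transition itself, which is why the speaker asks for a timestamp.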

B

One of the reasons that we gave previously was that since Kubernetes is not using it, we cannot add that as a first-class field. I mean, I'm fine adding this one. I'm just wondering, can you also add a volume health status in there? Of course, it will be a different picture.

K

Yeah, so actually it's an interesting point that you bring up. We are actually in the process of implementing volume health monitoring for AWS, and we have been looking at the current proposal. Right now, I think one of the challenges that we have is that we just get a single bit to declare whether the volume is healthy or unhealthy, and calling a volume unhealthy has a lot of consequences in terms of how that is perceived by the application. So we'd like to get a little bit more granularity when we report status as to, you know, what might be happening with the volume. This is just based on some preliminary thoughts that we have.

B

One challenge is how to use this field well. That's, you know, exactly what we're discussing here, right? That's the one thing that we have not reached consensus on. But for us, internally, we actually do have a volume health field that we use, which is just whether the volume is accessible or not accessible, and based on that, the higher-level application will make decisions about what to do with it. Yeah.

K

So I think one thing that I can tell you right now is that, from the AWS perspective, you may want to declare that there may be something going on with a volume, that it may not be in good health. But the moment you declare a volume to be unhealthy, there can be consequences in terms of how that volume is actually handled, in terms of faults, alarms, etc. So you may not want to trigger something that indicates that the volume is completely unusable, right? So what would be helpful is if you could have some kind of a progression, indicating that, okay, the volume is not healthy at this point, but we don't want any further action taken at this point, and then leave it up to the application to determine what the behavior might be in that case, or something along those lines. We can certainly provide something which explains our position better in a future proposal, if necessary.

K

My first preliminary thought on that, and I may be wrong about this, is that, you know, if we can come up with some kind of a framework where we don't have to specify the entire set right away, but just provide a set of values that we know for a fact would be helpful and that can be extended in the future, I think that would be the way to go, because you could.
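
To make the idea of a small, extensible set of health values concrete, here is a minimal Python sketch. The level names (`HEALTHY`, `DEGRADED`, `INACCESSIBLE`), their numeric values, and the `needs_action` helper are assumptions invented for this illustration; they are not taken from any KEP or from the CSI specification.

```python
from enum import IntEnum


class VolumeHealth(IntEnum):
    """Hypothetical ordered health levels; numeric gaps leave room for later additions."""
    HEALTHY = 0
    DEGRADED = 10       # something is wrong, but no action is requested yet
    INACCESSIBLE = 20   # the volume cannot be used


def needs_action(status: VolumeHealth,
                 threshold: VolumeHealth = VolumeHealth.INACCESSIBLE) -> bool:
    """Let the application decide at which level it reacts."""
    return status >= threshold


# A cautious application reacts only when the volume is inaccessible.
print(needs_action(VolumeHealth.DEGRADED))
# A stricter application can lower its own threshold.
print(needs_action(VolumeHealth.DEGRADED, threshold=VolumeHealth.DEGRADED))
```

Because consumers compare against a threshold instead of matching exact values, a level added later between two existing ones would not break them. That is one possible reading of "a set of values that can be extended in the future".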

L

It does no good if one storage vendor implements a bunch of different levels and then custom-codes their application to deal with those levels. It's not interoperable with anything else. And so whatever we define has to be something where, you know, you can plug in any storage system and get the same results. (Got it, got it.) I mean, it is true that a lot of individual storage systems have tons of detail, but the right way to extract that detail is through some proprietary channel rather than this standardized interface.

B

I was just saying that, in our case, we actually just have this one flag, but we don't call it healthy or not healthy. We call it accessible or not accessible. So it's just this one value, and based on that, we make decisions. This is already in production. Yeah, but definitely we probably need to.

F

They don't, and they don't work if the controller crashes and restarts, because it had to keep a bunch of state in memory for an operation. So I think having it in the actual Kubernetes API would be more reliable. Also, there are projects like kube-state-metrics that I think could take advantage of that, if we actually had the information in the API itself.

I

This is exactly the thing I mentioned before about the KEP proposal. As I said, we'll have a draft out shortly. I put a link into the doc that's captured the existing discussion meetings from last month, so please check it out and watch this space for the KEP. You'll see the KEP issue, and please review and give comments.

I

We haven't had a regular meeting. We had a meeting before the holidays, and this doc represents picking that up. You know, if there's interest in having a weekly meeting as we refine the KEP, that would be great. So yeah, I will for sure post additional details here, but you haven't missed anything. Well.