►

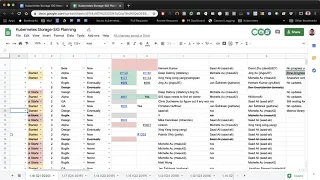

From YouTube: Kubernetes SIG Storage 20200102

Description

Meeting of Kubernetes Storage Special-Interest-Group (SIG) - 02 January 2020

Meeting Notes/Agenda: https://docs.google.com/document/d/1-8KEG8AjAgKznS9NFm3qWqkGyCHmvU6HVl0sk5hwoAE/edit?pli=1#heading=h.oh2hv7jn222m

Find out more about the Storage SIG here: https://github.com/kubernetes/community/tree/master/sig-storage

Moderator: Saad Ali (Google)

A

A

So let's go ahead and get started. The agenda is here; feel free to add anything that you'd like. If there are any PRs that you want to discuss, any designs you want to talk about, or anything else, feel free to add them to the agenda and we'll cover them as we go. The first item is going over the items that we committed to for the next quarter, for the 1.18 release of Kubernetes, for storage, and just getting status updates. There are not that many people on the call today.

A

A

B

A

B

A

D

D

D

D

A

A

A

A

B

B

A

A

B

A

A

A

A

A

D

B

C

A

D

A

A

D

A

I think they were both thinking about the problem space, and so they both came up with KEPs, and then they realized that they were trying to tackle the same problem space and are in the process of deduping and figuring out how to move forward. That is my understanding. So I think my feedback to Patrick was: if they can figure that out on their own, great. If not, we can set up a meeting to talk it through and figure out what the next steps are.

E

A

A

Okay, so that'll be a fun one this quarter. The next item is the CSI drivers that this community should own but that we cannot find owners for: NFS, iSCSI, Fibre Channel, and the flex adapter. The last status here was that we had no owners for these, and without owners we were planning to deprecate them. So the question is: is anyone on the call interested in potentially picking these up? Otherwise, we will.

A

B

A

B

I think the problem, from my perspective, is that now everyone has CSI drivers that do all of this stuff, at least for their own storage systems. Nobody cares about this stuff anymore because, in theory, what we're telling our users is: don't use that, you should use the CSI driver. So the incentive to make that stuff high-quality has gone away. Yeah, so.

A

A

A

A

Okay, moving on. CSI migration next steps: Deep, Chiang, David. I don't think anybody is on the line for that, so I'm going to mark that as no updates. The next item is SELinux recursive permission handling. This is trying to improve the performance impact of, every time there's a mount, going through and recursively chowning every single directory, but...

A

E

A

D

D

D

A

Thank you, Xing. Next is the object storage API. Jeff Vance and crew are working on a KEP here, and the KEP is out, and I believe they're beginning to address feedback. I don't think there has been much progress in the last couple of weeks over the holidays. If you have interest in this area, please feel free to take a look and provide feedback.

A

B

E

F

B

A

The big drawback here, I think, was that we were coupling the design for generic volume data sources with an external populator, and that is a much more complicated design. External populator meaning the thing that populates the volume is different from the storage system, and that is a lot larger, but...

B

That's what I'm working on, so I'm interested in it. And here's the thing: all of the data populator stuff can be done out of tree, but the thing that is currently stripping out the data sources that are not volumes, not PVCs, and not snapshots is the Kubernetes API. So that's the only core piece of this: it's basically dropping things that are not snapshots and not volumes. And I'm wondering, what is the harm in having something there that the system doesn't understand?

B

A

That's a really good question. I think mostly it was that we wanted to be very cautious as we approached this model of data source. We weren't confident that it was the right way of doing things, and even today I think we have some questions about whether the way that it was done is the way it should be done. I think last quarter there was a big discussion about whether data source should be a standalone object, but that ship has kind of sailed, so it's very difficult to undo that decision. Right, then I...

B

A

D

B

D

B

B

A

So I think it makes sense to take steps towards this. You're right, this is the direction that we want to go in. But I also agree with Xing: API reviewers may push back, and if we don't have a concrete implementation to go along with it, they might say, "Hey, what the heck? What are you doing this for? You can't prove that this works." Yeah.

B

Yeah, so I totally agree that we would want to show at least an implementation of an external populator that works. But I think the really important thing to show is that if someone puts garbage in there, it's harmless, right? Because that's the bar that they should really care about: at least you didn't break anything. Yeah, you can put garbage in there and the system doesn't do anything, and that's weird, but...
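As an illustration of the data source discussion above, the following is a minimal sketch, not the actual API server code. The `DataSourceRef` type, both function names, and the `example.com` group are hypothetical. The set of recognized kinds is an assumption based on the speakers' description that only PVCs and snapshots are currently kept. It contrasts the described behavior (silently dropping an unrecognized data source) with the proposed one (leaving it in place for an out-of-tree populator to act on).

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class DataSourceRef:
    """Hypothetical stand-in for a PVC data source reference (not the real API type)."""
    api_group: str  # "" stands for the core API group
    kind: str
    name: str


# Assumption: the kinds the discussion says are preserved today.
RECOGNIZED = {
    ("", "PersistentVolumeClaim"),
    ("snapshot.storage.k8s.io", "VolumeSnapshot"),
}


def strip_unrecognized(ref: Optional[DataSourceRef]) -> Optional[DataSourceRef]:
    """Behavior as described in the meeting: drop anything unrecognized."""
    if ref is not None and (ref.api_group, ref.kind) in RECOGNIZED:
        return ref
    return None


def pass_through(ref: Optional[DataSourceRef]) -> Optional[DataSourceRef]:
    """Proposed behavior: keep the reference even if core does not understand it."""
    return ref
```

With `strip_unrecognized`, a reference such as `DataSourceRef("example.com", "Backup", "b1")` is lost before any external controller can see it. With `pass_through`, it survives and is simply ignored by components that do not understand it, which is the "garbage in is harmless" bar mentioned in the discussion.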

F

B

A

D

B

B

B

Pods themselves tend not to have the right permissions, and you probably don't want to give them those permissions in general, because that's just going to be a management headache if every pod needs permission to do fsfreeze. So I started thinking this is probably a service that should be provided by the CSI driver. There needs to be a way to say: hey, I'm about to snapshot this volume; please flush the file system buffers, or do an fsfreeze, or do a sync, or whatever it is that we need to do. So.

D

B

D

B

D

B

So the problem is that, because we don't want to elevate the permissions of every pod that needs to be snapshotted, something outside of the pod needs to be able to freeze the file system, and at least that portion of the work, I think, should be done in the CSI node plugin. Of course, all of the other stuff that is application-specific has to exist in the execution hook itself and run inside the pod with the application. But I just don't see a way to actually force the file system buffers to flush to disk, so that you can take a consistent snapshot, without this piece, and because it requires elevated permissions, I think it has to go into the node plugin. So.

B

Well, we would have two new CSI node RPCs, you know, a freeze and a thaw, and then whatever was orchestrating the taking of the consistent snapshot would have to have a phase where it called back out to the kubelet and said: hey, go freeze this file system.
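The freeze and thaw idea above can be sketched as follows. This is only an illustration under stated assumptions: CSI has no such node RPCs today, so `FakeNodePlugin`, `freeze`, `thaw`, and `consistent_snapshot` are all hypothetical names, and thawing in a `finally` block is one possible design choice rather than anything decided in the meeting.

```python
from typing import Callable, List


class FakeNodePlugin:
    """Hypothetical stand-in for a CSI node plugin exposing the two proposed calls."""

    def __init__(self) -> None:
        self.calls: List[str] = []

    def freeze(self, volume_path: str) -> None:
        # A real plugin would flush buffers and freeze the file system here.
        self.calls.append(f"freeze {volume_path}")

    def thaw(self, volume_path: str) -> None:
        # A real plugin would unfreeze the file system here.
        self.calls.append(f"thaw {volume_path}")


def consistent_snapshot(
    node: FakeNodePlugin,
    volume_path: str,
    take_snapshot: Callable[[], str],
) -> str:
    """Freeze, snapshot, then thaw; the thaw runs even if the snapshot fails."""
    node.freeze(volume_path)
    try:
        return take_snapshot()
    finally:
        node.thaw(volume_path)
```

For example, `consistent_snapshot(FakeNodePlugin(), "/mnt/vol", lambda: "snap-1")` records a freeze, returns the snapshot identifier, and then records a thaw. The orchestrator only needs permission to call the node plugin, so the pod itself needs no elevated privileges.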

A

So if you had such a call, would a CSI driver be able to do the right thing if the volume is already mounted at some path? Is the assumption that the CSI driver has access to that mount because it created it, and that it's running with sufficiently elevated permissions that it can do whatever it wants on that path? Well...

B

F

B

I don't see why not. I haven't actually tried it. I mean, I guess I should just go prototype this and prove that it works. But I want to decouple it from the execution hooks stuff, because this is generically useful, right? Today you can take snapshots of pods, or of PVCs individually, and today, unless you have a way of forcing them to sync, you're going to lose the last five or ten seconds of data when you take that snapshot, because it's sitting in some buffer cache, and it's just annoying.

B

D

B

A

B

A

A

A

D

B

Yeah, this is like number three or four, but I wanted to bring it up and get it on people's minds, because I think that its absence is going to really hurt us when people start to actually try to take snapshots, and they're going to be like, "Hey, wait, why am I missing my last few writes?" This...

B

E

C

C

E

C

D

B

D

B

It might be good to actually build a working implementation of the really complex thing that does everything, and then take a step back, look at it, and say: this is horrible, we can do it better. But I don't think we'll see how we can do it better until we have the ugly version of it built.