From YouTube: Kubernetes SIG Storage 20180719

Description

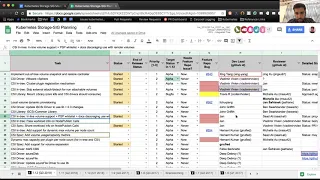

Kubernetes Storage Special-Interest-Group (SIG) Meeting - 19 July 2018

Meeting Notes/Agenda: https://docs.google.com/document/d/1-8KEG8AjAgKznS9NFm3qWqkGyCHmvU6HVl0sk5hwoAE/edit#heading=h.wej10zne6qo2

Find out more about the Storage SIG here: https://github.com/kubernetes/community/tree/master/sig-storage

Moderator: Saad Ali (Google)

Chat Log:

19:36:46 From Jan Šafránek : I made the hidden lines appear, there was filter on Target Status column to list only alpha features

19:39:12 From Saad Ali : Thanks!

A

A

So if you have anything that you want to discuss, please feel free to add it to the agenda. At a high level, we're gonna go over status for the different features that we're tracking for the current release. Then we're gonna discuss any PRs that need attention, bugs that we want to discuss, and finally design reviews. So let's kick it off by taking a look at the planning spreadsheet and doing a review of the features that we're tracking for this release.

A

We have a lot of them. I'm going to skip over the CSI driver statuses, because there are a lot of CSI drivers and they're not going to be in-tree, so we can get an end-of-quarter status at the end of the quarter instead of doing a weekly or bi-weekly update on them. So first up is implementing the out-of-tree volume snapshot and restore controller.

B

B

C

A

A

Looks like he's not on the call today. I can try and give an update on his behalf. The meetings were discussing whether a registration mechanism is necessary or not and, if so, what it would look like. Would it be a CRD? Would it be an in-tree object? Could we even reuse the existing Node objects? At the moment, we're leaning towards doing a first-class in-tree object. Jan is planning on putting together a proposal for SIG Architecture to see what their thoughts on it are, and based on that we'll proceed from there.

A

Next feature is skipping external attach/detach for non-attachable volumes for CSI. This has a dependency on the plugin registration mechanism. If the plugin registration mechanism goes in, this feature can use that mechanism. Otherwise we'll have to come up with something else here. So it's waiting on number 5 to get unblocked. Next up is the mount library. Travis, do you want to give an update here?

D

D

A

A

A

A

A

So this is a feature for CSI, where CSI currently is only accessible through PV/PVC. For ephemeral volumes, ideally, we want it to be accessible inline, but at the same time we don't really want attachable volumes to be accessible inline. So it puts us in a weird spot, and the design here is to come up with a solution. Jan has a proposal out. Please take a look at it and provide feedback. Currently it's outstanding, awaiting feedback.

A

A

Next up is the CSI spec having workload information passed to the NodePublish call. This was one of the items we believe we need for specific types of volumes that require pod identity, and we're trying to work through those use cases at the moment. We had a CSI community call yesterday, where we discussed this at length.

A

E

F

Yes, sorry. So we got the CSI spec [unclear]. We had a call on Monday, and we have a general consensus about it. We had a call about the CSI driver [unclear] on Monday, or last Monday, and the idea is that we have a general consensus on how to proceed, but we have some remaining items that we need to figure out how to take into account.

F

F

So we had a call, the CSI community meeting, yesterday, and we agreed on the broader approach of not calling it metrics, but calling it something like capacity information, which will expose both the inodes available and the bytes available that we have currently. I'll open a proposal in CSI to expose that capacity information as a new RPC call at the node level, and that can be used by Kubernetes and other COs.

E

F

Inodes could be fetched from the, yeah, using the statfs call. So that's a property of where the volume is hosted. The capacity, though, depends on what type the volume is. So the plugin will report both, and it will report from the node, so I...
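
As a rough illustration of the statfs point above: on a POSIX system, both the available bytes and the available inodes for a mounted path can be read from a single filesystem-statistics call. The sketch below uses Python's `os.statvfs`. The function name `capacity_info` and the returned dictionary keys are hypothetical; they are not part of the CSI spec or of the proposal discussed in the meeting.

```python
import os


def capacity_info(path):
    """Return byte and inode capacity for the filesystem containing `path`.

    Hypothetical helper for illustration only; the key names are assumptions.
    """
    st = os.statvfs(path)  # wraps POSIX statvfs(3); Unix-like systems only
    return {
        "bytes_total": st.f_blocks * st.f_frsize,
        # f_bavail counts blocks usable by unprivileged users
        "bytes_available": st.f_bavail * st.f_frsize,
        "inodes_total": st.f_files,
        "inodes_available": st.f_favail,
    }


if __name__ == "__main__":
    print(capacity_info("/"))
```

Reporting both numbers from the node side matches the idea that the plugin, running where the volume is mounted, is the component that can observe them.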

A

E

E

F

E

G

F

G

H

F

F

All right, so I think this is the one that we were talking about earlier, the things that are left to be discussed, because for certain volume types there is not only a limit on the count of how many volumes you can attach, but there's also how much you can attach, a capacity-related constraint. So I think this is kind of like... I'm sorry.

F

I

A

A

F

There's a spec already out, but I think there's at least one item that is pending: whether the capability of what kind of expansion the volume plugin supports, online versus offline, should be exposed as a capability or not. That one is not quite nailed down yet, and I think that's where the main thing is. We had a discussion last week in CSI. This week we didn't talk about this particular thing.

A

Sounds good, thank you. Moving on, we have a few CSI drivers which I am going to skip over. If you are a feature owner for a CSI driver, please continue to work on it and we'll get a status update near the end of the quarter. Next, the CSI library, the [unclear] common library. Deep, do you want to talk about this? Yes.

H

H

H

In SIG Windows, I think the decision was pretty much that there was no clear case where this would be used in Windows, and since the support doesn't exist, it will apparently take a bit of work to surface the block devices through the Windows containers. So as a result, unless there's an explicit customer requirement or a use case around it... okay.

H

A

A

A

G

I've only started looking at this in detail this week, so I don't know for sure, but my suspicion is that there's probably no reason to believe yet that it's going to be needed at all. It's just a mount. So if that changes, I should find out soon, because I'm really spending a lot of time with the CSI NFS driver this week. Perfect.

J

F

A

F

I

I

A

A

A

I

A

I

E

A

I

A

A

A

G

Well, yeah, I only bring it up because I don't understand what didn't go right here, because the code was more or less ready for 1.11 and it was sort of late in the release. So I mean, I'm not super mad it didn't merge, but I want to know what I should have done. Should I have been poking people personally? Yeah.

A

G

A

G

A

E

E

We already have some tests here for ephemeral volumes. As part of this, we can evaluate if there are more test cases we should add for ephemeral volumes, such as the subpath feature, and maybe, I'm not sure, some node failure handling cases. Some new ideas that I had of test cases that could fit in core conformance are PVC features that don't require a specific volume plugin to actually test. So these are things like pre-binding or static binding.

E

The PV protection feature, testing the reclaim policies such as Retain, some things like that. So that's the basic idea behind the first suite. Then there's the second suite, which I am calling the persistent volume plugin profile. The profile suite, I think, is basically a concept where it's not strictly required for Kubernetes conformance, but it can be an optional, additional conformance suite that Kubernetes distributions can optionally conform to. So here...

E

So the kind of basic functionality that every plugin should support can go here, and the idea with this profile suite is that distributions can bundle one or more persistent volume plugins by default in order to conform to this profile suite. And then the third category of tests is basically the everything-else suite, where we test a lot of features that not all volume plugins may support.

E

E

E

E

E

E

That's correct. So I want to formulate some of these test cases from the pod perspective and also from the node perspective: say, the pod can recover if it gets rescheduled on a different node, or it can recover if the node fails, or it can recover if the node reboots. You know, test cases like that.

H

E

I think right now I just have a really rough idea of possible scenarios to test. I think when we actually go and investigate writing these test cases, we can work out those details. So I have a spreadsheet where I started drafting some more specific test cases, although right now the spreadsheet is also pretty generic and not very detailed. But I think the idea is, for each kind of common class of test cases we want to add,

E

we can open up an issue for that, and then we can work out more of the details of exactly what the test needs to do and how to trigger some of those failures. So if you guys have any ideas for more test cases that we can add, feel free to add them to this spreadsheet. I've given SIG Storage edit capabilities.

E

I

E

I definitely think workload integration should be part of this too. I forgot where I put it. I might have put it under optional features, or maybe I put it under the second one. I had one bullet point about StatefulSets, yeah, workloads. We can potentially add some more things here and, you know, flesh it out a little more.

I

K

E

I think we'll have to think about it. From a user's perspective, it's definitely important that their volume is going to behave properly in disruptive scenarios. We'll have to see; we might not be able to run it. We might need to make changes to the way that conformance tests are run to be able to allow disruptive tests.

E

E

E

I

C

E

Well, this theoretically is just e2e test changes; we're not making changes to the Kubernetes core code in order to enable this. So, theoretically, if we write this, we can potentially run the tests against older clusters too. I think that's possible. From a conformance point of view, I don't think they allow backporting conformance tests to previous releases, right?

E

I think the test framework could be made to work against different Kubernetes releases. I think that's fine. So for conformance we might only run against the latest Kubernetes releases, but from just a testing point of view, we can certainly run these tests.

E

L

I

L

E

I think it's more so... I don't think we have plugin certification; there's no plugin certification. So all of this has to be tested against a Kubernetes distro. So I think it's up to the Kubernetes distributions to choose which volume plugins they want to test for conformance and certify that this volume plugin works well in their environment.

A

K

I went through the existing end-to-end tests, at least for storage. I went through the volume plugins that are cloud-based. We have end-to-end tests for Amazon, Azure, GCE PD, OpenStack Cinder, and vSphere. We don't have any for [unclear], but it seems nobody cares. And then...

K

The test job that actually runs the tests in the end gives a different picture, because it tests AWS and it tests GCP. We don't run any tests on Azure. We don't have OpenStack, we don't have vSphere. I know that VMware runs a bunch of their own end-to-end tests, and they are fixing the bugs and pushing PRs. But I don't know if Microsoft or somebody who cares about OpenStack runs the tests, and I don't think I can do anything here. I hope Microsoft does. Yeah.

H

K

K

[unclear]: there is no test there. And I looked at the tests we have and looked at which ones we run, and we run quite a lot of them, but we don't run Ceph, iSCSI and Ceph RBD, and I would like to run them, because we have the technology, it's open source, we can run it. Nothing is preventing us, except for the images that the end-to-end tests run on: all the tests run on GCI, which is immutable.

K

So I thought about how to do it right, and I found a couple of issues, like the Ceph server image [unclear], the image is gone, but I am fixing them. If we add a new job that would run all the tests we have, including Ceph and iSCSI, we could run these tests if the job runs Ubuntu instead of GCI. I know that a couple of other jobs already run Ubuntu, so it shouldn't be a problem.

K

So we get the necessary kernel modules, and we also have the possibility to run the mount utilities, like iscsiadm or Ceph, inside containers and not have them on the host. So this way we could run the tests in some end-to-end job with every commit, or at least once a day. It requires a couple of changes, mostly in the test framework we use. For example, I need to add a new parameter to the end-to-end test binary that will run the container with the mount utilities

K

on every host before the tests start, so the test itself expects that the mount utilities are available somewhere. If somebody wants to run the tests on their own machine, they can install the utilities on the host and run it, and in the same way somebody can run the tests on their own hosts with the binaries in a container. So I want the tests not to care where they run. That's why I need the end-to-end test suite to [unclear].

K

Well, so I need the job, I need the new parameter, and basically that's it. What I need here is somebody from SIG Storage to review this from the SIG Storage perspective, because I will run all the tests that have the sig-storage tag, and I need to skip all the disruptive ones, because on Ubuntu it's not so simple to restart [unclear]. I think I will skip the flaky ones [unclear], but I will include slow tests. I did some measurements. It will take fifteen, sixteen minutes.

K

Sixteen minutes to run all the tests, including [unclear], and everything works. So again, what I need here is somebody from SIG Storage to review this, whether it is a good thing to do. This will run only the tests which we have right now. In the future, I can imagine that we can extend the tests and coverage and, I don't know, run, for example,

K

subpath tests for iSCSI and for Ceph RBD, and run, I don't know, FSGroup tests and all the features we test with other volumes, which we could also test with iSCSI and Ceph. And maybe in the future we could also add some tests for Portworx or for StorageOS and the storage vendors which care about Kubernetes. I know that [unclear]. I haven't heard from StorageOS for a long, long time.
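
The job selection described above (run everything tagged for SIG Storage, skip disruptive and flaky tests, keep slow ones) amounts to a focus/skip filter over test names. A minimal sketch, assuming tests carry bracketed tags such as `[sig-storage]`, `[Disruptive]`, `[Flaky]` and `[Slow]` in their names. The test names below are made up for illustration, and `select_tests` is a hypothetical helper, not part of the Kubernetes test framework.

```python
import re

# Assumed tag conventions; the exact patterns a real job uses may differ.
FOCUS = r"\[sig-storage\]"
SKIP = r"\[Disruptive\]|\[Flaky\]"


def select_tests(names, focus=FOCUS, skip=SKIP):
    """Keep tests whose name matches `focus` and does not match `skip`."""
    focus_re = re.compile(focus)
    skip_re = re.compile(skip)
    return [n for n in names if focus_re.search(n) and not skip_re.search(n)]


if __name__ == "__main__":
    # Hypothetical test names, for illustration only.
    names = [
        "[sig-storage] Volumes iSCSI should be mountable",
        "[sig-storage] Volumes Ceph RBD should be mountable [Slow]",
        "[sig-storage] PersistentVolumes node reboot [Disruptive]",
        "[sig-storage] Subpath flaky case [Flaky]",
        "[sig-network] Services should serve a basic endpoint",
    ]
    for name in select_tests(names):
        print(name)
```

With these inputs, only the first two names are selected: slow tests stay in, while disruptive, flaky, and non-storage tests are filtered out.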

K

E

A

E

A

K

A

We do something ugly, like have a common method that all the storage tests will run, and every test must pass true or false to it. If it's true, then we install it, and then the test itself has to determine if it should install the utility, and it'll have to implement logic like: if I am running this test with this specific driver, then I will need the utility.
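
The "common method" idea above could look roughly like the following. This is only a sketch of the shape being described: the names `ensure_mount_utilities` and `needs_utilities` and the driver names are hypothetical, and the real installation step (for example, starting a container with the mount utilities on every host) is stubbed out as a callback.

```python
# Hypothetical set of drivers whose tests need extra mount utilities.
DRIVERS_NEEDING_UTILITIES = {"iscsi", "rbd"}


def needs_utilities(driver):
    """Per-test logic: decide whether this driver needs the utilities."""
    return driver in DRIVERS_NEEDING_UTILITIES


def ensure_mount_utilities(required, install):
    """Common method every storage test calls with True or False.

    `install` is a callback standing in for the real installation step.
    Returns True if installation was triggered.
    """
    if required:
        install()
        return True
    return False


if __name__ == "__main__":
    installed = []
    for driver in ["nfs", "iscsi"]:
        ensure_mount_utilities(
            needs_utilities(driver),
            lambda d=driver: installed.append(d),
        )
    print(installed)  # ['iscsi']
```

The drawback raised in the discussion is visible here: every test has to carry its own `needs_utilities`-style decision, which is why this approach was called ugly.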

A

L

G

A

K

Another thing I wanted to get help with: I want to get this through some review or approval from SIG Testing. So if anybody has any contact there, I would like them to approve this, to comment on whether this job would make sense or whether they have better options. I tagged the whole SIG Testing on the PR, but yeah, I...