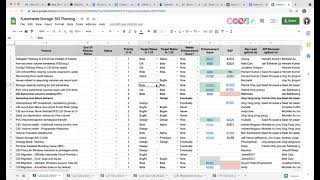

From YouTube: Kubernetes SIG Storage - Bi-Weekly Meeting 20210826

Description

Kubernetes Storage Special-Interest-Group (SIG) Bi-Weekly Meeting - 26 August 2021

Meeting Notes/Agenda: -

Find out more about the Storage SIG here: https://github.com/kubernetes/community/tree/master/sig-storage

Moderator: Xing Yang (VMware)

A

So there are a few deadlines coming up. September 2nd, next Thursday, is the soft freeze deadline for production readiness, meaning that if you're working on a feature that is targeting alpha, beta, or GA, you need to have the production readiness review form filled out and ping the reviewer to say that it is ready for review. The following week, September 9th, is the KEP freeze deadline, so all the KEPs have to be merged by that day. We also have a few topics today that we can go to after our planning.

B

[unclear] Okay, yeah, so I'll follow up with him. I'm not sure he has the cycles. The actual work to move the feature gate is pretty minimal, but I think this also has to undergo a PRR review, because the feature is going from beta to GA. All right, so I'll sync with him and work on updating the KEP.

A

Okay, but I think the forms for beta and GA seem to be the same, I mean, if you are filling out the PRR. At least I don't see any section that is only required for GA but not for beta. But I think they still need to review it, since you're changing the target, right?

B

Yeah, I just don't see a way. Michelle and I talked offline about this one. We could support both, but then it becomes two sources of truth, and it's confusing. And I don't think we got any input from the API reviewers, Jordan and Tim, on this one. So I don't know if we'll end up copying the field from the SC to the PV.

B

Yeah, so I have an update to the KEP posted, but I'm still working on the state machine diagram. It's a complicated feature, and it requires a somewhat more detailed diagram. The PRR review was approved previously, but I might get another PRR review just in case.

D

Or a mailing list, something like that. I'm just wondering what happens if we don't get feedback, because that really means we now depend on users to tell us, and we might just get blocked by people not acting, although they are using it. That's my biggest concern, actually: even though it's technically ready and it is getting used, we just don't know that that's the case.

D

Yeah, okay. And others have fixed issues around it, so those were probably also motivated by actually depending on it. So I can go back to those people and see whether they can confirm that they are using it. The controller configuration thing, that was a PR that came from someone else who has not been involved with the KEP before. Oh yeah, that might be two people.

D

Yeah, I checked that when we went to beta. That it's listed again under GA, I think, is just from the template. I don't think we've discussed in detail what was meant by it. We are doing that now anyway. I think it's not a big problem for this particular feature anyway.

D

On scalability testing, I just asked some questions on Slack, and we can follow up there. I can certainly add something to ClusterLoader to test this. Running it, we'll then have the same issues as with all of our storage tests: you basically need to know which cluster has a storage provisioner that you can use for this particular test.

A

So I'll just say [unclear] did not show us the status of this one. And the next one: oh, the deprecation notice for FlexVolume. I think there are still some unresolved discussions in that Google doc where we have this message drafted. So I think maybe I'll ping Michelle and Jan and see if we can finalize this.

A

And the next one, CSI volume health additional metrics. Yeah, so I updated the KEP. I'm not sure if we can go directly to beta, because there is a new kubelet metrics API that was not there before. Now that we've added those, I'm not sure if we can actually go directly to beta. Michelle, if you can take a look, then we can decide whether we should still stay in alpha or go directly to beta for that. But the KEP is ready for review. Sounds good. Thank you.

A

Okay, thanks! The next one is CSI volume health programmatic response. Last time we said we want to split this from the previous item so that we can make progress in parallel. Nick said his co-worker has written down some details on how they are handling this case, how they react. I think he's going to clean that up a little bit and then add it to the Google doc, so then we can go from there.

I

Then, yes, I think you know what's going on here. We're working on releasing the alpha builds of these things. We're currently blocked on a large number of infra people being out on vacation this week, but eventually people will come back from vacation and we'll get the infrastructure changes merged, the release-tools stuff Patrick is helping with, and then those releases will go out. Regarding 1.23, the hope is to go to beta. I was looking at the graduation criteria that we wrote down in the KEP.

K

Yeah, so I put the issue number and the KEP PR in the comments, so I guess they just need to be put in the actual cells. Oh okay, so let me see that. I don't have access, but yeah. So far, the initial KEP here is out and got a lot of great feedback from Patrick and Jan. Jan pointed out a bunch of good issues to think about, mainly around subPath, SELinux handling, and fsGroup. So yeah.

A

Okay, thank you. The next one is CSI migration for vSphere. So yeah, 2.3 was just released. I think the rest of them are still the same. For raw block, we're planning to have that released by the end of the year. NFS [unclear], the same thing. And for this issue, I think we're trying to get this PR merged to address the CRD issue.

A

Okay, next one: ExecutionHook/ContainerNotifier. We finally got some reviews from SIG Node; they added a lot of comments. We also got some new comments from an API reviewer, Clayton, so Xiangqian and I are addressing those. We still need to discuss some of them, but we have already addressed some of those comments.

A

Okay, so [unclear], leave that blank. All right, so that's all we have here. If you have any other items you want to track, please add them to this spreadsheet. Just remember that the deadlines are approaching already. Okay, so the next item is here: we have one from Hemant. Do you want to talk about this?

B

It's not a design issue, but we have been noticing that a lot of drivers, I think Azure and many other drivers, I don't want to name names, are basically going around the CSI spec, the reason it exists, and talking directly to the Kubernetes API. They require credentials and setup, like service account tokens and whatnot, to talk to Kubernetes, and the deployment as a result has become kind of complicated and moves away from the original...

A

...server to get... Yeah, so it's a little different. I can tell you how we are using this: in the CSI driver calls, you know, inside the RPCs, we are not talking to Kubernetes at all. This is like another component. There is other information that we need from the Kubernetes cluster, but it's not inside of those API functions.

B

...discussion. Let me finish. What I was trying to say is that why a driver talks to the Kubernetes API server depends on what each driver is trying to do. Sometimes it's secrets. Sometimes it's trying to get the cluster and the nodes on which the driver is running, so that the driver can figure out the topology in which it exists. Sometimes it wants to post meta-information about the pod or PVC back to its own storage APIs.

B

All right, so the reason the driver is talking directly to the Kubernetes API is different; it varies with each use case. I was just wondering, and I was talking to Jan and others, and we were wondering if we have failed in that sense in the CSI design, in that it should be CO-agnostic, whereas...

A

So there was one time we actually had an issue. I think it had to do with volume expansion. I think we actually got a recommendation from the Kubernetes side that we should just add an admission controller on our own side to prevent that from happening. I think you are aware of that issue. So I just think it's kind of a... yeah.

B

Just, for example, the vSphere CSI driver has a dependency on the external CCM, the external cloud controller manager, because it expects the Node objects to have spec.providerID set. If it is not set, the CSI driver cannot function. And the point is that it's getting the list of nodes and figuring out what the provider ID is on those nodes.
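As a rough illustration of the dependency described here, the sketch below reads `spec.providerID` from a list of Node objects and reports the nodes where it is unset. It assumes the node list has the JSON shape returned by `kubectl get nodes -o json`; the node names, the provider ID value, and the helper function names are made up for the example and are not taken from any driver.

```python
import json

# Hypothetical node list, shaped like the output of `kubectl get nodes -o json`.
# The names and the provider ID value are invented for illustration.
NODE_LIST_JSON = """
{
  "items": [
    {"metadata": {"name": "node-a"}, "spec": {"providerID": "vsphere://4210a5c2-example"}},
    {"metadata": {"name": "node-b"}, "spec": {}}
  ]
}
"""


def provider_ids(node_list):
    """Map each node name to its spec.providerID (None when the field is unset)."""
    result = {}
    for node in node_list.get("items", []):
        name = node["metadata"]["name"]
        result[name] = node.get("spec", {}).get("providerID")
    return result


def nodes_missing_provider_id(node_list):
    """Return the sorted names of nodes on which spec.providerID is not set."""
    return sorted(name for name, pid in provider_ids(node_list).items() if pid is None)


nodes = json.loads(NODE_LIST_JSON)
print(provider_ids(nodes))
print(nodes_missing_provider_id(nodes))
```

A driver that depends on this field would have nothing to work with for `node-b`, which is the failure mode mentioned above when the external cloud controller manager has not populated the Node objects.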

J

Taking a step back, I think the fundamental problem Hemant is raising is legitimate, right? Ideally, we want CSI drivers not to have to go around CSI's back and talk directly to Kubernetes. It sounds like there are a number of cases, for different drivers and different reasons, for them to reach around and talk to Kubernetes directly.

J

I think the best thing we could do is try to itemize those and figure out what the reasons are that these drivers need to go behind CSI to talk to Kubernetes directly, and see if we can start promoting some of those use cases into the spec, into the Kubernetes CSI interface, so that we can simplify the drivers again. The goal, ideally, is that the drivers only go through CSI and don't have to go around it. I mean, I don't know if that is the goal. I think the goal would be to make it easier to not need Kubernetes for certain use cases. But one of the common use cases, I think someone mentioned, is that some drivers just want to use CRDs to persist their own data, and we're not going to put data persistence into CSI. That's fair, yeah.

J

Right. I guess the question is: is there a common set of use cases that are low-hanging fruit, which we should just put into the spec so that we can make the life of these drivers easier? You're right, there will always be one-off drivers that are doing something odd and need to talk to Kubernetes, and that's fine. But if there is something we can do to reduce these common cases, it'd be better.

H

I think the main one that I know of is getting the provider ID from the Node object. This is for drivers that don't have access to a metadata server that can provide this information, so they depend on Kubernetes for that. I think AWS is maybe one example of this, but I believe they have two modes, like either...

J

Yeah, I think we'll have to look at it on a case-by-case basis and make sure we're not leaking too many abstraction details in doing this. The provider ID one is an interesting one. Kubernetes has the NodeGetInfo call. It has nothing on the request today, so we could potentially put in an optional field...

J

...that says, this is what the provider thinks the ID is, if you need it. But I would say we should even hide that behind a capability or something and discourage people from using it, because you don't want folks to get dependent on the CO for the identity if they don't need it. But yeah, to Ben's point, we should definitely look at it on a case-by-case basis.
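The idea floated here, an optional field on the NodeGetInfo request that is only filled in for drivers that opt in through a capability, could look roughly like the sketch below. This only models the proposal and does not describe the CSI spec: the `provider_id` field, the `WANTS_PROVIDER_ID` capability name, and the helper function are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional, Set

# Hypothetical capability name; it is not part of the CSI spec.
WANTS_PROVIDER_ID = "WANTS_PROVIDER_ID"


@dataclass
class NodeGetInfoRequest:
    # As noted in the discussion, the request carries nothing today.
    # `provider_id` stands in for the optional field being proposed.
    provider_id: Optional[str] = None


def build_node_get_info_request(
    driver_capabilities: Set[str], co_provider_id: Optional[str]
) -> NodeGetInfoRequest:
    """CO side: pass the provider ID only to drivers that opted in."""
    if WANTS_PROVIDER_ID in driver_capabilities:
        return NodeGetInfoRequest(provider_id=co_provider_id)
    # Drivers that did not opt in keep receiving an empty request.
    return NodeGetInfoRequest()


print(build_node_get_info_request(set(), "vsphere://abc"))
print(build_node_get_info_request({WANTS_PROVIDER_ID}, "vsphere://abc"))
```

Gating the field on a capability keeps the default behaviour unchanged, which matches the stated wish to discourage drivers from depending on the CO for node identity unless they really need it.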

A

I mean, in the long run we might end up in a situation where we want to define Kubernetes-specific CSI extensions and just admit that the vast majority of people who are implementing CSI only care about Kubernetes. So we'll just define some Kubernetes extensions and say: look, you don't get these if you're not doing Kubernetes, but we need them in Kubernetes, so we're doing it.

B

Interesting, but Nomad... there was the EBS driver. Actually, the first PR that went into EBS talked directly to the API server, and it was not possible to opt out. So basically the EBS driver broke in non-Kubernetes environments, and then we had to fix it. So the point is: yes, people are using CSI drivers, maybe not all, but at least some popular ones, in some COs that are not Kubernetes.

I

Yeah, but that's a compatibility call that the driver author has to make, right? If they want to implement a fallback so it can work in the absence of it, then they can do that. But if there's no way to do a fallback, they just have to say: look, this is our compatibility requirement, you've got to have Kubernetes version X or higher, because that's the one where we implemented this new feature, and if you don't have that, it just doesn't work.
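The compatibility call described here can be sketched as a small resolution function: use the value supplied by the CO when it is present, otherwise try a driver-specific fallback, and otherwise fail with a clear statement of the requirement. Everything in the sketch is hypothetical (the function, the exception type, and the placeholder version `X`); it illustrates the trade-off and is not code from any real driver.

```python
from typing import Callable, Optional


class UnsupportedEnvironment(RuntimeError):
    """Raised when neither the CO nor a fallback can supply the value."""


def resolve_node_identity(
    from_co: Optional[str],
    fallback: Optional[Callable[[], Optional[str]]] = None,
    min_co_version: str = "X",
) -> str:
    # Preferred path: the CO passed the value through the new feature.
    if from_co:
        return from_co
    # Optional path: the driver author chose to implement a fallback,
    # for example a metadata server lookup.
    if fallback is not None:
        value = fallback()
        if value:
            return value
    # No fallback possible: state the compatibility requirement plainly.
    raise UnsupportedEnvironment(
        f"node identity unavailable; requires Kubernetes version {min_co_version} or higher"
    )


print(resolve_node_identity("id-from-co"))
print(resolve_node_identity(None, fallback=lambda: "id-from-fallback"))
```

A driver without any fallback would simply call `resolve_node_identity(value)` and let the error surface, which corresponds to documenting a minimum supported version.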

C

NVMe-oF was created to enable NVMe commands to transfer data between a host and an SSD or storage system over networked fabrics, just like iSCSI, but NVMe-oF has higher IOPS and lower latency. NVMe-oF already supports many transports beyond PCIe, such as Ethernet, Fibre Channel, TCP, and RDMA. My CSI NVMe-oF driver mainly supports RDMA and TCP for SDS. In this driver, I have already implemented the basic interfaces.