From YouTube: Kubernetes SIG Storage 20190117

Description

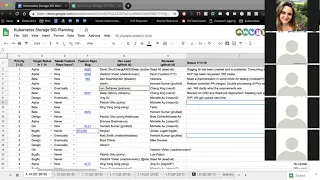

Kubernetes Storage Special-Interest-Group (SIG) Meeting - 17 January 2019

Meeting Notes/Agenda: https://docs.google.com/document/d/1-8KEG8AjAgKznS9NFm3qWqkGyCHmvU6HVl0sk5hwoAE/edit#heading=h.fgw45noj3wk0

Find out more about the Storage SIG here: https://github.com/kubernetes/community/tree/master/sig-storage

Moderator: Saad Ali (Google)

Chat Log:

None

A

Alright, hello, everyone. This is the Kubernetes Storage Special Interest Group. Today is January 17, 2019. As a reminder, this meeting is recorded and published on YouTube. Today we are going to go over the planning spreadsheet to review the items that we are working on as a SIG for Q1, for the 1.14 Kubernetes release. After that we're going to go over some of the PRs that currently need attention. It looks like we don't really have any designs that need any discussion.

A

If there is anything you want to talk about, PRs, designs, or anything else, feel free to add it to the agenda. The link to the agenda document is in your invite. So let's go ahead and get started. First up is Kubernetes Storage Special Interest Group planning for 1.14. I'm just going to go down and we'll get status updates for each one of these items.

B

Yeah, so this is Deep, I'm online. This is basically going, you know, it's a work in progress. We were stuck a little bit earlier around staging with every. So that's all cleared up: the libraries now and all the publishing bots and everything have been put in place. Yesterday I checked, and we are getting the library synced up to the staging repo, so we are completely unblocked in that area. The work around provisioning, the attach/detach controller, and mount/unmount is in progress, taking a dependency on the staging library.

B

C

A

D

So for this one, yesterday Kevin pointed out that there's a KEP being asked for this, so the next step is to create a KEP. I guess I'm not exactly sure of the KEP process, but somebody had added a comment on the existing feature to create a KEP. So that's the next step. I've already been meeting with Kevin, Foxx and Sergei, trying to plan a path to get this work rebooted. Okay.

A

A

E

I don't have an update on this yet. I need to figure out if we're doing this in the hostpath driver or the GCE PD driver or a different driver. If I have to write this code, I guess I'll do it in the hostpath driver. But I had been hoping that someone was going to, you know, put raw block support in one of the other drivers, so I wouldn't have to do anything. Got.

E

A

A

E

G

A

A

There are two things: one is a mock driver, which is mostly just for unit tests as far as I understand, and then there's a hostpath driver, which is used for kind of end-to-end testing with a demi glacier. So in this case, I think the hostpath driver would be the one that we want to use to exercise these code paths on the Kubernetes side. Got it. But if you could get a real implementation on a real driver, that would be super helpful as well.

E

A

A

H

So we got the initial resizer controller most, and it is in progress. There's a double processing of PVCs issue that we are trying to solve, which affects the in-tree controller as well. The stale PVC update pilot, I'll link to your sod, so there is something we have to solve in both places. So there's a PR open, in progress. Cool.

A

I

I

A

A

B

A

Cool, thank you for helping drive this. D pinching, I think it's... We are the first SIG to actually use the CRDs for an in-tree component, so we're getting all the roadblocks, or kind of forging the way. All right, next up is secure volumes. Jing and Tang are working on this, I think. Actually it's mostly Jing.

A

A

C

J

H

So I talked with some SIG Scheduling folks yesterday, and we haven't been up there still working on, like, the idea that we desire that we'll work together to get a design for this thing, actually say, that makes everyone happy. But I think in this quarter, the best we can do is target a design for this one.

A

H

Yeah, we might want, like, once the... That's another thing that we'll come back to when we go to the design issues that we have in this SIG Storage doc. So it will become relevant when we remove the existing predicates, the cloud-provider-specific predicates, but until then I think we are fine. Okay.

K

Sorry, sorry, I can on the end, but no worries. Yeah, so we have this. We've actually been holding weekly meetings for it, and the fussen and Matthew are working full speed on it. Matthew's got a design document out there, bringing some of the pieces together, and yeah, this is going good.

G

A

H

G

A

H

A

Yeah, I think this is kind of painful the way we handle it, but also the fact that we're leaking OS details into the API is pretty bad too. It's a tough, tough problem to solve. Next up is volume health: coming up with the design for this. Is Nick Ren online, or anybody working on it? My guess is no, since he's in a different time zone.

A

Next up is CSI in-tree post-GA tasks. The post-GA tasks include moving the callers of VolumeAttachment to v1, any refactoring, cleanup, and only the changes that we needed to do. I think we are not actively working on that, considering the priority. Is that correct? You're not... it's not assigned to you, so I think it's currently unassigned, which is why I assume it's not being worked on right now.

A

G

G

A

A

A

A

A

C

C

L

A

A

A

A

B

F

A

A

G

H

I don't think he'll be online, but I added this because it's also being tracked by celexa gaps, I think. So there's a design open, and there's a PR open as well. I encourage people to look at that. I mean, I don't know if it should be on the spreadsheet at all or not, but it was being done, so I just added it to make sure that we are aware of it. Yeah.

A

L

L

Oh, I think there are a few options we have. In the PR today, he's trying to just add a force option to unmount. But we are thinking that, because you add a force here, every unmount will use this option, no matter whether it is related to an NFS volume or not. We are not sure whether that is safe or not.

L

We prefer not to just directly use force for all unmount operations. Yeah, and so we are thinking of two options. One: we recognize that, okay, if it is an NFS type of volume, we can use this force option on unmount. Another option: since this relates to cleaning up the volume, right, and there is the cleanup orphaned pod function, whenever the pod is identified as orphaned, we can maybe use the force option there, but...

L

A

L

H

L

Before, we had a big issue where it would block the whole kubelet, and we fixed it. Right now it seems the code path should only affect that volume and should not make the kubelet hang. But we still have not completely solved this issue, because the NFS mount is still hung there, just in case there is somewhere we didn't fix correctly. Maybe it's me. Oh, you.

H

A

H

A

That's fine, but I think we were also considering, like, options to make the unmount succeed. I think both options were under consideration, but for some reason this was never brought up, and I'd like to make sure we understand why. Yeah, so overall I think this is a good approach for unmounting NFS, if it works as we think it does.

A

We need to be careful before we start applying this in the orphan pod loop, or more generically, to make sure we don't have unintended consequences. So we could do multiple PRs: one that's focused on just applying this to, you know, blocked NFS mounts, and then, if that works as expected, we can see where else we can apply it.

A

So start small, try to make as few changes as possible, verify that it works as you think it does, and give that some time to bake. If it does, we can see where else we can apply this. I don't want to make a big system change that changes unmount for all options and then have that blow up in our face.

A

A

H

This was already merged, actually, but I just wanted everyone to be aware of it, and we have follow-up discussion. So the thing is, we have three hard-coded predicates, which used to be mostly hard-coded for EBS, GCE and Cinder... so, EBS, GCE and Azure. This PR adds support for Cinder, and the current default is 256, to be on the conservative side. So it's something to be aware of, so and.
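To make the volume-limit discussion concrete, here is a minimal sketch of the kind of check such a predicate performs: count the distinct volumes of one type already on a node, add the ones the new pod would bring, and compare against a limit. The function name, its parameters, and the way volumes are represented are all hypothetical; only the default of 256 comes from the meeting, and this is not the scheduler's actual code.

```python
# Hypothetical sketch of a per-node volume count check (not the real scheduler code).
DEFAULT_MAX_VOLUMES = 256  # conservative default mentioned in the meeting


def fits_volume_limit(attached, requested, limit=DEFAULT_MAX_VOLUMES):
    """Return True if the pod's volumes fit under the node's limit for this volume type.

    attached:  IDs of volumes of this type already used by pods on the node
    requested: IDs of volumes of this type that the new pod wants
    """
    existing = set(attached)
    # A volume that is already on the node does not count a second time.
    new_volumes = set(requested) - existing
    return len(existing) + len(new_volumes) <= limit
```

With a limit of 3, a node that already has `v1` and `v2` can still take a pod asking for `v2` and `v3`, but not one asking for `v3` and `v4`.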

H

H

A

H

Could be reported via the node's allocatable, so that's where the second, the design issue comes from. Like, Bobby and Cisco, neither one... it's not in our interest to duplicate all the existing cloud-provider-specific predicates. So this would mean that we are adding this predicate and we are duplicating also.

A

H

It's a... Some folks were okay with adding it, some folks weren't. So that's where we stand right now. And the thing is that, without this limit, it's been an open issue since forever. Actually, it's an ongoing issue since forever; there are many issues open for that. People are asking for it, yeah, but after this, but it's...

E

A

H

A

H

H

A

H

So that will be handled as part of how we... this is a problem that will affect all the other three plugins as well. So the plan is that, as the in-tree to CSI migration happens, these keys will be respected. That's the plan at least for now, and the CSI migration document has to kind of take these keys into account as the migration happens. So not only is this tied to when OpenStack... what, when is it removed from in-tree?

L

H

A

A

H

A

Let's move on to the next one, which is from Jan: include namespace name in random distribution of PVs among availability zones. So I believe the idea here is that today there is an algorithm: when you use a StatefulSet with PVCs, it generates the PVC names in such a way as to allow for distribution of the PVCs among multiple zones, if you have multiple zones. That algorithm, I believe, is solely based off of the PVC name today, and as I understand it, I think, Jan, you're proposing incorporating the namespace in there.
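The effect being discussed can be illustrated with a small sketch. It assumes a simplified scheme in which the zone is picked by hashing a key and taking it modulo the number of zones; the function name, the `include_namespace` flag, and the use of CRC32 as the hash are illustrative assumptions, not the actual Kubernetes implementation.

```python
import zlib


def choose_zone(zones, namespace, pvc_name, include_namespace=False):
    """Pick a zone deterministically from a hash of the claim's identity.

    Simplified illustration only: CRC32 stands in for whatever hash the real
    code uses, and StatefulSet ordinal handling is left out entirely.
    """
    ordered = sorted(zones)  # stable order so the same key always maps to the same zone
    key = f"{namespace}/{pvc_name}" if include_namespace else pvc_name
    return ordered[zlib.crc32(key.encode("utf-8")) % len(ordered)]


zones = ["zone-a", "zone-b", "zone-c"]
# Keyed on the PVC name alone, every namespace that uses the name "data" lands in one zone.
name_only = {choose_zone(zones, f"job-{i}", "data") for i in range(20)}
# Keyed on namespace plus name, the same claims can be spread over several zones.
with_namespace = {choose_zone(zones, f"job-{i}", "data", include_namespace=True) for i in range(20)}
```

In this sketch `name_only` always contains exactly one zone, which is the situation described for jobs that reuse a stable PVC name in randomly named namespaces.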

I

I have a crazy customer who runs some kind of jobs. They create a namespace with a random name, they create a PVC with a stable name, and they run whatever they run. And there you have a multi-zone master, and all their jobs run in the same availability zone because they use the same PVC name. They just apply something, but some chart and.

I

I

A

I

There is one catch. If you have a cluster with a StatefulSet, and you update the cluster, and you resize the StatefulSet, you add a new pod. Obviously it would be scheduled to a zone where there is no pod. Now, if we change the algorithm in between, it may be scheduled in a zone where there already is a pod, so someone is depending.