
From YouTube: Kubernetes SIG Storage 20200423

Description

Kubernetes Storage Special-Interest-Group (SIG) Meeting - 23 April 2020

Meeting Notes/Agenda: https://docs.google.com/document/d/1-8KEG8AjAgKznS9NFm3qWqkGyCHmvU6HVl0sk5hwoAE/edit#heading=h.9axeng8iw4bc

Find out more about the Storage SIG here: https://github.com/kubernetes/community/tree/master/sig-storage

Moderator: Saad Ali (Google)

A

B

B

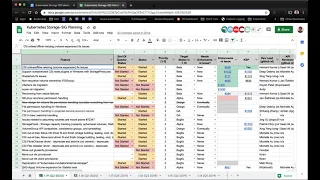

So today on the agenda, we're just going to go over the planning spreadsheet, get a status update, and confirm the assignments that we made at the last meeting for our 1.19 release, which is the Q2 release. That's all we have on the agenda. If you have anything else you want to talk about, feel free to add it to the agenda and we'll get to it after the planning. So let's jump right into it. I'm going to create a.

B

B

D

Yeah, so we had a meeting last week, and I'm going to schedule a meeting tomorrow as well. I was looking for empty slots, and we're just having a bunch of feature discussion meetings. So we have a PR that Michelle is reviewing for upgrading the CSI spec and other changes. Then we have a bunch of discussions in progress about recovering from resize failure, where we have largely achieved consensus. I think I'm going to give the status for the other items as well.

D

D

For that, there's a CSI spec meeting next week, on the 29th, and I will add it to the agenda. The consensus we have achieved so far is to relax the wording in the CSI spec, changing "shall" to "should", and to do a best-guess check in the external resizer for whether the volume is in use or not. We also have a read-only expansion issue, and that is something we'll discuss tomorrow. We have a solution, but we'll discuss it tomorrow and see where it is.

B

I'd say, yeah, there's a lot going on here with volume expansion for CSI. There have been at least one or two meetings already to discuss the existing issues, and it looks like there are gonna be some follow-up meetings. If you're interested, please attend those. Next up, we're gonna get a status update on the storage proxy for Windows for CSI. Deep or Jing, are either of you on the call? Or is anyone on the call able to give a status update?

E

So, yes, we have a CSI Windows meeting every Friday, and last Friday we listed the plans and all the tasks for moving CSI proxy to beta. There are a number of tasks, and we roughly assigned them among the people we have in the group, which right now is me, Deep, Mark, and Kalya from Microsoft. Right now I'm focusing more on the proxy and the driver build and release. We also need help on the testing part, to have e2e tests for the different components.

E

B

Thank you, Jing. For anybody on the call who's interested in helping, this might be a good place to start contributing. If you're interested, please reach out to Jing or Deep, and they can get you started. Next up, we have snapshots, fixing issues. We're keeping snapshots in beta this quarter to address the existing issues. Xing, do you want to give an update here?

Yeah.

F

So we have been making bug fixes. We have already cut a 2.1, but then a bug was discovered and fixed, so we're gonna cut another release for that. Michelle and Grant also fixed some CI issues, so we'll be cutting a release shortly for that and then continuing with bug fixing, so snapshots will still stay in beta in 1.19. Also, I think Michelle sent out an email to the mailing list about this deployment.

B

That's an important issue for anyone here who represents a distributor of Kubernetes. It's an important problem: as Kubernetes becomes more and more extensible, the components that are core are no longer shipped as part of the core binary. So, for example, snapshot has a standalone controller that needs to be installed in order for volume snapshots to work, and distributors of Kubernetes are responsible for deploying that controller.

B

If you have a storage vendor that wants to install a CSI driver, they can install a CSI driver that supports snapshots, but as long as that controller doesn't exist, the snapshot functionality is not going to work. It's up to the distributor of the Kubernetes installation to deploy that controller, and this email that Michelle sent out to SIG Arch is kind of asking: how do we do that in a consistent way, to ensure that all these distributors are actually bundling and deploying these controllers that we're adding that are no longer part of the core binaries?

B

D

F

We're working on adding this flag and also doing some prototyping. We're also looking at how to enable CI for this one. We do have one question, though. This needs to have a public CI for beta, but the problem for us is that if we're running this on the cloud, we would have certain features depending on a new vSphere feature that is not released yet. So we're wondering if we can run those internally and publish the results.

G

F

B

B

D

D

B

H

B

E

So right now we haven't done much about it, because currently there are no issues. But we want to get some expert in Windows to understand this area more deeply, I think, especially later. If we want to investigate how to share between Linux and Windows, then it will probably become an issue. So, yeah.

I

B

J

B

G

So Andy has refreshed the PR and it's probably ready to merge, but there was a new email sent out by SIG Release asking all the SIGs to very carefully consider any changes in 1.19 that might require an "action required" release note. So I think that's something we need to think about and balance: whether this fix is gonna fix enough issues, against the downside that it could potentially break some CSI drivers.

B

G

B

K

I'm here. I think we just had a very productive design meeting where we agreed that we want to move ahead for 1.19 with storage capacity. We have one key question that we need to figure out around the API, but we will move forward with that one way or the other. Then there is the related issue about inline volumes.

K

We also decided to move ahead with something that is a bit more generic: a new design for inline volumes, where we have a persistent volume claim inside the pod spec, and we create a separate persistent volume claim object from that and then use normal provisioning. That will integrate nicely with the storage capacity tracking. We plan to add these two things, and they are something that we can hopefully have as alpha in 1.19.
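As an illustration of the inline volume design described here, a minimal sketch follows. It assumes a simplified pod represented as a plain dictionary; the `claimTemplate` field name and the "pod name plus volume name" naming scheme are assumptions made for the example, not details stated in the meeting.

```python
import copy


def claims_for_pod(pod):
    """Build one standalone claim object per inline claim found in the pod spec."""
    claims = []
    for volume in pod["spec"].get("volumes", []):
        template = volume.get("claimTemplate")  # hypothetical field name
        if template is None:
            continue  # ordinary volume, nothing to create
        claims.append({
            "kind": "PersistentVolumeClaim",
            "metadata": {
                # Assumed naming scheme: pod name plus volume name.
                "name": f'{pod["metadata"]["name"]}-{volume["name"]}',
                "namespace": pod["metadata"]["namespace"],
            },
            # Copy the embedded spec so later edits to the pod do not leak in.
            "spec": copy.deepcopy(template["spec"]),
        })
    return claims


pod = {
    "metadata": {"name": "web", "namespace": "default"},
    "spec": {
        "volumes": [
            {"name": "config", "configMap": {"name": "web-config"}},
            {
                "name": "scratch",
                "claimTemplate": {
                    "spec": {
                        "storageClassName": "fast",
                        "resources": {"requests": {"storage": "1Gi"}},
                    }
                },
            },
        ]
    },
}

claims = claims_for_pod(pod)
```

Once such a claim exists as its own object, it can go through the normal provisioning path like any other claim, which is the point made above about integrating with storage capacity tracking.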

B

G

G

B

B

Speaking of the manual provisioner, there is an effort to deprecate kubernetes-incubator altogether, including the external-storage repo. This old external-storage repo contained a number of provisioners and other miscellaneous projects that we were working on, so we need to move out anything important that we have before we deprecate this old repo. The three things that we've identified so far are the GlusterFS provisioner and the two NFS provisioners, and Humble and Kiran are working on moving those out into their own repos.

J

Yeah, so last week I filed the new repos proposal and got it approved on the Kubernetes org repo, and the repos are available now. I also filed a draft PR with the migration code. There are a few more things to fill in in the PR. I'll continue on it and get it done soon. So we are good at the moment on this migration path.

B

J

B

B

B

So if you are someone on this call who cares about some other project inside the external-storage repo, please flag that as soon as possible. As soon as the three identified tasks are complete, we're going to deprecate this repo, because we haven't heard of anything else that needs to be transferred out. If that's not the case, please let us know as soon as possible.

B

L

B

Alright, next is an item between us and SIG Arch. It's mostly a requirement from SIG Arch for us to go and do this. We had the mount utility, the mount library, that we split out from the kubernetes repo and moved into the Kubernetes utils repo, and now the suggestion is to move it out into its own repo. I believe the current proposal is that we're going to move it to Kubernetes staging. Michelle, any updates on that?

G

B

So if anybody on this call is interested in helping with this, please let us know. This is a major project. It's the core mount code that is used by all of Kubernetes and many, many CSI drivers, so it's extremely important, critical work. If you want to get your hands dirty with code, this is the place to do it. So if you're interested in volunteering to work on this, please reach out to me or Michelle or Jan, and we can put you in touch with the right people.

A

B

B

M

I'm sorry, I couldn't get unmuted. I am working on an update to the design for this. We need a design that addresses, in particular, how to handle the error conditions. That's the big remaining issue: if someone requests something that won't be successful, how do we let them know?

B

L

B

N

C

B

F

C

O

B

B

B

F

So we had a meeting with Tim to get his feedback, and now we have a more general proposal: add an inline definition in the pod, and then have another API to request those container notifications. It's called the container notifier. I sent it to SIG Node and also SIG Apps, trying to get some feedback, but haven't really heard much.

F

F

I think there are some concerns. There is a timeout value, and right now we have that inside the container, not in the request object. So there are some questions on whether that should be in the request object, so that it's easier to control. That's one question. Then there is also some question on the privilege.

F

Basically, even though it's inside the container, I think it will still just run with the privileges that are already assigned to that container. It's not going to elevate them. We just follow other hooks, like the preStop and the postStart hooks. So if you really want to do something that needs higher privilege, then you probably need to use a container that has that privilege.

F

So those are the things that we need to think about, but we probably cannot just give extra privilege, because we don't even know what those commands are. It depends on what commands you want to run: fsfreeze needs more privilege, but some other commands do not need it. So we can't really just elevate that without knowing exactly what the command is.

B

K

B

B

B

K

B

B

K

For CSI ephemeral inline volumes, I think we just keep an eye on it and don't do much right now, but it's still important for the next release or afterwards. It's certainly a priority for me, because it kind of blocks the other ephemeral volumes. Yeah, sounds good. Okay.

B

K

B

K

H

B

H

What we want in this feature is a field in the CSIDriver object, so we know whether the storage provided by the driver supports volume ownership or not. NFS typically doesn't, and the CSI volume plugin tries to change it with fsGroup, and that usually fails, and users are unhappy.
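A minimal sketch of the kind of check this feature would enable. The `supports_volume_ownership` field and the driver names are hypothetical; the discussion does not state what the actual CSIDriver field would be called.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class DriverInfo:
    name: str
    # Hypothetical field: whether volumes from this driver allow ownership changes.
    supports_volume_ownership: bool = True


def should_change_ownership(driver: DriverInfo, fs_group: Optional[int]) -> bool:
    """Attempt an ownership change only if the pod asks for one and the driver supports it."""
    if fs_group is None:
        return False  # the pod did not request any group ownership
    return driver.supports_volume_ownership


nfs = DriverInfo("nfs.csi.example.io", supports_volume_ownership=False)
block = DriverInfo("disk.csi.example.io")
```

With a flag like this, the volume plugin could skip the ownership change for an NFS-style driver instead of trying it and failing.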

H

B

B

The next three items are about CSI migration. As I think many of you are aware, we're planning to remove the in-tree volume code by Kubernetes 1.21 as part of a larger effort within Kubernetes to remove cloud-provider-specific code from core Kubernetes. In order to make sure that we still give users a seamless experience, there's this big library that's been built out that will automatically shim the existing in-tree drivers out to a CSI driver, as long as that CSI driver is available, in order to complete that work.
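To make the shim idea concrete, here is a minimal sketch. It is not the actual migration library; the plugin name, driver name, and field names below are all hypothetical and chosen only for illustration.

```python
# Hypothetical mapping from an in-tree plugin name to its CSI driver name.
INTREE_TO_CSI = {"example.io/intree-disk": "disk.csi.example.io"}


def shim_volume(volume, installed_csi_drivers):
    """Return the volume rewritten for CSI, or unchanged if no shim applies."""
    csi_driver = INTREE_TO_CSI.get(volume["plugin"])
    if csi_driver is None or csi_driver not in installed_csi_drivers:
        return volume  # no translation known, or the CSI driver is not installed
    return {
        "plugin": "csi",
        "driver": csi_driver,
        "volumeHandle": volume["diskName"],
        "readOnly": volume.get("readOnly", False),
    }


intree = {"plugin": "example.io/intree-disk", "diskName": "data-1"}
migrated = shim_volume(intree, {"disk.csi.example.io"})
untouched = shim_volume(intree, set())
```

The user's existing volume definition stays the same; only the code path behind it changes, and only when the matching CSI driver is actually available.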

B

D

G

B

So I think the plan is that if we are unable to get a migration shim created, we're going to move towards deprecation. The idea is that if nobody really cares about this code, then we're just gonna remove it, but if there are people that care about it, we'll get folks to build out the migration shim.

O

B

B

B

L

Saad, one key thing here: we have work going on, I think Andy is doing the work and Michelle is reviewing it, for removing the publish path from CSI. It might be good to track it in the planning spreadsheet, just so that everybody who is writing CSI drivers becomes aware of it. Not really from a tracking perspective, but at least from an awareness perspective. Michelle, what do you think?

G

L

G

For this particular PR, I think the main issue is that the CSI spec says that the plugin is responsible for creating the mount path, but Kubernetes was actually mistakenly creating the path. So this PR is trying to correct it, so that Kubernetes actually does what the CSI spec says it should be doing. But the main worry here is that plugins have probably adapted to the behavior that Kubernetes had, and we risk breaking them with this change.

That's.