
From YouTube: Kubernetes SIG Storage 20190926

Description

Kubernetes Storage Special-Interest-Group (SIG) Meeting - 26 September 2019

Meeting Notes/Agenda: https://docs.google.com/document/d/1-8KEG8AjAgKznS9NFm3qWqkGyCHmvU6HVl0sk5hwoAE/edit#heading=h.iroeuobui0yw

Find out more about the Storage SIG here: https://github.com/kubernetes/community/tree/master/sig-storage

Moderator: Saad Ali (Google)

Chat Log:

None

A

Today is September 26, 2019. This is the meeting of the Kubernetes Storage Special Interest Group. As a reminder, this meeting is public, recorded, and posted on YouTube. Today on the agenda, we're going to go through 1.17 planning. The 1.17 release dates are: by the 15th, we need to nail down the features that we're working on and have enhancement issues open for them and any KEPs merged for those features. November 14th is code freeze, then November 19th docs must be completed, and then December 9th is the release.

A

This cycle overall is shorter than normal, because KubeCon is in late November and then the holidays are in December. So the cycle is, in general, shorter. I also want to discuss the face-to-face meeting. If you have anything else you want to discuss, please feel free to add it to the agenda and we'll take it from there.

A

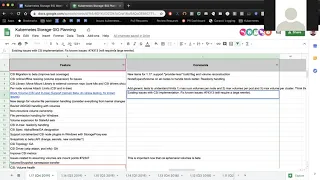

OK, next, let's move over to the planning spreadsheet. We moved over items from 1.16 that were not completed, so let's go through those one by one. If there's any item that you think should be on here, feel free to go ahead and add it to the bottom. You have the next two weeks to do that: if you think of something within the next two weeks, feel free to add it to the bottom and we can review it next time, or, if you want to, you could do it during this session as well.

A

B

I think all three of us will be focusing on this. One of the new items I think we want to pick up for 1.17 is support for disabling in-tree plugins, which basically ties in with the providerless build flag that's also being introduced separately in Kubernetes. Providerless will get rid of the in-tree plugins at a compile level, and here we're trying to introduce a stage before that build flag takes effect, so that you have more confidence that things will actually work when the in-tree plugins completely get wiped out, or rather compiled out. Okay.

A

B

A

A

C

Yeah, pretty much. We want to allow calling NodeExpandVolume on all the nodes where [unclear]. For read-only handling, we need a spec change in CSI; I have a proposal for that and I'm working on it. Those are the main issues, actually, that we're trying to fix for 1.17. Okay.

A

A

A

Okay. No, we are still committed to this and, as far as I understand, Travis will continue to work on it. Next item is per-node volume attach limits. We are trying to get this to GA this quarter. I am concerned about how capable we are going to be of that. Fabio has been the one working on this. Fabio or Jan, are either of you on the line?

C

A

I mean, what I remember was the issues around how, I think, AWS has some weird issues with how it calculates attachment limits. Instead of it being only volume based, it takes into account all the resources, networking and other things, and calculates limits based off of that, which I don't know if we're capable of doing today. And then similarly, I think, vSphere.
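The concern raised here, that an attach limit may depend on more than the number of volumes, can be illustrated with a minimal sketch. Everything in it is hypothetical: the shared slot budget, the function name, and the example numbers are illustrative assumptions, not values taken from AWS, vSphere, or Kubernetes.

```python
def remaining_volume_slots(shared_slots: int, network_interfaces: int,
                           other_devices: int, attached_volumes: int) -> int:
    """Return how many more volumes fit on a node (hypothetical model).

    Assumes volumes, network interfaces, and other devices all draw from
    one shared budget of attachment slots, so the effective volume limit
    is not a fixed per-node constant.
    """
    used = network_interfaces + other_devices + attached_volumes
    return max(shared_slots - used, 0)


# Same (made-up) slot budget and attached volumes, different networking footprint.
print(remaining_volume_slots(shared_slots=28, network_interfaces=1,
                             other_devices=0, attached_volumes=10))
print(remaining_volume_slots(shared_slots=28, network_interfaces=8,
                             other_devices=2, attached_volumes=10))
```

Under this assumed model, two nodes of the same type can report different remaining volume capacity, which is why a limit based only on volume count would not be enough.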

A

C

A

D

E

D

A

A

C

We are working on it, actually. Brad and I had a call with Jing last week about where she is with hers, and we're trying to see how we can unify both into a single [unclear]. But that was more just about fixing the chown and chmod stuff, and we are also interested in fixing the chcon. I think that's still the right person, at least. Okay. So.

C

E

For the file system GIDs and UIDs, it needs to be configurable separately, so that if you run a container one day and root is UID 1 million, and then you run the same container the next day and root is UID 2 million, the file system UID bits are the same. I think the problem is that they're not. I need to look into exactly what's going on, but I think there's just no facility to sort of do

B

E

translation on the file system UID and GID bits when you're running in a container, and that's why we have this problem, I think. So any attempt to, you know, chown and stuff is just going to lead us around in circles. If the kernel just did the mapping for us, you could hide all that and then the problem would go away.

A

All right, thank you. I'm going to put down Brad's name for all three of these, since they're very closely related. I think at a minimum we should probably hit the non-recursive volume ownership issue, since it's a performance issue, and we could go from there. Next item, closely related, is file permission handling for Windows. Deep, are you still the right person to work on that? Yeah.

F

A

C

So the KEP was approved by SIG Apps. It was approved by [unclear], but right now Jordan is reviewing it for API changes and he has some comments open on it. I think there's an open concern, which is already covered in the KEP itself, and Jordan is saying that issue should be resolved before the KEP is implementable. Okay.

E

C

The CSI block volume has some issues with cleanup right now, because [unclear]. The thing is that stage and publish are both done in the same operation, and if one fails, the other one is just left as it is, and the cleanup handling is kind of ugly. So there's a PR outstanding, and it's pretty big, which kind of detangles these two things so that they're called separately.

C

C

E

A

G

A

A

And then also, for anybody else on the call: I am just assigning the features out to folks who've already committed to them. If you are interested in contributing, feel free to jump in and say, "Hey, I'm open and I'm willing to work on something." We'd like to increase the number of contributors we have. I know there's a handful of people in this SIG, Hemant, [unclear], Jan, and Michelle, and a handful of others, who work on a lot of stuff, and they have a lot of load and a lot of items.

A

A

B

So this work is in progress. We managed to get [unclear] the proxy itself into the CSI proxy repo, and Michelle reviewed it. So now we're working on the API groups to start building up the real proxy support for things like file system, disk, and so on.

A

A

A

Next item is snapshots to beta. I think that's another item we need to prioritize higher, because it's been a very long time. There are a number of changes going in: API changes and the controller split, which I think are the two big things, and metrics. Xing and Shawn are working on those, and then Michelle can help review the API and I can help review the code on that. Xing, Shawn, do you want to add anything here?

A

A

A

A

Okay. As far as I know, he's still committed to coming up with a design for this, and I can also put down my name here. I'm going to look into how we can surface some of the operational metrics in the CSI drivers. Next up is an issue related to assuming volumes are mount points. I haven't looked into this one, but it's issue 72347. I think we were looking for people to help with this last quarter, and we weren't able to find anyone.

A

G

G

What I would like to do, though, is, within the next couple of weeks, finalize our objective in terms of the API and agree on it. What I mean by that is agree that, even though we're doing a generic API for it, we're not actually doing it with the intent of requiring an implementation for the other resources. I think, if you look at smarterclayton's comments and Tim's comments, that might be what's causing some confusion.

G

A

Makes sense. And we are planning a face-to-face meeting the day before KubeCon in November, I believe November 18th. I'm going to talk a little bit about that at the end of this call. I think this will be an important design to discuss there, so if we have something more fleshed out, we can present it to the wider community there. Okay, it's for visibility and discussion.

A

A

That'd be great. What I'll do is put together an agenda doc where people can start listing items that they're interested in discussing, and we can add this there as well. Next item is the volume group API. This is something that we kind of pumped the brakes on. We should probably pick it up soon. I think having it at a lower priority, P3, is still fine, and a design for this quarter would be fine. Xing, are you still interested in helping drive that? Yes.

H

A

H

A

A

A

All right, next item is CSI drivers. We've got NFS, iSCSI, Fibre Channel, and Flex. For NFS and iSCSI, both of them have standalone repos already and they have code in those repos, but we need to do image building, testing, CI/CD, and documentation to actually make them usable. For Fibre Channel and Flex, they're currently in a common repo, and we want to split that out and do what we did with iSCSI and NFS for those. Mathusan was assigned to work on the NFS one. Mathusan?

A

G

G

Last week he had an interesting proposal about modifying some of these a little bit. Rather than just offering the library to make the connections and stuff like that, the helper libraries, he had talked about actually having a base, for example, iSCSI driver. Basically, all of the node operations would be implemented in a common thing that you would just deploy, and then you would only have to implement the controller side. I just wanted to raise it here as something that I think maybe we should talk about and investigate.

G

A

So, just to make sure I understand correctly: currently there are two models that are supported. One is you get the mount code for NFS or iSCSI or Fibre Channel as a library that you can import, and then you go and write the rest of your driver, including the controller and provisioner. The second model is you get a very stripped-down version of these drivers that only knows how to do the mount and doesn't know how to do provisioning. And so, I guess.

A

G

Correct, that's the gist of it. There were some details he couldn't quite sort out. For me personally, I'm not a fan of the idea. I think the last thing we need is yet another path, but it's not fair for me to make that decision on my own, so I wanted to raise it to the rest of the SIG.

A

A

Keyboards are the most important thing, so if anyone on the call is interested in working on these in any capacity, please speak up now, or feel free to reach out to me offline and I can connect you to the right folks. Otherwise, for the time being, these will continue to be sadly neglected.

D

A

In some capacity, yes. I think the problem is that the driver code is there, but it hasn't really been tested. We don't actually build images, so how do you deploy the driver? That kind of thing, at least for NFS and iSCSI. And then for Fibre Channel and Flex, there was an initial attempt when we were first doing CSI to build these out, and they haven't really had much love since then. So the first step there is getting it.

A

A

A

A

C

There's a general problem that I'd set out to fix just for mount, like stage and publish, but it's a problem in general in Kubernetes, in kubelet. If you try to stage a volume and then the stage operation times out and the user deletes the pod, then that mount is forever lost, or it's not cleaned up properly. A similar thing would happen with unpublish: when you unpublish a volume and the unpublish operation times out, that has to be handled correctly.
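The timeout problem described here can be sketched as a small state tracker. This is a hypothetical illustration, not kubelet's code: the class, the state names, and the method names are all assumptions made for this example. The idea shown is that a timed-out stage call is recorded as uncertain rather than as not staged, so that cleanup is still attempted when the pod is deleted.

```python
from enum import Enum


class StageState(Enum):
    NOT_STAGED = "not_staged"
    STAGED = "staged"
    UNCERTAIN = "uncertain"  # the call timed out; the mount may or may not exist


class VolumeTracker:
    """Hypothetical tracker for per-volume staging state."""

    def __init__(self):
        self._states = {}

    def record_stage_result(self, volume_id, succeeded, timed_out=False):
        if succeeded:
            self._states[volume_id] = StageState.STAGED
        elif timed_out:
            # Outcome unknown: remember the volume so cleanup is not skipped.
            self._states[volume_id] = StageState.UNCERTAIN
        else:
            # Assumption: a definite failure leaves nothing to clean up.
            self._states[volume_id] = StageState.NOT_STAGED

    def needs_unstage(self, volume_id):
        state = self._states.get(volume_id, StageState.NOT_STAGED)
        return state in (StageState.STAGED, StageState.UNCERTAIN)


tracker = VolumeTracker()
tracker.record_stage_result("vol-1", succeeded=False, timed_out=True)
print(tracker.needs_unstage("vol-1"))
```

With only two states (staged or not staged), the timed-out volume would be treated as not staged and its mount would never be cleaned up, which is the failure mode described in the discussion.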

C

So similarly, unstage has a similar problem, and raw block devices have a similar problem, but their code path is different. They have to be fixed in a similar way. So I have a PR open for that. At least right now, it just fixes the mount device and mount volume code path, but I'm working on unpublish and unstage also. I think I need help fixing raw block cleanup, though, and it's just a problem that has to be fixed in kubelet overall.

A

C

C

A

C

A

Sounds good. Okay, I think that is all the items that we have. There's still a lot of items, and I'm still concerned about people who have multiple items assigned to them, especially given that this is a short quarter. So please take a look at your assignments, and in two weeks' time, when we meet again, if there's something that you can't commit to, please feel free to update us and we'll see if we can either drop the commitment for this quarter or find somebody else to work on the item.

A

A

A

Okay, so now the date to remember going forward is October 15th. If you have a feature assigned to you, please make sure that it has an enhancement issue opened and, if it requires a KEP, that the KEP is merged and approved by that date, October 15th, so two weeks, basically. Moving on, it looks like Deep has a PR that needs attention: support for Windows iSCSI Flex volume.

B

Yes, so this is basically in the targetd repo that's still under kubernetes-incubator. Basically, what we wanted to do from the SIG Windows side was this: since the CSI support is still being developed and it's in alpha, Microsoft released a couple of Flex volume scripts to support iSCSI and SMB, and this adds the support for that Flex volume script in the targetd provisioner.

D

B

A

A

A

...where KubeCon is located. The event is going to be on Monday, November 18. This is the day before the conference. It's going to be 9:00 a.m. to 5:00 p.m., so it's a full-day event. Keep an eye out for an email; I'll send that out shortly, detailing how you register for this face-to-face meeting. Please note that it does require a registration for KubeCon itself.