
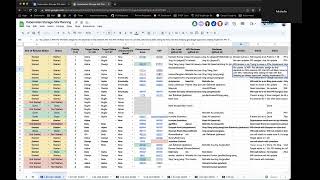

From YouTube: Kubernetes SIG Storage Meeting 2023-05-18

Description

Kubernetes Storage Special-Interest-Group (SIG) Meeting - 18 May 2023

Meeting Notes/Agenda: https://docs.google.com/document/d/1-8KEG8AjAgKznS9NFm3qWqkGyCHmvU6HVl0sk5hwoAE/edit#heading=h.ysglv6ob2p59

Find out more about the Storage SIG here: https://github.com/kubernetes/community/tree/master/sig-storage

Moderator: Saad Ali (Google)

A

All right, today is May 18, 2023. This is the meeting of the Kubernetes Storage Special Interest Group. As a reminder, this meeting is public, recorded, and posted on YouTube. So we are at the beginning of a new Kubernetes release cycle, version 1.28. 1.27 was released a few weeks ago, and in the last meeting Xing actually got us started and created a new tab in our planning spreadsheet for the 1.28 planning. For the most part, we copied over the remaining uncompleted items from the 1.27 side.

A

I will go over that today. Some timelines to be aware of: the cycle has already begun for 1.28, and June 8th is going to be the production freeze, or production readiness freeze. This date means that this is a date where you must have any new features declared and officially kind of approved, by having your PRR approved and so on. Then the 16th is enhancements freeze, and then the tenth is code freeze. Sorry, the 19th of July is code freeze.

A

A

If you have any items that you think should be in 1.28 in terms of storage features, now is a good time to bring it up, and we can go ahead and add it to the list and start tracking it as part of the work for the SIG. Beyond planning, there are a couple of items I already see here: PRs that need attention and designs that need to be reviewed.

A

If you have anything that you would like to discuss, please feel free to add to the agenda at any point and we'll get to it after planning. You can find the link to the agenda in your calendar invite. So with that, we will go ahead and switch over to the planning tab and get started. I'm going to create a new column here for today, and we'll start getting status updates on where things are for the beginning of this new cycle.

A

B

Yeah, I'm here. So we missed the cut last time by a couple of days, so we have most of the code done. I need to update it and work on one more bit, which is recovery all the way to the original size. That's something I did not factor in originally, so that's something I'll be working on this release, but yeah, this feature should be on track.


E

Oh, I think also the design of this depends on the ReferenceGrant API that SIG Network had, but I think we're looking at trying to bring that API into core Kubernetes instead of the Gateway API, where it currently is. I think the networking folks reached out to us and they're looking for help with that.


E

So that's why they're asking us for help, right? Because I think from their perspective they have their API and I don't think they plan to switch, and so now really the push to generalize it is mainly coming from us, because we want to use the API for our purposes.

C

A

Cool. Thank you for the background on that, Michelle. And if folks are interested in helping here, it looks like there is an opportunity to go potentially beyond SIG Storage work and help with moving some of this logic from SIG Network into core. So, if you're interested, please reach out to one of the tech leads, Michelle, and we'll put you in the right direction.


C

So yeah, we reviewed this again in yesterday's Data Protection meeting. I think, then, if you want to chime in, please do as well. So right now we are in good shape, but I think the person who is doing the POC was not in the meeting yesterday, so we're just waiting for them to decide whether they want to go ahead and pursue it further or not.


H

Yeah, so hi, this is Manu. We discussed internally and we are interested in contributing to this. Just a few minutes ago, I reached out to Mauricio over Slack and asked if we could set up some time with him to talk in detail about what the work is and how we can best help out in this respect.

A

A

A

Okay, so then I'll go ahead and remove this and we'll wait another cycle before reintroducing it. Okay. So next up is SELinux relabeling using mount options, CSI driver API change required, beta, on by default. So last update: there was a bug, it's disabled in the master branch, and it may need to do another beta in 1.28.


A

That's a good call out, Xing. So for anybody on the call: if you know somebody who's using Ceph RBD, the in-tree version, they should reply to Humble's email on SIG Storage and let us know, because the assumption is that nobody's using it. And instead of doing a clean CSI migration, as we did for a lot of these other plugins, if no one's using it, the plan would just be to deprecate the in-tree one without a proper migration story.

C

So CephFS, that's more clear, right? They didn't even have an alpha version, so Humble sent that one out earlier, and we didn't get any reply saying anyone's using it. And then for Ceph RBD, it's just that we already made a lot of effort moving that to beta. But he told me there are no users. I think even Jan also confirmed that, right, so on the OpenShift side there are no users using that. So I mean.


C

I think we should wait, because we actually want the backup vendors to make a change in their logic if they are relying on this feature, because otherwise they can be broken, because we are also going to move the flag in the external-provisioner and snapshotter to true, you know, the feature flag for this feature. So once we enable that, if they don't change their logic, then it could be broken.

J

C

A

Okay, so it sounds like, Jan, you've confirmed we'll target GA in 1.28? Yes. Cool, all right, we'll keep it here. And has work started or not yet? Not really. Okay, so we'll keep that as not started and then keep tracking it for 1.28. Thanks, Jan. Next is quality of service for volumes. I know Sunny's been working on this. I assume she'll continue to drive this to alpha this cycle.

F

H

H

We have been getting a lot of interest from customers on this, and in order to unblock our customers, we have gone ahead and implemented a temporary, custom, annotations-based solution for this capability, but we are very, very interested in seeing the standardization effort being completed and agreed upon. So I would love to be part of that discussion.

H

Unfortunately, I do have a hard stop right after this, but I did want to say that, you know, there are a couple of things that we would like to clarify about this, and it would be great if you could have a discussion around that, if not today, then at some point in the very near future.

H

L

H

As well, in terms of the interim solution, yeah, that was exactly what I wanted to cover as part of a design meeting. The solution that we have in place is, while it's proprietary for now, I think there is some room to kind of have other storage providers utilize that as well in the short term, until the final thing becomes available. So if you folks are interested in utilizing that, we are more than happy to talk about how we can make that possible.


K

Yes, I am. Can you hear me properly? Yes? All right. Last meeting we were here to announce that we're gonna start looking at all endpoints for storage that do not have conformance tests, and we started to work on that. We picked up three quick wins: the delete storage collection, and the patch and replace for the same. There was a test in place for create, read, list and delete.

K

So what we did is we looked at the pattern of that test and corrected it, because there were a lot of changes recently in the way e2e tests are written for conformance. So we updated it to have the right stylistic arrangements for a conformance test, and then we added the three new endpoints, so now that new test covers all seven endpoints for that specific resource.

K

So the idea is to have this test reviewed. We have already had a quick review with only one small nit, so I'd appreciate some more reviews. It is quarter to five in the morning in New Zealand, where our people are, so shortly, within the next few hours, we will quickly fix the nit and get this on TestGrid as soon as possible. Then the idea is, once we've got this on TestGrid, it has to run its two weeks flake-free. Once it has passed the flake-free period, we will promote it to conformance, and then we plan to remove the current test to reduce the test load. So basically, we then have just one single test, testing all seven endpoints, but we'll only do that once we've monitored for at least a couple of weeks, to make sure that the promoted test does not flake on us and we don't remove a good test, replacing it with a bad one. So we do monitor afterwards.

A

Awesome, thank you so much for the update, and thank you for giving an update so early in the morning your time. I appreciate it. It sounds like a follow-up step here is, let's just make sure we're getting folks to help review this. So if folks on the call can help review and get this moved along, that would be awesome. And then, once it gets merged, it looks like it'll go into a two-week quarantine to ensure it's not flaky, and then it should be done.

A

K

Thank you very much. We'd really appreciate some reviews, and it is Friday in New Zealand. So we'll try and get to the reviews today, otherwise early next week, on your Sunday, we'll deal with it, get it in as soon as possible, and then do the monitoring for the two weeks flake-free. At the moment, you can only see two days on TestGrid, but we have a trick where we basically screenshot the TestGrid to monitor it. So we'll come back in two weeks' time, and we'll be here reporting on the flake-freeness of the test.
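The two-week flake-free rule described above can be pictured as a check over saved daily results. This is only a minimal sketch: the `is_flake_free` helper, the shape of the `results` dictionary, and the requirement of at least one recorded run per day are assumptions made for illustration, not part of TestGrid or of the conformance promotion tooling.

```python
from datetime import date, timedelta


def is_flake_free(results, today, window_days=14):
    """Return True if every day in the window has runs and all of them passed.

    results: dict mapping a date to a list of booleans (True = run passed).
    This layout is hypothetical; it stands in for the saved daily snapshots.
    """
    day = today - timedelta(days=window_days - 1)
    while day <= today:
        runs = results.get(day, [])
        # A day with no recorded runs, or with any failure, breaks the streak.
        if not runs or not all(runs):
            return False
        day += timedelta(days=1)
    return True
```

Because the dashboard view mentioned in the meeting only covers a short period, a check like this depends on someone having recorded each day's results as they happened.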

A

All right, next up is design review. So Sunny, for the CSI spec ModifyVolume, it sounds like you're gonna set up a follow-up meeting to discuss with folks. So if anybody's interested, please reach out to Sunny offline on Slack and she can help get you added to the meeting. But if there's anything you want to talk about in this meeting, Sunny, feel free.

F

Yeah, so just to bring everybody up to speed: the design decision, considering all the storage providers, is that we will use the VolumeQoSClass as a medium for cluster admins to manage the configurations, and then the end user will apply the VolumeQoSClass for different performance parameter settings. And there are some discussions or questions coming up from the design that are new to the group.

F

C

Right, so for us, in our case it's more admin-driven. So that's why I actually brought up the VolumeQoSClass case, where you, the admin, go and make changes to the VolumeQoSClass. Then we can apply that for every volume, but not like directly changing that on a particular volume. So when you're changing this, it's more like affecting everything that is using that QoS class.

E

C

That's why I thought the proposal actually covered that. Okay, it's right, because you have these two paths. One path is you go modify the QoS class parameters, and then it's going to run into your controller. The resizer controller will be modifying all the volumes that have that QoS class, right? So that model would work for us, but.


C

Well, so in this case, when someone is changing the parameters in the VolumeQoSClass, that's still an admin who understands, right, what it is going to change. It's not like just a regular user goes and changes that, right? So the admin would have some knowledge and understand he's changing something that is compliant from, let's say, A to B.

L

So, just to pile on in that, one of the motivating examples we have for this KEP is exactly the case where the application dev, who is not a Kubernetes cluster admin, is tuning a workload. In exactly the same way that the application dev, who is not a cluster admin, chooses the size of their disks, they are also tuning the IO.

L

C

No, that's not what I'm saying. Can you share that diagram? I think that diagram is actually pretty clear. I'm not saying just one way, that's definitely not what I'm saying. I'm just saying that for us to be able to support something like this, we need that second case. That's all I'm saying, whereas I think this diagram actually captured everything.


E

I guess what I'm wondering, though, is if, say, in the vSphere implementation we can kind of hide that restriction and still create a second QoS class, and then, you know, go through the first method of a PVC being able to control when to change. Because I feel like in general, just the flow of an admin changing the QoS class and then having that just roll out to all the volumes, I think, is kind of dangerous or kind of risky, yeah.
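To make the two paths in this discussion concrete, here is a rough sketch of how they differ in blast radius. All names here (`QoSClass`, `Volume`, the `iops` parameter, the class names) are hypothetical and chosen only for illustration; nothing below is taken from the actual proposal or from any Kubernetes API.

```python
from dataclasses import dataclass, field


@dataclass
class QoSClass:
    name: str
    parameters: dict


@dataclass
class Volume:
    name: str
    qos_class: str  # name of the class this volume references
    applied: dict = field(default_factory=dict)


def change_class_on_volume(volume, classes, new_class):
    """Path 1: one volume switches class; only that volume is touched."""
    volume.qos_class = new_class
    volume.applied = dict(classes[new_class].parameters)
    return [volume.name]


def change_class_parameters(classes, volumes, class_name, new_params):
    """Path 2: the class itself is edited; every volume using it is modified."""
    classes[class_name].parameters = dict(new_params)
    touched = []
    for v in volumes:
        if v.qos_class == class_name:
            v.applied = dict(new_params)
            touched.append(v.name)
    return touched
```

The return value of each function is the list of volumes affected, which is where the concern about risk comes from: the first path changes exactly one volume, while the second changes however many volumes happen to reference the class.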

E

I

But I did read it during this meeting, and the thing that sticks out to me is that the design calls for the system to apply the QoS class at creation time, but also for it to remain bound to the QoS class throughout the lifetime of the PVC, which is different than storage classes, right? Storage classes can be deleted, they can be changed, and Kubernetes won't do anything. So if we're going to go with this design, I mean, first of all, I think that's weird. I mean, changing the PVC's storage class from, like, silver to gold, for example, should cause Kubernetes, the reconciler, to reconcile something. If what silver is just changes, I wouldn't expect every PVC that is currently silver to go get updated. That's complicated and hard. I guess what I'm saying is, I don't like the idea of the volume remaining bound to its QoS class throughout its life, and maybe one way to fix that is to say it's not. But if you change it, then it goes and reads whatever you changed it to, and it updates it to that. So, like, if you define silver and then you create a volume, it's going to get the silver parameters.

I

I

Yeah, I mean, just imagine: where do you even store the reconciler state to tell you that, like, I've gotten through 379 of the, you know, 8,000 volumes? How do you even keep track of where you are in the process of updating things? It's really hard. It's easier just to say: okay, every PVC has a spec volume QoS class and a status QoS class, and if they don't match we'll reconcile it, and if they match we won't do anything.
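The spec-versus-status comparison described here can be sketched in a few lines. This is an illustration under stated assumptions: the `PVC` dataclass, its `spec_qos_class` and `status_qos_class` fields, and the `apply_class` callback are hypothetical names, not fields or functions from the Kubernetes API or the proposal.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PVC:
    name: str
    spec_qos_class: str                      # what the user asked for
    status_qos_class: Optional[str] = None   # what was last applied


def reconcile(pvc, apply_class):
    """Apply the requested class only when spec and status differ.

    Returns True if work was done, False if the PVC was already in sync.
    """
    if pvc.spec_qos_class == pvc.status_qos_class:
        return False
    # apply_class is a hypothetical callback standing in for the storage backend.
    apply_class(pvc.name, pvc.spec_qos_class)
    pvc.status_qos_class = pvc.spec_qos_class
    return True
```

The point of this shape is that progress is recorded on each object: a controller that restarts partway through can rescan all PVCs and skip the ones whose status already matches, so it needs no separate counter of how many volumes it has processed.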