►

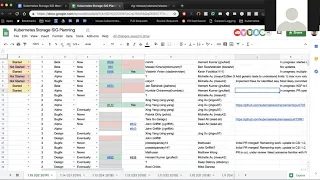

From YouTube: Kubernetes SIG Storage 20190411

Description

Kubernetes Storage Special-Interest-Group (SIG) Meeting - 11 April 2019

Meeting Notes/Agenda: https://docs.google.com/document/d/1-8KEG8AjAgKznS9NFm3qWqkGyCHmvU6HVl0sk5hwoAE/edit#heading=h.m97h3g6dgt4l

Find out more about the Storage SIG here: https://github.com/kubernetes/community/tree/master/sig-storage

Moderator: Saad Ali (Google)

Chat Log:

None


If you are working on any of the items for 1.15, you should be aware of the dates you are working with, especially since KubeCon falls in the middle of this cycle. The first important date coming up is the enhancements freeze. By that date, any feature you're working on must be declared: make sure you have an issue opened for it and an approved KEP. Then May 30th is the actual code freeze date.


That means all code for the feature needs to be committed by then. So with that, let's move to the spreadsheet and get a quick status update from folks on where their features are so far. First up, we have the migration engine for CSI, that is, in-tree to CSI migration. I believe David is still out on vacation. Chang or Deep, do you have any updates?


Sure. Basically, we want to figure out what the limits are along several dimensions. One is the maximum number of volumes you can mount on a node. Another is the maximum number of volumes a single pod can request. Then there's also the maximum number of volumes in the whole cluster.
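A scale test along these three dimensions could keep one ceiling per dimension and check each measurement against it. This is a minimal sketch; the names `VolumeLimits` and `exceeded_limits` and the example numbers are hypothetical and not part of any Kubernetes test framework.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class VolumeLimits:
    """One ceiling per dimension discussed above (values are hypothetical)."""
    per_node: int
    per_pod: int
    per_cluster: int


def exceeded_limits(limits: VolumeLimits, volumes_on_node: int,
                    volumes_in_pod: int, volumes_in_cluster: int) -> List[str]:
    """Return the names of the limits that a measured configuration exceeds."""
    exceeded = []
    if volumes_on_node > limits.per_node:
        exceeded.append("per_node")
    if volumes_in_pod > limits.per_pod:
        exceeded.append("per_pod")
    if volumes_in_cluster > limits.per_cluster:
        exceeded.append("per_cluster")
    return exceeded
```

For example, `exceeded_limits(VolumeLimits(16, 8, 1000), 20, 4, 500)` flags only the per-node limit.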


It uses NodeGetVolumeStats, which is a node-level RPC, so it makes that RPC call to get the stats information and then publishes it. Actually, it doesn't even publish it on its own: only when Prometheus (or whoever is asking) calls it does it make that RPC call and return the data, in a format that is already defined in the community. So it doesn't implement...
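The "only when asked" behavior can be sketched as a function that runs once per scrape: it calls a stats function for each volume and renders the result in Prometheus text exposition format. Here `get_volume_stats` stands in for the NodeGetVolumeStats RPC, and the `example_volume_stats_*` metric names and `volume_id` label are placeholders of our own, not the names the implementation discussed in the meeting uses.

```python
from typing import Callable, Dict, List

# Hypothetical per-volume stats, loosely shaped like the byte counts
# a NodeGetVolumeStats response reports.
VolumeStats = Dict[str, int]


def render_volume_metrics(volume_ids: List[str],
                          get_volume_stats: Callable[[str], VolumeStats]) -> str:
    """Collect stats on demand and return them in Prometheus text format.

    Nothing is cached or pushed: every scrape triggers exactly one stats
    call per volume, which mirrors the "only when asked" behavior.
    """
    stats = {vid: get_volume_stats(vid) for vid in volume_ids}
    lines = []
    for field in ("capacity_bytes", "available_bytes", "used_bytes"):
        name = f"example_volume_stats_{field}"
        lines.append(f"# TYPE {name} gauge")
        for vid, s in stats.items():
            lines.append(f'{name}{{volume_id="{vid}"}} {s[field]}')
    return "\n".join(lines) + "\n"
```

A scrape handler would call `render_volume_metrics` inside its request handler, so the cost of the RPCs is paid only when a scraper actually asks.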


Yeah, we've had a lot of discussions. We also synced up with SIG Apps again, and we're thinking that we will be working on this execution hook. SIG Apps is working on application snapshots, so this execution hook can be used by both volume snapshots and application snapshots. We also talked a little bit about group snapshots (consistency groups).
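One way to picture an execution hook that both volume snapshots and application snapshots could share is a wrapper that runs a command before the snapshot (for example, to quiesce the application) and another afterwards. This is a sketch under that assumption only; the function and parameter names are hypothetical, and the real hook design was still being worked out with SIG Apps.

```python
from typing import Callable


def snapshot_with_hooks(pre_hook: Callable[[], None],
                        take_snapshot: Callable[[], str],
                        post_hook: Callable[[], None]) -> str:
    """Run pre_hook, take the snapshot, then always run post_hook.

    If pre_hook itself fails, no snapshot is attempted and post_hook is
    skipped, since there is nothing to undo.
    """
    pre_hook()
    try:
        return take_snapshot()
    finally:
        # Unquiesce even if the snapshot failed, so the app is not left frozen.
        post_hook()
```

Putting the post hook in `finally` is the key design choice: a failed snapshot should never leave the workload frozen.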


Okay, next item is... excuse me, CSI out-of-tree: moving the NFS driver to a separate repo. Specifically, the remaining work was updating to CSI 1.0, adding deployment scripts, adding CI/CD, cutting a new branch, and putting deprecation notices on the old repo. Mathewson or Pratik, any updates on this, either for iSCSI or for NFS?


Are you dependent on that node to be able to recover? I was talking to Ben about this offline, and they have some cool things that they do, or are considering doing, for their driver, but it's also something for others to keep in mind. In Kubernetes, the assumption is not that the node is going to exist forever. Something bad might happen to a given node; it might hang for some reason. If that happens, Kubernetes will move the workload to a different node, and it'll...


I mean, that is a problem, but there's another related situation: a split brain, where the old node is actually still alive but Kubernetes can't talk to it. Kubernetes will move the workload anyway, and then you'll have two things trying to use the same volume, and you'd better make sure that only one of them wins. Yep.
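A common general technique for making sure only one side of a split brain wins is fencing with monotonically increasing tokens that the storage itself checks. This is a generic sketch, not something the meeting attributed to Ben's driver or to CSI; the class, its methods, and the idea that a control plane hands out the tokens are all assumptions.

```python
from typing import List, Tuple


class FencedVolume:
    """A volume that rejects writes carrying a token older than the newest seen.

    Each time ownership moves to a new node, a (hypothetical) control plane
    issues a strictly larger token. A node that was partitioned away still
    holds its old token, so its writes fail once the new owner has written.
    """

    def __init__(self) -> None:
        self.highest_token_seen = 0
        self.writes: List[Tuple[str, str]] = []

    def write(self, token: int, node: str, data: str) -> bool:
        if token < self.highest_token_seen:
            return False  # stale owner from the losing side of the split brain
        self.highest_token_seen = token
        self.writes.append((node, data))
        return True
```

With this scheme the old node can stay alive and keep trying, but once the new owner has written with token 2, every write carrying token 1 is refused.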


So there are actually two parts to each of these drivers. There is a repo that contains the common mount libraries, and then there are the reference implementations. The reference implementation doesn't include a provisioner. The idea is that people can use it as a basic driver if they really want to, or storage vendors can take the common library, use it to build their own driver, and add their own external provisioner or whatever else they need.
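The split between a shared mount library, a node-only reference driver, and a vendor driver that adds provisioning can be sketched as below. Every class and method name here is hypothetical and only illustrates the layering; it does not reflect the actual APIs in those repos.

```python
from typing import Dict


class CommonMountLib:
    """Stands in for the shared mount library (hypothetical API)."""

    def __init__(self) -> None:
        self.mounts: Dict[str, str] = {}  # target path -> source

    def mount(self, source: str, target: str) -> None:
        self.mounts[target] = source

    def unmount(self, target: str) -> None:
        self.mounts.pop(target, None)


class ReferenceDriver:
    """Basic driver: mounts existing volumes but has no provisioner."""

    def __init__(self, lib: CommonMountLib) -> None:
        self.lib = lib

    def node_publish(self, source: str, target: str) -> None:
        self.lib.mount(source, target)

    def create_volume(self, name: str) -> str:
        raise NotImplementedError("reference driver does not provision volumes")


class VendorDriver:
    """A vendor's own driver built on the same library, adding provisioning."""

    def __init__(self, lib: CommonMountLib) -> None:
        self.lib = lib

    def node_publish(self, source: str, target: str) -> None:
        self.lib.mount(source, target)

    def create_volume(self, name: str) -> str:
        # A real vendor would call its storage backend here.
        return f"vendor-export/{name}"
```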


So it depends on whether you implement attach or not. If you've implemented attach, you will get a ControllerUnpublish (detach) call for the old volume after six minutes of waiting for a clean unmount, which will never happen because the original node is locked up. Now, I was talking to Michelle about this, and she said that might actually no longer happen if it's a ReadWriteOnce volume. Is that true, Michelle?


Okay, so in the happy case, what's supposed to happen is that we wait a maximum of six minutes after the pod is deleted, to give it a chance to unmount. Then we call detach, which for CSI is ControllerUnpublish, on the volume for the old node. If that succeeds, we call ControllerPublish for the new node on the same volume, and then NodePublish, or NodeStage and NodePublish.
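The happy-case sequence can be written out as an ordered plan of CSI calls. The RPC names come from the CSI spec; the function, its parameters, and the simplification that detach happens at the earlier of the actual unmount time and the six-minute cap are assumptions of this sketch.

```python
from datetime import timedelta
from typing import List, Optional, Tuple

# Upper bound on waiting for a clean unmount before detaching, per the meeting.
MAX_WAIT_FOR_UNMOUNT = timedelta(minutes=6)

Call = Tuple[str, str, str]  # (CSI RPC name, volume ID, node ID)


def failover_plan(volume: str, old_node: str, new_node: str,
                  unmount_time: Optional[timedelta],
                  attach_required: bool,
                  uses_stage: bool) -> Tuple[Optional[timedelta], List[Call]]:
    """Return (time before detach, ordered CSI calls) for moving a volume.

    unmount_time is how long the old node took to unmount, or None if it
    never did (e.g. the node is locked up). Each call is only issued if the
    previous one succeeded. Drivers without attach skip the controller calls,
    so no detach wait applies in this model.
    """
    calls: List[Call] = []
    detach_after: Optional[timedelta] = None
    if attach_required:
        if unmount_time is not None and unmount_time <= MAX_WAIT_FOR_UNMOUNT:
            detach_after = unmount_time
        else:
            detach_after = MAX_WAIT_FOR_UNMOUNT  # stop waiting and detach anyway
        calls.append(("ControllerUnpublishVolume", volume, old_node))
        calls.append(("ControllerPublishVolume", volume, new_node))
    if uses_stage:
        calls.append(("NodeStageVolume", volume, new_node))
    calls.append(("NodePublishVolume", volume, new_node))
    return detach_after, calls
```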


There was a big discussion around this, and Jing has been guiding it a little. The proposal was: if we know for certain that a node is down, we should flush things out much more quickly. So there's a proposal out for some sort of taint that could be applied to nodes to say: this node is down for certain, so you don't need to wait for it. That hasn't gone anywhere yet; I haven't seen an owner for it.
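The taint proposal boils down to a decision about how long to wait before detaching. In this sketch the taint key `example.com/node-down-for-certain` is a hypothetical placeholder, since the proposal had no settled name or owner. By contrast, the existing `node.kubernetes.io/unreachable` taint only says the node cannot be reached, which is exactly the split-brain ambiguity, so it does not shorten the wait here.

```python
from datetime import timedelta
from typing import Dict, List

MAX_WAIT_FOR_UNMOUNT = timedelta(minutes=6)

# Hypothetical taint key for "this node is down for certain".
NODE_DOWN_TAINT = "example.com/node-down-for-certain"


def time_to_wait_before_detach(node_taints: List[Dict[str, str]]) -> timedelta:
    """Skip the unmount grace period only when the node is marked as definitely down."""
    if any(t.get("key") == NODE_DOWN_TAINT for t in node_taints):
        return timedelta(0)
    return MAX_WAIT_FOR_UNMOUNT
```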


This is definitely progressing. Another PR went in earlier this week or last week. It's still a matter of chunking this up into smaller pieces and figuring out which items in the mount package need to be moved elsewhere within k/k, so that we're left with something that can be moved out. The last thing that moved was the nsenter mounter. I have a pending open PR that moves a global constant; it's still waiting on review on the Windows side.


Michelle has been very helpful with that. I had one open question I wanted to ask here, because I might get an answer more quickly than on Slack. The next thing I want to move is the exec mounter, so the question is: is there any reason a CSI driver would want to use the exec mounter? It wraps other mounters, but my understanding is that CSI should be using mount propagation. I don't see any reason why a CSI plugin would use the exec mounter. Sorry.
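"It wraps other mounters" describes a decorator: mount and unmount are routed through an injected exec function (for example, one that runs the mount binary somewhere else), while every other call is delegated to the wrapped mounter. This sketch shows only that general shape; the class names, methods, and the `run` callback are hypothetical and are not the Kubernetes mount package's API.

```python
from typing import Callable, List, Set


class SimpleMounter:
    """Stand-in for an ordinary in-process mounter (hypothetical interface)."""

    def __init__(self) -> None:
        self.mounted: Set[str] = set()

    def mount(self, source: str, target: str, fstype: str) -> None:
        self.mounted.add(target)

    def unmount(self, target: str) -> None:
        self.mounted.discard(target)

    def is_mount_point(self, target: str) -> bool:
        return target in self.mounted


class ExecMounter:
    """Sends mount/unmount through an exec function; delegates everything else."""

    def __init__(self, wrapped: SimpleMounter, run: Callable[[List[str]], None]) -> None:
        self.wrapped = wrapped
        self.run = run

    def mount(self, source: str, target: str, fstype: str) -> None:
        self.run(["mount", "-t", fstype, source, target])

    def unmount(self, target: str) -> None:
        self.run(["umount", target])

    def is_mount_point(self, target: str) -> bool:
        return self.wrapped.is_mount_point(target)
```

With mount propagation, a CSI node plugin can run `mount` itself inside its own container and have the result visible on the host. The extra exec indirection then seems to buy nothing, which is the point behind the question.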


One piece that'll be interesting there: today, because ownership doesn't pass down, operators don't garbage-collect PVCs, so the expected behavior is that when you delete a primary resource, the PVCs stick around. If we pass ownership, I think that means the PVCs will start getting garbage-collected, so it'll be important to make sure the existing behavior is retained when we introduce that. Yeah.
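The following is a simplified model of why passing ownership changes behavior. Kubernetes' garbage collector deletes a dependent once all of the objects in its `ownerReferences` are gone, while objects with no owner references are left alone. The object names are made up, and the model ignores foreground/background propagation and orphaning options.

```python
from typing import Dict, Iterable, List, Set


def surviving_objects(objects: Dict[str, List[str]],
                      deleted_uids: Iterable[str]) -> Set[str]:
    """Return the UIDs left after deleting deleted_uids and cascading.

    objects maps each UID to the UIDs of its owners. A dependent is collected
    once every one of its owners is gone; objects with no owners never are.
    """
    gone = set(deleted_uids)
    changed = True
    while changed:  # repeat until no further cascade happens
        changed = False
        for uid, owners in objects.items():
            if uid in gone or not owners:
                continue
            if all(owner in gone for owner in owners):
                gone.add(uid)
                changed = True
    return {uid for uid in objects if uid not in gone}
```

A PVC that gains an owner reference to the primary resource disappears along with it, whereas today's owner-less PVC survives.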


And that's kind of the thing: the destructive problem is going to be very similar. Do you make a new field, or is it just that same structure? That's the way it's unfolding right now: how to handle that, and whether it's the same thing or a different thing, basically. Right.


Next item is CSI in-tree read-only handling. We were looking for an owner here. This will become important once we have cloning or populators, which we're working on this quarter. Is anybody interested in working on this? If you've looked at the Kubernetes API, you'll notice that there are about four different places where you can specify the read-only flag, and what each read-only flag does is pretty random. You really have to know what you're doing to make them all work.
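To see how scattered this is, the sketch below walks a pod and a PV (as plain dicts, the way they look when parsed from YAML) and lists every read-only-related setting it finds. We believe these four field paths exist in the core v1 API, but we are not certain they are the same four the speaker had in mind. The function itself is hypothetical and does not reproduce how kubelet or CSI combine these flags.

```python
from typing import Any, Dict, List


def read_only_settings(pod: Dict[str, Any], pv: Dict[str, Any]) -> List[str]:
    """List each read-only-related setting found on a pod and its PV."""
    found = []
    spec = pod.get("spec", {})
    # 1. Pod volume source: spec.volumes[].persistentVolumeClaim.readOnly
    for vol in spec.get("volumes", []):
        pvc = vol.get("persistentVolumeClaim")
        if pvc and pvc.get("readOnly"):
            found.append(f"pod volume {vol['name']}: persistentVolumeClaim.readOnly")
    # 2. Container mount: spec.containers[].volumeMounts[].readOnly
    for container in spec.get("containers", []):
        for m in container.get("volumeMounts", []):
            if m.get("readOnly"):
                found.append(f"container {container['name']}: volumeMounts[{m['name']}].readOnly")
    pv_spec = pv.get("spec", {})
    # 3. PV CSI source: spec.csi.readOnly
    if pv_spec.get("csi", {}).get("readOnly"):
        found.append("pv spec.csi.readOnly")
    # 4. Access mode: ReadOnlyMany in spec.accessModes
    if "ReadOnlyMany" in pv_spec.get("accessModes", []):
        found.append("pv spec.accessModes: ReadOnlyMany")
    return found
```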


Okay, next item is CSI alpha/beta/GA designation. This has been started in the CSI community. We've had multiple discussions about how to add alpha functionality without polluting the main spec, and there is a proposal, a proof of concept, that James is working on. If you're interested, look at it in the container-storage-interface spec repo; he has a pull request out right now.


Yeah, basically I started off with the GCE PD plugin. A precursor to this was porting all of that to Windows and making sure the node plugin gets picked up by the node driver registrar, so that the kubelet plugin mechanism works. I got all of that set up and working, and it's getting registered, so the driver registrar side is up. The next phase is actually plumbing in the storage proxy. The socket communication on the Windows side surprisingly just worked, which was, wow.


Yep, we need to think this through. I think there are a couple of ways we could do it. We could open up a hole and say: you can pass through any generic metrics, maybe in Prometheus format or something. But then we'd have to worry about wiring that through the whole system, and that doesn't really buy us much. We could also say...


Yeah, this is really just a... it's Jerry, by the way. I'll keep this short. I was at the HEPiX workshop out in San Diego. Basically, that's the high-energy physics engineering community that keeps hundreds of petabytes of data and compute clusters running, and the attendees included a lot of people from Brookhaven.


So people there were aware of this. This is just informational, with respect to Singularity, if you've never heard of it. It's not Docker, though it can take a Docker image, convert it, and run it. Its advantage is that it runs in user space: no root access is required to run Singularity containers. So the punchline is...


There is a CRI implementation. I'm just starting to talk with them about it, because the high-energy physics community wants to be able to use Kubernetes with what we think they already have, which is not Docker. That leads to the final question: has anyone had a chance to play with it, and does anyone have experience using CSI with it? I don't know its maturity level. Most importantly, I'm mentioning it now because I have a call with them tomorrow.


And in terms of making it work with CSI, I think the biggest requirement we tend to have is being able to run a container capable of mount namespace propagation. Basically, if you set up a mount inside the container, that mount has to somehow be shared outside the container with the host machine. As long as the container runtime has some way to do that, CSI should work. That tends to be the most container-runtime-specific thing, so right now...
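On Linux, one way to check whether a mount can propagate out of a container is to look at the optional fields in `/proc/self/mountinfo`, where a `shared:N` tag marks a mount in shared peer group N. The sketch below parses that format. It is a diagnostic aid we are adding, not something a CSI driver or the Singularity runtime is known to do. In Kubernetes itself, a CSI node plugin typically requests this behavior with `mountPropagation: Bidirectional` on its volume mount, which is only allowed in privileged containers.

```python
from typing import Dict


def shared_mount_points(mountinfo_text: str) -> Dict[str, int]:
    """Map each shared mount point to its peer-group ID.

    Each mountinfo line is: mount ID, parent ID, major:minor, root,
    mount point, mount options, zero or more optional fields, a lone "-"
    separator, then filesystem type, mount source, and super options.
    """
    shared: Dict[str, int] = {}
    for line in mountinfo_text.splitlines():
        fields = line.split()
        if "-" not in fields:
            continue  # skip blank or malformed lines
        separator = fields.index("-")
        mount_point = fields[4]
        # Optional fields sit between the mount options (index 5) and "-".
        for tag in fields[6:separator]:
            if tag.startswith("shared:"):
                shared[mount_point] = int(tag.split(":", 1)[1])
    return shared
```

On a real system this could be run as `shared_mount_points(open("/proc/self/mountinfo").read())` from inside the container under test. A mount tagged only `master:N` receives propagation from the host but does not send it back, so it is not reported.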